By Ye Lei (Daonong), Senior Technical Expert of Alibaba

This article introduces the fundamentals of the Kubernetes network model. Kubernetes does not prescribe a particular network implementation, so there is no single ideal reference implementation. Instead, it defines a container network model that constrains what a container network must provide. The model is generally summarized as three requirements and four goals.

A qualified container network solution must meet all three requirements. The four goals describe what the design of the network topology and the implementation of network functions must achieve, chiefly connectivity.

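The three requirements are as follows:

1) Any two pods can communicate with each other directly, without explicit network address translation (NAT).

2) Nodes and pods can communicate with each other directly, without explicit NAT.

3) The IP address that a pod sees for itself is the same IP address that others see for it.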

Why Kubernetes imposes these seemingly arbitrary models and requirements on container networks is explained later.

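The four goals trace, step by step, how an external system reaches an application running in a container in a Kubernetes cluster: from the external system to a service, from the service to its backend pods, from pod to pod, and between containers within a pod.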

The ultimate goal is for external systems to be able to connect to the containers and use the services they provide.

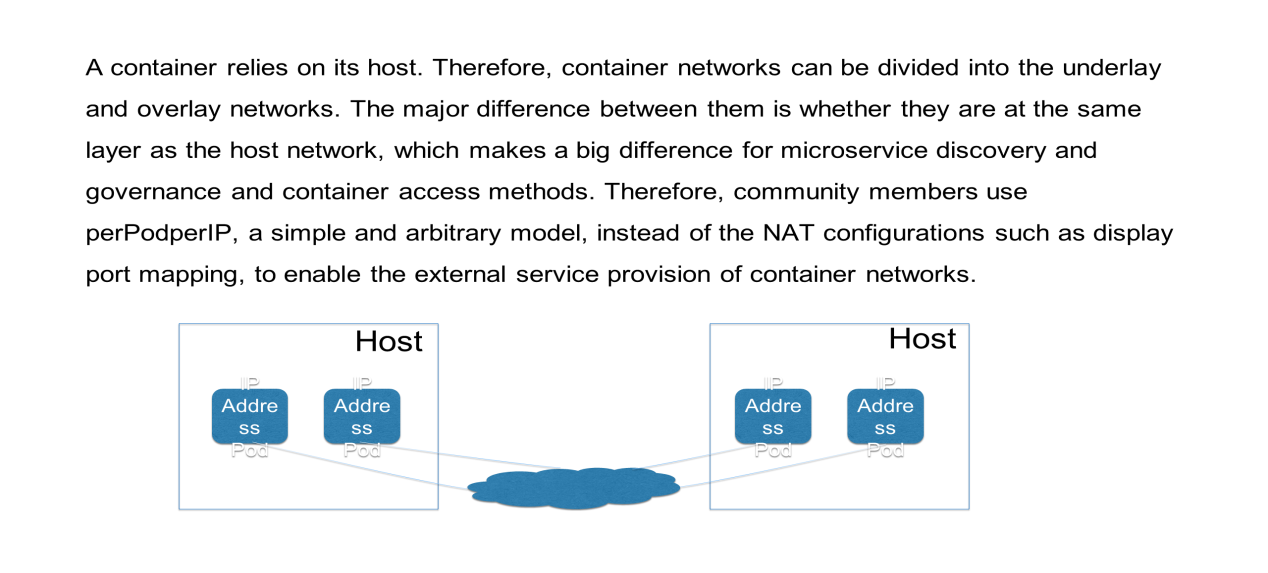

Container network solutions are complex because they are built on top of the host network. Depending on how they relate to the host network, container networks fall into two categories: underlay and overlay.

It is worth understanding why the community put forward the IP-per-pod model, which is simple but somewhat arbitrary. In my opinion, the reason is that this model brings many benefits for later performance tracking and for monitoring services. Because a pod is known by the same IP address everywhere, individual cases are also easier to investigate.
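To make the IP-per-pod idea concrete, here is a minimal Python sketch. It is not taken from Kubernetes or any network plug-in. It assumes a hypothetical cluster range of 10.244.0.0/16 that is split into one /24 subnet per node, and the names `ClusterIPAM`, `allocate`, and the node and pod names are invented for illustration.

```python
import ipaddress


class ClusterIPAM:
    """Toy IP address manager: one subnet per node, one unique IP per pod."""

    def __init__(self, cluster_cidr="10.244.0.0/16", node_prefix=24):
        # Hypothetical values; real clusters configure these per deployment.
        network = ipaddress.ip_network(cluster_cidr)
        self._subnets = network.subnets(new_prefix=node_prefix)
        self._node_hosts = {}  # node name -> iterator over usable host IPs
        self.pod_ips = {}      # pod name -> assigned IP address

    def allocate(self, node, pod):
        # The first pod on a node claims the next free subnet for that node.
        if node not in self._node_hosts:
            self._node_hosts[node] = iter(next(self._subnets).hosts())
        ip = next(self._node_hosts[node])
        self.pod_ips[pod] = ip
        return ip


ipam = ClusterIPAM()
a = ipam.allocate("node-1", "web-0")
b = ipam.allocate("node-1", "web-1")
c = ipam.allocate("node-2", "db-0")
print(a, b, c)
```

Every pod ends up with an address that no other pod holds, so a log line or metric that carries a pod IP identifies exactly one pod, which is the property the monitoring argument above relies on.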

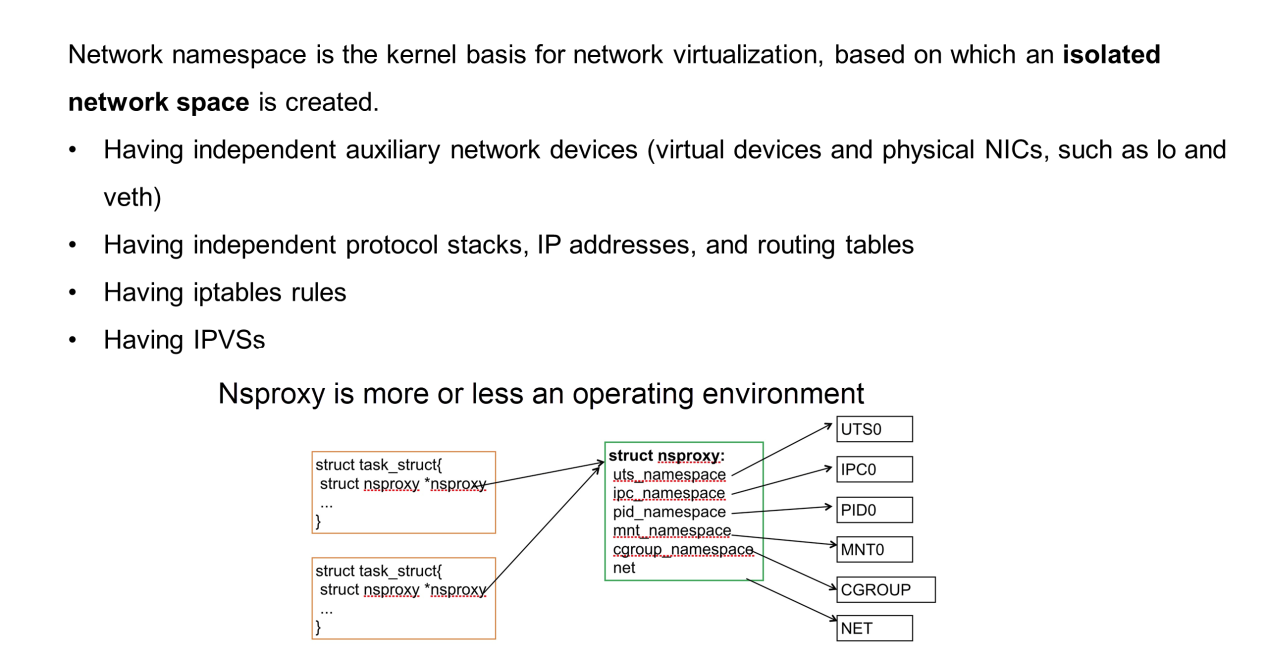

This section describes the kernel foundation on which network namespaces are built. In a narrow sense, runC container technology relies only on the kernel and does not depend on any hardware. In the kernel, a process is represented by a task. If isolation is not required, the task uses the host's namespaces and needs no dedicated isolation data structure (nsproxy, the namespace proxy).

However, if the task needs an independent network namespace or mount namespace, private data must be filled in through nsproxy. The data structure is shown in the preceding figure.

A network namespace looks like an isolated network space that has its own network interface controller (NIC) or network device. The NIC can be virtual or physical. The namespace has its own IP addresses, iptables rules, routing table, and protocol stack state. The protocol stack here is the TCP/IP stack, which keeps its own state along with its own iptables and IPVS rules.

In other words, it is a completely independent network that is isolated from the host network. The protocol stack code is shared, but each namespace has its own data structures.
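One way to observe this isolation from user space is to compare namespace identifiers. The sketch below is a hedged illustration that assumes a Linux host with /proc mounted; the helper name `netns_id` is invented here. It reads the `/proc/<pid>/ns/net` symbolic link, whose target names the network namespace a process belongs to.

```python
import os


def netns_id(pid="self"):
    """Return the network namespace identifier of a process, or None.

    On Linux, /proc/<pid>/ns/net is a symlink with a target such as
    'net:[4026531992]'. Two processes are in the same network namespace
    when their targets are identical.
    """
    try:
        return os.readlink(f"/proc/{pid}/ns/net")
    except OSError:
        return None  # not Linux, or no permission to inspect that process


mine = netns_id()
parent = netns_id(os.getppid())
print("this process:  ", mine)
print("parent process:", parent)
if mine is not None and parent is not None:
    print("shared with parent:", mine == parent)
```

Run on the host and then inside a container, the script would be expected to print different identifiers, because the container's processes point at a different network namespace.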

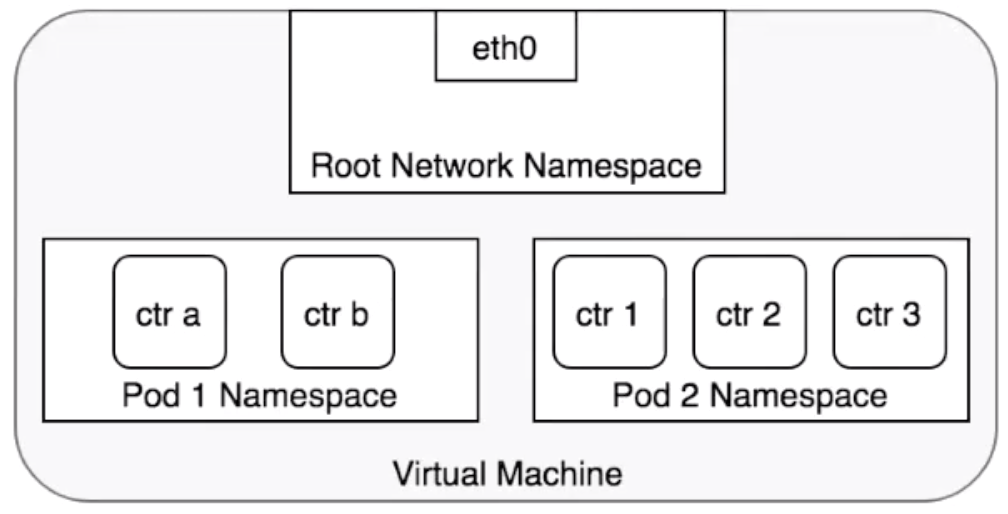

The preceding figure shows the relationship between pods and network namespaces. Each pod has an independent network namespace that is shared by all containers in the pod. Kubernetes recommends that containers in the same pod communicate with each other over the loopback interface. All containers serve external traffic through the pod IP address. The root network namespace on the host can be viewed as a special network namespace, the one that belongs to the process with PID 1.
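The loopback recommendation can be illustrated with a small Python sketch. It is a simplification: two threads in one process stand in for two containers in one pod, since both cases share a single network namespace and therefore a single loopback interface. The function name `serve_once` and the message text are made up for the example.

```python
import socket
import threading


def serve_once(listener):
    """Stand-in for one container: accept a connection, reply in upper case."""
    conn, _ = listener.accept()
    with conn:
        conn.sendall(conn.recv(1024).upper())


# Bind to the loopback address; port 0 lets the OS pick a free port.
listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
listener.bind(("127.0.0.1", 0))
listener.listen(1)
port = listener.getsockname()[1]

server = threading.Thread(target=serve_once, args=(listener,))
server.start()

# Stand-in for a second container in the same pod.
with socket.create_connection(("127.0.0.1", port)) as client:
    client.sendall(b"hello from sidecar")
    reply = client.recv(1024)

server.join()
listener.close()
print(reply)
```

Because the containers share the namespace, they also share the port space, so two containers in one pod cannot both listen on the same port.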

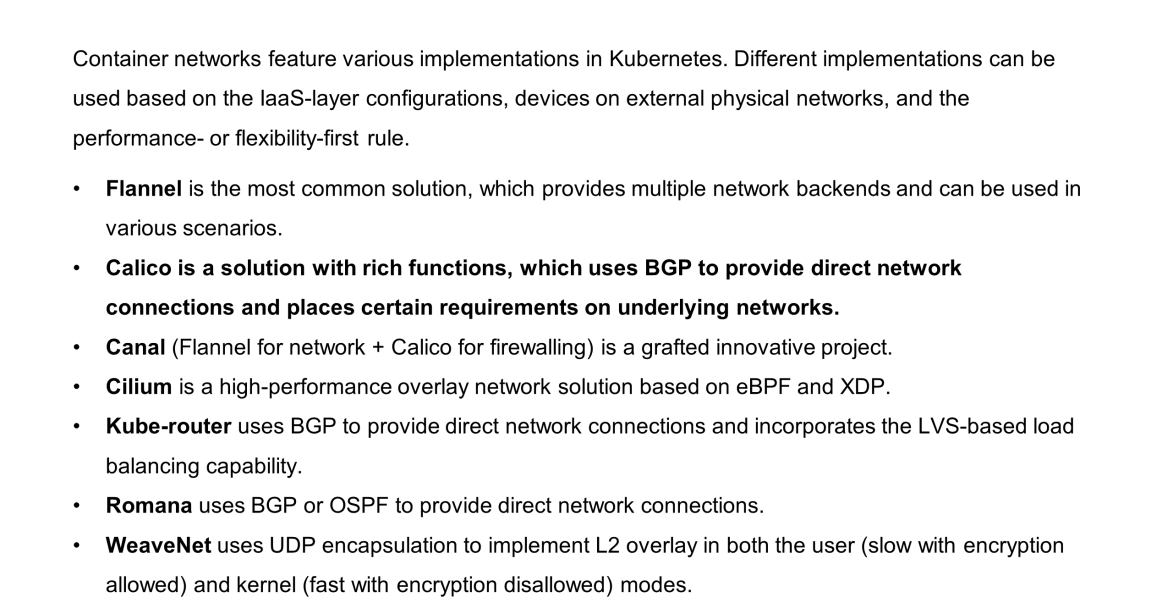

This section briefly introduces typical implementations of container networks. Kubernetes container networks can be implemented in many ways, because each implementation must coordinate with the underlying IaaS network and trade off performance against the flexibility of IP address allocation. As a result, a variety of solutions exist.

This section describes several popular solutions, including Flannel, Calico, Canal, and WeaveNet. Most of these solutions use a policy-based routing method similar to Calico.

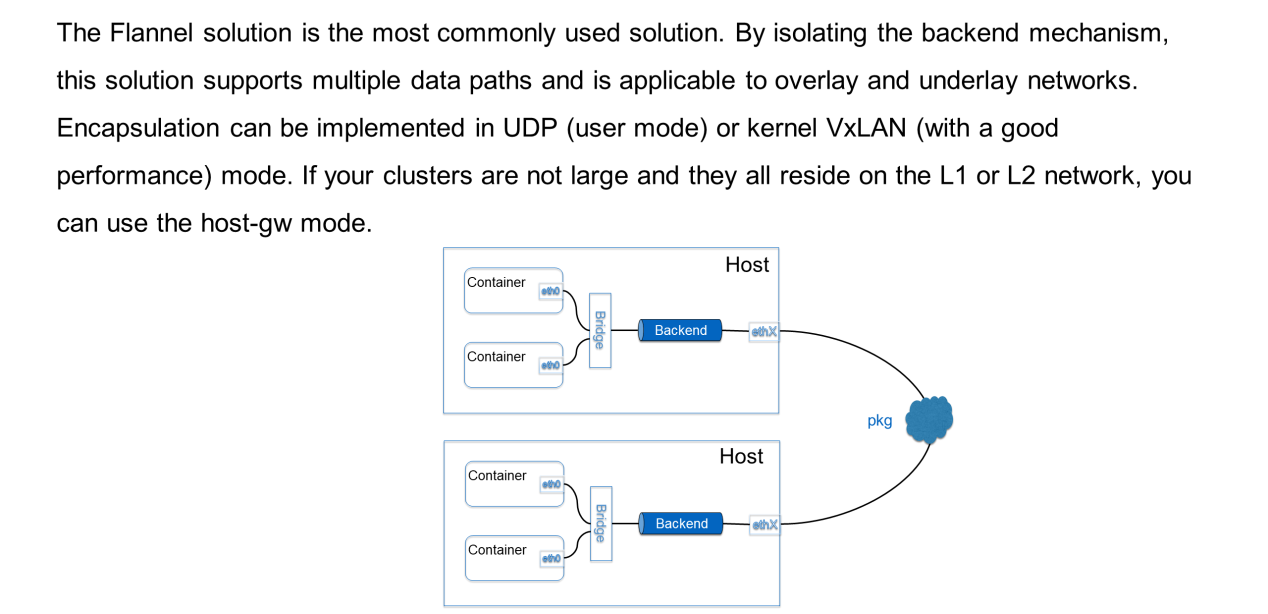

Flannel is the most commonly used solution. The preceding figure shows a typical container network setup. First, packets must get from the container to the host, which is done by adding a bridge. Flannel's backends are independent of this step, so each backend can decide how a packet leaves the host, which encapsulation method is used, and whether encapsulation is needed at all.
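The forwarding decision described above can be sketched in a few lines of Python. This is not Flannel's code. The lease table, the addresses, and the function name `forward` are hypothetical, and the `encapsulate` flag simply stands in for the choice of backend.

```python
import ipaddress

# Hypothetical subnet leases: pod subnet -> IP address of the owning node.
LEASES = {
    ipaddress.ip_network("10.244.0.0/24"): "192.168.1.10",
    ipaddress.ip_network("10.244.1.0/24"): "192.168.1.11",
}
# Assumption: this code runs on the node that owns 10.244.0.0/24.
LOCAL_SUBNET = ipaddress.ip_network("10.244.0.0/24")


def forward(dst_pod_ip, encapsulate):
    """Describe how a packet for dst_pod_ip would leave this node."""
    dst = ipaddress.ip_address(dst_pod_ip)
    if dst in LOCAL_SUBNET:
        return "deliver via local bridge"
    for subnet, node_ip in LEASES.items():
        if dst in subnet:
            if encapsulate:
                return f"encapsulate and send to node {node_ip}"
            return f"route directly with next hop {node_ip}"
    return "no route for this pod IP"


print(forward("10.244.0.7", encapsulate=True))
print(forward("10.244.1.5", encapsulate=True))
print(forward("10.244.1.5", encapsulate=False))
```

Traffic between pods on the same node never leaves the bridge. Only traffic to another node's subnet reaches the backend, which is where the encapsulation choice applies.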

The following section describes three major backends:

Let's understand the concept behind network policy.

As mentioned previously, the basic network model of Kubernetes requires all pods to be interconnected, which can cause problems. In a Kubernetes cluster, some services should not be able to call each other directly. For example, if you want to block department A from accessing the services of department B, you need a network policy.

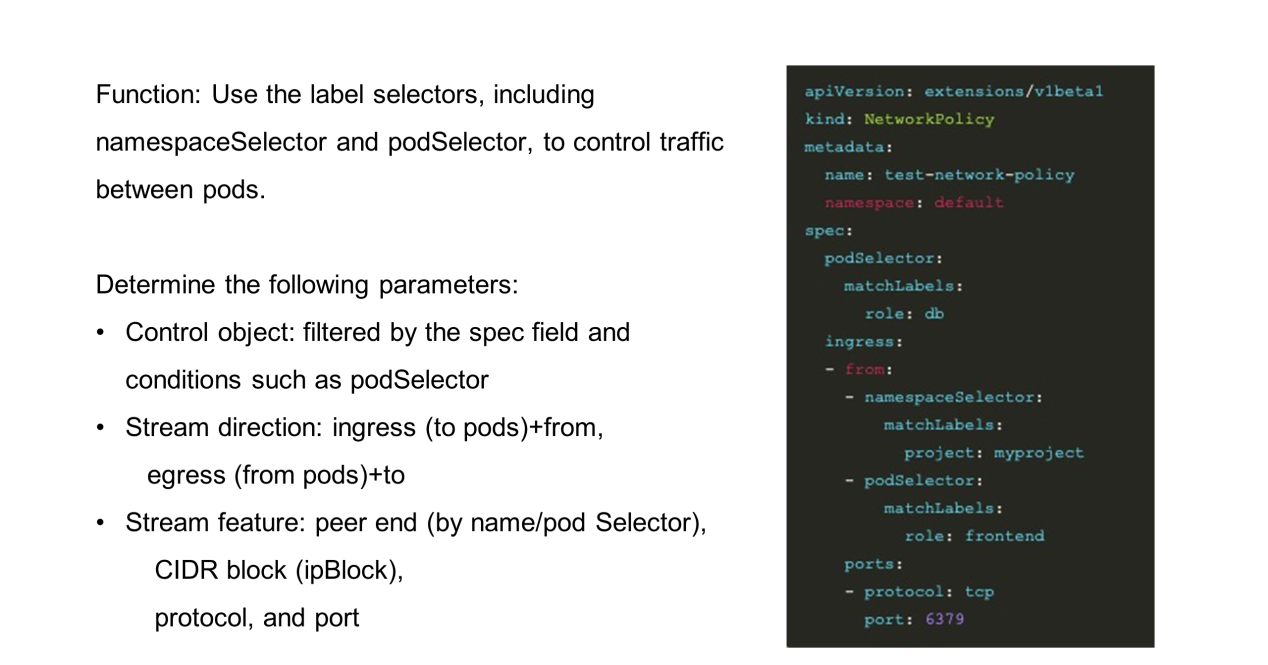

Basically, a network policy uses selectors (on labels or namespaces) to find a set of pods, that is, the two ends of a communication. Whether the two ends may connect is then determined by a description of the traffic's characteristics, which acts as a whitelist.
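The selector-plus-whitelist logic can be sketched in Python as follows. The dictionary layout is a simplified stand-in invented for this example, not the real NetworkPolicy schema, and the labels and port are hypothetical.

```python
def matches(selector, labels):
    """A label selector matches when every key/value pair is present."""
    return all(labels.get(key) == value for key, value in selector.items())


def allowed(policy, src_labels, dst_labels, port):
    """Whitelist check for ingress traffic from src to dst on a port."""
    if not matches(policy["pod_selector"], dst_labels):
        # The policy does not select the destination, so it does not restrict it.
        return True
    return any(
        matches(rule["from"], src_labels) and port in rule["ports"]
        for rule in policy["ingress"]
    )


# Hypothetical policy: only department B's frontend may reach its database.
policy = {
    "pod_selector": {"dept": "b", "role": "db"},
    "ingress": [{"from": {"dept": "b", "role": "frontend"}, "ports": [5432]}],
}
db = {"dept": "b", "role": "db"}

print(allowed(policy, {"dept": "b", "role": "frontend"}, db, 5432))
print(allowed(policy, {"dept": "a", "role": "frontend"}, db, 5432))
```

Anything that no rule lists is denied for the selected pods, which is what makes the rule set a whitelist rather than a blacklist.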

Before using network policies, enable extensions/v1beta1/networkpolicies on the API server, as shown in the preceding figure. More importantly, the network plug-in you choose must support network policies. A network policy is merely an object provided by Kubernetes, which has no built-in component that enforces it. Enforcement depends on the selected container network solution. For example, Flannel does not help here because it does not implement network policies.

To design a network policy, determine the following parameters:

This section briefly summarizes this article.

Here are some questions for you:

1) Interfaces have been standardized through the Container Network Interface (CNI). Why, then, does Kubernetes have no standard container network implementation built in?

2) Why does network policy have no standard controller or implementation, and is instead left to the provider of the container network?

3) Is it possible to implement the container network with zero network devices? Consider solutions that differ from TCP/IP, such as RDMA.

4) Many network problems occur during O&M and it is difficult to troubleshoot them. In this case, is it necessary to develop an open-source tool to check the network status between containers and hosts, between hosts, or between encapsulation and decapsulation? As far as we know, this tool is not yet available.

This article has covered the basic concepts of Kubernetes container networks and the use of network policies.
