By Changlv, Technical Expert at Alibaba Cloud

Access control is an important part of cloud-native security and is also a necessary and basic security hardening method for Kubernetes clusters in multi-tenant environments. In Kubernetes, access control is divided into three parts: request authentication, authorization, and runtime admission control. This article describes the definitions and usage of these three parts and provides best practices for security hardening in a multi-tenant environment.

Access control is an important part of cloud-native security. It is also a basic security measure that a Kubernetes cluster must take in a multi-tenant environment.

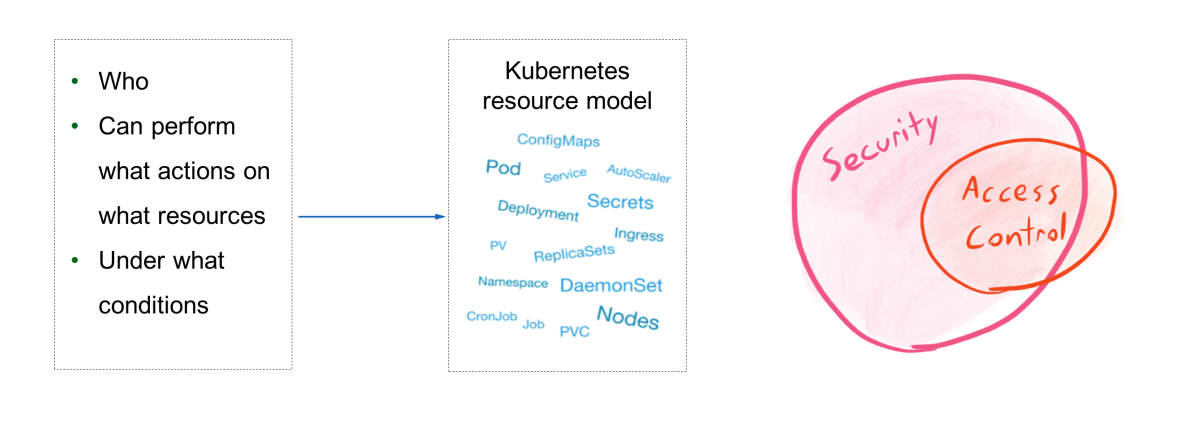

Access control can be abstractly defined as the control over who can perform what operations on what resources under what conditions. Here, resources refer to the resource models in Kubernetes, such as pods, ConfigMaps, Deployments, and Secrets.

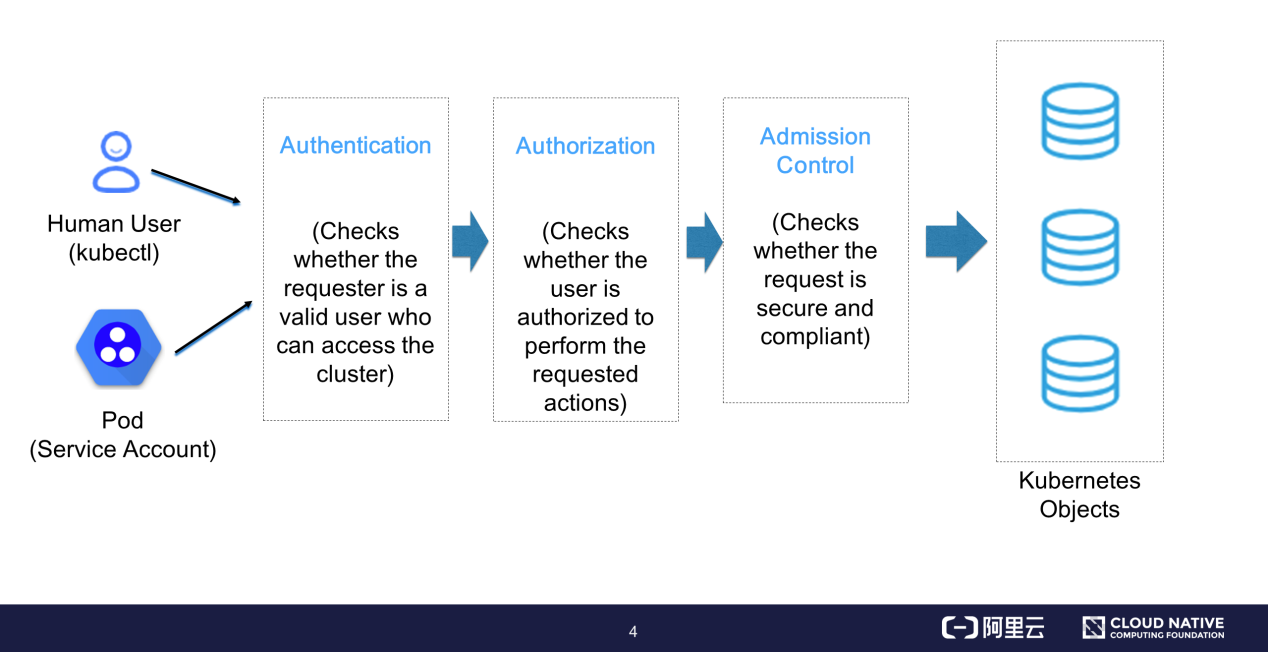

The preceding figure shows the process from when a Kubernetes API request is initiated to when it is persistently stored in a database.

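Requests are initiated in two ways: by a human user, typically through kubectl with the credentials in kubeconfig, or by an application running in a pod, which uses a service account.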

After receiving the request, the API server performs access control in three phases: authentication, authorization, and admission control.

Then, the request is converted to a change request for Kubernetes objects and is persistently stored in etcd.

In the authentication phase, the API server identifies the request initiator and converts the request initiator to a system-recognizable user model for subsequent authorization. Let's take a look at the user models in Kubernetes.

Kubernetes does not provide user management capabilities. In Kubernetes, we cannot use APIs to create and delete user instances in the same way as pods. We cannot find user-mapped storage objects in etcd either.

During the access control process, a user model is created from the requester's access credentials, which are provided by the certificate in kubeconfig or by the service account that a pod uses. After authenticating the requester, the API server converts the identity in the request's credentials into a user or a group. The API server uses this user model during the subsequent authorization and audit processes.

Basic Authentication

In basic authentication mode, the API server authenticates users by reading a whitelist of user names and passwords that an administrator places in a static configuration file. This authentication mode is inextensible and typically used for testing because it does not provide sufficient security protection.

X.509 Certificate Authentication

This authentication mode is often used with the API server. The client presents a certificate that is signed by the cluster-dedicated Certificate Authority (CA) or by a CA trusted in the API server's client CA, and the API server verifies it during the Transport Layer Security (TLS) handshake. The API server checks the validity of the client certificate and verifies the information in the certificate, such as the request source address. X.509 authentication supports two-way authentication and provides robust security. By default, Kubernetes components use it to authenticate each other, and it provides the access credentials that kubeconfig most often holds for the kubectl client.

Bearer Tokens (JSON Web Tokens [JWTs])

Token authentication uses JWTs that contain metadata such as the issuer, user identity, and expiration time. When a token is issued, it is signed with a private key to identify the requester, and the corresponding public key is used to verify the signature. Token authentication is used in a wide range of scenarios. For example, the service account that is used by an application running in Kubernetes pods is automatically bound to a signed JWT for sending requests to the API server.
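The metadata carried by a JWT can be inspected by decoding its payload segment. The following is a minimal sketch that uses only the Python standard library. It does not verify the signature, the sample token is built by hand purely for illustration, and the helper names are hypothetical.

```python
import base64
import json


def b64url_decode(segment: str) -> bytes:
    """Decode a base64url segment, restoring the padding that JWTs strip."""
    padding = "=" * (-len(segment) % 4)
    return base64.urlsafe_b64decode(segment + padding)


def decode_jwt_claims(token: str) -> dict:
    """Return the claims of a JWT WITHOUT verifying its signature."""
    _header_b64, payload_b64, _signature_b64 = token.split(".")
    return json.loads(b64url_decode(payload_b64))


def _b64url_encode(data: dict) -> str:
    """Encode a dict as an unpadded base64url JSON segment."""
    raw = json.dumps(data).encode()
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


# Hypothetical sample token; the signature segment is a placeholder.
sample_token = ".".join([
    _b64url_encode({"alg": "RS256", "typ": "JWT"}),
    _b64url_encode({
        "iss": "kubernetes/serviceaccount",
        "sub": "system:serviceaccount:default:demo",
        "exp": 1700000000,
    }),
    "signature-placeholder",
])

claims = decode_jwt_claims(sample_token)
print(claims["iss"], claims["sub"], claims["exp"])
```

Decoding alone proves nothing about who sent the token. A real verifier must also check the signature against the issuer's public key and reject expired tokens.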

The API server supports token authentication through OpenID Connect (OIDC). The API server can be configured to connect to a specified external identity provider (IdP). The IdP can be implemented with open-source services such as Keycloak and Dex. The requester authenticates against the original authentication server through a familiar method. The authentication server then returns a JWT, which the API server validates to identify the requester before the authorization process.

Alternatively, the API server can send the request token to a specified external server through a webhook, and that server verifies the token.
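The webhook exchange can be sketched as follows. The API server posts a TokenReview object and the external server answers with the same kind, filling in the status. The in-memory token table and the function name are assumptions made for this sketch; a real server would verify a signature or consult an identity system.

```python
def review_token(token_review: dict, known_tokens: dict) -> dict:
    """Answer a TokenReview request the way a webhook authenticator might.

    known_tokens is a hypothetical table: token -> (username, groups).
    """
    token = token_review.get("spec", {}).get("token", "")
    response = {
        "apiVersion": "authentication.k8s.io/v1",
        "kind": "TokenReview",
        "status": {"authenticated": False},
    }
    if token in known_tokens:
        username, groups = known_tokens[token]
        response["status"] = {
            "authenticated": True,
            "user": {"username": username, "groups": list(groups)},
        }
    return response


# Hypothetical data for demonstration only.
tokens = {"s3cr3t": ("jane", ["dev"])}
request = {
    "apiVersion": "authentication.k8s.io/v1",
    "kind": "TokenReview",
    "spec": {"token": "s3cr3t"},
}
print(review_token(request, tokens)["status"])
```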

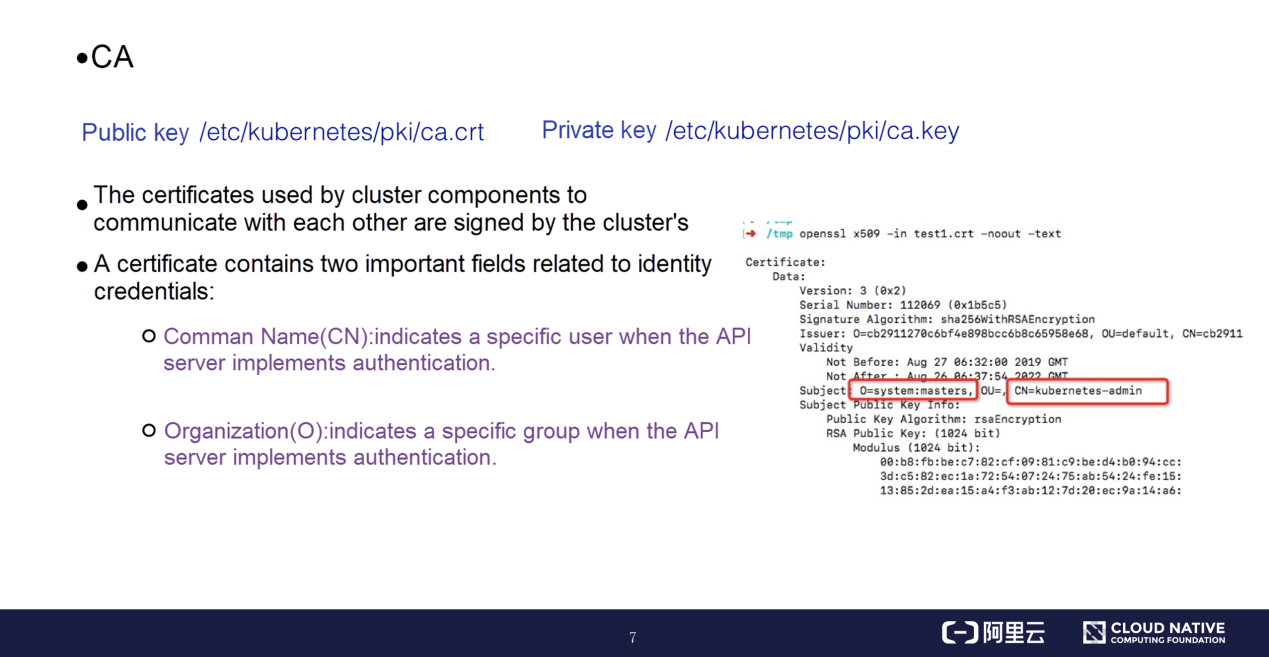

In a cluster certificate system, the CA certificate and private key form the most important certificate pair. By default, this pair is stored in the /etc/kubernetes/pki/ directory of the primary node. The cluster contains a root CA that signs the certificates required by all cluster components to communicate with each other. A certificate contains the common name (CN) and organization (O), which are the fields related to identity credentials.

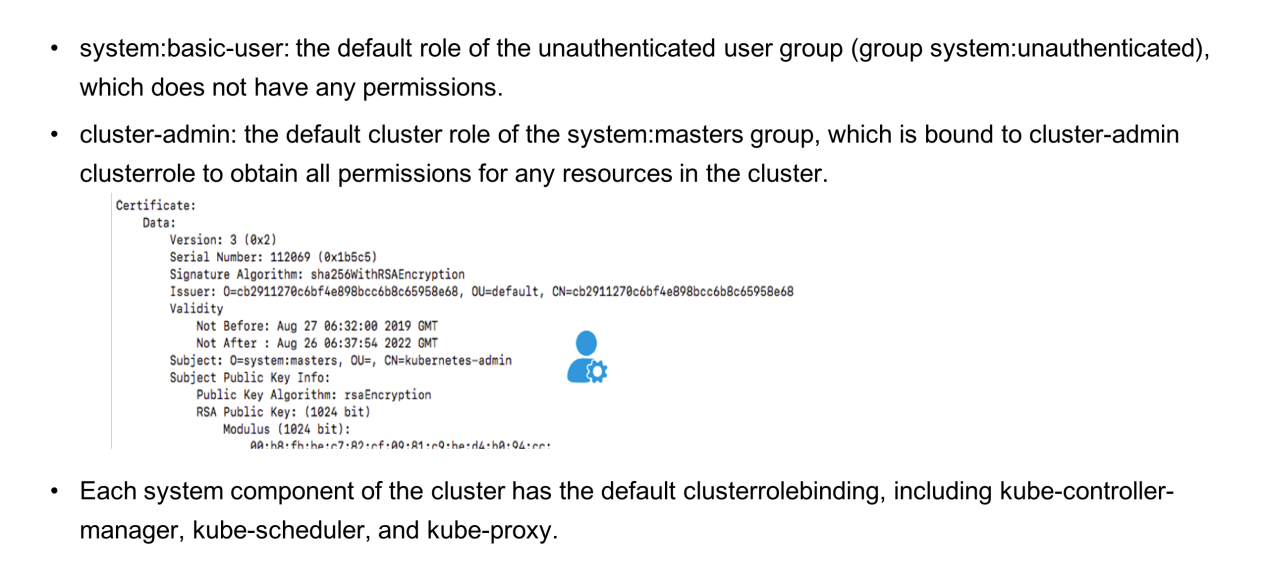

Certificates can be parsed by the openssl command. As shown on the right of the preceding figure, the CN and O fields are under "Subject" and contain useful information.

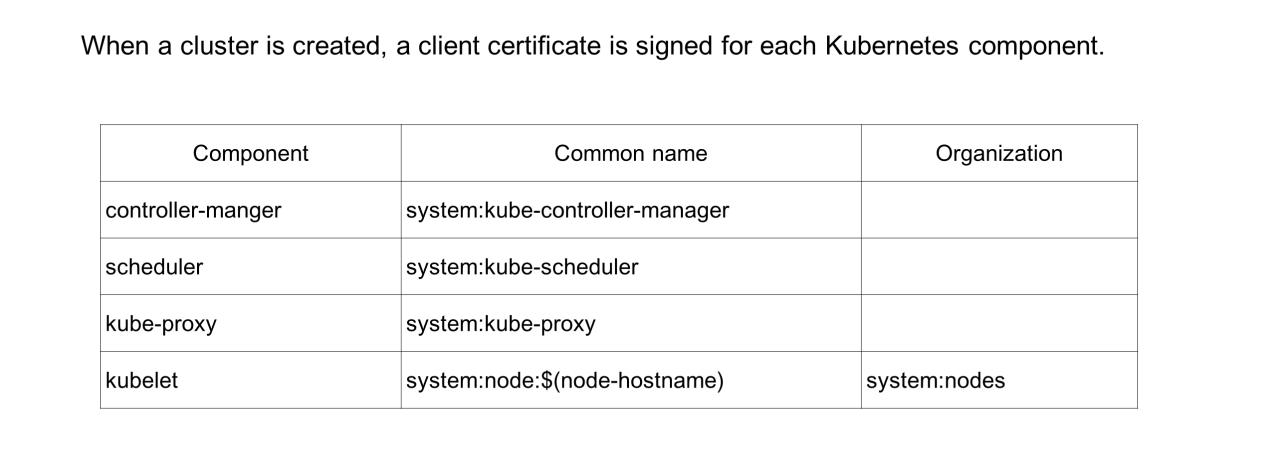

Each of the preceding component certificates has a specified common name and organization used to bind a specific role. In this way, each system component is only bound to the role and permissions within its function range. This ensures that each component is assigned the least permissions necessary.
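How the CN and O fields turn into a user model can be sketched as follows: the CN becomes the user name and each O value becomes a group. This is a simplified illustration that assumes the subject is given as a slash-separated string such as /CN=jane/O=dev. The class and function names are hypothetical, and the API server itself works on the decoded certificate rather than on a string.

```python
from dataclasses import dataclass, field


@dataclass
class UserInfo:
    """Simplified user model: a name plus the groups it belongs to."""
    username: str
    groups: list = field(default_factory=list)


def user_from_subject(subject: str) -> UserInfo:
    """Map a subject such as '/CN=jane/O=dev/O=ops' to a user model."""
    username = ""
    groups = []
    for part in subject.strip("/").split("/"):
        key, _, value = part.partition("=")
        if key == "CN":
            username = value      # common name -> user name
        elif key == "O":
            groups.append(value)  # each organization -> one group
    if not username:
        raise ValueError("subject has no CN field")
    return UserInfo(username, groups)


print(user_from_subject("/CN=jane/O=dev/O=ops"))
```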

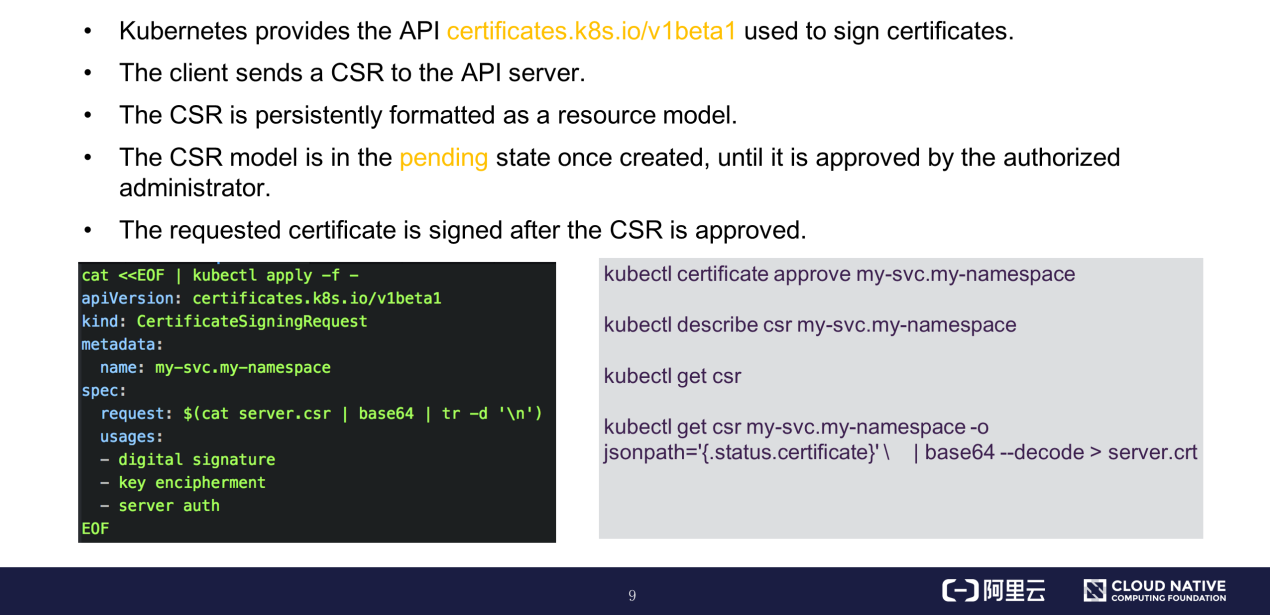

Kubernetes clusters provide an API for signing certificates. During cluster creation, cluster installers like kubeadm can call the corresponding APIs of the API server based on different certificate signing requests (CSRs). The API server creates a CSR instance based on this CSR resource model. The CSR instance is in the pending state once created and changes to the approved state after being approved by the authorized administrator. Then, the certificate is signed.

You can view the certificate information by running the command shown on the right in the preceding figure.

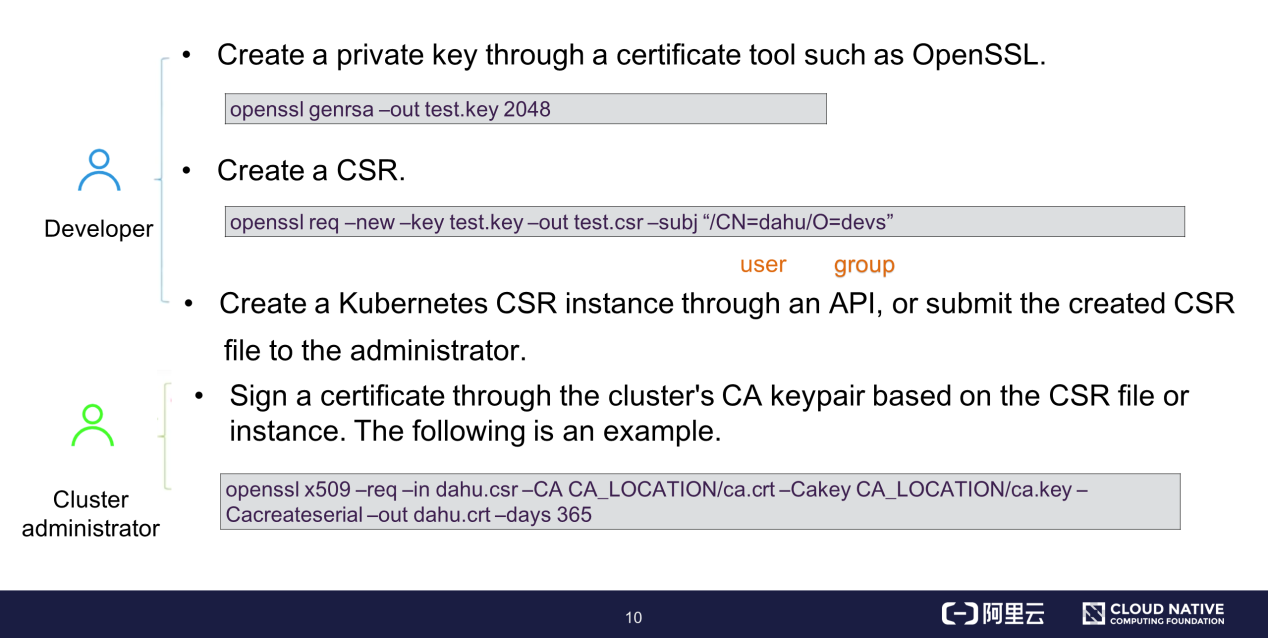

User certificate signing consists of the following steps: (1) Create a private key by using a certificate tool, such as OpenSSL; (2) Create an X.509 CSR file and specify the user and group in the subject (the CN and O fields); (3) Create a Kubernetes CSR instance through APIs and wait for the administrator to approve the instance.
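Step 3 can be sketched as building the CSR object that is submitted to the API server. The example below only assembles the manifest. The PEM content is a placeholder rather than a real request, and the object name and helper function are hypothetical.

```python
import base64
import json


def build_csr_manifest(name: str, csr_pem: bytes) -> dict:
    """Wrap a PEM-encoded X.509 CSR in a CertificateSigningRequest object."""
    return {
        "apiVersion": "certificates.k8s.io/v1",
        "kind": "CertificateSigningRequest",
        "metadata": {"name": name},
        "spec": {
            # The API expects the PEM file content, Base64-encoded.
            "request": base64.b64encode(csr_pem).decode(),
            # Signer for client certificates presented to the API server.
            "signerName": "kubernetes.io/kube-apiserver-client",
            "usages": ["client auth"],
        },
    }


# Placeholder bytes standing in for the CSR file created in step 2.
placeholder_pem = (
    b"-----BEGIN CERTIFICATE REQUEST-----\n"
    b"(contents of the CSR file)\n"
    b"-----END CERTIFICATE REQUEST-----\n"
)
manifest = build_csr_manifest("jane-csr", placeholder_pem)
print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and submitted with kubectl. The instance then stays pending until an administrator approves it.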

The cluster administrator can directly read the cluster's root CA and sign the certificate based on the X.509 CSR file, without having to define or approve the CSR instance. The last line in the preceding figure shows an example of signing a certificate through OpenSSL. In the command, specify the paths of the CSR file, the CA certificate (ca.crt), and the CA private key, as well as the expiration time of the signed certificate.

Cloud vendors typically let you generate cluster access credentials in one click based on the logon user and target cluster.

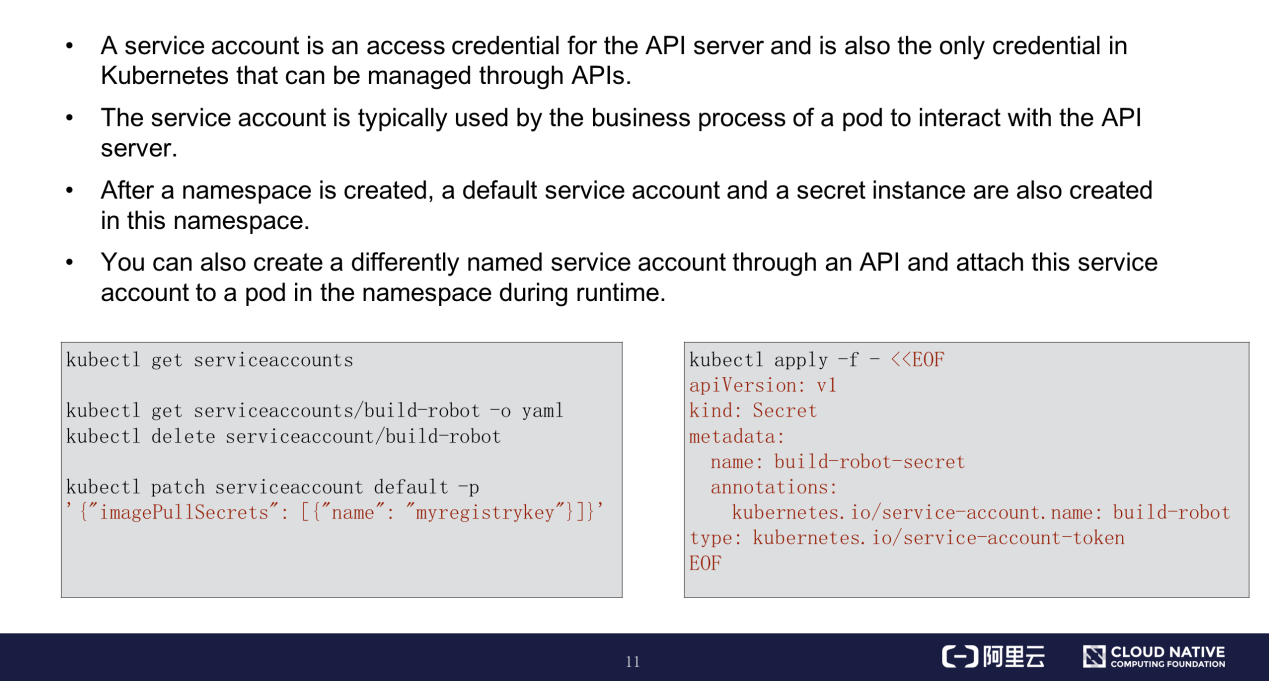

The API server also supports service account authentication in addition to certificate authentication. A service account is an access credential for the API server and is the only credential in Kubernetes that can be managed through APIs. For other features of service accounts, see the preceding figure.

The preceding figure also shows examples of how to use kubectl to add, delete, modify, and query service accounts through APIs. You can create a secret instance that maps the token of an existing service account. If you are interested, you can try this out in a Kubernetes cluster.

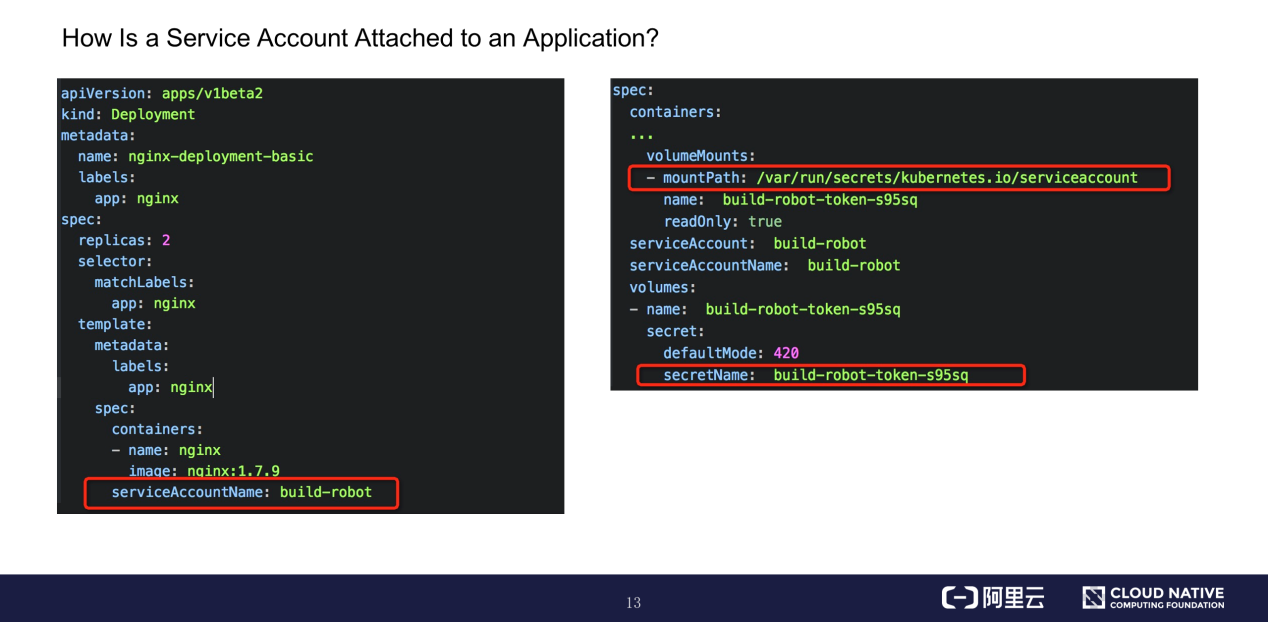

The following figure shows how to use a service account.

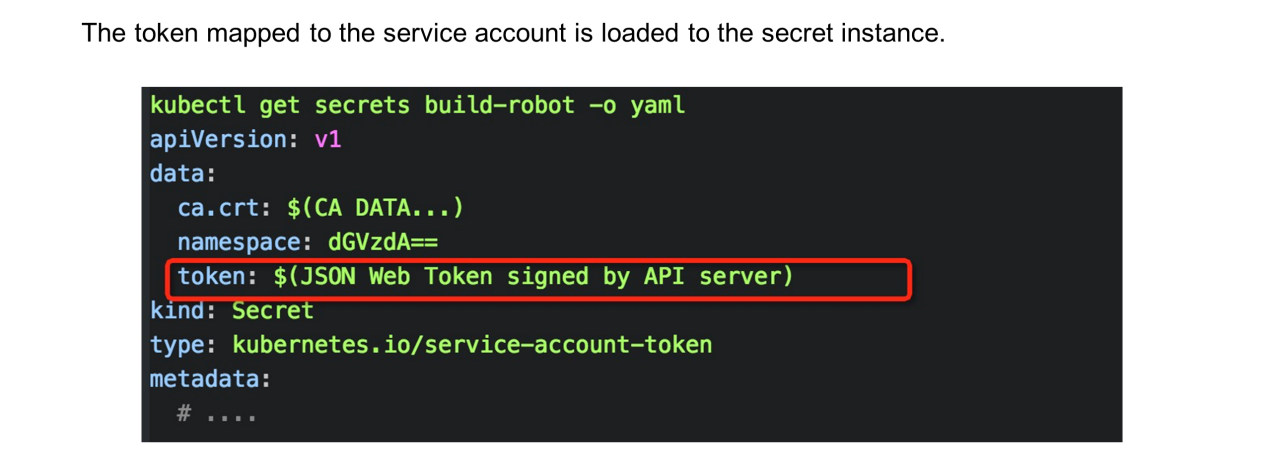

Run the kubectl get secret -o yaml command to view the secret instance of the service account. The token field contains the Base64-encoded JWT used for authentication.

When deploying an application, declare the name of the desired service account through the serviceAccountName field in the pod spec (spec.template.spec in a Deployment), not in the containers list. Pod creation is rejected if the specified service account does not exist.

The created pod template includes a volume for the secret instance of the service account. The CA certificate, namespace, and authentication token are mounted into a specified directory of the container in the form of files. The service account of a pod cannot be changed once the pod is created.
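An application inside the container can read these files to build its requests to the API server. The sketch below assumes the conventional mount directory /var/run/secrets/kubernetes.io/serviceaccount and the file names token, namespace, and ca.crt. The function name is hypothetical, and the directory is a parameter so the function can be tried outside a pod.

```python
from pathlib import Path

# Conventional mount point of the service account files inside a container.
DEFAULT_SA_DIR = "/var/run/secrets/kubernetes.io/serviceaccount"


def load_service_account(sa_dir: str = DEFAULT_SA_DIR) -> dict:
    """Read the mounted namespace and token, and locate the CA bundle."""
    base = Path(sa_dir)
    return {
        "namespace": (base / "namespace").read_text().strip(),
        "token": (base / "token").read_text().strip(),
        # The CA file is passed by path to a TLS client, so it is not read here.
        "ca_path": str(base / "ca.crt"),
    }


def auth_header(credentials: dict) -> dict:
    """Build the HTTP header that carries the token as a bearer token."""
    return {"Authorization": "Bearer " + credentials["token"]}
```

Inside a pod, `auth_header(load_service_account())` yields the header to send with each API request, while `ca_path` lets the client verify the API server's certificate.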

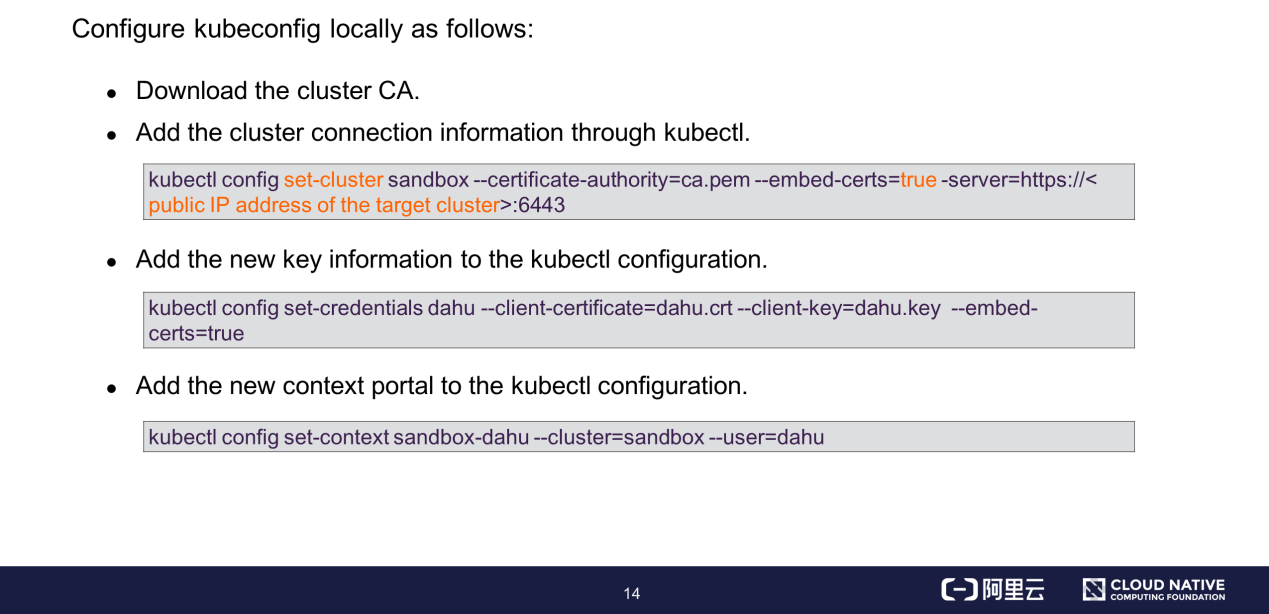

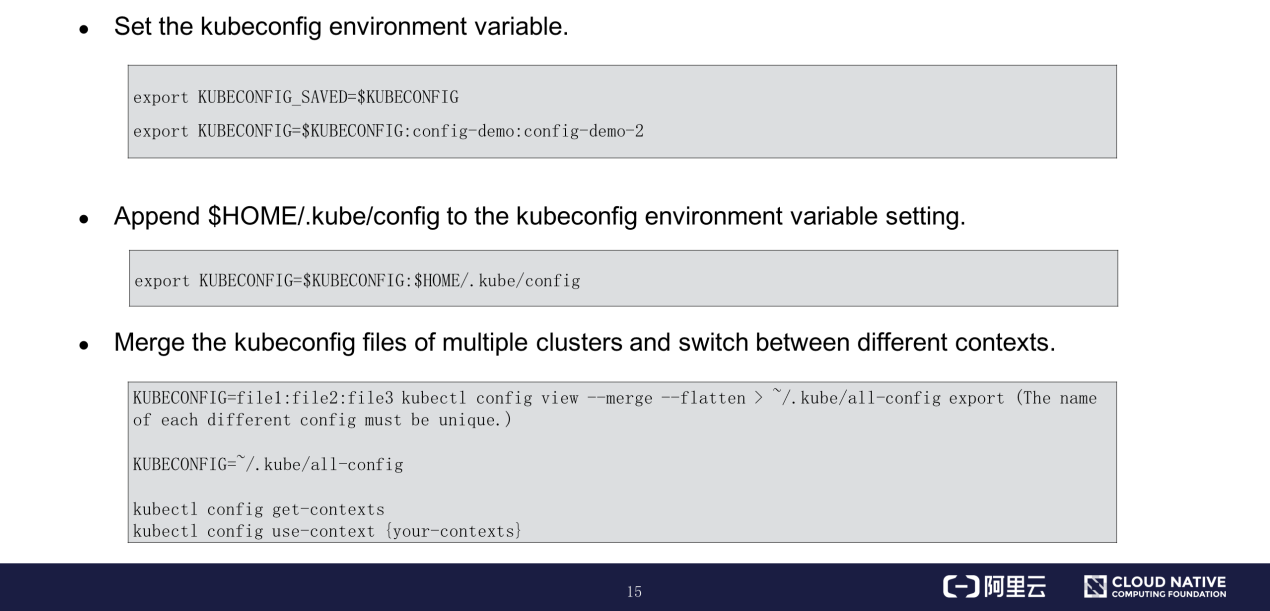

kubeconfig is an important access credential for connecting a local device to a Kubernetes cluster. This section describes how to configure and use kubeconfig.
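The structure of a kubeconfig file can be sketched as a dictionary with three lists (clusters, users, and contexts) plus a pointer to the current context. All names, paths, and the server address below are made-up placeholders, and the resolver function is a hypothetical helper that mimics how a client chooses which cluster and credential to use.

```python
# Hypothetical kubeconfig content, expressed as a Python dict.
kubeconfig = {
    "apiVersion": "v1",
    "kind": "Config",
    "current-context": "dev-admin@demo",
    "clusters": [{
        "name": "demo",
        "cluster": {
            "server": "https://203.0.113.10:6443",
            "certificate-authority": "/path/to/ca.crt",
        },
    }],
    "users": [{
        "name": "dev-admin",
        "user": {
            "client-certificate": "/path/to/dev-admin.crt",
            "client-key": "/path/to/dev-admin.key",
        },
    }],
    "contexts": [{
        "name": "dev-admin@demo",
        "context": {"cluster": "demo", "user": "dev-admin", "namespace": "test"},
    }],
}


def resolve_current_context(config: dict) -> dict:
    """Follow current-context to the cluster and user entries it names."""
    name = config["current-context"]
    context = next(c["context"] for c in config["contexts"] if c["name"] == name)
    cluster = next(c["cluster"] for c in config["clusters"]
                   if c["name"] == context["cluster"])
    user = next(u["user"] for u in config["users"] if u["name"] == context["user"])
    return {
        "server": cluster["server"],
        "namespace": context.get("namespace", "default"),
        "credential_keys": sorted(user),
    }


resolved = resolve_current_context(kubeconfig)
print(resolved)
```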

After authenticating a request, the API server considers the requester to be a valid user and proceeds to the next step, authorization:

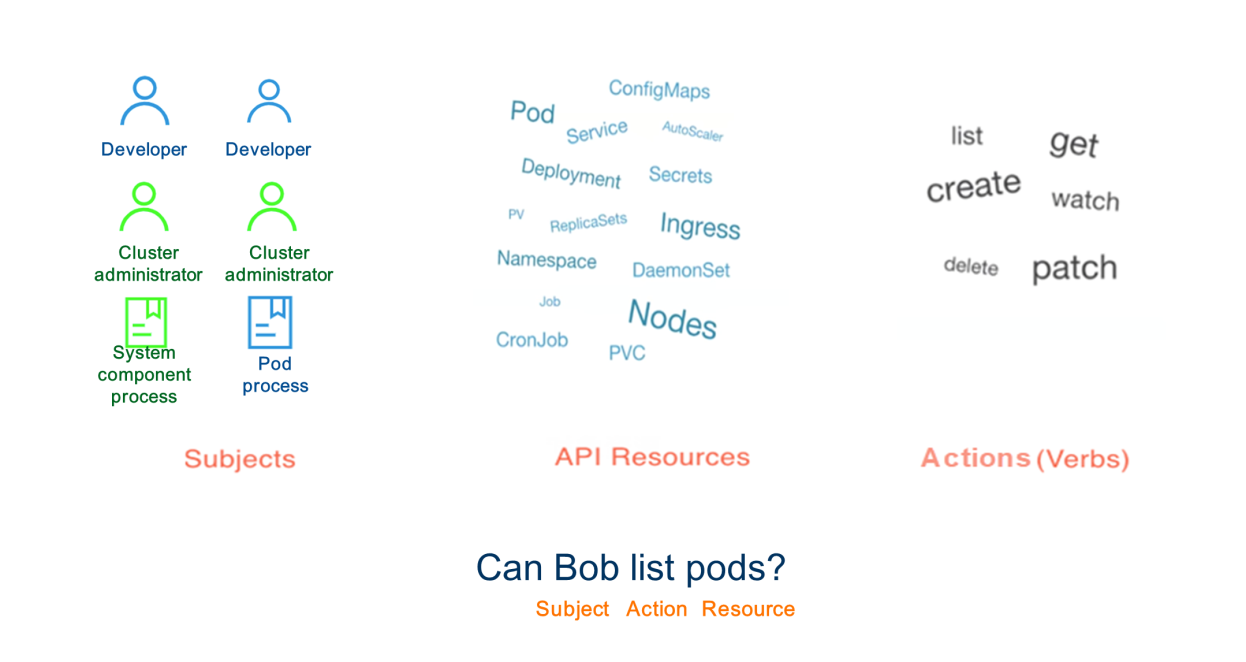

In the authorization phase, the API server controls the user's access to specific resources in specific namespaces of the cluster. The API server supports multiple authorization methods. This section describes role-based access control (RBAC), an authorization method that provides robust security protection.

For example, assume that an authenticated user named Bob requests to list all pods in a namespace. This request's authorization semantics are "Can Bob list pods?" Bob is the subject that requests to perform the list action on resources, specifically pods.

The preceding example shows the three elements of the RBAC role model: the subject (Bob), the action (list), and the resource (pods). A role must also be bound to a specific control domain based on the RBAC policy. This control domain is a namespace in Kubernetes. Namespaces are used to limit the operations of Kubernetes APIs to different scopes, allowing us to logically isolate users in a multi-tenant cluster.

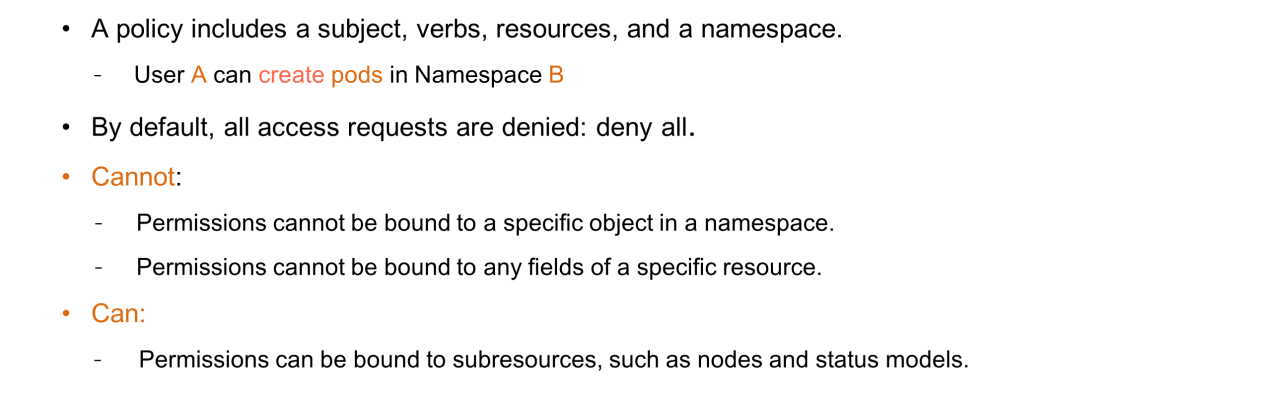

The preceding example can be changed as follows: User A can create pods in Namespace B. RBAC denies all access requests if no permissions are bound.
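The decision logic described here, namely subject, action, resource, namespace, and deny by default, can be sketched as a small evaluator. This is a deliberately simplified model with hypothetical class and function names. It is not the API server's implementation and ignores details such as wildcards and cluster-scoped bindings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    api_groups: tuple
    resources: tuple
    verbs: tuple


@dataclass(frozen=True)
class Binding:
    namespace: str   # control domain in which the rules apply
    subjects: tuple  # user or group names the role is bound to
    rules: tuple     # rules of the bound role


def is_allowed(bindings, user, groups, verb, resource, namespace, api_group=""):
    """Return True only if some binding grants the request; otherwise deny."""
    identities = {user, *groups}
    for binding in bindings:
        if binding.namespace != namespace:
            continue
        if not identities & set(binding.subjects):
            continue
        for rule in binding.rules:
            if (api_group in rule.api_groups
                    and resource in rule.resources
                    and verb in rule.verbs):
                return True
    return False  # nothing bound means nothing allowed


pod_reader = Rule(api_groups=("",), resources=("pods",), verbs=("get", "list"))
bindings = [Binding(namespace="b", subjects=("bob",), rules=(pod_reader,))]

print(is_allowed(bindings, "bob", [], "list", "pods", "b"))
print(is_allowed(bindings, "bob", [], "delete", "pods", "b"))
```

The first call is granted by the binding. The second falls through every rule and is denied, as is any request from a user without a binding or in another namespace.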

RBAC implements fine-grained access control on the API server. However, fine granularity is a relative concept because RBAC binds permissions at the level of resource models. Permissions cannot be bound to individual fields of a resource, and restricting a rule to specific object instances is possible only in a limited way, by listing object names in the rule's resourceNames field.

RBAC can also control access at the level of Kubernetes API subresources. For example, RBAC can control access to the status subresource of nodes (nodes/status).

This section describes the types of permissions and objects that RBAC can bind.

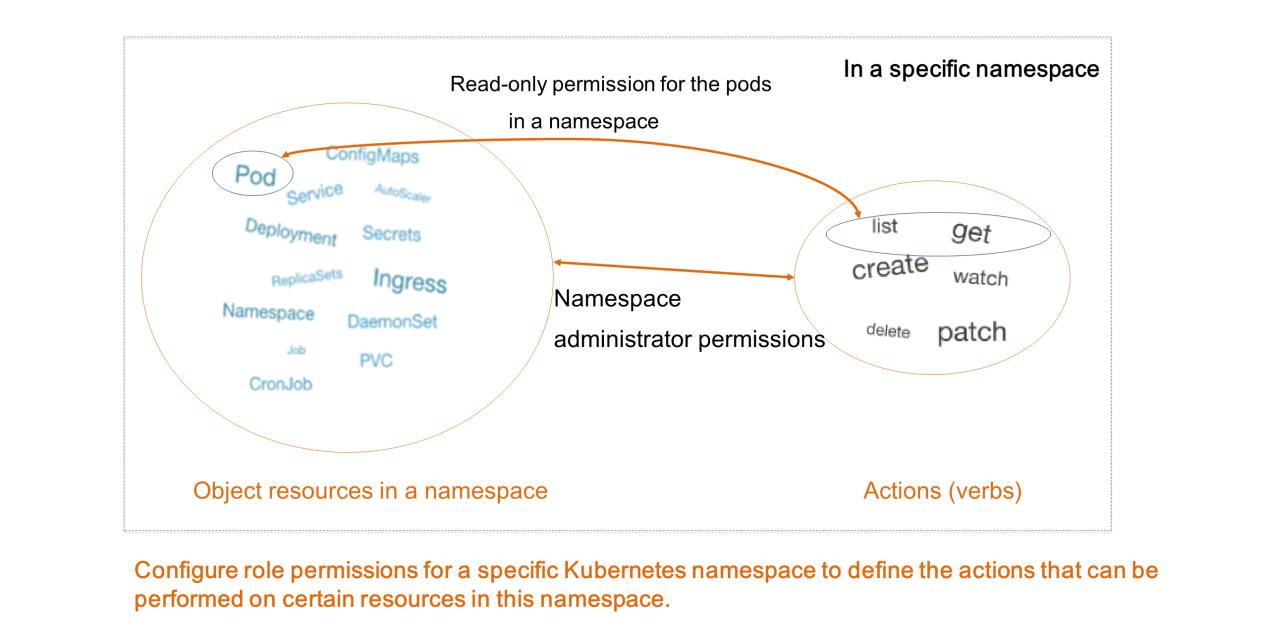

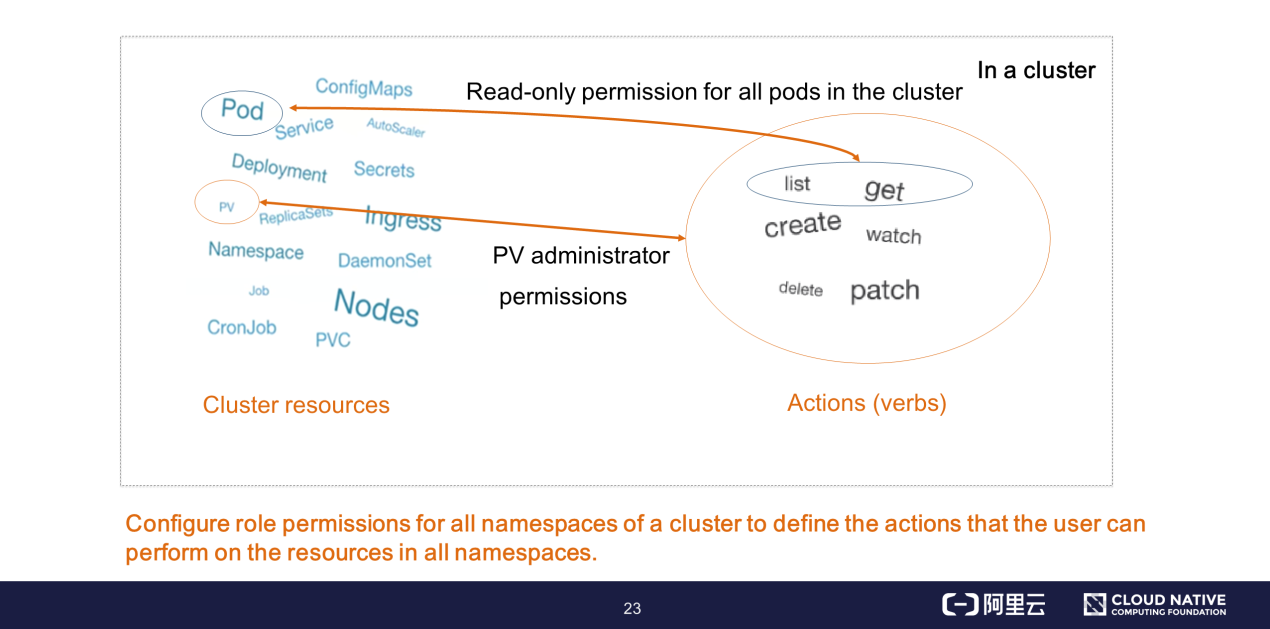

A role defines the actions that a user can perform on the resources in a specific Kubernetes namespace. For example, you can define a role with read-only permission for the pods in a namespace, or define a role with administrator permissions that can perform any actions on all object resources in a namespace.

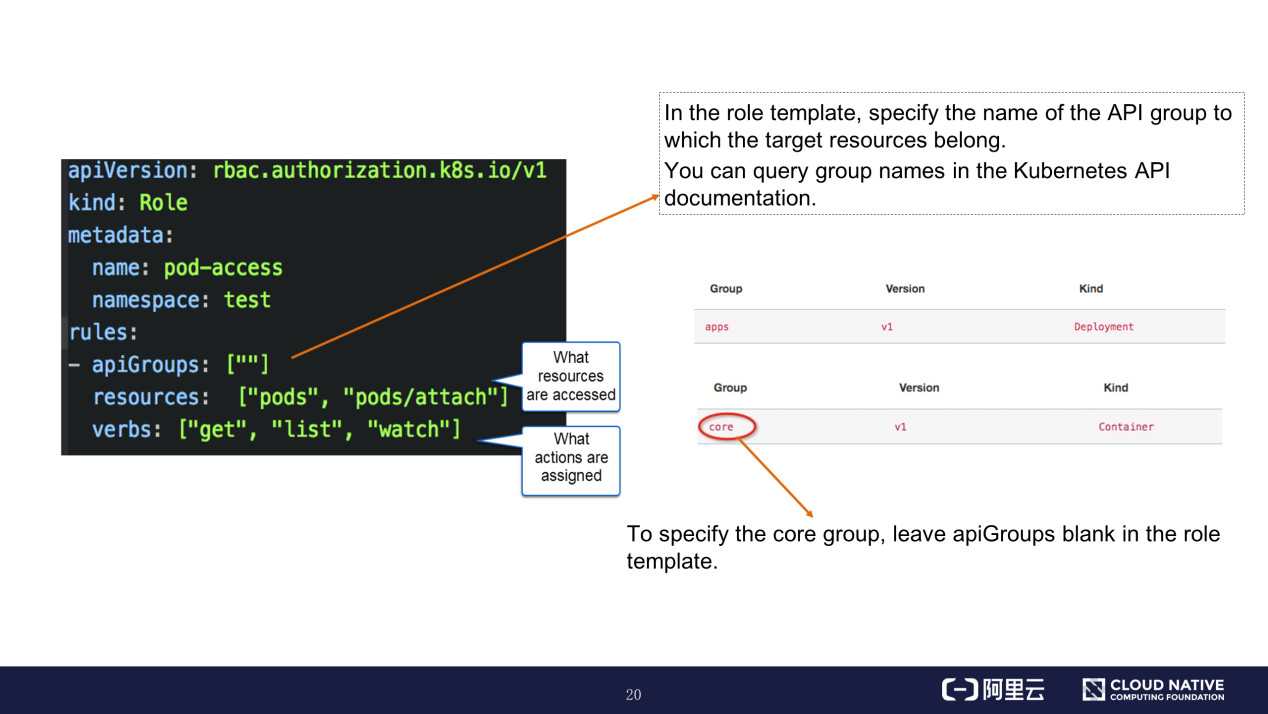

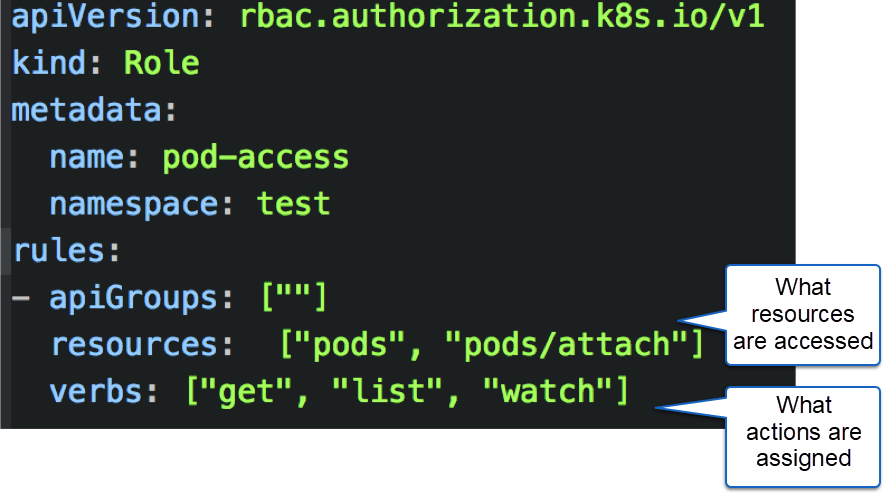

The preceding figure shows the orchestration file of a role definition template. The resources field defines the resources that the role can access, and the verbs field defines the actions that the role is authorized to perform. Set the apiGroups field to the name of the group to which the target resources belong. You can query group names in the Kubernetes API documentation. To specify the core group, set apiGroups to an empty string ("") in the role definition template.
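For readers without the figure, a role definition can be sketched as the following object, printed as JSON (which kubectl accepts alongside YAML). The role name pod-reader and the namespace test are illustrative choices. Note that the core API group is written as an empty string in apiGroups.

```python
import json

# A namespaced role that grants read-only access to pods.
pod_reader_role = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "Role",
    "metadata": {"namespace": "test", "name": "pod-reader"},
    "rules": [
        {
            "apiGroups": [""],          # "" denotes the core API group
            "resources": ["pods"],      # resources the role can access
            "verbs": ["get", "list", "watch"],  # actions the role may perform
        }
    ],
}

print(json.dumps(pod_reader_role, indent=2))
```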

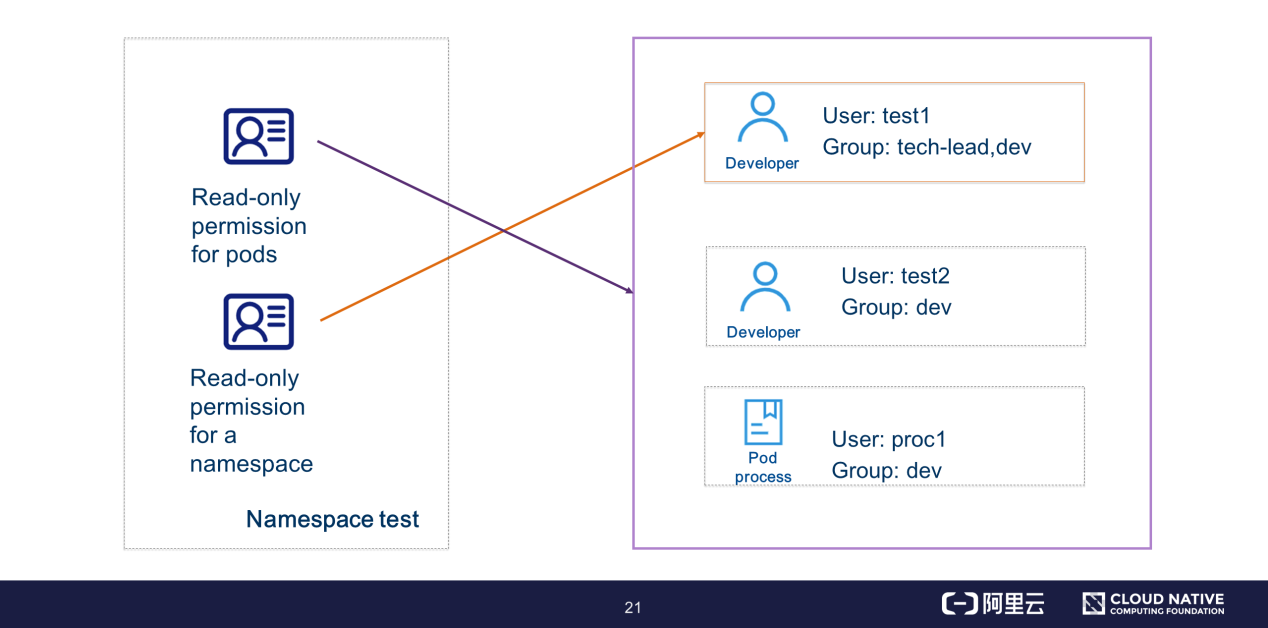

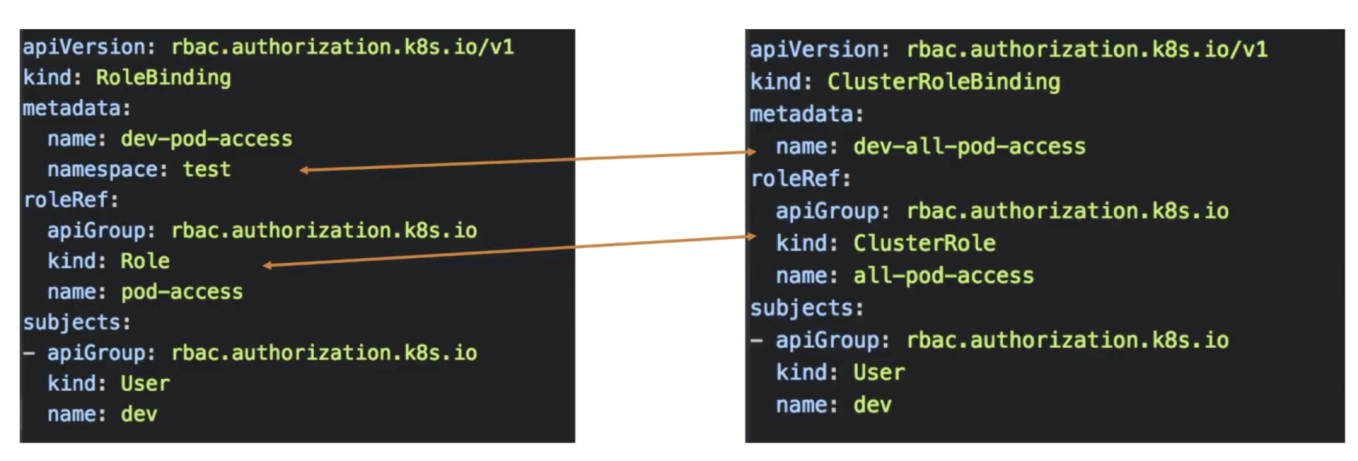

After defining a role in a namespace, you need to bind the role to a subject through a role binding model so that this subject is assigned the role's permission model.

For example, you can assign the read-only permission for pods in the namespace "test" to the users test1, test2, and proc1. Alternatively, you can assign the read-only permission for the namespace "test" to the user test1 in the tech-lead group. In this case, users test2 and proc1 do not have the "get namespace" permission.

Let's take a look at the orchestration file template of role binding.

The roleRef field declares the role to be bound. Only one role can be specified in a binding relationship. The subjects field defines the objects to be bound, each of which can be a user, group, or service account. You can bind multiple subjects to a single role.
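A role binding with one roleRef and several subjects can be sketched as follows. The helper function and the names read-pods and pod-reader are hypothetical, while the subject names come from the example above. Users and groups carry the RBAC API group, whereas a service account subject carries a namespace instead.

```python
import json

RBAC_GROUP = "rbac.authorization.k8s.io"


def build_role_binding(name, namespace, role_name,
                       users=(), groups=(), service_accounts=()):
    """Bind exactly one role to any number of subjects in a namespace."""
    subjects = []
    for user in users:
        subjects.append({"kind": "User", "name": user, "apiGroup": RBAC_GROUP})
    for group in groups:
        subjects.append({"kind": "Group", "name": group, "apiGroup": RBAC_GROUP})
    for account in service_accounts:
        subjects.append({"kind": "ServiceAccount", "name": account,
                         "namespace": namespace})
    return {
        "apiVersion": RBAC_GROUP + "/v1",
        "kind": "RoleBinding",
        "metadata": {"name": name, "namespace": namespace},
        "subjects": subjects,
        # roleRef names a single role and cannot list several.
        "roleRef": {"apiGroup": RBAC_GROUP, "kind": "Role", "name": role_name},
    }


binding = build_role_binding("read-pods", "test", "pod-reader",
                             users=("test1", "test2", "proc1"))
print(json.dumps(binding, indent=2))
```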

In addition to defining a permission model in a specific namespace, you can define a cluster-level permission model through a cluster role. For example, resources such as persistent volumes (PVs) and nodes are cluster-scoped and therefore cannot be covered by a role in a namespace, but you can define permissions for them through a cluster role. The actions that can be performed on the resources are the same as the role-defined actions mentioned earlier, such as create, delete, update, get, list, and watch.

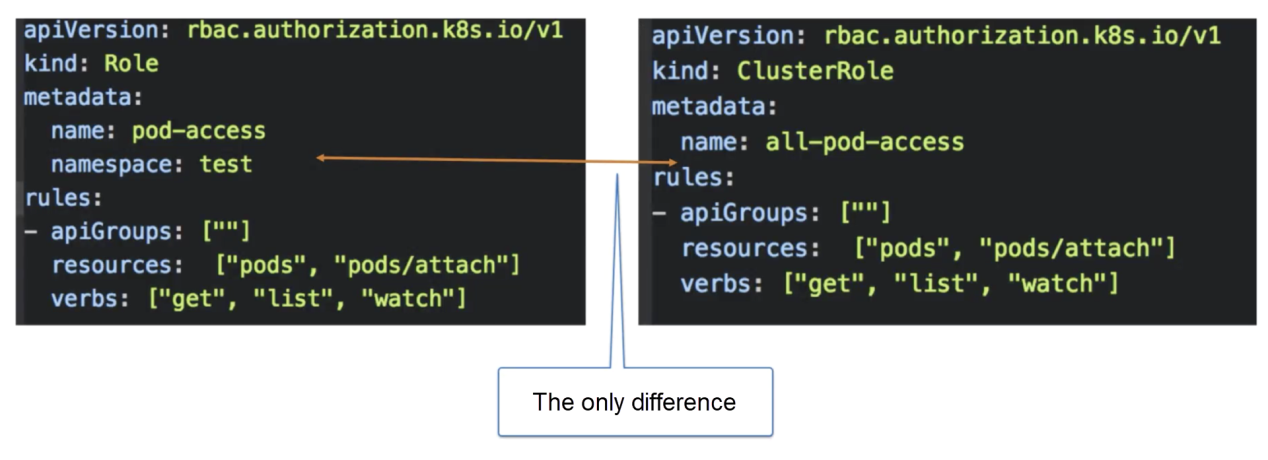

The following figure shows the orchestration file template of a cluster role.

The only difference between a cluster role orchestration file and a role orchestration file is that a cluster role defines all permissions at the cluster level, rather than at the namespace level.

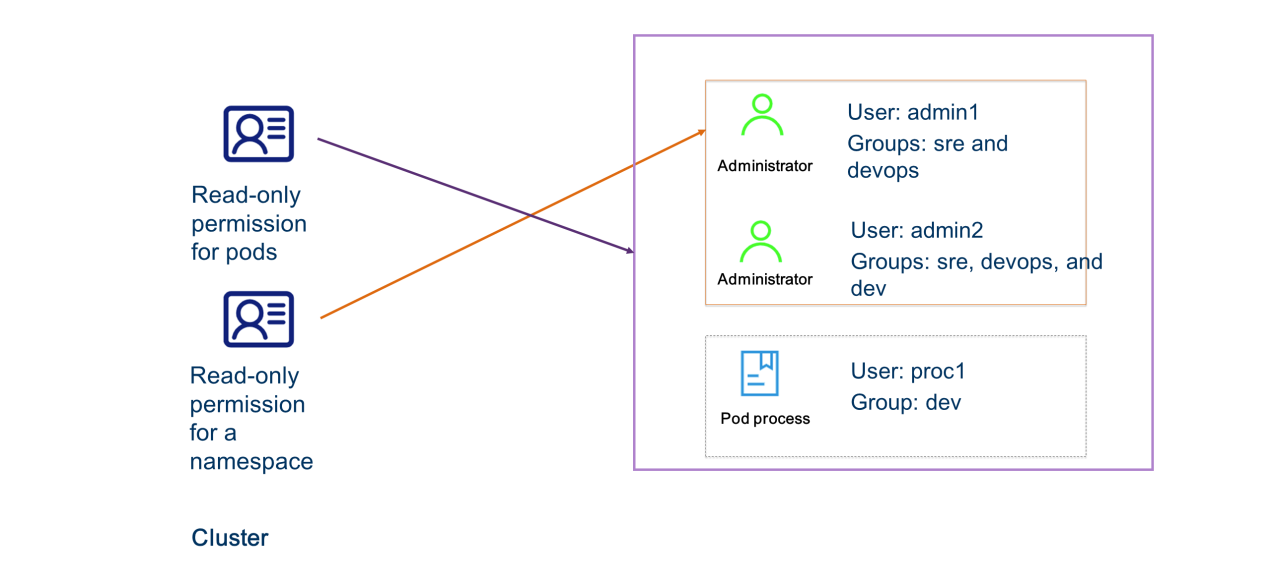

You can bind a cluster role to a subject. A model instance of cluster role binding allows you to bind a cluster role to specific subjects in all namespaces of a cluster.

For example, you can assign the List permission for all namespaces to the administrators named admin1 and admin2 in the group "sre" or "devops".

The cluster role binding template has the same format as the role binding template, with two differences: its metadata has no namespace, and its roleRef must reference a cluster role.
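The example of granting the list permission on namespaces to the sre and devops groups can be sketched as a cluster role plus a cluster role binding. The object names namespace-lister and list-namespaces are illustrative. Neither object has a namespace in its metadata.

```python
import json

RBAC_GROUP = "rbac.authorization.k8s.io"

# Cluster-level permission model: list namespaces across the cluster.
namespace_lister = {
    "apiVersion": RBAC_GROUP + "/v1",
    "kind": "ClusterRole",
    "metadata": {"name": "namespace-lister"},
    "rules": [
        {"apiGroups": [""], "resources": ["namespaces"], "verbs": ["list"]}
    ],
}

# Binds the cluster role to two groups for the whole cluster.
namespace_lister_binding = {
    "apiVersion": RBAC_GROUP + "/v1",
    "kind": "ClusterRoleBinding",
    "metadata": {"name": "list-namespaces"},
    "subjects": [
        {"kind": "Group", "name": "sre", "apiGroup": RBAC_GROUP},
        {"kind": "Group", "name": "devops", "apiGroup": RBAC_GROUP},
    ],
    "roleRef": {
        "apiGroup": RBAC_GROUP,
        "kind": "ClusterRole",
        "name": namespace_lister["metadata"]["name"],
    },
}

for obj in (namespace_lister, namespace_lister_binding):
    print(json.dumps(obj, indent=2))
```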

As mentioned earlier, RBAC denies all access requests when no permissions are bound. In this case, how do system components send requests to each other?

When a cluster is created, a client certificate is created for each system component, along with the roles and role bindings that correspond to the identity in that certificate. In this way, the components are assigned the permissions required for interacting with each other.

The following figure shows the predefined cluster roles.

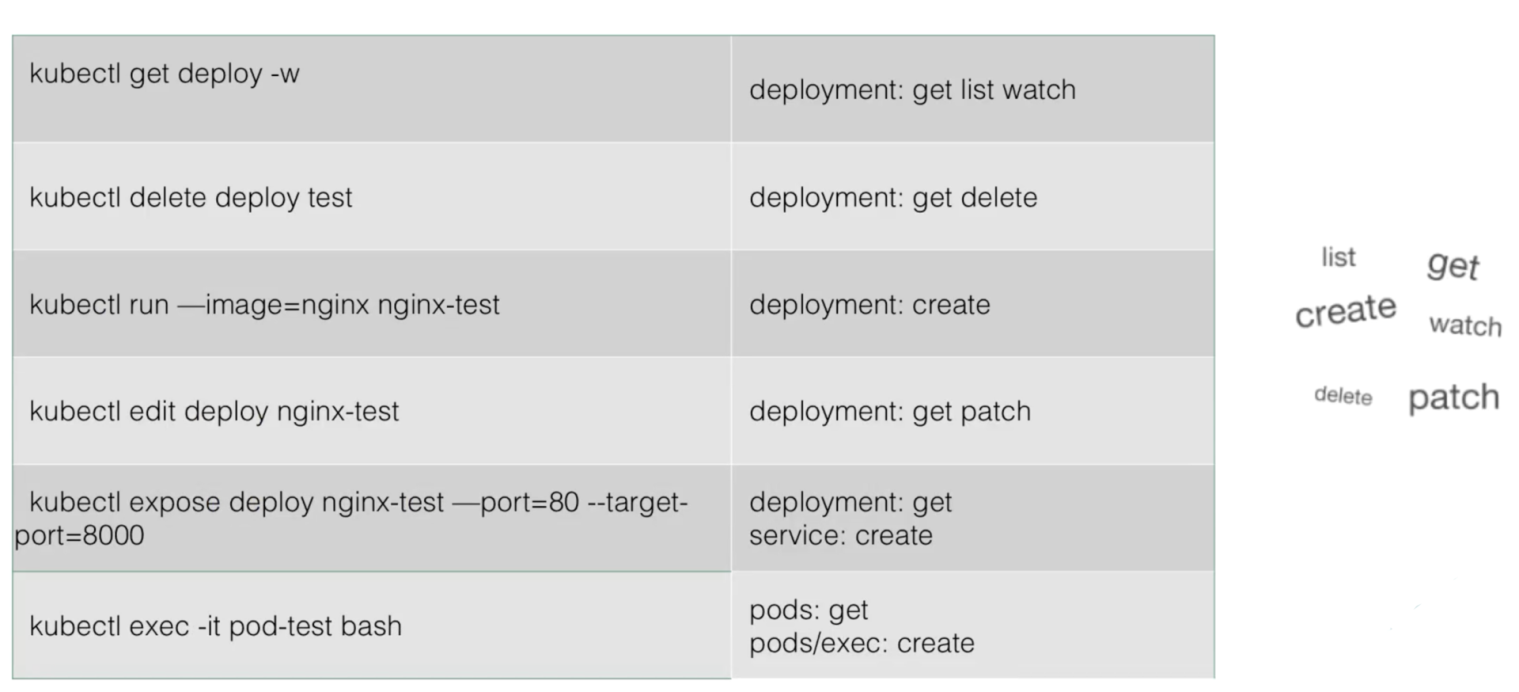

At the moment, you have a basic understanding of the RBAC model. However, further knowledge and practical skills are required to define an action policy for each API model in a complex multi-tenant scenario. This section describes how to use kubectl in RBAC.

For example, to allow users to edit a Deployment, you need to add the get and patch permissions for Deployments in the related role template. To allow users to perform the exec action on a pod, you need to add the get permission for pods in the related role template and add the create permission for the pods/exec subresource.
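The two cases can be sketched as rule lists together with a small matcher that shows why both rules are needed for exec. The matcher is a hypothetical simplification that performs exact string matching on group, resource, and verb, with subresources written as resource/subresource.

```python
def allowed(rules, verb, resource, api_group=""):
    """Return True if any rule grants the verb on the resource."""
    return any(
        api_group in rule["apiGroups"]
        and resource in rule["resources"]
        and verb in rule["verbs"]
        for rule in rules
    )


# Editing a Deployment: read the object, then send a patch.
deployment_editor_rules = [
    {"apiGroups": ["apps"], "resources": ["deployments"],
     "verbs": ["get", "patch"]},
]

# Running exec in a pod: read the pod, then create on its exec subresource.
pod_exec_rules = [
    {"apiGroups": [""], "resources": ["pods"], "verbs": ["get"]},
    {"apiGroups": [""], "resources": ["pods/exec"], "verbs": ["create"]},
]

print(allowed(deployment_editor_rules, "patch", "deployments", "apps"))
print(allowed(pod_exec_rules, "create", "pods/exec"))
print(allowed(pod_exec_rules, "create", "pods"))
```

The last call is denied: permission to create on pods/exec does not imply permission to create pods.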

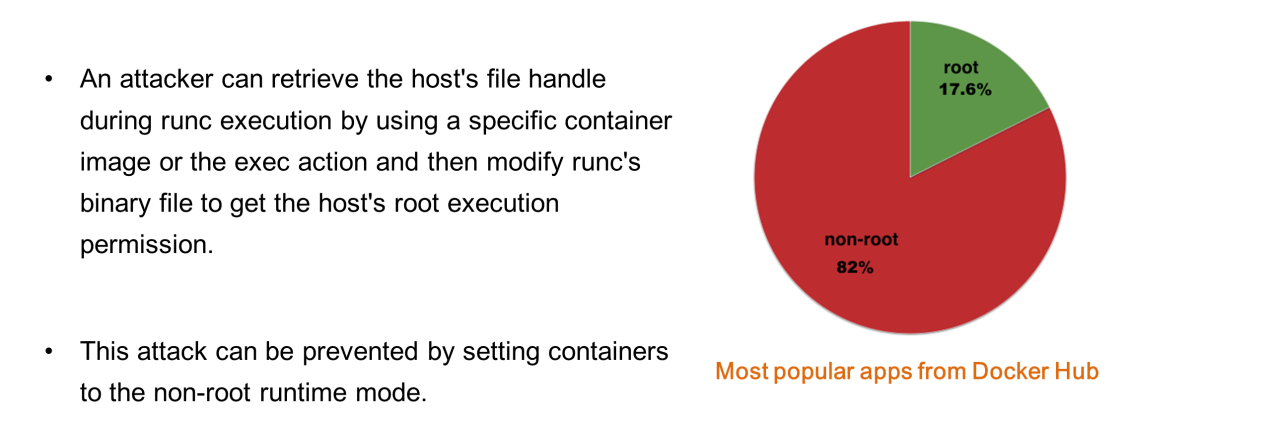

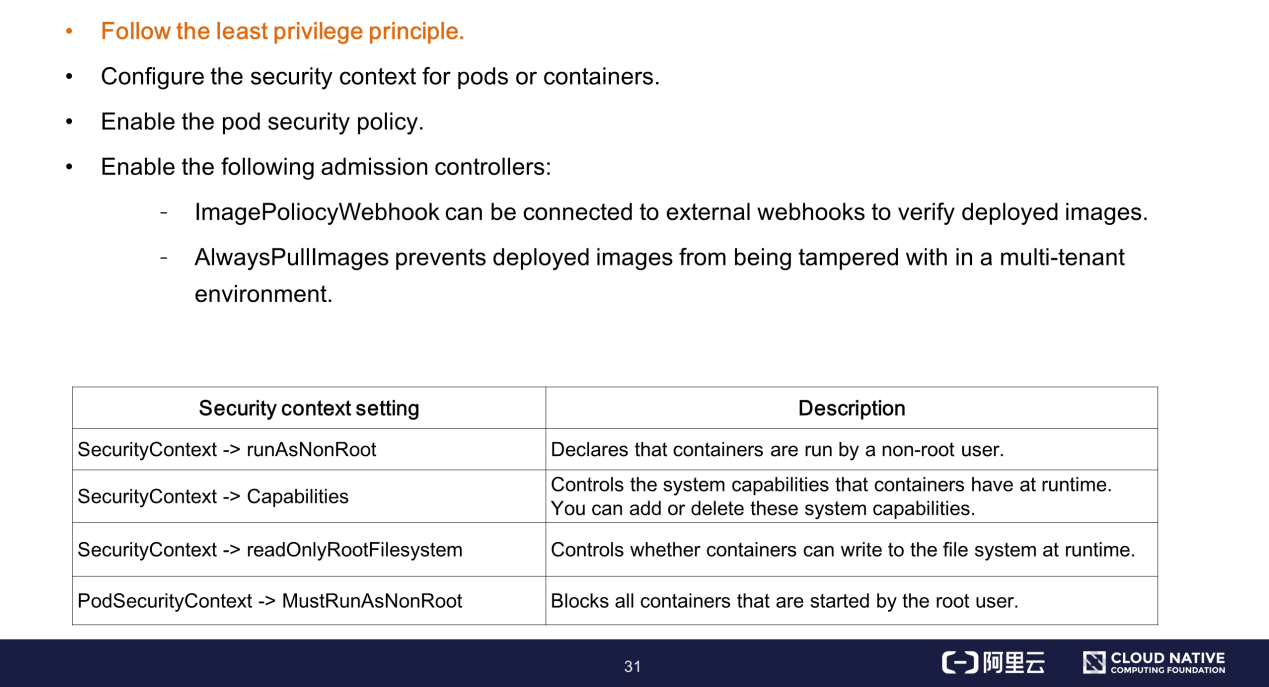

Statistics from Docker Hub show that 82.4% of mainstream business images are started by root users. These figures give little reason for optimism about how widely security contexts are used.

The preceding analysis results show that attacks can be prevented by setting safe runtime parameters for business containers. In this case, what type of runtime security hardening is required by the business containers in a Kubernetes cluster?

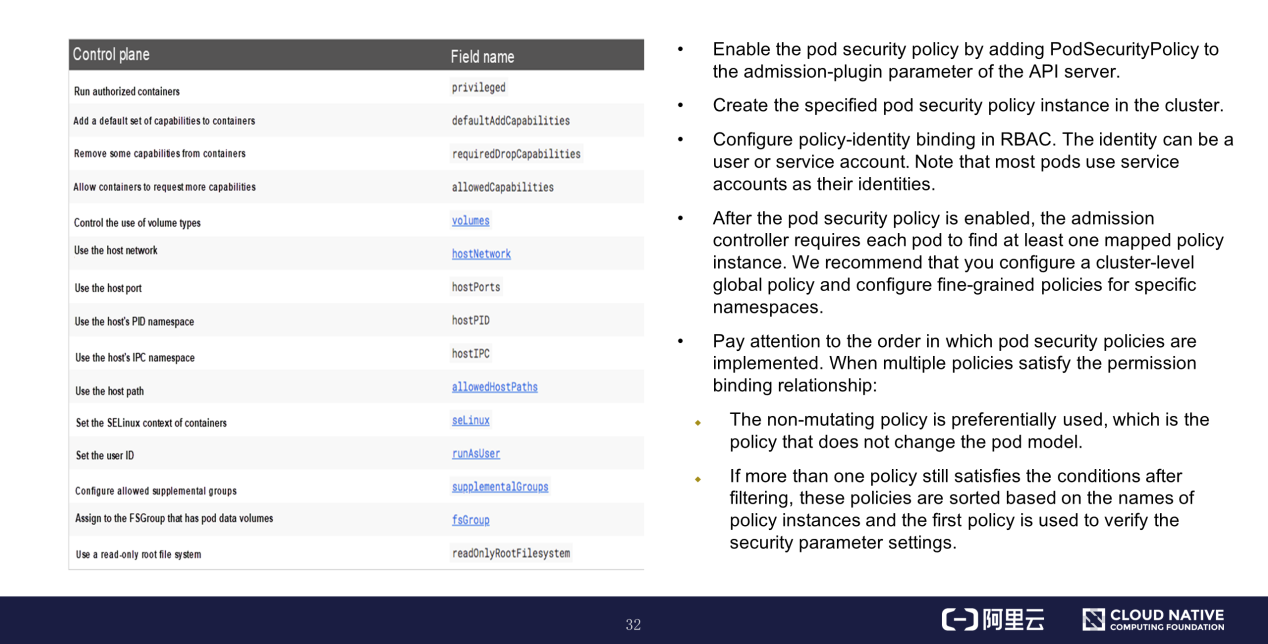

This section provides important information about using the pod security policy.

You can directly operate the pod security policy instance through APIs. The left part of the preceding figure shows the parameters of the pod security policy, such as the privileged container, system capabilities, runtime user ID, and file system permissions. For more information about these parameters, see the official documentation.

Security parameter settings are verified based on the pod security policy before business containers are run. The pod is prevented from running if this policy is not satisfied.
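The admission check can be sketched as a function that compares the security settings of each container with a policy and reports violations. This is a heavily simplified model under stated assumptions: the two policy switches are hypothetical stand-ins for the much richer pod security policy parameters, and a container without an explicit runAsUser is treated as running as root.

```python
def policy_violations(pod_spec, allow_privileged=False, must_run_as_non_root=True):
    """Return a list of human-readable violations; empty means admitted."""
    violations = []
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        security = container.get("securityContext", {})
        if security.get("privileged", False) and not allow_privileged:
            violations.append(name + ": privileged containers are not allowed")
        # Assumption of this sketch: a missing runAsUser counts as root (0).
        if must_run_as_non_root and security.get("runAsUser", 0) == 0:
            violations.append(name + ": must not run as root")
    return violations


risky_pod = {"containers": [
    {"name": "app", "securityContext": {"privileged": True}},
]}
hardened_pod = {"containers": [
    {"name": "app", "securityContext": {"privileged": False, "runAsUser": 1000}},
]}

print(policy_violations(risky_pod))
print(policy_violations(hardened_pod))
```

A pod with a non-empty violation list would be prevented from running. The hardened pod yields an empty list and would be admitted.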

The right part of the preceding figure shows the precautions that you must observe when using the pod security policy.

This section summarizes the best practices of security hardening in a multi-tenant environment through the native security capabilities of Kubernetes.

Let's summarize what we have learned in this article.
