AnalyticDB for MySQL, Alibaba Cloud's cloud-native data warehouse, grew out of the company's best practices for Double 11 e-commerce online analysis at the ten-billion scale. It is the industry's first cloud data warehouse compatible with the MySQL protocol, and it ranks first in the world on the TPC-DS 10 TB benchmark. Enterprises only need data analysts with SQL skills, who can use Quick BI, DataV, or in-house visual reports to visualize key business indicators in real time. This helps enterprises move to data-driven decision-making.

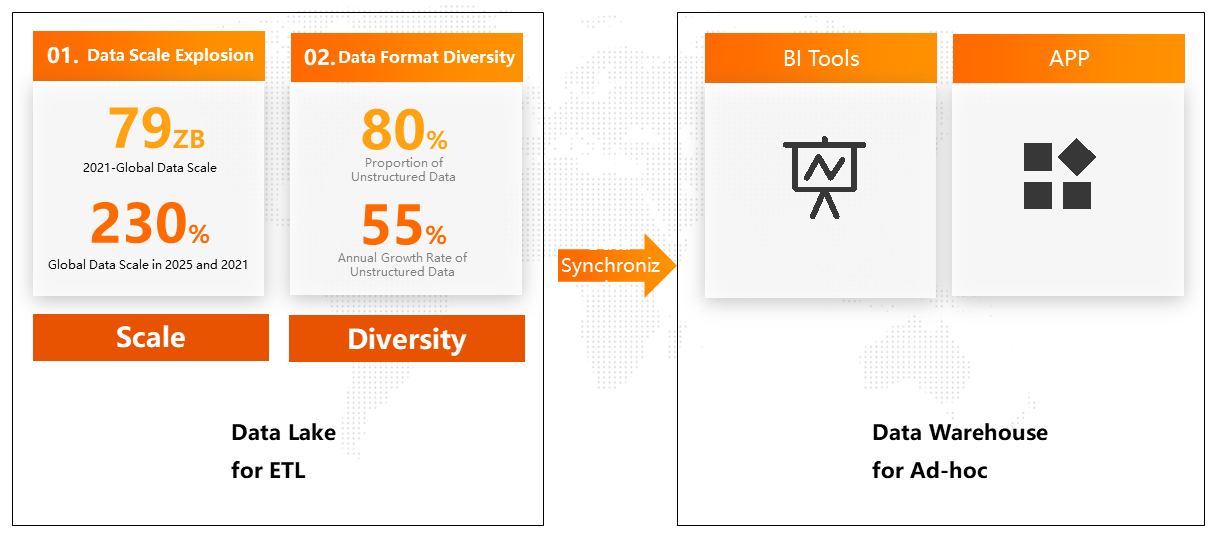

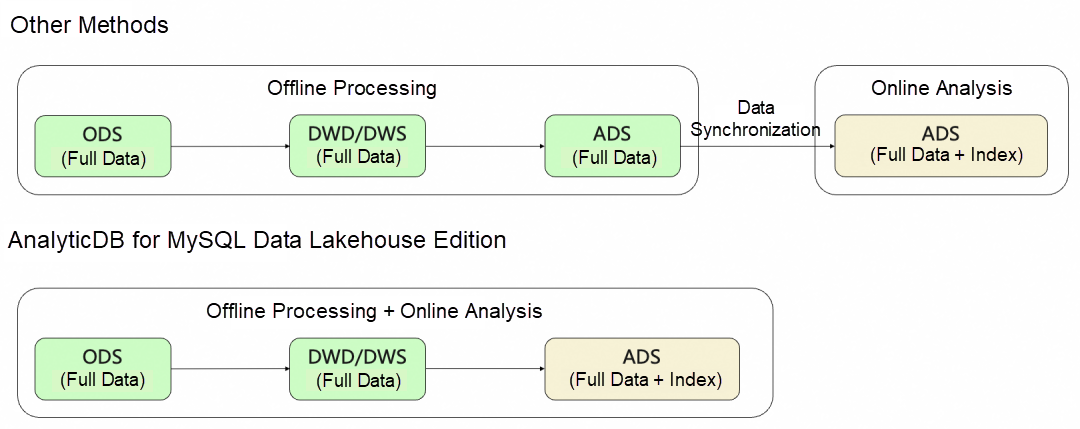

While working with customers on business innovation, we found that as the number of business customers grows, business complexity increases, and existing data accumulates, data volume has grown from gigabytes to nearly petabytes. Data formats have broadened as well: structured data centered on TP data sources is now joined by a large amount of semi-structured data (such as JSON) and unstructured data. Customers usually run one round of offline processing in the data lake to clean, filter, and organize the data, and then use data synchronization tools to move it into AnalyticDB for online analysis.

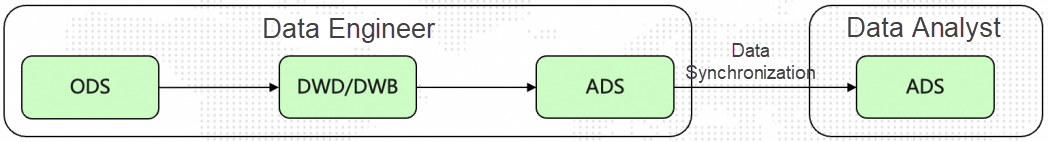

When data is synchronized between multiple systems, the stability of the synchronization tools can cause problems with data consistency, timeliness, and redundancy. For example, the ads table that a data engineer sees in the data lake may differ from the one that a data analyst sees in the data warehouse. Data correctness is the foundation of data analysis. The problem can only be truly solved by avoiding synchronization and using one copy of data to support both low-cost offline processing and high-performance online analysis.

This year, after more than a year of refinement, we launched the Data Lakehouse Edition at the Apsara Conference 2022 Database Sub-Forum. Let's take a look at AnalyticDB's research and practice in building a lakehouse.

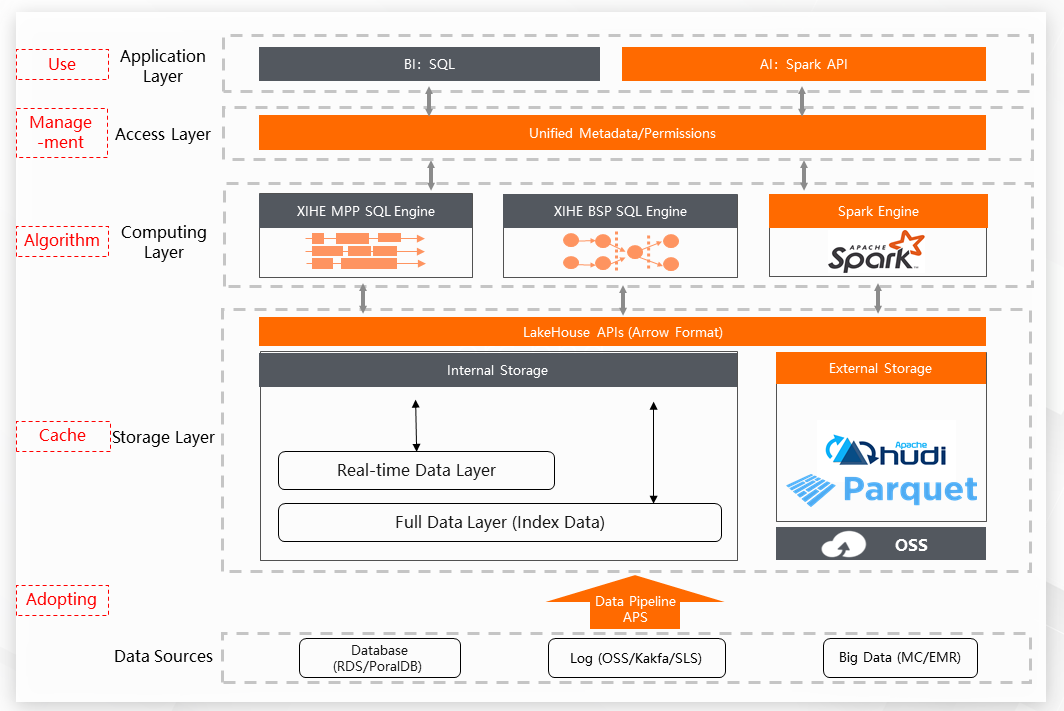

In the figure of the Data Lakehouse Edition, the orange parts are new functions, and the gray parts are existing functions that have been iterated on.

The left box holds our in-house engines: the Xihe Computing Engine and the Xuanwu Storage Engine. The right box holds the open-source engines we integrated: the Spark computing engine and the Hudi storage format. We use open-source capabilities to support richer data analysis scenarios, and we enable mutual access between the in-house and open-source engines to provide an integrated experience.

Let's look at how the in-house engines deliver lakehouse capability based on one copy of data and an integrated engine.

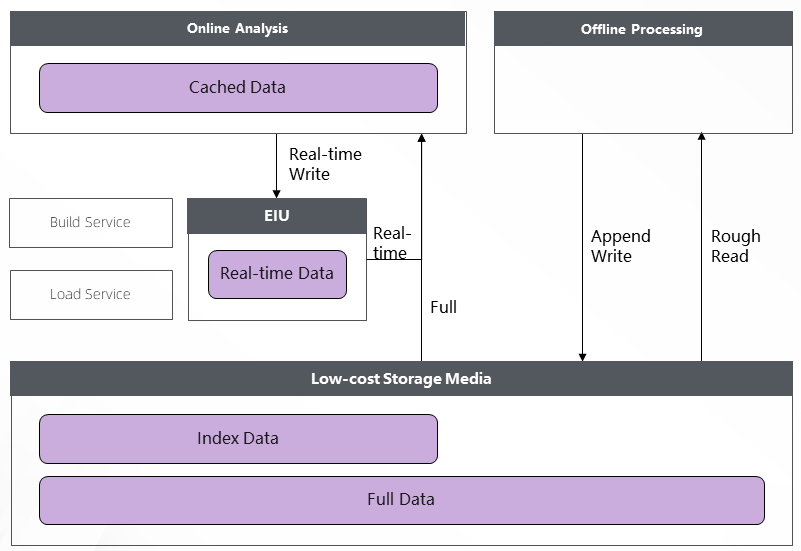

One data means a single copy of the full data. The difficulty is serving both high-performance online analysis and low-cost offline processing, because the two scenarios place conflicting demands on storage. For online analysis, we want data on high-performance storage media to maximize performance. For offline processing, we want data on low-cost storage media to minimize storage cost.

The solution has two parts. First, the full data is stored on a low-cost, high-throughput storage medium, and offline processing reads and writes that medium directly. This reduces storage and I/O costs while keeping throughput high. Second, real-time data is stored on separate storage I/O nodes (EIUs) to keep row-level data fresh. In addition, the full data is indexed and accelerated with a cache, so that online analysis can respond within hundreds of milliseconds.

Data Lakehouse Edition Storage Architecture

The one-data design of the Data Lakehouse Edition solves the data consistency and timeliness problems caused by data synchronization.
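The storage design described above can be illustrated with a toy model. This is only a sketch under our own assumptions, not the Xuanwu Storage Engine: the class `OneDataStore`, its fields, and its methods are hypothetical names, and plain dictionaries stand in for low-cost storage, the real-time nodes, and the cache.

```python
from dataclasses import dataclass, field


@dataclass
class OneDataStore:
    """Toy model: one full copy on cheap storage, a real-time buffer, and a cache."""
    cold: dict = field(default_factory=dict)      # full data on low-cost storage
    realtime: dict = field(default_factory=dict)  # recent row-level writes (EIU-like)
    cache: dict = field(default_factory=dict)     # hot rows kept for online queries
    cold_reads: int = 0                           # number of reads served by cheap storage

    def write(self, key, row):
        # New rows land in the real-time buffer so they are visible immediately.
        self.realtime[key] = row
        self.cache.pop(key, None)  # drop any stale cached copy

    def flush(self):
        # Merge real-time rows into the single full copy (assumed to run periodically).
        self.cold.update(self.realtime)
        self.realtime.clear()

    def online_read(self, key):
        # Online path: real-time buffer first, then cache, then cheap storage.
        if key in self.realtime:
            return self.realtime[key]
        if key in self.cache:
            return self.cache[key]
        self.cold_reads += 1
        row = self.cold.get(key)
        if row is not None:
            self.cache[key] = row  # accelerate the next online read
        return row

    def offline_scan(self):
        # Offline path: read the full copy directly, bypassing the cache.
        return dict(self.cold)
```

In this model, both the online and the offline path read the same flushed copy, so no second system has to be kept in sync. Repeated online reads of the same key touch cheap storage only once.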

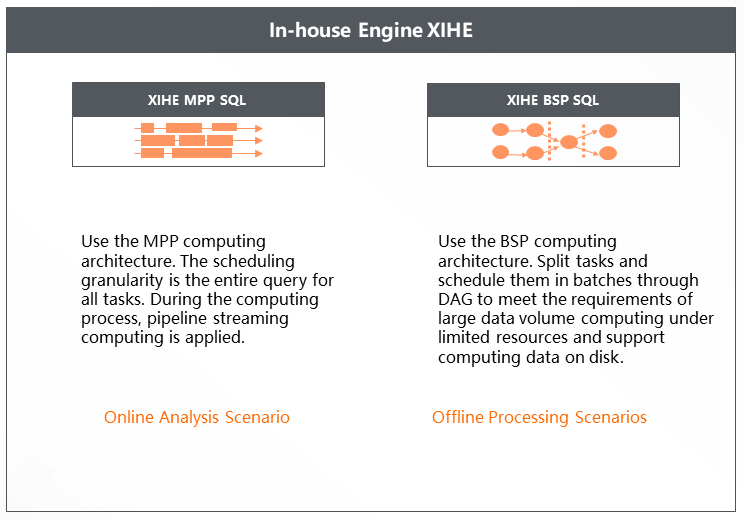

High-performance online analysis is powered mainly by the MPP mode of the in-house Xihe Computing Engine. However, this streaming computing mode is poorly suited to low-cost, high-throughput offline processing. In the Data Lakehouse Edition, we therefore added a BSP mode to the Xihe Computing Engine. It uses a DAG to split a job into tasks and schedules them in batches, so that large volumes of data can be computed with limited resources and computation data can be placed on disk.

However, we think the difference between MPP and BSP modes is too costly for ordinary users to understand and learn. Therefore, we upgraded the Xihe Computing Engine to the Xihe Integrated Computing Engine, which offers both MPP and BSP modes and switches between them automatically: when a query cannot be completed within a certain duration in MPP mode, the system switches to BSP mode to execute it.
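The idea of splitting work along a DAG and scheduling it in batches can be sketched in a few lines. This is a simplified illustration under our own assumptions, not the Xihe implementation: `run_bsp`, `stages`, `deps`, and `slots` are hypothetical names, tasks are plain Python callables, and a dictionary stands in for stage outputs written to disk.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def run_bsp(stages, deps, slots):
    """Run a DAG of stages one at a time, with tasks in batches of `slots`.

    stages: {stage_name: [task, ...]}; each task takes the dict of
            materialized upstream outputs and returns a value.
    deps:   {stage_name: {upstream_stage_name, ...}}
    slots:  how many tasks the limited resources can run per batch.
    """
    materialized = {}  # stands in for stage outputs persisted to disk
    batch_log = []     # (stage, tasks in batch), kept for inspection
    graph = {name: deps.get(name, set()) for name in stages}
    for stage in TopologicalSorter(graph).static_order():
        tasks = stages[stage]
        upstream = {u: materialized[u] for u in deps.get(stage, set())}
        outputs = []
        for start in range(0, len(tasks), slots):
            batch = tasks[start:start + slots]
            batch_log.append((stage, len(batch)))
            outputs.extend(task(upstream) for task in batch)
        # A stage is fully materialized before any downstream stage starts.
        materialized[stage] = outputs
    return materialized, batch_log


# Hypothetical job: five scan tasks feed one aggregation task, two slots available.
stages = {
    "scan": [lambda _, i=i: list(range(i * 3, i * 3 + 3)) for i in range(5)],
    "sum": [lambda up: sum(x for part in up["scan"] for x in part)],
}
deps = {"sum": {"scan"}}
result, log = run_bsp(stages, deps, slots=2)
```

With two slots, the five scan tasks run in three batches before the aggregation starts, which is how a job larger than the available resources can still finish.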
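The automatic switching behavior can be sketched as a timeout-based fallback. This is a minimal illustration under stated assumptions, not the engine's actual logic: all function names are hypothetical, a query is modeled as a list of callables, and the sketch simply reruns the whole query in BSP mode, since the text does not say whether partial MPP work is reused.

```python
import time


class MppTimeout(Exception):
    """Raised when the MPP attempt exceeds its time budget."""


def execute_mpp(query_steps, budget_s, clock=time.monotonic):
    # Streaming-style attempt: every step must start before the deadline.
    deadline = clock() + budget_s
    results = []
    for step in query_steps:
        if clock() > deadline:
            raise MppTimeout("query exceeded the MPP time budget")
        results.append(step())
    return results


def execute_bsp(query_steps):
    # Batch-style execution: no deadline, steps run one after another.
    return [step() for step in query_steps]


def run_with_auto_switch(query_steps, budget_s, clock=time.monotonic):
    """Try MPP first and fall back to BSP if the budget is exceeded."""
    try:
        return "MPP", execute_mpp(query_steps, budget_s, clock)
    except MppTimeout:
        # Assumption: restart from scratch in BSP mode.
        return "BSP", execute_bsp(query_steps)
```

The caller only submits the query and receives the result together with the mode that produced it, so users never have to pick a mode themselves.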

The biggest advantage of cloud-native is elasticity. The Data Lakehouse Edition uses a new two-layer control base built on Shenlong + ECS/ECI to provide ample resource inventory for elastic scaling. The next step is speed: if an offline query takes ten minutes to start, resources are used inefficiently and costs rise. In addition to the Time-Sharing Elasticity mode, which suits online analysis, the Data Lakehouse Edition introduces the On-Demand Elasticity mode, which suits offline processing. A query can scale out to 1,200 ACUs (1 ACU is about 1 core and 4 GB of memory), and scaling takes about 10 seconds. Finally, Workload Manager (WLM) and service load self-sensing technology keep elastic scaling accurate and matched to the service load, which reduces resource costs.
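A quick calculation shows what these numbers mean. The ACU ratio comes from the text above. The 30-minute job used in the overhead comparison is a hypothetical example of ours, and the function names are illustrative only.

```python
CORES_PER_ACU = 1   # from the text: 1 ACU is about 1 core
MEM_GB_PER_ACU = 4  # from the text: and about 4 GB


def acu_footprint(acus):
    """Approximate cores and memory behind a given number of ACUs."""
    return {"cores": acus * CORES_PER_ACU, "memory_gb": acus * MEM_GB_PER_ACU}


def startup_overhead(startup_s, run_s):
    """Fraction of total wall-clock time spent waiting for resources."""
    return startup_s / (startup_s + run_s)


print(acu_footprint(1200))                   # about 1,200 cores and 4,800 GB
print(startup_overhead(600, 30 * 60))        # ten-minute startup, 30-minute job
print(startup_overhead(10, 30 * 60))         # ten-second startup, same job
```

For the hypothetical 30-minute job, a ten-minute startup accounts for a quarter of the total time, while a ten-second startup accounts for well under one percent.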

In-house engines are the foundation of our technical depth. We also actively embrace open source, so that customers who grew up on the open-source ecosystem can adopt the Data Lakehouse Edition smoothly. For external tables, in addition to append-only data formats (such as Parquet, ORC, JSON, and CSV), we added the Hudi data format, which supports batch updates and helps users ingest data (such as CDC) at a lower cost. For computing, alongside the Xihe Integrated Computing Engine, we added the widely adopted open-source Spark engine to meet users' needs for complex offline processing and machine learning.
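The difference between an append-only format and a format that supports batch updates is easiest to see with change data. The sketch below is plain Python that imitates the semantics only. It does not use Hudi's API, and the event layout (`op`, `row`, `id`) is a hypothetical shape chosen for the example.

```python
def apply_append_only(table_rows, cdc_events):
    """Append-only format: every change event becomes one more row."""
    return table_rows + [dict(e["row"], op=e["op"]) for e in cdc_events]


def apply_upsert(table_by_key, cdc_events, key="id"):
    """Update-capable format: apply a batch of changes in place by key."""
    result = dict(table_by_key)
    for e in cdc_events:
        k = e["row"][key]
        if e["op"] == "delete":
            result.pop(k, None)
        else:  # "insert" or "update"
            result[k] = e["row"]
    return result


# Hypothetical CDC batch for an orders table.
events = [
    {"op": "insert", "row": {"id": 1, "status": "new"}},
    {"op": "update", "row": {"id": 1, "status": "paid"}},
    {"op": "insert", "row": {"id": 2, "status": "new"}},
    {"op": "delete", "row": {"id": 2}},
]
appended = apply_append_only([], events)
current = apply_upsert({}, events)
```

With the append-only path, readers must deduplicate four change rows at query time to find the current state. With the upsert path, the table already holds the single current row for order 1.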

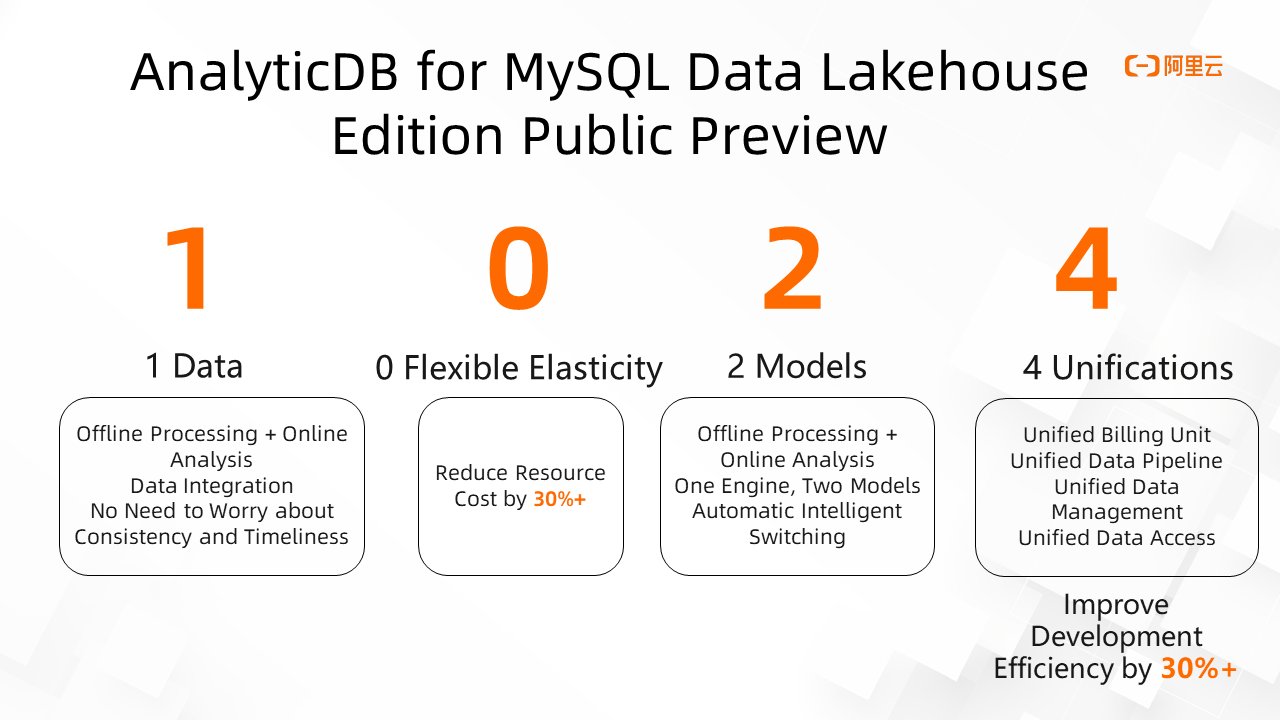

To make the advantages of the Data Lakehouse Edition easier to remember, we summarize them with the number 1024, a number every programmer knows.

1: One data. A single copy of data prevents the consistency, timeliness, and redundancy issues caused by data synchronization.

0: Ultimate scalability. In Serverless mode, resources closely follow business workloads, which ensures query performance and reduces resource costs.

2: Two scenarios. The Data Lakehouse Edition meets the requirements of both low-cost offline processing and high-performance online analysis.

4: Four features of a consistent user experience: a unified billing unit, APS, data management, and data access. The next article covers these in more detail.

With the launch of AnalyticDB for MySQL Data Lakehouse Edition, we have completed the first step, from the warehouse to the lake, toward a comprehensive cloud-native data analysis platform available to everyone. In the future, we will continue to polish and enhance the following aspects:

Cloud-Native Elasticity

In-House Integrated Computing Engine

Spark Engine

AnalyticDB for MySQL Data Lakehouse Edition is suitable for users who need low-cost offline ETL processing together with high-performance online analysis to support BI reports, interactive queries, and applications. It has already been applied in popular Internet applications, such as a well-known video platform and a broadcasting application.
