Enterprise data platforms have become assembly lines of specialised engines: columnar warehouses for historical analysis, real-time engines for sub-second queries, streaming runtimes for event processing, and a mesh of upstream sources spanning operational databases, message queues, and object storage. Each engine ships with its own SQL dialect, scheduler, permission model, and metadata catalogue.

The engineering problem is not one of capability, but of coherence. Without a unifying control plane, pipelines fragment into isolated jobs, lineage breaks at every engine boundary, sensitive columns drift across teams without classification, and a single upstream schema change can ripple silently through downstream consumers before anyone notices. Governance, in this environment, is not a policy document; it is an architectural property of the platform.

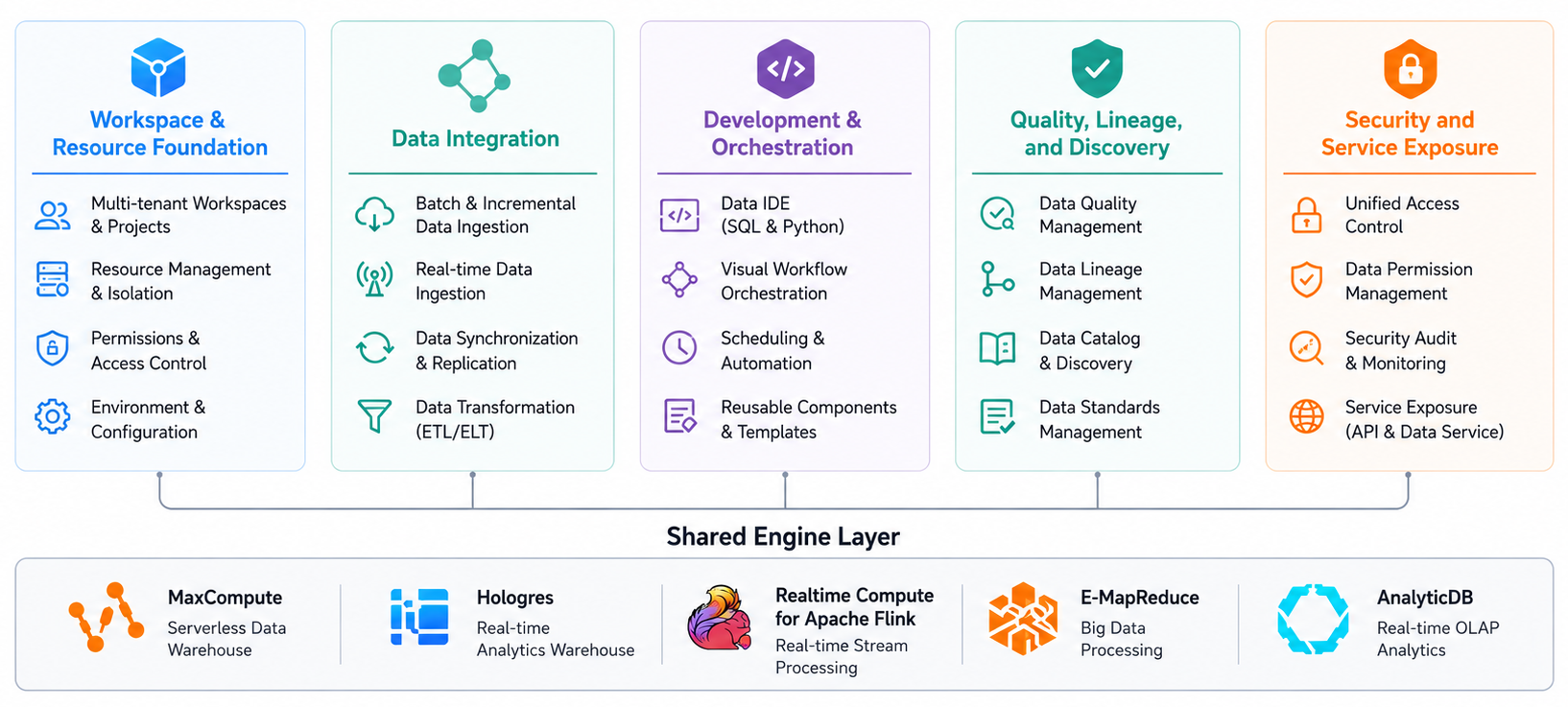

DataWorks is Alibaba Cloud's control plane for this requirement. It binds to MaxCompute, Hologres, Realtime Compute for Apache Flink, E-MapReduce (EMR), and AnalyticDB as execution engines, and exposes a unified environment for integration, development, scheduling, quality, lineage, and API exposure. The following sections document its architectural surface and the configuration decisions that govern each capability.

Figure 1: DataWorks architectural surface — five capability layers over a shared execution engine surface.

A workspace is the administrative boundary for all data sources, nodes, tables, rules, and APIs. Standard mode separates development and production environments behind an explicit publish step and is the appropriate default for any workload with operational consequences. Basic mode collapses both into one environment and suits exploratory or sandbox use only.

Access is granted through workspace roles mapped to RAM identities: Project Owner, Project Administrator, Developer, Deployment, and Visitor. The Deployment role alone may publish to production, which allows a two-person rule on production changes when Developer and Deployment assignments are separated at the RAM policy level. Compute runs on resource groups. Shared groups are multi-tenant with no SLA; exclusive groups are ECS-backed, sized in compute units (CUs), and required for VPC-attached data sources or for guaranteed throughput. A starting allocation of 2 CUs per 20 concurrent batch sync tasks is a reasonable baseline, to be refined against Operation Center concurrency metrics after the first weekly cycle.
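The baseline above is simple arithmetic, sketched here as a starting point only. The function name and the choice to round up to whole blocks of 20 tasks are assumptions made for illustration; the source gives only the 2-CUs-per-20-tasks ratio.

```python
import math


def baseline_cus(concurrent_sync_tasks: int,
                 cus_per_block: int = 2,
                 tasks_per_block: int = 20) -> int:
    """Estimate a starting CU allocation for an exclusive resource group.

    Assumption: partial blocks are rounded up, so 21 tasks are sized
    like 40 tasks. Refine against observed concurrency afterwards.
    """
    if concurrent_sync_tasks < 0:
        raise ValueError("task count cannot be negative")
    blocks = math.ceil(concurrent_sync_tasks / tasks_per_block)
    return blocks * cus_per_block


if __name__ == "__main__":
    for tasks in (20, 45, 100):
        print(tasks, "tasks ->", baseline_cus(tasks), "CUs")
```

The result is a first estimate, not a sizing rule: the concurrency actually observed in Operation Center should replace the task count once a full weekly cycle has run.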

Data Integration ingests external data into the bound engines through batch sync and real-time sync modes. Batch sync runs on a DataX-based engine and supports relational databases, NoSQL stores, OSS, Kafka, and file systems. Concurrency is governed by three parameters: channel count (parallel reader/writer threads), split key (a uniformly distributed indexed column for parallel extraction), and BPS rate limit (a throughput cap that prevents sync jobs from saturating source databases during business hours).
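How the three concurrency parameters interact can be sketched as a small settings builder. The dictionary layout and key names below are hypothetical and are not the DataWorks script-mode schema; the sketch also assumes that running more than one channel against a relational source requires a split key.

```python
from typing import Optional


def build_sync_settings(channels: int,
                        split_key: Optional[str] = None,
                        mbps_limit: Optional[int] = None) -> dict:
    """Validate and assemble illustrative batch sync concurrency settings."""
    if channels < 1:
        raise ValueError("at least one channel is required")
    if channels > 1 and not split_key:
        # Assumption: without a split key the source cannot be partitioned
        # into ranges, so extra channels would have nothing to work on.
        raise ValueError("parallel extraction needs a split key")
    if mbps_limit is not None and mbps_limit <= 0:
        raise ValueError("rate limit must be positive when set")
    return {
        "reader": {"split_key": split_key},
        "speed": {
            "channels": channels,
            "throttle": mbps_limit is not None,  # cap only when a limit is given
            "mbps": mbps_limit,
        },
    }


if __name__ == "__main__":
    print(build_sync_settings(channels=4, split_key="order_id", mbps_limit=12))
```

Rejecting an inconsistent combination before the job is saved keeps the failure close to the configuration mistake instead of surfacing as a slow single-threaded extraction.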

Incremental sync is expressed through source-side WHERE filters that use scheduling placeholders such as ${bizdate}, which resolves to the instance's business date at runtime and so eliminates per-partition sync definitions. Real-time sync consumes MySQL binlog, PolarDB CDC streams, Kafka topics, and DataHub queues with sub-minute end-to-end latency. For database CDC, an initial full snapshot precedes incremental consumption. Source binlog retention must therefore exceed the snapshot duration plus a safety margin; otherwise, log truncation during cutover loses change events that cannot be recovered without re-snapshotting.
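Two small checks illustrate the paragraph above: rendering a filter that contains ${bizdate}, and testing whether binlog retention covers the snapshot window. This is a sketch that assumes the business date is the day before the scheduled run, formatted as yyyymmdd; the column name `gmt_modified` and all function names are hypothetical.

```python
from datetime import date, timedelta


def bizdate_for(scheduled: date) -> str:
    """Assumed convention: business date = scheduled date minus one day."""
    return (scheduled - timedelta(days=1)).strftime("%Y%m%d")


def render_filter(template: str, scheduled: date) -> str:
    """Substitute the ${bizdate} placeholder in a source-side WHERE filter."""
    return template.replace("${bizdate}", bizdate_for(scheduled))


def retention_is_safe(retention_hours: float,
                      snapshot_hours: float,
                      margin_hours: float) -> bool:
    """Retention must strictly exceed snapshot duration plus the margin."""
    return retention_hours > snapshot_hours + margin_hours


if __name__ == "__main__":
    where = "DATE_FORMAT(gmt_modified, '%Y%m%d') = '${bizdate}'"
    print(render_filter(where, date(2024, 3, 1)))
    print(retention_is_safe(retention_hours=72, snapshot_hours=30, margin_hours=12))
```

Because one template serves every instance, the same sync definition covers each daily partition; only the scheduled date changes between runs.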

DataStudio is the development environment in which transformation logic is authored and bound to the scheduling graph. Node types map to engines (ODPS SQL, ODPS Spark, Hologres SQL, EMR Spark, EMR Hive, Shell, PyODPS 3) and include a zero-load assignment node that carries no execution payload and serves as a synchronisation barrier between parallel sub-graphs.

Dependency resolution is automatic via output names. A downstream node referencing an upstream output inherits the dependency without manual graph editing, and schema or output-name mismatches surface at publish time rather than at runtime. Scheduling is cron-expressed with two dependency types: same-cycle (waits for upstream within the same instance) and cross-cycle (depends on a prior cycle of itself or another node, enabling rolling-window aggregations). A dry-run mode executes the scheduling logic without engine submission and is used to validate workflows before they consume compute. Operation Center exposes Gantt-view dependency graphs, retry controls, and baseline alerting; baselines should target terminal nodes feeding dashboards or APIs, not intermediate transformations, to avoid alert fatigue.
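The idea of deriving dependencies from output names can be sketched with a small resolver. This is an illustration of the technique, not DataStudio's implementation: the node dictionary shape and function names are hypothetical, and the sketch simplifies by treating every input as produced inside the same workspace.

```python
from graphlib import TopologicalSorter


def resolve_dependencies(nodes: dict) -> dict:
    """Map each node to the set of upstream nodes that produce its inputs.

    `nodes` maps a node name to {"inputs": [...], "outputs": [...]}.
    Mismatches raise here, mirroring a publish-time check.
    """
    producer = {}
    for name, spec in nodes.items():
        for out in spec.get("outputs", []):
            if out in producer:
                raise ValueError(f"output {out!r} declared by {producer[out]!r} and {name!r}")
            producer[out] = name

    deps = {}
    for name, spec in nodes.items():
        inputs = spec.get("inputs", [])
        missing = [i for i in inputs if i not in producer]
        if missing:
            raise ValueError(f"node {name!r} reads undeclared outputs: {missing}")
        deps[name] = {producer[i] for i in inputs}
    return deps


def run_order(nodes: dict) -> list:
    """Return one valid execution order; cycles raise graphlib.CycleError."""
    return list(TopologicalSorter(resolve_dependencies(nodes)).static_order())


if __name__ == "__main__":
    workflow = {
        "load_orders": {"inputs": [], "outputs": ["ods.orders"]},
        "daily_sales": {"inputs": ["ods.orders"], "outputs": ["dws.daily_sales"]},
        "report": {"inputs": ["dws.daily_sales"], "outputs": []},
    }
    print(run_order(workflow))
```

Failing in `resolve_dependencies` rather than during execution is the point of the design: a renamed output is caught before any instance is generated.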

Data Quality enforces correctness constraints at the table level after load completion. Rules fall into two enforcement categories: strong rules block downstream nodes on failure, whereas weak rules raise a warning but allow the pipeline to proceed. Common rule templates cover row count fluctuation against a historical baseline, null rate per column, uniqueness on a candidate key, value range bounds, and arbitrary SQL expressions. Thresholds are configured with red and orange levels, allowing graduated alerting. Sampling can bound execution time on multi-billion-row tables but should be avoided for uniqueness rules and any rule whose violation depends on rare events.
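The interaction between rule strength and threshold level can be sketched for the row count fluctuation template. The class and function names are hypothetical, and the sketch assumes that only a red-level breach of a strong rule blocks downstream nodes, with orange treated as a warning in both categories.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FluctuationRule:
    strong: bool       # strong rules may block; weak rules only warn
    orange_pct: float  # warning threshold, in percent change
    red_pct: float     # critical threshold, in percent change


def evaluate(rule: FluctuationRule, baseline_rows: int, current_rows: int):
    """Return (level, blocks_downstream) for a row count fluctuation check."""
    if baseline_rows <= 0:
        raise ValueError("baseline must be positive to compute a percentage")
    change_pct = abs(current_rows - baseline_rows) / baseline_rows * 100
    if change_pct >= rule.red_pct:
        level = "red"
    elif change_pct >= rule.orange_pct:
        level = "orange"
    else:
        level = "ok"
    # Assumption: blocking requires both a strong rule and a red breach.
    return level, rule.strong and level == "red"


if __name__ == "__main__":
    rule = FluctuationRule(strong=True, orange_pct=10, red_pct=30)
    print(evaluate(rule, baseline_rows=1000, current_rows=1200))
    print(evaluate(rule, baseline_rows=1000, current_rows=600))
```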

Data Map harvests table schemas, partition lists, storage size, and update frequency from the bound engines on a configurable refresh schedule. Lineage is derived from engine execution logs for MaxCompute; the SQL parser extracts column-level read and write relationships. Nodes implemented in Shell or external Python that bypass the SQL parser produce only table-level lineage, with column relationships unresolved. Hierarchical tags applied at the table or column level give non-engineering consumers a discovery path through workspaces containing thousands of tables.

Data Security Guard identifies sensitive columns through a combination of regular-expression patterns (for structured identifiers such as national ID numbers, phone numbers, and payment card numbers) and statistical classifiers (for less structured fields). Identified columns are tagged with classification levels L1 through L4, which become inputs to masking and access rules. Dynamic masking applies at query time without modifying stored data, with algorithms including full mask, partial mask (configurable visible prefix/suffix), one-way hash, and fixed-value substitution. Masking rules bind to a combination of classification level, target column, and querying identity. Access events against L3 and L4 columns are recorded for compliance review through ActionTrail.
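The four masking algorithms named above are straightforward to sketch. The functions below are generic illustrations and not Data Security Guard's implementation; the level-to-algorithm mapping in `POLICY`, the treatment of L1 as unmasked, and the `identity_cleared` flag are assumptions made for the example.

```python
import hashlib


def full_mask(value: str, char: str = "*") -> str:
    return char * len(value)


def partial_mask(value: str, prefix: int = 3, suffix: int = 4, char: str = "*") -> str:
    """Keep a visible prefix and suffix; mask everything between them."""
    if prefix + suffix >= len(value):
        return full_mask(value, char)  # too short to reveal anything safely
    hidden = len(value) - prefix - suffix
    return value[:prefix] + char * hidden + value[len(value) - suffix:]


def one_way_hash(value: str, salt: str = "") -> str:
    return hashlib.sha256((salt + value).encode("utf-8")).hexdigest()


def fixed_value(_value: str, replacement: str = "REDACTED") -> str:
    return replacement


# Hypothetical policy: which algorithm applies at each classification level.
POLICY = {"L1": None, "L2": partial_mask, "L3": one_way_hash, "L4": full_mask}


def mask_for_query(value: str, level: str, identity_cleared: bool) -> str:
    """Apply masking at read time; the stored value is never modified."""
    algorithm = POLICY.get(level)
    if identity_cleared or algorithm is None:
        return value
    return algorithm(value)


if __name__ == "__main__":
    print(mask_for_query("13812345678", "L2", identity_cleared=False))
```

Keeping the decision in one function that takes level and identity together mirrors the binding described above: the same column can return different results to different callers without any change to the stored data.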

DataService Studio exposes governed data as REST endpoints without a separate API tier. Wizard mode constructs parameterised queries against a registered table; script mode accepts arbitrary SQL with declared input and output schemas. Each endpoint supports per-API QPS throttling, parameter validation, and timeout configuration. Authentication options include AppCode and HMAC signature; published APIs can be exported to API Gateway for unified rate limiting and IP allow-listing where external consumer exposure is required.
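The general shape of HMAC request signing can be sketched with the standard library. The string-to-sign layout here (method, path, sorted query parameters, timestamp) is a hypothetical canonical form chosen for illustration and is not the format that DataService Studio or API Gateway actually requires; consult the product documentation for the real scheme.

```python
import base64
import hashlib
import hmac


def string_to_sign(method: str, path: str, params: dict, timestamp: str) -> str:
    """Build an illustrative canonical string; parameter order is normalised."""
    canonical_query = "&".join(f"{key}={params[key]}" for key in sorted(params))
    return "\n".join([method.upper(), path, canonical_query, timestamp])


def sign(secret: str, method: str, path: str, params: dict, timestamp: str) -> str:
    message = string_to_sign(method, path, params, timestamp).encode("utf-8")
    digest = hmac.new(secret.encode("utf-8"), message, hashlib.sha256).digest()
    return base64.b64encode(digest).decode("ascii")


def verify(secret: str, signature: str, method: str, path: str,
           params: dict, timestamp: str) -> bool:
    expected = sign(secret, method, path, params, timestamp)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature)


if __name__ == "__main__":
    sig = sign("app-secret", "GET", "/orders", {"region": "eu", "day": "20240229"}, "1700000000")
    print(verify("app-secret", sig, "GET", "/orders", {"day": "20240229", "region": "eu"}, "1700000000"))
```

Sorting parameters before signing means the client and server agree on the message regardless of the order in which the query string was assembled.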

The five layers of this architecture (workspace and resource foundation, data integration, development and orchestration, quality and lineage, and security and service exposure) together define a complete governed-data control plane on Alibaba Cloud. Each capability is independently configurable, supporting incremental adoption: integration-first for teams replacing ad-hoc sync scripts, governance-first for teams formalising existing pipelines, or end-to-end for greenfield deployments.

Engineers extending this architecture should evaluate three patterns. Exclusive resource groups with elastic scaling suit bursty scheduled workloads where steady-state provisioning over-allocates off-peak hours. Hologres binding alongside MaxCompute provides a sub-second serving tier for curated marts without exporting data outside the governed perimeter. For organisations operating an existing metadata catalogue, the DataWorks OpenAPI exposes lineage, schema, and tags for outbound synchronisation, allowing DataWorks to act as a node in a federated catalogue rather than a closed silo.

Disclaimer: The views expressed herein are for reference only and don't necessarily represent the official views of Alibaba Cloud.
