Modern cloud workloads rarely operate in isolation. A single business event, such as a file uploaded to object storage, a database record changed, or a payment processed, typically needs to trigger multiple downstream actions: a notification, an audit log entry, a search index update, and a cache invalidation. Building these integrations as direct point-to-point calls produces tightly coupled systems where every new consumer requires modification to the producer, and every producer failure cascades into downstream silence.

Event-driven architecture inverts this pattern. Producers emit events to a routing layer without knowing who consumes them, and consumers subscribe to the events they care about regardless of who produced them. This decoupling shifts complexity into the routing layer, which must handle filtering, transformation, retry, and ordering with operational guarantees strong enough to be trusted as the integration substrate. On Alibaba Cloud, EventBridge implements the routing layer and Function Compute implements the execution layer, and the two services integrate natively. The remainder of this article addresses each of the four functional concerns of an event-driven workload on these services (event production, routing, consumption, and failure handling), followed by the operational factors that determine reliability at scale.

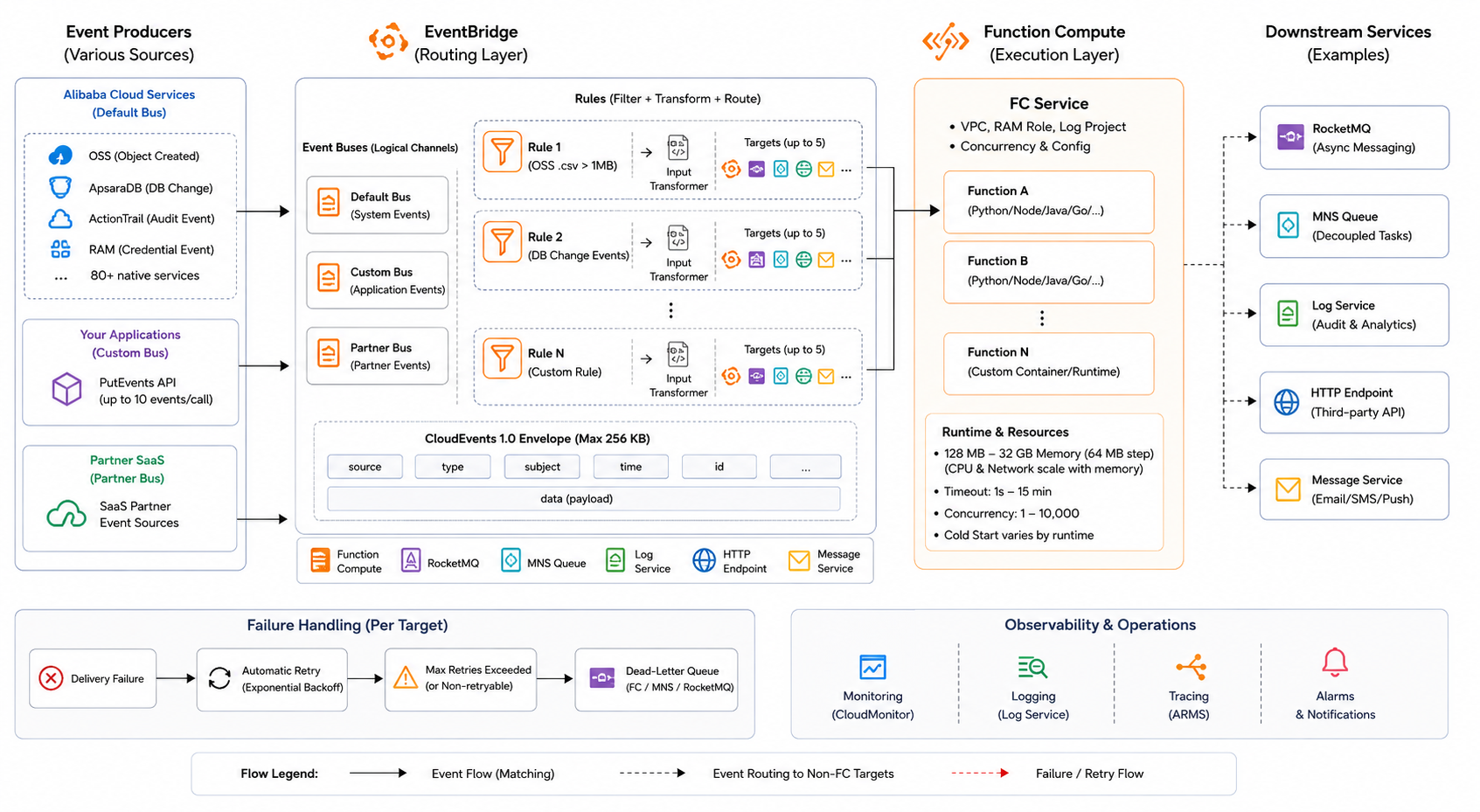

Figure 1: EventBridge and Function Compute Architecture Overview

EventBridge organises event flow around buses: logical channels through which related events transit. Three bus types are available. The default bus receives system events from over 80 native Alibaba Cloud services, including OSS object operations, ApsaraDB lifecycle changes, ActionTrail audit records, and RAM credential events. Custom buses receive application-emitted events submitted through the PutEvents API, which authenticates callers with RAM AccessKey signatures and accepts up to ten events per call. Partner buses receive events from SaaS providers integrated as event sources, isolating partner-supplied data from the customer’s own event streams.
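Because a single PutEvents call on a custom bus accepts at most ten events, a producer emitting larger batches has to split them. The sketch below shows only the batching logic under that assumption; `send_batch` is a hypothetical callback standing in for whatever SDK call actually submits a batch, and no real Alibaba Cloud API is invoked.

```python
from typing import Callable, List, Sequence

MAX_EVENTS_PER_CALL = 10  # PutEvents per-call limit described above


def chunk_events(events: Sequence[dict], size: int = MAX_EVENTS_PER_CALL) -> List[List[dict]]:
    """Split events into consecutive batches of at most `size` items."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [list(events[i:i + size]) for i in range(0, len(events), size)]


def publish_all(events: Sequence[dict], send_batch: Callable[[List[dict]], None]) -> int:
    """Send every event in PutEvents-sized batches and return the number of calls made.

    `send_batch` is hypothetical: it stands in for the SDK call that submits one batch.
    """
    batches = chunk_events(events)
    for batch in batches:
        send_batch(batch)
    return len(batches)


if __name__ == "__main__":
    sent: List[List[dict]] = []
    calls = publish_all([{"id": str(i)} for i in range(23)], sent.append)
    print(calls, [len(b) for b in sent])  # 3 [10, 10, 3]
```

A production producer would also inspect per-entry results from each call and resubmit only the failed entries.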

All events conform to the CloudEvents 1.0 specification, with a fixed envelope containing source, type, subject, time, and id fields alongside an arbitrary data payload. The standardised envelope enables routing rules to match consistently across heterogeneous producers without producer-specific parsing logic. Event size is capped at 256 KB per record; payloads exceeding this limit should be stored in OSS with the event carrying only the object key as a reference, a pattern that also avoids re-transmitting large bodies across retry cycles.
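The envelope and the 256 KB claim-check pattern can be sketched as follows. This is a minimal illustration under stated assumptions: `store_object` is a hypothetical callable standing in for an OSS upload, the `payloadRef` field name and the `event-payloads/` key prefix are invented for the example, and the size check measures the serialised JSON envelope, which only approximates how EventBridge counts bytes.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import Any, Callable, Dict

MAX_EVENT_BYTES = 256 * 1024  # per-record cap described above


def build_event(source: str, event_type: str, subject: str, data: Dict[str, Any],
                store_object: Callable[[str, bytes], None],
                key_prefix: str = "event-payloads/") -> Dict[str, Any]:
    """Build a CloudEvents 1.0 envelope, offloading oversized payloads by reference."""
    envelope: Dict[str, Any] = {
        "specversion": "1.0",
        "id": str(uuid.uuid4()),
        "source": source,
        "type": event_type,
        "subject": subject,
        "time": datetime.now(timezone.utc).isoformat(),
        "datacontenttype": "application/json",
        "data": data,
    }
    if len(json.dumps(envelope).encode("utf-8")) <= MAX_EVENT_BYTES:
        return envelope
    # Too large: store the body separately (hypothetical OSS upload) and send
    # only its object key, so retries never re-transmit the large body.
    object_key = f"{key_prefix}{envelope['id']}.json"
    store_object(object_key, json.dumps(data).encode("utf-8"))
    envelope["data"] = {"payloadRef": object_key}  # hypothetical reference field
    return envelope
```

For local testing, passing `store.__setitem__` for some dict `store` is enough to exercise both branches; the consumer then fetches the body by key only when it actually needs it.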

Each EventBridge rule is bound to a single bus and defines three components: an event pattern, an optional input transformer, and a target list. The event pattern is a JSON expression matched against incoming events using one of several operators. Exact match operates on literal field values; prefix and suffix operators match on string boundaries; numeric operators support range comparisons; the anything-but operator excludes specific values; and the exists operator tests for field presence. Conditions combine with implicit AND across fields and OR within a field's array, which supports compound filters without application-side filtering after delivery.
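To make the matching semantics concrete, here is a small local matcher that follows the rules described above: literal values match exactly, the operator objects (`prefix`, `suffix`, `numeric`, `anything-but`, `exists`) test a single field, entries in a field's array are ORed, and fields are ANDed. It is an approximation for reasoning about patterns offline, not EventBridge's implementation; the operator spellings and the event field layout in the usage example are assumptions to check against the EventBridge pattern reference.

```python
from typing import Any, Dict

_MISSING = object()  # sentinel for "field not present in the event"

_NUMERIC_OPS = {
    ">": lambda a, b: a > b,
    ">=": lambda a, b: a >= b,
    "<": lambda a, b: a < b,
    "<=": lambda a, b: a <= b,
    "=": lambda a, b: a == b,
}


def _match_condition(cond: Any, value: Any) -> bool:
    """Evaluate one entry of a field's condition array against a value."""
    if not isinstance(cond, dict):
        return value is not _MISSING and value == cond  # literal: exact match
    if "exists" in cond:
        return (value is not _MISSING) == bool(cond["exists"])
    if value is _MISSING:
        return False
    if "prefix" in cond:
        return isinstance(value, str) and value.startswith(cond["prefix"])
    if "suffix" in cond:
        return isinstance(value, str) and value.endswith(cond["suffix"])
    if "anything-but" in cond:
        excluded = cond["anything-but"]
        if not isinstance(excluded, list):
            excluded = [excluded]
        return value not in excluded
    if "numeric" in cond:
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return False
        ops = cond["numeric"]  # e.g. [">=", 0, "<", 100]: (operator, operand) pairs
        return all(_NUMERIC_OPS[ops[i]](value, ops[i + 1]) for i in range(0, len(ops), 2))
    raise ValueError(f"unsupported operator: {cond!r}")


def matches(pattern: Dict[str, Any], event: Dict[str, Any]) -> bool:
    """AND across the fields of `pattern`; OR across the entries of each field's array."""
    for name, expected in pattern.items():
        value = event.get(name, _MISSING)
        if isinstance(expected, dict):
            # Nested object in the pattern: descend into the event.
            if not isinstance(value, dict) or not matches(expected, value):
                return False
        elif not any(_match_condition(cond, value) for cond in expected):
            return False
    return True


if __name__ == "__main__":
    # Hypothetical field layout for an object-created event.
    pattern = {
        "type": ["oss:ObjectCreated:PutObject"],
        "data": {"key": [{"prefix": "uploads/"}], "size": [{"numeric": [">=", 1048576]}]},
    }
    event = {"type": "oss:ObjectCreated:PutObject", "data": {"key": "uploads/a.csv", "size": 2_000_000}}
    print(matches(pattern, event))  # True
```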

For example, a rule can match only PutObject events under the uploads/ prefix whose object key ends in .csv and whose file size is at least 1 MB, with the three independent conditions evaluated as AND. Every event that does not satisfy all three conditions is discarded before reaching Function Compute, avoiding the cold-start exposure, billed execution time, and concurrency consumption that an unnecessary invocation would incur. On high-volume buses, the difference between a 1% and a 10% match rate is a tenfold difference in invocation count, and therefore a tenfold change in downstream compute cost.
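The cost argument is simple arithmetic: billable compute scales with the number of invocations that survive the filter. The sketch below computes GB-seconds for two match rates on the same bus. The monthly volume, duration, and memory size are hypothetical figures, and per-request charges and free quotas are ignored; per-request charges also scale linearly with invocations, so they do not change the ratio.

```python
def monthly_gb_seconds(events_per_month: int, match_rate: float,
                       avg_duration_ms: float, memory_mb: int) -> float:
    """Billable GB-seconds for the invocations that pass the rule's filter."""
    if not 0.0 <= match_rate <= 1.0:
        raise ValueError("match_rate must be in [0, 1]")
    invocations = events_per_month * match_rate
    return invocations * (avg_duration_ms / 1000.0) * (memory_mb / 1024.0)


if __name__ == "__main__":
    volume = 100_000_000  # hypothetical monthly event volume on the bus
    narrow = monthly_gb_seconds(volume, 0.01, avg_duration_ms=200, memory_mb=512)
    broad = monthly_gb_seconds(volume, 0.10, avg_duration_ms=200, memory_mb=512)
    print(f"1% match: {narrow:,.0f} GB-s, 10% match: {broad:,.0f} GB-s, ratio {broad / narrow:.1f}x")
```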

Input transformation applies after pattern matching but before delivery: it extracts fields from the matched event into a path map and assembles a new payload using template syntax, which is helpful when the downstream target expects a different schema from the one the source emits. Each rule supports up to five targets, including Function Compute services, RocketMQ instances, MNS queues, Log Service projects, HTTP endpoints, and Message Service notifications. When multiple targets are attached to one rule, deliveries are independent: failure on one target does not block delivery to the others, and each target maintains its own retry state.
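A local approximation of a path-map-plus-template transformer is shown below. It assumes simple `$.a.b` paths and `${name}` placeholders; the exact path and template syntax EventBridge accepts should be confirmed in its input-transformer documentation. Each extracted value is JSON-encoded before substitution so the assembled payload stays valid JSON even when a value contains quotes.

```python
import json
from string import Template
from typing import Any, Dict


def resolve_path(event: Dict[str, Any], path: str) -> Any:
    """Resolve a simple '$.a.b.c' path against nested dicts."""
    if not path.startswith("$."):
        raise ValueError(f"unsupported path: {path}")
    node: Any = event
    for part in path[2:].split("."):
        if not isinstance(node, dict) or part not in node:
            raise KeyError(path)
        node = node[part]
    return node


def transform(event: Dict[str, Any], path_map: Dict[str, str], template: str) -> str:
    """Extract fields via `path_map`, then substitute them into `template`."""
    # JSON-encode each value so strings are quoted and escaped correctly.
    values = {name: json.dumps(resolve_path(event, path)) for name, path in path_map.items()}
    return Template(template).substitute(values)


if __name__ == "__main__":
    event = {"source": "acs.oss", "data": {"bucket": "my-bucket", "key": "uploads/a.csv"}}
    payload = transform(
        event,
        {"bucket": "$.data.bucket", "key": "$.data.key"},
        '{"target_bucket": ${bucket}, "object": ${key}}',
    )
    print(payload)  # {"target_bucket": "my-bucket", "object": "uploads/a.csv"}
```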

Function Compute executes the business logic triggered by routed events. Functions are organised into services, logical containers that share VPC configuration, RAM role, and log project bindings; each service contains one or more functions with independent runtime, memory, and timeout settings. Available runtimes include Python 3.9 and 3.10, Node.js 16 and 18, Java 11, Go 1, PHP, and custom container images for workloads requiring specific binary dependencies.

Memory allocation ranges from 128 MB to 32 GB in 64 MB increments, with CPU and network bandwidth scaled proportionally to memory. Because billing is the product of memory and execution duration, a function completing in 2,000 ms at 512 MB (0.5 GB × 2 s = 1.0 GB-s) can cost more than the same function completing in 400 ms at 2 GB (2 GB × 0.4 s = 0.8 GB-s). Profiling actual execution time at multiple memory sizes is the only reliable way to identify the cost-optimal configuration. Cold start latency varies by runtime: interpreted runtimes such as Python and Node.js typically start in 100 to 300 ms for small handlers, Java cold starts range from 500 ms to 3 seconds depending on classpath size, and custom container starts depend on image size and pull cache state. For latency-sensitive paths, provisioned instances maintain a warm pool of pre-initialised function instances, eliminating cold starts at the cost of paying for idle capacity.
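Comparing profiled configurations reduces to computing memory × duration for each candidate. The durations below are hypothetical profiling results, not measurements, and the comparison ignores per-invocation request charges; only the GB-second arithmetic is the point.

```python
from typing import Dict, Tuple


def gb_seconds(memory_mb: int, duration_ms: float) -> float:
    """Billable compute for one invocation: memory (GB) x duration (s)."""
    return (memory_mb / 1024.0) * (duration_ms / 1000.0)


def cheapest_profile(profiles: Dict[int, float]) -> Tuple[int, float]:
    """Return (memory_mb, gb_seconds) for the lowest memory x duration product."""
    if not profiles:
        raise ValueError("no profiles supplied")
    best = min(profiles, key=lambda mb: gb_seconds(mb, profiles[mb]))
    return best, gb_seconds(best, profiles[best])


if __name__ == "__main__":
    measured = {512: 2000.0, 1024: 900.0, 2048: 400.0}  # hypothetical profiling results
    for mb, ms in measured.items():
        print(f"{mb} MB @ {ms:.0f} ms -> {gb_seconds(mb, ms):.2f} GB-s")
    print(cheapest_profile(measured))
```

Because CPU scales with memory, CPU-bound handlers often shorten enough at larger sizes to offset the higher per-second rate, while I/O-bound handlers usually do not.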

EventBridge invokes Function Compute asynchronously by default. Asynchronous calls are buffered in an internal queue and delivered with at-least-once semantics, so handlers must be idempotent or perform deduplication, usually by storing a marker keyed on the CloudEvents id field. Synchronous invocation is available for rules that need an immediate response, but it extends producer-side latency by the full execution duration, so it is rarely the right choice for routed events.
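A minimal sketch of id-based deduplication, assuming a Python event handler with the `handler(event, context)` shape that receives the CloudEvents envelope as JSON bytes. `InMemoryDedupStore` and `make_handler` are hypothetical: an in-process set only deduplicates within a single instance, so a real deployment would need a shared durable store with an atomic conditional write. The claim is released if processing fails, so the platform's retry can reprocess the event instead of having it rejected as a duplicate.

```python
import json
from typing import Any, Callable, Set


class InMemoryDedupStore:
    """Hypothetical stand-in for a durable store with atomic conditional writes."""

    def __init__(self) -> None:
        self._seen: Set[str] = set()

    def add_if_absent(self, key: str) -> bool:
        """Claim `key`; return False if it was already claimed."""
        if key in self._seen:
            return False
        self._seen.add(key)
        return True

    def release(self, key: str) -> None:
        self._seen.discard(key)


def make_handler(store: InMemoryDedupStore, process: Callable[[dict], None]):
    """Wrap `process` so each CloudEvents id is handled at most once per store."""

    def handler(event: bytes, context: Any = None) -> str:
        cloud_event = json.loads(event)
        event_id = cloud_event["id"]
        if not store.add_if_absent(event_id):
            return "duplicate"  # redelivery of an already-processed event
        try:
            process(cloud_event)
        except Exception:
            store.release(event_id)  # let the platform retry reprocess it
            raise
        return "processed"

    return handler
```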

Asynchronous Function Compute invocations support automatic retry on handler failure. The default policy retries three times with exponential backoff between 1 and 60 seconds; the maximum age of an event in the retry queue is configurable up to six hours. After retry exhaustion, events can be routed to a dead-letter destination, either an MNS queue or a RocketMQ topic, preserving the original event for offline inspection and replay rather than discarding it silently. A common pattern is to route failed events to a dedicated MNS queue, with a separate function consuming that queue on a low-frequency schedule for diagnostic logging, separating failure handling from the hot path of normal event processing.
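The retry-then-dead-letter flow can be modelled locally to reason about how many attempts an event receives before it lands in the dead-letter destination. This is a simulation under assumptions, not Function Compute's internal algorithm: the doubling backoff, the 1 to 60 second bounds, and the six-hour age limit mirror the figures above, time is simulated rather than slept, and a Python list stands in for the MNS queue or RocketMQ topic. `RetryPolicy` and `deliver` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class RetryPolicy:
    max_retries: int = 3
    base_delay_s: float = 1.0
    max_delay_s: float = 60.0
    max_event_age_s: float = 6 * 3600

    def delays(self) -> List[float]:
        """Exponential backoff: base, 2*base, 4*base, ... capped at max_delay_s."""
        return [min(self.base_delay_s * (2 ** i), self.max_delay_s) for i in range(self.max_retries)]


def deliver(event: dict, handler: Callable[[dict], None], policy: RetryPolicy,
            dead_letter: List[dict]) -> bool:
    """Simulate the first attempt plus retries; return True on success.

    Elapsed time is simulated by summing the backoff delays (no sleeping).
    """
    elapsed = 0.0
    for delay in [0.0] + policy.delays():
        elapsed += delay
        if elapsed > policy.max_event_age_s:
            break  # event too old: stop retrying
        try:
            handler(event)
            return True
        except Exception:
            continue
    dead_letter.append(event)  # preserve the event for inspection and replay
    return False
```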

For workflows that require strict ordering or transactional semantics, which event-driven architectures do not provide by default, EventBridge can route events to RocketMQ instead of delivering them directly to Function Compute, relying on the ordering guarantees of RocketMQ FIFO topics. The trade-off is throughput: FIFO topics impose per-partition serialisation, which is appropriate for ordered workflows but underutilises Function Compute’s parallel execution capacity for workloads that do not require it.
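The throughput trade-off comes from serialising work per ordering key. The sketch below reproduces that shape locally: events sharing a key are handled strictly in arrival order, while different keys proceed in parallel, so achievable parallelism is bounded by the number of distinct keys. The `key_field` parameter (for example an order ID) is a hypothetical choice, analogous to a RocketMQ message group rather than an implementation of one.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def process_ordered_by_key(events: List[dict], key_field: str,
                           handle: Callable[[dict], None], max_workers: int = 4) -> None:
    """Handle events sequentially within each key and concurrently across keys."""
    groups: Dict[str, List[dict]] = defaultdict(list)
    for event in events:  # appending preserves arrival order inside each group
        groups[event[key_field]].append(event)

    def drain(group: List[dict]) -> None:
        for event in group:
            handle(event)  # next event in this group waits for this one

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # list() consumes the results so an exception in any group propagates.
        list(pool.map(drain, groups.values()))
```

With a single hot key, this degenerates to fully sequential processing, which is exactly the underutilisation described above.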

Observability is delivered through native integration with Log Service, which captures rule match status, target delivery status, function invocation latency, error stack traces, and billable duration as queryable fields. ARMS Application Monitoring can be enabled on Function Compute services for distributed trace correlation across functions, which is helpful when an event triggers a fan-out whose collective behaviour determines workflow success.
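One way to make handler logs joinable with EventBridge delivery records in Log Service is to emit a structured line carrying the CloudEvents id. The sketch below assumes the standard `logging` module is routed to the function's log project; `run_with_log` and the record field names are illustrative, not a schema Log Service requires.

```python
import json
import logging
import time
from typing import Any, Callable, Dict

logger = logging.getLogger("event-handler")


def run_with_log(cloud_event: Dict[str, Any], process: Callable[[dict], None]) -> Dict[str, Any]:
    """Run `process` and log one JSON line keyed on the CloudEvents id."""
    start = time.monotonic()
    status = "ok"
    try:
        process(cloud_event)
    except Exception:
        status = "error"
        raise  # the log line below is still written before propagating
    finally:
        record = {
            "event_id": cloud_event.get("id"),
            "event_type": cloud_event.get("type"),
            "source": cloud_event.get("source"),
            "status": status,
            "duration_ms": round((time.monotonic() - start) * 1000, 3),
        }
        logger.info(json.dumps(record))
    return record
```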

Three operational factors determine whether the architecture performs reliably as event volume grows.

EventBridge and Function Compute together cover the routing and execution layers of an event-driven architecture on Alibaba Cloud. Both services operate as managed offerings, which removes the requirement to provision and operate dedicated message brokers or compute clusters for these layers.

Engineers extending this architecture should consider three alternative patterns. Multi-step workflows with conditional branching and long-running state are better expressed in Serverless Workflow than as chained Function Compute invocations. For high-throughput synchronous request paths, Function Compute HTTP triggers fronted by API Gateway provide a lower-latency entry point than the EventBridge routing layer. For deployments where events originate primarily from a single SaaS partner, a partner event bus dedicated to that source simplifies access control and avoids commingling external and internal event streams.

Disclaimer: The views expressed herein are for reference only and don’t necessarily represent the official views of Alibaba Cloud.
