KubeCon + CloudNativeCon + Open Source Summit China 2021 was held online on December 9 and 10, 2021. Yi Li, Senior Technical Expert at Alibaba Cloud and Head of Container Service R&D, gave a speech entitled "Cloud Future, New Possibilities" at the main forum of the conference. He shared Alibaba Cloud's view of technology trends and its progress in technological innovation, based on large-scale cloud-native practice.

The following is the full transcript.

Yi Li, Senior Technical Expert and Head of Container Service R&D at Alibaba Cloud

Hello everyone, I am Yi Li from Alibaba Cloud. I am currently in charge of the container service product line and am also a member of the CNCF Governing Board. This is my second time speaking online at KubeCon. Today, I will share Alibaba Cloud's practice and thinking in the cloud-native field, as well as our view of the future.

Since 2020, the pandemic has changed the functioning of the global economy and people's lives. Digital production and lifestyle have become the new normal in the post-pandemic era. Today, cloud computing has become the infrastructure of the digital economy, and cloud-native technology is changing the way enterprises migrate to and use the cloud.

Alibaba Cloud defines cloud-native as the software, hardware, and architecture that are born of the cloud and built to help enterprises maximize cloud value. Specifically, cloud-native technology brings three core business values to enterprises:

If cloud-native represents cloud computing today, what will the future be like?

Cloud computing is the power engine of the digital economy, and its growing energy consumption has become a problem that cannot be ignored. It is reported that data centers accounted for more than 2.3% of total domestic power consumption in 2020, and the proportion is increasing year by year. Alibaba Cloud is promoting green computing to reduce data center power usage effectiveness (PUE), for example by using immersion liquid-cooled servers. Beyond cooling, the computing efficiency of data centers still has a lot of room for improvement. According to statistics, the average resource utilization rate of global data centers is less than 20%, which is a huge waste of resources and energy.

The essence of cloud computing is to aggregate discrete computing power into a larger resource pool. Through optimized resource scheduling, we can shift load away from peaks and into valleys, and so achieve the best possible energy efficiency.
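To make the pooling argument concrete, here is a minimal sketch with made-up numbers. It compares the capacity needed when an online service and a batch workload each get their own pool with the capacity needed when batch work is shifted into the valleys of online traffic in one shared pool. The demand curve, the batch volume, and the 8-hour batch window are all hypothetical, and batch work is assumed to be freely divisible across hours.

```python
def dedicated_capacity(online, batch_core_hours, batch_window_hours):
    """Cores needed when online and batch workloads each get their own pool.

    Each pool is sized for its own peak; the batch pool must finish its
    daily work inside a fixed window.
    """
    return max(online) + batch_core_hours / batch_window_hours


def pooled_capacity(online, batch_core_hours):
    """Cores needed when batch work is shifted into the online valleys.

    The pool must cover the online peak, and its idle core-hours over the
    day must be enough to absorb all batch work.
    """
    average_demand = (sum(online) + batch_core_hours) / len(online)
    return max(max(online), average_demand)


def utilization(online, batch_core_hours, capacity):
    """Fraction of provisioned core-hours that do useful work."""
    return (sum(online) + batch_core_hours) / (capacity * len(online))


# Hypothetical hourly demand in cores for an online service (one day).
online = [20, 15, 10, 10, 15, 30, 50, 70, 80, 85, 90, 90,
          85, 80, 80, 85, 90, 95, 100, 95, 80, 60, 40, 30]
batch_core_hours = 600  # hypothetical daily batch work, runnable at any hour

separate = dedicated_capacity(online, batch_core_hours, batch_window_hours=8)
pooled = pooled_capacity(online, batch_core_hours)
print(f"separate pools: {separate:.0f} cores, "
      f"utilization {utilization(online, batch_core_hours, separate):.0%}")
print(f"shared pool:    {pooled:.0f} cores, "
      f"utilization {utilization(online, batch_core_hours, pooled):.0%}")
```

With these illustrative inputs the shared pool needs 100 cores instead of 175, because the idle hours of the online service are enough to absorb the whole batch workload.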

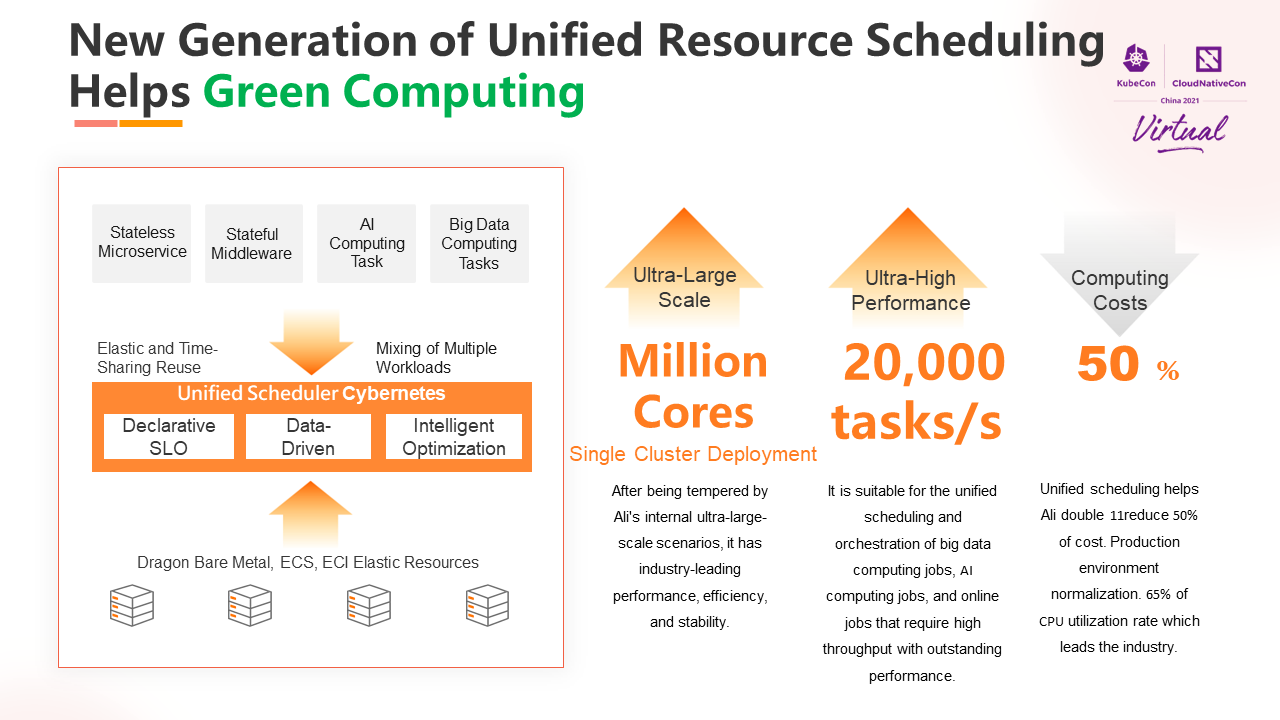

After Alibaba Group completed its comprehensive cloud migration, we launched a new plan: using cloud-native technology to carry out unified resource scheduling across the tens of millions of cores of Alibaba Group's server resources, distributed in dozens of regions around the world, to improve utilization. Thanks to the efforts of teams from Alibaba Group and Alibaba Cloud, the unified scheduling project was a complete success during Double 11 in 2021!

The project is based on Kubernetes and Cybernetes, the unified scheduler developed by Alibaba. It uses one set of scheduling protocols and one system architecture to intelligently schedule the underlying computing resources, and it supports the hybrid deployment of multiple workloads. This improves resource utilization while ensuring application SLOs. E-commerce microservices, middleware, and other applications, search and promotion, and MaxCompute's big data and AI services all run on a unified container platform. This saves Alibaba Group the purchase of tens of thousands of servers each year and hundreds of millions in resource costs.

A single cluster exceeds tens of thousands of nodes and millions of cores. Task scheduling throughput reaches 20,000 tasks per second, which meets the scheduling requirements of high-throughput, low-latency services such as search, big data, and AI. Unified scheduling reduced costs by 50% during Double 11, and CPU utilization in the production environment under regular operation reached 65%.
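The idea behind hybrid deployment can be sketched in a few lines. The following is a toy model, not the Cybernetes algorithm: latency-sensitive pods are placed by their resource requests, and best-effort tasks borrow cores that were requested but are not actually used, minus a safety margin. The `Node` class, the 10% headroom, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    capacity: float           # total CPU cores
    requested: float = 0.0    # cores reserved by latency-sensitive pods
    used: float = 0.0         # cores those pods actually use
    best_effort: float = 0.0  # cores lent to best-effort tasks

    def reclaimable(self, headroom: float = 0.1) -> float:
        """Idle cores that batch work may borrow, minus a safety margin."""
        idle = self.capacity - self.used - self.best_effort
        return max(0.0, idle - headroom * self.capacity)


def place_online(nodes, request, usage):
    """Place a latency-sensitive pod by request, least-loaded node first."""
    for node in sorted(nodes, key=lambda n: n.requested):
        if node.capacity - node.requested >= request:
            node.requested += request
            node.used += usage
            return node.name
    return None


def place_best_effort(nodes, cores):
    """Place a best-effort task on the node with the most reclaimable cores."""
    for node in sorted(nodes, key=lambda n: -n.reclaimable()):
        if node.reclaimable() >= cores:
            node.best_effort += cores
            return node.name
    return None


def utilization(nodes):
    """Cluster-wide fraction of cores doing work."""
    busy = sum(n.used + n.best_effort for n in nodes)
    return busy / sum(n.capacity for n in nodes)


nodes = [Node("node-a", 32), Node("node-b", 32)]
for _ in range(4):
    place_online(nodes, request=16, usage=4)  # pods use far less than requested
print(f"online only:    {utilization(nodes):.1%}")
for _ in range(4):
    place_best_effort(nodes, cores=10)
print(f"with colocation: {utilization(nodes):.1%}")
```

In this toy run the nodes are full by request after the online pods are placed, yet only a quarter of the cores are busy; lending the slack to best-effort tasks raises utilization without touching the online reservations. A production system would also need to throttle or evict best-effort tasks when online usage spikes, which this sketch leaves out.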

Large multi-modal pre-trained AI models are widely recognized as a critical path toward general-purpose artificial intelligence.

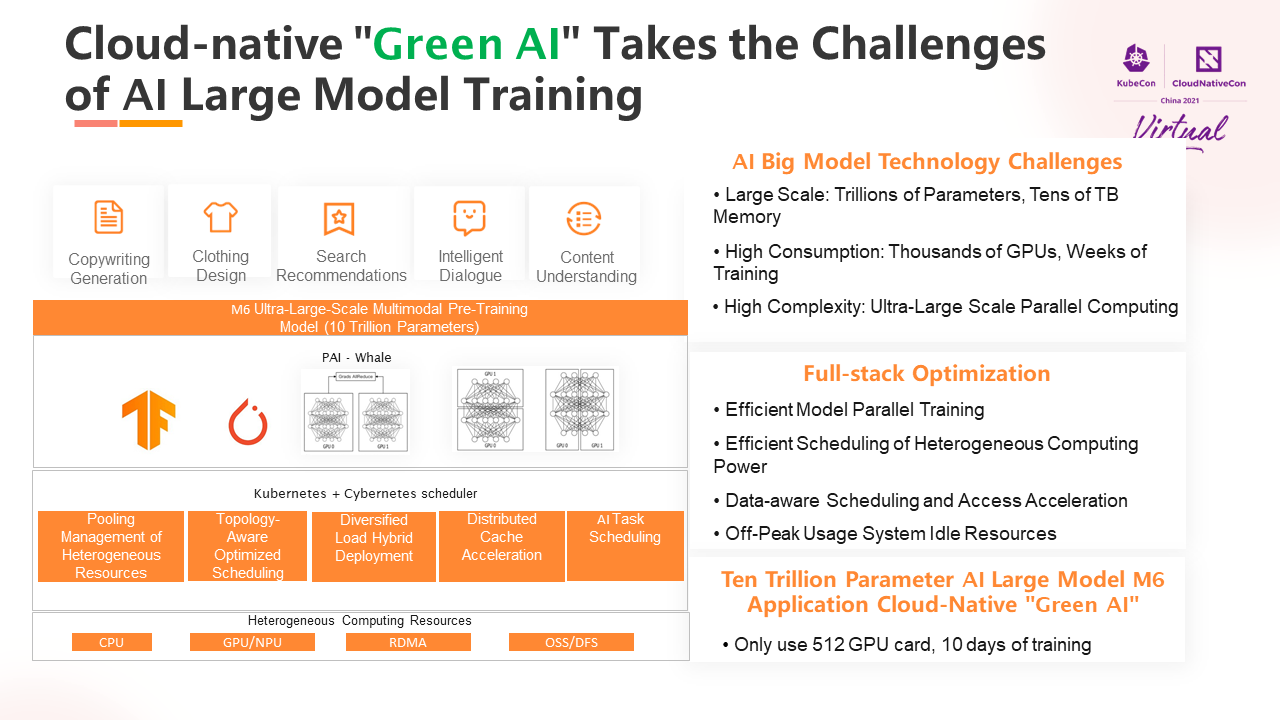

The well-known GPT-3 has hundreds of billions of parameters and can match human performance in some areas of natural language understanding. M6, the ultra-large-scale pre-trained model newly released by Alibaba DAMO Academy, has entered the era of 10 trillion parameters. M6 has multi-modal Chinese task processing capabilities. It is especially good at design, writing, and question answering, and has broad application prospects in e-commerce, clothing, scientific research, and other fields.

Kubernetes' support for deep learning tasks is maturing. However, ultra-large-scale model training still faces severe challenges. Training a model with trillions of parameters requires thousands of GPUs and tens of terabytes of memory, and it takes days to complete.
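A back-of-the-envelope calculation shows where a figure like tens of terabytes can come from. The sketch below assumes a common mixed-precision Adam setup, in which each parameter carries about 16 bytes of training state, and a hypothetical 32 GB of memory per GPU. Neither assumption is taken from M6's published configuration, and activations and buffers are not counted.

```python
# Bytes of training state per parameter under a common mixed-precision
# Adam setup. These are assumptions, not M6's actual configuration.
BYTES_PER_PARAM = {
    "fp16 weights": 2,
    "fp16 gradients": 2,
    "fp32 master weights": 4,
    "fp32 Adam momentum": 4,
    "fp32 Adam variance": 4,
}


def training_state_bytes(num_params):
    """Memory for weights, gradients, and optimizer state (no activations)."""
    return num_params * sum(BYTES_PER_PARAM.values())


def min_gpus_for_state(num_params, gpu_memory_bytes):
    """Fewest GPUs whose combined memory can hold the training state."""
    total = training_state_bytes(num_params)
    return -(-total // gpu_memory_bytes)  # ceiling division on integers


num_params = 10**12        # one trillion parameters
gpu_memory = 32 * 10**9    # hypothetical 32 GB device

print(training_state_bytes(num_params) / 10**12, "TB of training state")
print(min_gpus_for_state(num_params, gpu_memory), "GPUs just to hold it")
```

Under these assumptions a one-trillion-parameter model needs about 16 TB for model and optimizer state alone, which is why such training cannot fit on a handful of devices and depends on careful parallelism and scheduling.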

To address these challenges, Cybernetes extends native Kubernetes with the ability to schedule large-scale AI tasks. GPU computing efficiency is improved through efficient scheduling of heterogeneous computing power, data awareness, and access acceleration. The idle resources of the cluster are fully utilized through off-peak scheduling. It also supports the cloud-native PAI-Whale framework and efficient parallel model training.

In the end, M6 was able to train a trillion-scale super-large model in 10 days using only 512 GPUs. This improves the efficiency and resource utilization of model training. Compared with international models of the same scale, energy consumption is reduced by more than 80%, which makes green AI a reality.

The digital world and the physical world are more integrated because of the maturity of new technologies, such as 5G, the Internet of Things, and AR/VR.

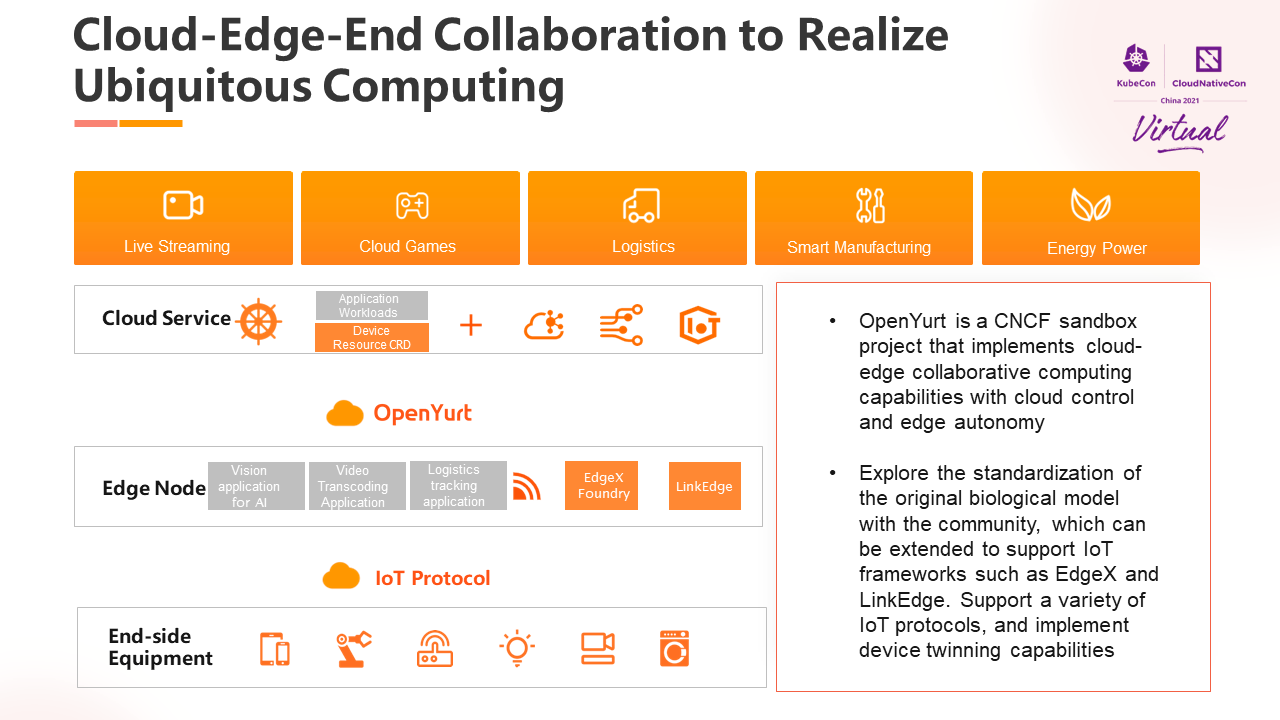

OpenYurt is the industry's first open-source, non-intrusive cloud-native edge computing project. It became a CNCF Sandbox project in November 2020.

Edge computing faces technical challenges, such as decentralized computing power, heterogeneous resources, and weak network connections. OpenYurt builds a cloud-edge collaborative computing framework based on Kubernetes. Over the past two years, it has been adopted in many industries, such as streaming, cloud gaming, logistics, intelligent manufacturing, and City Brain.

We hope to implement device twinning in a cloud-native way and solve the management and O&M challenges of massively distributed devices in IoT scenarios. Through collaboration between OpenYurt and the EdgeX Foundry community, together with engineers from VMware, Intel, and other companies, we hope to achieve unified end-to-end modeling and management of devices and applications. Next, I will introduce a case of using OpenYurt to implement ubiquitous computing.

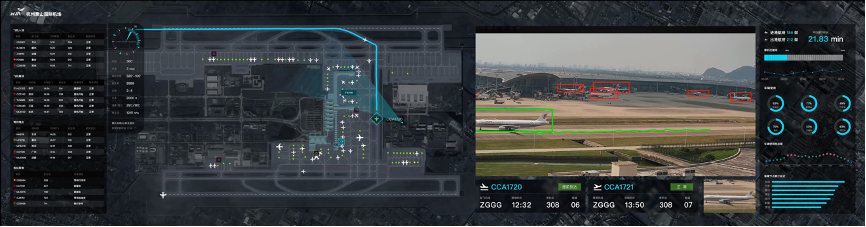

The efficiency of airport operations is crucial to meeting the growing demand for passenger flow and logistics. Meanwhile, the challenges of airport security are becoming more prominent. In the Intelligent Airports project, the cloud-edge-end integrated architecture built with OpenYurt supports the airport perception layer, which is made up of cameras, sensors, and edge AI all-in-one machines. It also makes it possible to build a globally unified management and big data platform on top of the cloud platform. This enables airport-wide data to be shared and analyzed, which in turn enables panoramic video stitching, security monitoring of the entire area, and a visualization of the physical airport across the whole field of view.

With the rapid development of the mobile Internet and the Internet of Things, computing generates massive amounts of information everywhere and at every moment. Making infrastructure trustworthy and protecting private data from theft, tampering, and abuse have become important challenges. With the implementation of the National Data Security Law, privacy-enhanced computing has received more attention from the industry.

Gartner predicts that 60% of large organizations will adopt privacy-enhanced computing technology to process data in untrusted environments or multi-party data analysis use cases by 2025.

An important technical branch of privacy-enhanced computing is data protection through a hardware-based trusted execution environment (TEE). TEE security is a boundary-based security model. Its security boundary is small and exists in the hardware chip itself, so applications executed within a TEE are protected from threats from other applications, other tenants, and the platform provider.

Confidential container technology that combines containers with trusted execution environments improves the protection of sensitive information. On the one hand, containers have a smaller attack surface than a complete OS. On the other hand, the container-based security software supply chain can ensure trusted and traceable application sources.

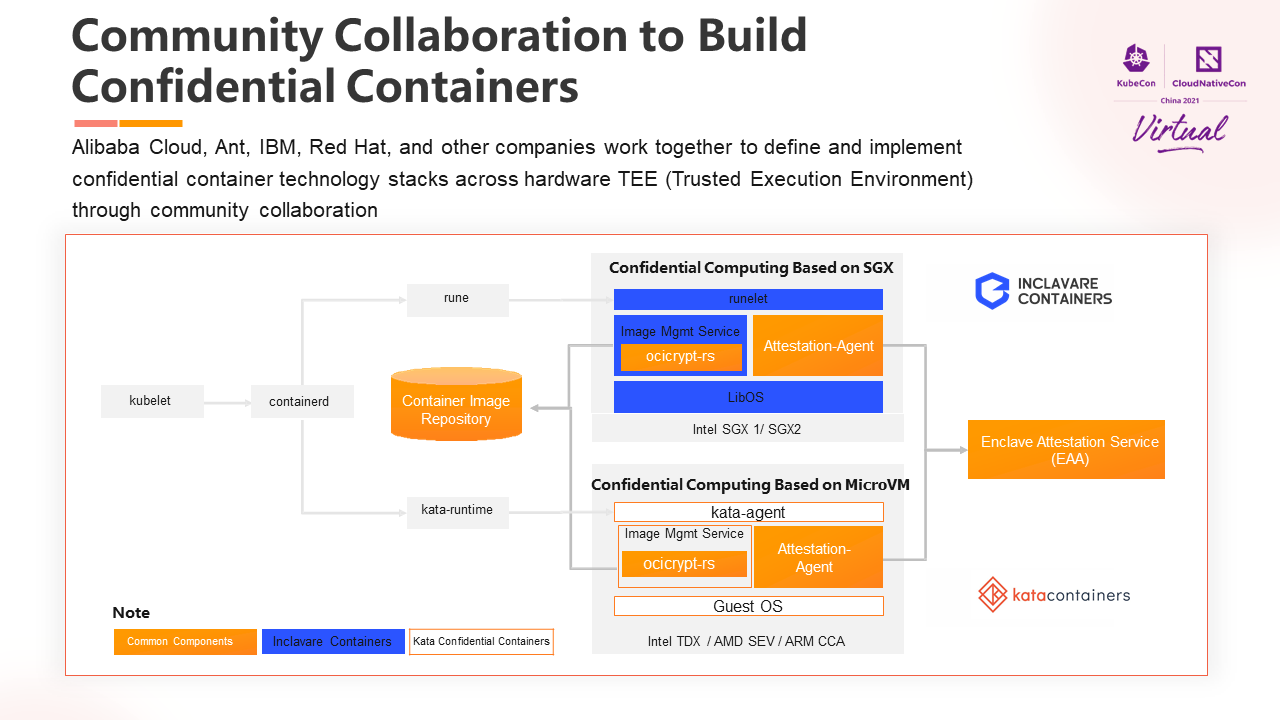

Inclavare Containers, open sourced by Alibaba, is the industry's first container runtime project for confidential computing. It became a CNCF Sandbox project in September 2021. Confidential containers can hide all the complexity of the underlying confidential computing system. Inclavare Containers follows the existing cloud-native standardized interfaces and specifications and is compatible with the existing ecosystem, which will accelerate the popularization of this technology. Engineers from the Kata Containers community are also exploring this area.

As shown in the figure, the SGX confidential containers supported by the Inclavare Containers project and the MicroVM-based confidential containers supported by the Kata Confidential Containers project are similar in technical form. Therefore, the developers of the two projects are cooperating to maximize the value of the technology by reusing each other's technical components. This can also provide a unified developer experience across different TEE implementations, and it reflects the power of the open-source community.

From a technical point of view, the difference from the runC and Kata Containers runtimes is that container images containing sensitive data need to be encrypted and digitally signed in advance. The image is downloaded inside the TEE to ensure the security of the image decryption process. The relevant key is transmitted to the TEE through a secure, trusted channel established by the remote attestation mechanism unique to confidential computing, which ensures that its contents are not leaked or tampered with. Finally, the entire confidential container runs in a hardware-protected TEE at runtime. The data generated during computation is encrypted in memory and integrity-protected.

The effort to popularize digital trust through cloud-native technology is still at an early stage, and I look forward to building it together with everyone!

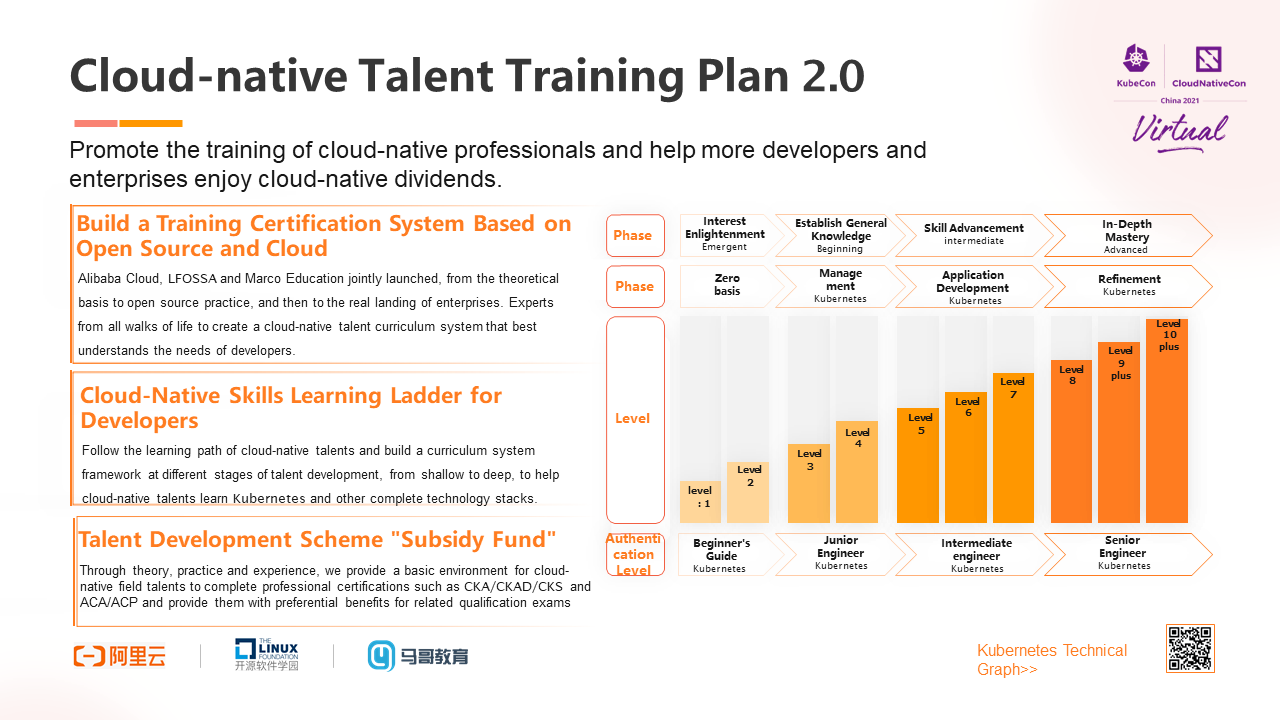

We believe that the development and popularization of any new technology must be driven by professional talents. As a practitioner and pioneer in the field of cloud-native, Alibaba Cloud attaches great importance to empowering developers through its experience.

In August 2021, Alibaba Cloud, Linux Open-Source Software Academy, and CNCF jointly released the Cloud-Native Talent Training Program 2.0. Together with ecosystem partners, Alibaba Cloud is cultivating cloud-native professionals through open skill maps, professional courses, and certification benefits. We welcome more developers and friends to embark on the cloud-native learning journey.

We believe green, ubiquitous, and credible cloud computing will promote the development of the industry and help us achieve a better future. Thank you!
