In audio and video systems, transcoding consumes a large proportion of computing power. You can build an elastic and highly available serverless audio and video processing system by using Function Compute and CloudFlow. This topic compares serverless audio and video processing systems with conventional solutions in terms of engineering efficiency, O&M, performance, and costs. It also describes how to build and use a serverless audio and video processing system.

Background information

You can use dedicated cloud-based transcoding services. However, you may want to build your own transcoding service if you have any of the following requirements:

Auto scaling

You need to improve the elasticity of video processing.

For example, you have deployed a video processing service on a virtual machine or container platform by using FFmpeg, and you want to improve the elasticity and availability of the service.

Engineering efficiency

You need to process multiple video files at a time.

You need to process a large number of oversized videos at a time with high efficiency.

For example, hundreds of 1080p videos, each of which is more than 4 GB in size, are regularly generated every Friday. The videos need to be processed within a few hours.

Custom file processing

You have advanced custom processing requirements.

For example, you want to record the transcoding details in your database each time a video is transcoded. Or you may want popular videos to be automatically prefetched to Alibaba Cloud CDN (CDN) points of presence (PoPs) after the videos are transcoded to relieve pressure on origin servers.

You want to convert formats of audio files, customize the sample rates of audio streams, or reduce noise in audio streams.

You need to directly read and process source files.

For example, your video files are stored in File Storage NAS (NAS) or on disks that are attached to Elastic Compute Service (ECS) instances. If you build a custom video processing system, the system can directly read and process your video files, without migrating the video files to Object Storage Service (OSS).

You want to convert the formats of videos and add more features.

For example, your video processing system can be used to transcode videos, add watermarks to videos, and generate GIF images based on video thumbnails. You want to add more features to your video processing system, such as adjusting the parameters used for transcoding. You also hope that the existing online services provided by the system are not affected when new features are released.

Cost control

You want to obtain high cost efficiency in simple transcoding or lightweight media processing scenarios.

For example, you want to generate a GIF image based on the first few frames of a video, or query the duration of an audio file or a video file. In this case, building a custom media processing system is cost-effective.

You can use a conventional self-managed architecture or the serverless solution to build your own transcoding service. This topic compares the two solutions and describes the procedure for building the serverless solution.

Solutions

Conventional self-managed architecture

Cloud services continue to mature. You can use Alibaba Cloud services, such as ECS, OSS, and CDN, to build an audio and video processing system that stores audio and video files and accelerates their playback.

Serverless architecture

Video processing workflow system

If you need to accelerate the transcoding of large videos or perform complex operations on videos, use CloudFlow to orchestrate functions and build a powerful video processing system. The following figure shows the architecture of the solution.

When a user uploads an .mp4 video to OSS, OSS automatically triggers a flow execution in CloudFlow to transcode the video. You can use this solution to meet the following business requirements:

A video can be transcoded to various formats at the same time and processed based on custom requirements, such as watermarking or synchronizing the updated information about the processed video to a database.

When multiple files are uploaded to OSS at the same time, Function Compute performs auto scaling to process the files in parallel and transcodes the files into multiple formats at the same time.

You can transcode oversized videos by using NAS and video segmentation. Each oversized video is first segmented, transcoded in parallel, and then merged. Proper segmentation intervals improve the transcoding speed of larger videos.

Note: When you segment a video, you split the video stream into multiple segments at specified time intervals and record the information about the segments in an index file.
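The segmentation step described above can be sketched in Python as follows. This is a minimal illustration, not the code that the template deploys: the function names, the part_%03d.mp4 naming pattern, and the segments.csv index file name are assumptions. The FFmpeg options belong to the FFmpeg segment muxer.

```python
import math
from typing import List, Tuple


def plan_segments(duration_s: float, segment_time_s: float) -> List[Tuple[float, float]]:
    """Return (start, end) pairs that cover the video in fixed-length segments."""
    if duration_s <= 0 or segment_time_s <= 0:
        raise ValueError("duration and segment time must be positive")
    count = math.ceil(duration_s / segment_time_s)
    return [
        (i * segment_time_s, min((i + 1) * segment_time_s, duration_s))
        for i in range(count)
    ]


def build_split_command(src: str, work_dir: str, segment_time_s: int) -> List[str]:
    """Build an FFmpeg command that splits src without re-encoding.

    The output pattern and index file name are hypothetical choices.
    """
    return [
        "ffmpeg", "-i", src,
        "-c", "copy",                                  # copy streams; cuts land on keyframes
        "-map", "0",                                   # keep all streams of the input
        "-f", "segment",
        "-segment_time", str(segment_time_s),
        "-segment_list", f"{work_dir}/segments.csv",   # index file that lists the segments
        "-reset_timestamps", "1",
        f"{work_dir}/part_%03d.mp4",
    ]
```

Each planned segment can then be transcoded by a separate function instance, which is why the segment interval determines how much parallelism is available for a large video.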

Benefits of a serverless solution

Improved engineering efficiency

Item | Serverless solution | Conventional self-managed architecture |

Infrastructure | None. You do not need to purchase or manage infrastructure resources. | You must purchase and manage infrastructure resources. |

Development efficiency | You can focus on the development of business logic and use Serverless Devs to orchestrate and deploy resources. | In addition to the development of business logic, you must build an online runtime environment, which includes the installation of software, service configurations, and security updates. |

Parallel and distributed video processing | You can use CloudFlow resource orchestration to process multiple videos in parallel and process a large video in a distributed manner. Alibaba Cloud is responsible for the stability and monitoring. | Strong development capabilities and a sound monitoring system are required to ensure the stability of the video processing system. |

Training costs | You only need to write code in programming languages based on your business requirements and be familiar with FFmpeg. | In addition to programming languages and FFmpeg, you may also need to use Kubernetes and ECS. This requires you to have a better understanding of services, terms, and parameters. |

Business cycle | The solution consumes approximately three man-days, including two man-days for development and debugging and one man-day for stress testing and checking. | In addition to the time required for business logic development, the system requires at least 30 man-days to complete the following tasks: hardware procurement, software and environment configurations, system development, testing, monitoring and alerting, and canary release. |

Auto scaling and O&M-free

Item | Serverless solution | Conventional self-managed architecture |

Elasticity and high availability | Function Compute can implement auto scaling in milliseconds to quickly scale out the underlying resources in response to traffic surges. Function Compute also provides excellent transcoding performance and eliminates the need for O&M. | To perform auto scaling, you must create a Server Load Balancer (SLB) instance. Scaling with an SLB instance is slower than scaling with Function Compute. |

Monitoring, alerting, and queries | More fine-grained metrics are provided for execution of flows in CloudFlow and execution of functions in Function Compute. You can query the latency and logs of each function execution. The solution also uses a more comprehensive monitoring and alerting mechanism. | Only metrics on auto scaling or containers can be provided. |

Procedure of building a video processing workflow

Prerequisites

Relevant services are activated and an OSS bucket is created.

Function Compute is activated. For more information, see Step 1: Activate Function Compute.

Object Storage Service (OSS) is activated and a bucket is created. For more information, see Create buckets.

CloudFlow is activated.

File Storage NAS is activated.

Virtual Private Cloud is activated.

Serverless Devs is installed and configured.

Serverless Devs and dependencies are installed. For more information, see Install Serverless Devs and dependencies.

Serverless Devs is configured. For more information, see Configure Serverless Devs.

Procedure

This solution uses CloudFlow to orchestrate functions into a video processing system. Because the code of multiple functions and a workflow must be configured, this example uses Serverless Devs to deploy the system.

Access the multimedia-process-flow template.

Click Deploy in the Development & Experience section to go to the Function Compute application center to deploy the application.

On the Create Application page, configure parameters and then click Create and Deploy Default Environment.

The following table describes the major parameters that you need to configure. Use the default values for other parameters.

Parameter

Description

Basic Configurations

Deployment Type

Select Directly Deploy.

Role Name

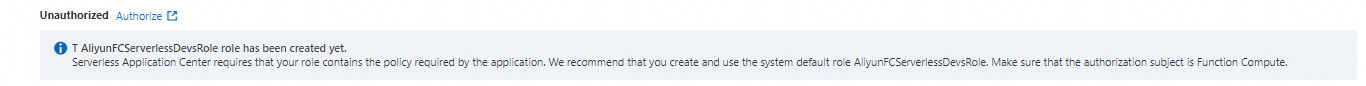

If you use an Alibaba Cloud account to create an application in the application center for the first time, click Authorize Now to go to the Role Templates page, create the

AliyunFCServerlessDevsRoleservice-linked role, and then click Confirm Authorization Policy.

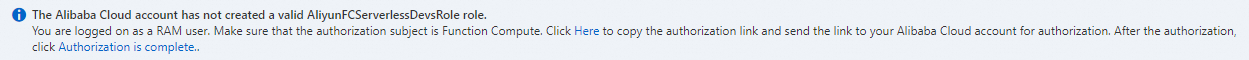

If you are using a RAM user, follow the instructions on the page to copy the authorization link to your Alibaba Cloud account for authorization. After you complete the authorization, click Authorized.

Note

NoteIf the Failed to obtain the role message appears, contact the corresponding Alibaba Cloud account to attach the

AliRAMReadOnlyAccessandAliyunFCFullAccesspolicies to the current RAM user. For more information, see Grant permissions to a RAM user by using an Alibaba Cloud account.

Advanced Settings

Region

Select the region in which you want to deploy the application.

Workflow RAM Role ARN

The service-linked role that is used to execute the workflow. Create a service role in advance and attach the AliyunFCInvocationAccess policy to the service-linked role.

Role Service Function Compute

The service-linked role that is used by Function Compute to access other cloud services. If you do not have special requirements, we recommend that you use the default service role AliyunFCDefaultRole provided by Function Compute.

Object Storage Bucket Name

Enter the name of a bucket in the same region as the workflow and function.

Prefix

The prefix of the directory in which the original video is stored. In this example, the prefix is

src.The directory in which the transcoded video is saved

The directory in which the transcoded video is stored. In this example, the directory is

dst.OSS trigger RAM role ARN

We recommend that you use the AliyunOSSEventNotificationRole role. If you create the application for the first time, click Create Role and complete authorization. Then, the AliyunOSSEventNotificationRole role is created.

After 1 to 2 minutes, the application is deployed. The system automatically creates five functions and the multimedia-process-flow-3oih workflow. You can log on to the Function Compute console and CloudFlow console to view the creation results. The functions have the following features:

oss-trigger-workflow: triggers the execution of the workflow when a new video is uploaded to the specified directory.

split: segments a video in the working directory based on the segment length.

transcode: transcodes a segmented video based on the specified video format.

merge: splices the video after the segments are transcoded.

after-process: clears the working directory.
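As a rough illustration of what oss-trigger-workflow has to do, the following Python sketch converts an OSS trigger event into workflow inputs. The function name build_workflow_inputs is hypothetical, the event layout (an events list with oss.bucket.name and oss.object.key) is assumed to follow the OSS trigger event format, and the output field names are taken from the example input of execution in this topic. The function that the template deploys may be implemented differently, and the call that starts the workflow is omitted.

```python
import json
from typing import Any, Dict, List


def build_workflow_inputs(event: bytes, output_prefix: str,
                          segment_time_seconds: int,
                          dst_formats: List[str]) -> List[Dict[str, Any]]:
    """Turn an OSS trigger event into one workflow input per uploaded object.

    Assumption: the event is JSON with an "events" list whose items carry
    the bucket name and object key under the "oss" field.
    """
    payload = json.loads(event)
    inputs = []
    for record in payload.get("events", []):
        oss_info = record["oss"]
        inputs.append({
            "oss_bucket_name": oss_info["bucket"]["name"],
            "video_key": oss_info["object"]["key"],
            "output_prefix": output_prefix,
            "segment_time_seconds": segment_time_seconds,
            "dst_formats": list(dst_formats),  # copy so each input owns its list
        })
    return inputs
```

Producing one input per uploaded object lets each video run as its own workflow execution, which matches the parallel processing behavior described for simultaneous uploads.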

Check whether the audio and video processing system is built.

Log on to the CloudFlow console. In the top navigation bar, select a region.

On the workflows page, click the multimedia-process-flow-3oih workflow. On the Execution Records tab of the details page of the workflow, click Started Execution to execute the workflow. In the Execute Workflow panel, specify Execution Name and Input of Execution and then click OK. Example of the input of execution:

{
  "oss_bucket_name": "buckettestfnf",
  "video_key": "source/test.mov",
  "output_prefix": "outputs",
  "segment_time_seconds": 15,
  "dst_formats": [
    "mp4",
    "flv"
  ]
}

If Execution Succeeded is displayed next to Execution Status on the Basic Information tab of the Execution Details page, the execution is complete.

Log on to the OSS console and go to the /outputs directory of the bucket, in which the transcoded video is stored. Then, view the transcoded video.

If the transcoded video file is stored in the /outputs directory, the audio and video processing system works as expected. The following figure shows that the video has been transcoded and merged.
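Before you submit an input of execution, you can check it locally. The following Python sketch validates the five fields that appear in the example input of execution. The function name and the validation rules are assumptions for illustration, and the workflow itself may accept or require other fields.

```python
import json
from typing import Any, Dict

# Field names come from the example input of execution; the types are assumed.
REQUIRED_FIELDS = {
    "oss_bucket_name": str,
    "video_key": str,
    "output_prefix": str,
    "segment_time_seconds": int,
    "dst_formats": list,
}


def validate_execution_input(raw: str) -> Dict[str, Any]:
    """Parse the JSON input and check the fields used in the example."""
    data = json.loads(raw)
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            raise ValueError(f"missing field: {name}")
        if not isinstance(data[name], expected_type):
            raise ValueError(f"{name} must be of type {expected_type.__name__}")
    if data["segment_time_seconds"] <= 0:
        raise ValueError("segment_time_seconds must be positive")
    if not data["dst_formats"]:
        raise ValueError("dst_formats must list at least one format")
    return data
```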

FAQ

I want to record the transcoding details in my database each time a video is transcoded. I also want the popular videos to be automatically prefetched to CDN points of presence (PoPs) after the videos are transcoded to relieve pressure on the origin server. How can I implement such advanced custom processing features?

For more information about the deployment scheme, see Procedure. You can add custom operations to the media processing flow itself, or add steps that run before the flow begins or after it ends. For example, you can add a preprocessing step before transcoding or a follow-up step that writes the transcoding details to your database.
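A follow-up step that records transcoding details could look like the following Python sketch. It uses the sqlite3 module from the standard library as a stand-in for your own database, and the table name, column names, and function name are hypothetical. In a real function, you would replace the connection with a client for your database.

```python
import sqlite3
import time


def record_transcode(conn: sqlite3.Connection, video_key: str,
                     dst_format: str, duration_ms: int) -> None:
    """Insert one row that describes a finished transcoding job."""
    # The schema is an assumption made for this sketch.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS transcode_log ("
        "video_key TEXT, dst_format TEXT, duration_ms INTEGER, finished_at REAL)"
    )
    conn.execute(
        "INSERT INTO transcode_log VALUES (?, ?, ?, ?)",
        (video_key, dst_format, duration_ms, time.time()),
    )
    conn.commit()
```

For example, calling record_transcode(sqlite3.connect(":memory:"), "src/test.mov", "mp4", 1234) stores one row in an in-memory database.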

My custom video processing workflow contains multiple operations, such as transcoding videos, adding watermarks to videos, and generating GIF images based on video thumbnails. After that, I want to add more features to my video processing system, such as adjusting the parameters used for transcoding. I also hope that the existing online services provided by the system are not affected when new features are released. How can I achieve this goal?

For more information about the deployment scheme, see Procedure. CloudFlow only orchestrates and invokes functions, so you need to update only the corresponding functions. In addition, you can use versions and aliases to perform canary releases of functions. For more information, see Manage versions.
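The idea behind a canary release can be illustrated with a small simulation. The following Python sketch assumes that an alias routes most invocations to a main version and a configurable fraction to a new version. It does not call any Function Compute API, and the function name and parameters are hypothetical.

```python
import random
from typing import Dict, Optional


def pick_version(main_version: str, canary_weights: Dict[str, float],
                 rng: Optional[random.Random] = None) -> str:
    """Pick the version that serves one invocation under weighted routing."""
    rng = rng or random.Random()
    total = sum(canary_weights.values())
    if any(w < 0 for w in canary_weights.values()) or total > 1:
        raise ValueError("weights must be non-negative and sum to at most 1")
    roll = rng.random()  # uniform in [0, 1)
    cumulative = 0.0
    for version, weight in canary_weights.items():
        cumulative += weight
        if roll < cumulative:
            return version
    # Invocations that are not claimed by a canary weight go to the main version.
    return main_version
```

With canary_weights set to {"2": 0.1}, about one in ten simulated invocations is served by version 2 while the rest stay on the main version, so a faulty release affects only a small share of traffic.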

I require only simple transcoding services or lightweight media processing services. For example, I want to obtain a GIF image that is generated based on the first few frames of a video, or query the duration of an audio file or a video file. In this case, building a custom media processing system is cost-effective. How can I achieve this goal?

Function Compute lets you run custom code, so you can run specific FFmpeg commands in a function to achieve your goal. For a typical sample project, see fc-oss-ffmpeg.
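The two lightweight tasks mentioned in the question map to short FFmpeg and ffprobe commands. The following Python sketch builds those commands. The helper names and default values are assumptions, and running probe_duration requires an ffprobe binary on PATH. This sketch is independent of the fc-oss-ffmpeg sample project and may differ from it.

```python
import subprocess
from typing import List


def gif_command(src: str, dst: str, seconds: float = 3.0,
                fps: int = 10, width: int = 320) -> List[str]:
    """Build an FFmpeg command that turns the first seconds of src into a GIF."""
    return [
        "ffmpeg", "-y",
        "-t", str(seconds),                     # read only the first `seconds` of the input
        "-i", src,
        "-vf", f"fps={fps},scale={width}:-1",   # lower frame rate, keep aspect ratio
        dst,
    ]


def duration_command(src: str) -> List[str]:
    """Build an ffprobe command that prints the media duration in seconds."""
    return [
        "ffprobe", "-v", "error",
        "-show_entries", "format=duration",
        "-of", "default=noprint_wrappers=1:nokey=1",
        src,
    ]


def probe_duration(src: str) -> float:
    """Run ffprobe and parse its output; requires ffprobe on PATH."""
    out = subprocess.run(duration_command(src), check=True,
                         capture_output=True, text=True).stdout
    return float(out.strip())
```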

My source video files are stored in NAS or on disks that are attached to ECS instances. I want to build a custom video processing system that can directly read and process my mezzanine video files, without migrating them to OSS. How can I achieve this goal?

You can integrate Function Compute with NAS to allow Function Compute to process the files that are stored in NAS. For more information, see Configure a NAS file system.
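After a NAS file system is mounted, a function can read source files through ordinary file paths. The following Python sketch lists video files under a mount directory. The function name and the set of file extensions are assumptions, and the mount directory depends on your NAS configuration.

```python
import os
from typing import Iterator

# Extensions treated as video files in this sketch; adjust to your sources.
VIDEO_EXTENSIONS = {".mp4", ".mov", ".flv", ".mkv"}


def iter_videos(mount_dir: str) -> Iterator[str]:
    """Yield paths of video files under mount_dir in a stable, sorted order."""
    for root, dirs, files in os.walk(mount_dir):
        dirs.sort()  # visit subdirectories in a deterministic order
        for name in sorted(files):
            if os.path.splitext(name)[1].lower() in VIDEO_EXTENSIONS:
                yield os.path.join(root, name)
```

Each path that the generator yields can be passed directly to FFmpeg as an input, so the source files do not need to be copied to OSS first.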