This tutorial shows you how to build a Retrieval-Augmented Generation (RAG)-based large language model (LLM) chatbot using Platform for AI (PAI) Elastic Algorithm Service (EAS) and ApsaraDB RDS for PostgreSQL as the vector database.

In this tutorial, you:

Set up a vector database in an ApsaraDB RDS for PostgreSQL instance

Deploy a RAG-based chatbot through the EAS console

Test the chatbot using the web UI with retrieval, LLM, and RAG query modes

Call the chatbot API from cURL and Python

How it works

RAG addresses the accuracy limits of standalone LLM applications by combining retrieval with generation. When a query arrives, the system retrieves relevant documents from the vector database, injects them into the prompt, and sends the enriched prompt to the LLM — producing answers grounded in your specific knowledge base rather than the model's training data alone. No model retraining is needed.
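The flow described above can be illustrated with a self-contained toy in Python. It is not the PAI-RAG implementation. The hand-made 3-dimensional vectors stand in for an embedding model, a cosine-similarity sort stands in for the vector search in RDS PostgreSQL, and `fake_llm` is a hypothetical placeholder for the LLM call.

```python
import math

# Toy knowledge base of (chunk text, embedding) pairs. In the real service the
# embeddings come from the embedding model and are stored in RDS PostgreSQL;
# these hand-made 3-dimensional vectors are for illustration only.
KNOWLEDGE_BASE = [
    ("PAI is a machine learning platform from Alibaba Cloud.", [0.9, 0.1, 0.0]),
    ("EAS deploys models as online inference services.", [0.2, 0.9, 0.1]),
    ("pg_jieba segments Chinese text in PostgreSQL.", [0.0, 0.2, 0.9]),
]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, top_k=2):
    """Retrieval step: return the top_k chunks most similar to the query vector."""
    ranked = sorted(KNOWLEDGE_BASE, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:top_k]]


def build_prompt(question, docs):
    """Augmentation step: inject the retrieved chunks into the prompt."""
    context = "\n".join(f"- {doc}" for doc in docs)
    return f"Answer using only the context below.\n{context}\n\nQuestion: {question}"


def fake_llm(prompt):
    """Hypothetical stand-in for the LLM call (generation step)."""
    return f"[stub answer generated from a {len(prompt)}-character prompt]"


def rag_answer(question, query_vec, top_k=2):
    """Retrieve, augment, then generate."""
    return fake_llm(build_prompt(question, retrieve(query_vec, top_k)))


# The query vector would normally come from embedding the question itself.
print(rag_answer("What is PAI?", [1.0, 0.0, 0.0]))
```

Updating the knowledge base amounts to adding entries to `KNOWLEDGE_BASE`; the model behind `fake_llm` never changes, which mirrors the "no retraining" property of RAG.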

ApsaraDB RDS for PostgreSQL serves as the vector database in this architecture. The pg_jieba extension enables keyword-based retrieval and recall for Chinese text.

Prerequisites

Before you begin, ensure that you have:

A Virtual Private Cloud (VPC), vSwitch, and security group. For more information, see Create and manage a VPC and Create a security group.

(Optional) An Object Storage Service (OSS) bucket or Apsara File Storage NAS (NAS) file system, if you plan to use a custom fine-tuned model. For more information, see Get started by using the OSS console or Create a file system.

If you use Faiss to build the vector database, an OSS bucket is required.

Limitations

The ApsaraDB RDS for PostgreSQL instance and EAS must reside in the same region.

Conversation length is bounded by the token limit of the LLM service, so long multi-turn conversations may exceed it. To reduce the likelihood of reaching the limit in single-turn scenarios, disable Chat history in the web UI. For details, see Disable chat history.

Step 1: Set up the vector database

Create an ApsaraDB RDS for PostgreSQL instance. Place it in the same region as your planned EAS deployment to enable VPC-internal connectivity. For more information, see Create an instance.

Create a privileged account and a database for the instance. For more information, see Create a database and an account.

Set Account Type to Privileged Account.

When creating the database, select the privileged account from the Authorized By drop-down list.

Get the database connection details.

Go to the Instances page. In the top navigation bar, select the region where your instance resides, then click the instance ID.

In the left-side navigation pane, click Database Connection.

Note the endpoint and port number. You will need them when deploying the chatbot.

Add pg_jieba to the shared_preload_libraries parameter. On the parameters page of the instance, find shared_preload_libraries and append pg_jieba to its Running Parameter Value. For example, change 'pg_stat_statements,auto_explain,pg_cron' to 'pg_stat_statements,auto_explain,pg_cron,pg_jieba'. In PostgreSQL, a new shared_preload_libraries value takes effect only after the instance restarts. For more information, see Modify the parameters of an ApsaraDB RDS for PostgreSQL instance.

The pg_jieba extension segments Chinese text for keyword-based retrieval and recall. For more information, see Use the pg_jieba extension.
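The parameter edit and a pre-deployment check can be sketched in Python. The sketch assumes the psycopg2 driver (`pip install psycopg2-binary`) and network access to the endpoint noted earlier in this step; the function names are hypothetical and not part of any Alibaba Cloud tool. `with_pg_jieba` applies the append-only rule (keep the existing entries, add pg_jieba once), and `pg_jieba_is_preloaded` reads the value the server is actually running with via PostgreSQL's `SHOW shared_preload_libraries` command.

```python
def parse_preload_libraries(value: str) -> list[str]:
    """Split a value such as "'a,b'" or "a, b" into ['a', 'b']."""
    return [lib.strip() for lib in value.strip().strip("'\"").split(",") if lib.strip()]


def with_pg_jieba(value: str) -> str:
    """Return the parameter value with pg_jieba appended if it is missing."""
    libs = parse_preload_libraries(value)
    if "pg_jieba" not in libs:
        libs.append("pg_jieba")
    return "'" + ",".join(libs) + "'"


def pg_jieba_is_preloaded(host: str, port: int, dbname: str, user: str, password: str) -> bool:
    """Connect to the RDS instance and check whether pg_jieba is preloaded."""
    import psycopg2  # imported here so the pure helpers above work without the driver

    conn = psycopg2.connect(host=host, port=port, dbname=dbname, user=user,
                            password=password, connect_timeout=5)
    try:
        with conn.cursor() as cur:
            cur.execute("SHOW shared_preload_libraries")
            (value,) = cur.fetchone()
    finally:
        conn.close()  # psycopg2's connection context manager does not close it
    return "pg_jieba" in parse_preload_libraries(value)


print(with_pg_jieba("'pg_stat_statements,auto_explain,pg_cron'"))
# prints 'pg_stat_statements,auto_explain,pg_cron,pg_jieba'
```

For example, `pg_jieba_is_preloaded("<endpoint>", 5432, "<database>", "<account>", "<password>")` should return `True` once the extension is loaded; the angle-bracket values are placeholders for your own connection details.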

Step 2: Deploy the RAG-based chatbot

Log on to the Platform for AI (PAI) console.

In the left-side navigation pane, click Workspaces. On the Workspaces page, find your workspace and click its name. If no workspace exists, create one. For more information, see Create a workspace.

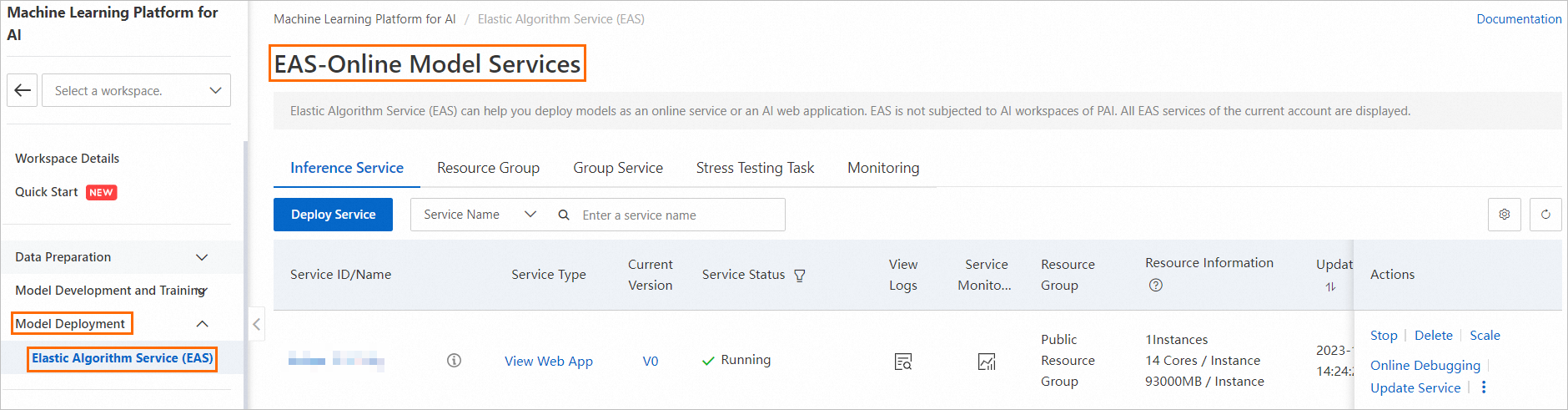

In the left-side navigation pane, choose Model Deployment > Elastic Algorithm Service (EAS).

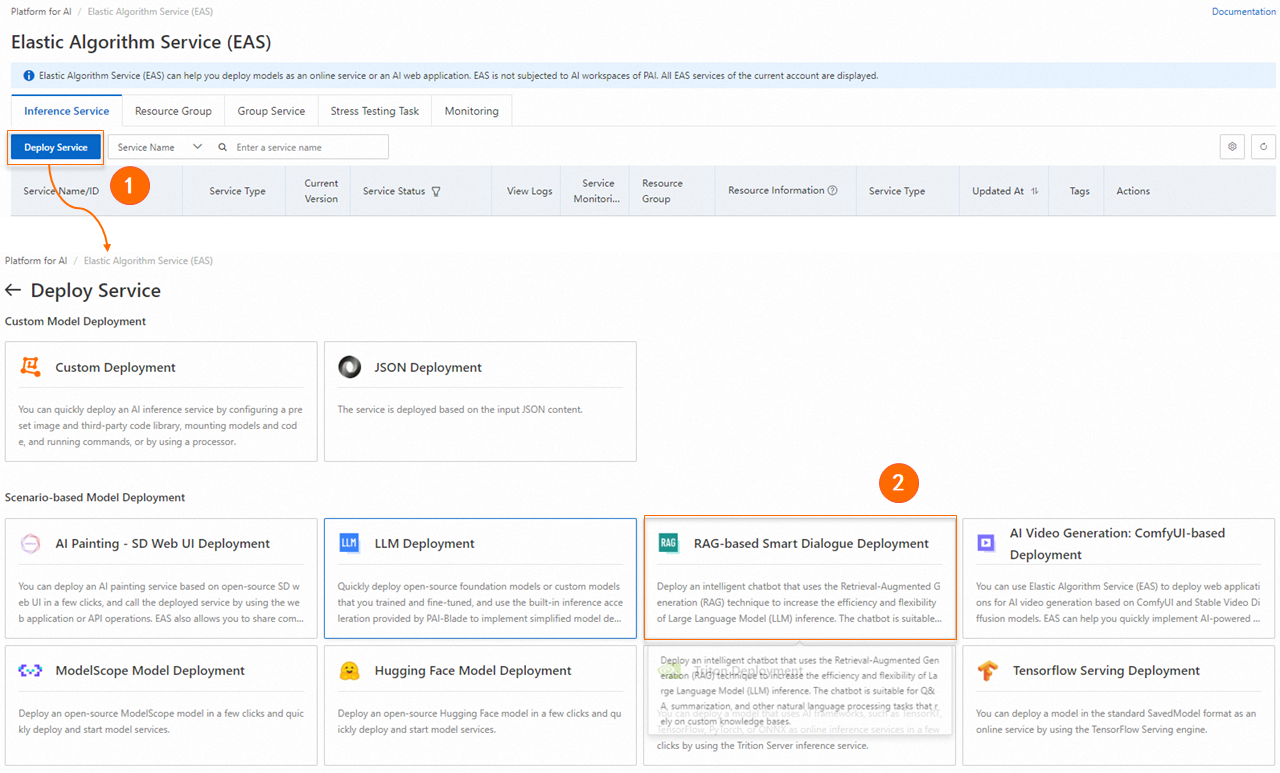

On the Elastic Algorithm Service (EAS) page, click Deploy Service. In the Scenario-based Model Deployment section, click RAG-based Smart Dialogue Deployment.

On the RAG-based LLM Chatbot Deployment page, configure the parameters.

Basic information

| Parameter | Description |
|---|---|
| Service Name | The name of the service. |
| Model Source | The model source. Valid values: Open Source Model and Custom Fine-tuned Model. |
| Model Type | The model type. Select one based on your requirements. If Model Source is Custom Fine-tuned Model, also configure the parameter quantity and precision. |
| Model Settings | Required when Model Source is Custom Fine-tuned Model. Specify where the fine-tuned model file is stored. The model file format must be compatible with Hugging Face Transformers. Valid values: Mount OSS (select the OSS path) and Mount NAS (select the NAS file system and source path). |

Resource configuration

| Parameter | Description |
|---|---|
| Resource Configuration | If Model Source is Open Source Model, the system selects an instance type automatically. If Model Source is Custom Fine-tuned Model, select an instance type that matches your model. For more information, see Deploy LLM applications in EAS. |
| Inference Acceleration | Available for Qwen, Llama2, ChatGLM, and Baichuan2 models on A10 or GU30 instances. Valid values: BladeLLM Inference Acceleration (high concurrency and low latency) and Open-source vLLM Inference Acceleration. |

Vector database settings

| Parameter | Description |
|---|---|
| Vector Database Type | Select RDS PostgreSQL. |
| Host Address | The internal or public endpoint of the RDS instance. Use the internal endpoint when the RAG application and the database are in the same region. If they are in different regions, use the public endpoint. For more information, see Apply for or release a public endpoint. |
| Port | The port number. Default: 5432. |
| Database | The name of the database that you created in Step 1. |
| Table Name | A new or existing table name. If you use an existing table, its schema must be compatible with the RAG-based LLM chatbot format. |
| Account | The privileged account of the RDS instance. |
| Password | The password of the privileged account. |

VPC configuration

| Parameter | Description |
|---|---|
| VPC | If Host Address is an internal endpoint, select the VPC of the RDS instance. If Host Address is a public endpoint, configure a VPC and vSwitch, create a NAT gateway and an elastic IP address (EIP) for internet access, and add the EIP to the IP address whitelist of the RDS instance. For more information, see Use the SNAT feature of an Internet NAT gateway to access the Internet and Configure an IP address whitelist. |
| vSwitch | The vSwitch associated with the VPC. |
| Security Group Name | The security group. Do not use the security group named created_by_rds; it is reserved for system access control. |

Click Deploy. When the Service Status column shows Running, the chatbot is deployed.

Step 3: Test with the web UI

Use the built-in web UI to validate chatbot performance before integrating it into your application.

Configure the chatbot

On the EAS page, click View Web App in the Service Type column.

Set the embedding model:

Embedding Model Name: Four models are available. A recommended model is selected by default.

Embedding Dimension: Auto-configured after you select an Embedding Model Name.

Click Connect PostgreSQL to verify the connection to the RDS vector database. The connection settings come from the deployment configuration and cannot be modified here.

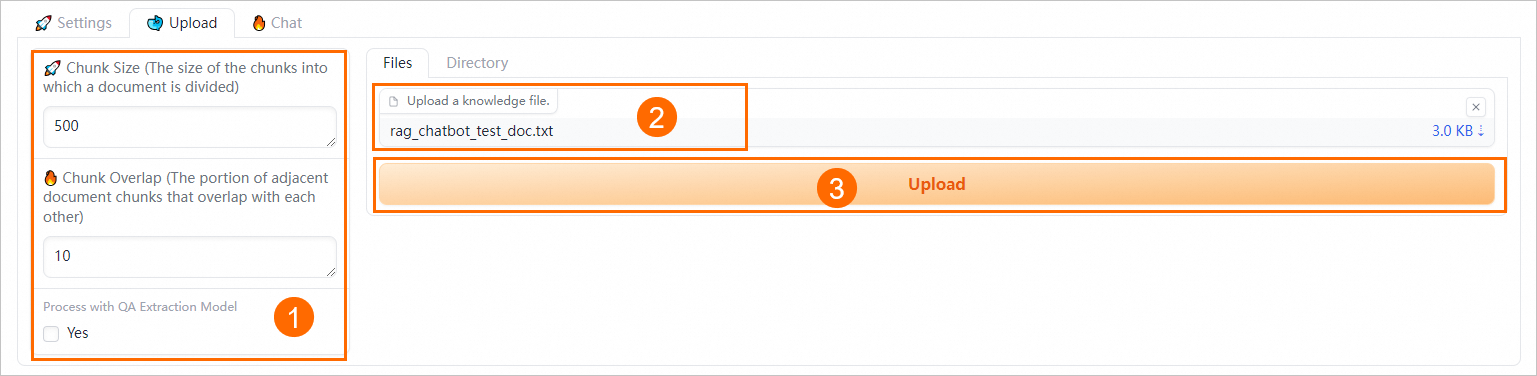

Upload knowledge base files

On the Upload tab, upload your business data files.

Supported file formats: TXT, PDF, XLSX, XLS, CSV, DOCX, DOC, Markdown, and HTML.

Configure chunking parameters to control how documents are split.

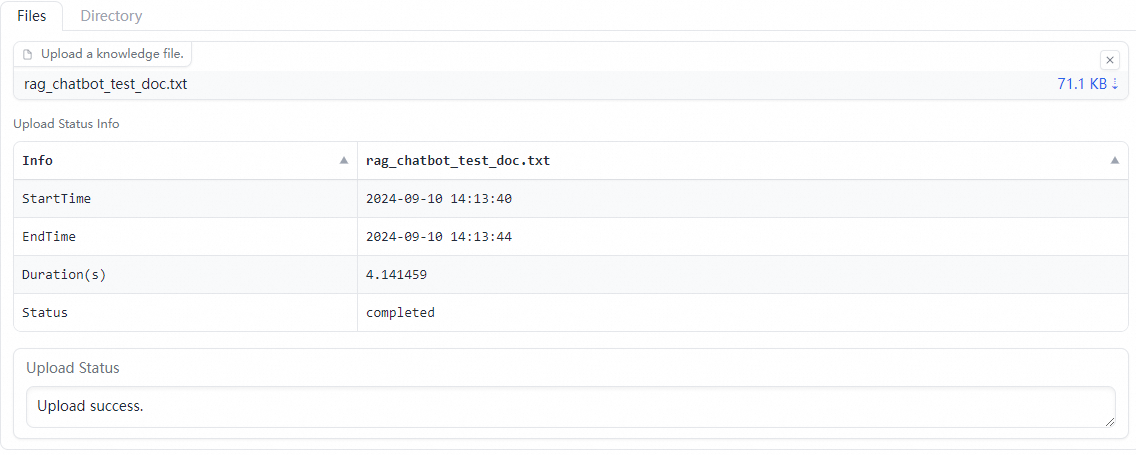

| Parameter | Description | Default |
|---|---|---|
| Chunk Size | The size of each chunk, in bytes. | 500 |
| Chunk Overlap | The overlap between adjacent chunks. | 10 |
| Process with QA Extraction Model | When set to Yes, the system extracts question-answer pairs from uploaded files, improving retrieval precision. | N/A |

Upload files on the Files tab, or upload a directory on the Directory tab. For example, upload the rag_chatbot_test_doc.txt file to test the chatbot.

The system runs data cleansing (text extraction and hyperlink replacement) and semantic-based chunking before storing the data.
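To build intuition for Chunk Size and Chunk Overlap, the sketch below splits text into fixed-size windows in which each window shares `overlap` characters with the previous one. It is a simplification under stated assumptions: it counts characters rather than bytes and ignores the semantic-based chunking that the service performs, so real chunk boundaries will differ.

```python
def chunk_text(text: str, chunk_size: int = 500, overlap: int = 10) -> list[str]:
    """Split text into windows of chunk_size characters; neighbors share `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


sample = "abcdefghij" * 120  # 1,200 characters
parts = chunk_text(sample)
print(len(parts), [len(p) for p in parts])  # prints 3 [500, 500, 220]
```

A larger overlap keeps a sentence that straddles a boundary retrievable from either neighboring chunk, at the cost of storing more duplicated text.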

Configure inference parameters

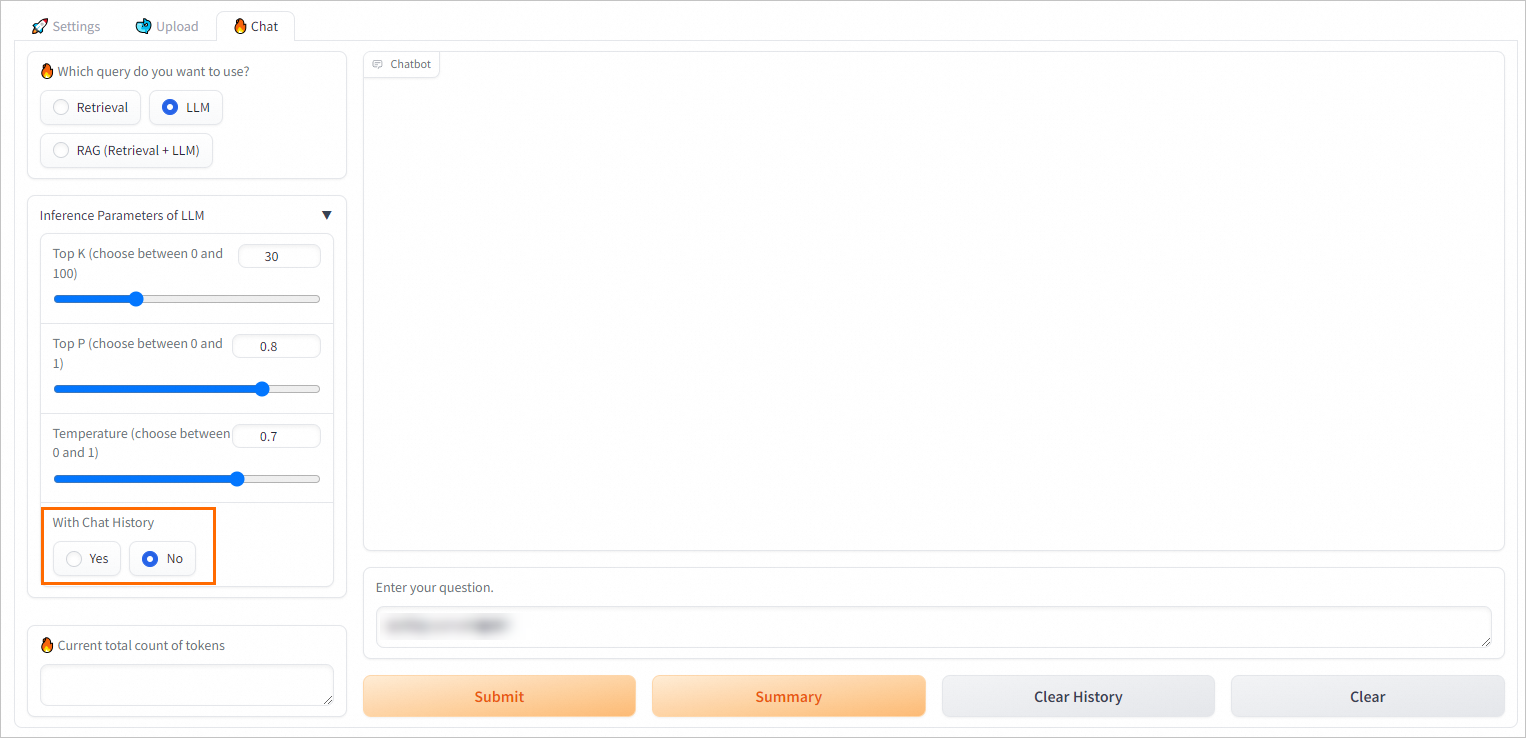

On the Chat tab, configure retrieval and generation parameters.

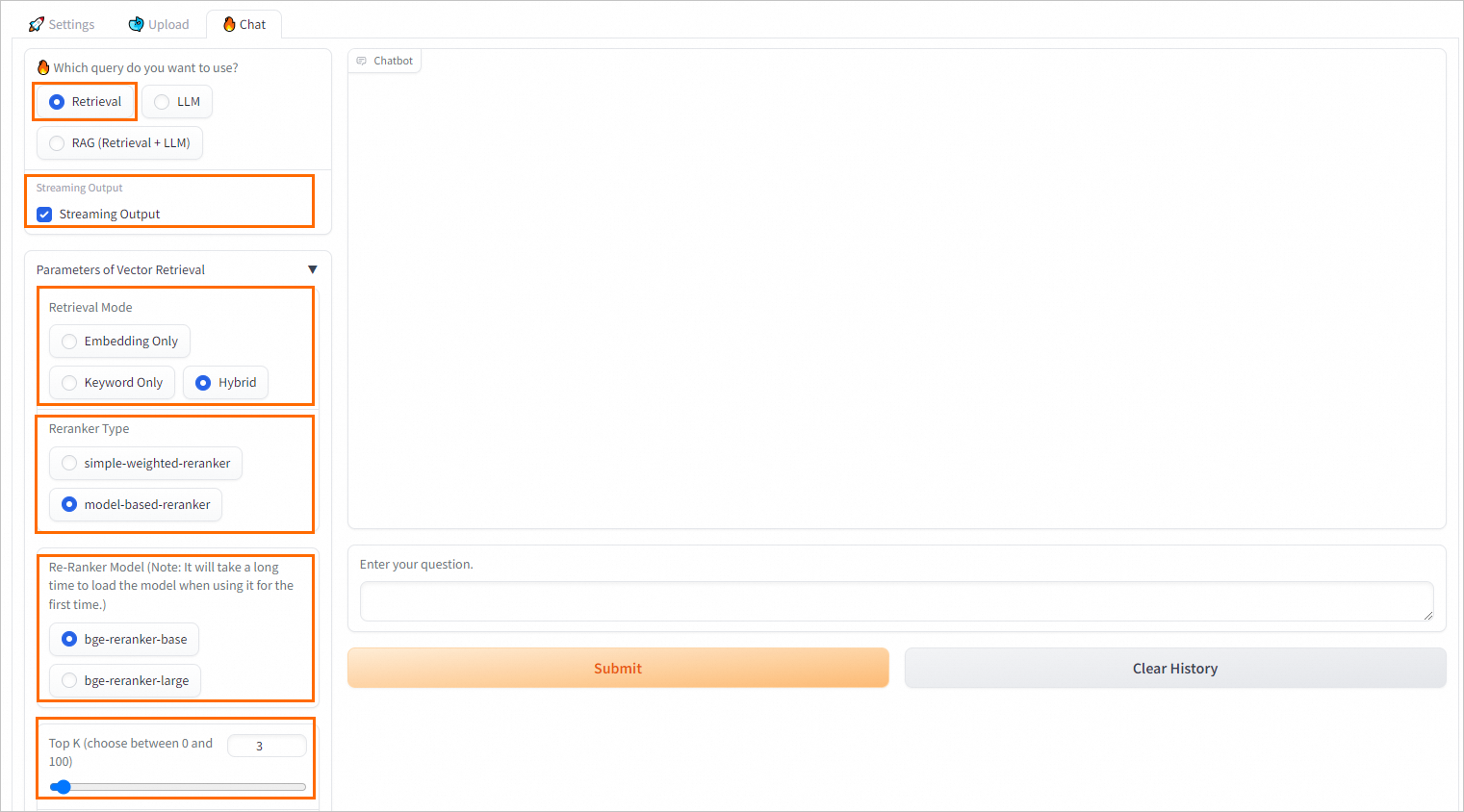

Retrieval-based query settings

| Parameter | Description |
|---|---|
| Streaming Output | Return results in streaming mode. |
| Retrieval Model | The retrieval method. Embedding Only: vector database-based retrieval. Keyword Only: keyword-based retrieval. Hybrid: combines both methods. In most scenarios, vector-based retrieval delivers better results. For corpora where precise keyword matching matters, Keyword Only or Hybrid may perform better. ApsaraDB RDS for PostgreSQL uses pg_jieba for Chinese text segmentation. For more information, see Use the pg_jieba extension. |
| Reranker Type | Apply a second-pass ranking model to improve result precision. You can use simple-weighted-reranker or model-based-reranker to re-rank the top K results with higher precision. Note: The first time you use a model, it may take some time to load. |
| Top K | The number of top results to retrieve from the vector database. |
| Similarity Score Threshold | The minimum similarity score for a result to be returned. A higher value returns fewer but more relevant results. |
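One way to picture how Top K, Similarity Score Threshold, and a hybrid mode interact is the sketch below. The weighted-sum fusion and the `vector_weight` value are assumptions for illustration; this is not the documented behavior of Hybrid mode or of simple-weighted-reranker. In practice, the two scores would come from the vector search and the pg_jieba keyword search.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    chunk_id: str
    vector_score: float   # embedding similarity, assumed normalized to [0, 1]
    keyword_score: float  # keyword-match score, assumed normalized to [0, 1]


def hybrid_rank(hits, top_k=3, threshold=0.5, vector_weight=0.7):
    """Fuse scores by weighted sum (an assumption), drop hits below threshold, keep top_k."""
    scored = [
        (h.chunk_id, vector_weight * h.vector_score + (1 - vector_weight) * h.keyword_score)
        for h in hits
    ]
    kept = [item for item in scored if item[1] >= threshold]
    kept.sort(key=lambda item: item[1], reverse=True)
    return kept[:top_k]


hits = [
    Hit("c1", 0.92, 0.10),
    Hit("c2", 0.60, 0.95),
    Hit("c3", 0.40, 0.20),
    Hit("c4", 0.75, 0.70),
]
for chunk_id, score in hybrid_rank(hits, top_k=2):
    print(chunk_id, round(score, 3))
```

Here c3 falls below the threshold before Top K is applied, and c4 outranks c1 because its keyword score compensates for a lower vector score. Setting `vector_weight=1.0` reduces the sketch to embedding-only ranking.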

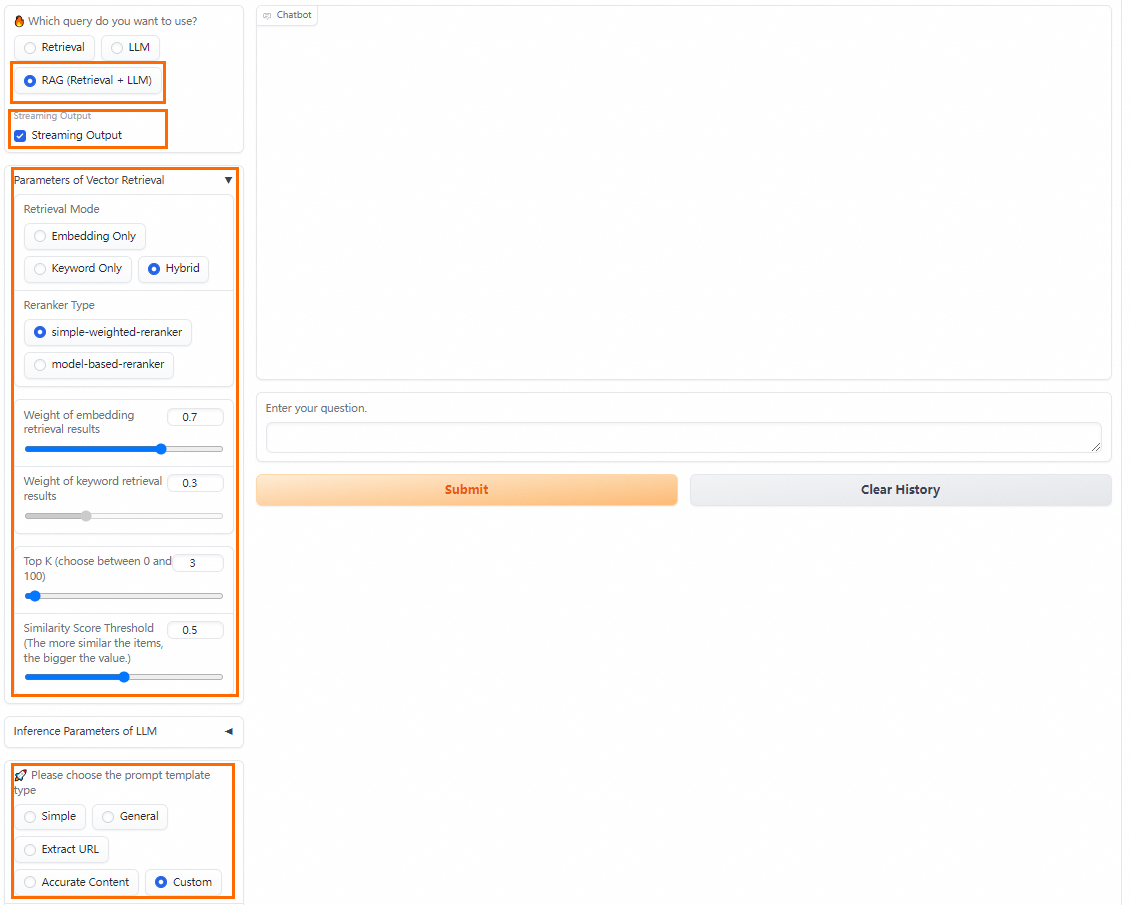

RAG query settings (retrieval + LLM)

Select a predefined prompt template or define a custom one. You can configure Streaming Output, Retrieval Model, and Reranker Type in this mode as well. For more information on available prompt policies, see RAG-based LLM chatbot.

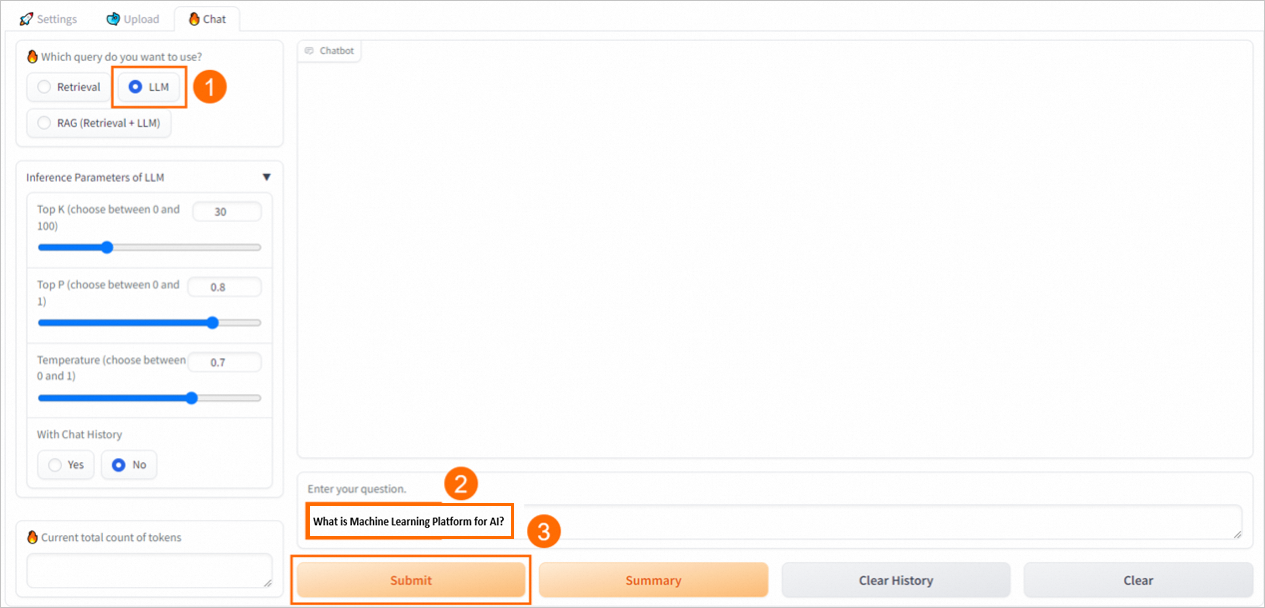

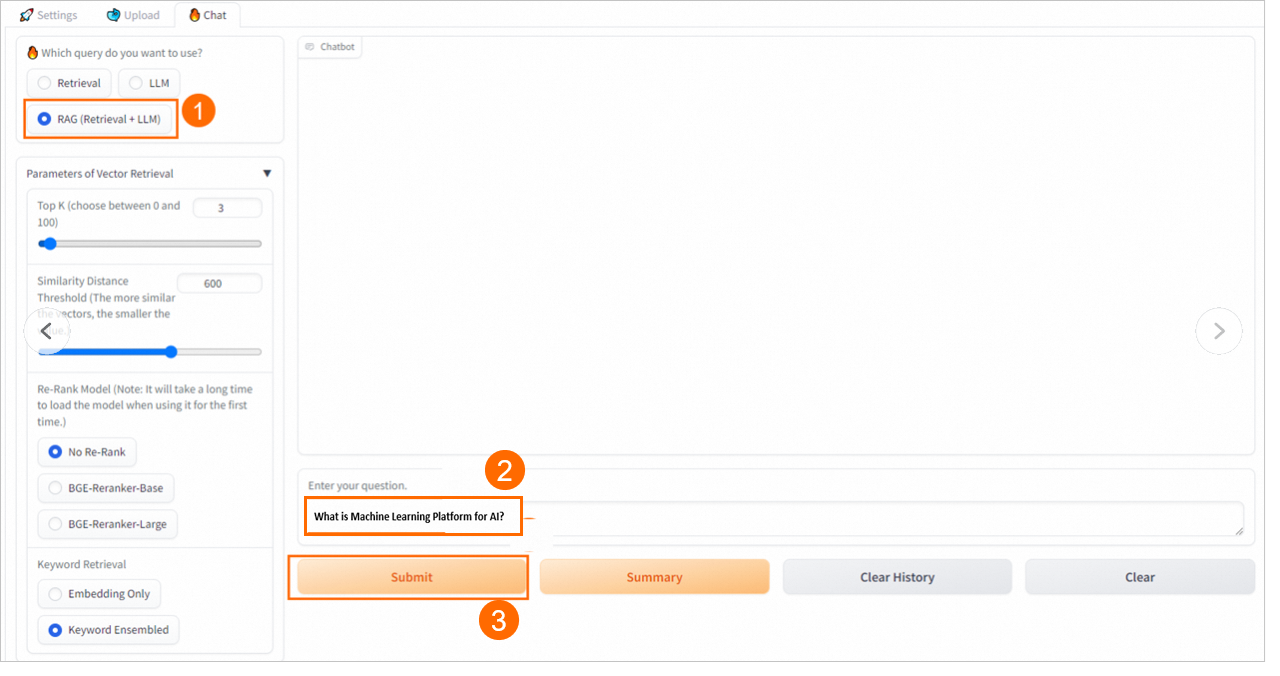

Run queries

The chatbot supports three query modes:

Retrieval: Returns the top K relevant results from the vector database.

LLM: Sends the question directly to the LLM and returns its answer, without retrieval.

RAG (retrieval + LLM): Retrieves relevant documents, injects them into the selected prompt template, and sends the combined input to the LLM.

Step 4: Call the API

Get the endpoint and token

Click the chatbot service name to open the Service Details page.

In the Basic Information section, click View Endpoint Information.

On the Public Endpoint tab of the Invocation Method dialog, copy the service endpoint and token.

Upload knowledge base files

Connect to the vector database and upload knowledge base files before calling the API. Alternatively, populate the vector database directly using a table that conforms to the PAI-RAG schema.

Call the API

The chatbot exposes three endpoints, one for each query mode:

| Mode | Endpoint |
|---|---|
| Retrieval | service/query/retrieval |
| LLM | service/query/llm |
| RAG (retrieval + LLM) | service/query |
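When calling the service from code, a small helper can keep the three paths in one place and guarantee exactly one slash between the service URL and the path. The `MODE_PATHS` mapping mirrors the table above; the function name is hypothetical, and the host is the placeholder used in the Python examples in this step.

```python
MODE_PATHS = {
    "retrieval": "service/query/retrieval",
    "llm": "service/query/llm",
    "rag": "service/query",
}


def endpoint_url(service_url: str, mode: str) -> str:
    """Join the EAS service URL and the path for a query mode with exactly one slash."""
    try:
        path = MODE_PATHS[mode]
    except KeyError:
        raise ValueError(f"unknown mode {mode!r}; expected one of {sorted(MODE_PATHS)}") from None
    return service_url.rstrip("/") + "/" + path


print(endpoint_url("http://xxxx.****.cn-beijing.pai-eas.aliyuncs.com/", "rag"))
```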

Replace <service_url> and <service_token> with the values from the previous step. Remove the trailing slash (/) from the service URL.

cURL

Single-turn requests

# Retrieval mode
curl -X 'POST' '<service_url>/service/query/retrieval' \
  -H 'Authorization: <service_token>' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{"question": "What is PAI?"}'

# LLM mode (supports optional parameters such as temperature)
curl -X 'POST' '<service_url>/service/query/llm' \
  -H 'Authorization: <service_token>' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{"question": "What is PAI?", "temperature": 0.9}'

# RAG mode (retrieval + LLM)
curl -X 'POST' '<service_url>/service/query' \
  -H 'Authorization: <service_token>' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{"question": "What is PAI?"}'

Multi-turn requests

Multi-turn conversations are supported in RAG and LLM modes. Use session_id to maintain conversation state across requests, or pass chat_history explicitly.
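The multi-turn request bodies in this section differ only in whether they carry `session_id`, `chat_history`, or both. The hypothetical helper below builds that body; the field names and the `{user, bot}` shape are taken from the cURL examples that follow, and the helper itself is not part of any SDK.

```python
import json


def build_query_body(question, session_id=None, chat_history=None):
    """Build a JSON body for service/query or service/query/llm.

    chat_history is a list of (user, bot) tuples and is sent as
    [{"user": ..., "bot": ...}, ...], the shape used in the cURL examples.
    """
    body = {"question": question}
    if session_id is not None:
        body["session_id"] = session_id
    if chat_history:
        body["chat_history"] = [{"user": u, "bot": b} for u, b in chat_history]
    return body


history = [("What is PAI", "PAI is an AI platform provided by Alibaba Cloud...")]
print(json.dumps(build_query_body("What are the features of PAI?", chat_history=history)))
```

Pass `session_id` alone to continue a server-side session, `chat_history` alone to supply the context yourself, or both, in which case the service appends the history to the session.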

# Round 1: send the first question and get a session_id in the response
curl -X 'POST' '<service_url>/service/query' \
  -H 'Authorization: <service_token>' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{"question": "What is PAI?"}'

# Round 2: include the session_id to continue the conversation
curl -X 'POST' '<service_url>/service/query' \
  -H 'Authorization: <service_token>' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{"question": "What are the benefits of PAI?", "session_id": "ed7a80e2e20442eab****"}'

# Alternative: pass chat_history directly as a list of {user, bot} pairs
curl -X 'POST' '<service_url>/service/query' \
  -H 'Authorization: <service_token>' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{"question": "What are the features of PAI?", "chat_history": [{"user": "What is PAI", "bot": "PAI is an AI platform provided by Alibaba Cloud..."}]}'

# When both session_id and chat_history are provided, the chat_history is appended to the session
curl -X 'POST' '<service_url>/service/query' \
  -H 'Authorization: <service_token>' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{"question": "What are the features of PAI?", "chat_history": [{"user": "What is PAI", "bot": "PAI is an AI platform provided by Alibaba Cloud..."}], "session_id": "1702ffxxad3xxx6fxxx97daf7c"}'

Python

Single-turn requests

import requests

EAS_URL = 'http://xxxx.****.cn-beijing.pai-eas.aliyuncs.com'  # Remove the trailing /

headers = {
    'accept': 'application/json',
    'Content-Type': 'application/json',
    'Authorization': 'MDA5NmJkNzkyMGM1Zj****YzM4M2YwMDUzZTdiZmI5YzljYjZmNA==',
}


def test_post_api_query_llm():
    url = EAS_URL + '/service/query/llm'
    data = {"question": "What is PAI?"}
    response = requests.post(url, headers=headers, json=data)
    if response.status_code != 200:
        raise ValueError(f'Error post to {url}, code: {response.status_code}')
    ans = dict(response.json())
    print(f"======= Question =======\n {data['question']}")
    print(f"======= Answer =======\n {ans['answer']} \n\n")


def test_post_api_query_retrieval():
    url = EAS_URL + '/service/query/retrieval'
    data = {"question": "What is PAI?"}
    response = requests.post(url, headers=headers, json=data)
    if response.status_code != 200:
        raise ValueError(f'Error post to {url}, code: {response.status_code}')
    ans = dict(response.json())
    print(f"======= Question =======\n {data['question']}")
    print(f"======= Answer =======\n {ans['docs']}\n\n")


def test_post_api_query_rag():
    url = EAS_URL + '/service/query'
    data = {"question": "What is PAI?"}
    response = requests.post(url, headers=headers, json=data)
    if response.status_code != 200:
        raise ValueError(f'Error post to {url}, code: {response.status_code}')
    ans = dict(response.json())
    print(f"======= Question =======\n {data['question']}")
    print(f"======= Answer =======\n {ans['answer']}")
    print(f"======= Retrieved Docs =======\n {ans['docs']}\n\n")


# LLM mode
test_post_api_query_llm()
# Retrieval mode
test_post_api_query_retrieval()
# RAG mode (retrieval + LLM)
test_post_api_query_rag()

Multi-turn requests

Multi-turn conversations are supported in LLM and RAG modes. Pass the session_id from the previous response to continue a conversation.

import requests

EAS_URL = 'http://xxxx.****.cn-beijing.pai-eas.aliyuncs.com'  # Remove the trailing /

headers = {
    'accept': 'application/json',
    'Content-Type': 'application/json',
    'Authorization': 'MDA5NmJkN****jNlMDgzYzM4M2YwMDUzZTdiZmI5YzljYjZmNA==',
}


def test_post_api_query_llm_with_chat_history():
    url = EAS_URL + '/service/query/llm'

    # Round 1
    data = {"question": "What is PAI?"}
    response = requests.post(url, headers=headers, json=data)
    if response.status_code != 200:
        raise ValueError(f'Error post to {url}, code: {response.status_code}')
    ans = dict(response.json())
    print(f"=======Round 1: Question =======\n {data['question']}")
    print(f"=======Round 1: Answer =======\n {ans['answer']} session_id: {ans['session_id']} \n")

    # Round 2: use the session_id from Round 1
    data_2 = {
        "question": "What are the benefits of PAI?",
        "session_id": ans['session_id'],
    }
    response_2 = requests.post(url, headers=headers, json=data_2)
    if response_2.status_code != 200:
        raise ValueError(f'Error post to {url}, code: {response_2.status_code}')
    ans_2 = dict(response_2.json())
    print(f"=======Round 2: Question =======\n {data_2['question']}")
    print(f"=======Round 2: Answer =======\n {ans_2['answer']} session_id: {ans_2['session_id']} \n\n")


def test_post_api_query_rag_with_chat_history():
    url = EAS_URL + '/service/query'

    # Round 1
    data = {"question": "What is PAI?"}
    response = requests.post(url, headers=headers, json=data)
    if response.status_code != 200:
        raise ValueError(f'Error post to {url}, code: {response.status_code}')
    ans = dict(response.json())
    print(f"=======Round 1: Question =======\n {data['question']}")
    print(f"=======Round 1: Answer =======\n {ans['answer']} session_id: {ans['session_id']}")
    print(f"=======Round 1: Retrieved Docs =======\n {ans['docs']}\n")

    # Round 2: use the session_id from Round 1
    data = {
        "question": "What are the features of PAI?",
        "session_id": ans['session_id'],
    }
    response = requests.post(url, headers=headers, json=data)
    if response.status_code != 200:
        raise ValueError(f'Error post to {url}, code: {response.status_code}')
    ans = dict(response.json())
    print(f"=======Round 2: Question =======\n {data['question']}")
    print(f"=======Round 2: Answer =======\n {ans['answer']} session_id: {ans['session_id']}")
    print(f"=======Round 2: Retrieved Docs =======\n {ans['docs']}")


# LLM mode with chat history
test_post_api_query_llm_with_chat_history()
# RAG mode with chat history
test_post_api_query_rag_with_chat_history()

View knowledge base content

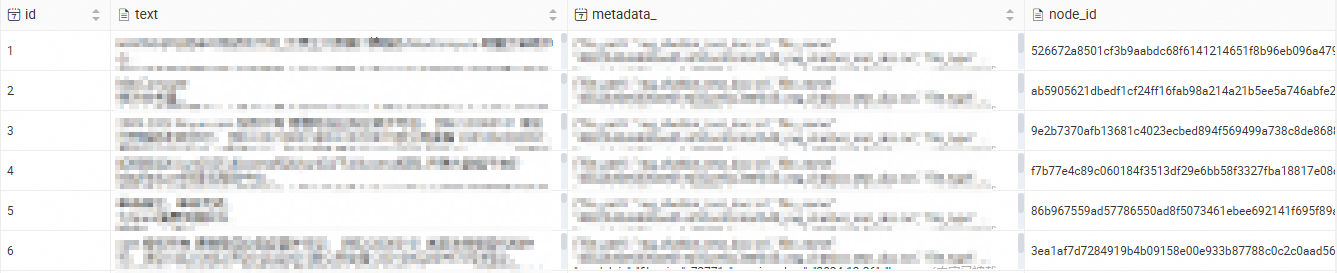

After the chatbot is running, connect to the RDS PostgreSQL database to inspect the imported knowledge base content directly. For connection instructions, see Connect to an ApsaraDB RDS for PostgreSQL instance.
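As an alternative to a GUI client, a short script can list the table's columns and first few rows. This sketch assumes the psycopg2 driver and uses a placeholder for the Table Name you entered at deployment. Because the exact PAI-RAG table schema is not described here, it reads column names from `information_schema` instead of hard-coding them. The hypothetical `quote_ident` helper applies PostgreSQL's double-quote escaping rule so that the table name can be placed in the `SELECT` statement safely.

```python
def quote_ident(name: str) -> str:
    """Quote a PostgreSQL identifier: wrap in double quotes and double any embedded quote."""
    return '"' + name.replace('"', '""') + '"'


def preview_table(conn, table: str, limit: int = 5):
    """Return (column_names, rows) for the first `limit` rows of `table` in the current schema.

    `conn` is an open psycopg2 connection (or any DB-API connection using %s placeholders).
    """
    with conn.cursor() as cur:
        cur.execute(
            "SELECT column_name FROM information_schema.columns "
            "WHERE table_schema = current_schema() AND table_name = %s "
            "ORDER BY ordinal_position",
            (table,),
        )
        columns = [row[0] for row in cur.fetchall()]
        # Escape % so psycopg2 does not treat it as a placeholder inside the identifier.
        cur.execute(f"SELECT * FROM {quote_ident(table).replace('%', '%%')} LIMIT %s", (limit,))
        rows = cur.fetchall()
    return columns, rows
```

For example, after `conn = psycopg2.connect(host="<endpoint>", port=5432, dbname="<database>", user="<account>", password="<password>")`, call `preview_table(conn, "<table_name>")` and print the result, then close the connection.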

FAQ

Disable chat history

To disable multi-turn conversation history on the web UI, clear the Chat history checkbox.

What's next

Deploy a standalone LLM: Deploy an LLM application callable via web UI or API, then integrate your enterprise knowledge base using the LangChain framework. See Quickly deploy LLMs in EAS.

Generate AI video: Deploy an AI video generation service using ComfyUI and Stable Video Diffusion. See Use ComfyUI to deploy an AI video generation model service.