Install dependency packages and run custom Python functions using the Python Script component in Machine Learning Designer.

Component location

Python Script is in the UserDefinedScript folder of the Machine Learning Designer component list.

Prerequisites

-

Grant required permissions for Deep Learning Containers (DLC). For more information, see Cloud service dependencies and authorizations: DLC.

-

Python Script component runs on DLC compute resources. Associate DLC compute resources with your workspace. For more information, see Manage workspaces.

-

Python Script component stores code in Object Storage Service (OSS). Create an OSS bucket. For more information, see Create a bucket.

ImportantOSS bucket must be in the same region as Machine Learning Designer and DLC.

-

RAM user who will use this component must have the Algorithm Development role in your workspace. For more information, see Manage workspace members. If the RAM user also needs to use MaxCompute as a data source, grant the MaxCompute Developer role.

Component configuration

-

Input ports

Python Script component has four input ports. Connect them to data from an OSS path or MaxCompute table.

-

OSS path input

Input from an upstream component's OSS path is mounted to the node where the Python script runs. System automatically passes the mounted file path as an argument. For example,

--input1 /ml/input/data/input1specifies the path for the first input port. In your script, read the mounted files from/ml/input/data/input1as if they were local files. -

MaxCompute table input

MaxCompute table inputs are not mounted. Instead, system automatically passes table information to the script as a URI argument. For example,

python main.py --input1 odps://some-project-name/tables/tableindicates the MaxCompute table for the first input port. For URI-based inputs, use theparse_odps_urlfunction from the component's code template to parse metadata such as ProjectName, TableName, and Partition. For more information, see Usage examples.

-

-

Output ports

Python Script component has four output ports. OSS Output Port 1 and OSS Output Port 2 are for OSS path outputs. Table Output Port 1 and Table Output Port 2 are for MaxCompute table outputs.

-

OSS path output

OSS path configured for the Job output path parameter on the Code Config tab is automatically mounted to

/ml/output/. The component's OSS Output Port 1 and OSS Output Port 2 correspond to subdirectories/ml/output/output1and/ml/output/output2, respectively. In your script, write files that need to be passed to downstream components into these directories as if they were local files. -

MaxCompute table output

If the current workspace is configured with a MaxCompute project, system will automatically pass a temporary table URI to the Python script, for example:

python main.py --output3 odps://<some-project-name>/tables/<output-table-name>. Use PyODPS to create the table specified in the temporary table URI, write the data processed by the Python script to this table, and then pass the table to downstream components through component connections. For more information, see the example below.

-

-

Parameters

Code Config

Parameter

Description

Job output path

OSS path for job output.

-

Configured OSS directory is mounted to

/ml/output/path in the job container. Data written to/ml/output/path is persisted to the corresponding OSS directory. -

Component's output ports OSS Output-1 and OSS Output-2 correspond to subpaths

output1andoutput2in the/ml/output/path, respectively. When an OSS output port of the component is connected to a downstream component, downstream component receives data from the corresponding subpath.

Code source

(Choose one)

Literal code

-

Python code: OSS path where code is saved. Code written in the editor is saved to this OSS path. Default filename for Python code is

main.py.ImportantBefore you click Save for the first time, make sure that the specified OSS path does not contain a file with the same name. Otherwise, the existing file is overwritten.

-

Python code editor: Code editor provides sample code by default. For more information, see Usage examples. Write your code directly in the editor.

Specify Git configuration

-

Git repository address: Address of the Git repository.

-

Code branch: Code branch. Default value is master.

-

Code commit: Commit ID. This parameter has a higher priority than the branch. If you specify this parameter, the branch setting is ignored.

-

Git username: Required if you need to access a private repository.

-

Git access token: Required to access a private code repository. For more information, see Appendix: Obtain a GitHub account token.

Select code source

-

Select code source repositories: Select a created code configuration. For more information, see Code configurations.

-

Code branch: Code branch. Default value is master.

-

Code commit: Commit ID. This parameter has a higher priority than the branch. If you specify this parameter, the branch setting is ignored.

Select OSS path

In the OSS Code Path field, select path where the code is uploaded.

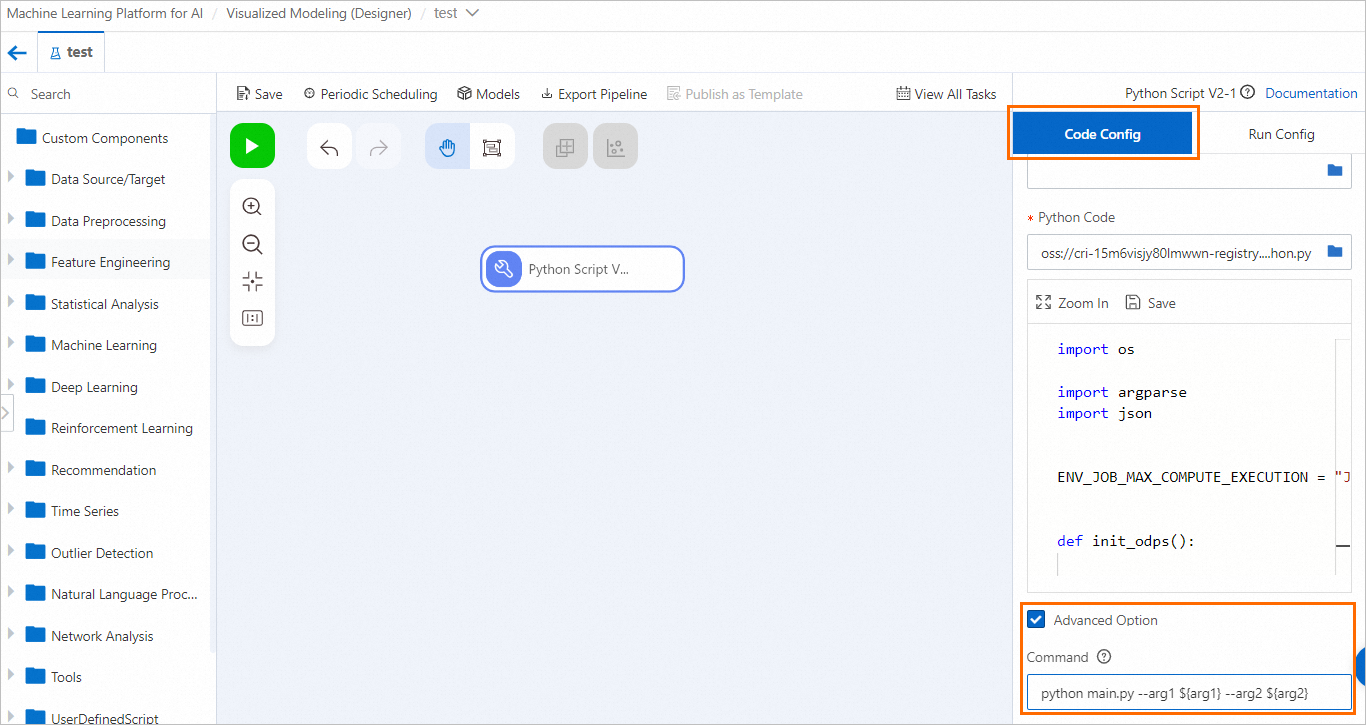

Command

Command to execute, such as

python main.py.NoteSystem automatically generates the execution command based on the script name and component's port connections. Manual configuration is not required.

Advanced option

-

Third-party dependencies: Specify third-party libraries to install using the Python

requirements.txtformat. System automatically installs these libraries before the node runs.cycler==0.10.0 # via matplotlib kiwisolver==1.2.0 # via matplotlib matplotlib==3.2.1 numpy==1.18.5 pandas==1.0.4 pyparsing==2.4.7 # via matplotlib python-dateutil==2.8.1 # via matplotlib, pandas pytz==2020.1 # via pandas scipy==1.4.1 # via seaborn -

Enable container monitoring: After you select this option, a configuration text box appears. Specify the parameters for fault tolerance monitoring in the text box.

Run Config

Parameter

Description

Select Resource Group

Select a public DLC resource group:

-

If you select a public resource group, configure the InstanceType parameter. Select a CPU or GPU instance. Default value is

ecs.c6.large.

By default, default resource group for DLC cloud-native resources in the current workspace is used.

VPC Settings

Select an existing Virtual Private Cloud (VPC).

Security Group

Select an existing security group.

Advanced option

If you select this option, configure the following parameters:

-

Instance count: Number of instances. Default value is 1.

-

Job image URI: URI of the job image. Default image uses open-source XGBoost 1.6.0. If you need to use a deep learning framework, change the image.

-

Job type: Modify this parameter only if your submitted code is implemented for distributed execution. The following values are supported:

-

XGBoost/LightGBM Job

-

TensorFlow Job

-

PyTorch Job

-

MPI Job

-

-

Usage examples

Default sample code

Python Script component provides the following sample code by default.

import os

import argparse

import json

"""

Sample code for the Python Script component

"""

# Default MaxCompute execution environment in the current workspace, which includes the MaxCompute project name and endpoint.

# This environment is injected only when a MaxCompute project exists in the current workspace.

# Example: {"endpoint": "http://service.cn.maxcompute.aliyun-inc.com/api", "odpsProject": "lq_test_mc_project"}.

ENV_JOB_MAX_COMPUTE_EXECUTION = "JOB_MAX_COMPUTE_EXECUTION"

def init_odps():

from odps import ODPS

# Information about the default MaxCompute project in the current workspace.

mc_execution = json.loads(os.environ[ENV_JOB_MAX_COMPUTE_EXECUTION])

o = ODPS(

access_id="<YourAccessKeyId>",

secret_access_key="<YourAccessKeySecret>",

# Select the endpoint based on the region where your project is located, for example: http://service.cn-shanghai.maxcompute.aliyun-inc.com/api.

endpoint=mc_execution["endpoint"],

project=mc_execution["odpsProject"],

)

return o

def parse_odps_url(table_uri):

from urllib import parse

parsed = parse.urlparse(table_uri)

project_name = parsed.hostname

r = parsed.path.split("/", 2)

table_name = r[2]

if len(r) > 3:

partition = r[3]

else:

partition = None

return project_name, table_name, partition

def parse_args():

parser = argparse.ArgumentParser(description="PythonV2 component script example.")

parser.add_argument("--input1", type=str, default=None, help="Component input port 1.")

parser.add_argument("--input2", type=str, default=None, help="Component input port 2.")

parser.add_argument("--input3", type=str, default=None, help="Component input port 3.")

parser.add_argument("--input4", type=str, default=None, help="Component input port 4.")

parser.add_argument("--output1", type=str, default=None, help="Output OSS port 1.")

parser.add_argument("--output2", type=str, default=None, help="Output OSS port 2.")

parser.add_argument("--output3", type=str, default=None, help="Output MaxComputeTable 1.")

parser.add_argument("--output4", type=str, default=None, help="Output MaxComputeTable 2.")

args, _ = parser.parse_known_args()

return args

def write_table_example(args):

# Example: Execute an SQL statement to copy data from a public table provided by PAI to the temporary table specified for Table Output Port 1 (--output3).

output_table_uri = args.output3

o = init_odps()

project_name, table_name, partition = parse_odps_url(output_table_uri)

o.run_sql(f"create table {project_name}.{table_name} as select * from pai_online_project.heart_disease_prediction;")

def write_output1(args):

# Example: Write data results to the mounted OSS path (subdirectory for OSS Output Port 1), and results can be passed to downstream components through connections.

output_path = args.output1

os.makedirs(output_path, exist_ok=True)

p = os.path.join(output_path, "result.text")

with open(p, "w") as f:

f.write("TestAccuracy=0.88")

if __name__ == "__main__":

args = parse_args()

print("Input1={}".format(args.input1))

print("Output1={}".format(args.output1))

# write_table_example(args)

# write_output1(args)

Description of common functions:

-

init_odps(): Initializes an ODPS instance for reading MaxCompute table data. Provide your AccessKeyId and AccessKeySecret. For more information about how to obtain an AccessKey pair, see Obtain an AccessKey pair. -

parse_odps_url(table_uri): Parses the input MaxCompute table URI and returns project name, table name, and partition. Thetable_uriformat isodps://${your_projectname}/tables/${table_name}/${pt_1}/${pt_2}/, for example,odps://test/tables/iris/pa=1/pb=1, wherepa=1/pb=1is a multi-level partition. -

parse_args(): Parses arguments passed to the script. Input and output data are passed to the executed script as arguments.

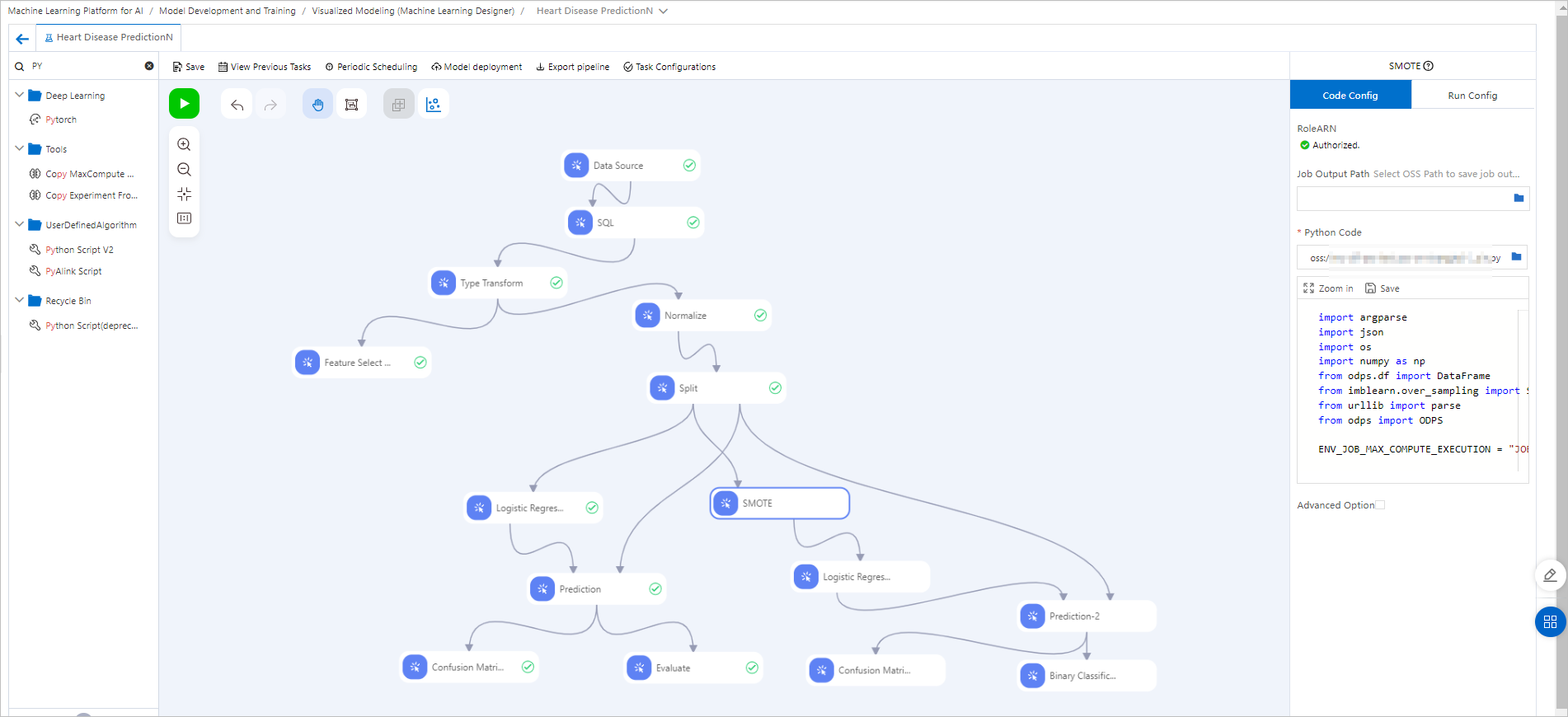

Example 1: Chain with other components

This example modifies the heart disease prediction template to demonstrate how to use Python Script component in conjunction with other Machine Learning Designer components. Pipeline configuration:

Pipeline configuration:

-

Create a pipeline from the heart disease prediction template and open it. For more information, see Heart disease prediction.

-

Drag the Python Script component to the canvas, rename it to

SMOTE, and configure the following code.Importantimblearnlibrary is not included in the image. Addimblearnin the Third-party dependencies field on the Code Config tab. Library is automatically installed before the node runs.import argparse import json import os from odps.df import DataFrame from imblearn.over_sampling import SMOTE from urllib import parse from odps import ODPS ENV_JOB_MAX_COMPUTE_EXECUTION = "JOB_MAX_COMPUTE_EXECUTION" def init_odps(): # Information about the default MaxCompute project in the current workspace. mc_execution = json.loads(os.environ[ENV_JOB_MAX_COMPUTE_EXECUTION]) o = ODPS( access_id="<Your_AccessKeyId>", secret_access_key="<Your_AccessKeySecret>", # Select the endpoint based on the region where your project is located, for example: http://service.cn-shanghai.maxcompute.aliyun-inc.com/api. endpoint=mc_execution["endpoint"], project=mc_execution["odpsProject"], ) return o def get_max_compute_table(table_uri, odps): parsed = parse.urlparse(table_uri) project_name = parsed.hostname table_name = parsed.path.split('/')[2] table = odps.get_table(project_name + "." + table_name) return table def run(): parser = argparse.ArgumentParser(description='PythonV2 component script example.') parser.add_argument( '--input1', type=str, default=None, help='Component input port 1.' ) parser.add_argument( '--output3', type=str, default=None, help='Component input port 1.' ) args, _ = parser.parse_known_args() print('Input1={}'.format(args.input1)) print('output3={}'.format(args.output3)) o = init_odps() imbalanced_table = get_max_compute_table(args.input1, o) df = DataFrame(imbalanced_table).to_pandas() sm = SMOTE(random_state=2) X_train_res, y_train_res = sm.fit_resample(df, df['ifhealth'].ravel()) new_table = o.create_table(get_max_compute_table(args.output3, o).name, imbalanced_table.schema, if_not_exists=True) with new_table.open_writer() as writer: writer.write(X_train_res.values.tolist()) if __name__ == '__main__': run()Replace <Your_AccessKeyId> and <Your_AccessKeySecret> in the code with your own AccessKeyId and AccessKeySecret. For more information about how to obtain an AccessKey pair, see Obtain an AccessKey pair.

-

Connect the SMOTE component downstream of the Split component. It uses the SMOTE algorithm to oversample the training data, balancing class distribution by creating synthetic samples for the minority class.

-

Connect the new data from the SMOTE component to the Logistic Regression for Binary Classification component for training.

-

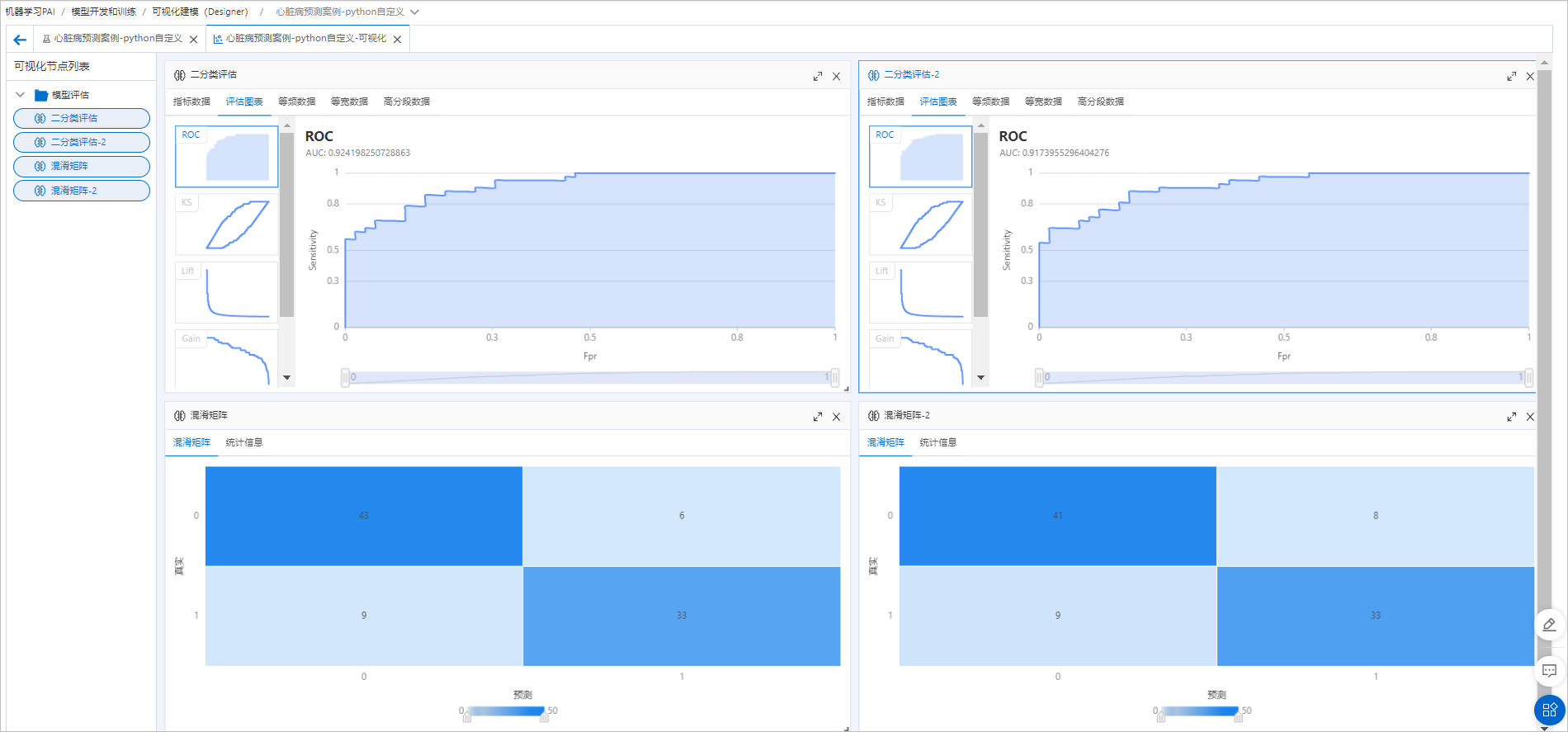

Connect the trained model to the same prediction data and evaluation components as the model in the left branch for a side-by-side comparison. After the component runs successfully, click the visualization icon (

) to view final evaluation results.

) to view final evaluation results.

Additional oversampling does not significantly improve the model's performance, which indicates that the original sample distribution and model were already effective.

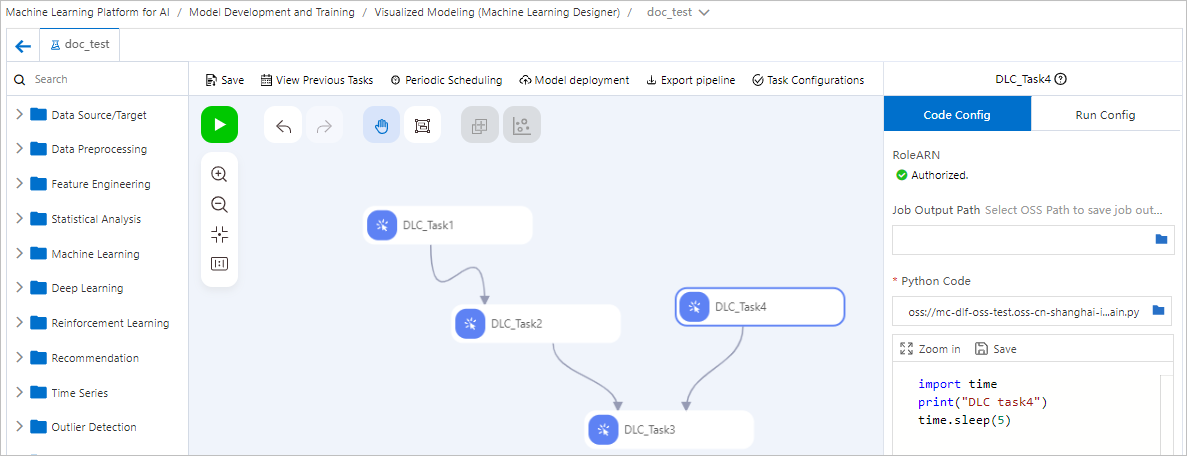

Example 2: Orchestrate DLC jobs

Connect multiple Python Script components in Machine Learning Designer to orchestrate and schedule a pipeline of DLC jobs. The following figure shows an example of starting four DLC jobs arranged in a Directed Acyclic Graph (DAG) to control their execution order.

If the execution code for a DLC job does not need to read data from upstream nodes or pass data to downstream nodes, connections between nodes only represent scheduling dependencies and execution order.

Deploy the entire pipeline developed in Machine Learning Designer to DataWorks for scheduled execution. For more information, see Use DataWorks to schedule Machine Learning Designer pipelines for offline execution.

Deploy the entire pipeline developed in Machine Learning Designer to DataWorks for scheduled execution. For more information, see Use DataWorks to schedule Machine Learning Designer pipelines for offline execution.

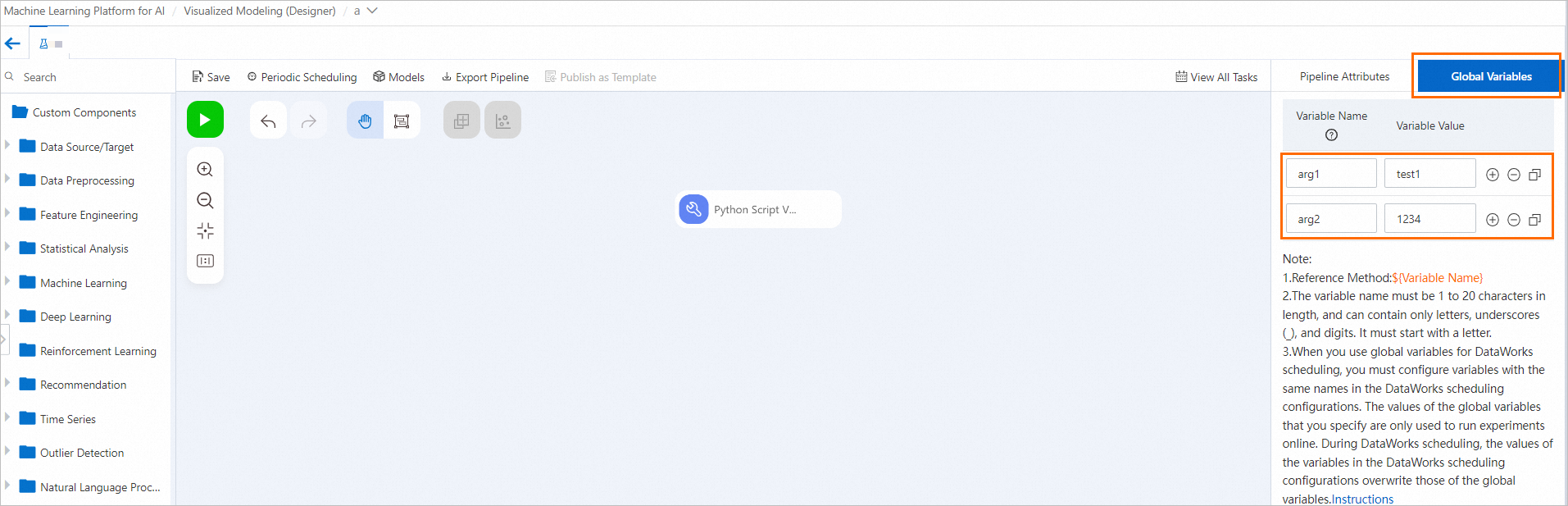

Example 3: Pass global variables

-

Configure global variables.

On the Machine Learning Designer pipeline page, click a blank area of the canvas and configure variables on the Global Variables tab in the right pane.

-

Pass configured global variables to the Python Script component in one of two ways.

-

Click the Python Script component node. In the right pane, on the Code Config tab, select Advanced option and configure global variables as incoming parameters in the Command field.

-

Modify the Python code to parse arguments using

argparse.Updated Python code is as follows. This code uses global variables configured in step 1 as an example. Update the code according to your actual global variables. Replace the code in the code editing area on the Code Config tab of the Python Script component node.

import os import argparse import json """ Sample code for the Python Script component """ ENV_JOB_MAX_COMPUTE_EXECUTION = "JOB_MAX_COMPUTE_EXECUTION" def init_odps(): from odps import ODPS mc_execution = json.loads(os.environ[ENV_JOB_MAX_COMPUTE_EXECUTION]) o = ODPS( access_id="<YourAccessKeyId>", secret_access_key="<YourAccessKeySecret>", endpoint=mc_execution["endpoint"], project=mc_execution["odpsProject"], ) return o def parse_odps_url(table_uri): from urllib import parse parsed = parse.urlparse(table_uri) project_name = parsed.hostname r = parsed.path.split("/", 2) table_name = r[2] if len(r) > 3: partition = r[3] else: partition = None return project_name, table_name, partition def parse_args(): parser = argparse.ArgumentParser(description="PythonV2 component script example.") parser.add_argument("--input1", type=str, default=None, help="Component input port 1.") parser.add_argument("--input2", type=str, default=None, help="Component input port 2.") parser.add_argument("--input3", type=str, default=None, help="Component input port 3.") parser.add_argument("--input4", type=str, default=None, help="Component input port 4.") parser.add_argument("--output1", type=str, default=None, help="Output OSS port 1.") parser.add_argument("--output2", type=str, default=None, help="Output OSS port 2.") parser.add_argument("--output3", type=str, default=None, help="Output MaxComputeTable 1.") parser.add_argument("--output4", type=str, default=None, help="Output MaxComputeTable 2.") # Add code based on the configured global variables. parser.add_argument("--arg1", type=str, default=None, help="Argument 1.") parser.add_argument("--arg2", type=int, default=None, help="Argument 2.") args, _ = parser.parse_known_args() return args def write_table_example(args): output_table_uri = args.output3 o = init_odps() project_name, table_name, partition = parse_odps_url(output_table_uri) o.run_sql(f"create table {project_name}.{table_name} as select * from pai_online_project.heart_disease_prediction;") def write_output1(args): output_path = args.output1 os.makedirs(output_path, exist_ok=True) p = os.path.join(output_path, "result.text") with open(p, "w") as f: f.write("TestAccuracy=0.88") if __name__ == "__main__": args = parse_args() print("Input1={}".format(args.input1)) print("Output1={}".format(args.output1)) # Add code based on the configured global variables. print("Argument1={}".format(args.arg1)) print("Argument2={}".format(args.arg2)) # write_table_example(args) # write_output1(args)

-