Visual understanding models answer questions about images and videos that you provide. They accept one or more images per request and support tasks such as image captioning, visual question answering, and object localization.

Supported regions: Singapore, US (Virginia), China (Beijing), China (Hong Kong), and Germany (Frankfurt). Each region has its own API key and endpoint, so use the ones that match your region.

Try it online: Go to the Alibaba Cloud Model Studio console, select a region in the upper-right corner, and then navigate to the Vision page.

Getting started

Prerequisites

To call the models through the DashScope SDK, install DashScope Python SDK 1.24.6 or later, or DashScope Java SDK 2.21.10 or later. The OpenAI-compatible examples use the OpenAI SDK instead.
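The minimum SDK versions above can be checked programmatically. The following sketch uses only the Python standard library (`importlib.metadata`) to compare the installed `dashscope` package against 1.24.6. The helper names `parse_version` and `meets_minimum` are hypothetical, and the parser assumes plain numeric release strings such as `1.24.6`; pre-release suffixes are ignored rather than ordered.

```python
from importlib.metadata import PackageNotFoundError, version

# Minimum DashScope Python SDK version stated in the Prerequisites.
MIN_DASHSCOPE_VERSION = (1, 24, 6)


def parse_version(text: str) -> tuple:
    """Turn a release string such as "1.24.6" into (1, 24, 6).

    Only the leading digits of each dot-separated part are kept, so "0rc1" becomes 0.
    """
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(installed: str, minimum: tuple = MIN_DASHSCOPE_VERSION) -> bool:
    """Return True if the installed version is at least the minimum (tuple comparison)."""
    return parse_version(installed) >= minimum


if __name__ == "__main__":
    try:
        installed = version("dashscope")
    except PackageNotFoundError:
        print("dashscope is not installed; run: pip install -U dashscope")
    else:
        status = "OK" if meets_minimum(installed) else "too old, upgrade required"
        print(f"dashscope {installed}: {status}")
```

Tuple comparison treats a shorter tuple as smaller when the prefixes match, so `1.24` is correctly reported as older than `1.24.6`.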

The following examples call a model to describe the content of an image. To pass local files instead of URLs, see How to pass local files. For input constraints, see Image limits.
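For local images, a common approach with OpenAI-compatible APIs is to send the file inline as a base64 data URL in place of the HTTP URL. The sketch below assumes the endpoint accepts `data:<MIME type>;base64,<data>` URLs in `image_url.url`; confirm the accepted formats and size limits in How to pass local files and Image limits before relying on it. `image_to_data_url` and `image_part` are hypothetical helper names.

```python
import base64
import mimetypes
from pathlib import Path


def image_to_data_url(path: str) -> str:
    """Encode a local image file as a base64 data URL (hypothetical helper)."""
    mime, _ = mimetypes.guess_type(path)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"Cannot determine an image MIME type for {path!r}")
    encoded = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime};base64,{encoded}"


def image_part(path: str) -> dict:
    """Build one OpenAI-compatible content part that carries a local image inline."""
    return {"type": "image_url", "image_url": {"url": image_to_data_url(path)}}
```

For example, replace the URL-based content part in the Python example with `image_part("dog_and_girl.jpeg")`. Base64 encoding turns every 3 bytes into 4 characters, so the payload grows by about one third, which counts against any request size limit.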

OpenAI compatible

Python

import os
from openai import OpenAI

client = OpenAI(
    # An API key is required for each Region. To get an API key, visit: https://www.alibabacloud.com/help/en/model-studio/get-api-key
    api_key=os.getenv("DASHSCOPE_API_KEY"),
    # Endpoints vary by Region. Modify the endpoint for your Region.
    base_url="https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
)

completion = client.chat.completions.create(
    model="qwen3.5-plus",  # Uses qwen3.5-plus model. See https://www.alibabacloud.com/help/en/model-studio/getting-started/models for other models.
    messages=[
        {
            "role": "user",
            "content": [
                {
                    "type": "image_url",
                    "image_url": {
                        "url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"
                    },
                },
                {"type": "text", "text": "What is depicted in the image?"},
            ],
        },
    ],
)

print(completion.choices[0].message.content)

Response

This is a photo taken on a beach. In the photo, a person and a dog are sitting on the sand, with the sea and sky in the background. The person and dog appear to be interacting, with the dog's front paw resting on the person's hand. Sunlight is coming from the right side of the frame, adding a warm atmosphere to the scene.

Node.js

import OpenAI from "openai";

const openai = new OpenAI({
  // An API key is required for each Region. To get an API key, visit: https://www.alibabacloud.com/help/en/model-studio/get-api-key
  // If you have not set an environment variable, replace the following line with your Model Studio API key: apiKey: "sk-xxx"
  apiKey: process.env.DASHSCOPE_API_KEY,
  // Endpoints vary by Region. Modify the endpoint for your Region.
  baseURL: "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
});

async function main() {
  const response = await openai.chat.completions.create({
    model: "qwen3.5-plus", // Uses qwen3.5-plus model. See https://www.alibabacloud.com/help/en/model-studio/getting-started/models for other models.
    messages: [
      {
        role: "user",
        content: [
          {
            type: "image_url",
            image_url: {
              url: "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg",
            },
          },
          { type: "text", text: "What is depicted in the image?" },
        ],
      },
    ],
  });
  console.log(response.choices[0].message.content);
}

main();

Response

This is a photo taken on a beach. In the photo, a person and a dog are sitting on the sand, with the sea and sky in the background. The person and dog appear to be interacting, with the dog's front paw resting on the person's hand. Sunlight is coming from the right side of the frame, adding a warm atmosphere to the scene.

curl

# ======= Important =======
# Endpoints vary by Region. Modify the endpoint for your Region.
# An API key is required for each Region. To get an API key, visit: https://www.alibabacloud.com/help/en/model-studio/get-api-key
# === Delete this comment before execution ===
curl --location 'https://dashscope-intl.aliyuncs.com/compatible-mode/v1/chat/completions' \
  --header "Authorization: Bearer $DASHSCOPE_API_KEY" \
  --header 'Content-Type: application/json' \
  --data '{
    "model": "qwen3.5-plus",
    "messages": [
      {
        "role": "user",
        "content": [
          {"type": "image_url", "image_url": {"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"}},
          {"type": "text", "text": "What is depicted in the image?"}
        ]
      }
    ]
  }'

Response

{
  "choices": [
    {
      "message": {
        "content": "This is a photo taken on a beach. In the photo, a person and a dog are sitting on the sand, with the sea and sky in the background. The person and dog appear to be interacting, with the dog's front paw resting on the person's hand. Sunlight is coming from the right side of the frame, adding a warm atmosphere to the scene.",
        "role": "assistant"
      },
      "finish_reason": "stop",
      "index": 0,
      "logprobs": null
    }
  ],
  "object": "chat.completion",
  "usage": {
    "prompt_tokens": 1270,
    "completion_tokens": 54,
    "total_tokens": 1324
  },
  "created": 1725948561,
  "system_fingerprint": null,
  "model": "qwen3.5-plus",
  "id": "chatcmpl-0fd66f46-b09e-9164-a84f-3ebbbedbac15"
}

DashScope

Python

import os
import dashscope

# Endpoints vary by Region. Modify the endpoint for your Region.
dashscope.base_http_api_url = "https://dashscope-intl.aliyuncs.com/api/v1"

messages = [
    {
        "role": "user",
        "content": [
            {"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},
            {"text": "What is depicted in the image?"},
        ],
    }
]

response = dashscope.MultiModalConversation.call(
    # An API key is required for each Region. To get an API key, visit: https://www.alibabacloud.com/help/en/model-studio/get-api-key
    # If you have not set an environment variable, replace the following line with your Model Studio API key: api_key="sk-xxx"
    api_key=os.getenv("DASHSCOPE_API_KEY"),
    model="qwen3.5-plus",  # Uses qwen3.5-plus model. See https://www.alibabacloud.com/help/en/model-studio/getting-started/models for other models.
    messages=messages,
)

print(response.output.choices[0].message.content[0]["text"])

Response

This is a photo taken on a beach. In the photo, there is a woman and a dog. The woman is sitting on the sand, smiling and interacting with the dog. The dog is wearing a collar and appears to be shaking hands with the woman. The background is the sea and the sky, and the sunlight shining on them creates a warm atmosphere.

Java

import java.util.Arrays;
import java.util.Collections;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;
import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;
import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;
import com.alibaba.dashscope.common.MultiModalMessage;
import com.alibaba.dashscope.common.Role;
import com.alibaba.dashscope.exception.ApiException;
import com.alibaba.dashscope.exception.NoApiKeyException;
import com.alibaba.dashscope.exception.UploadFileException;
import com.alibaba.dashscope.utils.Constants;

public class Main {
    // Endpoints vary by Region. Modify the endpoint for your Region.
    static {
        Constants.baseHttpApiUrl = "https://dashscope-intl.aliyuncs.com/api/v1";
    }

    public static void simpleMultiModalConversationCall()
            throws ApiException, NoApiKeyException, UploadFileException {
        MultiModalConversation conv = new MultiModalConversation();
        MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())
                .content(Arrays.asList(
                        Collections.singletonMap("image", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"),
                        Collections.singletonMap("text", "What is depicted in the image?")))
                .build();
        MultiModalConversationParam param = MultiModalConversationParam.builder()
                // An API key is required for each Region. To get an API key, visit: https://www.alibabacloud.com/help/en/model-studio/get-api-key
                // If you have not set an environment variable, replace the following line with your Model Studio API key: .apiKey("sk-xxx")
                .apiKey(System.getenv("DASHSCOPE_API_KEY"))
                .model("qwen3.5-plus") // This example uses the qwen3.5-plus model. For a list of available models, see: https://www.alibabacloud.com/help/en/model-studio/getting-started/models
                .messages(Arrays.asList(userMessage))
                .build();
        MultiModalConversationResult result = conv.call(param);
        System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));
    }

    public static void main(String[] args) {
        try {
            simpleMultiModalConversationCall();
        } catch (ApiException | NoApiKeyException | UploadFileException e) {
            System.out.println(e.getMessage());
        }
        System.exit(0);
    }
}

Response

This is a photo taken on a beach. In the photo, there is a person in a plaid shirt and a dog with a collar. The person and the dog are sitting face to face, seemingly interacting. The background is the sea and the sky, and the sunlight shining on them creates a warm atmosphere.

curl

# ======= Important =======
# Endpoints vary by Region. Modify the endpoint for your Region.
# An API key is required for each Region. To get an API key, visit: https://www.alibabacloud.com/help/en/model-studio/get-api-key
# === Delete this comment before execution ===
curl -X POST https://dashscope-intl.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \
  -H "Authorization: Bearer $DASHSCOPE_API_KEY" \
  -H 'Content-Type: application/json' \
  -d '{
    "model": "qwen3.5-plus",
    "input": {
      "messages": [
        {
          "role": "user",
          "content": [
            {"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},
            {"text": "What is depicted in the image?"}
          ]
        }
      ]
    }
  }'

Response

{
  "output": {
    "choices": [
      {
        "finish_reason": "stop",
        "message": {
          "role": "assistant",
          "content": [
            {
              "text": "This is a photo taken on a beach. In the photo, there is a person in a plaid shirt and a dog with a collar. They are sitting on the sand, with the sea and sky in the background. Sunlight is coming from the right side of the frame, adding a warm atmosphere to the scene."
            }
          ]
        }
      }
    ]
  },
  "usage": {
    "output_tokens": 55,
    "input_tokens": 1271,
    "image_tokens": 1247
  },
  "request_id": "ccf845a3-dc33-9cda-b581-20fe7dc23f70"
}

Model selection

Qwen3.5 (recommended): The latest generation of visual understanding models. It excels at multimodal reasoning, 2D/3D image understanding, complex document parsing, visual programming, video understanding, and building multimodal agents. Available in the Chinese mainland and Singapore regions.

qwen3.5-plus: The most capable model in the Qwen3.5 series, recommended for tasks that require the highest accuracy and performance.

qwen3.5-flash: A faster, more cost-effective choice that balances performance and cost, ideal for latency-sensitive scenarios.

The open-source models in the Qwen3.5 series are qwen3.5-397b-a17b, qwen3.5-122b-a10b, qwen3.5-27b, and qwen3.5-35b-a3b.

The Qwen3-VL series is also suitable for tasks requiring high-precision object recognition and localization (including 3D), agent tool calling, document and webpage parsing, complex problem solving, and long video understanding.

qwen3-vl-plus: The most powerful model in the Qwen3-VL series.

qwen3-vl-flash: A faster, more cost-effective choice that balances performance and cost, ideal for latency-sensitive scenarios.

The Qwen2.5-VL series is suitable for general-purpose tasks such as simple image captioning and extracting summaries from short videos.

qwen-vl-max: The best-performing model in the Qwen2.5-VL series.

qwen-vl-plus: A faster model that provides a good balance between performance and cost.

For model names, context, pricing, and snapshot versions, see Model list. For concurrency limits, see Rate limiting.

Capabilities

Visual question answering (VQA)

Describe and classify image content, such as identifying people, places, animals, and plants.

Prompt: If the sun is glaring, what item from this image should I use?

Response: When the sun is glaring, you should use the pink sunglasses from the image. Sunglasses can effectively block strong light, reduce UV damage to your eyes, and help protect your vision while improving comfort in bright sunlight.

Creative writing

Generate vivid text descriptions from image or video content for creative scenarios like story writing, copywriting, and developing short video scripts.

Prompt: Based on the image, write an engaging social media post for me.

Response: Of course. This image has a rich Mid-Autumn Festival atmosphere, with a clever mix of classic and modern elements. Based on the key elements in the image, I've prepared a few social media post ideas in different styles for you to choose from.

Poetic and aesthetic style: Tonight, the moon shines bright for all to see, as autumn thoughts drift to thee. Chang'e's flight and the Jade Rabbit's potion: the romance of ages is rekindled tonight. May this moon light your way home and carry my deepest wishes. Happy Mid-Autumn Festival!

Warm and heartfelt style: A full moon for a full reunion on this gentle Mid-Autumn night. Watching fireworks, gazing at the moon, savoring a mooncake, and wishing you well. May all our hopes and dreams come true. Wishing everyone a happy Mid-Autumn Festival and a joyful family reunion!

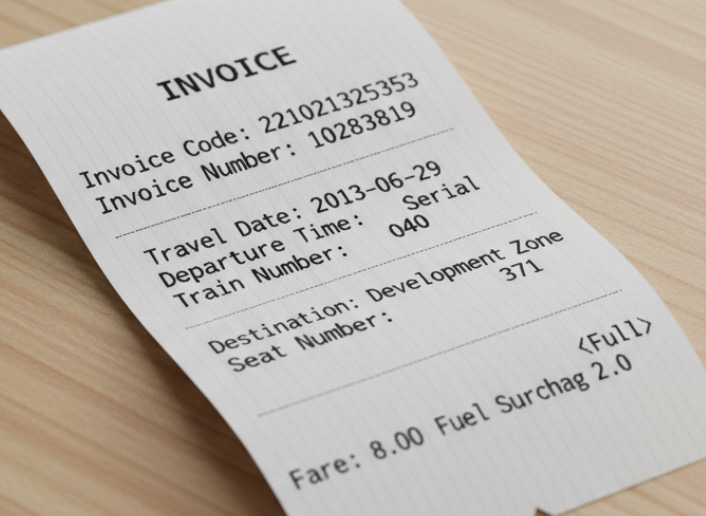

OCR and information extraction

Recognize text and formulas in images, or extract information from documents like receipts, certificates, and forms. These models support formatted text output. Both the Qwen3.5 and Qwen3-VL models have expanded their language support to 33 languages. For a list of supported languages, see the Model feature comparison.

Prompt: Extract the following fields from the image: ['Invoice Code', 'Invoice Number', 'Destination', 'Fuel Surcharge', 'Fare', 'Travel Date', 'Departure Time', 'Train Number', 'Seat Number']. Output the result in JSON format.

Response: { "Invoice Code": "221021325353", "Invoice Number": "10283819", "Destination": "Development Zone", "Fuel Surcharge": "2.0", "Fare": "8.00<Full>", "Travel Date": "2013-06-29", "Departure Time": "Serial", "Train Number": "040", "Seat Number": "371" }
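Replies to extraction prompts like the one above usually arrive as JSON, sometimes wrapped in a Markdown code fence. The following sketch accepts both forms before calling `json.loads`. The helper name `parse_json_reply` is hypothetical, and the sketch assumes the reply contains a single JSON value; validate the parsed fields before using them, since the model can return unexpected values (for example, the `Departure Time` field above).

```python
import json
import re
from typing import Any

# Three backticks, built at runtime so this snippet nests cleanly inside Markdown.
TICKS = "`" * 3
# Captures the body of a fenced block, with or without a "json" language tag.
FENCED_BLOCK = re.compile(TICKS + r"(?:json)?\s*(.*?)" + TICKS, re.DOTALL | re.IGNORECASE)


def parse_json_reply(text: str) -> Any:
    """Parse JSON from a model reply, unwrapping a Markdown code fence if present."""
    match = FENCED_BLOCK.search(text)
    candidate = match.group(1) if match else text
    return json.loads(candidate.strip())
```

`json.loads` raises `json.JSONDecodeError` when the reply is not valid JSON, which is a reasonable point to retry the request or log the raw text.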

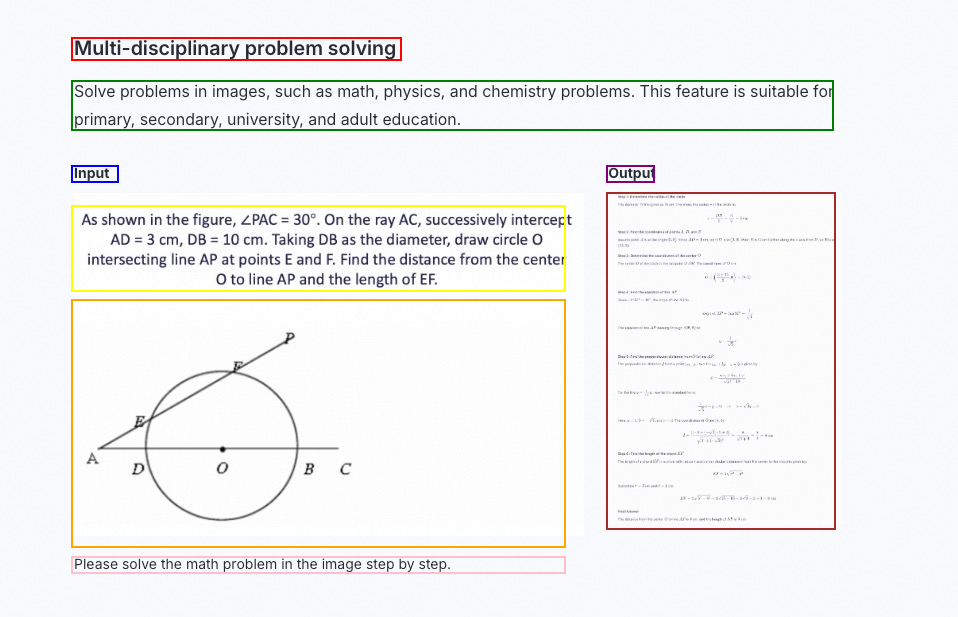

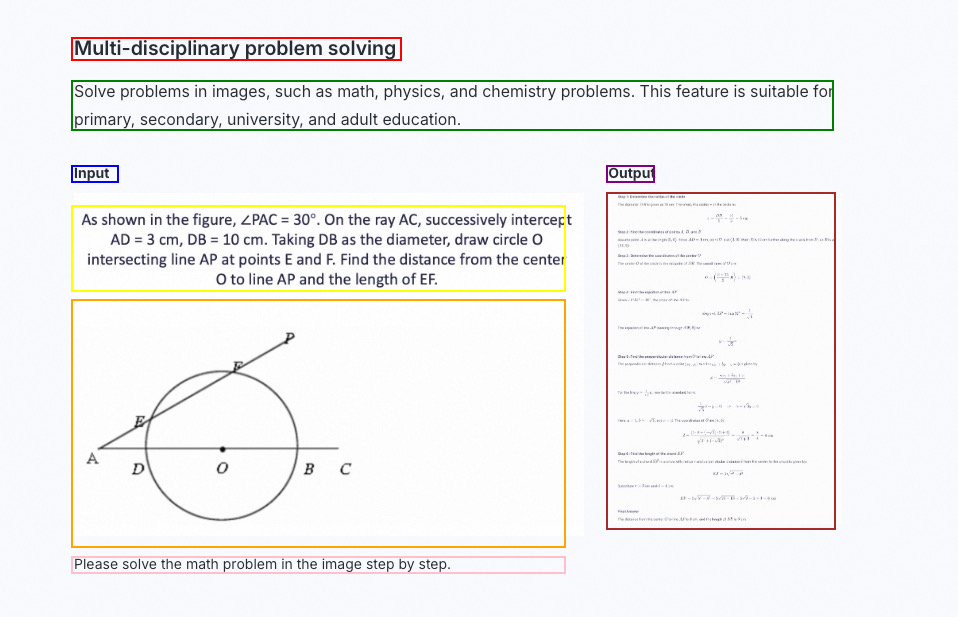

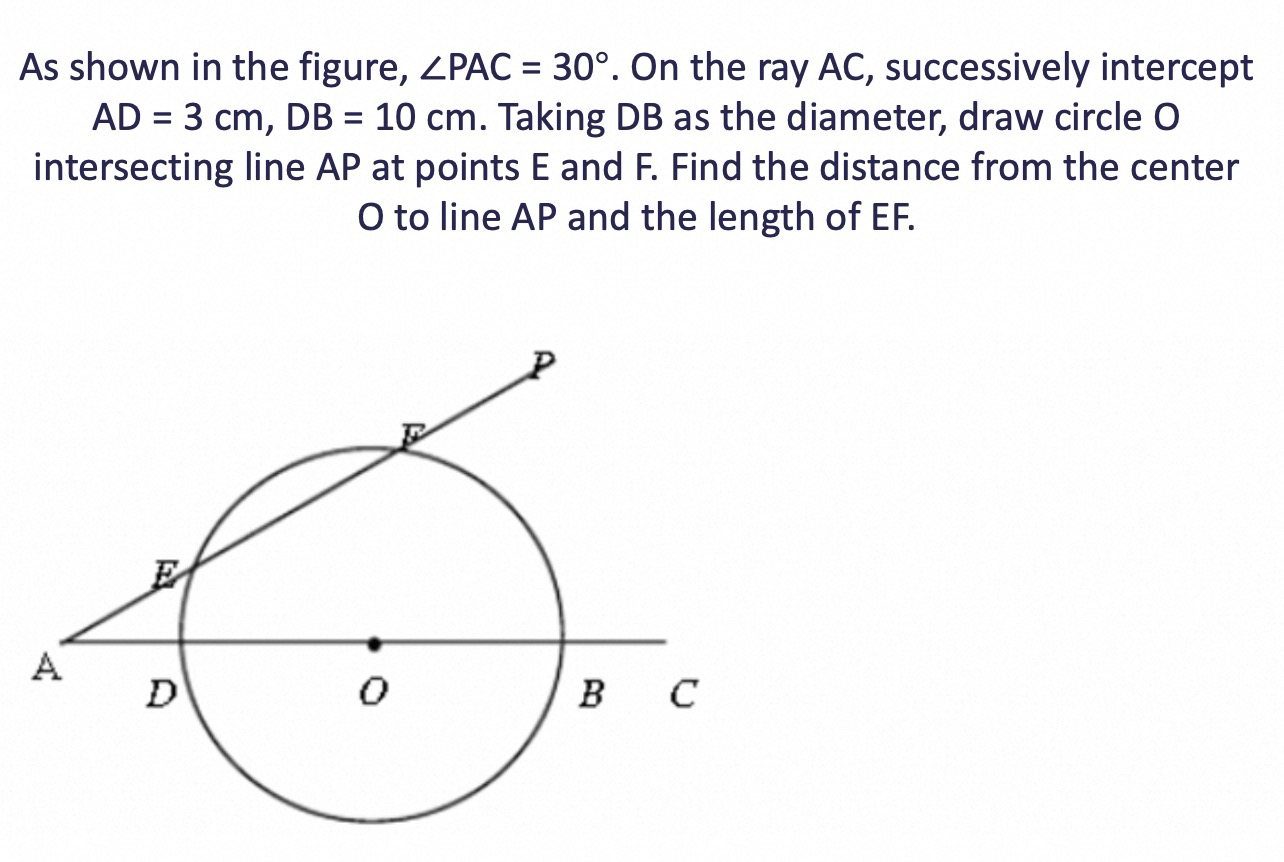

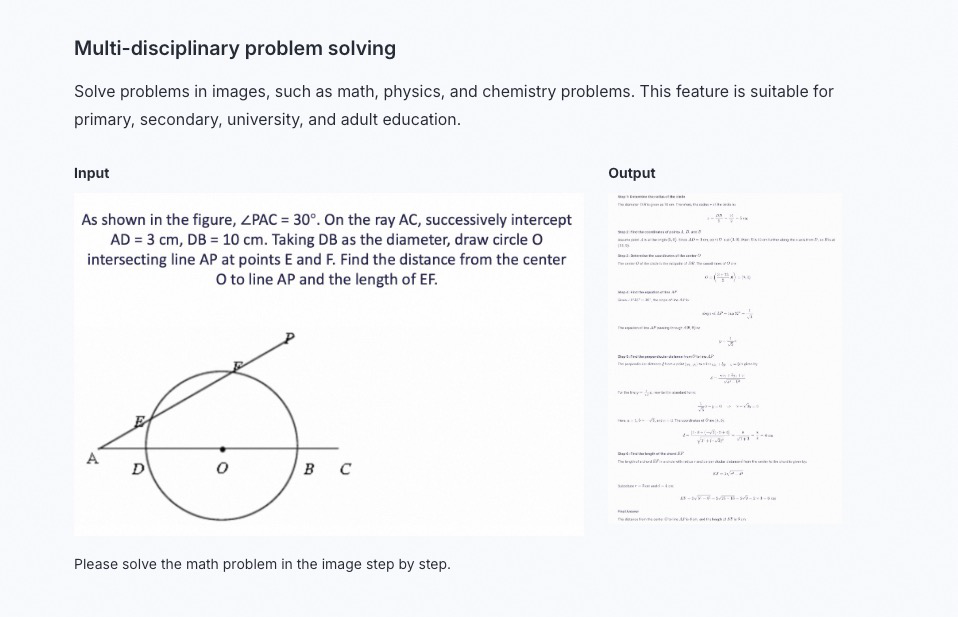

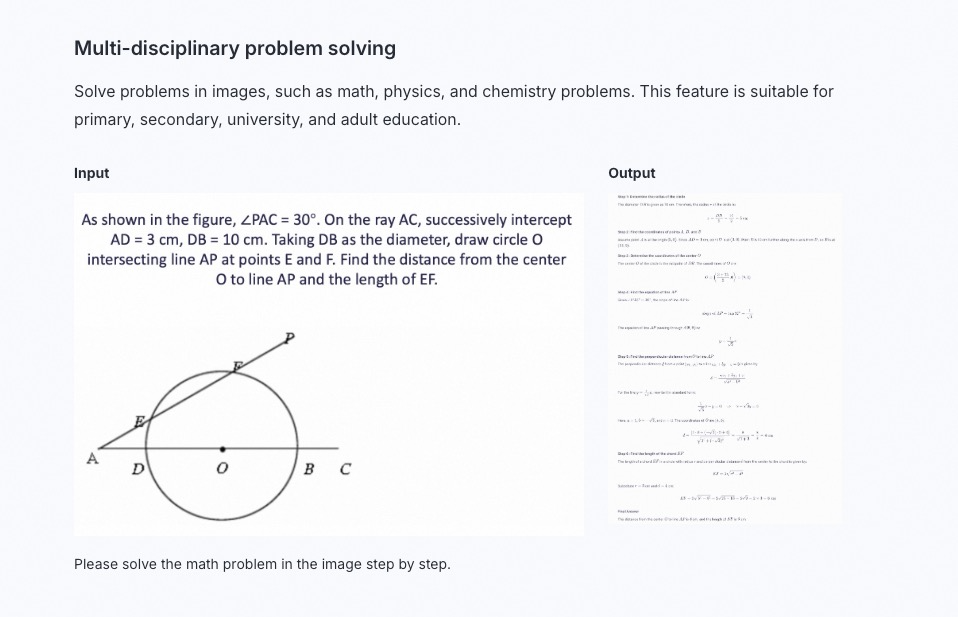

Multi-disciplinary problem solving

Solve problems from various subjects found in images, including mathematics, physics, and chemistry. This capability supports educational applications from K-12 to adult learning.

Prompt: Solve the math problem in the image step by step.

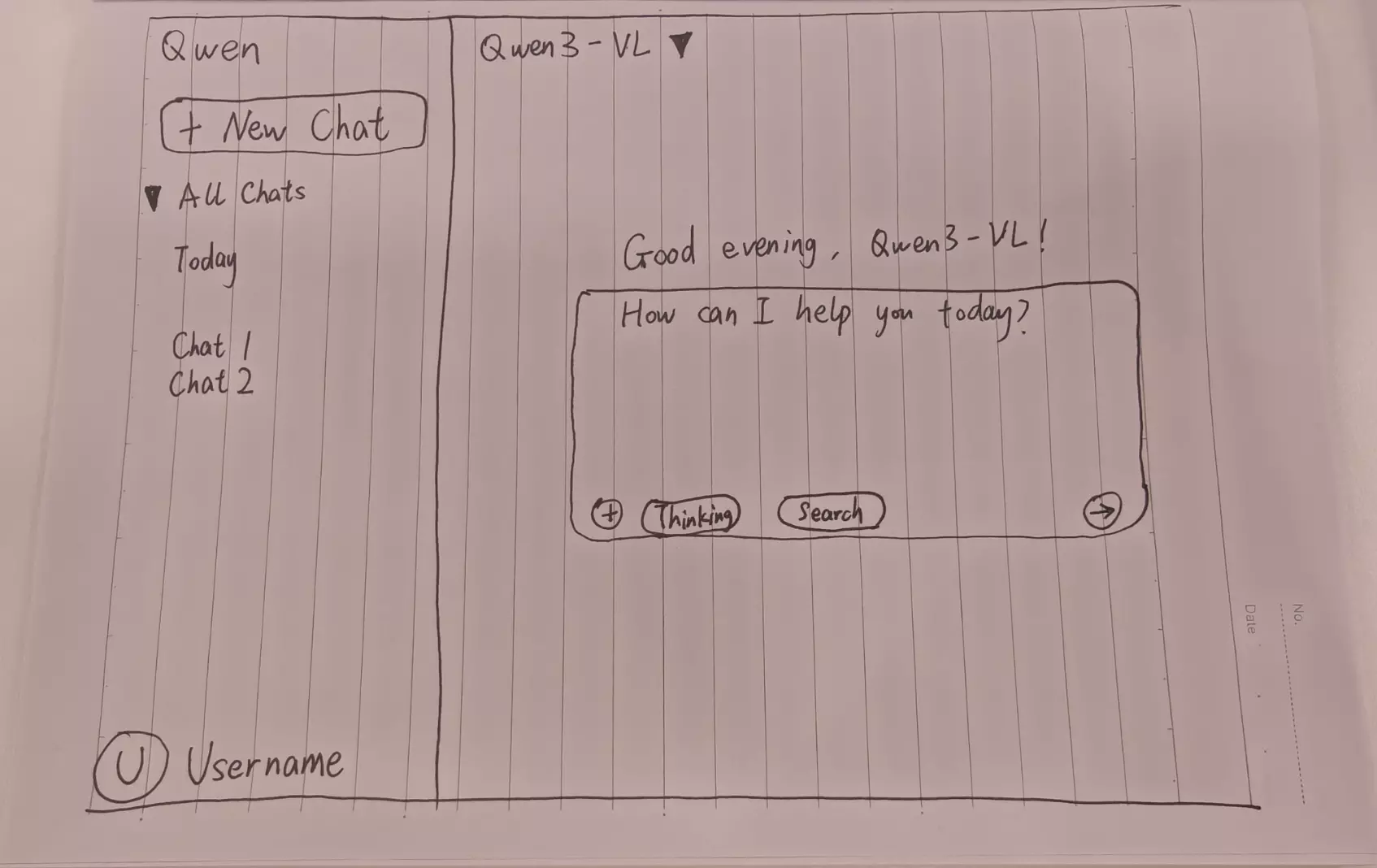

Visual programming

Generate code from images or videos to convert design mockups, website screenshots, and other visual inputs into HTML, CSS, and JS code.

Prompt: Create a webpage using HTML and CSS based on my sketch. The main color theme should be black.

Webpage preview:

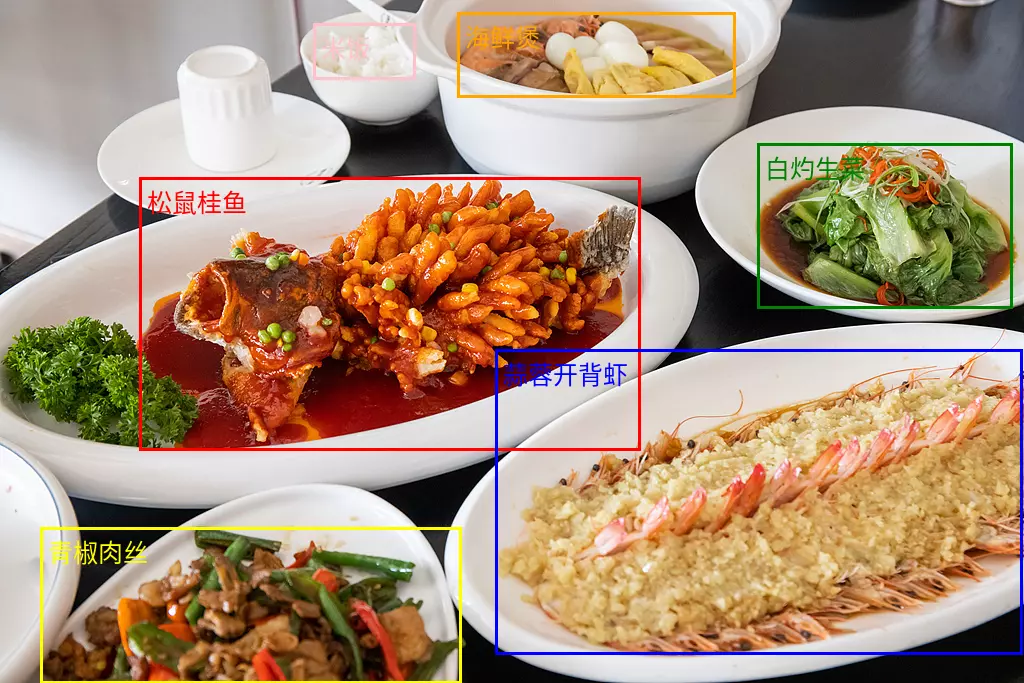

Object localization

The models support both 2D and 3D localization and can determine object orientation, perspective changes, and occlusion relationships. 3D localization is a new capability introduced with the Qwen3-VL models.

For the Qwen2.5-VL model, object localization is most robust within a resolution range of 480x480 to 2560x2560 pixels. Outside this range, detection accuracy may decrease, with occasional bounding box drift.
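Keeping inputs inside that range is a preprocessing step you can do before upload. The sketch below reads "480x480 to 2560x2560" as a total pixel budget (230,400 to 6,553,600 pixels) and computes an aspect-preserving target size. That interpretation is an assumption; if the range is meant per side, change the bounds accordingly. `fit_pixel_budget` is a hypothetical helper that only computes dimensions; the actual resize is left to your image library (for example, Pillow's `Image.resize`).

```python
import math

# Assumed reading of the recommended range as total pixel counts.
MIN_PIXELS = 480 * 480    # 230,400
MAX_PIXELS = 2560 * 2560  # 6,553,600


def fit_pixel_budget(width: int, height: int,
                     min_pixels: int = MIN_PIXELS,
                     max_pixels: int = MAX_PIXELS) -> tuple:
    """Return (width, height) scaled uniformly so the area lies within the budget."""
    area = width * height
    if area > max_pixels:
        # Shrink: rounding down keeps the area at or below max_pixels.
        scale = math.sqrt(max_pixels / area)
        return max(1, math.floor(width * scale)), max(1, math.floor(height * scale))
    if area < min_pixels:
        # Enlarge: rounding up keeps the area at or above min_pixels.
        scale = math.sqrt(min_pixels / area)
        return math.ceil(width * scale), math.ceil(height * scale)
    return width, height  # Already inside the range.
```

Scaling both sides by the same factor preserves the aspect ratio, so bounding boxes returned for the resized image map back to the original with a single multiplication per axis.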

To draw the localization results on the original image, see the FAQ.
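The FAQ is the reference for drawing results. As a rough sketch, assume the model returns boxes as `[x1, y1, x2, y2]` on a relative 0 to 1000 grid, which is how Qwen3-VL output is commonly described (Qwen2.5-VL output is commonly described as absolute pixel coordinates, which need no conversion). Verify the convention for your model in the FAQ. Under that assumption, mapping a box onto the original image is a per-axis rescale; `to_pixel_box` is a hypothetical helper.

```python
def to_pixel_box(box, image_width: int, image_height: int, scale: int = 1000) -> tuple:
    """Map an [x1, y1, x2, y2] box on a 0..scale grid to pixel coordinates.

    Assumes relative coordinates; results are clamped to the image bounds.
    """
    x1, y1, x2, y2 = box

    def clamp(value: float, upper: int) -> int:
        return min(max(round(value), 0), upper)

    return (
        clamp(x1 / scale * image_width, image_width),
        clamp(y1 / scale * image_height, image_height),
        clamp(x2 / scale * image_width, image_width),
        clamp(y2 / scale * image_height, image_height),
    )
```

The resulting tuple can be passed directly to a drawing call such as Pillow's `ImageDraw.Draw(image).rectangle(box, outline="red")`.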

2D positioning

Visualization of 2D positioning results

Document parsing

Parse image-based documents, such as scans or image-based PDFs, into QwenVL HTML or QwenVL Markdown format. These formats accurately capture the recognized text along with position information for elements such as images and tables. The Qwen3-VL models add the ability to parse documents into Markdown format.

Recommended prompts: qwenvl html (to parse into HTML format) or qwenvl markdown (to parse into Markdown format).

Prompt: qwenvl markdown

Visualization of results (panels: Annotation, Invoice)
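The recommended prompts above are ordinary text parts in a chat request. The following sketch builds an OpenAI-compatible `messages` list for either output format. `document_parsing_messages` is a hypothetical helper, and the image URL in the test values is a placeholder. Per the note above, Markdown output was added with the Qwen3-VL models.

```python
def document_parsing_messages(image_url: str, output_format: str = "markdown") -> list:
    """Build a chat request asking the model to parse a document image (hypothetical helper)."""
    if output_format not in ("html", "markdown"):
        raise ValueError("output_format must be 'html' or 'markdown'")
    return [
        {
            "role": "user",
            "content": [
                {"type": "image_url", "image_url": {"url": image_url}},
                # Recommended prompts on this page: "qwenvl html" or "qwenvl markdown".
                {"type": "text", "text": f"qwenvl {output_format}"},
            ],
        }
    ]
```

Pass the result as `messages=` to `client.chat.completions.create`, for example with `model="qwen3-vl-plus"`.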

Video understanding

Analyze video content to locate specific events and get their timestamps, or to generate summaries of key time periods.

Prompt: Describe the series of actions performed by the person in the video. Output the result in JSON format with start_time, end_time, and event fields. Use the HH:mm:ss format for the timestamp.

Response: { "events": [ { "start_time": "00:00:00", "end_time": "00:00:05", "event": "The person walks to a table holding a cardboard box and places it on the table." }, { "start_time": "00:00:05", "end_time": "00:00:15", "event": "The person picks up a scanner and aims it at the label on the box to scan it." }, { "start_time": "00:00:15", "end_time": "00:00:21", "event": "The person puts the scanner back in its place and then picks up a pen to write information in a notebook."}] }
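Timestamps in the HH:mm:ss format requested above are easy to turn into seconds for seeking or for computing how long each event lasts. This sketch assumes the reply is plain JSON matching the requested schema (strip any code fence first); `hms_to_seconds` and `event_durations` are hypothetical helper names.

```python
import json


def hms_to_seconds(stamp: str) -> int:
    """Convert an HH:mm:ss timestamp such as "00:01:05" to seconds."""
    hours, minutes, seconds = (int(part) for part in stamp.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def event_durations(reply: str) -> list:
    """Return (event, start_seconds, duration_seconds) tuples from the events JSON."""
    events = json.loads(reply)["events"]
    result = []
    for item in events:
        start = hms_to_seconds(item["start_time"])
        end = hms_to_seconds(item["end_time"])
        result.append((item["event"], start, end - start))
    return result
```

For the sample response, the three events last 5, 10, and 6 seconds.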

Core capabilities

Enable or disable thinking mode

The qwen3.5, qwen3-vl-plus, and qwen3-vl-flash series are hybrid models that can respond either directly or after reasoning. Use the enable_thinking parameter to control whether thinking mode is enabled:

true: The default for the qwen3.5 series.

false: The default for the qwen3-vl-plus and qwen3-vl-flash series.

Models with a thinking suffix, such as qwen3-vl-235b-a22b-thinking, are dedicated reasoning models. They always reason before responding, and this behavior cannot be disabled.

Model configuration: In general conversational scenarios that do not involve tool calling, do not set a System Message, for optimal performance. Instead, pass instructions such as role settings and output format requirements through the User Message.

Use streaming output: When thinking mode is enabled, both streaming and non-streaming output are supported. To avoid timeouts on long responses, use streaming output.

Limiting the thinking length: Deep thinking models sometimes produce verbose reasoning. Use the thinking_budget parameter to limit the length of the thinking process. If the number of tokens generated during thinking exceeds thinking_budget, the reasoning is truncated and the model immediately begins generating the final response. The default value of thinking_budget is the model's maximum chain-of-thought length. See the Model list.
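The rules above can be encoded in a small helper that builds the `extra_body` dictionary passed to the OpenAI Python SDK. This is a sketch, not part of any SDK: `thinking_extra_body` is a hypothetical name, and the prefix matching is an assumption based only on the model names listed on this page, so snapshot or other model names may need their own handling. Omitting `thinking_budget` leaves the model's default in place.

```python
from typing import Optional

# Model families this page describes as hybrid (thinking mode can be toggled).
HYBRID_PREFIXES = ("qwen3.5", "qwen3-vl-plus", "qwen3-vl-flash")


def thinking_extra_body(model: str, enable_thinking: bool,
                        thinking_budget: Optional[int] = None) -> dict:
    """Build extra_body for the OpenAI Python SDK (hypothetical helper).

    Hybrid models get an explicit enable_thinking flag; "-thinking" models always
    reason, so disabling is rejected; other Qwen-VL models have no thinking mode.
    """
    if model.endswith("-thinking"):
        if not enable_thinking:
            raise ValueError(f"{model} always reasons; thinking cannot be disabled")
        body = {}  # No toggle needed: reasoning is always on.
    elif model.startswith(HYBRID_PREFIXES):
        body = {"enable_thinking": enable_thinking}
    else:
        if enable_thinking:
            raise ValueError(f"thinking mode does not apply to {model}")
        return {}
    # thinking_budget only matters when the model actually reasons.
    if enable_thinking and thinking_budget is not None:
        body["thinking_budget"] = thinking_budget
    return body
```

For example, `client.chat.completions.create(..., extra_body=thinking_extra_body("qwen3.5-plus", True, 81920))`. Raising an error for an impossible combination surfaces the mistake early instead of silently sending a flag the model ignores.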

OpenAI

The enable_thinking parameter is not a standard OpenAI parameter. If you use the OpenAI Python SDK, pass it through extra_body.

Python

import os
from openai import OpenAI

client = OpenAI(
    # Each region requires a unique API Key. To get an API Key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key
    api_key=os.getenv("DASHSCOPE_API_KEY"),
    # Endpoints vary by region. Set the endpoint for your region.
    base_url="https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
)

reasoning_content = ""  # Stores the full reasoning process.
answer_content = ""  # Stores the full response.
is_answering = False  # Tracks whether the model has started the final answer.
enable_thinking = True

# Create a streaming chat completion request.
completion = client.chat.completions.create(
    model="qwen3.5-plus",
    messages=[
        {
            "role": "user",
            "content": [
                {
                    "type": "image_url",
                    "image_url": {
                        "url": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"
                    },
                },
                {"type": "text", "text": "How do I solve this problem?"},
            ],
        },
    ],
    stream=True,
    # Thinking mode is toggleable for qwen3.5/qwen3-vl-plus/qwen3-vl-flash. It is always on for models with a "thinking" suffix and does not apply to other Qwen-VL models.
    # thinking_budget sets the maximum number of tokens for the reasoning process.
    extra_body={
        "enable_thinking": enable_thinking,
        "thinking_budget": 81920,
    },
    # Uncomment the following code to return the token usage in the last chunk.
    # stream_options={"include_usage": True},
)

if enable_thinking:
    print("\n" + "=" * 20 + "Thinking Process" + "=" * 20 + "\n")

for chunk in completion:
    # A chunk without choices carries only the usage statistics.
    if not chunk.choices:
        print("\nUsage:")
        print(chunk.usage)
        continue
    delta = chunk.choices[0].delta
    # Print the reasoning process.
    reasoning = getattr(delta, "reasoning_content", None)
    if reasoning:
        print(reasoning, end="", flush=True)
        reasoning_content += reasoning
    # Print the response content, with a header before the first piece.
    if delta.content:
        if not is_answering:
            print("\n" + "=" * 20 + "Complete Response" + "=" * 20 + "\n")
            is_answering = True
        print(delta.content, end="", flush=True)
        answer_content += delta.content

# print("=" * 20 + "Complete Thinking Process" + "=" * 20 + "\n")
# print(reasoning_content)
# print("=" * 20 + "Complete Response" + "=" * 20 + "\n")
# print(answer_content)

Node.js

import OpenAI from "openai";

// Initialize the OpenAI client.
const openai = new OpenAI({
  // Each region requires a unique API Key. To get an API Key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key
  // If the environment variable is not set, provide your Model Studio API Key here: apiKey: "sk-xxx"
  apiKey: process.env.DASHSCOPE_API_KEY,
  // Endpoints vary by region. Set the endpoint for your region.
  baseURL: "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
});

let reasoningContent = "";
let answerContent = "";
let isAnswering = false;
const enableThinking = true;

const messages = [
  {
    role: "user",
    content: [
      { type: "image_url", image_url: { url: "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg" } },
      { type: "text", text: "How do I solve this problem?" },
    ],
  },
];

async function main() {
  try {
    const stream = await openai.chat.completions.create({
      model: "qwen3.5-plus",
      messages: messages,
      stream: true,
      // Note: In the Node.js SDK, pass non-standard parameters like enable_thinking at the top level, not in extra_body.
      enable_thinking: enableThinking,
      thinking_budget: 81920,
    });
    if (enableThinking) {
      console.log("\n" + "=".repeat(20) + "Thinking Process" + "=".repeat(20) + "\n");
    }
    for await (const chunk of stream) {
      // A chunk without choices carries only the usage statistics.
      if (!chunk.choices?.length) {
        console.log("\nUsage:");
        console.log(chunk.usage);
        continue;
      }
      const delta = chunk.choices[0].delta;
      if (delta.reasoning_content) {
        // Process the reasoning content.
        process.stdout.write(delta.reasoning_content);
        reasoningContent += delta.reasoning_content;
      } else if (delta.content) {
        // Process the response content.
        if (!isAnswering) {
          console.log("\n" + "=".repeat(20) + "Complete Response" + "=".repeat(20) + "\n");
          isAnswering = true;
        }
        process.stdout.write(delta.content);
        answerContent += delta.content;
      }
    }
  } catch (error) {
    console.error("Error:", error);
  }
}

main();

curl

# ======= Important =======

# Endpoints vary by region. Set the endpoint for your region.
# Each region requires a unique API Key. To get an API Key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key
# === Remove this comment before execution ===
curl --location 'https://dashscope-intl.aliyuncs.com/compatible-mode/v1/chat/completions' \
  --header "Authorization: Bearer $DASHSCOPE_API_KEY" \
  --header 'Content-Type: application/json' \
  --data '{
    "model": "qwen3.5-plus",
    "messages": [
      {
        "role": "user",
        "content": [
          {
            "type": "image_url",
            "image_url": {
              "url": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"
            }
          },
          {
            "type": "text",
            "text": "How do I solve this problem?"
          }
        ]
      }
    ],
    "stream": true,
    "stream_options": {"include_usage": true},
    "enable_thinking": true,
    "thinking_budget": 81920
  }'

DashScope

Python

import os
import dashscope
from dashscope import MultiModalConversation

# Endpoints vary by region. Set the endpoint for your region.
dashscope.base_http_api_url = "https://dashscope-intl.aliyuncs.com/api/v1"

enable_thinking = True

messages = [
    {
        "role": "user",
        "content": [
            {"image": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"},
            {"text": "How do I solve this problem?"},
        ],
    }
]

response = MultiModalConversation.call(
    # If the environment variable is not set, provide your Model Studio API Key here: api_key="sk-xxx",
    # Each region requires a unique API Key. To get an API Key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key
    api_key=os.getenv("DASHSCOPE_API_KEY"),
    model="qwen3.5-plus",
    messages=messages,
    stream=True,
    # Thinking mode is toggleable for qwen3.5/qwen3-vl-plus/qwen3-vl-flash. It is always on for models with a "thinking" suffix and does not apply to other Qwen-VL models.
    enable_thinking=enable_thinking,
    # The thinking_budget parameter sets the maximum number of tokens for the reasoning process.
    thinking_budget=81920,
)

reasoning_content = ""  # Stores the full reasoning process.
answer_content = ""  # Stores the full response.
is_answering = False  # Tracks whether the model has started the final answer.

if enable_thinking:
    print("=" * 20 + "Thinking Process" + "=" * 20)

for chunk in response:
    message = chunk.output.choices[0].message
    reasoning_chunk = message.get("reasoning_content", None)
    content = message.content
    # Skip empty chunks.
    if content == [] and reasoning_chunk == "":
        continue
    # In the reasoning process.
    if reasoning_chunk is not None and content == []:
        print(reasoning_chunk, end="")
        reasoning_content += reasoning_chunk
    # Responding.
    elif content != []:
        if not is_answering:
            print("\n" + "=" * 20 + "Complete Response" + "=" * 20)
            is_answering = True
        print(content[0]["text"], end="")
        answer_content += content[0]["text"]

# To print the complete reasoning process and the complete response, uncomment and run the following code.
# print("=" * 20 + "Complete Thinking Process" + "=" * 20 + "\n")
# print(f"{reasoning_content}")
# print("=" * 20 + "Complete Response" + "=" * 20 + "\n")
# print(f"{answer_content}")

Java

// DashScope SDK version >= 2.21.10

import java.util.*;

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;
import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;
import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;
import com.alibaba.dashscope.common.MultiModalMessage;
import com.alibaba.dashscope.common.Role;
import com.alibaba.dashscope.exception.ApiException;
import com.alibaba.dashscope.exception.InputRequiredException;
import com.alibaba.dashscope.exception.NoApiKeyException;
import com.alibaba.dashscope.exception.UploadFileException;
import com.alibaba.dashscope.utils.Constants;
import io.reactivex.Flowable;

public class Main {
    // Endpoints vary by region. Set the endpoint for your region.
    static {
        Constants.baseHttpApiUrl = "https://dashscope-intl.aliyuncs.com/api/v1";
    }

    private static final Logger logger = LoggerFactory.getLogger(Main.class);
    private static StringBuilder reasoningContent = new StringBuilder();
    private static StringBuilder finalContent = new StringBuilder();
    private static boolean isFirstPrint = true;

    private static void handleGenerationResult(MultiModalConversationResult message) {
        String re = message.getOutput().getChoices().get(0).getMessage().getReasoningContent();
        String reasoning = Objects.isNull(re) ? "" : re; // Default to an empty string if reasoning content is null.
        List<Map<String, Object>> content = message.getOutput().getChoices().get(0).getMessage().getContent();
        if (!reasoning.isEmpty()) {
            reasoningContent.append(reasoning);
            if (isFirstPrint) {
                System.out.println("====================Thinking Process====================");
                isFirstPrint = false;
            }
            System.out.print(reasoning);
        }
        if (Objects.nonNull(content) && !content.isEmpty()) {
            Object text = content.get(0).get("text");
            finalContent.append(text);
            if (!isFirstPrint) {
                System.out.println("\n====================Complete Response====================");
                isFirstPrint = true;
            }
            System.out.print(text);
        }
    }

    public static MultiModalConversationParam buildMultiModalConversationParam(MultiModalMessage msg) {
        return MultiModalConversationParam.builder()
                // If the environment variable is not set, provide your Model Studio API Key here: .apiKey("sk-xxx")
                // Each region requires a unique API Key. To get an API Key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key
                .apiKey(System.getenv("DASHSCOPE_API_KEY"))
                .model("qwen3.5-plus")
                .messages(Arrays.asList(msg))
                .enableThinking(true)
                .thinkingBudget(81920)
                .incrementalOutput(true)
                .build();
    }

    public static void streamCallWithMessage(MultiModalConversation conv, MultiModalMessage msg)
            throws NoApiKeyException, ApiException, InputRequiredException, UploadFileException {
        MultiModalConversationParam param = buildMultiModalConversationParam(msg);
        Flowable<MultiModalConversationResult> result = conv.streamCall(param);
        result.blockingForEach(message -> handleGenerationResult(message));
    }

    public static void main(String[] args) {
        try {
            MultiModalConversation conv = new MultiModalConversation();
            MultiModalMessage userMsg = MultiModalMessage.builder()
                    .role(Role.USER.getValue())
                    .content(Arrays.asList(
                            Collections.singletonMap("image", "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"),
                            Collections.singletonMap("text", "How do I solve this problem?")))
                    .build();
            streamCallWithMessage(conv, userMsg);
            // Print the final result.
            // if (reasoningContent.length() > 0) {
            //     System.out.println("\n====================Complete Response====================");
            //     System.out.println(finalContent.toString());
            // }
        } catch (ApiException | NoApiKeyException | UploadFileException | InputRequiredException e) {
            logger.error("An exception occurred: {}", e.getMessage());
        }
        System.exit(0);
    }
}

curl

# ======= Important =======

# Each region requires a unique API Key. To get an API Key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key
# Endpoints vary by region. Set the endpoint for your region.
# === Remove this comment before execution ===
curl -X POST https://dashscope-intl.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \
  -H "Authorization: Bearer $DASHSCOPE_API_KEY" \
  -H 'Content-Type: application/json' \
  -H 'X-DashScope-SSE: enable' \
  -d '{
    "model": "qwen3.5-plus",
    "input": {
      "messages": [
        {
          "role": "user",
          "content": [
            {"image": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"},
            {"text": "How do I solve this problem?"}
          ]
        }
      ]
    },
    "parameters": {
      "enable_thinking": true,
      "incremental_output": true,
      "thinking_budget": 81920
    }
  }'

Multiple image input

Visual understanding models let you pass multiple images in a single request for tasks such as product comparison and multi-page document processing. To do so, include multiple image objects in the content array of the user message.

The model's token limit caps the number of images per request: the combined token count of all images and text must not exceed this limit.
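Building the content array by hand gets repetitive as the number of images grows. The following sketch generates one user message for both request formats shown on this page: the OpenAI-compatible `image_url` parts and the DashScope `image`/`text` parts. The helper names are hypothetical, and the helpers do not count tokens, so the limit described above still applies.

```python
def openai_multi_image_message(image_urls: list, question: str) -> dict:
    """Build one OpenAI-compatible user message: all images first, then the question."""
    if not image_urls:
        raise ValueError("at least one image URL is required")
    content = [{"type": "image_url", "image_url": {"url": url}} for url in image_urls]
    content.append({"type": "text", "text": question})
    return {"role": "user", "content": content}


def dashscope_multi_image_message(image_urls: list, question: str) -> dict:
    """Build the equivalent user message in the DashScope SDK format."""
    if not image_urls:
        raise ValueError("at least one image URL is required")
    content = [{"image": url} for url in image_urls]
    content.append({"text": question})
    return {"role": "user", "content": content}
```

Images are listed in the order given, which matches how the sample responses refer to "Image 1" and "Image 2".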

OpenAI compatible

Python

import os

from openai import OpenAI

client = OpenAI(

# Each Region requires a separate API key. For instructions, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

api_key=os.getenv("DASHSCOPE_API_KEY"),

# Endpoints vary by Region. Modify the URL for your Region.

base_url="https://dashscope-intl.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.5-plus", # This example uses qwen3.5-plus. For other models, see the Model List: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

messages=[

{"role": "user","content": [

{"type": "image_url","image_url": {"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},},

{"type": "image_url","image_url": {"url": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"},},

{"type": "text", "text": "What do these images depict?"},

],

}

],

)

print(completion.choices[0].message.content)

Response

Image 1 shows a woman and a Labrador retriever on a beach. The woman, in a plaid shirt, is sitting on the sand and shaking the dog's paw. The background of ocean waves and sky creates a warm, pleasant atmosphere.

Image 2 shows a tiger walking through a forest. Its coat is orange with black stripes. The surroundings are dense with trees and vegetation, and the ground is covered with fallen leaves. The scene evokes a sense of the wild.

Node.js

import OpenAI from "openai";

const openai = new OpenAI(

{

// Each Region requires a separate API key. For instructions, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

// If the environment variable is not set, replace the following line with your API key: apiKey: "sk-xxx"

apiKey: process.env.DASHSCOPE_API_KEY,

// Endpoints vary by Region. Modify the URL for your Region.

baseURL: "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"

}

);

async function main() {

const response = await openai.chat.completions.create({

model: "qwen3.5-plus", // This example uses qwen3.5-plus. For other models, see the Model List: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

messages: [

{role: "user",content: [

{type: "image_url",image_url: {"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"}},

{type: "image_url",image_url: {"url": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"}},

{type: "text", text: "What do these images depict?" },

]}]

});

console.log(response.choices[0].message.content);

}

main();

Response

The first image shows a person and a dog on a beach. The person is wearing a plaid shirt, and the dog has a collar. They appear to be shaking hands or giving a high-five.

The second image shows a tiger walking in a forest. The tiger's coat is orange with black stripes, and the background is filled with green trees and vegetation.

curl

# ======= Important =======

# Each Region requires a separate API key. For instructions, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# Endpoints vary by Region. Modify the URL for your Region.

# === Remove this comment before execution ===

curl -X POST https://dashscope-intl.aliyuncs.com/compatible-mode/v1/chat/completions \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.5-plus",

"messages": [

{

"role": "user",

"content": [

{

"type": "image_url",

"image_url": {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"

}

},

{

"type": "image_url",

"image_url": {

"url": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"

}

},

{

"type": "text",

"text": "What do these images depict?"

}

]

}

]

}'

Response

{

"choices": [

{

"message": {

"content": "Image 1 shows a woman and a Labrador retriever interacting on a beach. The woman is wearing a plaid shirt and sitting on the sand, shaking the dog's paw. The background features the ocean and a sunset sky, creating a very warm and harmonious atmosphere.\n\nImage 2 shows a tiger walking in a forest. The tiger's coat is orange with black stripes as it walks forward. The surroundings are dense with trees and vegetation, with fallen leaves on the ground. The scene conveys a sense of wildness and vitality.",

"role": "assistant"

},

"finish_reason": "stop",

"index": 0,

"logprobs": null

}

],

"object": "chat.completion",

"usage": {

"prompt_tokens": 2497,

"completion_tokens": 109,

"total_tokens": 2606

},

"created": 1725948561,

"system_fingerprint": null,

"model": "qwen3.5-plus",

"id": "chatcmpl-0fd66f46-b09e-9164-a84f-3ebbbedbac15"

}

DashScope

Python

import os

import dashscope

# Endpoints vary by Region. Modify the URL for your Region.

dashscope.base_http_api_url = 'https://dashscope-intl.aliyuncs.com/api/v1'

messages = [

{

"role": "user",

"content": [

{"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},

{"image": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"},

{"text": "What do these images depict?"}

]

}

]

response = dashscope.MultiModalConversation.call(

# Each Region requires a separate API key. For instructions, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# If the environment variable is not set, replace the following line with your API key: api_key="sk-xxx"

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen3.5-plus', # This example uses qwen3.5-plus. For other models, see the Model List: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

messages=messages

)

print(response.output.choices[0].message.content[0]["text"])

Response

The images show animals in natural scenes. The first image is of a person and a dog on a beach, and the second is of a tiger in a forest.

Java

import java.util.Arrays;

import java.util.Collections;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

static {

// Endpoints vary by Region. Modify the URL for your Region.

Constants.baseHttpApiUrl="https://dashscope-intl.aliyuncs.com/api/v1";

}

public static void simpleMultiModalConversationCall()

throws ApiException, NoApiKeyException, UploadFileException {

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(

Collections.singletonMap("image", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"),

Collections.singletonMap("image", "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"),

Collections.singletonMap("text", "What do these images depict?"))).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// Each Region requires a separate API key. For instructions, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.5-plus") // This example uses qwen3.5-plus. For other models, see the Model List: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

simpleMultiModalConversationCall();

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}

Response

These images show animals in natural scenes.

1. First image: A woman and a dog are on a beach. The woman, in a plaid shirt, is sitting on the sand as the dog, which wears a collar, extends its paw to shake hands.

2. Second image: A tiger walks through a forest. Its coat is orange with black stripes, and the background consists of trees and leaves.

curl

# ======= Important =======

# Each Region requires a separate API key. For instructions, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# Endpoints vary by Region. Modify the URL for your Region.

# === Remove this comment before execution ===

curl --location 'https://dashscope-intl.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation' \

--header "Authorization: Bearer $DASHSCOPE_API_KEY" \

--header 'Content-Type: application/json' \

--data '{

"model": "qwen3.5-plus",

"input":{

"messages":[

{

"role": "user",

"content": [

{"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},

{"image": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"},

{"text": "What do these images depict?"}

]

}

]

}

}'

Response

{

"output": {

"choices": [

{

"finish_reason": "stop",

"message": {

"role": "assistant",

"content": [

{

"text": "The images show animals in natural scenes. The first image is of a person and a dog on a beach, and the second is of a tiger in a forest."

}

]

}

}

]

},

"usage": {

"output_tokens": 81,

"input_tokens": 1277,

"image_tokens": 2497

},

"request_id": "ccf845a3-dc33-9cda-b581-20fe7dc23f70"

}

Video understanding

The visual understanding model analyzes video content from either a video file or an image list (video frames). The following examples show how to analyze an online video or an image list specified by a URL. For limitations on videos or the number of images in an image list, see the Video limits section.

For best results with video files, use the latest version or a recent snapshot of the model.

Video file

The visual understanding model performs content analysis by extracting a sequence of video frames. You can control the frame extraction policy with the following two parameters:

fps: Controls the frame extraction frequency. One frame is extracted every $\frac{1}{\text{fps}}$ seconds. The valid range is [0.1, 10], and the default value is 2.0. For high-speed motion scenes, set a higher fps value to capture more details.

For static or long videos, consider setting a lower fps value to improve processing efficiency.

max_frames: The maximum number of frames to extract from the video. If the number of frames calculated based on the fps value exceeds this limit, the system samples frames evenly to stay within the max_frames limit. This parameter is available only with the DashScope SDK.
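The following sketch approximates how fps and max_frames interact: one frame every 1/fps seconds, followed by an even subsample when that count exceeds max_frames. The function sample_frame_times is hypothetical, and the exact rounding and sampling rules it uses are assumptions made for illustration, not documented service behavior.

```python
import math
from typing import List, Optional


def sample_frame_times(duration_s: float, fps: float = 2.0,
                       max_frames: Optional[int] = None) -> List[float]:
    """Approximate the timestamps (seconds) at which frames would be taken.

    Assumption: one frame every 1/fps seconds starting at 0, then, if that
    exceeds max_frames, an evenly spaced subsample of max_frames frames.
    """
    if not 0.1 <= fps <= 10:
        raise ValueError("fps must be in [0.1, 10]")
    interval = 1.0 / fps
    count = max(1, math.floor(duration_s * fps))
    times = [i * interval for i in range(count)]
    if max_frames is not None and count > max_frames:
        # Keep max_frames entries spread evenly across the full list.
        step = count / max_frames
        times = [times[int(i * step)] for i in range(max_frames)]
    return times
```

For example, a 10-second clip at the default fps of 2 yields 20 timestamps in this sketch; with max_frames=5 it keeps those at 0, 2, 4, 6, and 8 seconds.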

OpenAI compatible

When you provide a video file directly to the visual understanding model using the OpenAI SDK or an HTTP request, set the "type" parameter in the user message to "video_url".
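The OpenAI-compatible and DashScope examples in this section describe the same inputs with different shapes. As a minimal sketch, the hypothetical helper to_dashscope_item maps one OpenAI-compatible content item to its DashScope form. It covers only the shapes shown on this page (text, image_url, video_url for a video file, and video for an image list) and carries over an optional fps value.

```python
from typing import Any, Dict


def to_dashscope_item(item: Dict[str, Any]) -> Dict[str, Any]:
    """Convert one OpenAI-compatible content item into the DashScope shape.

    Hypothetical helper; handles only the content types used in this section.
    """
    kind = item.get("type")
    if kind == "text":
        out: Dict[str, Any] = {"text": item["text"]}
    elif kind == "image_url":
        out = {"image": item["image_url"]["url"]}
    elif kind == "video_url":
        # Video file: DashScope takes the URL string directly under "video".
        out = {"video": item["video_url"]["url"]}
    elif kind == "video":
        # Image list: DashScope takes the list of frame URLs under "video".
        out = {"video": list(item["video"])}
    else:
        raise ValueError(f"unsupported content type: {kind!r}")
    if "fps" in item:
        out["fps"] = item["fps"]
    return out
```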

Python

import os

from openai import OpenAI

client = OpenAI(

# An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# If you have not configured an environment variable, replace the following line with your Model Studio API key: api_key="sk-xxx",

api_key=os.getenv("DASHSCOPE_API_KEY"),

# Endpoints vary by region. Modify the endpoint based on your region.

base_url="https://dashscope-intl.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.5-plus",

messages=[

{

"role": "user",

"content": [

# When you provide a video file directly, set the type to video_url.

{

"type": "video_url",

"video_url": {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4"

},

"fps": 2

},

{

"type": "text",

"text": "What is the content of this video?"

}

]

}

]

)

print(completion.choices[0].message.content)

Node.js

import OpenAI from "openai";

const openai = new OpenAI(

{

// An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

// If you have not configured an environment variable, replace the following line with your Model Studio API key: apiKey: "sk-xxx"

apiKey: process.env.DASHSCOPE_API_KEY,

// Endpoints vary by region. Modify the endpoint based on your region.

baseURL: "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"

}

);

async function main() {

const response = await openai.chat.completions.create({

model: "qwen3.5-plus",

messages: [

{

role: "user",

content: [

// When you provide a video file directly, set the type to video_url.

{

type: "video_url",

video_url: {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4"

},

"fps": 2

},

{

type: "text",

"text": "What is the content of this video?"

}

]

}

]

});

console.log(response.choices[0].message.content);

}

main();

curl

# ======= Important =======

# An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# Endpoints vary by region. Modify the endpoint based on your region.

# === Remove this comment before execution ===

curl -X POST https://dashscope-intl.aliyuncs.com/compatible-mode/v1/chat/completions \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.5-plus",

"messages": [

{

"role": "user",

"content": [

{

"type": "video_url",

"video_url": {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4"

},

"fps":2

},

{

"type": "text",

"text": "What is the content of this video?"

}

]

}

]

}'

DashScope

Python

import dashscope

import os

# Endpoints vary by region. Modify the endpoint based on your region.

dashscope.base_http_api_url = 'https://dashscope-intl.aliyuncs.com/api/v1'

messages = [

{"role": "user",

"content": [

# The fps parameter controls the frame rate. One frame is extracted every 1/fps seconds. For more information, see: https://www.alibabacloud.com/help/en/model-studio/use-qwen-by-calling-api?#2ed5ee7377fum

{"video": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4","fps":2},

{"text": "What is the content of this video?"}

]

}

]

response = dashscope.MultiModalConversation.call(

# An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# If you have not configured an environment variable, replace the following line with your Model Studio API key: api_key ="sk-xxx"

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen3.5-plus',

messages=messages

)

print(response.output.choices[0].message.content[0]["text"])

Java

import java.util.Arrays;

import java.util.Collections;

import java.util.HashMap;

import java.util.Map;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

static {

// Endpoints vary by region. Modify the endpoint based on your region.

Constants.baseHttpApiUrl="https://dashscope-intl.aliyuncs.com/api/v1";

}

public static void simpleMultiModalConversationCall()

throws ApiException, NoApiKeyException, UploadFileException {

MultiModalConversation conv = new MultiModalConversation();

// The fps parameter controls the frame rate. One frame is extracted every 1/fps seconds. For more information, see: https://www.alibabacloud.com/help/en/model-studio/use-qwen-by-calling-api?#2ed5ee7377fum

Map<String, Object> params = new HashMap<>();

params.put("video", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4");

params.put("fps", 2);

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(

params,

Collections.singletonMap("text", "What is the content of this video?"))).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

// If you have not configured an environment variable, replace the following line with your Model Studio API key: .apiKey("sk-xxx")

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.5-plus")

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

simpleMultiModalConversationCall();

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}

curl

# ======= Important =======

# An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# Endpoints vary by region. Modify the endpoint based on your region.

# === Remove this comment before execution ===

curl -X POST https://dashscope-intl.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.5-plus",

"input":{

"messages":[

{"role": "user","content": [{"video": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4","fps":2},

{"text": "What is the content of this video?"}]}]}

}'

Image list

When a video is provided as an image list of pre-extracted frames, the fps parameter tells the model the time interval between frames, which helps it understand the sequence, duration, and dynamic changes of an event more accurately. Use fps to specify the rate at which the frames were extracted from the original video, that is, one frame every $\frac{1}{\text{fps}}$ seconds.
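To make the fps relationship concrete, this sketch computes the point in the original video that each frame in an image list represents, assuming frame i was taken at i/fps seconds. The function frame_timestamps is hypothetical and only illustrates the arithmetic.

```python
from typing import List


def frame_timestamps(num_frames: int, fps: float) -> List[float]:
    """Timestamps (seconds) implied for frames extracted every 1/fps seconds.

    Assumption for illustration: frame i shows the original video at i / fps.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    return [i / fps for i in range(num_frames)]


# Four football frames sent with "fps": 2 span roughly the first 2 seconds of the clip.
print(frame_timestamps(4, 2))  # [0.0, 0.5, 1.0, 1.5]
```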

OpenAI compatible

When you provide a video as an image list using the OpenAI SDK or an HTTP request, set the "type" parameter in the user message to "video".
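Because a video file uses "type": "video_url" while an image list uses "type": "video", a small dispatcher can pick the right OpenAI-compatible shape from the input. This is a minimal sketch with a hypothetical name, openai_video_item; it follows the request bodies shown in this section and does not enforce the Video limits.

```python
from typing import Any, Dict, List, Union


def openai_video_item(source: Union[str, List[str]], fps: float = 2.0) -> Dict[str, Any]:
    """Build an OpenAI-compatible content item for a video.

    A single URL string is treated as a video file ("type": "video_url");
    a list of frame URLs is treated as an image list ("type": "video").
    """
    if isinstance(source, str):
        return {"type": "video_url", "video_url": {"url": source}, "fps": fps}
    if not source:
        raise ValueError("an image list needs at least one frame URL")
    return {"type": "video", "video": list(source), "fps": fps}
```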

Python

import os

from openai import OpenAI

client = OpenAI(

# An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# If you have not configured an environment variable, replace the following line with your Model Studio API key: api_key="sk-xxx",

api_key=os.getenv("DASHSCOPE_API_KEY"),

# Endpoints vary by region. Modify the endpoint based on your region.

base_url="https://dashscope-intl.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.5-plus", # This example uses the qwen3.5-plus model. You can replace the model name as needed. For a list of available models, see the Model List: https://www.alibabacloud.com/help/en/model-studio/models

messages=[{"role": "user","content": [

# When you provide an image list, the 'type' parameter in the user message is 'video'.

{"type": "video","video": [

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"],

"fps":2},

{"type": "text","text": "Describe the process shown in this video."},

]}]

)

print(completion.choices[0].message.content)

Node.js

// Make sure you have specified "type": "module" in package.json.

import OpenAI from "openai";

const openai = new OpenAI({

// An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

// If you have not configured an environment variable, replace the following line with your Model Studio API key: apiKey: "sk-xxx",

apiKey: process.env.DASHSCOPE_API_KEY,

// Endpoints vary by region. Modify the endpoint based on your region.

baseURL: "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"

});

async function main() {

const response = await openai.chat.completions.create({

model: "qwen3.5-plus", // This example uses the qwen3.5-plus model. You can replace the model name as needed. For a list of available models, see the Model List: https://www.alibabacloud.com/help/en/model-studio/models

messages: [{

role: "user",

content: [

{

// When you provide an image list, the 'type' parameter in the user message is 'video'.

type: "video",

video: [

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"],

"fps": 2

},

{

type: "text",

text: "Describe the process shown in this video."

}

]

}]

});

console.log(response.choices[0].message.content);

}

main();

curl

# ======= Important =======

# An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# Endpoints vary by region. Modify the endpoint based on your region.

# === Remove this comment before execution ===

curl -X POST https://dashscope-intl.aliyuncs.com/compatible-mode/v1/chat/completions \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.5-plus",

"messages": [{"role": "user","content": [{"type": "video","video": [

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"],

"fps":2},

{"type": "text","text": "Describe the process shown in this video."}]}]

}'

DashScope

Python

import os

import dashscope

# Endpoints vary by region. Modify the endpoint based on your region.

dashscope.base_http_api_url = 'https://dashscope-intl.aliyuncs.com/api/v1'

messages = [{"role": "user",

"content": [

# When you provide an image list, the fps parameter is available for the Qwen3.5, Qwen3-VL, and Qwen2.5-VL models.

{"video":["https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"],

"fps":2},

{"text": "Describe the process shown in this video."}]}]

response = dashscope.MultiModalConversation.call(

# An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# If you have not configured an environment variable, replace the following line with your Model Studio API key: api_key="sk-xxx",

api_key=os.getenv("DASHSCOPE_API_KEY"),

model='qwen3.5-plus', # This example uses the qwen3.5-plus model. You can replace the model name as needed. For a list of available models, see the Model List: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

messages=messages

)

print(response.output.choices[0].message.content[0]["text"])

Java

// DashScope SDK version 2.21.10 or later is required.

import java.util.Arrays;

import java.util.Collections;

import java.util.Map;

import java.util.HashMap;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

static {

// Endpoints vary by region. Modify the endpoint based on your region.

Constants.baseHttpApiUrl="https://dashscope-intl.aliyuncs.com/api/v1";

}

private static final String MODEL_NAME = "qwen3.5-plus"; // This example uses the qwen3.5-plus model. You can replace the model name as needed. For a list of available models, see the Model List: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

public static void videoImageListSample() throws ApiException, NoApiKeyException, UploadFileException {

MultiModalConversation conv = new MultiModalConversation();

// When you provide an image list, the fps parameter is available for the Qwen3.5, Qwen3-VL, and Qwen2.5-VL models.

Map<String, Object> params = new HashMap<>();

params.put("video", Arrays.asList("https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"));

params.put("fps", 2);

MultiModalMessage userMessage = MultiModalMessage.builder()

.role(Role.USER.getValue())

.content(Arrays.asList(

params,

Collections.singletonMap("text", "Describe the process shown in this video.")))

.build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

// If you have not configured an environment variable, replace the following line with your Model Studio API key: .apiKey("sk-xxx")

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model(MODEL_NAME)

.messages(Arrays.asList(userMessage)).build();

MultiModalConversationResult result = conv.call(param);

System.out.print(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

videoImageListSample();

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}

curl

# ======= Important =======

# An API key is required for each region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# Endpoints vary by region. Modify the endpoint based on your region.

# === Remove this comment before execution ===

curl -X POST https://dashscope-intl.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.5-plus",

"input": {

"messages": [

{

"role": "user",

"content": [

{

"video": [

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"

],

"fps":2

},

{

"text": "Describe the process shown in this video."

}

]

}

]

}

}'

Pass a local file (base64 or file path)

Visual understanding models offer two methods for uploading local files: Base64 encoding and file path upload. Choose a method based on the file size and SDK type. For specific recommendations, see How to choose a file upload method. Both methods must meet the requirements in Image limits.

Base64 encoding

Convert the file to a Base64-encoded string and pass it to the model. This method is compatible with the OpenAI and DashScope SDKs, as well as HTTP requests.
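A minimal sketch of the Base64 route: read the file, encode it, and wrap it in a data URI whose MIME type matches the image format. The helper name to_data_uri is hypothetical. Guessing the MIME type from the file extension with Python's mimetypes module is an assumption that only holds when the extension reflects the real format.

```python
import base64
import mimetypes


def to_data_uri(path: str) -> str:
    """Read a local image and return a data URI such as data:image/png;base64,...

    The MIME type is guessed from the file extension; it must match the
    actual image format for the request to be interpreted correctly.
    """
    mime, _ = mimetypes.guess_type(path)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"cannot determine an image MIME type for {path!r}")
    with open(path, "rb") as f:
        encoded = base64.b64encode(f.read()).decode("utf-8")
    return f"data:{mime};base64,{encoded}"
```

The returned string can be used as the "url" value of an image_url content item in OpenAI-compatible requests.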

File path

Pass the local file path directly to the model. This method is supported only by the DashScope Python and Java SDKs. It is not compatible with DashScope HTTP or OpenAI-compatible requests.
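As a sketch for Linux and macOS only, the file:// value used by the DashScope Python example can be built from a relative or absolute path. The helper to_file_uri is hypothetical and simply mirrors the f"file://{local_path}" pattern after making the path absolute. Windows paths may need a different form, so check the file path guidance for your operating system.

```python
import os


def to_file_uri(local_path: str) -> str:
    """Return file://<absolute path>, mirroring the DashScope Python example.

    Assumes a POSIX-style path; Windows drive-letter paths are not handled here.
    """
    return f"file://{os.path.abspath(local_path)}"
```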

Use the following table to specify the file path based on your programming language and operating system.

Image

File path

Python

import os

import dashscope

# Endpoints vary by Region. Modify the endpoint for your Region.

dashscope.base_http_api_url = 'https://dashscope-intl.aliyuncs.com/api/v1'

# Replace xxx/eagle.png with the absolute path of your local image.

local_path = "xxx/eagle.png"

image_path = f"file://{local_path}"

messages = [

{'role':'user',

'content': [{'image': image_path},

{'text': 'What is depicted in the image?'}]}]

response = dashscope.MultiModalConversation.call(

# If you have not configured an environment variable, you can pass your API key directly by replacing

# the following line with: api_key="sk-xxx"

# An API key is required for each Region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen-vl-plus', # This example uses the qwen-vl-plus model. You can replace it as needed. For a list of available models, see: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

messages=messages)

print(response.output.choices[0].message.content[0]["text"])

Java

import java.util.Arrays;

import java.util.Collections;

import java.util.HashMap;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

static {

// Endpoints vary by Region. Modify the endpoint for your Region.

Constants.baseHttpApiUrl="https://dashscope-intl.aliyuncs.com/api/v1";

}

public static void callWithLocalFile(String localPath)

throws ApiException, NoApiKeyException, UploadFileException {

String filePath = "file://"+localPath;

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(new HashMap<String, Object>(){{put("image", filePath);}},

new HashMap<String, Object>(){{put("text", "What is depicted in the image?");}})).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// If you have not configured an environment variable, you can pass your API key directly by replacing

// the following line with: .apiKey("sk-xxx")

// An API key is required for each Region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen-vl-plus") // This example uses the qwen-vl-plus model. You can replace it as needed. For a list of available models, see: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));}

public static void main(String[] args) {

try {

// Replace xxx/eagle.png with the absolute path of your local image.

callWithLocalFile("xxx/eagle.png");

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}

Base64 encoding
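The examples in this section embed the image in the request as a data URI of the form data:<MIME type>;base64,<encoded bytes>, and the MIME type must match the actual image format. The following is a minimal sketch, not part of either SDK, that assumes you only need PNG, JPEG, or WEBP. The hypothetical helpers sniff_image_mime and to_data_uri choose the MIME type from the file's leading signature bytes instead of trusting the file extension.

```python
import base64


def sniff_image_mime(data: bytes) -> str:
    """Hypothetical helper: map an image's leading signature bytes to a MIME type."""
    if data.startswith(b"\x89PNG\r\n\x1a\n"):
        return "image/png"
    if data.startswith(b"\xff\xd8\xff"):
        return "image/jpeg"
    # WEBP files are RIFF containers: b"RIFF", a 4-byte size, then b"WEBP".
    if data[:4] == b"RIFF" and data[8:12] == b"WEBP":
        return "image/webp"
    raise ValueError("Unrecognized image format; expected PNG, JPEG, or WEBP")


def to_data_uri(image_path: str) -> str:
    """Hypothetical helper: read a local image and return a data URI whose MIME type matches its content."""
    with open(image_path, "rb") as image_file:
        data = image_file.read()
    mime = sniff_image_mime(data)
    encoded = base64.b64encode(data).decode("utf-8")
    return f"data:{mime};base64,{encoded}"
```

For example, you could pass to_data_uri("xxx/eagle.png") as the "url" value of an image_url item in the OpenAI compatible examples, or as the "image" value in the DashScope examples.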

OpenAI compatible

Python

from openai import OpenAI

import os

import base64

# This function converts a local file to a Base64-encoded string.

def encode_image(image_path):

    with open(image_path, "rb") as image_file:

        return base64.b64encode(image_file.read()).decode("utf-8")

# Replace xxx/eagle.png with the absolute path of your local image.

base64_image = encode_image("xxx/eagle.png")

client = OpenAI(

# If you have not configured an environment variable, you can pass your API key directly by replacing

# the following line with: api_key="sk-xxx"

# An API key is required for each Region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

api_key=os.getenv('DASHSCOPE_API_KEY'),

# Endpoints vary by Region. Modify the endpoint for your Region.

base_url="https://dashscope-intl.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen-vl-plus", # This example uses the qwen-vl-plus model. You can replace it as needed. For a list of available models, see: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

messages=[

{

"role": "user",

"content": [

{

"type": "image_url",

# Note: When passing Base64-encoded data, ensure the data URI format (for example, data:image/png;base64)

# matches the actual image format.

# PNG Image: f"data:image/png;base64,{base64_image}"

# JPEG Image: f"data:image/jpeg;base64,{base64_image}"

# WEBP Image: f"data:image/webp;base64,{base64_image}"

"image_url": {"url": f"data:image/png;base64,{base64_image}"},

},

{"type": "text", "text": "What is depicted in the image?"},

],

}

],

)

print(completion.choices[0].message.content)

Node.js

import OpenAI from "openai";

import { readFileSync } from 'fs';

const openai = new OpenAI(

{

// If you have not configured an environment variable, you can pass your API key directly by replacing

// the following line with: apiKey: "sk-xxx"

// An API key is required for each Region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

apiKey: process.env.DASHSCOPE_API_KEY,

// Endpoints vary by Region. Modify the endpoint for your Region.

baseURL: "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"

}

);

const encodeImage = (imagePath) => {

const imageFile = readFileSync(imagePath);

return imageFile.toString('base64');

};

// Replace xxx/eagle.png with the absolute path of your local image.

const base64Image = encodeImage("xxx/eagle.png");

async function main() {

const completion = await openai.chat.completions.create({

model: "qwen-vl-plus", // This example uses the qwen-vl-plus model. You can replace it as needed. For a list of available models, see: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

messages: [

{"role": "user",

"content": [{"type": "image_url",

// Note: When passing Base64-encoded data, ensure the data URI format (for example, data:image/png;base64)

// matches the actual image format.

// PNG Image: `data:image/png;base64,${base64Image}`

// JPEG Image: `data:image/jpeg;base64,${base64Image}`

// WEBP Image: `data:image/webp;base64,${base64Image}`

"image_url": {"url": `data:image/png;base64,${base64Image}`},},

{"type": "text", "text": "What is depicted in the image?"}]}]

});

console.log(completion.choices[0].message.content);

}

main();

curl

For an example of converting a file to a Base64-encoded string, see the Python or Node.js examples above.
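Pasting a very long Base64 string directly into a shell command is error-prone. A hedged alternative, sketched below on the assumption that Python 3 is available, is to generate the complete request body as a JSON file. The names build_request_body, write_request_file, and request.json are illustrative and are not part of the API. The body has the same structure as the payload in the curl command that follows.

```python
import base64
import json


def build_request_body(image_path, mime_type, prompt, model="qwen-vl-plus"):
    """Hypothetical helper: build the curl example's JSON body with the complete Base64 string."""
    with open(image_path, "rb") as image_file:
        encoded = base64.b64encode(image_file.read()).decode("utf-8")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    # The MIME type must match the actual image format, for example image/png or image/jpeg.
                    {"type": "image_url", "image_url": {"url": f"data:{mime_type};base64,{encoded}"}},
                    {"type": "text", "text": prompt},
                ],
            }
        ],
    }


def write_request_file(body, out_path="request.json"):
    """Hypothetical helper: serialize the body to a file that curl can send as the request payload."""
    with open(out_path, "w", encoding="utf-8") as out:
        json.dump(body, out)
    return out_path
```

For example, call write_request_file(build_request_body("xxx/eagle.png", "image/png", "What is depicted in the image?")), then replace the inline --data '{...}' argument of the curl command with --data-binary @request.json. Unlike --data, the --data-binary option sends the file contents without stripping newlines.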

For display purposes, the Base64-encoded string "data:image/jpg;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA..." in the code is truncated. You must pass the complete encoded string in your request.

# ======= Important =======

# An API key is required for each Region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

# Endpoints vary by Region. Modify the endpoint for your Region.

# === Remove this comment before execution ===

curl --location 'https://dashscope-intl.aliyuncs.com/compatible-mode/v1/chat/completions' \

--header "Authorization: Bearer $DASHSCOPE_API_KEY" \

--header 'Content-Type: application/json' \

--data '{

"model": "qwen-vl-plus",

"messages": [

{

"role": "user",

"content": [

{"type": "image_url", "image_url": {"url": "data:image/jpg;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA"}},

{"type": "text", "text": "What is depicted in the image?"}

]

}]

}'

DashScope

Python

import base64

import os

import dashscope

# Endpoints vary by Region. Modify the endpoint for your Region.

dashscope.base_http_api_url = 'https://dashscope-intl.aliyuncs.com/api/v1'

# This function converts a local file to a Base64-encoded string.

def encode_image(image_path):

    with open(image_path, "rb") as image_file:

        return base64.b64encode(image_file.read()).decode("utf-8")

# Replace xxx/eagle.png with the absolute path of your local image.

base64_image = encode_image("xxx/eagle.png")

messages = [

{

"role": "user",

"content": [

# Note: When passing Base64-encoded data, ensure the data URI format (for example, data:image/png;base64)

# matches the actual image format.

# PNG Image: f"data:image/png;base64,{base64_image}"

# JPEG Image: f"data:image/jpeg;base64,{base64_image}"

# WEBP Image: f"data:image/webp;base64,{base64_image}"

{"image": f"data:image/png;base64,{base64_image}"},

{"text": "What is depicted in the image?"},

],

},

]

response = dashscope.MultiModalConversation.call(

# If you have not configured an environment variable, you can pass your API key directly by replacing

# the following line with: api_key="sk-xxx"

# An API key is required for each Region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

api_key=os.getenv("DASHSCOPE_API_KEY"),

model="qwen-vl-plus", # This example uses the qwen-vl-plus model. You can replace it as needed. For a list of available models, see: https://www.alibabacloud.com/help/en/model-studio/getting-started/models

messages=messages,

)

print(response.output.choices[0].message.content[0]["text"])

Java

import java.io.IOException;

import java.util.Arrays;

import java.util.Collections;

import java.util.HashMap;

import java.util.Base64;

import java.nio.file.Files;

import java.nio.file.Path;

import java.nio.file.Paths;

import com.alibaba.dashscope.aigc.multimodalconversation.*;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

static {

// Endpoints vary by Region. Modify the endpoint for your Region.

Constants.baseHttpApiUrl="https://dashscope-intl.aliyuncs.com/api/v1";

}

private static String encodeImageToBase64(String imagePath) throws IOException {

Path path = Paths.get(imagePath);

byte[] imageBytes = Files.readAllBytes(path);

return Base64.getEncoder().encodeToString(imageBytes);

}

public static void callWithLocalFile(String localPath) throws ApiException, NoApiKeyException, UploadFileException, IOException {

String base64Image = encodeImageToBase64(localPath);

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(

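// Ensure the data URI prefix matches the actual image format, for example data:image/jpeg;base64, for JPEG or data:image/webp;base64, for WEBP.

new HashMap<String, Object>() {{ put("image", "data:image/png;base64," + base64Image); }},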

new HashMap<String, Object>() {{ put("text", "What is depicted in the image?"); }}

)).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// If you have not configured an environment variable, you can pass your API key directly by replacing

// the following line with: .apiKey("sk-xxx")

// An API key is required for each Region. To get an API key, see: https://www.alibabacloud.com/help/en/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen-vl-plus")

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

// Replace xxx/eagle.png with the absolute path of your local image.