This topic describes the rate limiting mechanism of Model Studio APIs and provides traffic control strategies for different scenarios to improve throughput and ensure service availability.

Model Studio APIs limit the number of requests, token usage, and growth rate over time. This is called rate limiting. Large language model (LLM) services have long latency and use two-dimensional rate limiting, which limits both request counts and token volumes. Traditional "retry on error" strategies are ineffective for these services, so you need to implement specific traffic control measures.

This topic introduces three types of solutions, ordered from the lowest to the highest implementation cost:

Platform configuration solutions (no code changes): Increase quota limits, PTU, and Batch API.

Client-side traffic control strategies (client code changes): Four strategies with increasing engineering complexity, from basic retry to adaptive congestion control.

Architectural fallback solutions (system architecture changes): Model fallback and peak-load shifting using message queues (MQ).

If you are currently troubleshooting a 429 error, go to Error diagnosis and strategy recommendations to identify the cause.

Platform rate limiting mechanism

Rate limiting is calculated independently for each model at the root account level. After rate limiting is triggered, the service typically resumes within one minute. For more information about the specific rate limiting conditions and current usage of each model, see Model Rate Limiting Conditions and Model Usage Monitoring. The Model Studio API includes the following three types of rate limiting rules:

Minute-level quota limits (RPM / TPM): The maximum number of requests per minute (RPM) and the maximum token usage per minute (TPM).

Instantaneous frequency limits (RPS / TPS): The maximum number of requests per second (RPS) and the maximum token usage per second (TPS). A high density of API calls or token consumption within a second may trigger rate limiting.

Growth rate limits (Traffic Burst): A sudden surge in request volume or token usage triggers rate limiting. The threshold is dynamically adjusted based on the service status. You can avoid triggering this limit by gradually increasing the request volume.

Based on these rate limiting mechanisms, the following sections describe solutions at the platform configuration, client-side traffic control, and architectural fallback levels.

Error diagnosis and strategy recommendations

The same error code can be triggered by different rate limiting dimensions. In addition, server saturation under high concurrency can also lead to slower responses or timeouts. You can mitigate this issue using the adaptive congestion control strategy described later in this topic.

Error code (DashScope / OpenAI) | Triggering dimension | Feature Diagnosis | Recommended strategy |

Throttling.RateQuota / limit_requests | Request rate exceeded | Intermittent errors. Success rate decreases over time. | Token bucket: Control the request quota per unit of time. |

Request rate exceeded | Concentrated errors at startup or during concurrency spikes. | Concurrency semaphore or smoothing rate limiter: Increase the interval between requests. | |

Throttling.AllocationQuota / insufficient_quota | Token usage exceeded | Intermittent errors when processing long texts. | Dual token bucket: Limit both RPM and TPM quotas simultaneously. |

Token usage exceeded | Instantaneous token consumption is too high during concurrent processing of long texts. | ||

Throttling.BurstRate / limit_burst_rate | Traffic growth rate exceeded | Sudden large volume of requests after startup or recovery from an idle state. | Use a token bucket with a low initial value, such as |

Platform configuration solutions

The following solutions can help you mitigate or eliminate rate limiting issues through platform-side configurations or resource adjustments.

Increase quota limits

If the default quota is insufficient, you can directly increase the temporary rate limit quota for a model in the Model Studio console. The change takes effect immediately. This feature is currently supported in the China (Beijing) and Singapore regions.

Scenarios: The default RPM/TPM quota is insufficient due to business growth, or a temporary throughput increase is needed for short-term events. For more information, see Rate limits.

Increasing the quota is simple. Evaluate this option before trying client-side traffic control strategies.

Provisioned Throughput Unit (PTU)

The PTU service provides dedicated, reserved computing power. It is the preferred solution for meeting real-time, high-throughput requirements and avoiding contention for computing power in the public resource pool.

This solution is suitable for scenarios where your business has deterministic throughput requirements, such as a Service-Level Agreement (SLA) commitment, or where you want to achieve stable, high throughput without complex client-side traffic control development.

PTUs are reserved resources and are billed continuously, even when not fully utilized. Evaluate the required specifications based on your actual peak business load to avoid resource waste.

Asynchronous batch processing (Batch API)

For tasks that do not have strict real-time requirements, such as data cleaning and batch analytics, you can use the Batch API to submit them for batch processing. These tasks are executed during off-peak hours, provide results asynchronously, and are not subject to real-time online request frequency or traffic limits.

This solution is suitable for offline tasks that can tolerate a result return time of several hours to days, such as data annotation, log analysis, and batch summary generation. The cost of the Batch API is typically lower than that of real-time API calls.

The result return time for the Batch API is not guaranteed. It is not suitable for online services that require immediate responses. After submitting a task, you must retrieve the results through polling or a callback.

Client-side traffic control strategies

If platform configuration solutions cannot meet your needs, you must introduce traffic control mechanisms on the client side. The core principle is to distribute requests as evenly as possible within a time window to avoid burst traffic that triggers rate limiting. When a system starts or after a long idle period, you should gradually increase the concurrency level instead of instantly using the maximum level.

The following four strategies are listed in order of increasing engineering complexity. Each strategy includes the capabilities of the previous one and enhances them:

Basic retry provides only passive defense.

Request rate limiting adds active queuing.

Traffic shaping further introduces token-level control and smooth sending.

Adaptive congestion control dynamically adjusts the sending rate based on real-time feedback.

Choose the strategy with the lowest implementation cost that meets your business needs.

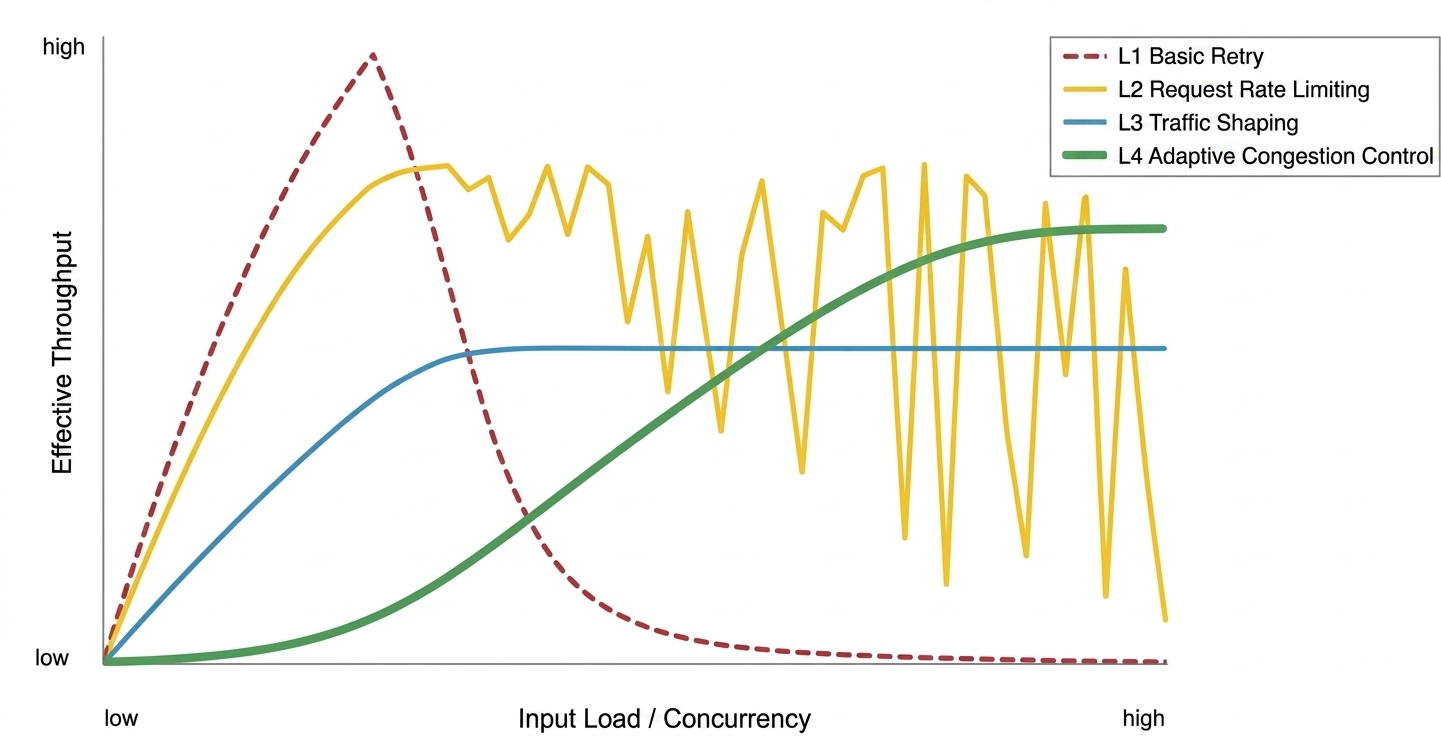

Throughput performance comparison of each strategy

The following list compares the effective throughput performance of the four client-side traffic control strategies under different loads:

Basic retry strategy: Effective under low load. Prone to congestive collapse under high concurrency, causing a sharp drop in throughput.

Request rate limiting strategy: Strong protection against collapse. However, under mixed workloads with long texts, throughput shows sawtooth-like fluctuations due to the lack of token control.

Traffic shaping strategy: High stability. Achieves smooth output by sacrificing some peak throughput.

Adaptive congestion control strategy: Can dynamically converge to a stable, high throughput point under high load, but has cold-start probing overhead.

Basic retry strategy

This strategy is suitable for non-high-concurrency scenarios such as personal testing, local scripts, and low-frequency background tasks. It does not limit the sending rate by default. It only triggers an exponential backoff retry with random jitter upon receiving a 429 or 5xx error.

This strategy has no proactive traffic control. Under multi-threaded concurrency, it can easily trigger rate limiting and cause many requests to back up and fail.

The preceding code uses exponential backoff instead of a fixed-interval retry. Fixed-interval retry, such as retrying all failed requests after 3 seconds, causes all requests to be re-sent at the same time. This can easily trigger rate limiting again and lead to persistent congestion. Exponential backoff with random jitter "spreads out" the retries:

Wait time doubles progressively: For example,

1s, 2s, 4s.... This avoids repeated requests in a short period.Add random jitter: Introducing a random value, such as

2s +/- 0.5s, spreads out the retry traffic. This prevents many requests from retrying at the same time, which would create a secondary flood (thundering herd effect).

The system can then recover in a distributed manner, rather than becoming stuck in a vicious cycle of "fail—retry in unison—fail again".

Request rate limiting strategy

Relying solely on passive retries is insufficient for real business traffic. Frequent retries significantly increase response latency. The request rate limiting strategy introduces active traffic control. It performs self-checks and adjustments before sending requests. This organizes a large, unordered influx of requests into a smooth queue that complies with the platform's RPM limit. After rate limiting is triggered, it usually takes some time to recover. Actively smoothing the request rhythm introduces a small, controllable queuing delay. However, this is far less costly than the time spent in a passive "error—wait—retry" loop. In short, you incur a small, predictable cost to avoid a large, unpredictable delay.

This strategy is suitable for online services that are sensitive to time to first token, such as chatbots and other lightweight, request-response interactions.

This strategy implements active queuing on the client side with two levels of control:

RPM token bucket: Limits the total number of requests per minute. The bucket capacity is the RPM quota, and tokens are refilled at a constant rate. This method supports borrowing. If tokens are insufficient, a request can borrow from future quotas but must strictly follow First-In, First-Out (FIFO) order.

Concurrency semaphore: Limits the number of concurrent requests. An asynchronous semaphore controls in-flight requests, preventing instantaneous high concurrency from triggering RPS limits and avoiding client overload.

These two levels of control must be executed in a strict order: first acquire an RPM token, then acquire a concurrency semaphore. Concurrency slots are scarce resources and should only be allocated to requests that have met the execution conditions. If the order is reversed (occupy a slot first, then wait for a token), it can easily cause head-of-line blocking under high load. A request occupies a slot but has no token available. It holds the slot for a long time without executing. All slots become occupied, but no requests are actually sent. The core principle is: do not perform potentially long-running waits while holding a scarce resource.

The following code initializes the token bucket to a full state (initial_tokens=rpm_limit). This is suitable for lightweight online services to process requests immediately at startup. If starting with a full bucket triggers a rate limit error, you can lower the initial number of tokens. For example, set it to initial_tokens=0, which is an "empty bucket start". This allows the system to enter its working state at a more gradual pace.

This strategy does not track token usage. It can still trigger rate limiting in long-text tasks by exhausting the TPM quota.

Traffic shaping strategy

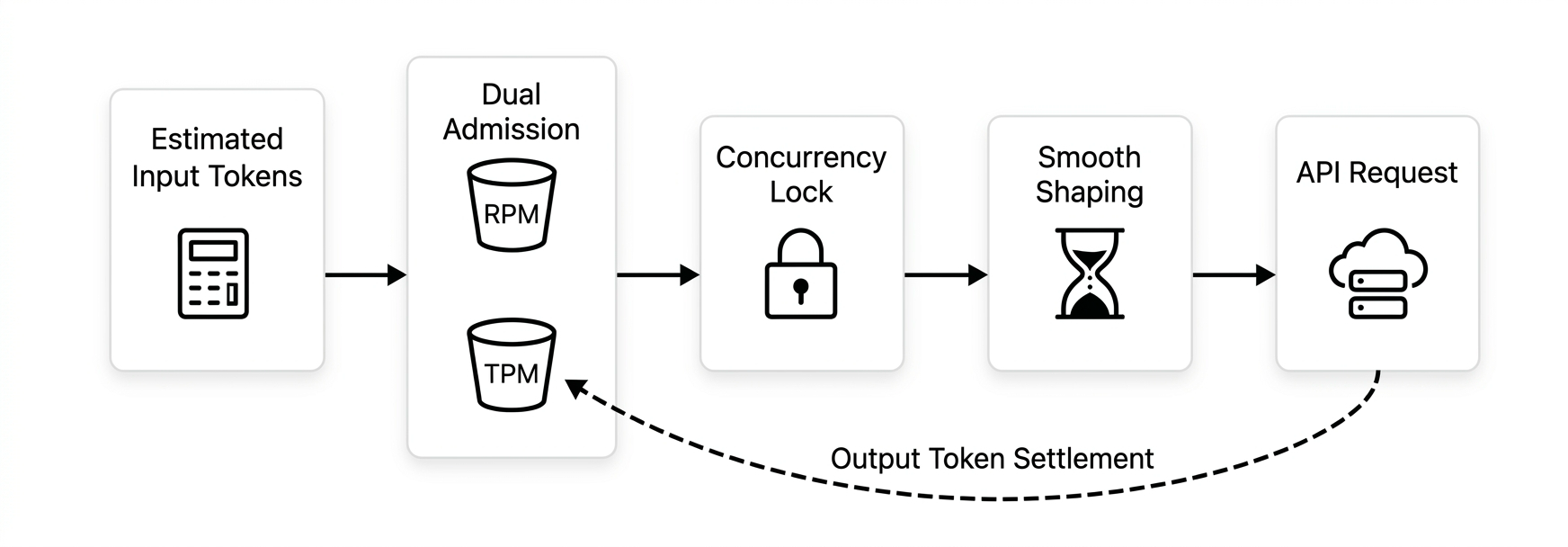

In batch processing scenarios that require high, stable throughput, such as real-time RAG ingestion and bulk analysis of long documents, the request rate limiting strategy has a significant TPM blind spot. To address this, the traffic shaping strategy provides dual resource awareness (RPM & TPM). It also introduces a shaping mechanism on the sending end to perform peak-load shifting on bursty traffic, converting it into a smooth flow.

This strategy enhances the original request rate limiting strategy with the following capabilities:

Dual resource control (RPM & TPM): Maintains both RPM and TPM token buckets. All requests must pass quota checks for both dimensions before being sent.

Pre-deduction for input, post-settlement for output: The length of the model's output is unknown before the request. The TPM token bucket only pre-deducts input tokens when sending. After the request is completed, the actual output tokens are settled. Even if the quota is insufficient at settlement (negative tokens), subsequent requests will wait for the token count to become positive, naturally smoothing the flow rate.

Continuous warm-up: During a cold start, the token issuance rate increases linearly over time, eliminating the risk of initial bursts.

Smoothing rate limiter (Pacing): Smooths the sending rate by enforcing a minimum interval between requests (pacing), reducing the risk of triggering rate limits.

Alternative solution reference: If your business is not sensitive to minor queuing delays at startup, you can reuse the standard token bucket logic (set initial_tokens=0) to achieve a safe start while reducing client complexity. In addition, the Python token bucket implementation in this topic is for demonstrating the design concept. In a production environment, use mature rate limiting components from your language's ecosystem, such as Guava's SmoothRateLimiter in Java.

In the code example, the smoothing wait is placed inside the concurrency lock to avoid request bursts caused by head-of-line blocking. Multiple requests might compete for the concurrency semaphore simultaneously after their wait ends, causing the smoothed traffic to become congested again at the exit. Although this slightly reduces concurrency efficiency, it ensures precise control over the sending interval.

The complete traffic shaping pipeline is: Estimate input tokens → Dual admission (RPM & TPM) → Concurrency lock → Traffic shaping → Send → Settle output tokens.

This strategy sacrifices some theoretical maximum concurrency due to its conservative smoothing mechanism. It is not suitable for online services that require extremely low latency.

Adaptive congestion control strategy

This strategy is suitable for large-scale, dynamic, mixed-load scenarios such as API gateways, complex proxies, and multi-tenant systems.

Selection tip: This strategy is not a universal solution

The core value of the adaptive congestion control strategy is to handle highly uncertain and volatile business environments. It is not a one-size-fits-all choice:

Performance paradox: If the business load is predictable and relatively stable, such as in quantitative batch processing, directly setting optimal static parameters based on experience usually performs better than dynamic probing that requires "trial and convergence".

Probing overhead: To find the boundary, dynamic algorithms inevitably involve a cold-start ramp-up and exploratory fluctuations. In known scenarios, this "exploration cost" is an unnecessary performance loss.

Maintenance cost: Introducing a closed-loop feedback mechanism significantly increases system complexity and troubleshooting difficulty.

Unless your business has a very large scale, complex load, and significant volatility, choose one of the simpler first three strategies.

The request rate limiting and traffic shaping strategies are classic defensive strategies based on static quotas. They are fully applicable in scenarios with stable and predictable loads. However, in complex gateway-level scenarios, businesses face dynamic changes from two sides. The downstream load is complex and variable, with a mix of high-concurrency short requests and long-running deep inference tasks. The platform's rate limiting thresholds fluctuate dynamically because second-level rate limits and growth rate detection thresholds are adjusted based on service status. Static strategies struggle to balance efficiency and stability.

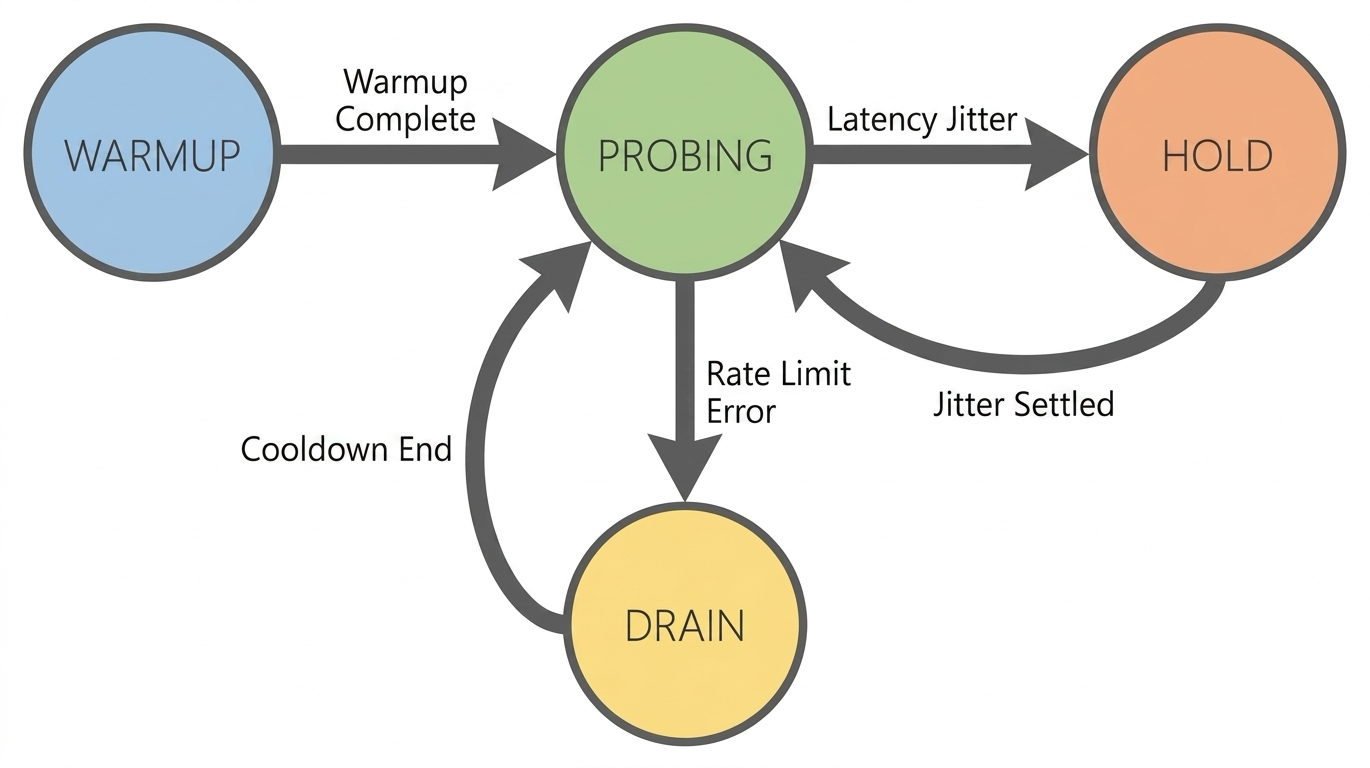

This policy is inspired by BBR (Bottleneck Bandwidth and RTT) and establishes a closed-loop control system based on EBP (Elastic Bandwidth Probing). Using RPM/TPM quotas as a guiding upper limit, this system dynamically calculates the optimal sending rate to maximize throughput based on real-time feedback, such as latency changes and whether rate limiting is active.

Elastic Bandwidth Probing (EBP): This method stores the historical highest successful watermark. It calculates the probing gain by simulating spring tension based on the distance between the current concurrency level and the highest watermark. The farther the distance, the faster the acceleration. The closer the distance, the slower the deceleration. A small linear thrust is added to ensure continuous boundary exploration even in highly saturated intervals.

TPT congestion awareness: The generation time of large models is proportional to their length. High latency in long texts does not necessarily indicate congestion. TPT (Time Per Token), or the processing time per token, is used as a metric to filter out the noise from content length. Congestion is only determined to be computational saturation when TPT significantly deteriorates.

Anti-burst rate governor: Regardless of the target concurrency level calculated by EBP, the rate governor forcibly limits the acceleration of concurrency growth. This ensures that traffic increases smoothly, avoiding step changes that could trigger growth rate limits.

Compared to native BBR, this strategy includes the following key modifications for large models:

Guided probing: Introduces known RPM/TPM quotas as a "guiding upper limit" to avoid frequent collisions caused by blind probing.

Signal source modification (RTT → TPT): Native BBR relies on RTT. However, in large model scenarios, the latency difference caused by content length is much greater than network jitter. TPT is used instead to eliminate interference from content length.

Response mechanism enhancement (ProbeRTT → Hold): In the face of latency fluctuations, it chooses to maintain the current concurrency level rather than proactively backing off and reducing throughput.

Hard rate limit response (Packet Loss → 429 Drain): Once a

429error is triggered, it enters an aggressive Drain state and performs a fast recovery after a cooldown period.

This strategy has the following limitations:

Congestion signal noise (TPT Noise): The current TPT is roughly estimated as "total latency / total tokens". Total latency includes network round-trip time, queuing time, and time to first token. It is susceptible to being inflated by network jitter or long inputs, which can mistakenly trigger the Hold state.

Large request starvation (Starvation Risk): To achieve maximum scheduling performance, this strategy uses a non-strict FIFO wakeup mechanism. When quotas are scarce, short-token requests may "jump the queue" and preempt resources, causing long-token requests to wait for an extended period.

Cold start problem: This strategy requires a warm-up period to build a statistical model. In low-load or short-lived tasks, throughput may be lower than the first three strategies because it probes from scratch.

Architectural fallback solutions

When platform configurations and client-side traffic control still cannot meet the business requirements for availability or peak throughput, you can introduce fallback mechanisms at the system architecture level.

Model fallback

When the primary model cannot respond due to rate limiting or service exceptions, automatically fall back to an alternative model with a more generous quota to ensure the main process continues to respond.

Fallback path design principles

Choose models from different series: In Model Studio, rate limiting is calculated independently for each model. When a model is rate-limited, you can choose a different model as a fallback. For example, you can fall back from

qwen3.6-plustoqwen3.6-flash.Trigger fallback only on rate limit errors: Fallback should be triggered for

429rate limit errors, not all exceptions. Switching models will not solve issues such as network timeouts or parameter errors.Validate the fallback model in advance: Ensure that the fallback model supports the features required by your business, such as Function Calling and structured output, to avoid functional exceptions after fallback.

Model fallback can be combined with client-side traffic control strategies. For example, you can integrate fallback logic into the retry mechanism of the request rate limiting strategy. When retries are exhausted and rate limiting is still triggered, switch to the fallback model.

Peak-load shifting using message queues (MQ)

For backend services that do not require immediate responses, you can introduce a message middleware, such as RabbitMQ or Kafka, for peak-load shifting. Burst traffic is first written to the MQ, and the consumer side pulls and processes it at a steady rate according to the rate limit quota. This architecture decouples frontend peaks from backend calls and can fundamentally prevent rate limiting errors.

Scenarios: Businesses where users can accept asynchronous notification of results after submitting a task, such as ticket processing, content moderation, and batch data annotation. The MQ acts as a buffer layer, absorbing traffic spikes from the frontend, while the consumer side sends requests to the Model Studio API at a stable rate.

Key architectural design points:

Consumer rate control: The consumer side should use the request rate limiting or traffic shaping strategy to consume messages at a steady rate based on the RPM/TPM quota, rather than pulling messages without limits.

Dead-letter handling: For messages that fail after multiple retries, move them to a dead-letter queue and trigger an alert. This prevents infinite retries from blocking consumption.

Back-pressure propagation: When the MQ backlog exceeds a threshold, propagate pressure back to the upstream, for example, by returning a queuing status. This prevents the queue from growing indefinitely.

Production environment considerations

The preceding code examples are based on a Python asyncio single-threaded loop and are intended to demonstrate the core algorithms. Before applying them to large-scale production, consider the following issues.

Adapting to non-text models

The preceding strategies use text models as examples, but the core control principles also apply to multimodal model services, such as image generation and speech synthesis. Apart from different units of measurement, the essence is the same: limiting the submission rate and processing capacity.

Models such as speech recognition are typically constrained by both the number of requests per unit of time (such as RPM) and usage (such as audio duration). The strategies are basically the same as for text models.

Models for images and videos are typically constrained by the task submission rate and the number of concurrent tasks. You can use the same approach as the request rate limiting strategy: limit the task submission rate and use a semaphore to control concurrency.

Regardless of how the rate limiting metrics change, the principle of client-side throttling remains the same. You only need to replace the counter (such as an RPM token bucket) or the probing metric (such as TPT) with the metric for the corresponding modality. For specific rate limiting rules and metric definitions for a model, see Model Rate Limiting Conditions.

Atomicity in concurrent models

Example implementation: Because

asynciouses single-threaded cooperative scheduling, the state modification operations in the example code are inherently atomic and do not require additional concurrency protection within a single process.Production recommendation: When implementing in a multi-threaded or multi-process environment, ensure the concurrency safety of the token bucket and statistical window to guarantee the correctness of state updates. Otherwise, a race condition will cause the traffic control to fail.

Distributed rate limiting

Example implementation: The traffic control components in the example code are all in-memory implementations.

Production recommendation: In a multi-instance distributed deployment, each instance performs local traffic control independently. The actual total usage may exceed the limit and trigger global rate limiting. Use a centralized counter, such as Redis, to uniformly manage the usage of all nodes.

Priority queues and starvation prevention

Example implementation: None of the example code implements priority differentiation. The adaptive congestion control strategy, in particular, uses a non-strict FIFO wakeup mechanism to achieve maximum scheduling performance.

Production recommendation: When your business has high and low-priority requests, implement a weighted priority queue to guarantee bandwidth for high-priority requests. Also, introduce a starvation prevention mechanism to reserve a minimum quota for the low-priority queue, preventing it from being completely unschedulable during continuous high load.