Configure Cursor to use Qwen models from Alibaba Cloud Model Studio through the OpenAI-compatible API.

Cursor Pro or higher is required. The free plan only supports Auto mode and does not allow custom models.

Quick reference

If you have a Model Studio API key, enter these values in Cursor Settings > Models:

| Setting | Value |
| --- | --- |
| OpenAI API Key | Your Model Studio API key |
| Override OpenAI Base URL | The endpoint for your region (see Step 3) |

This guide covers pay-as-you-go mode only. If you use a Coding Plan, see Coding Plan for Cursor for your exclusive base URL and API key.

Supported models

| Category | Models |
| --- | --- |
| Text generation - Qwen | Qwen-Max, Qwen-Plus, Qwen-Flash, Qwen-Turbo, Qwen-Coder, QwQ |
| Text generation - Qwen - Open source | Qwen3.5, Qwen3, QwQ - Open source, QwQ - Preview, Qwen2.5, Qwen-Coder |

For a full list, see Model list.

Model recommendations

Choose models by task:

| Task | Recommended models | Reason |
| --- | --- | --- |
| Complex algorithms, architecture design, core logic | | Strong reasoning and coding capabilities. |
| Code completion, explanations, daily scripting | | Fast and lightweight. |

Prerequisites

Before you begin:

- A Cursor Pro or higher subscription
- An Alibaba Cloud account with identity verification completed. For help, see Create an Alibaba Cloud account.
- Model Studio activated (see Step 1)

Step 1: Activate Model Studio

- Go to Model Studio with your Alibaba Cloud account. Read and agree to the Terms of Service when prompted; Model Studio then activates automatically. If no Terms of Service appear, the service is already active.

First-time users receive a free quota for model inference, valid for 90 days. See New user free quota for details. Charges apply after quota expiration or depletion. To prevent unexpected charges, enable Free quota only. Actual fees depend on your final bill.

Step 2: Get your API key

Go to the API key management page and create an API key. Save it; you'll need it in the next step.

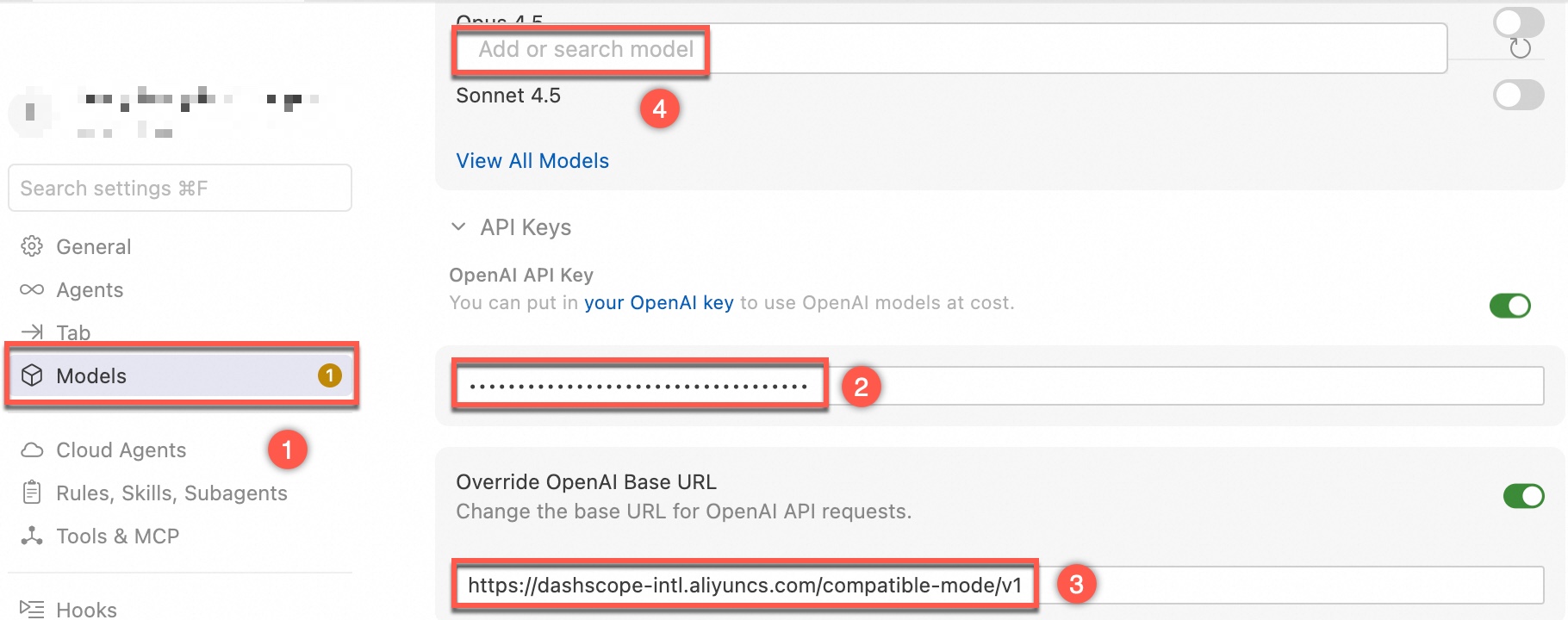

Step 3: Configure Cursor

- Open Cursor and go to Cursor Settings → Models.
- Enable OpenAI API Key and enter your Model Studio API key.
- Enable Override OpenAI Base URL and enter the endpoint for your region:

  | Region | Endpoint |
  | --- | --- |
  | Singapore | https://dashscope-intl.aliyuncs.com/compatible-mode/v1 |
  | US (Virginia) | https://dashscope-us.aliyuncs.com/compatible-mode/v1 |
  | China (Beijing) | https://dashscope.aliyuncs.com/compatible-mode/v1 |

- In Add or search model, type the model name (for example, qwen3-coder-next). Click Add Custom Model.
- In the chat panel, select the model you just added and start coding.
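Before pasting values into Cursor, it can help to check them for simple mistakes such as stray whitespace in the key or a mistyped base URL. The following is a minimal sketch, not part of Cursor or Model Studio: the names `ENDPOINTS` and `check_cursor_settings` and the region keys are hypothetical, and the only facts it relies on are the three endpoints listed in this step.

```python
# Endpoints copied from the region list in Step 3.
# The dictionary keys and function name are illustrative assumptions.
ENDPOINTS = {
    "singapore": "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
    "us-virginia": "https://dashscope-us.aliyuncs.com/compatible-mode/v1",
    "china-beijing": "https://dashscope.aliyuncs.com/compatible-mode/v1",
}


def check_cursor_settings(api_key: str, base_url: str) -> list[str]:
    """Return human-readable problems; an empty list means no obvious mistakes."""
    problems = []
    if not api_key.strip():
        problems.append("OpenAI API Key is empty")
    elif api_key != api_key.strip():
        problems.append("OpenAI API Key has leading or trailing whitespace")
    # Strict comparison: anything other than an exact documented endpoint is flagged.
    if base_url.strip() not in ENDPOINTS.values():
        problems.append("Override OpenAI Base URL does not exactly match a documented endpoint")
    return problems
```

An empty result only means the values look well-formed; it does not confirm that the key is valid or that the key and endpoint belong to the same region.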

FAQ

"Cannot use added models" error

Your Cursor plan does not support custom models:

The model xxx does not work with your current plan or api key.

Named models unavailable

Free plans can only use Auto. Switch to Auto or upgrade plans to continue.

Upgrade to Cursor Pro or higher to use custom models from Model Studio.

Added model does not appear in the drop-down list

Disable Auto mode in the chat panel to show the model in the drop-down list.

"We're having trouble connecting to the model provider" or "Unauthorized User API key"

Check the following:

- Verify the model name. The model may not exist. See Model list for supported names.
- Check for provider conflicts. After configuring Model Studio's base URL and API key, requests to other providers fail. To switch back, disable or reconfigure OpenAI API Key and Override OpenAI Base URL.
- Rule out Cursor compatibility issues. Some models may not work due to Cursor limitations. Try another model to isolate the issue.
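One way to separate a Cursor-side problem from a key or model-name problem is to send the same model name directly to the endpoint, outside Cursor. The sketch below uses only the Python standard library and assumes the endpoint accepts the standard OpenAI-style `POST {base URL}/chat/completions` request with a Bearer token. The function names `build_chat_request` and `send` and the test prompt are hypothetical.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str) -> urllib.request.Request:
    """Build (but do not send) a minimal OpenAI-compatible chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": "Reply with the word ok."}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request and return the decoded JSON response.

    urllib raises urllib.error.HTTPError for 4xx/5xx responses, so a rejected
    key or an unknown model name surfaces as an exception with a status code.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `send(build_chat_request(base_url, api_key, "qwen-flash"))` with your own base URL and key makes a real, billable request. If the direct call succeeds while Cursor still reports an error for the same model, the cause is more likely on the Cursor side than in the key or model name.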

Slow responses

- Switch to a faster model. For simple tasks, use qwen3-coder-next or qwen-flash.
- Check your network. Ensure your connection to the API endpoint is stable.
- Reduce context length. Long conversation histories increase processing time, so start a new chat to reset context.