Chatbox is an AI client for interacting with large language models.

Prerequisites

- Get an API key and activate Alibaba Cloud Model Studio.
- Select a text generation model from the Model list. If you are a RAM user, make sure you have access permissions for the model in Workspace management.

The supported models and features depend on your Chatbox version.

1. Open Chatbox

Go to the Chatbox website. Download and install the client for your device, or click Launch Web App.

2. Configure the model and API key

2.1. Select a Custom Provider

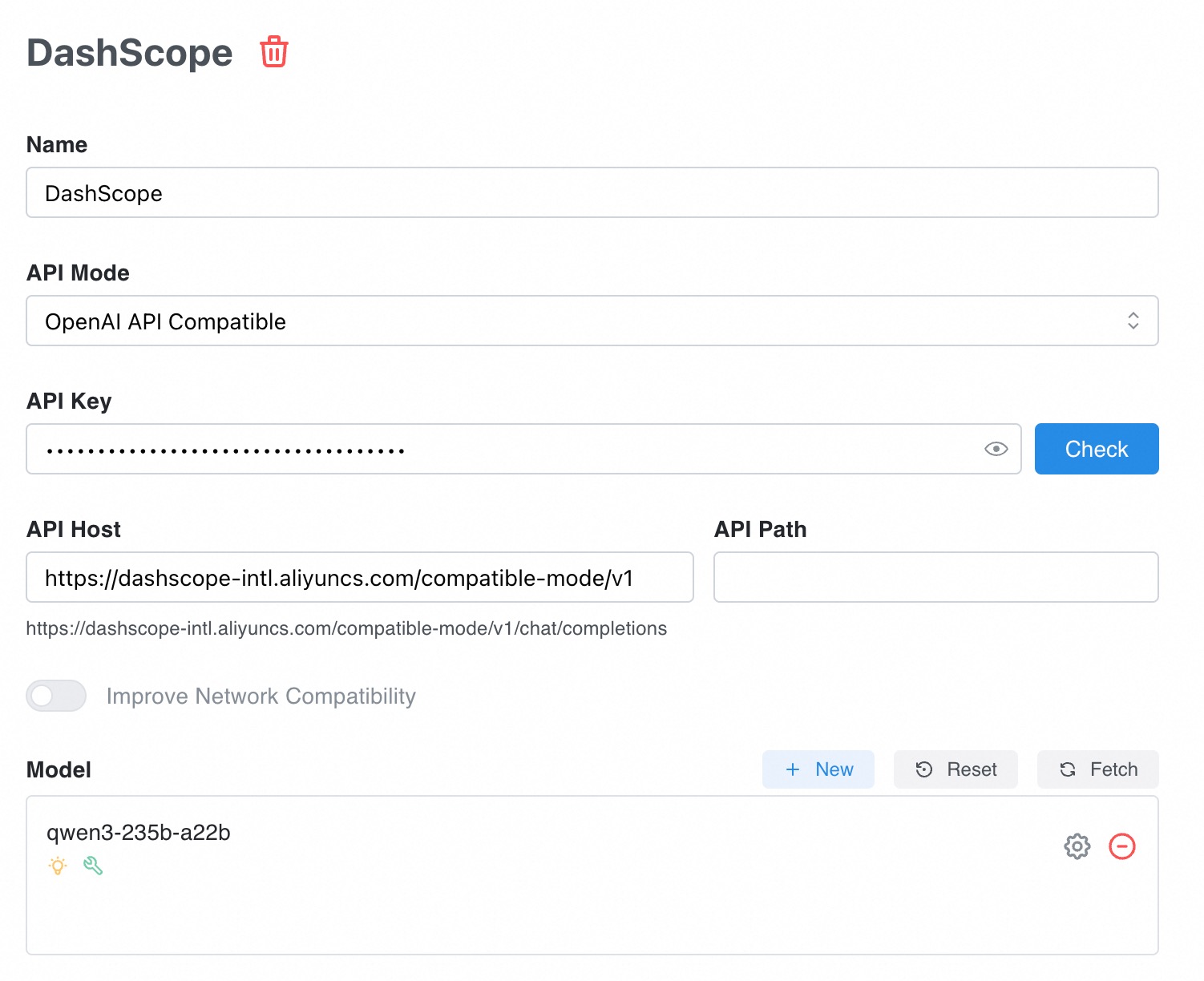

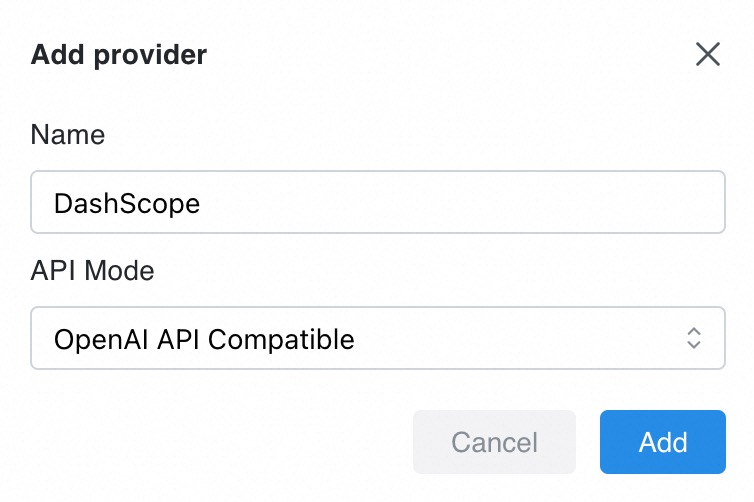

In the lower-left corner, click Settings → Model Provider, then click Add at the bottom of the provider list.

In the dialog box, set Name to "DashScope" and API Mode to OpenAI API Compatible. Click Add.
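With API Mode set to OpenAI API Compatible, Chatbox sends requests in the OpenAI Chat Completions format. The minimal sketch below builds (but does not send) such a request with the Python standard library, which can help when checking a configuration outside Chatbox. The base URL is an assumption (the international Model Studio endpoint in compatible mode); use the endpoint for your region. The environment variable name `DASHSCOPE_API_KEY` and the helper `build_chat_request` are illustrative, not part of Chatbox.

```python
import json
import os
import urllib.request

# Assumption: international Model Studio endpoint in OpenAI-compatible mode.
# Replace it with the endpoint for your region if it differs.
BASE_URL = "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"


def build_chat_request(api_key: str, model: str, question: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-compatible chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical environment variable holding your Model Studio API key.
    key = os.environ.get("DASHSCOPE_API_KEY", "sk-placeholder")
    req = build_chat_request(key, "qwen-flash", "Who are you?")
    print(req.get_method(), req.full_url)
    # To actually send it: urllib.request.urlopen(req)
```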

2.2. Configure the model and API key


2.3. Chat settings

After configuring the model and API key, click Chat Settings in the navigation pane on the left. Set Max Message Count in Context, Temperature, and Top P:

- Max Message Count in Context

  Specifies how many previous messages are sent to the model as context with each new question. For casual chats, use 5 to 10. If the accumulated context is too long, the following error occurs: "Range of input length should be [1, xxx]".

- Temperature

  Controls the diversity of the generated text.

  - Higher values produce more diverse text, suitable for content creation and brainstorming.
  - Lower values produce more deterministic text, suitable for code writing and mathematical reasoning.

  Set Temperature to a value less than 2. Otherwise, the following error occurs: "'temperature' must be Float".

- Top P

  Like Temperature, controls the diversity of the generated text. Set Top P to a value no greater than 1. Otherwise, the following error occurs: "xx is greater than the maximum of 1 - 'top_p'".
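The three settings map onto the request body that Chatbox sends. The sketch below is a hypothetical illustration, not Chatbox's actual code: the helper `build_chat_params` keeps only the last N history messages (Max Message Count in Context) and checks temperature and top_p against the limits stated above (temperature less than 2, top_p no greater than 1). The lower bounds (temperature at least 0, top_p greater than 0) are assumptions.

```python
from typing import Dict, List

Message = Dict[str, str]


def build_chat_params(
    history: List[Message],
    question: str,
    max_messages: int = 6,
    temperature: float = 0.7,
    top_p: float = 0.8,
) -> dict:
    """Hypothetical helper: apply Chatbox-style chat settings to a request body."""
    # Upper limits stated in this guide: temperature < 2 and top_p <= 1.
    # The lower bounds (temperature >= 0, top_p > 0) are assumptions.
    if not 0 <= temperature < 2:
        raise ValueError("temperature must be >= 0 and < 2")
    if not 0 < top_p <= 1:
        raise ValueError("top_p must be > 0 and <= 1")
    if max_messages < 0:
        raise ValueError("max_messages must be non-negative")

    # Keep only the most recent messages; older ones are not sent at all.
    # (history[-0:] would return the whole list, hence the explicit check.)
    recent = history[-max_messages:] if max_messages > 0 else []
    messages = recent + [{"role": "user", "content": question}]
    return {"messages": messages, "temperature": temperature, "top_p": top_p}


history = [
    {"role": "user" if i % 2 == 0 else "assistant", "content": f"message {i}"}
    for i in range(10)
]
params = build_chat_params(history, "New question", max_messages=4)
print(len(params["messages"]))  # 5: the last 4 history messages plus the new question
```

Trimming from the front keeps the most recent exchange intact, which is usually what a follow-up question refers to.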

3. Start a chat

Enter a question in the dialog box to start the chat.

Note: Video and audio files are not supported.

Standard chat

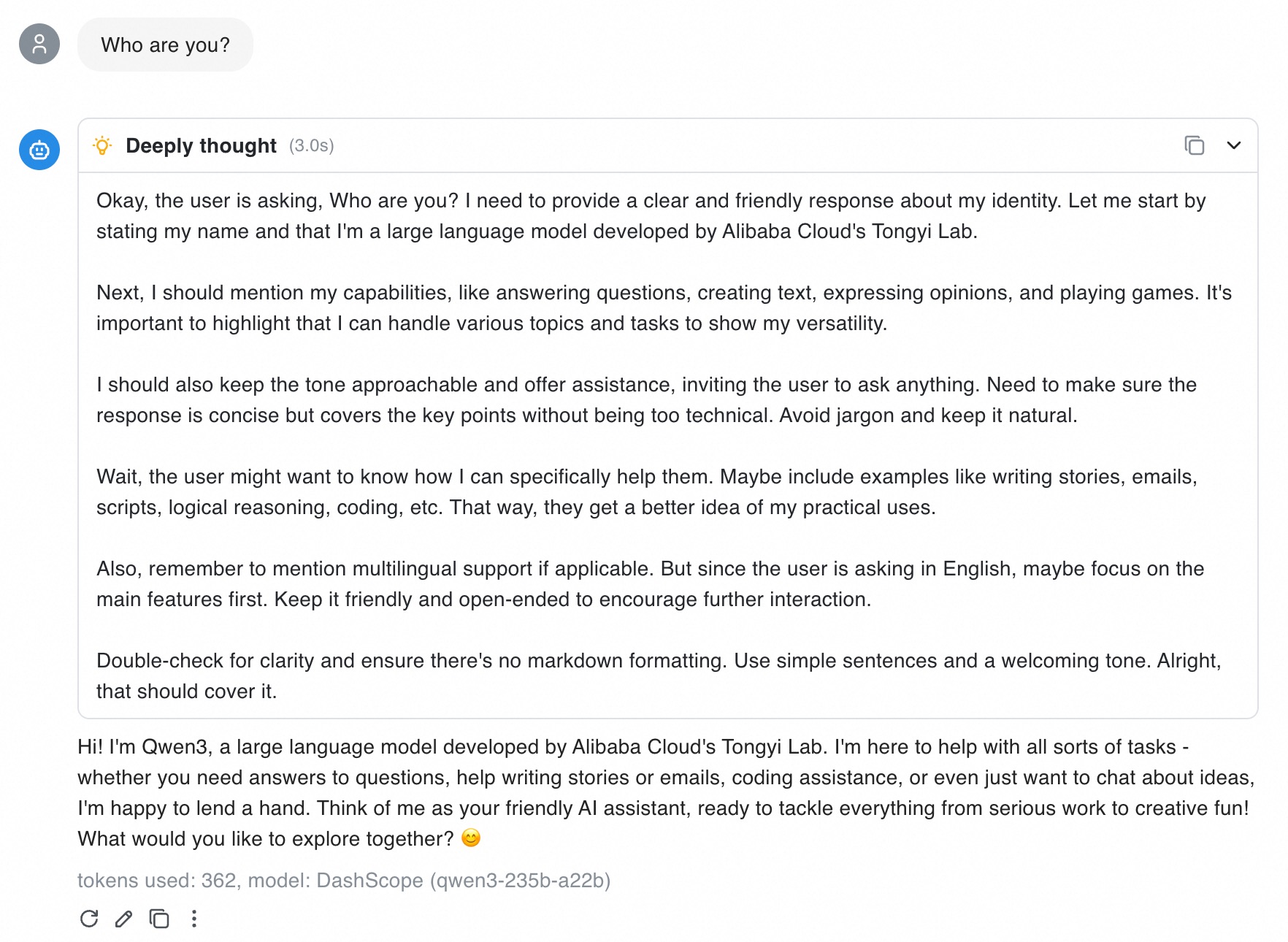

To test the connection, enter "Who are you?".

Chatbox displays the Qwen3 model's thinking process and its response.

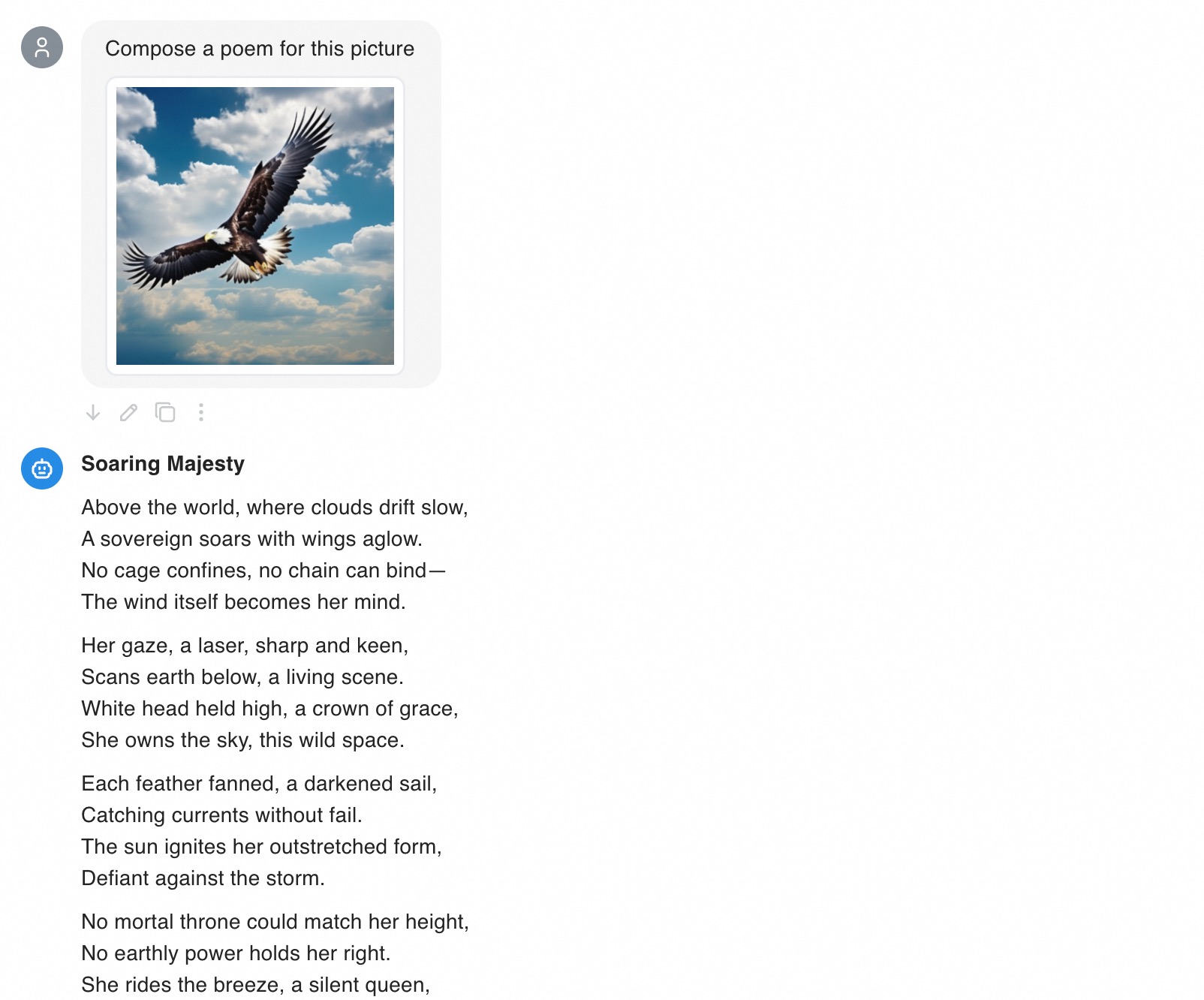
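Chatbox shows the thinking process separately from the final answer. In streamed OpenAI-compatible responses from reasoning models, the thinking text typically arrives in a separate delta field. The sketch below assumes that field is named `reasoning_content` (verify this against the Model Studio API reference) and uses made-up sample chunks; `split_stream` is a hypothetical helper.

```python
from typing import Iterable, List, Tuple


def split_stream(chunks: Iterable[dict]) -> Tuple[str, str]:
    """Collect thinking text and answer text from streamed chat chunks.

    Assumes OpenAI-compatible streaming chunks in which thinking text is
    carried in delta["reasoning_content"] (an assumption to verify).
    """
    thinking: List[str] = []
    answer: List[str] = []
    for chunk in chunks:
        for choice in chunk.get("choices", []):
            delta = choice.get("delta", {})
            # Fields may be missing or None in a given chunk, so check truthiness.
            if delta.get("reasoning_content"):
                thinking.append(delta["reasoning_content"])
            if delta.get("content"):
                answer.append(delta["content"])
    return "".join(thinking), "".join(answer)


# Made-up sample chunks, for illustration only.
sample = [
    {"choices": [{"delta": {"reasoning_content": "The user asks who I am."}}]},
    {"choices": [{"delta": {"content": "I am a large language model."}}]},
]
print(split_stream(sample))
```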

Use an image

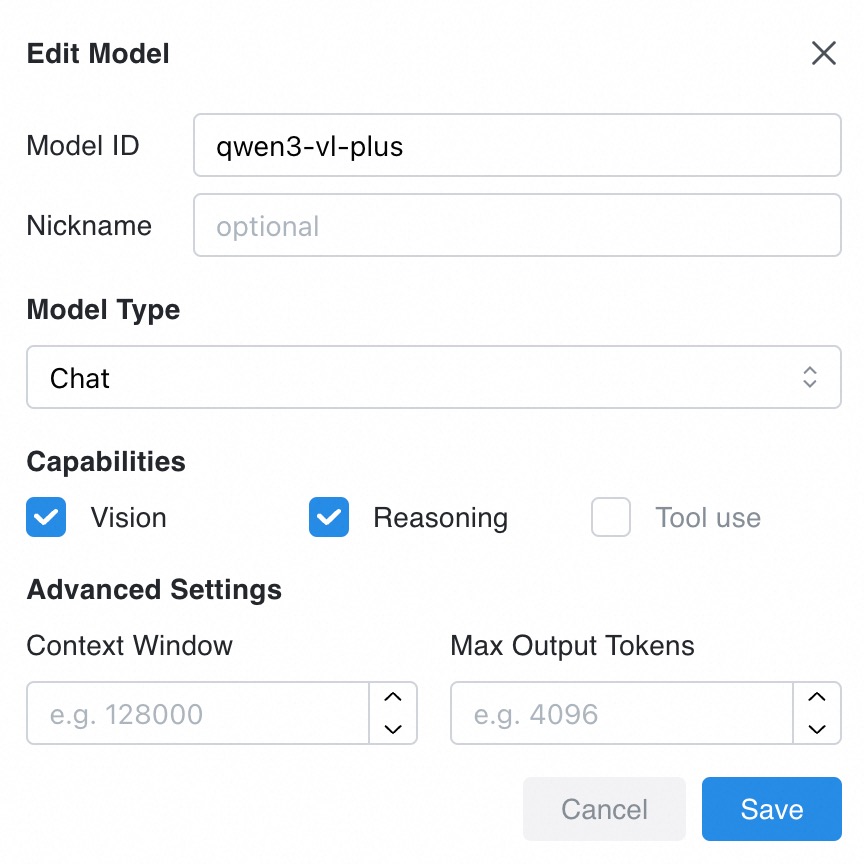

1. Select a model

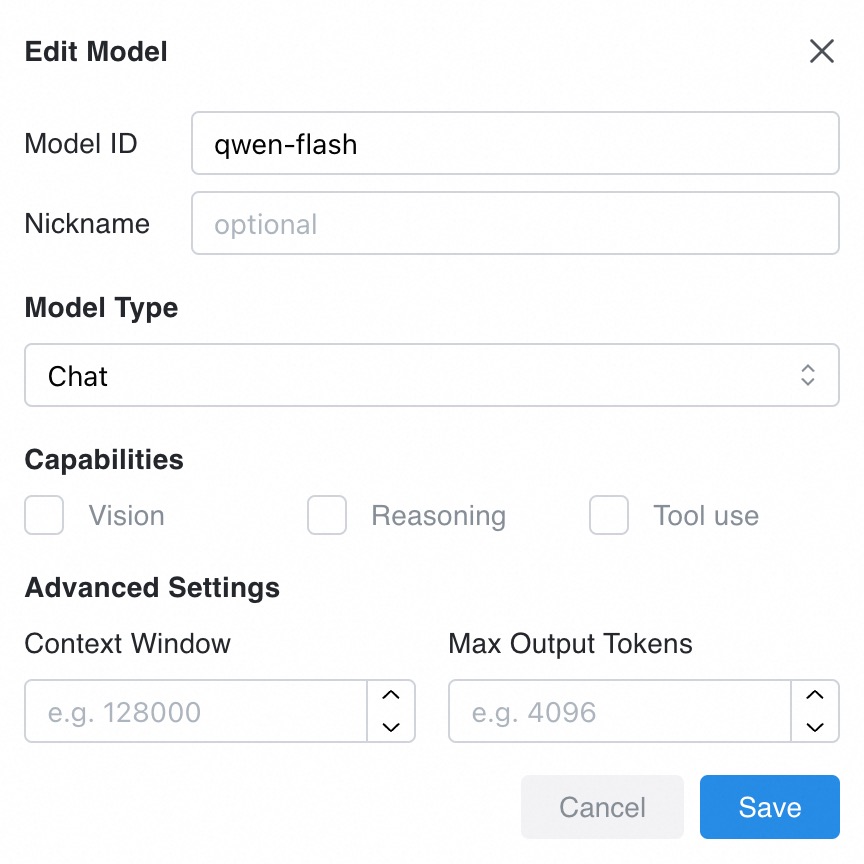

Image Q&A requires a model with visual capabilities, such as a Qwen-VL, QVQ, or Qwen-Omni model.

In step 2.2 (Configure the model and API key), add a visual model and select Vision.

2. Start the chat

Next to the Send button, select the visual model. Enter your question, click the attachment icon, and then click Add Image to upload an image.

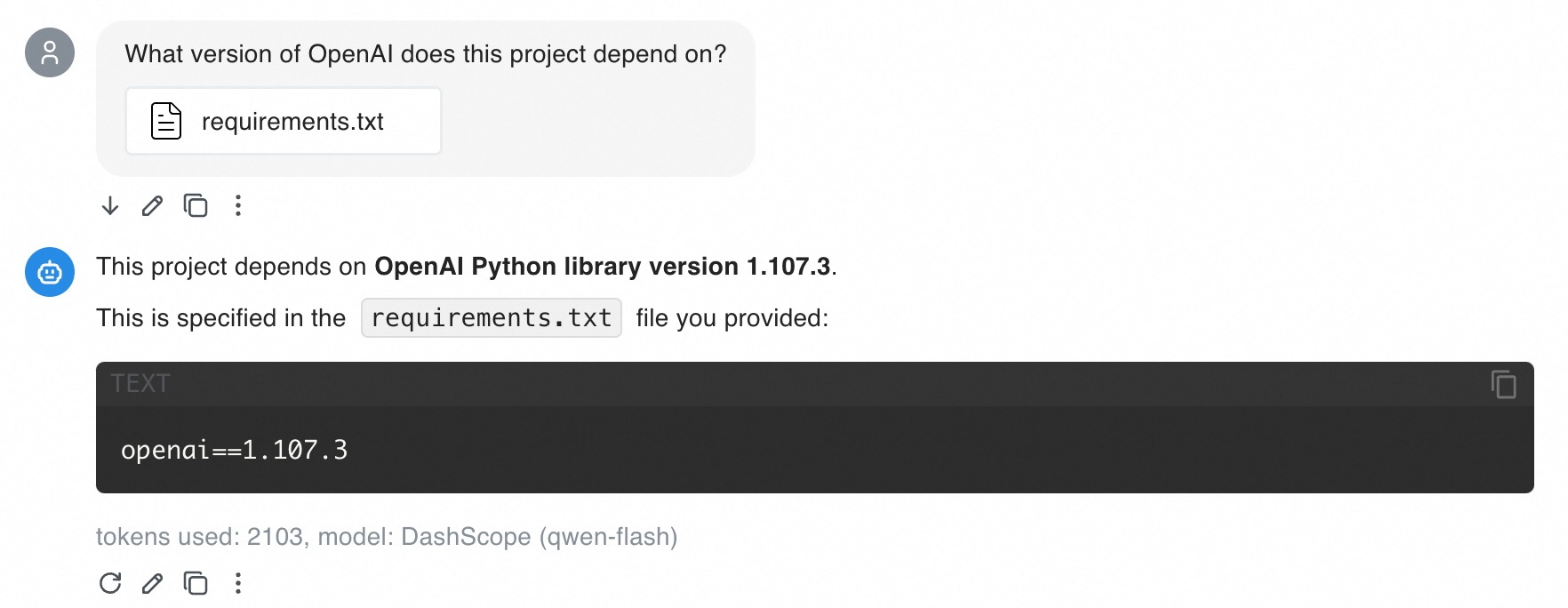
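In OpenAI-compatible mode, an image question is typically sent as a user message whose content is a list containing an image part and a text part. The sketch below encodes a local image as a Base64 data URL in that format. It assumes the OpenAI-style `image_url` content type is accepted by your visual model; `image_message` is a hypothetical helper, not a Chatbox function.

```python
import base64
import mimetypes
from pathlib import Path


def image_message(path: str, question: str) -> dict:
    """Build an OpenAI-compatible user message carrying one local image."""
    mime, _ = mimetypes.guess_type(path)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"not a recognized image file: {path}")
    # Embed the file contents as a Base64 data URL.
    encoded = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
            {"type": "text", "text": question},
        ],
    }
```

For example, `image_message("photo.jpg", "What is in this picture?")` returns a message that can be placed in the `messages` list of a request to a visual model.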

Use a document

Chatbox supports PDF, DOCX, and TXT files. The model answers questions based on the content of the uploaded document.

Note: Images within documents are not supported.

1. Select a model

For document Q&A, use a model with long-context capabilities, such as Qwen-Flash, Qwen-Long, qwen2.5-14b-instruct-1m, or qwen2.5-7b-instruct-1m. These models can process inputs of millions of tokens at a lower cost.

In step 2.2 (Configure the model and API key), add the model you want to use, for example qwen-flash, in the Model section.

2. Start the chat

Next to the Send button, select the model you added. Enter your question, click the attachment icon, and then click Select File to upload a document.

Note: Asking several consecutive questions about a document consumes many tokens, because earlier messages, which can include the document, are sent again as context. To reduce costs, (1) lower Max Message Count in Context to send fewer input tokens, or (2) use qwen-flash, which supports context cache and reduces input token costs in multi-turn conversations.
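The arithmetic behind this note can be sketched as follows: each request sends the retained history plus the new question, so a large document in the first message is paid for again on every later turn while it stays in context. The token counts below are hypothetical, and the sketch assumes the document is simply part of the first user message that history trimming can drop; how Chatbox actually handles attached files is not covered here.

```python
from typing import List, Optional


def total_input_tokens(
    question_tokens: List[int],
    answer_tokens: List[int],
    max_messages: Optional[int] = None,
) -> int:
    """Sum the input tokens sent over a conversation.

    question_tokens[i] is the size of the i-th user message (the first one may
    include the parsed document); answer_tokens[i] is the size of the reply.
    max_messages=None resends the whole history every turn.
    """
    history: List[int] = []
    total = 0
    for q, a in zip(question_tokens, answer_tokens):
        if max_messages is None:
            kept = history
        else:
            kept = history[max(0, len(history) - max_messages):]
        total += sum(kept) + q  # retained history plus the new question
        history += [q, a]
    return total


# Hypothetical: a 50,000-token document asked about in the first question,
# followed by four short questions; every answer is 500 tokens.
questions = [50_100, 100, 100, 100, 100]
answers = [500] * 5
print(total_input_tokens(questions, answers))                  # 256500
print(total_input_tokens(questions, answers, max_messages=2))  # 102900
```

The lower limit cuts input tokens sharply, but it also means the document leaves the context after the second turn, so later questions can no longer be answered from it.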

FAQ

Q: How am I billed?

A: Model Studio bills based on input and output tokens. For model token cost details, see Models.

Note: In multi-turn conversations, the chat history is sent as input with each request, so token consumption grows. To reduce consumption, start a new chat or lower Max Message Count in Context. For casual chats, set Max Message Count in Context to 5 to 10.
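Because input and output tokens are billed separately, the charge for a single request can be estimated from per-token prices. The sketch below assumes prices are quoted per million tokens; the prices in the example are placeholders, not actual Model Studio rates (see Models for those).

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate the charge for one request from per-million-token prices."""
    return (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000


# Placeholder prices (not real rates): 0.5 per million input tokens and
# 2.0 per million output tokens.
print(estimate_cost(256_500, 2_500, 0.5, 2.0))  # 0.13325
```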

Q: What do I do if Chatbox reports the error “Failed to connect to Custom Provider”?

A: Troubleshoot based on the error message:

- "Range of input length should be [1, xxx]"

  This error occurs when the input is too long or the accumulated multi-turn context exceeds the model's context window. Troubleshoot based on your scenario:

  - Error on the first turn of the conversation

    Your input text or uploaded file may contain too many tokens. Use a model with a context length of 1,000,000 tokens, such as qwen-flash or qwen-long.

  - Error after a multi-turn conversation

    The tokens accumulated over the conversation may exceed the model's context window. Try these solutions:

    - Start a new chat. The model no longer references the previous conversation history in its replies.
    - Reduce Max Message Count in Context. The model then references only a limited number of recent messages instead of the entire conversation history.
    - Change the model. Switch to a model with a context length of 1,000,000 tokens, such as qwen-flash or qwen-long, to allow longer context and more conversation turns.

- "'temperature' must be Float"

  The temperature parameter must be less than 2. Set Temperature to a value below 2.
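The multi-turn fixes above all amount to sending fewer history tokens. The hypothetical helper below (not part of Chatbox or Model Studio) illustrates the idea using token counts you already know: it drops the oldest messages until the history plus the new input fits the context window, and it treats an input that is too large on its own as the first-turn case. For simplicity it ignores the tokens reserved for the reply, which a real client would also need to budget.

```python
from typing import List


def fit_to_context(message_tokens: List[int], new_tokens: int, context_window: int) -> List[int]:
    """Drop the oldest messages until history + new input fits the window.

    Returns the token counts of the messages that are kept. Raises ValueError
    when the new input alone is too long (the first-turn case), where the fix
    is a model with a larger context window.
    """
    if new_tokens > context_window:
        raise ValueError("input alone exceeds the context window; use a longer-context model")
    kept = list(message_tokens)
    while kept and sum(kept) + new_tokens > context_window:
        kept.pop(0)  # remove the oldest message first
    return kept


print(fit_to_context([400, 300, 200], new_tokens=150, context_window=700))  # [300, 200]
```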

Note: For issues not described above, see Error messages for troubleshooting.

Q: What are the limits for uploaded images and documents?

A:

- Images: See Image and video understanding.
- Documents: Chatbox parses the uploaded document. The total number of tokens in the parsed text plus the conversation context must not exceed the model's context window.