This topic describes how to use a PyODPS 2 node in DataWorks to query data from a level-1 partition of a MaxCompute partitioned table. Three methods are covered: synchronous SQL, asynchronous SQL, and the PyODPS DataFrame API.

This example uses the basic mode of DataWorks. When creating a workspace, Participate in Public Preview of Data Studio is disabled by default. This example does not apply to workspaces enrolled in the Data Studio public preview.

Prerequisites

Before you begin, ensure that you have:

Created a workflow in the DataWorks console

Step 1: Prepare test data

Create a partitioned table and a source table, and import data into the source table. For details, see Create tables and upload data. Use the following statements and sample data for this example.

Create the

user_detailpartitioned table:create table if not exists user_detail ( userid BIGINT comment 'user ID', job STRING comment 'job type', education STRING comment 'education level' ) comment 'user information table' partitioned by (dt STRING comment 'date',region STRING comment 'region');Create the

user_detail_odssource table:create table if not exists user_detail_ods ( userid BIGINT comment 'user ID', job STRING comment 'job type', education STRING comment 'education level', dt STRING comment 'date', region STRING comment 'region' );Create a file named

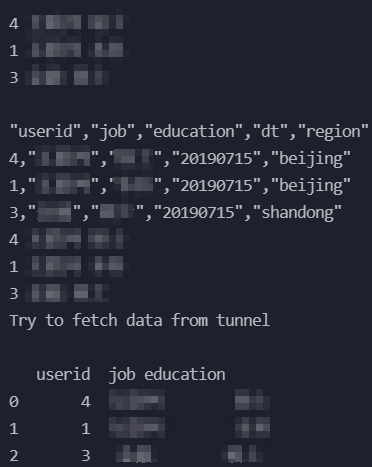

user_detail.txtwith the following content and import it intouser_detail_ods:0001,Internet,bachelor,20190715,beijing 0002,education,junior college,20190716,beijing 0003,finance,master,20190715,shandong 0004,Internet,master,20190715,beijing

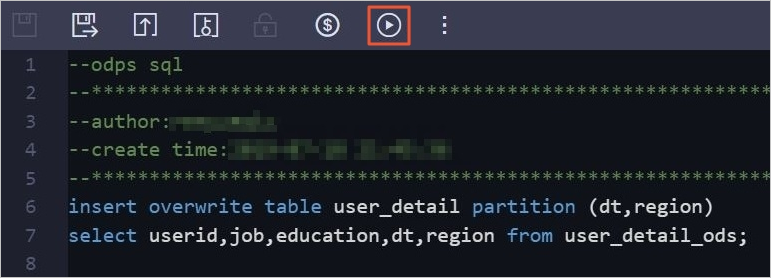

Right-click the workflow and choose Create Node > MaxCompute > ODPS SQL.

In the Create Node dialog box, specify Name and click Confirm.

In the code editor on the configuration tab, enter the following SQL to insert data from

user_detail_odsinto the partitioned table:insert overwrite table user_detail partition (dt,region) select userid,job,education,dt,region from user_detail_ods;Click the Run icon in the toolbar to insert the data from the

user_detail_odstable into theuser_detailpartitioned table.

Step 2: Read data from the level-1 partition

Choose the method that fits your use case:

| Method | API | Use when |

|---|---|---|

| Synchronous mode | o.execute_sql().open_reader() | Query runs quickly; you want results immediately |

| Asynchronous mode | o.run_sql() + wait_for_success() + open_reader() | Query is long-running; you want non-blocking submission |

| PyODPS DataFrame | DataFrame(o.get_table().get_partition()) | You need DataFrame operations after reading |

Log on to the DataWorks console.

In the left-side navigation pane, click Workspace.

Find your workspace, then choose Shortcuts > Data Development in the Actions column.

On the DataStudio page, right-click the workflow and choose Create Node > MaxCompute > PyODPS 2.

In the Create Node dialog box, specify Name and click Confirm.

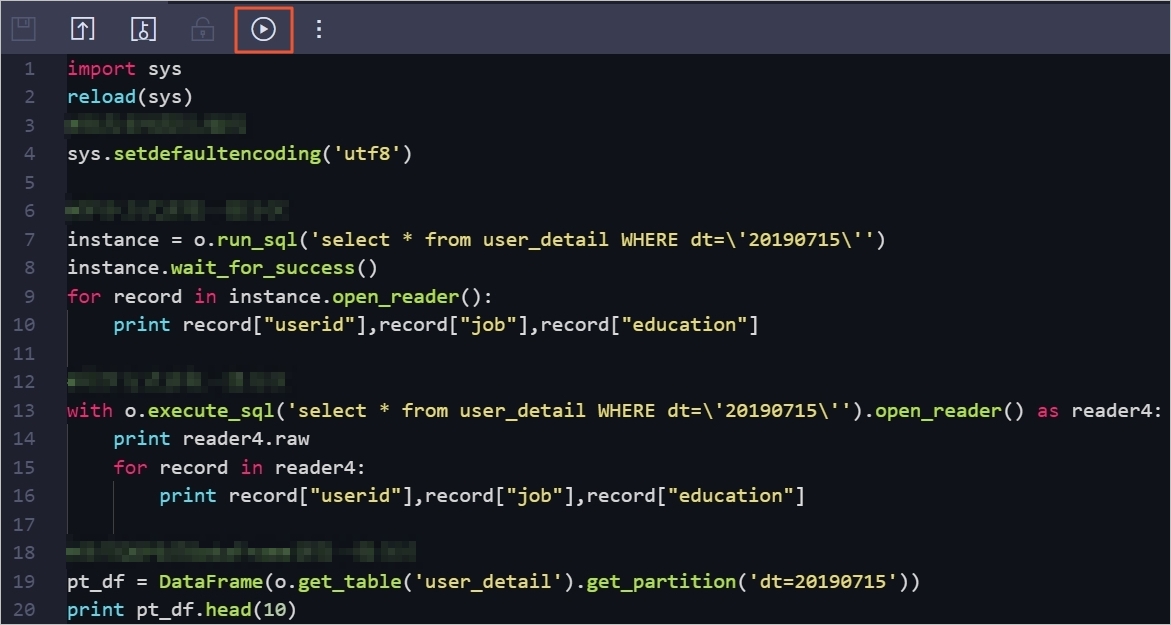

In the code editor on the configuration tab, enter the following code:

import sys reload(sys) # Set UTF-8 as the default encoding format. sys.setdefaultencoding('utf8') # Method 1: Synchronous mode — use for short-running queries. with o.execute_sql('select * from user_detail WHERE dt=\'20190715\'').open_reader() as reader4: print reader4.raw for record in reader4: print record["userid"],record["job"],record["education"] # Method 2: Asynchronous mode — use for long-running queries. # The call returns immediately; wait_for_success() blocks until the query completes. instance = o.run_sql('select * from user_detail WHERE dt=\'20190715\'') instance.wait_for_success() for record in instance.open_reader(): print record["userid"],record["job"],record["education"] # Method 3: PyODPS DataFrame — use when you need DataFrame operations after reading. pt_df = DataFrame(o.get_table('user_detail').get_partition('dt=20190715')) print pt_df.head(10)All three methods filter on the level-1 partition key

dtto read only the rows wheredt='20190715'.Click the Run icon in the toolbar.

View the output on the Run Log tab.