When working with large MaxCompute datasets organized by partition keys such as date or region, you need a way to read only the relevant partition rather than scanning the entire table. A PyODPS node in DataWorks lets you use the PyODPS SDK to query specific partitions directly, reducing data scanned and simplifying your Python-based pipeline.

Prerequisites

Before you begin, ensure that you have:

-

An activated MaxCompute service. For more information, see Activate MaxCompute.

-

An activated DataWorks service. For more information, see Activate DataWorks.

-

A workflow created in the DataWorks console. This example uses a workflow in a DataWorks workspace running in basic mode. For more information, see Create a workflow.

Step 1: Prepare test data

Create a partitioned table, load source data into a staging table, then insert the data into the partitioned table using an ODPS SQL node.

-

Create a partitioned table and a source table, and import data to the source table. For more information, see Create tables and upload data. Use the following DDL statements and sample data.

-

Create a partitioned table named

user_detail:create table if not exists user_detail ( userid BIGINT comment 'user ID', job STRING comment 'job type', education STRING comment 'education level' ) comment 'user information table' partitioned by (dt STRING comment 'date',region STRING comment 'region'); -

Create a source table named

user_detail_ods:create table if not exists user_detail_ods ( userid BIGINT comment 'user ID', job STRING comment 'job type', education STRING comment 'education level', dt STRING comment 'date', region STRING comment 'region' ); -

Create a file named

user_detail.txtwith the following content and import it into theuser_detail_odstable:0001,Internet,bachelor,20190715,beijing 0002,education,junior college,20190716,beijing 0003,finance,master,20190715,shandong 0004,Internet,master,20190715,beijing

-

-

Right-click the workflow and choose Create Node > MaxCompute > ODPS SQL.

-

In the Create Node dialog box, specify Name and click Confirm.

-

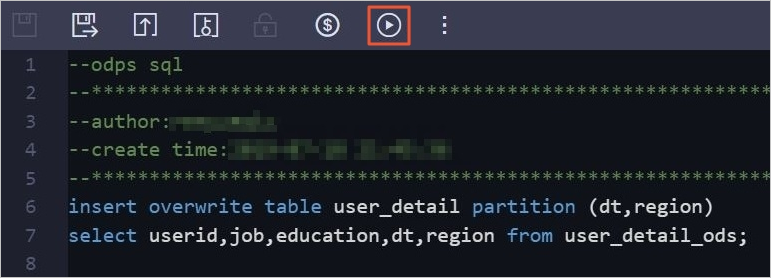

In the code editor on the node configuration tab, enter the following SQL statement:

insert overwrite table user_detail partition (dt,region) select userid,job,education,dt,region from user_detail_ods; -

Click the Run icon in the toolbar to insert data from

user_detail_odsinto theuser_detailpartitioned table.

Step 2: Read data from the partitioned table using a PyODPS node

-

Log on to the DataWorks console.

-

In the left-side navigation pane, click Workspace.

-

Find your workspace, and choose Shortcuts > Data Development in the Actions column.

-

On the DataStudio page, right-click the workflow and choose Create Node > MaxCompute > PyODPS 2.

-

In the Create Node dialog box, specify Name and click Confirm.

-

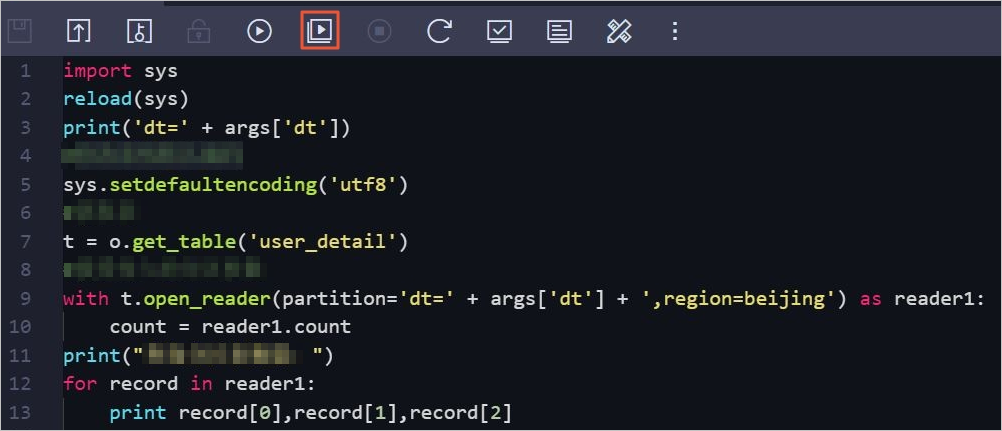

In the code editor on the node configuration tab, enter the following code:

Method How to use Best for Method 1: open_reader()withwithWraps the reader in a context manager Automatic resource cleanup Method 2: open_reader()directOpens the reader and iterates directly Accessing records by column name without a withblockMethod 3: read_table()Calls read_table()on theODPSobjectConcise one-liner reads import sys from odps import ODPS reload(sys) print('dt=' + args['dt']) # Set UTF-8 as the default encoding format. sys.setdefaultencoding('utf8') # Get the partitioned table. t = o.get_table('user_detail') # Check whether a specific partition exists. print t.exist_partition('dt=20190715,region=beijing') # List all partitions in the table. for partition in t.partitions: print partition.name # Method 1: Use open_reader() as a context manager. # The reader closes automatically when the with block exits, which ensures proper resource cleanup. with t.open_reader(partition='dt=20190715,region=beijing') as reader1: count = reader1.count print("Query data in the partitioned table by using Method 1:") for record in reader1: print record[0],record[1],record[2] # Method 2: Use open_reader() without a context manager. # Access records by column name instead of index position. print("Query data in the partitioned table by using Method 2:") reader2 = t.open_reader(partition='dt=20190715,region=beijing') for record in reader2: print record["userid"],record["job"],record["education"] # Method 3: Use read_table() on the ODPS object directly. # This is the most concise option for simple reads. print("Query data in the partitioned table by using Method 3:") for record in o.read_table('user_detail', partition='dt=20190715,region=beijing'): print record["userid"],record["job"],record["education"]The

partitionargument specifies the exact partition to read using the formatkey=valuefor each partition column, separated by commas. All three methods read a single partition at a time. -

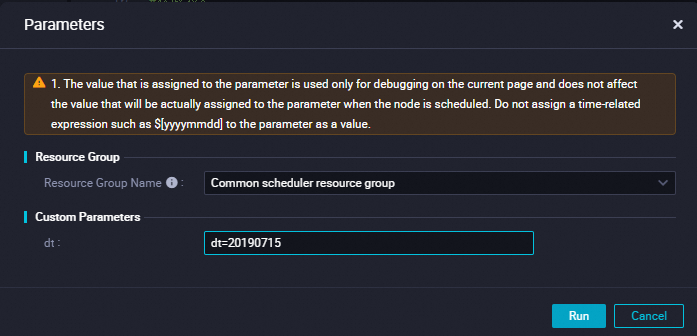

Click the Run with Parameters icon in the toolbar.

-

In the Parameters dialog box, configure the following parameters and click Run:

Parameter Description Resource Group Name Select Common scheduler resource group. dt Set to dt=20190715.

-

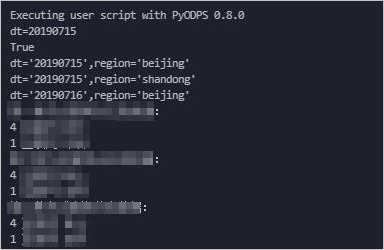

View the results on the Run Log tab.