This topic describes how to use Schemaless Query in MaxCompute to read Parquet files generated by Spark SQL and export the computation results to OSS by using the UNLOAD command. This example uses an E-MapReduce Serverless Spark cluster.

Prerequisites

An EMR Serverless Spark workspace is created.

This topic uses the China (Hangzhou) region as an example. The workspace name is

schemaless_testand the OSS bucket name isoss-mc-test.A MaxCompute project is created.

Step 1: Generate Parquet data based on Serverless Spark

Log on to the E-MapReduce console. In the left-side navigation pane, choose .

On the Spark page, click the name of the created workspace. On the EMR Serverless Spark page, click Data Development in the left-side navigation pane.

Create a Spark SQL job, enter the following SQL commands to create a table in Parquet format and write data to it, then click Run.

ImportantBefore you execute the following commands, make sure that the data directory and database selected in the upper-right corner of the page are associated with the path of the created OSS bucket.

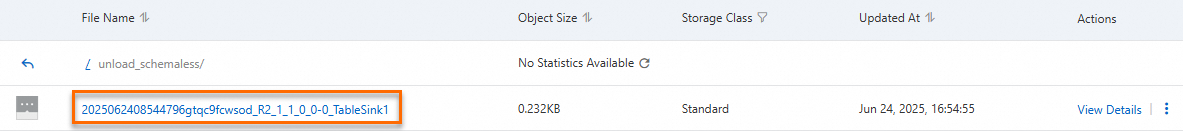

CREATE TABLE example_table01 ( id INT, name STRING, age INT ) USING PARQUET; INSERT INTO example_table01 VALUES (1, 'Alice', 30), (2, 'Bob', 25), (3, 'Charlie', 35), (4, 'David', 40), (5, 'Eve', 32), (6, 'Frank', 28), (7, 'Grace', 33), (8, 'Hannah', 29), (9, 'Ian', 36), (10, 'Julia', 31); SELECT * FROM example_table01;The following figure shows the sample output.

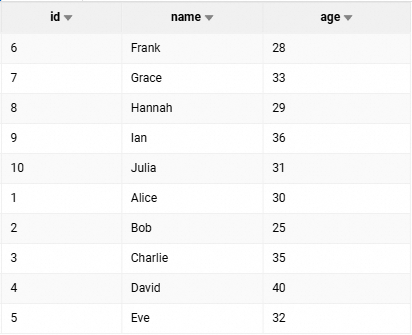

After the job is successfully executed, you can view the generated

example_table01table on the Metadata page in the Data Lake Formation console.

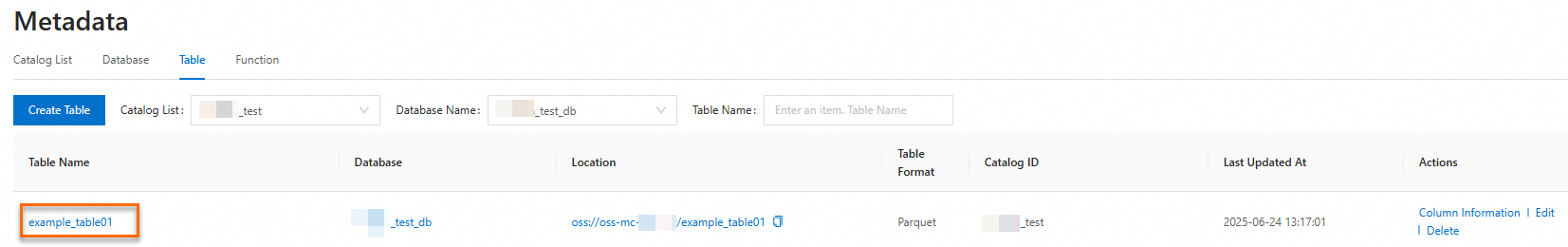

You can also view the generated Parquet files in the OSS bucket directory.

Step 2: Read data by using Schemaless Query

For more information, see Schemaless query.

When you write data to a Parquet table, a file named _SUCCESS is generated. You must use the file_pattern_blacklist parameter to add the _SUCCESS file to the blacklist so that this file is not read. If you do not add this parameter, an error will occur.

SELECT * FROM

LOCATION 'oss://oss-cn-hangzhou-internal.aliyuncs.com/oss-mc-test/example_table01/'

(

'file_format'='parquet',

'file_pattern_blacklist'='.*_SUCCESS.*'

);The following result is returned:

+------------+------------+------------+

| id | name | age |

+------------+------------+------------+

| 1 | Alice | 30 |

| 2 | Bob | 25 |

| 3 | Charlie | 35 |

| 4 | David | 40 |

| 5 | Eve | 32 |

| 6 | Frank | 28 |

| 7 | Grace | 33 |

| 8 | Hannah | 29 |

| 9 | Ian | 36 |

| 10 | Julia | 31 |

+------------+------------+------------+Step 3: Perform calculations using SQL

Query the total number of people who are older than 30.

SELECT COUNT(*) FROM

LOCATION 'oss://oss-cn-hangzhou-internal.aliyuncs.com/oss-mc-test/example_table01/'

(

'file_format'='parquet',

'file_pattern_blacklist'='.*_SUCCESS.*'

)

WHERE age>30;The following result is returned:

+------------+

| _c0 |

+------------+

| 6 |

+------------+Step 4: Export calculation results to OSS using the UNLOAD command

MaxCompute allows you to export data from a MaxCompute project to external storage such as OSS for use by other computing engines. For more information, see UNLOAD.

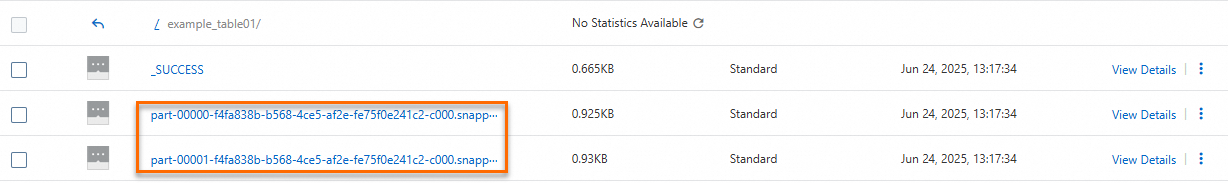

Execute the following command in MaxCompute to export the calculation results from Step 3: Perform calculations using SQL to OSS in Parquet format.

Before you execute the following code, you must create the

unload_schemalessdirectory in the OSS bucketoss-mc-test.UNLOAD FROM ( SELECT COUNT(*) FROM LOCATION 'oss://oss-cn-hangzhou-internal.aliyuncs.com/oss-mc-test/example_table01/' ('file_format'='parquet','file_pattern_blacklist'='.*_SUCCESS.*') WHERE age>30 ) INTO LOCATION 'oss://oss-cn-hangzhou-internal.aliyuncs.com/oss-mc-test/unload_schemaless/' ROW FORMAT SERDE 'org.apache.hadoop.hive.ql.io.parquet.serde.ParquetHiveSerDe' WITH SERDEPROPERTIES ('odps.properties.rolearn'='acs:ram::<uid>:role/AliyunODPSDefaultRole') STORED AS PARQUET PROPERTIES('mcfed.parquet.compression'='SNAPPY') ;Log on to the OSS console to check whether the UNLOAD operation is successful.