Use DataWorks Data Integration to automatically partition and migrate data from ApsaraDB RDS to MaxCompute. This tutorial covers three scenarios:

| Scenario | Use when |

|---|---|

| Daily incremental sync with automatic date partitioning | You want to sync new records daily and store them in date-based partitions automatically |

| Backfill historical data | You have existing records in RDS that predate the scheduled sync and need to populate past partitions |

| Partition by non-date fields | You need to distribute records by a field such as region or category rather than by date |

How it works

The sync pipeline runs on a daily schedule. Each run reads data from a MySQL source in ApsaraDB RDS, writes it to a partitioned MaxCompute table, and creates a new partition automatically.

The partition value is controlled by ${bizdate}, a built-in DataWorks scheduling parameter. By default, ${bizdate} resolves to the day before the task execution date in yyyymmdd format. This is because ETL jobs typically process the previous day's business data.

To use a different date offset, replace ${bizdate} with a custom scheduling parameter.

Prerequisites

Before you begin, make sure you have:

-

Activated MaxCompute and DataWorks. See Activate MaxCompute and DataWorks

-

Created a workflow in DataWorks (this tutorial uses basic mode). See Create a workflow

-

Added a MySQL data source. See Configure a MySQL data source

-

Added a MaxCompute data source. See Configure a MaxCompute data source

This tutorial uses the basic mode of DataWorks. When you create a workspace, the Use Data Studio (New Version) option is deselected by default. The steps below are not applicable to workspaces in public preview.

Set up automatic date partitioning

Step 1: Create the destination table

-

Log in to the DataWorks console.

-

In the left navigation pane, click Workspace.

-

In the Actions column of your workspace, click Shortcuts > Data Development.

-

Right-click the workflow and choose Create Table > MaxCompute > Table.

-

On the Create Table page, select the engine instance, schema, and path. Enter a Table Name and click Create.

-

On the table editing page, click the

icon to switch to DDL mode.

icon to switch to DDL mode. -

In the DDL dialog box, paste the following statement and click Generate Table Schema. In the Confirm dialog box, click OK.

CREATE TABLE IF NOT EXISTS ods_user_info_d ( uid STRING COMMENT 'User ID', gender STRING COMMENT 'Gender', age_range STRING COMMENT 'Age range', zodiac STRING COMMENT 'Zodiac sign' ) PARTITIONED BY ( dt STRING ); -

Click Submit to Production Environment.

Step 2: Create an offline sync node

-

On the data analytics page, right-click the workflow and choose Create Node > Data Integration > Offline synchronization.

-

In the Create Node dialog box, enter a Name and click Confirm.

-

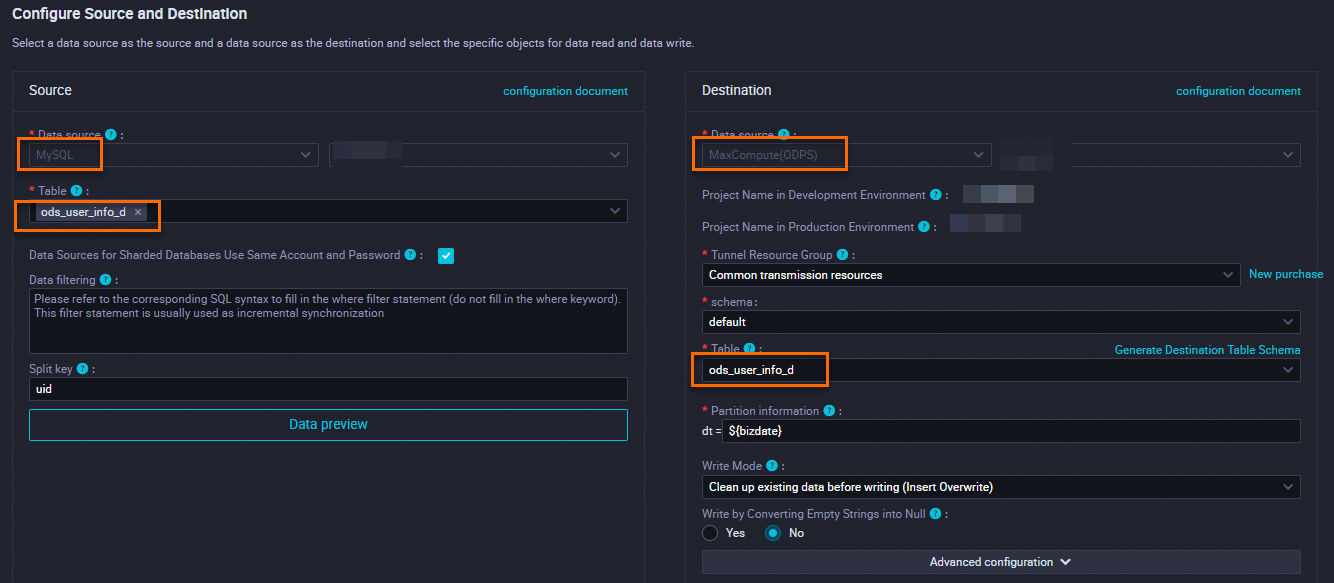

Configure the data source and destination:

Field Value Source Select the MySQL data source you created Resource Group Select an exclusive resource group for Data Integration Destination Select the MaxCompute data source you created -

Test connectivity, then click Next to configure the task.

Step 3: Configure partition parameters

-

In the right-side navigation pane, click Properties.

-

In the Scheduling Parameters section, review the default parameter. The default value is

${bizdate}inyyyymmddformat. This maps to the partition field in the Destination section and resolves to the previous day's date at runtime. To use a different date offset, replace${bizdate}with a custom parameter. Wrap the date formula in square brackets[]:The default calculation unit is days. For

add_months, the unit is months:$[add_months(yyyymmdd,12*N)-M/24/60]computes(current timestamp − 12×N months − M/24/60 days)and returns the result inyyyymmddformat.Offset Formula Notes N days later $[yyyymmdd+N]Default unit is days N days earlier $[yyyymmdd-N]Default unit is days N weeks later $[yyyymmdd+7*N]Multiply by 7 for weeks N weeks earlier $[yyyymmdd-7*N]Multiply by 7 for weeks N months later $[add_months(yyyymmdd,N)]add_monthsunit is monthsN months earlier $[add_months(yyyymmdd,-N)]add_monthsunit is monthsN years later $[add_months(yyyymmdd,12*N)]12 months per year N years earlier $[add_months(yyyymmdd,-12*N)]12 months per year N hours later $[hh24miss+N/24]Result in hh24miss format N hours earlier $[hh24miss-N/24]Result in hh24miss format N minutes later $[hh24miss+N/24/60]Result in hh24miss format N minutes earlier $[hh24miss-N/24/60]Result in hh24miss format

Step 4: Run and verify

-

Click the

icon to run the node.

icon to run the node. -

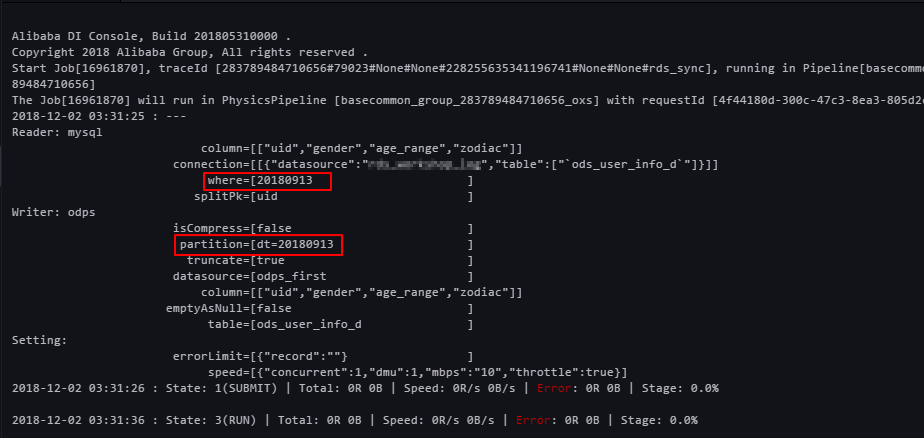

After the run completes, open the Operation Log to confirm the data was written successfully.

-

On the MaxCompute client, run the following query to verify the partition was created and data was loaded:

SELECT count(*) from ods_user_info_d where dt = 20180913;

Backfill historical data

If you have historical data in ApsaraDB RDS that predates your scheduled sync, use the Data Backfill feature in DataWorks Operation Center to fill in the missing partitions.

-

In the sync node's Source section, set the Data Filtering condition to filter records by date. For example, use

${bizdate}to filter by business date. -

In DataWorks Operation Center, select the sync node and use the Data Backfill feature to submit runs for the historical date range. See Perform a data backfill operation and view the data backfill instance (new version).

-

After the runs complete, check the run log to confirm that MaxCompute created a partition for each date.

-

On the MaxCompute client, run the following query to verify data in a specific partition:

SELECT count(*) from ods_user_info_d where dt = 20180913;

Partition by non-date fields

Data Integration creates partitions automatically only for date-based fields. To partition data by a non-date field — such as a region or category — use a three-node workflow that loads data into a temporary table and then uses a dynamic INSERT OVERWRITE statement to distribute records into the correct partitions.

The temporary table holds a full copy of the source data before partitioning. Make sure your MaxCompute project has enough storage for the intermediate data. The workflow drops the temporary table after partitioning is complete to reclaim storage.

Step 1: Set up the three-node workflow

Node 1 — Create and populate a temporary table

Create an SQL script node and run the following statements. This creates a non-partitioned temporary table and loads all data from the source MaxCompute table into it.

drop table if exists ods_user_t;

CREATE TABLE ods_user_t (

dt STRING,

uid STRING,

gender STRING,

age_range STRING,

zodiac STRING);

-- Copy data from the source table into the temporary table.

insert overwrite table ods_user_t select dt,uid,gender,age_range,zodiac from ods_user_info_d;Node 2 — Sync all data from ApsaraDB RDS to MaxCompute

Create an offline sync node named mysql_to_odps. Configure it to sync all data from ApsaraDB RDS to MaxCompute without setting any partition.

Node 3 — Dynamically partition the data into the destination table

Create an SQL script node and run the following statements. The INSERT OVERWRITE ... partition(dt) syntax tells MaxCompute to read the dt field from each row and write it to the matching partition, creating the partition if it does not exist.

drop table if exists ods_user_d;

-- Create the partitioned destination table.

CREATE TABLE ods_user_d (

uid STRING,

gender STRING,

age_range STRING,

zodiac STRING

)

PARTITIONED BY (

dt STRING

);

-- Dynamic partitioning: MaxCompute reads the dt field from each row and

-- writes it to the matching partition. For example, a row where dt=20181025

-- is written to the partition dt=20181025, which is created automatically.

-- Include dt in the SELECT list so MaxCompute can use it as the partition key.

insert overwrite table ods_user_d partition(dt)

select dt,uid,gender,age_range,zodiac from ods_user_t;

-- Drop the temporary table to free up storage.

drop table if exists ods_user_t;In MaxCompute, you can use SQL statements to complete data synchronization.For more information about SQL statements, see Learning about MaxCompute, a big data tool of Alibaba Cloud--Use of partitioned tables.

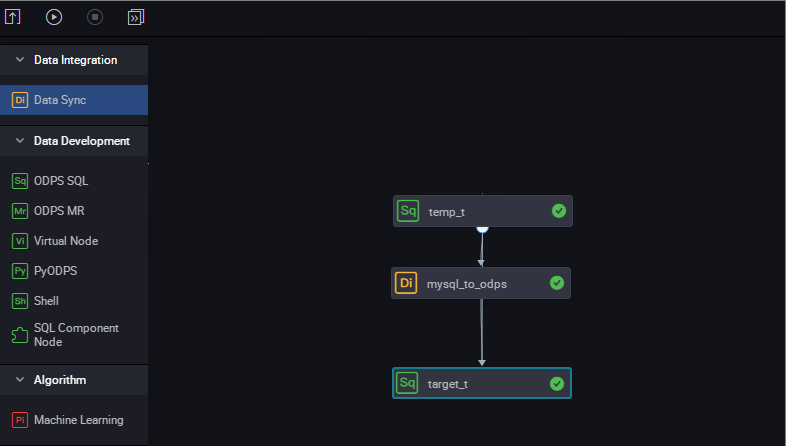

Step 2: Configure the workflow dependencies

Set the three nodes to run sequentially: Node 1 → Node 2 → Node 3.

Step 3: Run and verify

-

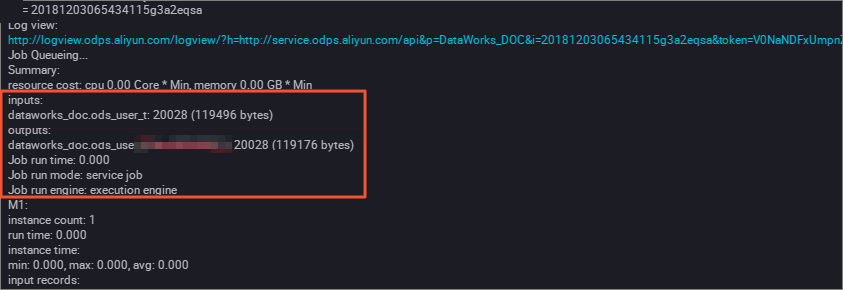

Trigger the workflow. Monitor the execution progress, paying attention to the dynamic partitioning step in Node 3.

-

After the workflow completes, run the following query on the MaxCompute client to verify data in a specific partition:

SELECT count(*) from ods_user_d where dt = 20180913;