This topic describes how to use Flink to create a Paimon DLF Catalog, read MySQL Change Data Capture (CDC) business data, and write the data to DLF. You can then use a MaxCompute external project to perform federated query and analysis on the data lake and write the results back to DLF. This topic uses the new version of DLF, which differs from DLF 1.0. For more information about the new version of DLF, see Data Lake Formation.

Prerequisites

You have activated the OSS service.

You have activated the DLF service.

You have activated the Flink service.

You have created a MaxCompute project and enabled the schema switch for project-level metadata.

You have created an RDS for MySQL instance.

Procedure

Step 1: Prepare the source data

If you already have MySQL test data, skip this step.

This step simulates real-time data updates from a business system. Flink writes the data to the data lake in Paimon format.

Log on to the RDS console.

In the navigation pane on the left, click Instances. Then, select a region in the upper-left corner.

On the Instances page, click the target instance's Instance ID/Name to open its details page.

In the left navigation pane, click Databases.

Click Create Database. Configure the following parameters:

Parameter

Required

Description

Example

Database Name

Required

The name must be 2 to 64 characters in length.

It must start with a letter and end with a letter or a digit.

It can contain lowercase letters, digits, underscores (_), and hyphens (-).

The database name must be unique within the instance.

If the database name contains a hyphen (

-), the hyphen (-) in the folder name of the created database is replaced with@002d.

dlf25_paimonSupported Character Set

Required

Select a character set as needed.

utf8Authorized By

Optional

Select the accounts that need to access this database. You can leave this parameter empty and attach accounts after the database is created.

Only standard accounts are displayed here. Privileged accounts have all permissions on all databases and do not require authorization.

DefaultDescription

Optional

Enter remarks about the database for future management. The remarks can be up to 256 characters in length.

Create a test database for the external project DLF 2.5.Click Log On to Database. In the left navigation pane, select Database Instances. Double-click the database that you created. On the SQLConsole page, execute the following statements to create a test table and write test data.

If the instance exists but the target database is not displayed after you expand the instance, the reason may be one of the following:

The logon account does not have access to the target database: You can go to the Accounts page of the RDS instance to modify the account permissions or change the logon database account.

The metadata is not synchronized, which prevents the directory from being displayed: Hover the mouse pointer over the instance that contains the target database. Click the

button to the right of the instance name to refresh the database list.

button to the right of the instance name to refresh the database list.

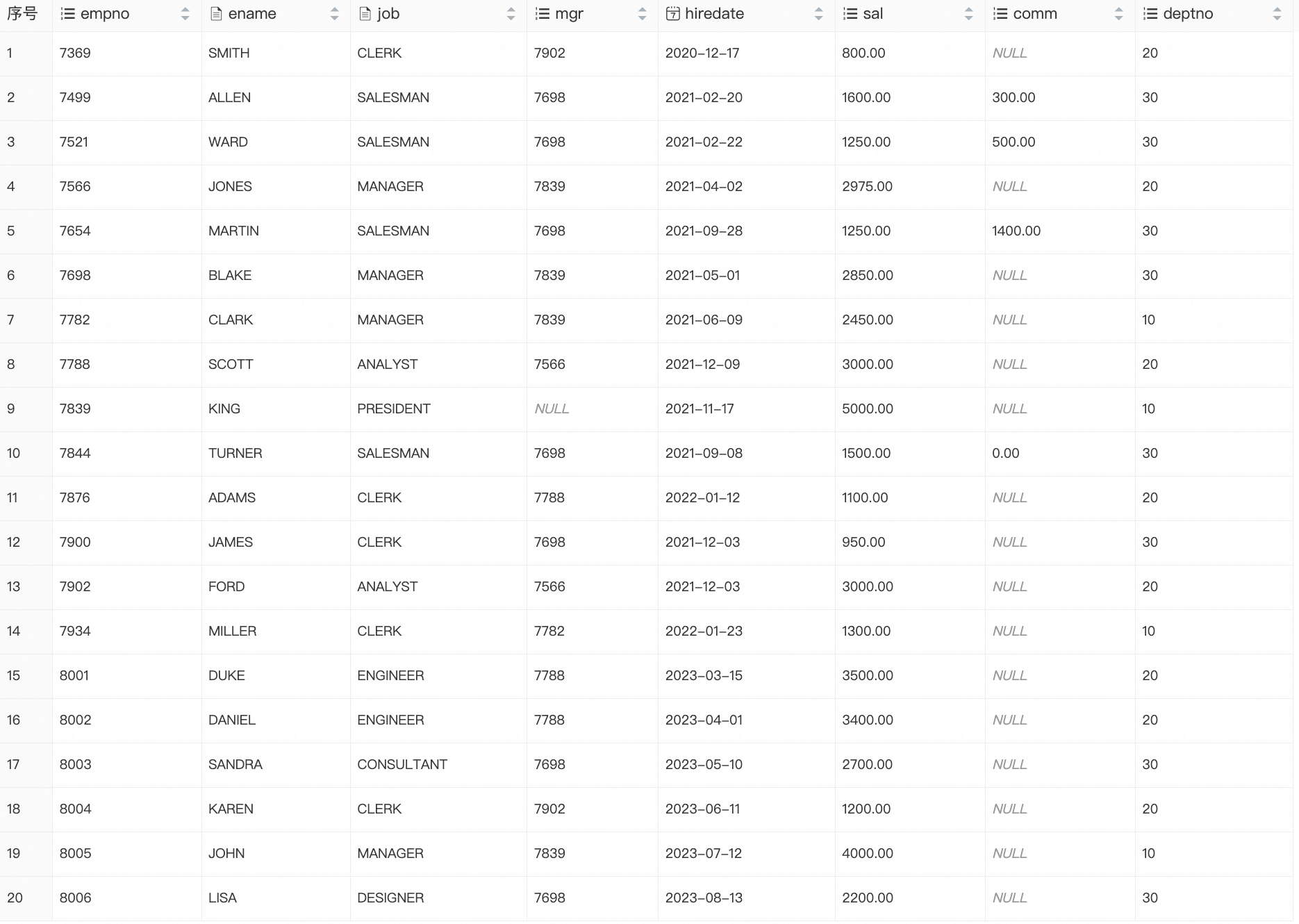

CREATE TABLE emp ( empno INT PRIMARY KEY, ename VARCHAR(20), job VARCHAR(20), mgr INT, hiredate DATE, sal DECIMAL(10,2), comm DECIMAL(10,2), deptno INT ); INSERT INTO emp VALUES (7369,'SMITH','CLERK',7902,'2020-12-17', 800.00,NULL,20), (7499,'ALLEN','SALESMAN',7698,'2021-02-20',1600.00,300.00,30), (7521,'WARD','SALESMAN',7698,'2021-02-22',1250.00,500.00,30), (7566,'JONES','MANAGER',7839,'2021-04-02',2975.00,NULL,20), (7654,'MARTIN','SALESMAN',7698,'2021-09-28',1250.00,1400.00,30), (7698,'BLAKE','MANAGER',7839,'2021-05-01',2850.00,NULL,30), (7782,'CLARK','MANAGER',7839,'2021-06-09',2450.00,NULL,10), (7788,'SCOTT','ANALYST',7566,'2021-12-09',3000.00,NULL,20), (7839,'KING','PRESIDENT',NULL,'2021-11-17',5000.00,NULL,10), (7844,'TURNER','SALESMAN',7698,'2021-09-08',1500.00,0.00,30), (7876,'ADAMS','CLERK',7788,'2022-01-12',1100.00,NULL,20), (7900,'JAMES','CLERK',7698,'2021-12-03', 950.00,NULL,30), (7902,'FORD','ANALYST',7566,'2021-12-03',3000.00,NULL,20), (7934,'MILLER','CLERK',7782,'2022-01-23',1300.00,NULL,10), (8001,'DUKE','ENGINEER',7788,'2023-03-15',3500.00,NULL,20), (8002,'DANIEL','ENGINEER',7788,'2023-04-01',3400.00,NULL,20), (8003,'SANDRA','CONSULTANT',7698,'2023-05-10',2700.00,NULL,30), (8004,'KAREN','CLERK',7902,'2023-06-11',1200.00,NULL,20), (8005,'JOHN','MANAGER',7839,'2023-07-12',4000.00,NULL,10), (8006,'LISA','DESIGNER',7698,'2023-08-13',2200.00,NULL,30);Query the data in the test table.

SELECT * FROM emp;The following result is returned:

Step 2: Prepare a DLF metadatabase

Log on to the Data Lake Formation (DLF) console and select a region in the upper-left corner.

In the navigation pane on the left, choose Catalog List.

On the Catalogs page, click Create Catalog.

On the Data Lake Formation (DLF) page, enter the following parameters and then click Create Catalog:

Parameter

Required

Description

Catalog Name

Required

A custom catalog name. The name must start with a letter and can be 1 to 256 characters in length. It can contain letters, digits, and underscores (_). For example,

db_dlf25_oss.Description

Optional

A custom description.

Storage Type

Required

Standard.

Storage Redundancy Type

Required

Locally redundant storage: Data is stored in a single zone. If the zone where the data is stored becomes unavailable, the data becomes inaccessible. We recommend that you use zone-redundant storage.

Zone-redundant storage: This is a multi-zone redundancy mechanism within the same region. If a single zone becomes unavailable, your data remains available. After a catalog is created, you cannot change its storage redundancy type from zone-redundant to locally redundant. Zone-redundant storage provides higher data availability than locally redundant storage but is more expensive. We recommend zone-redundant storage for data that requires high availability.

Automatic Storage Tiering

Required

After you enable this feature, the system automatically tiers the storage of hot and cold data for all tables in the catalog based on the configured lifecycle rules. Specify the following parameters as needed:

Infrequent Access, Archive, and Cold Archive have minimum retention periods of 30 days, 60 days, and 180 days, respectively. If you delete data before the minimum retention period ends, you are still charged for the minimum retention period.

When you use a lifecycle rule to change the storage class, fees for the corresponding number of requests are incurred. Configure this setting with caution.

Cold Archive is a locally redundant storage class. Its data is stored redundantly within a specific zone. If that zone becomes unavailable, the data becomes inaccessible. If your business requires higher availability, we strongly recommend that you use zone-redundant storage classes (Standard, Infrequent Access, or Archive) to store and use your data.

After data is converted to Cold Archive, you must restore it before you can access it. Within the restoration quota, the restoration latency is 2 to 5 hours. You cannot access the data until the restoration is complete. Proceed with caution.

Step 3: Create Paimon and MySQL catalogs using Flink

Create a Paimon catalog

Log on to the Flink console, and select a region in the upper-left corner.

Click the name of the target workspace. In the navigation pane on the left, select Catalogs .

On the Catalog List page, click Create Catalog on the right. In the Create Catalog dialog box, select Apache Paimon, click Next , and configure the following parameters:

Parameter

Required

Description

metastore

Required

The metastore type. In this example, select

dlf.catalog name

Required

Select the DLF Catalog of the version you want to associate. Select DLF V2.5. In this example, it is

db_dlf25_osscreated in DLF.

Create a MySQL catalog

Log on to the Flink console, and select a region in the upper-left corner.

Add an IP address to the whitelist.

In the Actions column for the target workspace, click Details.

In the Workspace Details panel, copy the CIDR Block of the vSwitch.

Log on to the RDS console.

In the navigation pane on the left, click Instances. Then, select a region in the upper-left corner.

On the Instances page, click the target instance's Instance ID/Name to open its details page.

In the navigation pane on the left, click Whitelist and SecGroup.

On the Whitelist Settings tab, click Modify.

In the Edit Whitelist dialog box, add the copied CIDR block to the IP Addresses field, and then click OK.

Log on to the Flink console, and select a region in the upper-left corner.

Click the name of the target workspace. In the navigation pane on the left, select Catalogs .

On the Catalog List page, click Create Catalog. In the Create Catalog dialog box, select MySQL, click Next, and configure the following parameters:

Parameter

Required

Description

catalog name

Required

A custom name for the MySQL catalog. For example,

mysql-catalog-dlf25.hostname

Required

The IP address or hostname of the MySQL database.

You can log on to the RDS for MySQL console. On the database instance details page, click Database Connection to view the database's Internal Endpoint, Public Endpoint, and Internal Port.

When accessing across VPCs or over the Internet, you need to establish network connectivity. For more information, see Network Connectivity.

port

Default

The port used to connect to the server. The default value is 3306.

default database

Required

The default database name. For example,

dlf25_paimon.username

Required

The username used to connect to the MySQL database server. You can log on to the RDS for MySQL console. On the instance details page, click Account Management to view the username.

password

Required

The password used to connect to the MySQL database server. You can log on to the RDS for MySQL console. On the instance details page, click Account Management to view the password.

Step 4: Read MySQL data and write it to a Paimon table in DLF using Flink

Log on to the Flink console, and select a region in the upper-left corner.

Click the target workspace name, and then in the left navigation pane, select .

On the Drafts tab, click

to create a new folder.

to create a new folder.Right-click the folder and select New Blank Stream Draft. In the New Draft dialog box, enter a Name and select an Engine Version.

In the file, write and execute the following SQL statement. Make sure to modify the names in the code based on your actual environment.

CREATE TABLE IF NOT EXISTS `db_dlf25_oss`.`default`.`emp` WITH ( 'bucket' = '4', 'changelog-producer' = 'input' ) AS TABLE `mysql-catalog-dlf25`.`dlf25_paimon`.`emp`;(Optional) In the upper-right corner, click Validate to validate the syntax of the job's Flink SQL statement.

Click Deploy in the upper-right corner. In the Deploy draft dialog box, specify the Comment, Label, and Deployment Target, and then click Confirm.

Click the target workspace name. In the navigation pane on the left, select .

On the Deployments page, click the name of the target job to open its Configuration page.

In the upper-right corner of the deployment details page for the target job, click Start, select Initial Mode, and then click Start.

Query the Paimon data

In the left navigation pane, select .

On the New Script tab, you can click

to create a new query script.

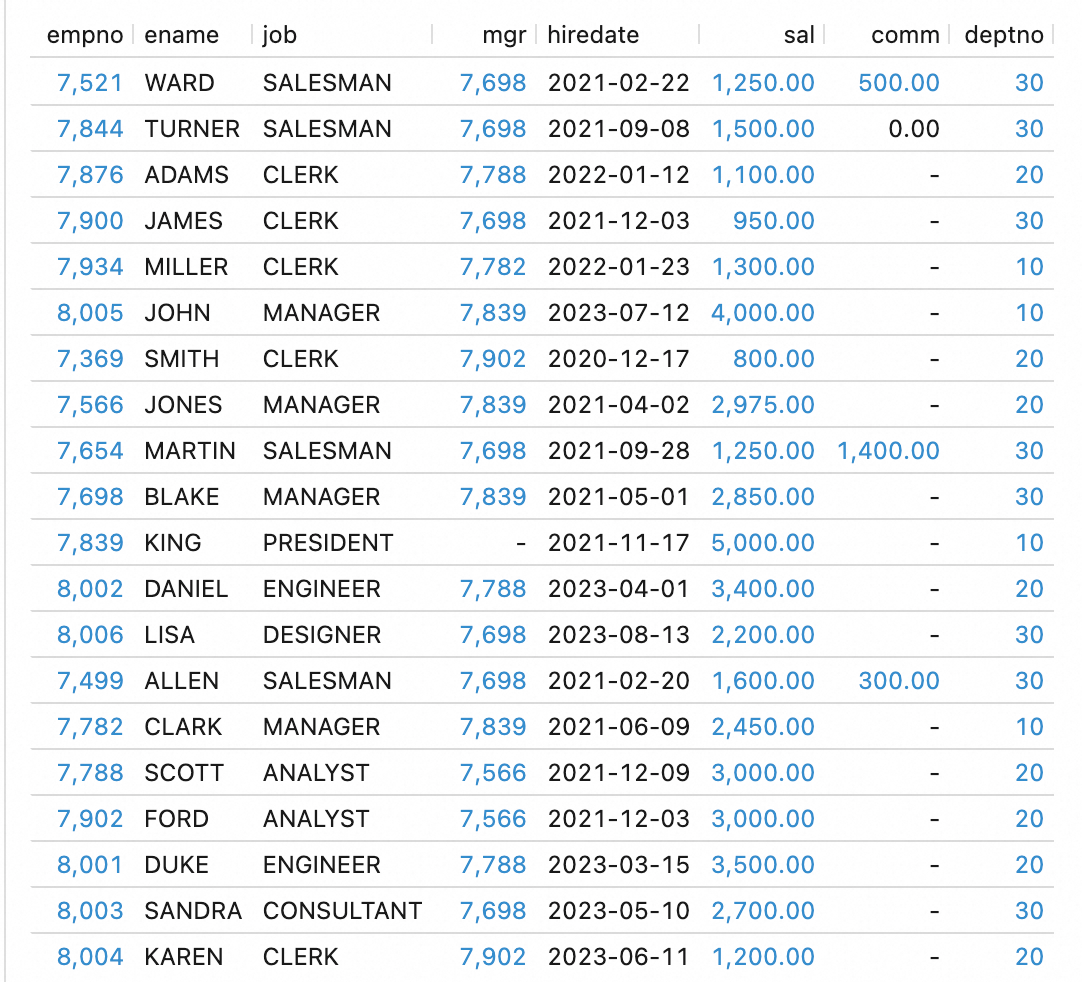

to create a new query script.SELECT * FROM `db_dlf25_oss`.`default`.`emp`;The following result is returned:

Log on to the Data Lake Formation (DLF) console. In the navigation pane, choose . Click the data catalog to go to the database and view the details of the synchronized table.

Step 5: Create a Paimon_DLF external data source in MaxCompute

Log on to the MaxCompute console, and select a region in the upper-left corner.

In the navigation pane on the left, choose .

On the External Data Source page, click Create External Data Source.

In the Create External Data Source dialog box, configure the parameters. The following tables describe the parameters.

Parameter

Required

Description

External Data Source Type

Required

Select DLF+OSS.

External Data Source Name

Required

A custom name. The naming conventions are as follows:

The name must start with a letter and can contain only lowercase letters, underscores (_), and digits.

The name cannot exceed 128 characters in length.

For example,

mysql_paimon_dlf25.Description

Optional

Enter a description as needed.

Region

Required

The default value is the current region.

DLF Endpoint

Required

The default value is the DLF Endpoint of the current region.

OSS Endpoint

Required

The default value is the OSS Endpoint of the current region.

RoleARN

Required

The Alibaba Cloud Resource Name (ARN) of the RAM role. This role must have permissions to access both DLF and OSS.

Log on to the Resource Access Management (RAM) console.

In the navigation pane on the left, choose .

In the Basic Information section, you can find the ARN.

Example:

acs:ram::124****:role/aliyunodpsdefaultrole.Foreign Server Supplemental Properties

Optional

Special attributes for the external data source. After you specify them, tasks that use this external data source can access the source system according to the defined behavior.

NoteFor information about the supported parameters, see the official documentation. More parameters will be supported as the product evolves.

Click OK to create the external data source.

On the External Data Source page, find the target data source and click Details in the Actions column.

Step 6: Create a Paimon_DLF external project in MaxCompute

Log on to the MaxCompute console, and select a region in the upper-left corner.

In the navigation pane on the left, choose .

On the External Project tab, click Create Project.

In the Create Project dialog box, configure the project information as prompted and click OK.

Parameter

Required

Description

Project Type

Required

The default value is External Project.

Region

Required

The default value is the current region. You cannot change it here.

Project Name (Globally Unique)

Required

The name must start with a letter, contain letters, digits, and underscores (_), and be 3 to 28 characters in length.

MaxCompute Foreign Server Type

Optional

The default value is Paimon_DLF.

MaxCompute Foreign Server

Optional

Use Existing: A list of created external data sources appears.

Create Foreign Server: Create and use a new external data source.

MaxCompute Foreign Server Name

Required

Use Existing: Select the name of a created external data source from the drop-down list.

New external data source: Use the name of the new external data source.

Authentication And Authorization

Required

The identity of the task executor. If a service-linked role does not exist, you must create one before you can use this mode.

Service-linked Role

Required

Generated by default.

Endpoint

Required

Generated by default.

Data Catalog

Required

The DLF data catalog.

Billing Method

Required

Subscription or Pay-as-you-go.

Default Quota

Required

Select an existing quota.

Description

Optional

A custom project description.

Step 7: Analyze data

Use a connection tool to log on to the external project.

List the schemas in the external project.

-- Enable the schema syntax at the session level. SET odps.namespace.schema=true; SHOW schemas; -- The following result is returned. ID = 20250919****am4qb default system OKList the tables in the schema of the external project.

USE schema default; SHOW tables; -- The following result is returned. ID = 20250919****am4qb acs:ram::<uid>:root emp OKRead data from the Paimon table in DLF.

SELECT * FROM emp; -- The following result is returned. +------------+------------+------------+------------+------------+------------+------------+------------+ | empno | ename | job | mgr | hiredate | sal | comm | deptno | +------------+------------+------------+------------+------------+------------+------------+------------+ | 7521 | WARD | SALESMAN | 7698 | 2021-02-22 | 1250 | 500 | 30 | | 7844 | TURNER | SALESMAN | 7698 | 2021-09-08 | 1500 | 0 | 30 | | 7876 | ADAMS | CLERK | 7788 | 2022-01-12 | 1100 | NULL | 20 | | 7900 | JAMES | CLERK | 7698 | 2021-12-03 | 950 | NULL | 30 | | 7934 | MILLER | CLERK | 7782 | 2022-01-23 | 1300 | NULL | 10 | | 8005 | JOHN | MANAGER | 7839 | 2023-07-12 | 4000 | NULL | 10 | | 7369 | SMITH | CLERK | 7902 | 2020-12-17 | 800 | NULL | 20 | | 7566 | JONES | MANAGER | 7839 | 2021-04-02 | 2975 | NULL | 20 | | 7654 | MARTIN | SALESMAN | 7698 | 2021-09-28 | 1250 | 1400 | 30 | | 7698 | BLAKE | MANAGER | 7839 | 2021-05-01 | 2850 | NULL | 30 | | 7839 | KING | PRESIDENT | NULL | 2021-11-17 | 5000 | NULL | 10 | | 8002 | DANIEL | ENGINEER | 7788 | 2023-04-01 | 3400 | NULL | 20 | | 8006 | LISA | DESIGNER | 7698 | 2023-08-13 | 2200 | NULL | 30 | | 7499 | ALLEN | SALESMAN | 7698 | 2021-02-20 | 1600 | 300 | 30 | | 7782 | CLARK | MANAGER | 7839 | 2021-06-09 | 2450 | NULL | 10 | | 7788 | SCOTT | ANALYST | 7566 | 2021-12-09 | 3000 | NULL | 20 | | 7902 | FORD | ANALYST | 7566 | 2021-12-03 | 3000 | NULL | 20 | | 8001 | DUKE | ENGINEER | 7788 | 2023-03-15 | 3500 | NULL | 20 | | 8003 | SANDRA | CONSULTANT | 7698 | 2023-05-10 | 2700 | NULL | 30 | | 8004 | KAREN | CLERK | 7902 | 2023-06-11 | 1200 | NULL | 20 | +------------+------------+------------+------------+------------+------------+------------+------------+Query the emp table to retrieve the complete information of the employees who have the highest and lowest salaries in each department.

WITH ranked AS ( SELECT e.*, ROW_NUMBER() OVER (PARTITION BY deptno ORDER BY sal DESC) AS rn_desc, ROW_NUMBER() OVER (PARTITION BY deptno ORDER BY sal ASC) AS rn_asc FROM emp e ) SELECT * FROM ranked WHERE rn_desc = 1 OR rn_asc = 1 ORDER BY deptno, sal DESC; -- The following result is returned. +-------+--------+-----------+------+------------+------+------+--------+------------+------------+ | empno | ename | job | mgr | hiredate | sal | comm | deptno | rn_desc | rn_asc | +-------+--------+-----------+------+------------+------+------+--------+------------+------------+ | 7839 | KING | PRESIDENT | NULL | 2021-11-17 | 5000 | NULL | 10 | 1 | 4 | | 7934 | MILLER | CLERK | 7782 | 2022-01-23 | 1300 | NULL | 10 | 4 | 1 | | 8001 | DUKE | ENGINEER | 7788 | 2023-03-15 | 3500 | NULL | 20 | 1 | 8 | | 7369 | SMITH | CLERK | 7902 | 2020-12-17 | 800 | NULL | 20 | 8 | 1 | | 7698 | BLAKE | MANAGER | 7839 | 2021-05-01 | 2850 | NULL | 30 | 1 | 8 | | 7900 | JAMES | CLERK | 7698 | 2021-12-03 | 950 | NULL | 30 | 8 | 1 | +-------+--------+-----------+------+------------+------+------+--------+------------+------------+

Step 8: Write the analysis results back to DLF

In the external project from the previous step, create a table to store the SQL analysis results.

CREATE TABLE emp_detail ( empno INT, ename VARCHAR(20), job VARCHAR(20), mgr INT, hiredate DATE, sal DECIMAL(10,2), comm DECIMAL(10,2), deptno INT );You can write the analysis results from Step 5 to a new table.

WITH ranked AS ( SELECT e.*, ROW_NUMBER() OVER (PARTITION BY deptno ORDER BY sal DESC) AS rn_desc, ROW_NUMBER() OVER (PARTITION BY deptno ORDER BY sal ASC) AS rn_asc FROM emp e ) insert into emp_detail SELECT empno,ename,job,mgr, hiredate,sal,comm,deptno FROM ranked WHERE rn_desc = 1 OR rn_asc = 1 ORDER BY deptno, sal DESC;Query the new table.

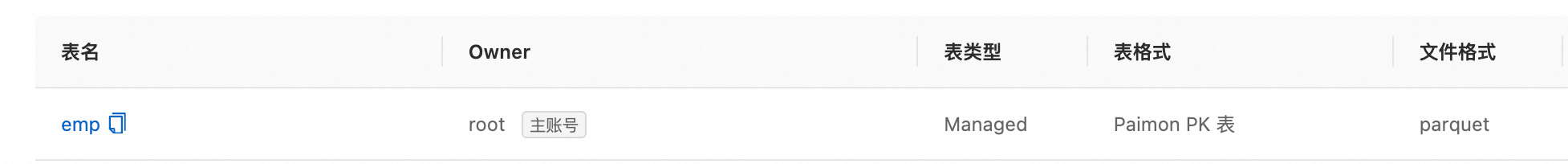

SELECT * FROM emp_detail; -- The following result is returned. +------------+------------+------------+------------+------------+------------+------------+------------+ | empno | ename | job | mgr | hiredate | sal | comm | deptno | +------------+------------+------------+------------+------------+------------+------------+------------+ | 7839 | KING | PRESIDENT | NULL | 2021-11-17 | 5000 | NULL | 10 | | 7934 | MILLER | CLERK | 7782 | 2022-01-23 | 1300 | NULL | 10 | | 8001 | DUKE | ENGINEER | 7788 | 2023-03-15 | 3500 | NULL | 20 | | 7369 | SMITH | CLERK | 7902 | 2020-12-17 | 800 | NULL | 20 | | 7698 | BLAKE | MANAGER | 7839 | 2021-05-01 | 2850 | NULL | 30 | | 7900 | JAMES | CLERK | 7698 | 2021-12-03 | 950 | NULL | 30 | +------------+------------+------------+------------+------------+------------+------------+------------+Log on to the Data Lake Formation (DLF) console. In the navigation pane on the left, choose Catalog List. The newly created

emp_detailtable appears in the list.