The RAY resource group for the Lindorm compute engine provides a distributed computing service for end-to-end processing of AI workloads. This resource group is compatible with the RAY computing model and programming interfaces. It is deeply integrated with the Lindorm multi-model storage engine to efficiently handle data pre-processing, training, and inference tasks. This topic describes how to enable and manage RAY resource groups and explains their billing methods.

The RAY resource group is currently in invitational preview. To use this feature, contact Lindorm technical support (DingTalk ID: s0s3eg3) to request access.

Prerequisites

You have enabled LindormTable.

You have enabled the Lindorm compute engine.

Billing methods

RAY resource groups operate in persistent mode (shown as Resident in the console). The fees consist of two parts:

Persistent resource fees: These fees are calculated in CUs based on the configured persistent resources for the head and worker nodes.

Elastic resource fees: Worker nodes support elastic scaling based on the workload. The fees for these elastically scaled worker nodes are calculated in CUs based on usage duration.
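The two fee components above can be sketched as a small calculation. This is only an illustrative sketch: the conversion factor `CU_PER_CORE`, the `NodeSpec` type, and both function names are hypothetical and not part of Lindorm. The actual CU conversion rules and unit prices are defined by Lindorm billing and are not stated in this topic.

```python
from dataclasses import dataclass

# Assumption for illustration only: 1 CPU core counts as 1 CU.
# The real conversion is defined by Lindorm billing, not by this sketch.
CU_PER_CORE = 1


@dataclass
class NodeSpec:
    """Hypothetical description of a group of identical persistent nodes."""
    cores: int   # CPU cores per node
    count: int   # number of nodes that are always running


def persistent_cu_hours(nodes, hours):
    """CU-hours for the configured persistent head and worker nodes."""
    total_cores = sum(n.cores * n.count for n in nodes)
    return total_cores * CU_PER_CORE * hours


def elastic_cu_hours(cores_per_worker, worker_hours):
    """CU-hours for elastically scaled workers, based on usage duration.

    worker_hours is the summed running time of all scaled-out workers,
    for example 3 extra workers for 2 hours each gives 6 worker-hours.
    """
    return cores_per_worker * CU_PER_CORE * worker_hours


# One 4-core head node and two 4-core persistent workers for 24 hours,
# plus 6 worker-hours of elastic 4-core workers.
persistent = persistent_cu_hours([NodeSpec(4, 1), NodeSpec(4, 2)], 24)
elastic = elastic_cu_hours(4, 6)
total = persistent + elastic
```

Under these assumed numbers, the persistent part dominates because it accrues for the whole period, while the elastic part accrues only while the extra workers are running.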

Enable a RAY resource group

Log on to the Lindorm console. In the upper-left corner of the page, select the region of the instance. On the Instances page, click the ID of the target instance or click View Instance Details in the Actions column for the instance.

On the Instance Details page, in the Configurations section, click Resource Groups in the Operations column for Compute Engine.

On the Resource Group Details page, click Create Resource Group and configure the following parameters:

Resource Group Type: Select RAY.

Resource Group Name: The name of the resource group. The name can contain only lowercase letters and numbers, and must be 63 characters or less in length. For example, raycg.

Running Mode: The default is Resident. In Resident mode, a Ray cluster is always running, and you can submit RAY jobs to this cluster. When no jobs are running, the cluster operates with a minimal amount of resources. After a job is submitted, the cluster dynamically requests resources based on the job's requirements.

Parameters for RAY resident resource groups:

Head node configuration. Select the resource specifications and disk space for the head node based on your cluster size.

Number of worker groups. Select one or more worker groups as needed. Each worker group can have different resource specifications.

Worker group configuration. Configure the resource specifications, disk space, and the minimum and maximum number of running replicas for each worker group.

Head node configuration

| Configuration item | Description |
| --- | --- |
| Head resource type | RAY resource groups support CPU and GPU resource types. |
| Head Resource Specifications | For the CPU resource type, select a CPU and memory quota, such as 4 cores and 8 GB, 4 cores and 16 GB, or 8 cores and 32 GB. Select a specification based on your cluster size. The default is 4 cores and 16 GB. For the GPU resource type, contact Lindorm technical support (DingTalk ID: s0s3eg3) to use GPU resources. GPU resources are subject to limitations on machine types and inventory. |
| Head disk size | The disk space for the head node. This space is used to store logs, memory overflow files, and resource files used during job execution. The default size is 30 GB. |

Worker group configuration

| Configuration item | Description |
| --- | --- |
| Worker resource type | RAY resource groups support CPU and GPU resource types. |
| Worker Resource Specifications | For the CPU resource type, select a CPU and memory quota, such as 4 cores and 8 GB, 4 cores and 16 GB, or 8 cores and 32 GB. Select worker group resource specifications based on your actual job requirements. The default is 4 cores and 16 GB. For the GPU resource type, contact Lindorm technical support (DingTalk ID: s0s3eg3) to use GPU resources. GPU resources are subject to limitations on machine types and inventory. |
| Worker disk space | The disk space for the worker node. This space is used to store logs, memory overflow files, and resource files used during job execution. The default size is 30 GB. |
| Minimum number of workers | The minimum number of replicas in the worker group. The group maintains this number of replicas when no jobs are running. |
| Maximum number of workers | The maximum number of replicas in the worker group. This is the maximum number of worker nodes that can be provisioned when jobs are running. |
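The effect of the minimum and maximum worker settings can be pictured as clamping the number of replicas a workload asks for. The following is a minimal sketch of that idea, not the scaling logic Lindorm actually runs. The function name `target_replicas` and its parameters are hypothetical.

```python
def target_replicas(requested: int, min_workers: int, max_workers: int) -> int:
    """Clamp the requested replica count to the worker group's bounds.

    requested   -- replicas the current jobs would like to use (0 when idle)
    min_workers -- replicas kept running even when no jobs are running
    max_workers -- upper limit on replicas provisioned while jobs run
    """
    if min_workers < 0 or max_workers < min_workers:
        raise ValueError("expected 0 <= min_workers <= max_workers")
    return max(min_workers, min(requested, max_workers))


# With a worker group configured for 1 to 5 replicas:
idle = target_replicas(0, 1, 5)      # no jobs running, stays at the minimum
moderate = target_replicas(3, 1, 5)  # demand within bounds is met as-is
burst = target_replicas(9, 1, 5)     # demand above the maximum is capped
```

The minimum determines the persistent part of the worker group, and the gap between minimum and maximum is the room available for elastic scaling.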

Click OK to create the RAY resource group. The creation process takes about 20 minutes.
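Because creation takes a while, it can be useful to check the resource group name against the naming rule before you submit the form. The sketch below encodes the rule stated for Resource Group Name (lowercase letters and numbers only, 63 characters or less). The helper name is hypothetical, and rejecting an empty name is an assumption, since the rule does not state a minimum length.

```python
import re

# Lowercase letters and digits only, at most 63 characters.
# Assumption: the name must contain at least one character.
NAME_PATTERN = re.compile(r"[a-z0-9]{1,63}")


def is_valid_resource_group_name(name: str) -> bool:
    """Return True if the name satisfies the documented naming rule."""
    return NAME_PATTERN.fullmatch(name) is not None


examples = ["raycg", "RayCG", "ray-cg", "a" * 64]
results = {name: is_valid_resource_group_name(name) for name in examples}
```

Here `raycg` passes, while names with uppercase letters, hyphens, or more than 63 characters are rejected.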

Manage RAY resource groups

Log on to the Lindorm console. In the upper-left corner of the page, select the region of the instance. On the Instances page, click the ID of the target instance or click View Instance Details in the Actions column for the instance.

On the Instance Details page, in the Configurations section, click Resource Groups in the Operations column for Compute Engine.

On the Resource Group Details page, hover over WebUI in the Actions column for the RAY resource group to obtain its WebUI address. For example:

http://alb-57k7r581oht8rd****.cn-hangzhou.alb.aliyuncsslb.com/ray/raycg/dashboard/

Open the WebUI address of the resource group in a browser to view its running status.

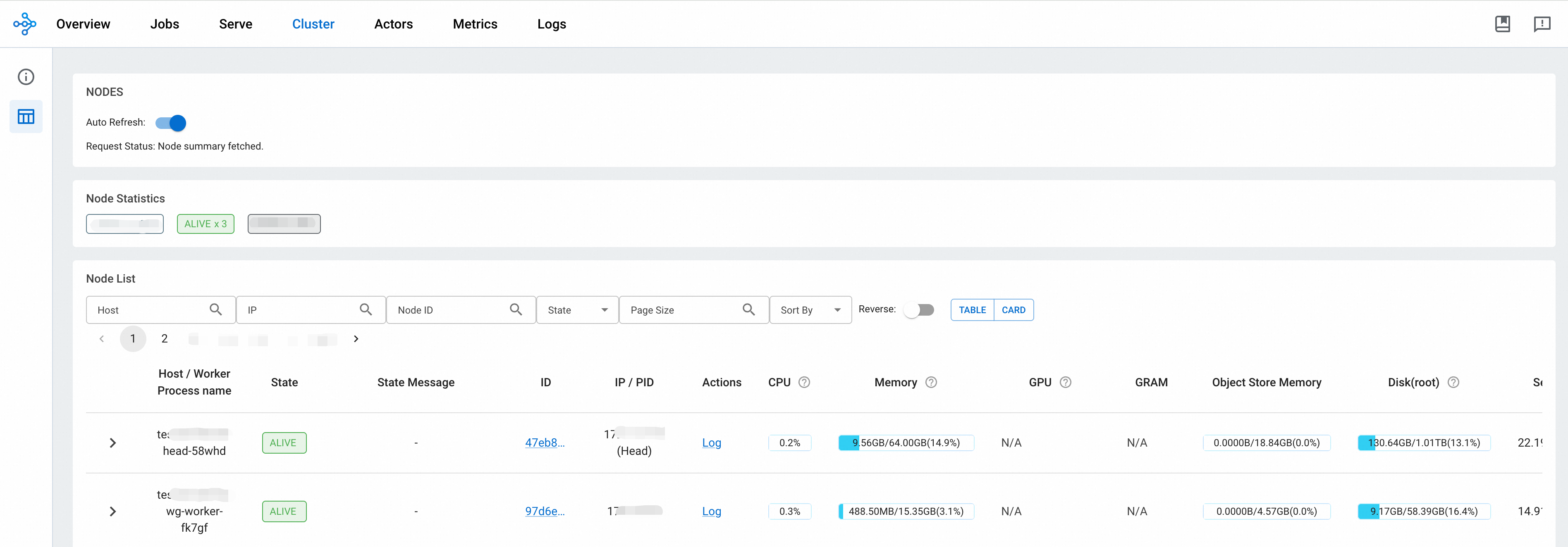

In the navigation bar at the top of the WebUI, you can switch between tabs to view the job list (Jobs), cluster status (Cluster), actor list (Actors), and cluster logs (Logs).

On the Cluster tab, you can view the resource usage for all nodes in the cluster, such as CPU, memory, GPU, and Object Store.
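If you keep WebUI addresses for several resource groups, a small helper can recover which resource group an address belongs to. This sketch assumes the path layout `/ray/<resource group name>/dashboard/`, which is inferred only from the single example address in this topic and may not hold in general. The function name is hypothetical.

```python
from urllib.parse import urlsplit


def resource_group_from_webui(url: str) -> str:
    """Extract the resource group name from a WebUI address.

    Assumes the path looks like /ray/<name>/dashboard/, as in the
    example address shown in this topic.
    """
    segments = [s for s in urlsplit(url).path.split("/") if s]
    if len(segments) == 3 and segments[0] == "ray" and segments[2] == "dashboard":
        return segments[1]
    raise ValueError(f"unexpected WebUI path: {url!r}")


# The masked host from the example address is kept as-is.
example = "http://alb-57k7r581oht8rd****.cn-hangzhou.alb.aliyuncsslb.com/ray/raycg/dashboard/"
group = resource_group_from_webui(example)
```

For the example address, the extracted name is `raycg`, which matches the resource group name used earlier in this topic.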

(Optional) On the Resource Group Details page, you can also Delete a resource group.

Note: RAY resource groups do not currently support modify or restart operations.