Get started with message delivery

Message delivery streams Flink job startup logs, resource usage data, and job events to Simple Log Service (SLS) in real time. Use it to persist operational data for troubleshooting, performance analysis, and audit workflows without modifying your jobs.

How it works

Each message type is delivered independently on its own schedule:

Message type | What it contains | When it's delivered |

Job startup logs | Full log output from Flink environment initialization through JobManager startup and execution graph generation | Once, when the job starts successfully or reaches its final state (failed or finished) |

Resource usage | CPU and memory consumption and allocation for the namespace and its queues | Every 30 seconds for active namespaces |

Job events | Status transitions at each stage of job startup | Immediately when each event occurs |

Job resource consumption | CPU and memory used by running streaming jobs | Every 10 minutes while the job runs |

Job resource consumption covers running streaming jobs only. Batch jobs and jobs on session clusters are excluded. Resource usage data is for capacity tracking and does not support alerting.
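The delivery schedule above can be used to check whether a periodic message type has gone quiet. The following is a minimal sketch, not part of the product: the keys `resource_usage` and `job_resource_consumption` are hypothetical labels, timestamps are assumed to be in seconds, and the tolerance factor is an arbitrary choice.

```python
from typing import Optional

# Expected delivery interval, in seconds, for the periodic message types
# listed in the table above. Job startup logs and job events are delivered
# on demand, so they have no fixed interval.
# The dictionary keys are hypothetical labels, not product identifiers.
EXPECTED_INTERVAL_SECONDS = {
    "resource_usage": 30,              # every 30 seconds for active namespaces
    "job_resource_consumption": 600,   # every 10 minutes while the job runs
}


def is_stale(kind: str, last_seen: float, now: float,
             tolerance: float = 2.0) -> Optional[bool]:
    """Return True if no message of this kind arrived within
    `tolerance` times its expected interval.

    Returns None for message types without a fixed schedule.
    `last_seen` and `now` are assumed to be timestamps in seconds.
    """
    interval = EXPECTED_INTERVAL_SECONDS.get(kind)
    if interval is None:
        return None
    return (now - last_seen) > interval * tolerance
```

A stale result is not necessarily a fault: resource usage is delivered only for active namespaces, and job resource consumption only while a streaming job runs.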

Prerequisites

Before you begin, ensure that you have:

An SLS project and Logstore. See Collect and analyze ECS text logs using LoongCollector for setup steps.

(Optional) An AccessKey pair stored as workspace variables, if you plan to deliver messages across regions. See Variable management.

Limitations

Messages can be delivered only to SLS. Other destinations (OSS, Kafka) are not supported for this feature. To route job runtime logs to OSS, SLS, or Kafka, see Configure job log output.

Message delivery is free. SLS features such as Logstore indexing incur traffic and storage fees. See Billing overview.

You can set server-side encryption for your Logstore. Message delivery inherits this setting. See Data encryption.

Configuration changes take up to 10 seconds to take effect.

Configure message delivery

Step 1: Open the configuration page

Log on to the Realtime Compute for Apache Flink management console.

Find your target workspace and click Console in the Actions column.

In the left navigation pane, choose O&M > Configurations.

Step 2: Configure SLS delivery

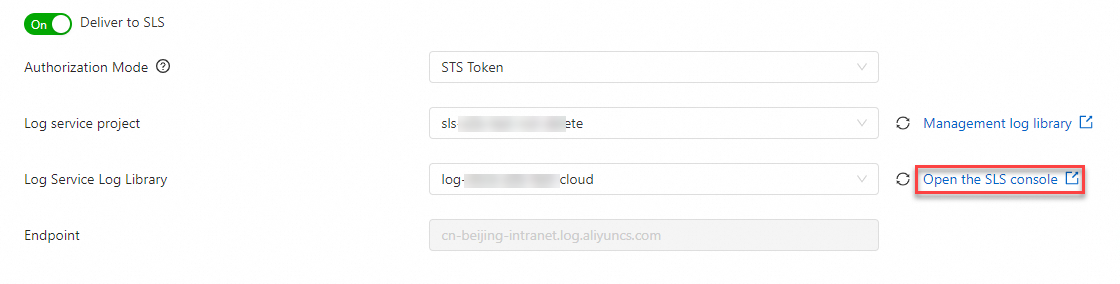

On the Message Delivery tab, turn on Deliver to SLS.

Set the following parameters:

Parameter | Description |

Authorization mode | STS Token: Delivers to Logstores in the same region as your Flink workspace. Only the SLS project and Logstore are required. AccessKey: Delivers to Logstores in any region. Requires the endpoint, AccessKey ID, and AccessKey secret. |

SLS project | The name of your SLS project. |

SLS Logstore | The name of your Logstore. |

Endpoint | The SLS service endpoint URL. In STS Token mode, the system sets this automatically. In AccessKey mode, set this manually. |

Delivery scope | The message types to deliver. See Field descriptions for the fields included in each type. |

AccessKeyId | Your AccessKey ID. Use a workspace variable to avoid exposing credentials. Click the drop-down arrow to select an existing variable, or click the icon to create a new one. For details, see Variable management and How do I view my AccessKey ID and AccessKey secret? |

AccessKeySecret | Your AccessKey secret. Use a workspace variable for the same reason. |

Click Save.

Cross-region delivery requires AccessKey mode. STS Token mode is limited to the same region as your Flink workspace.
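The difference between the two authorization modes comes down to which parameters must be filled in. The sketch below models that rule as a pre-flight check you could run on your own records of the settings. The `DeliveryConfig` class, its field names, and the mode labels `sts_token` and `access_key` are hypothetical and do not correspond to a console or API schema.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DeliveryConfig:
    """Hypothetical container for the parameters in the table above."""
    auth_mode: str                      # "sts_token" or "access_key" (made-up labels)
    project: str
    logstore: str
    endpoint: Optional[str] = None      # set automatically in STS Token mode
    access_key_id: Optional[str] = None
    access_key_secret: Optional[str] = None


def missing_fields(cfg: DeliveryConfig) -> List[str]:
    """List the required parameters that are empty for the chosen mode."""
    required = ["project", "logstore"]
    if cfg.auth_mode == "access_key":
        # AccessKey mode also needs the endpoint and the AccessKey pair.
        required += ["endpoint", "access_key_id", "access_key_secret"]
    elif cfg.auth_mode != "sts_token":
        raise ValueError(f"unknown authorization mode: {cfg.auth_mode!r}")
    return [name for name in required if not getattr(cfg, name)]
```

For example, `missing_fields(DeliveryConfig("access_key", "my-project", "my-logstore"))` reports the endpoint and both AccessKey fields as missing, while the same project and Logstore in `sts_token` mode pass the check.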

View delivered messages

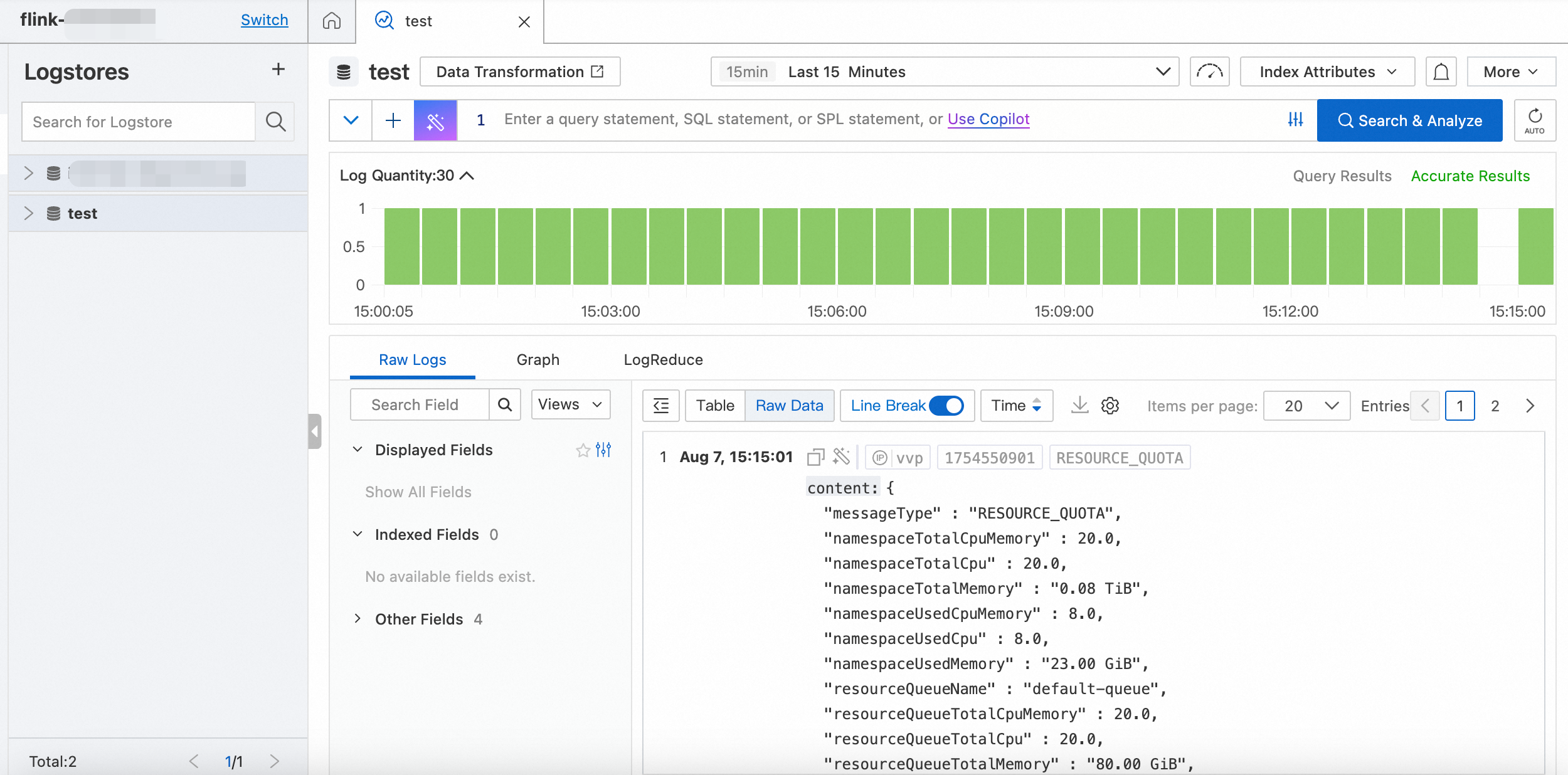

On the Message Delivery tab, locate SLS project, then click Open the SLS console on the right.

In the SLS console, navigate to your Logstore to view raw log entries.

Tip: Enable Logstore indexing to query and filter messages using SLS search syntax. Indexing generates index traffic and uses storage space. For pricing details, see Billing overview.

Field descriptions

Each message type uses the Topic field to identify its type and carries a fixed set of fields.

Job startup logs

Field | Description |

messageType | Fixed value: |

deploymentId | The job deployment ID. |

deploymentName | The job deployment name. |

jobId | The job instance ID. |

tag | The job tag. Empty if no tag is configured. |

length | The total length of the log. |

offset | The starting position of this entry when logs are sharded. |

content | The full job startup log content. |

workspace | The workspace ID. |

namespace | The namespace name. |

messageId | The message ID. |

timestamp | The message timestamp. |
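When a startup log is split across several entries, the `offset` and `length` fields make it possible to put the pieces back together. The following is a minimal sketch under stated assumptions: all entries passed in belong to the same `jobId`, `offset` and `length` count characters (the unit is not specified here and could be bytes), and field values arrive as strings, as is common for log queries.

```python
from typing import Dict, Iterable


def reassemble_startup_log(entries: Iterable[Dict[str, str]]) -> str:
    """Concatenate sharded startup-log entries in offset order.

    Assumes every entry belongs to the same job and that `offset` and
    `length` are expressed in characters. Raises ValueError if the
    reassembled text does not match the declared total length, which
    usually means an entry is missing or duplicated.
    """
    ordered = sorted(entries, key=lambda e: int(e["offset"]))
    if not ordered:
        return ""
    text = "".join(e["content"] for e in ordered)
    expected = int(ordered[0]["length"])
    if len(text) != expected:
        raise ValueError(
            f"reassembled {len(text)} characters, expected {expected}"
        )
    return text
```

If the length check fails on real data, compare against the UTF-8 byte length of the text before concluding that entries are missing.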

Resource usage

Field | Description |

messageType | Fixed value: |

namespaceTotalCpuMemory | Total Compute Units (CUs) in the namespace. |

namespaceTotalCpu | Total CUs allocated in the namespace. |

namespaceTotalMemory | Total memory allocated in the namespace. |

namespaceUsedCpuMemory | CUs consumed in the namespace. |

namespaceUsedCpu | CUs in use in the namespace. |

namespaceUsedMemory | Memory in use in the namespace. |

resourceQueueName | The queue name. |

resourceQueueTotalCpuMemory | Total CUs in the queue. |

resourceQueueTotalCpu | Total CUs allocated in the queue. |

resourceQueueTotalMemory | Total memory allocated in the queue. |

resourceQueueUsedCpuMemory | CUs consumed in the queue. |

resourceQueueUsedCpu | CUs in use in the queue. |

resourceQueueUsedMemory | Memory in use in the queue. |

workspace | The workspace ID. |

namespace | The namespace name. |

messageId | The message ID. |

timestamp | The message timestamp. |
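Because each resource usage message carries both total and used values, a utilization ratio can be derived for capacity tracking. The sketch below works on one message represented as a dictionary of the fields above. The helper names are hypothetical, and values are assumed to be numeric strings.

```python
from typing import Dict


def utilization(used: float, total: float) -> float:
    """Return used/total, or 0.0 when nothing is allocated."""
    if total <= 0:
        return 0.0
    return used / total


def namespace_utilization(msg: Dict[str, str]) -> Dict[str, float]:
    """Compute namespace-level utilization ratios from one message."""
    return {
        "cpu_memory": utilization(float(msg["namespaceUsedCpuMemory"]),
                                  float(msg["namespaceTotalCpuMemory"])),
        "cpu": utilization(float(msg["namespaceUsedCpu"]),
                           float(msg["namespaceTotalCpu"])),
        "memory": utilization(float(msg["namespaceUsedMemory"]),
                              float(msg["namespaceTotalMemory"])),
    }
```

The same calculation applies to the `resourceQueue*` fields by swapping the prefix.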

Job events

Field | Description |

messageType | Fixed value: |

deploymentId | The job deployment ID. |

deploymentName | The job deployment name. |

jobId | The job instance ID. |

tag | The job tag. Empty if no tag is configured. |

eventId | The event ID. |

eventName | The event name. |

content | The event details. |

workspace | The workspace ID. |

namespace | The namespace name. |

messageId | The message ID. |

timestamp | The message timestamp. |
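Job events arrive one message at a time, so reconstructing the startup sequence of a job means grouping by `jobId` and ordering by `timestamp`. The following is a minimal sketch that assumes `timestamp` is a numeric epoch value delivered as a string. The function name is hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List


def event_timeline(events: Iterable[Dict[str, str]]) -> Dict[str, List[str]]:
    """Return, for each jobId, its event names in chronological order.

    Assumes `timestamp` can be parsed as an integer epoch value.
    """
    by_job: Dict[str, List[Dict[str, str]]] = defaultdict(list)
    for event in events:
        by_job[event["jobId"]].append(event)
    return {
        job_id: [e["eventName"]
                 for e in sorted(job_events, key=lambda e: int(e["timestamp"]))]
        for job_id, job_events in by_job.items()
    }
```

If the delivered timestamps turn out to be formatted date strings, replace the `int(...)` key with a suitable parser.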

Job resource consumption

Field | Description |

messageType | Fixed value: |

deploymentId | The job deployment ID. |

deploymentName | The job deployment name. |

jobId | The job instance ID. |

tag | The job tag. Empty if no tag is configured. |

jobUsedCpu | CUs used by the job. |

jobUsedMemory | Memory used by the job. |

workspace | The workspace ID. |

namespace | The namespace name. |

messageId | The message ID. |

timestamp | The message timestamp. |
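Since a job resource consumption message is delivered every 10 minutes while a streaming job runs, a series of them can be summarized per deployment. The sketch below computes the average and peak `jobUsedCpu` for each `deploymentName`. The function name and the shape of the result are hypothetical, and values are assumed to be numeric strings.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List


def summarize_job_cpu(messages: Iterable[Dict[str, str]]) -> Dict[str, Dict[str, float]]:
    """Aggregate jobUsedCpu samples per deployment name."""
    samples: Dict[str, List[float]] = defaultdict(list)
    for msg in messages:
        samples[msg["deploymentName"]].append(float(msg["jobUsedCpu"]))
    return {
        name: {"avg": mean(values), "peak": max(values), "samples": len(values)}
        for name, values in samples.items()
    }
```

The same pattern works for `jobUsedMemory` by changing the field name.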

Troubleshooting

Messages are not appearing in SLS

Verify that the configuration is saved and that at least 10 seconds have passed since you saved it.

Check the authorization mode. If using AccessKey mode, confirm the endpoint matches the SLS project region and that the AccessKey ID and secret are correct.

If using STS Token mode, confirm the SLS project is in the same region as your Flink workspace.

Confirm the SLS project and Logstore exist and are not deleted.

Check that the Delivery scope includes the message types you expect.

Delivered messages stop appearing

Resource usage messages are only sent for active namespaces. If no jobs are running, no resource usage data is delivered.

What's next

To configure log output for individual jobs (runtime logs to OSS, SLS, or Kafka), see Configure job log output.

To view logs directly in the development console, see View startup and operational logs, View job running events, View exception logs, and View historical job instance logs.