Use Log Search to query cluster logs by keyword and time range. Logs help you identify cluster issues and support day-to-day operations and maintenance (O&M).

Alibaba Cloud Elasticsearch supports six log types: main log, searching slow log, indexing slow log, GC log, ES access log, and audit log.

Limitations

ES access log: Available only for Elasticsearch instances running version 6.7 (minor engine version 1.0.2 or later) or version 7.10 or later.

Audit log: Console-based viewing is available only for instances running version 7.x or later in the following countries and regions.

| Country or greater region | Region |
|---|---|
| China | China (Beijing), China (Hangzhou), China (Shanghai), China (Zhangjiakou) |
| Asia-Pacific | Singapore, Malaysia (Kuala Lumpur), Indonesia (Jakarta), Japan (Tokyo) |
| Europe and Americas | US (Virginia), US (Silicon Valley), Germany (Frankfurt), UK (London) |

Query logs

Prerequisites

Before you begin, ensure that you have:

An Alibaba Cloud Elasticsearch cluster

Access to the Alibaba Cloud Elasticsearch console

Steps

Log on to the Alibaba Cloud Elasticsearch console.

In the left navigation menu, click Elasticsearch Clusters.

In the top navigation bar, select the resource group and region for your cluster. On the Elasticsearch Clusters page, find the cluster and click its ID.

In the left navigation pane, click Log Search.

On the log page, enter search criteria, select a start time and an end time, and click Search. Log Search returns results in reverse chronological order. Keep the following in mind:

- Logs from the last seven consecutive days are queryable.

- The search box supports Lucene-based query string syntax.

- The AND operator must be in uppercase.

- If you leave the end time blank, the current time is used. If you leave the start time blank, the time one hour before the end time is used.

- Up to 10,000 log entries are returned per query. If the results don't include the content you need, narrow the time range.

- A single log entry displays up to 10,000 characters.

Example: To find main logs where the content contains health, the level is info, and the host is 172.16.xx.xx, use:

host:172.16.xx.xx AND content:health AND level:info
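The search rules above can be sketched in Python for anyone who prepares queries in a script before pasting them into the search box. This is a minimal illustration, not part of the console or of any Alibaba Cloud SDK: `build_query` and `resolve_time_range` are hypothetical helper names, the field names come from the example above, and raising an error for a start time outside the seven-day window is an assumption about how a script might guard its input.

```python
from datetime import datetime, timedelta

RETENTION = timedelta(days=7)         # logs from the last seven days are queryable
DEFAULT_WINDOW = timedelta(hours=1)   # blank start time means one hour before the end time


def build_query(**fields):
    """Join field:value pairs with an uppercase AND, as the search box requires."""
    return " AND ".join(f"{name}:{value}" for name, value in fields.items())


def resolve_time_range(start=None, end=None, now=None):
    """Apply the documented defaults for blank start and end times."""
    now = now or datetime.now()
    end = end or now
    start = start or end - DEFAULT_WINDOW
    if start > end:
        raise ValueError("start time must not be later than end time")
    if start < now - RETENTION:
        # Assumption: treat a start time outside the retention window as an error.
        raise ValueError("start time is older than the seven-day retention window")
    return start, end


query = build_query(host="172.16.xx.xx", content="health", level="info")
print(query)
```

The printed string is the same query as the example above. Keyword arguments keep their order, so the clauses appear in the order you pass them.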

Log types

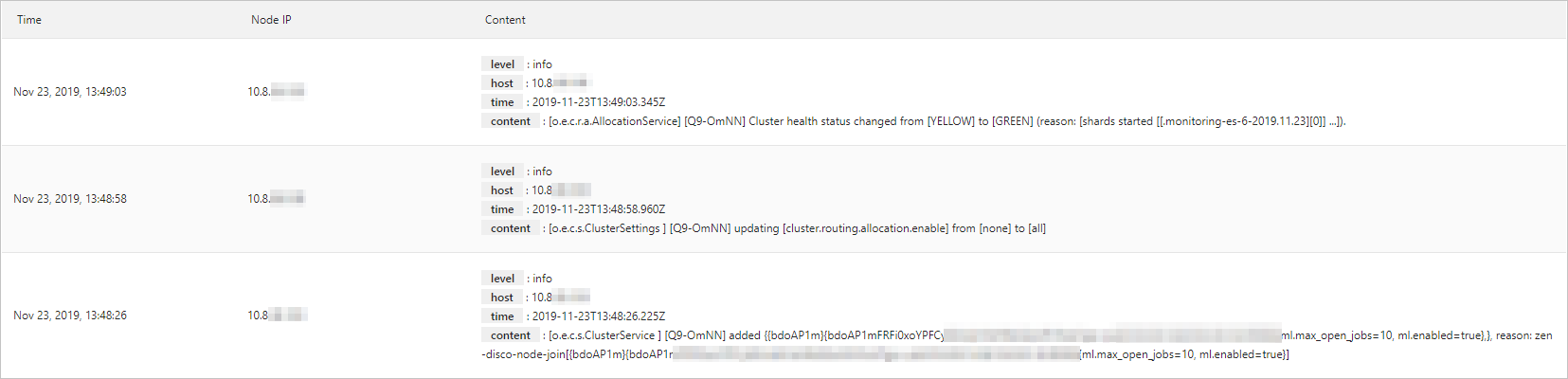

Main log

The main log records cluster health status, index queries, and write operations — including index creation, index mapping updates, write queue events, query exceptions, and cluster-level errors.

When to use it: Check the main log first when your application reports errors. Look for performance bottlenecks, Full GC events, index creation or deletion events, and node connectivity issues.

If an application-side issue occurs, check the main log and Cluster Monitoring together to rule out cluster-level causes before investigating the application.

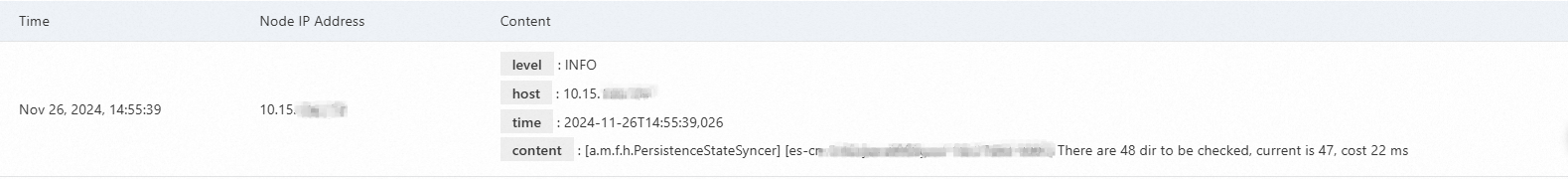

Each log entry includes:

| Field | Description |
|---|---|
| Time | The time when the log was generated. |
| Node IP | The IP address of the node that generated the log. |
| Content | The log details. Contains the sub-fields level, host, time, and content. |

The level field has the following values: trace, debug, info, warn, and error. GC logs do not have a level field.

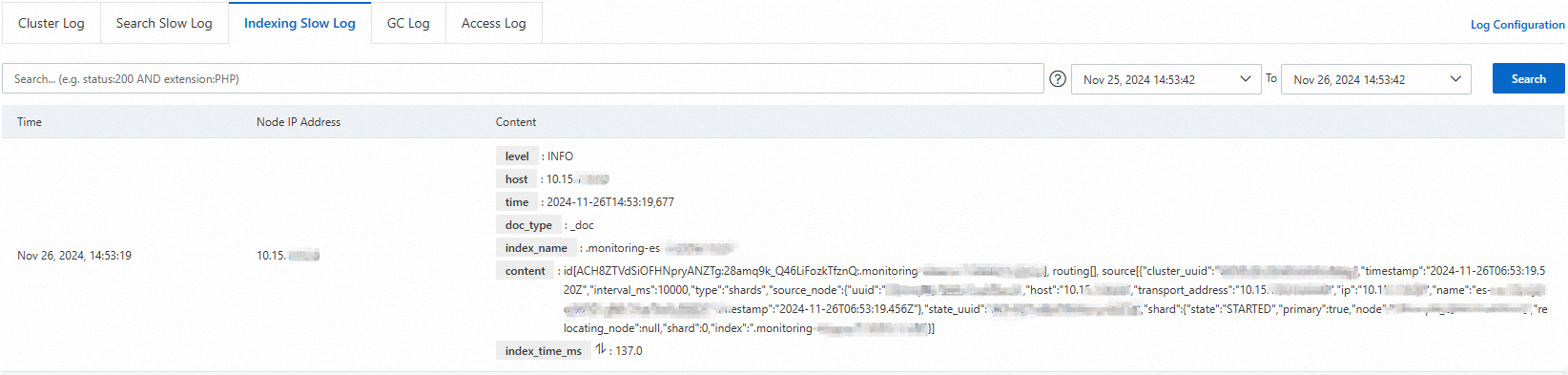
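When you only care about entries at a given severity or higher, the level values can be combined into a single query clause. The sketch below assumes that the levels are ordered from least to most severe as listed above, and that the search box accepts the uppercase OR operator and parentheses from standard Lucene query string syntax; this page only confirms AND, so verify the output against your own cluster. `level_clause` is a hypothetical helper name.

```python
LEVELS = ["trace", "debug", "info", "warn", "error"]  # assumed order: least to most severe


def levels_at_or_above(minimum):
    """Return the listed levels from `minimum` upward in severity."""
    return LEVELS[LEVELS.index(minimum):]


def level_clause(minimum):
    """Build a query clause matching `minimum` and every more severe level."""
    selected = levels_at_or_above(minimum)
    if len(selected) == 1:
        return f"level:{selected[0]}"
    return "(" + " OR ".join(f"level:{name}" for name in selected) + ")"


print(level_clause("warn"))
```

The resulting clause can be joined to other conditions with AND, for example a host filter.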

Slow logs

Slow logs capture read and write operations that exceed a configured time threshold. Searching slow logs cover query operations; indexing slow logs cover write operations.

When to use them: If queries or writes are taking longer than expected, check the corresponding slow log to identify which operations are slow and on which shards.

Slow logs consume cluster resources in proportion to the volume of qualifying traffic. Enable slow logs when actively troubleshooting, and consider targeting specific indices rather than all indices. Set thresholds high enough to capture only the operations you care about.

Configure slow log thresholds

The default slow log threshold records operations taking 5–10 seconds, which is too coarse for most troubleshooting. Reduce the threshold using one of the following methods.

Option 1 (recommended): Use the scenario-specific configuration template

After a cluster is created, the scenario-specific configuration template is enabled by default and automatically applied. The template includes slow log thresholds optimized for the general-purpose scenario:

| Log type | Level | Threshold |
|---|---|---|
| Search (query) | warn | 500 ms |
| Search (query) | info | 200 ms |
| Search (query) | debug | 100 ms |
| Search (query) | trace | 50 ms |
| Search (fetch) | warn | 200 ms |
| Search (fetch) | info | 100 ms |
| Search (fetch) | debug | 80 ms |
| Search (fetch) | trace | 50 ms |
| Indexing | warn | 200 ms |
| Indexing | info | 100 ms |
| Indexing | debug | 50 ms |
| Indexing | trace | 20 ms |

The template also sets index.indexing.slowlog.source to 1000, which controls how many characters of the document source are logged for indexing slow log entries.

If the Scenario-specific Configuration Template is disabled, enable and submit it to apply these defaults. For more information, see Modify a scenario-specific configuration template.
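To see how the template thresholds translate into log levels, the following Python sketch classifies an operation by its duration. It assumes that an operation is logged at the most severe level whose threshold it exceeds, which is how Elasticsearch slow logs generally behave; the dictionary keys (`search.query`, `search.fetch`, `indexing`) and the function name `slowlog_level` are hypothetical labels chosen for this illustration.

```python
# Thresholds in milliseconds, copied from the template table above.
TEMPLATE_THRESHOLDS_MS = {
    "search.query": {"warn": 500, "info": 200, "debug": 100, "trace": 50},
    "search.fetch": {"warn": 200, "info": 100, "debug": 80, "trace": 50},
    "indexing": {"warn": 200, "info": 100, "debug": 50, "trace": 20},
}

SEVERITY = ["warn", "info", "debug", "trace"]  # most to least severe


def slowlog_level(log_type, took_ms):
    """Return the level an operation would be logged at, or None if it is not slow.

    Assumption: the most severe level whose threshold is exceeded wins.
    """
    thresholds = TEMPLATE_THRESHOLDS_MS[log_type]
    for level in SEVERITY:
        if took_ms > thresholds[level]:
            return level
    return None


for took in (30, 120, 650):
    print(took, slowlog_level("search.query", took))
```

Under these assumptions, a 30 ms query is not recorded at all, a 120 ms query is recorded at debug, and a 650 ms query is recorded at warn.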

Option 2: Run a PUT _settings command in Kibana

Log on to the Kibana console and run the following command. For instructions on accessing Kibana, see Log on to the Kibana console.

PUT _settings
{
  "index.indexing.slowlog.threshold.index.warn": "200ms",
  "index.indexing.slowlog.threshold.index.trace": "20ms",
  "index.indexing.slowlog.threshold.index.debug": "50ms",
  "index.indexing.slowlog.threshold.index.info": "100ms",
  "index.search.slowlog.threshold.fetch.warn": "200ms",
  "index.search.slowlog.threshold.fetch.trace": "50ms",
  "index.search.slowlog.threshold.fetch.debug": "80ms",
  "index.search.slowlog.threshold.fetch.info": "100ms",
  "index.search.slowlog.threshold.query.warn": "500ms",
  "index.search.slowlog.threshold.query.trace": "50ms",
  "index.search.slowlog.threshold.query.debug": "100ms",
  "index.search.slowlog.threshold.query.info": "200ms"
}

After you apply new thresholds, any read or write operation that exceeds the configured time appears on the Slow Log tab.

If many slow logs appear, check cluster resources and load to identify bottlenecks. Then expand the corresponding resources or use the aliyun-qos plugin to limit traffic and maintain cluster stability.
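The earlier advice to target specific indices rather than all indices can be sketched as follows. The Python snippet renders Kibana console requests that apply a subset of the thresholds to one index pattern only, and that later reset them by setting each value to null, which reverts an Elasticsearch index setting to its default. The index pattern `orders-*` and the helper names are hypothetical; the snippet only builds request text and does not contact a cluster.

```python
import json

# Thresholds from the PUT _settings command, limited to the warn and info levels.
THRESHOLDS = {
    "index.search.slowlog.threshold.query.warn": "500ms",
    "index.search.slowlog.threshold.query.info": "200ms",
    "index.indexing.slowlog.threshold.index.warn": "200ms",
    "index.indexing.slowlog.threshold.index.info": "100ms",
}


def slowlog_request(index_pattern, settings):
    """Render a Kibana console request that applies settings to matching indices only."""
    return f"PUT {index_pattern}/_settings\n{json.dumps(settings, indent=2)}"


def reset_request(index_pattern, settings):
    """Render a request that sets each threshold to null, restoring its default."""
    cleared = {name: None for name in settings}
    return slowlog_request(index_pattern, cleared)


# "orders-*" is a hypothetical index pattern used only for illustration.
print(slowlog_request("orders-*", THRESHOLDS))
print(reset_request("orders-*", THRESHOLDS))
```

Paste the printed requests into the Kibana console when you start and finish troubleshooting, so that slow logging stays limited to the indices and the period you are investigating.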

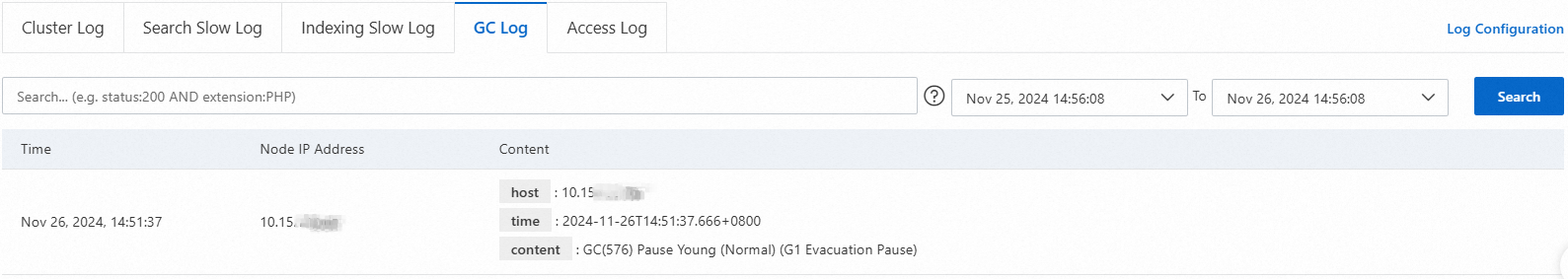

GC log

The GC log records all garbage collection events triggered by JVM heap memory usage, including Old GC, CMS GC, Full GC, and Minor GC events.

When to use it: If the cluster has performance issues, check GC logs for time-consuming or frequent GC events. Use the findings to determine whether to expand resources or apply traffic limiting.

Alibaba Cloud Elasticsearch clusters use the CMS garbage collector by default. If a data node has 32 GB or more of memory, switch to the G1 garbage collector to improve GC efficiency. For more information, see Configure a garbage collector.

GC log entries include the same fields as main log entries (time, node IP, and content), but do not have a level field.

ES access log

The ES access log records restSearchAction requests received by the cluster, including the cluster node and IP address, client IP, URI, request body size, request content, and request time.

When to use it: Use ES access logs to identify which clients are sending query requests to the cluster.

As noted in Limitations, ES access logs are available only for instances running version 6.7 (minor engine version 1.0.2 or later) or version 7.10 or later.

ES access logs do not cover SQL queries, multi-search, scroll queries, or queries triggered by some Kibana visualization tools. For complete query and write request logging, enable audit logs instead. See Configure audit logs.

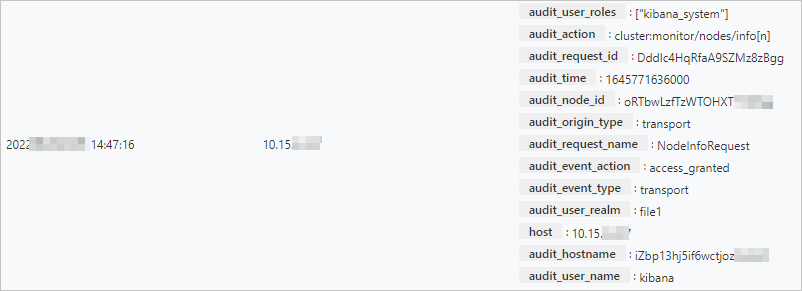

Audit log

Audit logs record create, delete, update, and query operations on an Elasticsearch instance.

When to use it: Use audit logs to troubleshoot authentication failures and connection denials, monitor data access events, and investigate suspicious activities such as changes to authorization or user security configurations.

Console-based audit log viewing is available only for instances running version 7.x or later in the regions listed in Limitations. For other instances, enable audit logs in the YML configuration. The logs are then written to indices on the current cluster, and you can view them in the Kibana console by querying indices that match the pattern .security_audit_log-*. For more information, see Configure YML parameters.

Enable audit log collection

Audit log collection is disabled by default. Follow these steps to enable it:

On the Log Search page, click Log Settings on the right.

In the Log Settings dialog box, turn on the Audit Log Collection switch.

Important:

- Enabling or disabling Audit Log Collection triggers a rolling cluster restart. If the cluster is in a Normal (green) state, each index has at least one replica, and resource usage is not excessively high, the cluster continues to serve requests during the restart. Perform this operation during off-peak hours.

- By default, the following event types are collected: access_denied, anonymous_access_denied, authentication_failed, connection_denied, tampered_request, run_as_denied, and run_as_granted. To change the collected event types, modify the xpack.security.audit.logfile.events.include parameter in the cluster's YML file. For more information, see Configure audit logs.

Click Confirm in the prompt. The cluster restarts. Track progress in the Task List. After the restart, audit log collection is active.

On the Log Search page, click the Audit Log tab to view audit logs.

Important: Audit log data consumes disk space and can affect cluster performance. Disable Audit Log Collection when you no longer need it.
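If you change the collected event types, it can help to generate the YML line from a list so that the defaults are not dropped by accident. The Python sketch below renders the xpack.security.audit.logfile.events.include parameter as a YAML flow sequence containing the default event types plus any additions. `render_include` is a hypothetical helper, and whether the console's YML editor expects this exact list format is an assumption; see Configure audit logs for the authoritative syntax.

```python
# Default event types listed in the note above.
DEFAULT_EVENTS = [
    "access_denied",
    "anonymous_access_denied",
    "authentication_failed",
    "connection_denied",
    "tampered_request",
    "run_as_denied",
    "run_as_granted",
]

SETTING = "xpack.security.audit.logfile.events.include"


def render_include(extra_events=()):
    """Render the YML line with the defaults plus any extra event types, without duplicates."""
    events = list(DEFAULT_EVENTS)
    for event in extra_events:
        if event not in events:
            events.append(event)
    return f"{SETTING}: [{', '.join(events)}]"


# authentication_success is an Elasticsearch audit event type that is not in the default list.
print(render_include(["authentication_success"]))
```

Each additional event type increases the volume of audit data, so add only the events you need for the investigation at hand.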