When a task in an EMR Serverless Spark workspace calls other Alibaba Cloud services — such as Object Storage Service (OSS), Data Lake Formation (DLF), or MaxCompute — it uses an execution role for permission authentication and ActionTrail audits. When you create a workspace, you choose either the default execution role or a custom one.

After a workspace is created, the execution role cannot be changed.

Use cases

The execution role assumes a specified identity depending on the service being accessed:

Access OSS objects — The execution role accesses and manages objects stored in OSS.

Read and write DLF metadata — If access control is enabled for DLF, the execution role assumes different identities based on the task type:

Data development: assumes the identity of the Alibaba Cloud account or RAM user that submits the task.

Livy Gateway: assumes the identity of the token creator.

Read and write MaxCompute data: The execution role assumes the identity of the Alibaba Cloud account or RAM user that submits the task. This identity is used for both authentication and ActionTrail audits.
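The identity rules above can be summarized as a small lookup. The following Python sketch is illustrative only: the enum and function names are hypothetical and are not part of any EMR Serverless Spark API, and the case where DLF access control is disabled is left unspecified because this document does not describe it.

```python
from enum import Enum


class Service(Enum):
    OSS = "oss"
    DLF = "dlf"
    MAXCOMPUTE = "maxcompute"


class TaskType(Enum):
    DATA_DEVELOPMENT = "data_development"
    LIVY_GATEWAY = "livy_gateway"


SUBMITTER = "Alibaba Cloud account or RAM user that submits the task"


def effective_identity(service, task_type=None, dlf_access_control=False):
    """Return a description of the identity used for a given call (hypothetical helper)."""
    if service is Service.OSS:
        # OSS objects are accessed by the execution role itself.
        return "execution role"
    if service is Service.MAXCOMPUTE:
        return SUBMITTER
    if service is Service.DLF:
        if not dlf_access_control:
            # Assumption: behavior without DLF access control is not covered here.
            return "not specified in this document"
        if task_type is TaskType.DATA_DEVELOPMENT:
            return SUBMITTER
        if task_type is TaskType.LIVY_GATEWAY:
            return "token creator"
    raise ValueError("unsupported combination of service and task type")


print(effective_identity(Service.DLF, TaskType.LIVY_GATEWAY, dlf_access_control=True))
```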

Choose between the default and custom execution role

| | Default execution role | Custom execution role |
|---|---|---|
| Setup required | None; used automatically | Manual creation via RAM console |
| Permissions | Predefined by Alibaba Cloud; covers OSS, DLF, and MaxCompute | Defined by you; scoped to your specific resources |
| Policy maintenance | Automatically updated by Alibaba Cloud | Static; update manually as needed |
| When to use | Starting out or when predefined permissions are sufficient | When you need fine-grained access control or resource-level restrictions |

Use the default execution role

If you don't modify the Execution Role setting when creating a workspace, the system automatically uses AliyunEMRSparkJobRunDefaultRole.

This role has the following properties:

Role name: AliyunEMRSparkJobRunDefaultRole

Associated policy: AliyunEMRSparkJobRunDefaultRolePolicy, a system policy that grants access to OSS, DLF, and MaxCompute.

Maintenance: Created and maintained by Alibaba Cloud; automatically updated to meet service requirements.

Do not edit or delete AliyunEMRSparkJobRunDefaultRole. Doing so may cause workspace creation or task execution to fail.

Set up a custom execution role

A custom execution role gives you fine-grained control over which resources EMR Serverless Spark can access. The steps below configure a custom role for password-free access to OSS and DLF resources within the same account.

The policy in this procedure is an example. Custom execution role policies are static and are not automatically updated by Alibaba Cloud. To keep your tasks running correctly, periodically check and update the policy. Use the AliyunEMRSparkJobRunDefaultRolePolicy policy of the default role as a reference for the latest required permissions.

Prerequisites

Before you begin, ensure that you have:

RAM administrator access to the RAM console

Access to the E-MapReduce console

Step 1: Create an access policy

This policy grants OSS read/write access to a specific bucket and full DLF metadata access.

Log on to the RAM console as a RAM administrator.

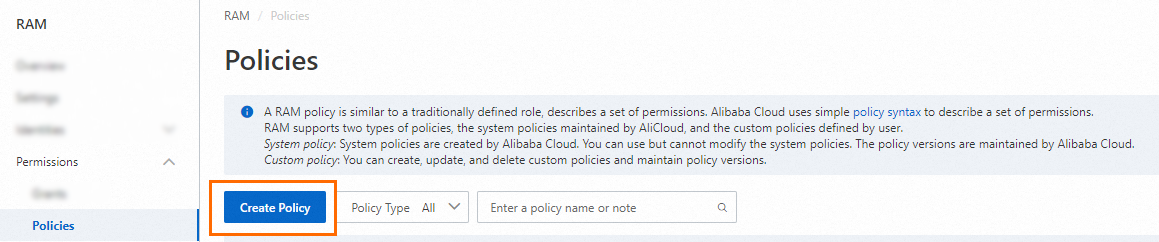

In the left navigation pane, choose Permissions > Policies.

On the Policies page, click Create Policy.

On the Create Policy page, click the JSON tab.

Enter the following policy document and click OK. Replace serverless-spark-test-resources with your actual OSS bucket name. The policy grants:

OSS read/write access to a specific bucket (serverless-spark-test-resources)

Full DLF metadata read/write access

DLF access control delegation (ActOnBehalfOfAnotherUser)

{
  "Version": "1",
  "Statement": [
    {
      "Action": [
        "oss:ListBuckets",
        "oss:PutObject",
        "oss:ListObjectsV2",
        "oss:ListObjects",
        "oss:GetObject",
        "oss:CopyObject",
        "oss:DeleteObject",
        "oss:DeleteObjects",
        "oss:RestoreObject",
        "oss:CompleteMultipartUpload",
        "oss:ListMultipartUploads",
        "oss:AbortMultipartUpload",
        "oss:UploadPartCopy",
        "oss:UploadPart",
        "oss:GetBucketInfo",
        "oss:PostDataLakeStorageFileOperation",
        "oss:PostDataLakeStorageAdminOperation",
        "oss:GetBucketVersions",
        "oss:ListObjectVersions",
        "oss:DeleteObjectVersion"
      ],
      "Resource": [
        "acs:oss:*:*:serverless-spark-test-resources/*",
        "acs:oss:*:*:serverless-spark-test-resources"
      ],
      "Effect": "Allow"
    },
    {
      "Action": [
        "dlf:AlterDatabase",
        "dlf:AlterTable",
        "dlf:ListCatalogs",
        "dlf:ListDatabases",
        "dlf:ListFunctions",
        "dlf:ListFunctionNames",
        "dlf:ListTables",
        "dlf:ListTableNames",
        "dlf:ListIcebergNamespaceDetails",
        "dlf:ListIcebergTableDetails",
        "dlf:ListIcebergSnapshots",
        "dlf:CreateDatabase",
        "dlf:Get*",
        "dlf:DeleteDatabase",
        "dlf:DropDatabase",
        "dlf:DropTable",
        "dlf:CreateTable",
        "dlf:CommitTable",
        "dlf:UpdateTable",
        "dlf:DeleteTable",
        "dlf:ListPartitions",
        "dlf:ListPartitionNames",
        "dlf:CreatePartition",
        "dlf:BatchCreatePartitions",
        "dlf:UpdateTableColumnStatistics",
        "dlf:DeleteTableColumnStatistics",
        "dlf:UpdatePartitionColumnStatistics",
        "dlf:DeletePartitionColumnStatistics",
        "dlf:UpdateDatabase",
        "dlf:BatchCreateTables",
        "dlf:BatchDeleteTables",
        "dlf:BatchUpdateTables",
        "dlf:BatchGetTables",
        "dlf:BatchUpdatePartitions",
        "dlf:BatchDeletePartitions",
        "dlf:BatchGetPartitions",
        "dlf:DeletePartition",
        "dlf:CreateFunction",
        "dlf:DeleteFunction",
        "dlf:UpdateFunction",
        "dlf:ListPartitionsByFilter",
        "dlf:DeltaGetPermissions",
        "dlf:UpdateCatalogSettings",
        "dlf:CreateLock",
        "dlf:UnLock",
        "dlf:AbortLock",
        "dlf:RefreshLock",
        "dlf:ListTableVersions",
        "dlf:CheckPermissions",
        "dlf:RenameTable",
        "dlf:RollbackTable"
      ],
      "Resource": "*",
      "Effect": "Allow"
    },
    {
      "Action": [
        "dlf-dss:CreateDatabase",
        "dlf-dss:CreateFunction",
        "dlf-dss:CreateTable",
        "dlf-dss:DropDatabase",
        "dlf-dss:DropFunction",
        "dlf-dss:DropTable",
        "dlf-dss:DescribeCatalog",
        "dlf-dss:DescribeDatabase",
        "dlf-dss:DescribeFunction",
        "dlf-dss:DescribeTable",
        "dlf-dss:AlterDatabase",
        "dlf-dss:AlterFunction",
        "dlf-dss:AlterTable",
        "dlf-dss:ListCatalogs",
        "dlf-dss:ListDatabases",
        "dlf-dss:ListTables",
        "dlf-dss:ListFunctions",
        "dlf-dss:CheckPermissions"
      ],
      "Resource": "*",
      "Effect": "Allow"
    },
    {
      "Effect": "Allow",
      "Action": "dlf-auth:ActOnBehalfOfAnotherUser",
      "Resource": "*"
    }
  ]
}

Enter a Policy Name (for example, test-serverless-spark) and an optional Description, then click OK.

The policy uses the following elements:

Action: The operations granted on a resource. This example grants read/write and directory access for OSS and DLF.

Resource: The target of the authorization. This example grants access to all objects in DLF and all content in the OSS bucket serverless-spark-test-resources.

For more information about policy elements, see Basic elements of an access policy.
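Because the example policy hard-codes the bucket name serverless-spark-test-resources, it may help to substitute your own bucket programmatically before pasting the document into the console. The following Python sketch shows one way to do that on an abbreviated copy of the policy. The function name `retarget_bucket` and the bucket name `my-team-bucket` are hypothetical, and only OSS resource ARNs are rewritten.

```python
import copy
import json


def retarget_bucket(policy, old_bucket, new_bucket):
    """Return a copy of the policy with OSS resource ARNs pointing at new_bucket."""
    updated = copy.deepcopy(policy)
    for statement in updated["Statement"]:
        resources = statement["Resource"]
        was_string = isinstance(resources, str)
        if was_string:
            resources = [resources]
        rewritten = []
        for arn in resources:
            # An OSS ARN looks like acs:oss:*:*:<bucket> or acs:oss:*:*:<bucket>/<key>.
            prefix, sep, tail = arn.rpartition(":")
            is_oss = prefix.startswith("acs:oss:")
            if is_oss and (tail == old_bucket or tail.startswith(old_bucket + "/")):
                tail = new_bucket + tail[len(old_bucket):]
            rewritten.append(prefix + sep + tail)
        statement["Resource"] = rewritten[0] if was_string else rewritten
    return updated


# Abbreviated version of the policy above, kept short for illustration.
example_policy = {
    "Version": "1",
    "Statement": [
        {
            "Action": ["oss:GetObject", "oss:PutObject"],
            "Resource": [
                "acs:oss:*:*:serverless-spark-test-resources/*",
                "acs:oss:*:*:serverless-spark-test-resources",
            ],
            "Effect": "Allow",
        },
        {"Action": ["dlf:Get*"], "Resource": "*", "Effect": "Allow"},
    ],
}

updated = retarget_bucket(example_policy, "serverless-spark-test-resources", "my-team-bucket")
print(json.dumps(updated, indent=2))
```

The wildcard resource in the DLF statement is left untouched, and the input policy is not modified.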

Step 2: Create a RAM role

In the left navigation pane, choose Identities > Roles.

On the Roles page, click Create Role.

In the Create Role panel, set the following parameters and click OK:

| Parameter | Value |
|---|---|
| Principal type | Cloud Service |
| Principal name | spark.emr-serverless.aliyuncs.com |

Enter a Role Name (for example, test-serverless-spark-jobrun) and click OK.
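Choosing Cloud Service as the principal type corresponds to a trust policy on the role that lets the named service assume it. The console generates this document for you. The Python sketch below only illustrates what such a trust policy generally looks like in RAM's policy format, assuming the usual `sts:AssumeRole` action; the helper name is hypothetical, and the Trust Policy tab of your role is the authoritative source.

```python
import json


def service_trust_policy(service_principal):
    """Build a trust policy that lets one cloud service assume the role (sketch)."""
    return {
        "Version": "1",
        "Statement": [
            {
                "Action": "sts:AssumeRole",
                "Effect": "Allow",
                "Principal": {"Service": [service_principal]},
            }
        ],
    }


trust_policy = service_trust_policy("spark.emr-serverless.aliyuncs.com")
print(json.dumps(trust_policy, indent=2))
```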

Step 3: Grant permissions to the RAM role

On the Roles page, find the role you created and click Grant Permission in the Actions column.

In the Grant Permissions panel, select Custom Policy and add the policy you created in Step 1.

Click OK, then click Close.

Step 4: Create a workspace and verify permissions

Log on to the E-MapReduce console.

In the navigation pane, choose EMR Serverless > Spark.

Click Create Workspace and configure the following parameters. For more information, see Create a workspace.

Workspace Directory: Select an OSS path to which the RAM role you created has read and write permissions.

Execution Role: Select the RAM role you created (for example, test-serverless-spark-jobrun).

After the workspace is created, run a batch job to verify the permissions. For more information, see Quick start for JAR development.

If you upload a file to an authorized OSS bucket, the task runs as expected.

If you upload a file to an unauthorized OSS bucket, the task fails with a permission error for the OSS path.
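To see why a path in one bucket succeeds while a path in another fails, you can approximate the resource match locally. The Python sketch below is a deliberate simplification and not RAM's actual evaluation logic: it considers only Allow statements, ignores Deny statements and conditions, and uses shell-style wildcard matching. The region, account ID, bucket `other-bucket`, and object keys are hypothetical.

```python
from fnmatch import fnmatchcase


def as_list(value):
    """Policy fields may hold a single string or a list of strings."""
    return [value] if isinstance(value, str) else list(value)


def is_allowed(policy, action, resource_arn):
    """Return True if any Allow statement matches both the action and the resource."""
    for statement in policy["Statement"]:
        if statement.get("Effect") != "Allow":
            continue
        action_ok = any(fnmatchcase(action, p) for p in as_list(statement["Action"]))
        resource_ok = any(fnmatchcase(resource_arn, p) for p in as_list(statement["Resource"]))
        if action_ok and resource_ok:
            return True
    return False


# Abbreviated OSS statement from the Step 1 policy.
policy = {
    "Version": "1",
    "Statement": [
        {
            "Action": ["oss:GetObject", "oss:PutObject"],
            "Resource": [
                "acs:oss:*:*:serverless-spark-test-resources/*",
                "acs:oss:*:*:serverless-spark-test-resources",
            ],
            "Effect": "Allow",
        }
    ],
}

authorized = "acs:oss:cn-hangzhou:1234567890:serverless-spark-test-resources/jars/app.jar"
unauthorized = "acs:oss:cn-hangzhou:1234567890:other-bucket/jars/app.jar"

print(is_allowed(policy, "oss:GetObject", authorized))    # True
print(is_allowed(policy, "oss:GetObject", unauthorized))  # False
```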

Other policy examples

Access MaxCompute data

Add the following policy to the execution role. It grants permission to act on behalf of Alibaba Cloud accounts, RAM users, and RAM roles when accessing MaxCompute.

{
  "Version": "1",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "odps:ActOnBehalfOfAnotherUser",
      "Resource": [
        "acs:odps:*:*:users/default/aliyun/*",
        "acs:odps:*:*:users/default/ramuser/*",
        "acs:odps:*:*:users/default/ramrole/*"
      ]
    }
  ]
}

Access OSS buckets with KMS encryption

Add the following policy to the execution role. It grants permission to list, describe, and use KMS keys for encryption and decryption.

{
  "Version": "1",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "kms:List*",
        "kms:DescribeKey",
        "kms:GenerateDataKey",
        "kms:Decrypt"
      ],
      "Resource": "*"
    }
  ]
}

What's next

To access OSS resources across Alibaba Cloud accounts, see How do I access OSS resources across Alibaba Cloud accounts?

For common RAM policy examples, see Common examples of RAM policies.