Apache Iceberg is an open table format for data lakes that supports ACID transactions, schema evolution, and time travel on large datasets stored in object storage or HDFS. This topic walks you through reading from and writing to Iceberg tables in E-MapReduce (EMR) Serverless Spark using a Spark SQL session.

Prerequisites

Before you begin, ensure that you have:

A workspace in EMR Serverless Spark. See Create a workspace.

Choose a catalog type

Every Iceberg read/write operation in EMR Serverless Spark requires a catalog. Choose a catalog type based on where you store Iceberg metadata.

| Catalog type | Metadata storage | Minimum engine version | Configuration required |

|---|---|---|---|

| Data Catalog | Managed by Data Lake Formation (DLF) | None | None — managed through the console |

| DLF | DLF | esr-4.7.0 / esr-3.6.0 | Spark configuration parameters |

| DLF-Legacy | DLF-Legacy (formerly DLF 1.0) | esr-4.3.0 / esr-3.3.0 / esr-2.7.0 | Spark configuration parameters |

| Hive Metastore | Hive Metastore | esr-4.3.0 / esr-3.3.0 / esr-2.7.0 | Spark configuration parameters |

| File system | Object Storage Service (OSS) or Hadoop Distributed File System (HDFS) | None | Spark configuration parameters |

Both Spark SQL and Notebook support reading from and writing to Iceberg tables. This topic uses a Spark SQL session as an example.

Step 1: Create a SQL session

Log on to the EMR console.

In the left-side navigation pane, choose EMR Serverless > Spark.

Click the name of the workspace you want to manage.

In the left-side navigation pane, choose Operation Center > Sessions.

On the SQL Sessions tab, click Create SQL Session.

On the Create SQL Session page, go to the Spark Configuration section and add the catalog configuration for your chosen catalog type. Then click Create. For general session settings, see Manage SQL sessions. For data catalog management, see Manage data catalogs.

Data Catalog (no configuration required)

Click Add Catalog on the Catalogs page in the console. The catalog becomes available for selection directly in Spark SQL — no Spark configuration parameters needed.

DLF

Requires engine version esr-4.7.0, esr-3.6.0, or later.

Add the following parameters to the Spark Configuration section:

spark.sql.extensions org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions

spark.sql.catalog.iceberg_catalog org.apache.iceberg.spark.SparkCatalog

spark.sql.catalog.iceberg_catalog.catalog-impl org.apache.iceberg.rest.RESTCatalog

spark.sql.catalog.iceberg_catalog.uri http://<region-id>-vpc.dlf.aliyuncs.com

spark.sql.catalog.iceberg_catalog.warehouse <catalog-name>

spark.sql.catalog.iceberg_catalog.io-impl org.apache.iceberg.rest.DlfFileIO

spark.sql.catalog.iceberg_catalog.rest.auth.type sigv4

spark.sql.catalog.iceberg_catalog.rest.auth.sigv4.delegate-auth-type none

spark.sql.catalog.iceberg_catalog.rest.signing-region <region-id>

spark.sql.catalog.iceberg_catalog.rest.signing-name DlfNext

spark.sql.catalog.iceberg_catalog.rest.access-key-id <access-key-id>

spark.sql.catalog.iceberg_catalog.rest.secret-access-key <access-key-secret>Replace the following placeholders:

<region-id>: The region where your DLF service is located, for example,cn-hangzhou.<catalog-name>: The name of your DLF catalog. Do not use a catalog created by data sharing.<access-key-id>: The AccessKey ID of your Alibaba Cloud account or RAM user.<access-key-secret>: The AccessKey secret of your Alibaba Cloud account or RAM user.

The static fields (org.apache.iceberg.spark.SparkCatalog, org.apache.iceberg.rest.RESTCatalog, org.apache.iceberg.rest.DlfFileIO, sigv4, none, DlfNext) do not need to be changed.

| Parameter | Description | Example |

|---|---|---|

spark.sql.extensions | Enables Iceberg Spark extensions. | org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions |

spark.sql.catalog.iceberg_catalog | Registers a Spark catalog named iceberg_catalog. | org.apache.iceberg.spark.SparkCatalog |

spark.sql.catalog.iceberg_catalog.catalog-impl | Sets the catalog implementation to Iceberg REST Catalog. | org.apache.iceberg.rest.RESTCatalog |

spark.sql.catalog.iceberg_catalog.uri | The REST API endpoint of the DLF Iceberg service. Format: http://<region-id>-vpc.dlf.aliyuncs.com. | http://cn-hangzhou-vpc.dlf.aliyuncs.com |

spark.sql.catalog.iceberg_catalog.warehouse | The associated DLF catalog name. | <catalog-name> |

spark.sql.catalog.iceberg_catalog.io-impl | Uses the DLF-customized FileIO implementation. | org.apache.iceberg.rest.DlfFileIO |

spark.sql.catalog.iceberg_catalog.rest.auth.type | Enables SigV4 signature authentication for REST requests. | sigv4 |

spark.sql.catalog.iceberg_catalog.rest.auth.sigv4.delegate-auth-type | Disables delegated authentication. The client signs requests directly with the AccessKey ID and AccessKey secret. | none |

spark.sql.catalog.iceberg_catalog.rest.signing-region | The region used for signing, which must match the DLF service region. | cn-hangzhou |

spark.sql.catalog.iceberg_catalog.rest.signing-name | The service name used for signing. | DlfNext |

spark.sql.catalog.iceberg_catalog.rest.access-key-id | The AccessKey ID of your Alibaba Cloud account or RAM user. | <access-key-id> |

spark.sql.catalog.iceberg_catalog.rest.secret-access-key | The AccessKey secret of your Alibaba Cloud account or RAM user. | <access-key-secret> |

DLF-Legacy (formerly DLF 1.0)

Requires engine version esr-4.3.0, esr-3.3.0, esr-2.7.0, or later.

Metadata is stored in DLF-Legacy (formerly DLF 1.0). Add the following parameters to the Spark Configuration section:

spark.sql.extensions org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions

spark.sql.catalog.<catalog-name> org.apache.iceberg.spark.SparkCatalog

spark.sql.catalog.<catalog-name>.catalog-impl org.apache.iceberg.aliyun.dlf.hive.DlfCatalog

spark.sql.catalog.<catalog-name>.dlf.catalog.id <catalog-name>Replace the following placeholder:

<catalog-name>: The name you assign to this catalog. Use the same value in both the parameter key anddlf.catalog.id.

Hive Metastore

Requires engine version esr-4.3.0, esr-3.3.0, esr-2.7.0, or later.

Metadata is stored in a specific Hive Metastore. Add the following parameters to the Spark Configuration section:

spark.sql.extensions org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions

spark.sql.catalog.<catalog-name> org.apache.iceberg.spark.SparkCatalog

spark.sql.catalog.<catalog-name>.catalog-impl org.apache.iceberg.hive.HiveCatalog

spark.sql.catalog.<catalog-name>.uri thrift://<hms-ip>:<port>Replace the following placeholders:

<catalog-name>: The name you assign to this catalog.<hms-ip>: The internal IP address of your Hive Metastore. For an external Metastore, see Connect to an external Hive Metastore service.<port>: The Hive Metastore port, typically9083.

File system

Metadata is stored directly in the file system using HadoopCatalog — no Hive Metastore needed. Add the following parameters to the Spark Configuration section:

spark.sql.extensions org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions

spark.sql.catalog.<catalog-name> org.apache.iceberg.spark.SparkCatalog

spark.sql.catalog.<catalog-name>.type hadoop

spark.sql.catalog.<catalog-name>.warehouse oss://<bucket-name>/warehouseReplace the following placeholders:

<catalog-name>: The name you assign to this catalog.<bucket-name>: The name of your OSS bucket.

Step 2: Run Iceberg SQL

All examples use iceberg_catalog — the catalog name configured in Step 1. If you used a different catalog name, replace iceberg_catalog accordingly.

On the EMR Serverless Spark page, click Data Development in the left-side navigation pane.

On the Development tab, click the

icon.

icon.In the New dialog box, enter a name (for example,

users_task), leave the type as SparkSQL, and click OK.Paste the following SQL into the new tab.

NoteIf you omit the database name, tables are created in the

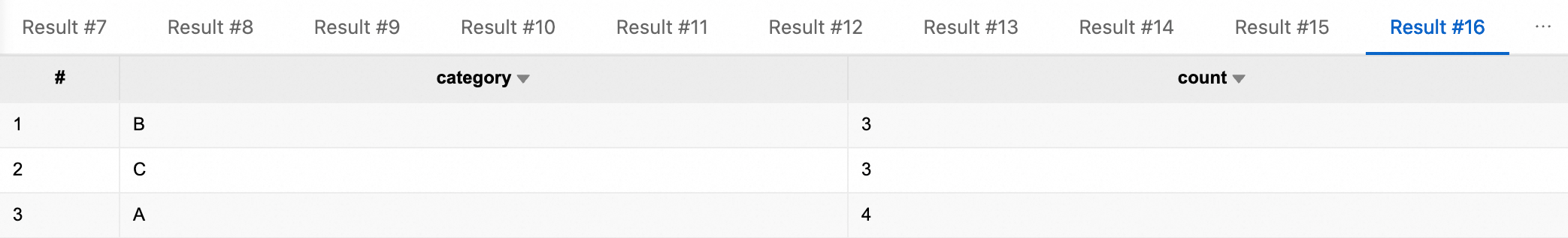

defaultdatabase of the catalog. Create a separate database to organize your tables.-- Create a database CREATE DATABASE IF NOT EXISTS iceberg_catalog.db; -- Create a non-partitioned table CREATE TABLE iceberg_catalog.db.tbl ( id BIGINT NOT NULL COMMENT 'unique id', data STRING ) USING iceberg; -- Insert data into the non-partitioned table INSERT INTO iceberg_catalog.db.tbl VALUES (1, 'Alice'), (2, 'Bob'), (3, 'Charlie'); -- Query all rows SELECT * FROM iceberg_catalog.db.tbl; -- Query with a filter SELECT * FROM iceberg_catalog.db.tbl WHERE id = 2; -- Update a row UPDATE iceberg_catalog.db.tbl SET data = 'David' WHERE id = 3; -- Confirm the update SELECT * FROM iceberg_catalog.db.tbl WHERE id = 3; -- Delete a row DELETE FROM iceberg_catalog.db.tbl WHERE id = 1; -- Confirm the deletion SELECT * FROM iceberg_catalog.db.tbl; -- Create a partitioned table CREATE TABLE iceberg_catalog.db.part_tbl ( id BIGINT, data STRING, category STRING, ts TIMESTAMP, dt DATE ) USING iceberg PARTITIONED BY (dt, category); -- Insert data into the partitioned table INSERT INTO iceberg_catalog.db.part_tbl VALUES (1 , 'data-01', 'A', timestamp'2026-01-01 10:00:00', date'2026-01-01'), (2 , 'data-02', 'A', timestamp'2026-01-01 11:00:00', date'2026-01-01'), (3 , 'data-03', 'A', timestamp'2026-01-02 09:30:00', date'2026-01-02'), (4 , 'data-04', 'B', timestamp'2026-01-02 12:15:00', date'2026-01-02'), (5 , 'data-05', 'B', timestamp'2026-01-03 08:05:00', date'2026-01-03'), (6 , 'data-06', 'B', timestamp'2026-01-03 14:20:00', date'2026-01-03'), (7 , 'data-07', 'C', timestamp'2026-01-04 16:45:00', date'2026-01-04'), (8 , 'data-08', 'C', timestamp'2026-01-04 18:10:00', date'2026-01-04'), (9 , 'data-09', 'C', timestamp'2026-01-05 07:55:00', date'2026-01-05'), (10, 'data-10', 'A', timestamp'2026-01-05 13:35:00', date'2026-01-05'); -- Query all rows from the partitioned table SELECT * FROM iceberg_catalog.db.part_tbl; -- Query by date partition SELECT * FROM iceberg_catalog.db.part_tbl WHERE dt = '2026-01-01'; -- Query by category SELECT * FROM iceberg_catalog.db.part_tbl WHERE category = 'A'; -- Query with multiple partition filters SELECT * FROM iceberg_catalog.db.part_tbl WHERE dt = '2026-01-01' AND category = 'A'; -- Aggregate by category SELECT category, COUNT(*) AS count FROM iceberg_catalog.db.part_tbl GROUP BY category; -- Drop the database (use with caution — the database must be empty first) -- DROP DATABASE iceberg_catalog.db;In the session drop-down list, select the SQL session you created, and click Run. Results appear below the editor after the job completes.

What's next

End-to-end task development: Quick start for Spark SQL development — covers the full SQL task development and orchestration workflow.

Session management: Manage SQL sessions — configure session resources and settings.

Notebook sessions: Manage notebook sessions — use Iceberg with Notebook instead of Spark SQL.

Iceberg SQL reference: Apache Iceberg documentation — DDL commands, querying, writing, and table maintenance.