EMR Serverless Spark lets you write and run SQL jobs directly in the console. This guide walks you through the full workflow: create two SparkSQL jobs, assemble them into a workflow, run the workflow, and verify the results.

By the end of this guide, you will be able to:

Create and publish SparkSQL jobs in the development editor.

Build a workflow that chains those jobs with a dependency.

Run the workflow and monitor its execution.

Verify the output data using a SQL query.

Prerequisites

Before you begin, make sure you have:

An Alibaba Cloud account. For more information, see Sign up for an account.

The required roles assigned. See Grant roles to an Alibaba Cloud account.

A workspace and a SQL session instance created. See Create a workspace and Manage SQL sessions.

Key concepts

Job: A unit of SQL code you develop, test, and publish. Jobs are the building blocks of workflows.

Workflow: A directed pipeline of nodes. Each node runs one published job. Nodes can declare upstream dependencies to control execution order.

Node: A reference to a published job inside a workflow. Adding a node to a workflow and configuring its Upstream Nodes defines the execution graph.
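As a rough mental model of these three concepts, the sketch below represents jobs, nodes, and a workflow as plain Python objects. The class names, fields, and the `add_node` method are hypothetical and are not part of any EMR API; the only rules taken from this guide are that a node references a published job and may name upstream nodes. The SQL string for `users_task` is abbreviated.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    """A unit of SQL code (hypothetical model, not an EMR API object)."""
    name: str
    sql: str
    published: bool = False


@dataclass
class Node:
    """A reference to a published job plus the names of its upstream nodes."""
    job: Job
    upstream: list[str] = field(default_factory=list)


@dataclass
class Workflow:
    name: str
    nodes: dict[str, Node] = field(default_factory=dict)

    def add_node(self, job: Job, upstream: tuple[str, ...] = ()) -> None:
        # Only published jobs can become nodes.
        if not job.published:
            raise ValueError(f"job {job.name!r} must be published first")
        # Every upstream name must already be a node in this workflow.
        missing = [u for u in upstream if u not in self.nodes]
        if missing:
            raise ValueError(f"unknown upstream nodes: {missing}")
        self.nodes[job.name] = Node(job, list(upstream))


users_task = Job("users_task", "INSERT OVERWRITE TABLE students ...", published=True)
users_count = Job("users_count", "SELECT COUNT(1) FROM students;", published=True)

workflow = Workflow("spark_workflow_task")
workflow.add_node(users_task)
workflow.add_node(users_count, upstream=("users_task",))
```

In this model, adding an unpublished job raises an error, which mirrors the requirement that a job be published before it is added to a workflow.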

Step 1: Create and publish the jobs

A job must be published before it can be added to a workflow.

Navigate to the development editor:

Log on to the EMR console.

In the left navigation pane, choose .

Click the target workspace name.

In the left navigation pane, click Development.

Create the users_task job

On the Development tab, click the icon.

In the New dialog box, enter users_task as the name, leave the type as SparkSQL, and click OK.

Copy the following code into the users_task tab:

    CREATE TABLE IF NOT EXISTS students (
      name VARCHAR(64),
      address VARCHAR(64)
    )
    USING PARQUET
    PARTITIONED BY (data_date STRING)
    OPTIONS (
      'path'='oss://<bucketname>/path/'
    );

    INSERT OVERWRITE TABLE students PARTITION (data_date = '${ds}') VALUES
      ('Ashua Hill', '456 Erica Ct, Cupertino'),
      ('Brian Reed', '723 Kern Ave, Palo Alto');

The ${ds} placeholder is a date variable. The default value is the previous day. The following table lists all supported date variables.

| Variable | Data type | Format | Example |
| --- | --- | --- | --- |
| {data_date} | str | YYYY-MM-DD | 2023-09-18 |
| {ds} | str | YYYY-MM-DD | 2023-09-18 |
| {dt} | str | YYYY-MM-DD | 2023-09-18 |
| {data_date_nodash} | str | YYYYMMDD | 20230918 |
| {ds_nodash} | str | YYYYMMDD | 20230918 |
| {dt_nodash} | str | YYYYMMDD | 20230918 |
| {ts} | str | YYYY-MM-DDTHH:MM:SS | 2023-09-18T16:07:43 |
| {ts_nodash} | str | YYYYMMDDHHMMSS | 20230918160743 |

From the database and session drop-down lists, select a database and a running session instance. To create a new session instead, select Connect to SQL Session from the drop-down list. See Manage SQL sessions.

Click Run. Results appear on the Execution Results tab. If an exception occurs, check the Execution Issues tab.

Click Publish.

In the dialog box, enter a description and click OK.

Job parameters are published with the job and applied when the job runs in a pipeline. Session parameters are applied when the job runs in the SQL editor.
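To preview what the date variables used in users_task look like for a given moment, the sketch below reproduces the documented formats locally with `strftime` and substitutes `${ds}` with Python's `string.Template`. This is only a local emulation: the function name `date_variables` and the `reference` argument are hypothetical, and how the console itself resolves the variables is not shown in this guide. The reference time is chosen to match the example values in the date variable table.

```python
from datetime import datetime
from string import Template


def date_variables(reference: datetime) -> dict[str, str]:
    """Format one reference time into the date variables listed in the guide.

    Assumption: every variable derives from the same reference time. For
    ${ds}, the guide states that the default is the previous day.
    """
    dashed = reference.strftime("%Y-%m-%d")
    nodash = reference.strftime("%Y%m%d")
    return {
        "data_date": dashed,
        "ds": dashed,
        "dt": dashed,
        "data_date_nodash": nodash,
        "ds_nodash": nodash,
        "dt_nodash": nodash,
        "ts": reference.strftime("%Y-%m-%dT%H:%M:%S"),
        "ts_nodash": reference.strftime("%Y%m%d%H%M%S"),
    }


variables = date_variables(datetime(2023, 9, 18, 16, 7, 43))

# string.Template uses the same ${name} placeholder syntax as the job code.
statement = Template(
    "INSERT OVERWRITE TABLE students PARTITION (data_date = '${ds}')"
)
rendered = statement.substitute(variables)
print(rendered)
# INSERT OVERWRITE TABLE students PARTITION (data_date = '2023-09-18')
```

Rendering the statement this way is a quick check that the partition value has the expected YYYY-MM-DD shape before the job is published.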

Create the users_count job

On the Development tab, click the icon.

In the New dialog box, enter users_count as the name, leave the type as SparkSQL, and click OK.

Copy the following code into the users_count tab:

    SELECT COUNT(1) FROM students;

From the database and session drop-down lists, select a database and a running session instance. To create a new session instead, select Connect to SQL Session from the drop-down list. See Manage SQL sessions.

Click Run. Results appear on the Execution Results tab. If an exception occurs, check the Execution Issues tab.

Click Publish.

In the dialog box, enter a description and click OK.

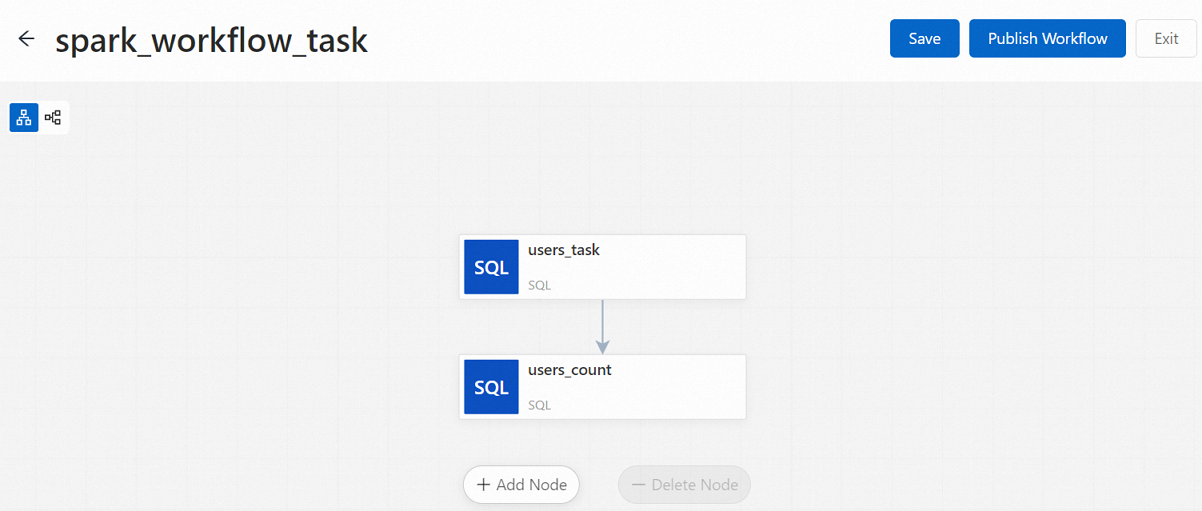
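The two jobs interact in a way that is easy to miss: users_task overwrites a single date partition, while users_count counts rows across all partitions. The sketch below emulates that behavior locally with SQLite, which has no partitions or INSERT OVERWRITE, so the overwrite is approximated by a delete followed by an insert in one transaction. The helper names and the in-memory table are illustrative assumptions, not how EMR Serverless Spark runs the jobs.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE IF NOT EXISTS students (name TEXT, address TEXT, data_date TEXT)"
)

ROWS = [
    ("Ashua Hill", "456 Erica Ct, Cupertino"),
    ("Brian Reed", "723 Kern Ave, Palo Alto"),
]


def overwrite_partition(data_date: str) -> None:
    """Emulate INSERT OVERWRITE ... PARTITION (data_date = ...) in SQLite."""
    with conn:  # one transaction: clear the partition, then write it again
        conn.execute("DELETE FROM students WHERE data_date = ?", (data_date,))
        conn.executemany(
            "INSERT INTO students (name, address, data_date) VALUES (?, ?, ?)",
            [(name, address, data_date) for name, address in ROWS],
        )


def users_count() -> int:
    """Same query as the users_count job: counts rows in every partition."""
    return conn.execute("SELECT COUNT(1) FROM students").fetchone()[0]


overwrite_partition("2023-09-18")
overwrite_partition("2023-09-18")  # rerun for the same date: rows are replaced
count_same_day = users_count()
overwrite_partition("2023-09-19")  # a new date adds a second partition
count_two_days = users_count()
print(count_same_day, count_two_days)  # 2 4
```

Rerunning for the same date leaves the count unchanged, while each new date adds two rows, so the number reported by users_count grows with the number of distinct dates written.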

Step 2: Create a workflow

In the left navigation pane, click Workflows.

On the Workflows page, click Create Workflow.

In the Create Workflow panel, enter a name such as

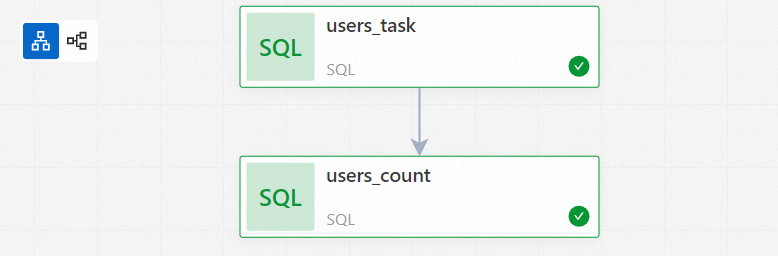

spark_workflow_task, then click Next. Configure the options in Other Settings as needed. See Manage workflows for parameter details.Add the

users_tasknode:On the node canvas, click Add Node.

In the Add Node panel, select

users_taskfrom the Source Path drop-down list, then click Save.

Add the

users_countnode:Click Add Node.

In the Add Node panel, select

users_countfrom the Source Path drop-down list, selectusers_taskfrom the Upstream Nodes drop-down list, then click Save.

Click Publish Workflow.

In the Publish dialog box, enter a description and click OK.
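The Upstream Nodes setting is what fixes the execution order of the workflow. As an illustration only, the dependency configured in this step can be written as a mapping from each node to its upstream nodes and ordered with Python's standard `graphlib` module. The variable names are hypothetical, and the scheduler's actual implementation is not described in this guide.

```python
from graphlib import TopologicalSorter

# Node name -> set of upstream node names, as configured in this step.
upstream_nodes = {
    "users_task": set(),
    "users_count": {"users_task"},
}

# static_order() yields each node only after all of its upstream nodes.
execution_order = list(TopologicalSorter(upstream_nodes).static_order())
print(execution_order)  # ['users_task', 'users_count']
```

Because users_count lists users_task as its upstream node, the count runs only after the table has been written.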

Step 3: Run the workflow

On the Workflows page, click the workflow name (for example, spark_workflow_task).

On the Workflow Runs page, click Run.

Note: After you configure a scheduling cycle, you can also start the schedule on the Workflows page by turning on the switch.

In the Start Workflow dialog box, click OK.

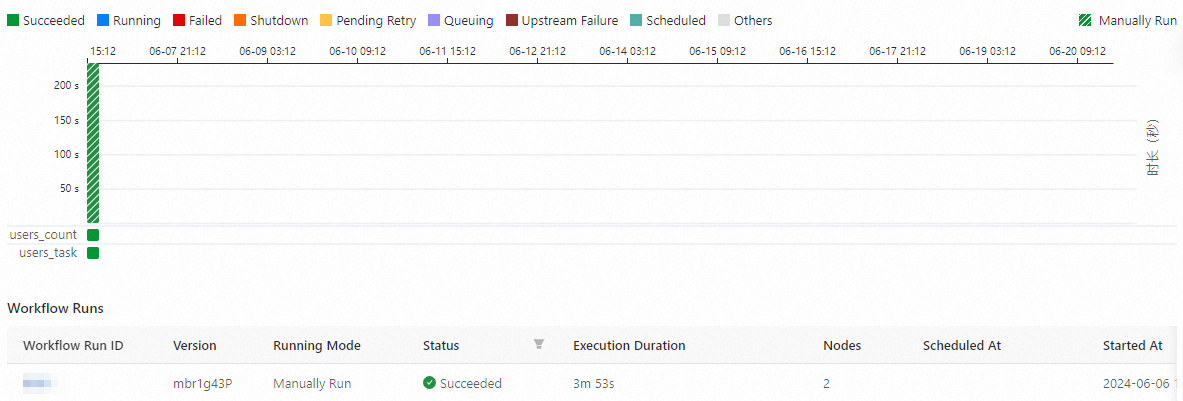

Step 4: Monitor the run

On the Workflows page, click the target workflow.

On the Workflow Runs page, view all workflow instances with their runtime and status.

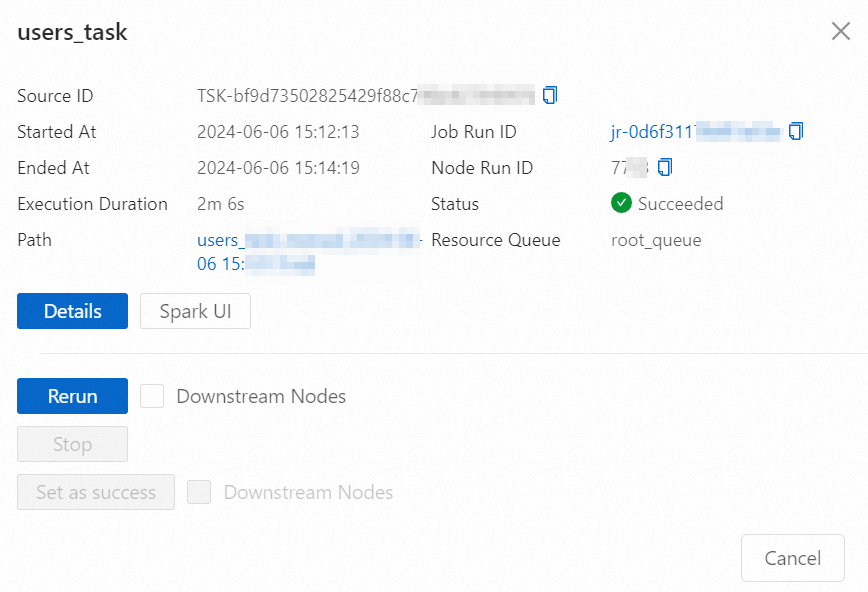

Click a Workflow Run ID in the Workflow Runs section, or open the Workflow Runs Diagram tab, to view the instance graph.

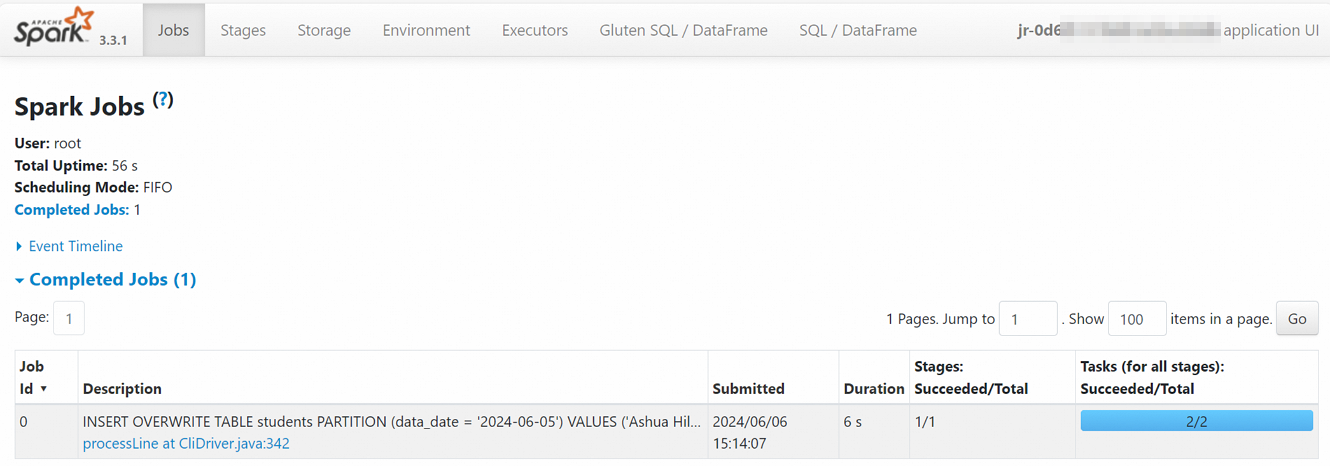

Click a node instance to open the node information dialog, where you can perform operations or view details. See View node instances for available operations. Click Spark UI to open the Spark Jobs page and view real-time task information.

Click the Name to open the Job History page, where you can review metrics, diagnostics, and logs.

Step 5: Manage the workflow

On the Workflows page, click the workflow name to open the Workflow Runs page:

In the Workflow Information section, edit workflow parameters as needed.

In the Workflow Runs section, view all workflow instances. Click a Workflow Run ID to open the corresponding instance graph.

Step 6: Verify the data

In the left navigation pane, click Development.

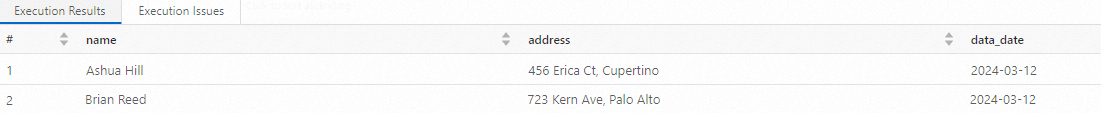

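Create a SparkSQL job and run the following query to confirm the data was written:

    SELECT * FROM students;

The query returns the rows that users_task inserted (Ashua Hill and Brian Reed), with data_date set to the value that ${ds} resolved to for the run.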

What's next

Set up a resource queue to control compute allocation: Manage resource queues.

Learn more about SQL session management: Manage SQL sessions.