This tutorial walks you through running a PySpark Structured Streaming job on EMR Serverless Spark. The job reads from a Kafka topic hosted on an EMR on ECS cluster and processes the stream in real time—without managing any servers.

Overview

The end-to-end setup covers seven steps:

-

Provision a Kafka cluster — create a Dataflow cluster on EMR on ECS and produce messages to a Kafka topic.

-

Connect the networks — create a Network Connection so EMR Serverless Spark can reach the Kafka broker.

-

Open the Kafka port — add a security group rule to allow inbound traffic on port 9092.

-

Upload Kafka JARs to OSS — place the required Kafka and Spark connector JARs in Object Storage Service (OSS).

-

Upload the PySpark script — add your Python script to the Artifacts page.

-

Create and start the streaming job — configure, publish, and start the job in the Development console.

-

View logs — inspect execution output on the Log Exploration tab.

Prerequisites

Before you begin, ensure that you have:

-

A workspace. See Create a workspace.

Step 1: Create a real-time Dataflow cluster and generate messages

-

On the EMR on ECS page, create a Dataflow cluster that includes the Kafka service. For more information, see Create a cluster.

-

Log on to the master node of the EMR cluster. For more information, see Log on to a cluster.

-

Switch to the Taihao exporter log directory.

cd /var/log/emr/taihao_exporter

-

Create a Kafka topic named taihaometrics with 10 partitions and a replication factor of 2.

kafka-topics.sh --partitions 10 --replication-factor 2 --bootstrap-server core-1-1:9092 --topic taihaometrics --create

-

Start streaming messages to the topic.

tail -f metrics.log | kafka-console-producer.sh --broker-list core-1-1:9092 --topic taihaometrics

Step 2: Create a network connection

EMR Serverless Spark runs in an isolated network environment. A Network Connection bridges it to the virtual private cloud (VPC) where your EMR cluster runs, enabling the Spark job to reach the Kafka broker at core-1-1:9092.

-

In the left navigation pane of the EMR console, choose EMR Serverless > Spark.

-

On the Spark page, click the name of the target workspace.

-

On the EMR Serverless Spark page, click Network Connection in the left navigation pane.

-

On the Network Connection page, click Create Network Connection.

-

In the Create Network Connection dialog box, configure the following parameters, and then click OK. When the Status changes to Succeeded, the network connection is ready.

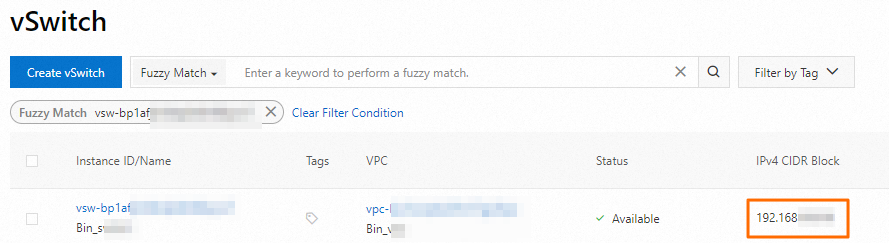

| Parameter | Description |
| --- | --- |
| Name | Enter a name for the connection. For example, connection_to_emr_kafka. |
| VPC | Select the same VPC as the EMR cluster. If no VPC is available, click Create VPC to create one. For more information, see Create and manage a VPC. |
| vSwitch | Select the vSwitch in the same VPC as the EMR cluster. If no vSwitch is available in the current zone, create one in the VPC console. For more information, see Create and manage a vSwitch. |

Step 3: Add a security group rule for the EMR cluster

The EMR cluster's security group must allow inbound traffic from the EMR Serverless Spark network on port 9092 (the Kafka broker port).

-

Obtain the CIDR block of the vSwitch that the cluster nodes use. On the Nodes page, click a node group name to view the associated vSwitch information. Then, look up the CIDR block on the vSwitch page of the VPC console.

-

Add an inbound security group rule.

-

On the EMR on ECS page, click the ID of the target cluster.

-

On the Basic Information page, click the link next to Cluster Security Group.

-

On the Security Group Details page, in the Rules section, click Add Rule. Configure the following parameters, and then click OK.

| Parameter | Description |
| --- | --- |
| Source | Enter the CIDR block of the vSwitch you obtained in the previous step. Important: Do not set this to 0.0.0.0/0, as it exposes the cluster to external access. |
| Destination (Current Instance) | Enter port 9092. |
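Before you save the rule, it can help to sanity-check the Source value. The following is a minimal sketch that uses only the Python standard library `ipaddress` module. The function name `check_source_cidr` and the sample addresses are hypothetical and are not values from this tutorial. The sketch assumes you know the internal IP address of one cluster node in the vSwitch.

```python
import ipaddress


def check_source_cidr(source_cidr, node_ip):
    """Return a list of problems with a proposed Source CIDR (empty if none).

    source_cidr: the vSwitch CIDR block you plan to enter as Source.
    node_ip: internal IP of a cluster node attached to that vSwitch.
    """
    problems = []
    # strict=True raises ValueError if the CIDR has host bits set.
    network = ipaddress.ip_network(source_cidr, strict=True)
    if network.prefixlen == 0:
        problems.append("Source is open to every address (0.0.0.0/0).")
    if ipaddress.ip_address(node_ip) not in network:
        problems.append(f"{node_ip} is not inside {source_cidr}.")
    return problems


# Hypothetical values for illustration only.
print(check_source_cidr("192.168.0.0/24", "192.168.0.12"))
```

An empty list means the CIDR block is well formed, is not fully open, and contains the node address you supplied. The check does not query the VPC console, so it cannot confirm that the block belongs to the right vSwitch.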

Step 4: Upload JAR packages to OSS

The streaming job requires four JAR packages for Kafka connectivity. Download kafka.zip, extract it, and upload all four JARs to OSS. For upload instructions, see Simple upload.

The archive contains:

-

commons-pool2-2.11.1.jar

-

kafka-clients-2.8.1.jar

-

spark-sql-kafka-0-10_2.12-3.3.1.jar

-

spark-token-provider-kafka-0-10_2.12-3.3.1.jar

Record the OSS paths after upload—you will reference them in Step 6.
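Because the four paths are later joined into a single comma-separated value, a small helper can reduce copy-and-paste mistakes. The following is a minimal sketch that assumes all four JARs were uploaded to the same OSS directory. The prefix `oss://my-bucket/kafka-jars` and the function name `build_spark_jars` are hypothetical.

```python
# JAR file names as listed in the kafka.zip archive.
KAFKA_JARS = [
    "commons-pool2-2.11.1.jar",
    "kafka-clients-2.8.1.jar",
    "spark-sql-kafka-0-10_2.12-3.3.1.jar",
    "spark-token-provider-kafka-0-10_2.12-3.3.1.jar",
]


def build_spark_jars(oss_prefix):
    """Join the four JAR names onto one OSS prefix, comma-separated."""
    if not oss_prefix.startswith("oss://"):
        raise ValueError("OSS paths must start with oss://")
    prefix = oss_prefix.rstrip("/")
    return ",".join(f"{prefix}/{name}" for name in KAFKA_JARS)


# Hypothetical bucket and directory; replace with your own upload location.
print("spark.jars", build_spark_jars("oss://my-bucket/kafka-jars"))
```

The printed line has the same key-and-value shape as the Spark Configurations entry used in Step 6. If your JARs live in different directories, build the value by hand instead.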

Step 5: Upload the resource file

-

On the EMR Serverless Spark page, click Artifacts in the left navigation pane.

-

On the Artifacts page, click Upload File.

-

In the Upload File dialog box, click the upload area and select the pyspark_ss_demo.py file.
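This tutorial does not show the contents of pyspark_ss_demo.py. For orientation, the following is a minimal sketch of what such a script could look like, not the actual file. It assumes the broker IP address arrives as the first command-line argument (the value entered under Execution Parameters in Step 6), that the topic is taihaometrics from Step 1, and that writing raw lines to the console sink is enough for a demo. The helper name `kafka_options` is hypothetical.

```python
import sys


def kafka_options(broker_ip, topic="taihaometrics", port=9092):
    """Build the options passed to Spark's Kafka source."""
    return {
        "kafka.bootstrap.servers": f"{broker_ip}:{port}",
        "subscribe": topic,
        "startingOffsets": "latest",
    }


def main(broker_ip):
    # Imported here so the helper above can be used without Spark installed.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.appName("pyspark_ss_demo").getOrCreate()

    reader = spark.readStream.format("kafka")
    for key, value in kafka_options(broker_ip).items():
        reader = reader.option(key, value)
    stream = reader.load()

    # Kafka values arrive as bytes; cast them to strings for display.
    lines = stream.selectExpr("CAST(value AS STRING) AS line")

    query = (
        lines.writeStream.format("console")
        .outputMode("append")
        .option("truncate", "false")
        .start()
    )
    query.awaitTermination()


if __name__ == "__main__":
    if len(sys.argv) > 1:
        main(sys.argv[1])
    else:
        print("usage: pyspark_ss_demo.py <kafka-broker-internal-ip>")
```

The Kafka source used here is provided by the spark-sql-kafka JAR from Step 4, which is why the job needs those JARs on its classpath.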

Step 6: Create and start a streaming job

-

On the EMR Serverless Spark page, click Development in the left navigation pane.

-

On the Development tab, click the icon to create a new job.

-

Enter a name, select Application(Streaming) > PySpark as the job type, and then click OK.

-

In the new tab, configure the following parameters. Leave all other parameters at their default values.

| Parameter | Description |
| --- | --- |
| Main Python Resource | Select pyspark_ss_demo.py, the file you uploaded in Step 5. |
| Engine Version | Select the Spark version. For available versions, see Engine versions. |
| Execution Parameters | Enter the internal IP address of the core-1-1 node. Find it under the Core node group on the Nodes page of the EMR cluster. |
| Spark Configurations | Enter the Spark configuration. See the example below. |

The two required Spark configuration parameters are:

| Parameter | Required | Description |
| --- | --- | --- |
| spark.jars | Yes | Comma-separated OSS paths of the four Kafka JARs. All four must be included. |
| spark.emr.serverless.network.service.name | Yes | Name of the network connection created in Step 2. |

Spark Configurations example:

spark.jars oss://<path>/commons-pool2-2.11.1.jar,oss://<path>/kafka-clients-2.8.1.jar,oss://<path>/spark-sql-kafka-0-10_2.12-3.3.1.jar,oss://<path>/spark-token-provider-kafka-0-10_2.12-3.3.1.jar

spark.emr.serverless.network.service.name connection_to_emr_kafka

Replace oss://<path>/ with the actual OSS paths of the JARs you uploaded in Step 4, and replace connection_to_emr_kafka with the name of the network connection you created in Step 2.

-

Click Save.

-

Click Publish, and then click OK in the Publish dialog box.

-

Start the streaming job.

-

Click Go To O&M.

-

Click Start.

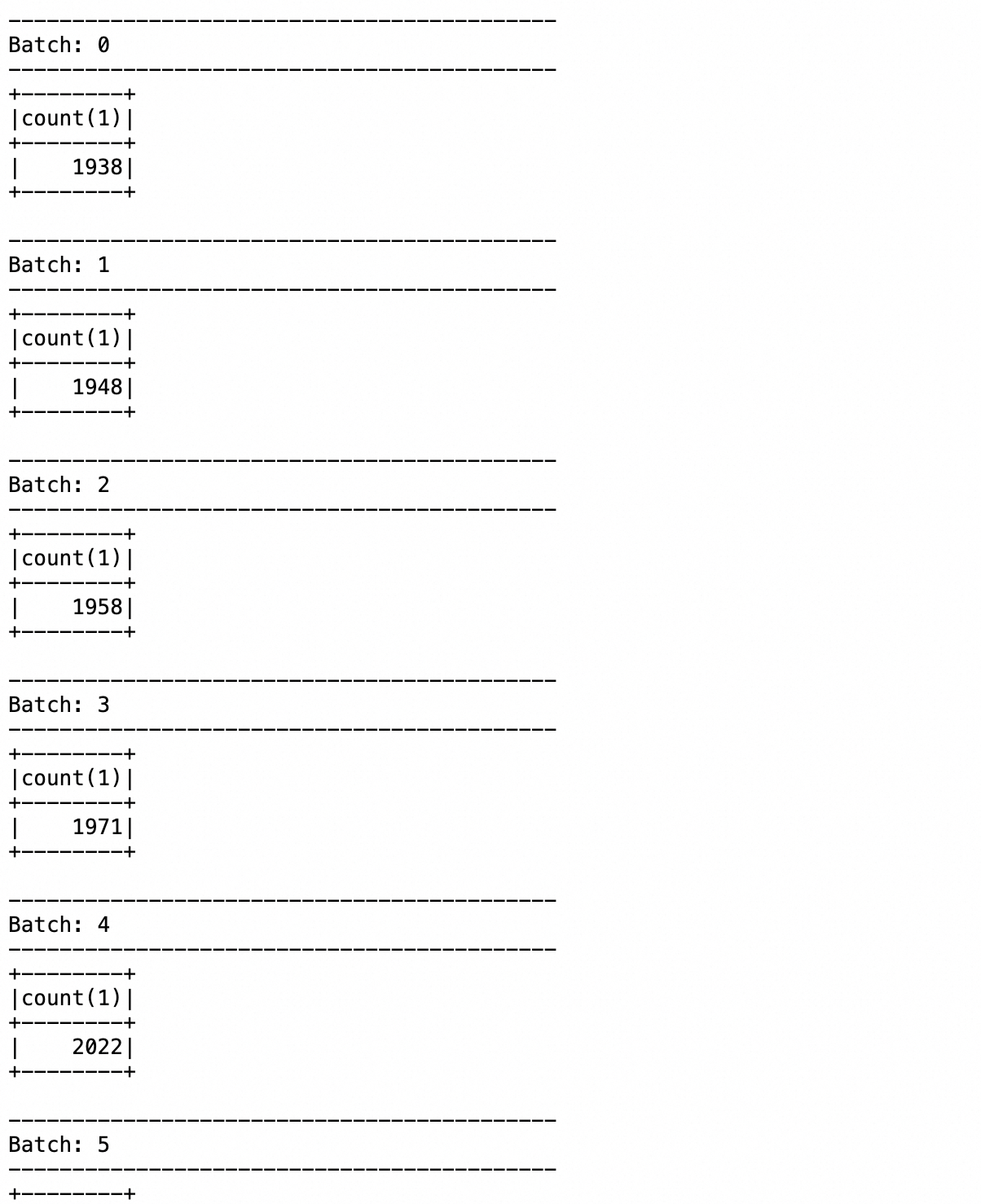

Step 7: View logs

-

Click the Log Exploration tab.

-

Review the application execution output and returned results.

Troubleshooting

Network connection status does not reach Succeeded

Verify that the VPC and vSwitch you selected in Step 2 match those of the EMR cluster. Mismatched network settings are the most common cause of connection failures.

Streaming job cannot connect to Kafka

If the job fails with a connection timeout or broker unreachable error:

-

Confirm the security group rule in Step 3 allows inbound traffic on port 9092 from the correct CIDR block.

-

Verify the Execution Parameters field contains the correct internal IP address of the core-1-1 node.

-

Check that spark.emr.serverless.network.service.name matches the name of the network connection you created in Step 2.

-

Confirm all four JAR paths in spark.jars point to valid OSS objects.
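The last two checks can be partly automated before you publish the job. The following is a minimal sketch that assumes the Spark Configurations text uses one "key value" pair per line, as in the Step 6 example. The function names are hypothetical. The sketch only inspects the text: it does not contact OSS, so it cannot confirm that the objects exist.

```python
REQUIRED_KEYS = ("spark.jars", "spark.emr.serverless.network.service.name")


def parse_spark_conf(text):
    """Parse 'key value' lines into a dict, skipping blanks and # comments."""
    conf = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        conf[parts[0]] = parts[1].strip() if len(parts) > 1 else ""
    return conf


def check_spark_conf(text, expected_jars=4):
    """Return a list of problems found in the configuration text."""
    conf = parse_spark_conf(text)
    problems = [f"missing {key}" for key in REQUIRED_KEYS if not conf.get(key)]
    jars = [j for j in conf.get("spark.jars", "").split(",") if j]
    if jars and len(jars) != expected_jars:
        problems.append(f"expected {expected_jars} JARs, found {len(jars)}")
    problems += [f"not an OSS path: {j}" for j in jars if not j.startswith("oss://")]
    problems += [f"placeholder left in: {j}" for j in jars if "<path>" in j]
    return problems
```

Paste the contents of the Spark Configurations field into `check_spark_conf` and review any messages it returns. An empty list means both required keys are present, four JAR paths are listed, and no `<path>` placeholder remains.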

What's next

For more information about PySpark development, see PySpark development quick start.