Serverless Spark supports reading from and writing to MySQL through a Java Database Connectivity (JDBC) connector. The MySQL JDBC driver (version 8.0.33) loads automatically at startup — no manual installation required. Connect via an SQL session, a Notebook session, or a Spark batch job.

Prerequisites

Before you begin, ensure that you have:

A Serverless Spark workspace. See Create a workspace.

A MySQL instance — self-managed, ApsaraDB RDS for MySQL, or PolarDB for MySQL. The examples in this topic use ApsaraDB RDS for MySQL.

Network connectivity established between Serverless Spark and MySQL. See Establish network connectivity between EMR Serverless Spark and other VPCs. When you add security group rules, open TCP port 3306.
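To confirm that the security group rule took effect, a quick TCP reachability check can be run from a Notebook session that uses the network connection. The following is a minimal sketch using only the Python standard library; the function name `can_reach` and the host value you pass to it are illustrative assumptions, not part of the product.

```python
import socket


def can_reach(host: str, port: int = 3306, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the host and opens a TCP socket.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False


# Hypothetical usage: replace the placeholder with your MySQL endpoint.
# print(can_reach("<mysql_host>"))
```

A result of `False` only shows that the port could not be reached from where the code ran; it does not distinguish between a missing security group rule, a wrong endpoint, and a stopped instance.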

Never hardcode database credentials in your code. Store the username and password in a secrets manager and reference them at runtime.
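One way to follow this advice is to have the job read the connection details from environment variables that your secrets tooling populates at runtime. The sketch below assumes three hypothetical variable names (`MYSQL_JDBC_URL`, `MYSQL_USER`, `MYSQL_PASSWORD`); how they get set depends on your secrets manager and is not covered here.

```python
import os

# Hypothetical environment variable names; adjust them to your setup.
REQUIRED_VARS = ("MYSQL_JDBC_URL", "MYSQL_USER", "MYSQL_PASSWORD")


def mysql_jdbc_options(db_table: str) -> dict:
    """Build Spark JDBC options, taking URL and credentials from the environment."""
    missing = [name for name in REQUIRED_VARS if not os.environ.get(name)]
    if missing:
        # Fail early with a clear message instead of sending empty credentials.
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {
        "url": os.environ["MYSQL_JDBC_URL"],
        "dbtable": db_table,
        "user": os.environ["MYSQL_USER"],
        "password": os.environ["MYSQL_PASSWORD"],
    }


# Hypothetical usage in a Notebook session, where `spark` already exists:
# df = spark.read.format("jdbc").options(**mysql_jdbc_options("<db>.<table>")).load()
```

The returned dictionary uses the same four option names as the examples in this topic, so it can be passed to either a reader or a writer.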

Connect via an SQL session

In Session Manager, create an SQL session and select a pre-configured Network Connection. See Create an SQL session.

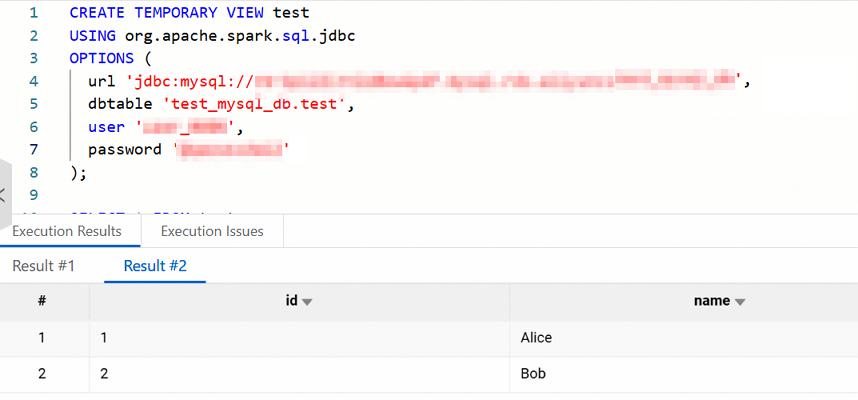

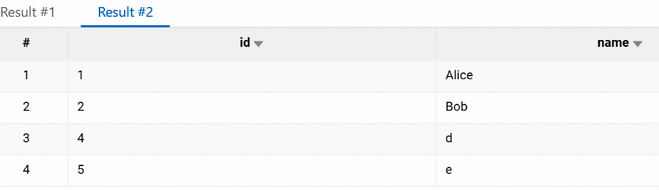

In Data Development, create a SparkSQL task and run the following SQL to verify the connection:

    CREATE TEMPORARY VIEW test
    USING org.apache.spark.sql.jdbc
    OPTIONS (
      url 'jdbc:mysql://<jdbc_url>/',
      dbtable '<db>.<table>',
      user '<username>',
      password '<password>'
    );

    SELECT * FROM test;

| Parameter | Description |
| --- | --- |
| url | JDBC connection string. Format: jdbc:mysql://<jdbc_url>/ |
| dbtable | Database table to read. Format: <db>.<table> (for example, test_mysql_db.test) |
| user | MySQL username. The user must have read permissions on the target table. |
| password | MySQL password |

A successful connection returns the table contents.

Insert data into the MySQL table:

    INSERT INTO test VALUES (4, 'd'), (5, 'e');

    SELECT * FROM test;

If the inserted rows appear in the query results, the write operation succeeded.

Connect via a Notebook session

In Session Manager, create a Notebook session and select a pre-configured Network Connection. See Create a Notebook session.

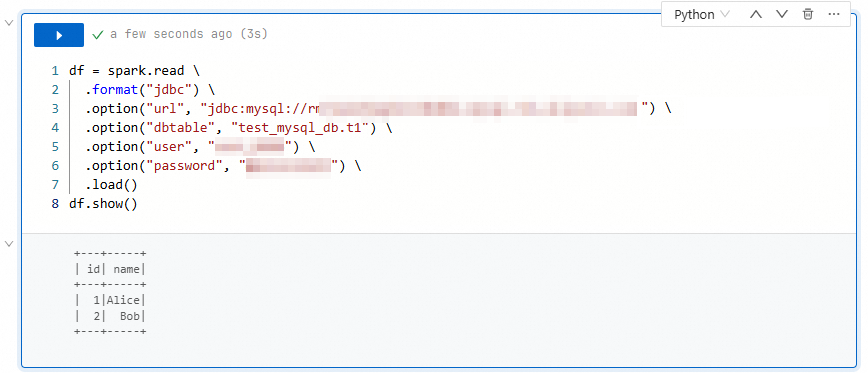

In Data Development, create an Interactive Development > Notebook task and run the following Python code to verify the connection:

    df = spark.read \
        .format("jdbc") \
        .option("url", "jdbc:mysql://<jdbc_url>") \
        .option("dbtable", "<db>.<table>") \
        .option("user", "<username>") \
        .option("password", "<password>") \
        .load()

    df.show()

A successful connection returns the table contents.

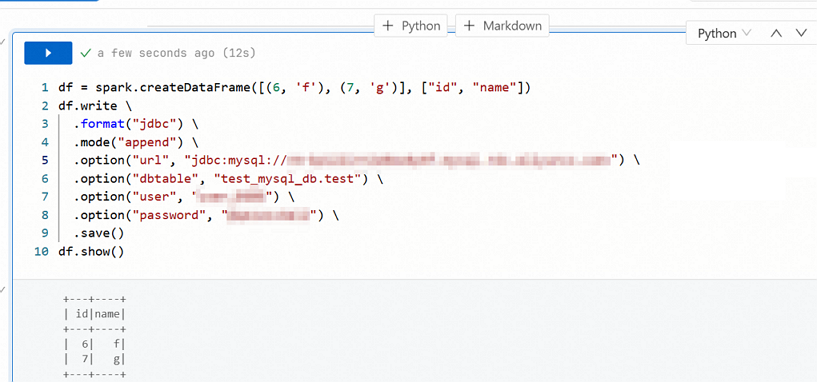

Insert data into the MySQL table:

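    df = spark.createDataFrame([(6, 'f'), (7, 'g')], ["id", "name"])

    df.write \
        .format("jdbc") \
        .mode("append") \
        .option("url", "jdbc:mysql://<jdbc_url>") \
        .option("dbtable", "<db>.<table>") \
        .option("user", "<username>") \
        .option("password", "<password>") \
        .save()

    # Re-read the table from MySQL. Calling df.show() here would only
    # display the local rows, not what was actually written.
    spark.read \
        .format("jdbc") \
        .option("url", "jdbc:mysql://<jdbc_url>") \
        .option("dbtable", "<db>.<table>") \
        .option("user", "<username>") \
        .option("password", "<password>") \
        .load() \
        .show()

mode("append") adds rows to the existing table without overwriting or deleting existing data. If the inserted rows appear in the query results, the write operation succeeded.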
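The read and write calls in this section repeat the same four options. As a possible simplification, they can share one options dictionary through the `options(**kwargs)` method that both the PySpark reader and writer provide. The helper name `append_and_read_back` is a hypothetical choice for illustration, and the sketch assumes `spark` is the session that the Notebook provides.

```python
def append_and_read_back(spark, rows, columns, jdbc_options):
    """Append rows to the MySQL table, then load the table again from MySQL.

    jdbc_options is a dict with the keys url, dbtable, user, and password.
    """
    new_rows = spark.createDataFrame(rows, columns)

    # mode("append") adds the rows without touching existing data.
    new_rows.write.format("jdbc").mode("append").options(**jdbc_options).save()

    # Reading back from MySQL shows what the table contains after the write.
    return spark.read.format("jdbc").options(**jdbc_options).load()


# Hypothetical usage in a Notebook session:
# opts = {"url": "jdbc:mysql://<jdbc_url>", "dbtable": "<db>.<table>",
#         "user": "<username>", "password": "<password>"}
# append_and_read_back(spark, [(6, "f"), (7, "g")], ["id", "name"], opts).show()
```

Keeping the options in one place means the read-back is guaranteed to target the same table as the write.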

Connect via a Spark batch job

Compile the following Scala code and package it into a JAR file:

    package spark.test

    import org.apache.spark.sql.SparkSession

    object Main {
      def main(args: Array[String]): Unit = {
        val spark = SparkSession.builder()
          .appName("test")
          .getOrCreate()

        val newRows = spark.createDataFrame(Seq((6, "f"), (7, "g"))).toDF("id", "name")

        newRows.write.format("jdbc")
          .mode("append")
          .option("url", "jdbc:mysql://<jdbc_url>")
          .option("dbtable", "<db>.<table>")
          .option("user", "<username>")
          .option("password", "<password>")
          .save()

        spark.read.format("jdbc")
          .option("url", "jdbc:mysql://<jdbc_url>")
          .option("dbtable", "<db>.<table>")
          .option("user", "<username>")
          .option("password", "<password>")
          .load()
          .show()

        spark.stop()
      }
    }

In Data Development, create a Batch Job > JAR task and configure the following parameters. See Develop a batch job or streaming job for details.

| Parameter | Value |
| --- | --- |
| Main JAR Resource | Path to the JAR file |
| Main Class | spark.test.Main |
| Network Connection | An existing network connection |

After the task completes, click Log Investigation in the Run Record section. On the Stdout tab of the Driver Log, verify that the MySQL table contents appear as expected.