This topic provides answers to some frequently asked questions about StarRocks.

Business testing and evaluation

Purchasing

Usage

How does the COUNT function work in various StarRocks data models?

How do I reduce the disk usage of the /mnt/disk1/starrocks/storage/trash/ directory?

What do I do if an error occurs when I create a materialized view?

Why do I fail to access a StarRocks cluster by using port 8030 or port 8040?

How does StarRocks distribute data to the data disks of backend (BE) nodes?

After task nodes are added to a StarRocks cluster, the nodes are not used to run tasks. Why?

What requirements does StarRocks have for hardware resources?

Machine configurations

We recommend that the servers for backend (BE) nodes have at least 16 CPU cores and 64 GB of memory, and the servers for frontend (FE) nodes have at least 8 CPU cores and 16 GB of memory.

In most cases, the servers for FE nodes in a production environment have 16 CPU cores, 32 or 64 GB of memory, and an NVMe disk with 200 to 500 GB of memory.

Disks

HDD and SSD can be used.

The disk capacity can be estimated based on the following condition: The compression ratio is 3, and the disk usage is 70% to 75%.

If you want to import Hive data in Parquet or ORC format into StarRocks, estimate the disk capacity based on the compression ratio of 1:1.

For example, if the size of the Hive data that you want to import is 3 TB, the size of the data imported into StarRocks is 3 TB.

CPU

The CPU must support the Advanced Vector Extensions 2 (AVX2) instruction set. You can run the

cat /proc/cpuinfo |grep avx2command to determine whether the AVX2 instruction set is supported.The fully vectorized execution engine makes more efficient use of CPU processing power if the CPU has the instruction set. If not, we recommend that you change the server.

Network

We recommend that you select 10GE network interface controllers (NICs) and 10GE switches.

What requirements does StarRocks have for software configurations?

For more information, see Parameter configuration.

How many replicas do I need to specify in a production environment?

In most cases, 2 or 3 replicas are needed in a production environment. We recommend that you specify 3 replicas.

How do I determine the number of partitions of a table?

Proper partitions help you effectively decrease the amount of data to be scanned.

In most cases, you can select partition keys based on your business requirements. For example, you can select the time or region column as the partition key.

If you need partitions to be automatically created, you can enable dynamic partitioning.

How do I determine the number of tablets?

You can select a column with high cardinality as a bucketing key to prevent data skew between tablets.

If a unique ID column exists, we recommend that you select the unique ID column as the bucketing key.

If data cannot be evenly distributed into each tablet by using one bucketing column, you can select multiple columns as the bucketing key. However, do not use an excessive amount of columns as the bucketing key.

You can estimate the optimal size of tablets based on the following information. The number of tablets can be calculated based on the following items and the total data amount.

For raw data, such as data in the CSV format, the size of a tablet ranges from 1 GB to 10 GB.

For data in the Parquet format, the size of a tablet is about 1 GB.

If you want to make full use of machine resources, use the following formula to calculate the number of tablets:

Number of BE nodes × Number of CPU cores/2.

How do I determine the sort key?

The sort key should be designed based on the characteristics of data queries.

You can select columns that are frequently used for filter conditions and in the Group BY clause as the sort key to accelerate queries.

If a large number of point queries are often performed, set the ID column of the point queries as the first column of the sort key columns.

For example, if the query statement is

select sum(revenue) from lineorder where user_id='aaa100';and high concurrency occurs, we recommend that you set theuser_idcolumn as the first column of the sort key columns.If aggregate queries and scans are mainly performed, we recommend that you set the columns with low cardinality first.

For example, if the query statement is

select region, nation, count(*) from lineorder_flat group by region, nation, we recommend that you specify theregioncolumn as the first column and thenationcolumn as the second column.

How do I select proper data types?

We recommend that you select data types with high precision. For example, select an integer data type rather than a string data type, and select the INT data type rather than the BIGINT data type. Data types with high precision can help you fully make use of the performance of databases.

How do I perform parameter tuning when the Routine Load import method has performance issues?

Parameter tuning policies

If the Routine Load import method has performance issues, you can perform parameter tuning from the following dimensions:

Job scheduling cycle

You can modify the max_batch_interval parameter to shorten the scheduling cycle and accelerate data consumption. However, if you shorten the scheduling cycle, more CPU resources may be consumed.

ImportantThe minimum scheduling cycle is 5 seconds.

Job parallelism

If a large number of partitions and BE nodes are involved, you can configure the following parameters to accelerate the job execution. However, if you increase the degree of parallelism (DOP), more CPU resources may be consumed.

max_routine_load_task_concurrent_num

desired_concurrent_number

A Routine Load job is split into several tasks based on the number of partitions in a Kafka topic and distributed to multiple BE nodes for execution based on the number of BE nodes. The DOP of a job refers to the number of tasks that a Routine Load job is split into.

You can use the following formula to calculate the DOP of a job.

concurrent_num = Min( Min( partition_num, Min( desired_concurrent_num, alive_be_num ) ),Config.max_routine_load_task_concurrent_num )Import batch size

routine_load_task_consume_second: You can accelerate data consumption by increasing the duration of a read operation.

max_routine_load_batch_size: You can accelerate data consumption by increasing the data amount in a read operation.

You can use the following logs to determine whether the max_routine_load_batch_size parameter is set to a lower value. The value of the

left_bytesfield in the logs should be no less than 0, which indicates that the amount of data read at a time does not exceed the value of the max_routine_load_batch_size parameter. Otherwise, the max_routine_load_batch_size parameter is set to a lower value.I0325 20:27:50.410579 15259 data_consumer_group.cpp:131] consumer group done: 41448fb1a0ca59ad-30e34dabfa7e47a0. consume time(ms)=3261, received rows=179190, received bytes=9855450, eos: 1, left_time: -261, left_bytes: 514432550, blocking get time(us): 3065086, blocking put time(us): 24855 1

Parameters of Routine Load jobs

Parameter | Type | Default value | Description |

max_routine_load_job_num | fe.conf | 100 | The maximum number of Routing Load jobs in the NEED_SCHEDULE, RUNNING, or PAUSED state. |

max_routine_load_task_concurrent_num | fe.conf | 5 | The maximum DOP of a Routine Load job. |

max_routine_load_task_num_per_be | fe.conf | 5 | The maximum number of Routine Load jobs that can be allocated to a single BE node. |

max_routine_load_batch_size | fe.conf | 500 MB | The maximum amount of data that can be read from Kafka at a time. |

routine_load_task_consume_second | fe.conf | 3 | The maximum period used to read data from Kafka at a time. |

routine_load_task_timeout_second | fe.conf | 15 | The timeout period for which a Routine Load job can run. |

max_consumer_num_per_group | be.conf | 3 | The maximum number of consumers in a consumer group. |

desired_concurrent_number | properties | 3 | The expected DOP of a Routine Load job. The actual DOP is calculated based on the following formula: |

max_batch_interval | properties | 10s | The scheduling cycle of a Routine Load job. |

max_batch_rows | properties | 200000 | This parameter is used only to specify the range of the error detection window. The range of the window is calculated by multiplying 10 and the value of this parameter. |

max_error_number | properties | 0 | The maximum number of error rows allowed in the sampling window. The value must be greater than or equal to 0. The default value 0 specifies that no error row is allowed. Important Error rows do not contain the rows that are filtered out by a WHERE clause. |

strict_mode | properties | true | Specifies whether to enable the strict mode. By default, the strict mode is enabled. After you enable the strict mode, if a column of the raw data is changed from NOT NULL to NULL, the data is filtered out. |

How do I select a data model?

StarRocks provides the following four data models. You can choose a data model based on your business requirements.

Data model | Scenario |

duplicate key |

|

Aggregate key model |

|

uniq key | Data is updated for real-time analysis. |

primary key | Data is updated for real-time analysis. If some columns are updated, we recommend that the number of updated columns is no more than 200. |

How does the COUNT function work in various StarRocks data models?

StarRocks has four data models, which are duplicate key, unique key, aggregate key, and primary key models. The way in which the COUNT function works varies based on different data models. The following items describe the differences:

Duplicate key model: This data model does not require merge operations, and thus the result of the COUNT function is returned in a quick manner.

Unique key and aggregate key models: The two data models require merge operations on multiple versions of data, and thus the result of the COUNT function is returned later than that of the duplicate key model.

Theoretically, if the key column is of the STRING type, the result of the COUNT function is more slowly returned.

Primary key model: The data model supports the primary key index and the delete operation on vectors when data is read. The model does not require merge operations. As a result, the result of the COUNT function is returned in the model faster than those in the unique key and aggregate key models.

We recommend that you select the primary key model if your data involves the update operations.

How do I reduce the disk usage of the /mnt/disk1/starrocks/storage/trash/ directory?

The deleted data is stored in the /mnt/disk1/starrocks/storage/trash/ directory. If you want to reduce the disk usage of this directory, you can reduce the value of the trash_file_expire_time_sec parameter in the be.conf file to shorten the retention period of the trash directory. The default retention period is 259,200 seconds, which equals 72 hours.

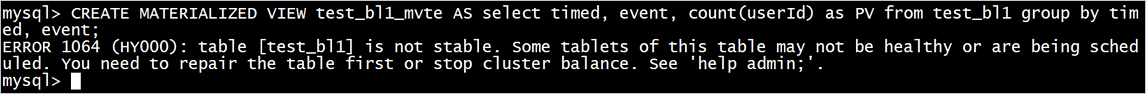

What do I do if an error occurs when I create a materialized view?

Problem description: The following figure shows the error message.

Solutions:

Execute the following statements:

show proc "/cluster_balance";andshow proc "/statistic";.Check whether a tablet is rebalanced.

If yes, wait until the execution is complete.

If not, you can execute the

set disable_balance=truestatement and create a materialized view.

What do I do if a slow data query occurs?

We recommend that you use the following solution:

Enable profile reporting and then view the profile information on StarRocks user interface (UI).

You can execute the

SET is_report_success = true;statement to enable profile reporting.Common troubleshooting methods:

Adjust the parallelism.

Pipeline

SET pipeline_dop = 8; SET enable_pipeline_engine = true;Non-pipeline engine

SET enable_pipeline_engine=false; SET parallel_fragment_exec_instance_num=8;

View the data distribution of tablets.

show data xxx;NoteThe recommended size of a tablet is 1 to 10 GB.

View the created table.

You can determine the I/O wait based on the profile information. If the wait time is high, you can delete unnecessary indexes, such as the frequently created bitmap indexes.

View the table data model and select an appropriate data model. For example, in the unique key model, the pushdown does not work before the compaction phase is incomplete. This causes slow queries.

Why do I fail to access a StarRocks cluster by using port 8030 or port 8040?

For StarRocks clusters of E-MapReduce (EMR) V5.8.0, EMR V3.42.0, or a minor version that is earlier than EMR V5.8.0 or EMR V3.42.0, the port number of load_url is 8030 and the port number of webserver_port is 8040. However, for StarRocks clusters of EMR V5.9.0 or a later minor version, or EMR V3.43.0 or a later minor version, the port number of load_url is 18030 and the port number of webserver_port is 18040. You must select the port that you want to use to access the StarRocks cluster based on your cluster version.

You can run the show frontends command to view the actual port number of the StarRocks cluster.

In which regions is EMR StarRocks available?

StarRocks is available in all regions.

How does StarRocks distribute data to the data disks of BE nodes?

By default, four enhanced SSDs of PL1 are configured for each BE node. StarRocks evenly distributes data to BE nodes based on loads and the bucket mechanism. The data in the same tablet and replica is stored on the same disk.

How do I reset a cluster if a data exception occurs?

After you perform the following operations, cluster data is cleared. Proceed with caution.

Stop the services that are deployed on the FE and BE nodes.

Clear the directory in which the metadata of FE nodes is stored.

View the fe.conf file in the

/opt/apps/STARROCKS/starrocks-current/fe/conf/directory and obtain the configuration directory ofmeta_dir.Delete the bdb folder from the configuration directory.

Clear the image folder in the configuration directory.

Clear BE data and metadata.

View the be.conf file in the

/opt/apps/STARROCKS/starrocks-current/be/conf/directory and obtain the configuration directory ofstorage_root_path.Delete all folders and files except for the data and meta folders from the configuration directory.

Clear the data and meta folders in the configuration directory.

NoteThe configuration directory may exist in multiple paths. You must perform the preceding operations for each path.

Restart the services that are deployed on the FE and BE nodes.

How do I view the logs of the FE and BE nodes?

The logs of the FE and BE nodes are stored in the following directories:

FE nodes

/opt/apps/STARROCKS/starrocks-current/fe/log/

/mnt/disk1/log/starrocks/

BE nodes

/opt/apps/STARROCKS/starrocks-current/be/log/

/mnt/disk1/log/starrocks/

After task nodes are added to a StarRocks cluster, the nodes are not used to run tasks. Why?

Problem description

After three task nodes are added to a StarRocks cluster as compute nodes (CNs), the added nodes are not used to run tasks. The workload on BE nodes is high. However, the newly added task nodes are not fully utilized, and the monitoring data shows that the workload on task nodes is low.

Cause

For StarRocks clusters that adopt the compute-storage integration architecture, CN nodes can be used to query data only from external tables. In scenarios in which you want to query data from internal tables, BE nodes are always used. As a result, the newly added task nodes are not fully utilized.

Solution

Before you run query tasks, execute the following SQL statement to allow the tasks to be preferentially run on CNs:

SET GLOBAL prefer_compute_node=true;How do I view the password of a StarRocks cluster?

The password of a StarRocks cluster refers to the password that you set for the root user when you create the StarRocks cluster. If you forget the password, you can reset the password. For more information, see the How do I reset my password? section of the "Log on to a cluster" topic.