Data Transmission Service (DTS) supports two-way data synchronization between two PostgreSQL databases, such as databases in ApsaraDB RDS for PostgreSQL instances and self-managed PostgreSQL databases. This enables active geo-redundancy (unit-based) and geo-disaster recovery architectures where each instance owns a distinct partition of data and writes are operationally segregated by partition.

Two-way synchronization is not a general multi-master solution. To maintain data consistency, each record must be written on only one instance at a time—records with the same primary key or unique key must never be updated concurrently on both instances. DTS detects and handles conflicts, but cannot guarantee full consistency when the same record is modified simultaneously on both sides.

Two-way synchronization limits

Before you configure a two-way synchronization task, review the following limits that apply specifically to the two-way topology.

| Limit | Detail |

|---|---|

| Number of instances | DTS supports two-way synchronization between exactly two PostgreSQL databases. Synchronization among three or more databases is not supported. |

| Full data synchronization | Only one task in a two-way pair can run full data synchronization plus incremental data synchronization. The other task must run incremental data synchronization only. |

| Reverse task object selection | Do not select the same objects in the reverse task that are already selected in the forward task. |

| Object name mapping | Object name mapping is not supported in the reverse task. Using it may cause data inconsistency. |

| DDL synchronization direction | DDL operations are synchronized only in the forward direction (source to destination). DDL operations in the reverse direction are ignored. |

Prerequisites

Before you begin, make sure that:

-

Both the source and destination ApsaraDB RDS for PostgreSQL instances are created. For more information, see Create an ApsaraDB RDS for PostgreSQL instance.

-

The

wal_levelparameter is set tologicalon both instances. For more information, see Modify the parameters of an ApsaraDB RDS for PostgreSQL instance.

For supported PostgreSQL versions, see Overview of data synchronization scenarios.

Billing

| Synchronization type | Fee |

|---|---|

| Schema synchronization and full data synchronization | Free |

| Incremental data synchronization | Charged. For details, see Billing overview. |

Supported objects

-

SCHEMA and TABLE — including PRIMARY KEY, UNIQUE KEY, FOREIGN KEY, built-in data types, and DEFAULT CONSTRAINT.

-

VIEW, PROCEDURE (PostgreSQL V11 or later), FUNCTION, RULE, SEQUENCE, EXTENSION, TRIGGER, AGGREGATE, INDEX, OPERATOR, and DOMAIN.

SQL operations that can be synchronized

| Operation type | SQL statements |

|---|---|

| DML | INSERT, UPDATE, and DELETE |

| DDL | CREATE TABLE, DROP TABLE, ALTER TABLE (RENAME TABLE, ADD COLUMN, ADD COLUMN DEFAULT, ALTER COLUMN TYPE, DROP COLUMN, ADD CONSTRAINT, ADD CONSTRAINT CHECK, ALTER COLUMN DROP DEFAULT), TRUNCATE TABLE (the version of the source self-managed PostgreSQL database must be 11 or later), CREATE INDEX ON TABLE |

DDL synchronization requirements:

-

DDL operations can be synchronized only by tasks created after October 1, 2020.

-

For tasks created before May 12, 2023, you must create triggers and functions in the source database before configuring the task. For details, see Use triggers and functions to implement incremental DDL migration for PostgreSQL databases.

-

The source database account must be a privileged account, and the ApsaraDB RDS for PostgreSQL instance must be running minor engine version 20210228 or later. For details about updating the minor engine version, see Update the minor engine version.

DDL operations are synchronized only in the forward direction (source to destination). DDL operations in the reverse direction are ignored. Additional DDL restrictions apply:

-

CASCADE and RESTRICT clauses are not synchronized.

-

DDL statements executed via

SET session_replication_role = replicaare not synchronized. -

DDL statements invoked through functions are not synchronized.

-

If a batch commit contains both DML and DDL statements, the DDL statements are not synchronized.

-

DDL statements affecting objects not in the sync scope are not synchronized.

-

To use a data synchronization task created before May 12, 2023 to synchronize DDL operations, you must create triggers and functions in the source database to capture DDL information before you configure the data synchronization task. For more information, see Use triggers and functions to implement incremental DDL migration for PostgreSQL databases.

-

Data of the BIT type cannot be synchronized during incremental data synchronization.

Conflict detection

DTS checks for and handles conflicts to keep two-way synchronization stable. The source data of the current task can be synchronized only to the destination database in the task—synchronized data is not used as the source data of the other task.

DTS detects the following conflict types:

-

INSERT conflicts — If a record with the same primary key is inserted on both instances at nearly the same time, one insert fails because the key already exists on the other side.

-

UPDATE conflicts — If the record to be updated does not exist in the destination instance, DTS converts the UPDATE into an INSERT, which may then trigger an INSERT conflict.

-

DELETE conflicts — If the record to be deleted does not exist in the destination instance, DTS ignores the DELETE regardless of the conflict resolution policy.

DTS cannot guarantee that the conflict detection mechanism prevents all conflicts. System clock differences and synchronization latency between instances mean that concurrent updates to the same record may still result in inconsistency. Design your application so that records with the same primary key are always written on only one of the two instances.

Other limits

Source and destination database limits

-

Tables without primary keys and UNIQUE constraints require the Exactly-Once write feature to be enabled, or the destination database may contain duplicate records. For details, see Synchronize tables without primary keys or UNIQUE constraints.

-

If you select tables as objects and need to rename tables or columns in the destination, a single task can synchronize up to 5,000 tables. Exceeding this limit causes a request error. In that case, configure multiple tasks or synchronize at the database level instead.

-

DTS cannot synchronize temporary tables, internal triggers, or certain internal procedures and functions written in C.

-

DTS can synchronize custom parameters of COMPOSITE, ENUM, and RANGE types. Tables must have PRIMARY KEY, FOREIGN KEY, UNIQUE, or CHECK constraints.

-

DTS cannot synchronize tables that have inheritance relationships across schemas.

-

If a table contains a SERIAL data type, DTS automatically creates a sequence in the source database. When selecting Schema Synchronization under Synchronization Types, also select Sequence or synchronize at the schema level to prevent task failures.

-

If a schema is the sync object and you create a new table or rename a table in that schema, run the following statement before writing data to the table:

ALTER TABLE schema.table REPLICA IDENTITY FULL;Replace

schemaandtablewith the actual names. Run this during off-peak hours and avoid locking the table to prevent deadlocks.

Write-ahead log (WAL) requirements

-

Set

wal_leveltological. -

For incremental data synchronization only: retain WAL logs for more than 24 hours.

-

For full data synchronization plus incremental data synchronization: retain WAL logs for at least seven days. After full data synchronization completes, you can set the retention period to more than 24 hours. If the retention period is too short, DTS may fail to retrieve the WAL logs, causing task failure or data loss.

-

If the source database has long-running transactions, WAL logs generated before those transactions commit may accumulate, consuming disk space.

Operations on the source database

-

Before performing a primary/secondary switchover on the source instance, enable the Logical Replication Slot Failover feature to prevent interruption of logical subscriptions. For details, see Logical Replication Slot Failover.

-

If the size of a single incremental change exceeds 256 MB, the synchronization instance fails and cannot be recovered. You must configure a new task.

-

Do not execute DDL statements during schema synchronization or full data synchronization. Doing so causes the task to fail.

-

A major version upgrade of the source database causes the running synchronization instance to fail without recovery. You must configure a new task.

-

Do not modify the endpoint or zone of the source ApsaraDB RDS for PostgreSQL instance while the task is running.

Other limits

-

A single synchronization task covers one database. To synchronize multiple databases, create a separate task for each.

-

DTS creates the following temporary tables in the source database for DDL capture, schema tracking, and heartbeat. Do not delete them while the task is running. After the DTS instance is released, they are deleted automatically:

public.dts_pg_class,public.dts_pg_attribute,public.dts_pg_type,public.dts_pg_enum,public.dts_postgres_heartbeat,public.dts_ddl_command,public.dts_args_session, andpublic.aliyun_dts_instance. -

DTS adds a heartbeat table named

dts_postgres_heartbeatto the source database to maintain accurate synchronization latency metrics. -

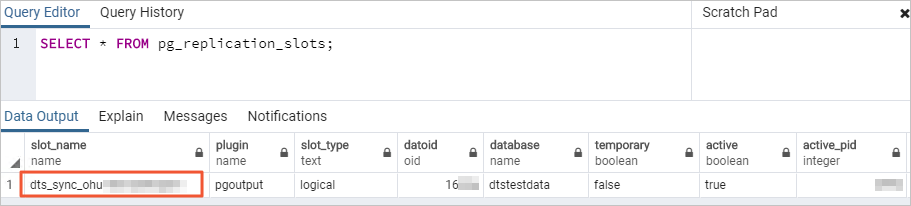

DTS creates a replication slot prefixed with

dts_sync_in the source database. This slot retains the incremental logs from the past 15 minutes.The replication slot is deleted automatically when the DTS instance is released. However, if you change the source database password or remove DTS IP addresses from the whitelist, the slot cannot be deleted automatically—delete it manually to prevent accumulation. If the task is released or fails, DTS automatically deletes the replication slot. After a primary/secondary switchover, log in to the secondary database to delete the slot.

-

DTS does not validate metadata such as sequences. Validate metadata manually.

-

Before switching workloads to the destination database, query the maximum sequence values in the source database, then set those values as the starting values in the destination database:

The output contains all sequences in the source database. Apply the relevant

setvalstatements in the destination database based on your requirements.do language plpgsql $$ declare nsp name; rel name; val int8; begin for nsp,rel in select nspname,relname from pg_class t2 , pg_namespace t3 where t2.relnamespace=t3.oid and t2.relkind='S' loop execute format($_$select last_value from %I.%I$_$, nsp, rel) into val; raise notice '%', format($_$select setval('%I.%I'::regclass, %s);$_$, nsp, rel, val+1); end loop; end; $$; -

Before you synchronize data, evaluate the impact of data synchronization on the performance of the source and destination databases. We recommend that you synchronize data during off-peak hours.

-

Initial full data synchronization runs concurrent INSERT operations, which can cause table fragmentation in the destination database. As a result, the used tablespace in the destination database may be larger than in the source database after full data synchronization completes.

-

If you use only DTS to write data to the destination database, you can use Data Management (DMS) to perform online DDL operations on source tables during synchronization. For details, see Change schemas without locking tables.

-

If other sources also write to the destination database during synchronization, data inconsistency may occur. For example, running DMS online DDL statements while other sources write to the destination can cause data loss.

-

If the destination database account is a privileged account or superuser, and the tables being synchronized contain foreign keys, triggers, or event triggers, DTS temporarily sets

session_replication_roletoreplicaat the session level. If the account does not have the required permissions, set this parameter manually. Ifsession_replication_roleis set toreplicaand cascade updates or deletes are performed on the source database during synchronization, data inconsistency may occur. After the task is released, you can resetsession_replication_roletoorigin. -

If a DTS task fails, DTS technical support attempts to restore it within 8 hours. During restoration, the task may be restarted and task parameters may be modified.

Only task parameters are modified—database parameters are not changed. For a list of parameters that may be modified, see the Modify instance parameters section of the Modify the parameters of a DTS instance topic.

-

For self-managed PostgreSQL databases: the values of

max_wal_sendersandmax_replication_slotsmust exceed the sum of existing replication slots and the number of DTS instances to be created for that database. -

For Google Cloud SQL for PostgreSQL: set the Database Account to an account with the

cloudsqlsuperuserpermission. When selecting objects, only select objects the account is authorized to manage, or grant the OWNER permission on those objects to the account.An account with

cloudsqlsuperusercannot manage data owned by othercloudsqlsuperuseraccounts.

Configure two-way synchronization

A two-way synchronization setup requires two tasks: a forward task and a reverse task. Configure them in order—the reverse task can only be configured after the forward task reaches the Running state.

Step 1: Purchase a two-way synchronization instance

Purchase a DTS instance. On the purchase page, set both Source Instance and Destination Instance to PostgreSQL, and set Synchronization Topology to Two-way Synchronization.

Step 2: Configure the forward synchronization task

The forward task synchronizes data from instance A to instance B, and runs full data synchronization plus incremental data synchronization.

-

Go to the Data Synchronization page of the DTS console.

Alternatively, log in to the Data Management (DMS) console. In the top navigation bar, move your pointer over Data Management (DMS) consoleData + AI and choose DTS (DTS) > Data Synchronization.

-

In the upper-left corner, select the region where the purchased instance resides.

-

Find the synchronization instance and click Configure Task in the Actions column of the first (forward) task.

-

Configure the source and destination databases.

WarningRead the Limits displayed on the page before confirming. Skipping this step may cause task failure or data inconsistency.

Section Parameter Description N/A Task Name A name for the DTS task. DTS generates a default name. Specify a descriptive name for easier identification. The name does not need to be unique. Source Database Database Type Select PostgreSQL. Connection Type Select Alibaba Cloud Instance. Instance Region The source region selected on the purchase page. Read-only. Instance ID The ID of the source ApsaraDB RDS for PostgreSQL instance. Database Name The name of the source database. Database Account A privileged account that is the owner of the source database. For details on creating an account and granting permissions, see Create an account and Create a database. Database Password The password for the database account. Encryption Select Non-encrypted or SSL-encrypted based on your requirements. For SSL encryption, upload the CA Certificate, Client Certificate, and Private Key of Client Certificate as needed, and specify the Private Key Password of Client Certificate. For details on configuring SSL encryption, see SSL encryption. Destination Database Database Type Select PostgreSQL. Connection Type Select Alibaba Cloud Instance. Instance Region The destination region selected on the purchase page. Read-only. Instance ID The ID of the destination ApsaraDB RDS for PostgreSQL instance. Database Name The name of the destination database. Database Account A privileged account that is the owner of the destination database. Database Password The password for the database account. Encryption Same options as the source database. -

Click Test Connectivity and Proceed.

DTS server CIDR blocks must be added to the security settings of both databases. For self-managed databases not using the Alibaba Cloud Instance access method, click Test Connectivity in the CIDR Blocks of DTS Servers dialog box. For details, see Add the CIDR blocks of DTS servers.

-

Configure the objects to synchronize. In the Configure Objects step, set the following parameters:

Renaming an object with object name mapping may break synchronization for other objects that depend on it.

Parameter Description Synchronization Types Select Schema Synchronization, Full Data Synchronization, and Incremental Data Synchronization. After the precheck completes, DTS synchronizes historical data from the source to the destination as the basis for subsequent incremental synchronization. Selecting Schema Synchronization also synchronizes foreign keys. Processing Mode of Conflicting Tables Precheck and Report Errors: fails the precheck if the destination contains tables with the same names as source tables. Use object name mapping to resolve conflicts if destination tables cannot be deleted or renamed. For details, see Map object names. Ignore Errors and Proceed: skips this precheck. If the source and destination tables have the same schema and a conflicting primary or unique key exists, DTS retains the destination record during full data synchronization but overwrites it during incremental data synchronization. If schemas differ, initialization may fail or only partial columns may be synchronized. Synchronization Topology Select Two-way Synchronization. Exclude DDL Operations Yes: excludes DDL operations. No: synchronizes DDL operations (forward direction only). This parameter is displayed only when configuring the forward task. Conflict Resolution Policy Select a policy based on your requirements: TaskFailed — stops the task on conflict; you must resolve conflicts manually. Ignore — ignores the conflicting statement and retains the destination record. Overwrite — overwrites the destination record with the incoming data. Capitalization of Object Names in Destination Instance Controls the capitalization of database names, table names, and column names. Defaults to DTS default policy. For details, see Specify the capitalization of object names in the destination instance. Source Objects Select one or more objects and click the  icon to move them to Selected Objects.

icon to move them to Selected Objects.Selected Objects To rename a single object, right-click it. For bulk renaming, click Batch Edit. For details, see Map object names. To filter data by SQL conditions, right-click a table and specify the conditions. For details, see Use SQL conditions to filter data. -

Click Next: Advanced Settings and configure the following parameters:

Parameter Description Dedicated Cluster for Task Scheduling By default, DTS schedules tasks to the shared cluster. Purchase a dedicated cluster for higher stability. For details, see What is a DTS dedicated cluster. Retry Time for Failed Connections The time range during which DTS retries failed connections after the task starts. Valid values: 10–1440 minutes. Default: 720 minutes. Set this to a value greater than 30 minutes. If the connection is restored within this range, the task resumes. Otherwise, the task fails. If multiple tasks share the same source or destination database, the shortest retry time applies to all. Retry Time for Other Issues The time range during which DTS retries failed DML or DDL operations. Valid values: 1–1440 minutes. Default: 10 minutes. Set this to a value greater than 10 minutes. This value must be less than Retry Time for Failed Connections. Enable Throttling for Full Data Synchronization Throttles full data synchronization by limiting Queries per second (QPS) to the source database, RPS of Full Data Migration, and Data migration speed for full migration (MB/s). Available only when Full Data Synchronization is selected. Enable Throttling for Incremental Data Synchronization Throttles incremental data synchronization by limiting RPS of Incremental Data Synchronization and Data synchronization speed for incremental synchronization (MB/s). Environment Tag An optional tag for identifying the DTS instance. Monitoring and Alerting Configure alerting for task failures or latency threshold breaches. Select Yes to set alert thresholds and notification settings. For details, see Configure monitoring and alerting. -

Click Next Step: Data Verification and configure data verification as needed. For details, see Configure a data verification task.

-

Save the task settings and run the precheck.

-

To preview the API parameters for this configuration, hover over Next: Save Task Settings and Precheck and click Preview OpenAPI parameters.

-

Click Next: Save Task Settings and Precheck to save and start the precheck.

The task cannot start until it passes the precheck. If the precheck fails, click View Details next to each failed item, resolve the issues, and rerun the precheck. For alert items that can be safely ignored, click Confirm Alert Details > Ignore > OK, then click Precheck Again.

-

-

Wait for the success rate to reach 100%, then click Back.

Step 3: Configure the reverse synchronization task

The reverse task synchronizes data from instance B back to instance A. It must run incremental data synchronization only—full data synchronization must not be selected for the reverse task, because only one task in a two-way pair can run full data synchronization.

-

Wait until initial synchronization completes and the forward task enters the Running state.

-

Find the reverse synchronization task and click Configure Task.

-

Repeat steps 4 through 10 from the forward task configuration, with the following differences:

-

Source and destination instances are swapped. The source instance in the reverse task is the destination instance in the forward task, and vice versa. Make sure the database name, account, and password are consistent with the instances, not the roles.

-

The Instance Region parameter cannot be modified.

-

For Synchronization Types, select Incremental Data Synchronization only. Do not select full data synchronization.

-

DTS automatically excludes tables already synchronized to the destination in the forward task from the Processing Mode of Conflicting Tables check.

-

Do not select the same Selected Objects as the forward task.

-

Do not use the object name mapping feature for the reverse task.

-

-

After the reverse task is configured, wait until both tasks enter the Running state. Two-way data synchronization is now active.