DataWorks PAI DLC nodes let you wrap existing Deep Learning Containers (DLC) jobs in a scheduling workflow — adding periodic triggers, upstream/downstream dependencies, and centralized monitoring.

Prerequisites

Before you begin, ensure that you have:

- DataWorks permissions to access Platform for AI (PAI). Go to the authorization page to complete one-click authorization. Only an Alibaba Cloud account or a Resource Access Management (RAM) user with the AliyunDataWorksFullAccess policy can perform this authorization. For details, see AliyunServiceRoleForDataWorksEngine.
- A project folder. See Project Folder.
- A PAI DLC node. See Create a node for a scheduling workflow.

Write task code

On the PAI DLC node editing page, write the task code using one of two methods.

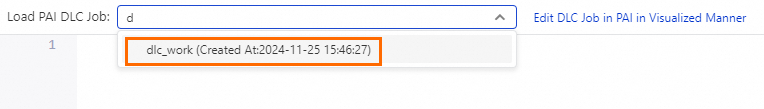

Load an existing DLC job

Search for a DLC job by name in PAI and load it. The node editor generates code from the job's existing configuration, which you can then edit.

If you don't have permission to load or create a job, follow the on-screen instructions to grant access. If no jobs are available, go to the PAI console to create one — see Create a training job, Create a training job: Python SDK, and Create a training job: command line.

Write task code directly

Write task code directly in the PAI DLC node editor. Use the dlc submit pytorchjob command to submit a PyTorchJob.

Define variables in your code using the ${variable_name} format, then assign values in the Scheduling Parameters section under Properties on the right side of the page. This lets you pass parameters dynamically at scheduling time. For more information, see Sources and expressions of scheduling parameters.
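As an illustration, the node code below puts a variable in the job name so that each scheduled run gets a date-stamped name. This is a sketch under assumptions: the variable name bizdate is hypothetical, and the value you assign to it in Scheduling Parameters (for example, a time expression such as bizdate=$[yyyymmdd-1]) should be checked against Sources and expressions of scheduling parameters. DataWorks replaces ${bizdate} with the resolved value before the command runs. Only the --name line differs from the full example that follows.

```shell
# Sketch: ${bizdate} is a DataWorks scheduling parameter (hypothetical name),
# not a shell variable. DataWorks substitutes its value before the node runs.
# Assumed assignment under Properties > Scheduling Parameters: bizdate=$[yyyymmdd-1]
dlc submit pytorchjob \
  --name=test_${bizdate} \
  --command='echo '\''hi'\''' \
  --workspace_id=309801 \
  --priority=1 \
  --workers=1 \
  --worker_image=<image> \
  --image_repo_username=<username> \
  --image_repo_password=<password> \
  --data_source_uris=oss://oss-cn-shenzhen.aliyuncs.com/::/mnt/data/:{mountType:jindo} \
  --worker_spec=ecs.g6.xlarge
```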

The following example submits a PyTorchJob with common configuration options:

```shell
dlc submit pytorchjob \
  --name=test \
  --command='echo '\''hi'\''' \
  --workspace_id=309801 \
  --priority=1 \
  --workers=1 \
  --worker_image=<image> \
  --image_repo_username=<username> \
  --image_repo_password=<password> \
  --data_source_uris=oss://oss-cn-shenzhen.aliyuncs.com/::/mnt/data/:{mountType:jindo} \
  --worker_spec=ecs.g6.xlarge
```

Replace the placeholders before running:

| Placeholder | Description |
|---|---|
| `<image>` | Path of the container image for the worker |
| `<username>` | Username for authenticating against a private image registry |
| `<password>` | Password for authenticating against a private image registry |

Key parameters:

| Parameter | Description |
|---|---|
| `--name` | Name of the DLC job. You can set it to a scheduling parameter variable or to the DataWorks node name. |
| `--command` | Command to run inside the container. |
| `--workspace_id` | ID of the PAI workspace in which the job runs. Replace the example value 309801 with your own workspace ID. |
| `--priority` | Job priority. Valid values: 1 to 9, where 1 is the lowest priority and 9 is the highest. |
| `--workers` | Number of worker nodes. Set a value greater than 1 to run a distributed training job across multiple nodes. |
| `--worker_image` | Container image for each worker node. |
| `--image_repo_username` / `--image_repo_password` | Credentials for a private image registry. Omit them for public images. |
| `--data_source_uris` | Mounts an Object Storage Service (OSS) data source into the container at the specified path. The example uses the jindo mount type. |
| `--worker_spec` | Instance type for each worker node, for example, ecs.g6.xlarge. |
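The --command value in the example relies on a standard POSIX shell idiom: a single-quoted string cannot contain a single quote, so each literal quote is written as '\'' (close the string, add an escaped quote, reopen it). The short script below, which assumes the node code is parsed by a POSIX-compatible shell, prints the value that the example passes to --command and shows an equivalent double-quoted form.

```shell
#!/bin/sh
# Same quoting as --command='echo '\''hi'\''' in the example.
# 'echo '  -> echo (plus trailing space)
# \'       -> a literal single quote
# 'hi'     -> hi
# \'       -> a literal single quote
# ''       -> empty string
quoted='echo '\''hi'\'''

# Equivalent value written with double quotes instead.
double="echo 'hi'"

printf '%s\n' "$quoted"   # prints: echo 'hi'
printf '%s\n' "$double"   # prints: echo 'hi'
```

Both forms yield the same string. The single-quoted form keeps $ and backticks literal, which matters if the container command itself contains shell variables.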

Configure and run the node

- In the Run Configurations section, set Resource Group to a scheduling resource group that can reach your data source. For network connectivity options, see Network connectivity solutions.
- In the toolbar, click Run to run the node task immediately.

Configure scheduling (optional)

To run the node on a recurring schedule, configure its scheduling properties — including recurrence type, time zone, and upstream dependencies. See Configure scheduling properties for a node.

Publish the node

After the node is configured, publish it to the production scheduling environment. See Publish a node or workflow.

Monitor scheduled runs

After the node is published, auto-triggered task instances appear in the Operation Center. For a walkthrough, see Get started with Operation Center.

What's next

- Configure scheduling properties for a node: set up recurrence, time zones, and dependencies
- Publish a node or workflow: move from development to production
- Get started with Operation Center: monitor and manage scheduled runs