The whole database real-time synchronization feature combines a one-time full synchronization with continuous incremental capture to synchronize an entire source database, such as MySQL or Oracle, to a destination system with low latency. A whole database real-time synchronization task first performs a full synchronization of historical data, automatically initializing the schema and data in the destination. It then seamlessly switches to a real-time incremental mode, using technologies like change data capture (CDC) to continuously capture and synchronize subsequent data changes. This capability is ideal for use cases like real-time data warehousing and data lake construction. This topic uses synchronizing a MySQL database to MaxCompute as an example to explain how to configure the task.

Prerequisites

-

Data source preparation

-

You have created the source and destination data sources. For more information, see Data source management.

-

Ensure the data sources support whole database real-time synchronization. For more information, see Supported data sources and synchronization solutions.

-

Some data sources, such as MySQL, Hologres, and Oracle, require you to enable logging. The method for enabling logs varies by data source. For more information, see Data source list.

-

MaxCompute: The Decimal data type is supported only in MaxCompute 2.0. Before starting synchronization, enable MaxCompute 2.0 data types. For more information, see MaxCompute 2.0 data types.

-

-

Resource group: Purchase and configure a serverless resource group.

-

Network connectivity: Configure network connectivity between the resource group and the data sources.

Usage notes

-

DataWorks supports two types of database synchronization: whole database real-time synchronization and whole database full & incremental (near real-time). Both types perform a full synchronization of historical data and then automatically switch to an incremental mode. However, they differ in latency and destination table requirements:

-

Latency: Whole database real-time synchronization achieves second-to-minute latency. Whole database full & incremental (near real-time) provides T+1 latency.

-

Destination table (MaxCompute):

-

PK Delta Table: Supports all features of whole database real-time synchronization.

-

Standard Table and Append Delta Table: Support only the Append mode when you select the incremental-only synchronization mode for a whole database real-time synchronization task.

-

Whole database full & incremental (near real-time): Supports all preceding table types.

-

-

-

You can configure a whole database real-time synchronization task in either DataStudio or Data Integration. The functionality is identical in both modules.

-

Consistent configuration: The configuration interface, parameter settings, and underlying functions are exactly the same, regardless of where you create the task.

-

Auto-sync between modules: Tasks created in the Data Integration module are automatically synchronized to and displayed in the

data_integration_jobsdirectory of the Data Studio module. These tasks are categorized by channel based on thesource type-destination typeformat to facilitate centralized management.

-

Configure the task

Step 1: Create a synchronization task

-

Log on to the DataWorks console. In the top navigation bar, select the desired region. In the left-side navigation pane, choose . On the page that appears, select the desired workspace from the drop-down list and click Go to Data Integration.

-

In the left-side navigation pane, click Synchronization Task. Then, click Create Synchronization Task at the top of the page and configure the following task information:

-

Source Type:

MySQL. -

Destination Type:

MaxCompute. -

Specific Type:

Real-time migration of entire database. -

Synchronization Mode:

-

Schema Migration: Automatically creates database objects such as tables, fields, and data types in the destination that match the source, but without the data.

-

Full Synchronization (optional): Performs a one-time, complete copy of all historical data from specified source objects, such as tables, to the destination. This is typically used for initial data migration or initialization.

-

Incremental Sync (optional): After the full synchronization is complete, this step continuously captures and synchronizes data changes (inserts, updates, and deletes) from the source to the destination.

-

-

Step 2: Configure data sources and resources

-

In the Source Data Source section, select your

MySQLdata source. In the Destination section, select yourMaxComputedata source. -

In the Running Resources section, select the Resource Group for the synchronization task and allocate CU for the task.

NoteWhen the task log shows a message about insufficient resources, such as

Please confirm whether there are enough resources..., it means that the available Computing Units (CUs) in the current resource group are insufficient to start or run the task. You can increase the number of CUs that the task uses in the Configure Resource Group panel to allocate more computing resources.For recommended resource settings, see Recommended CUs for Data Integration. Adjust the settings based on your actual workload.

-

Ensure that both the source and destination data sources pass the Connectivity Check.

Step 3: Configure the synchronization solution

1. Configure the data source

-

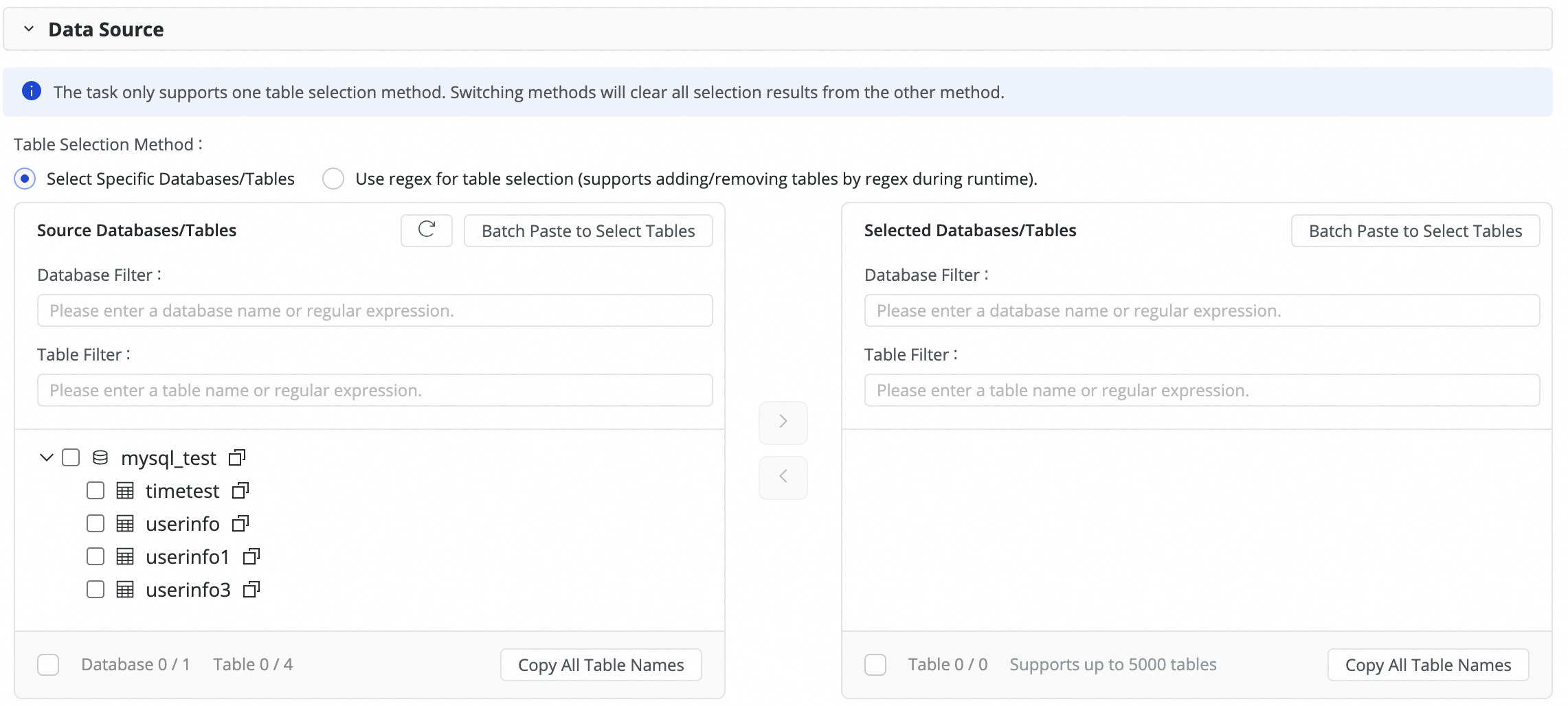

In this step, select the tables to synchronize from the Source Tables list and click the

icon to move them to the Selected Tables list. If you have many tables, you can use Database Filtering or Table filtering to select tables by configuring regular expressions.

icon to move them to the Selected Tables list. If you have many tables, you can use Database Filtering or Table filtering to select tables by configuring regular expressions.

-

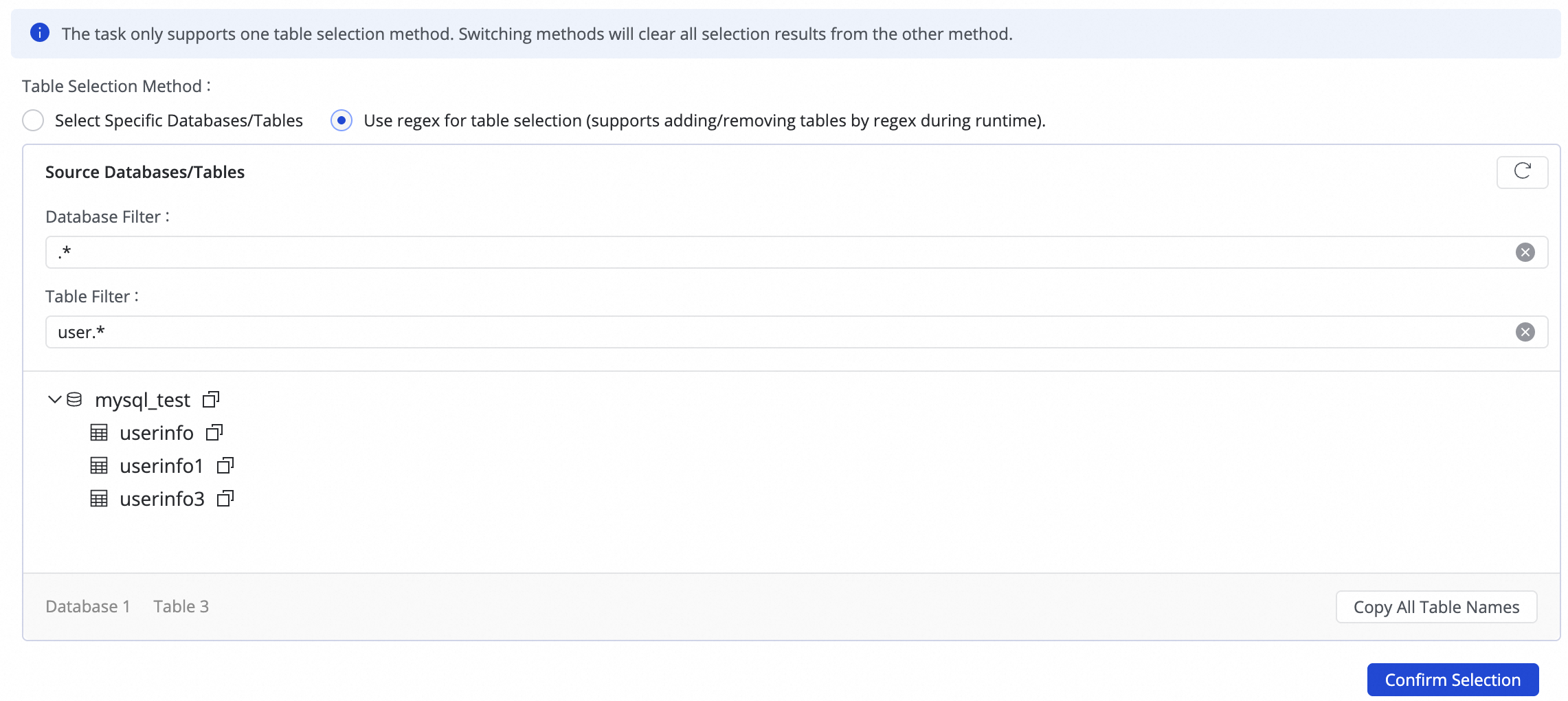

To write data from multiple sharded databases and tables with the same structure into a single destination table, you can use regex for table selection.

Enter a regular expression in the source table configuration. DataWorks automatically identifies all matching source tables and writes their data to the destination table that corresponds to the expression.NoteThis method is suitable for merging and synchronizing sharded tables, which improves configuration efficiency by eliminating the need to add multiple many-to-one synchronization rules.

2. Configure the data destination

If you select only Incremental Sync for the whole database real-time synchronization task, you can configure the incremental synchronization mode for writing to the destination table.

-

Replay: This mode is supported only for PK Delta Tables. Similar to normal synchronization, it only synchronizes data fields.

-

Incremental stream: This mode is supported for Standard Tables and Append Delta Tables. It appends real-time data from the source table, along with metadata such as inserts, updates, and deletes, to the destination table. For the incremental stream format, see Appendix: Incremental stream table format.

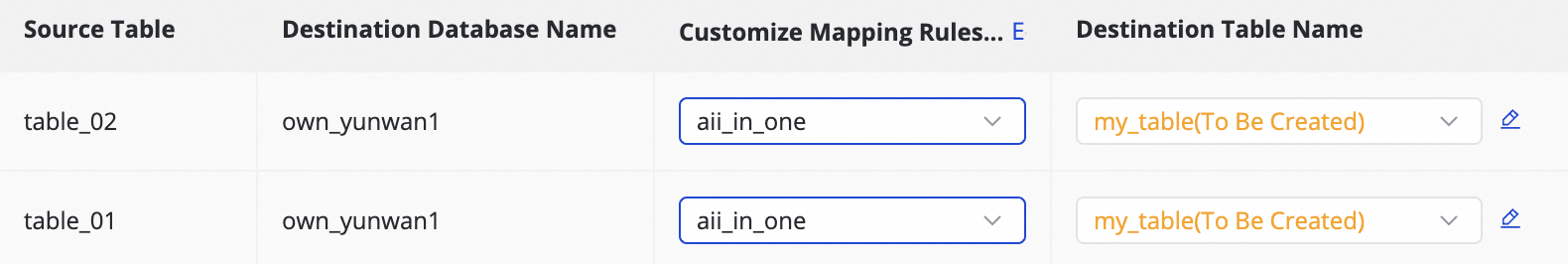

3. Map destination tables

In this step, you need to define mapping rules between source and destination tables and specify rules for primary keys, dynamic partitions, and DDL/DML configurations to determine how data is written.

|

Actions |

Description |

||||||||||||

|

Refresh |

The system automatically lists the source tables you selected, but the specific attributes of the destination tables take effect only after you refresh and confirm the mapping.

|

||||||||||||

|

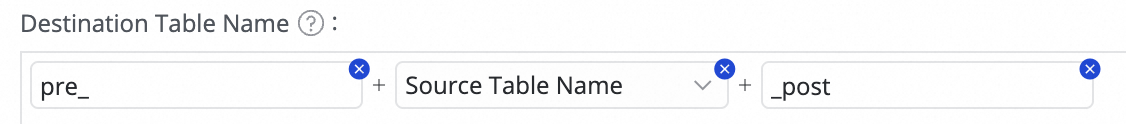

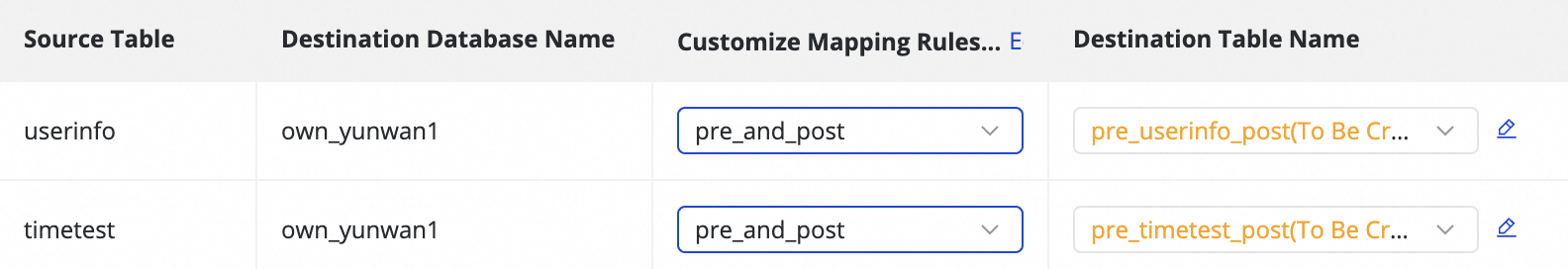

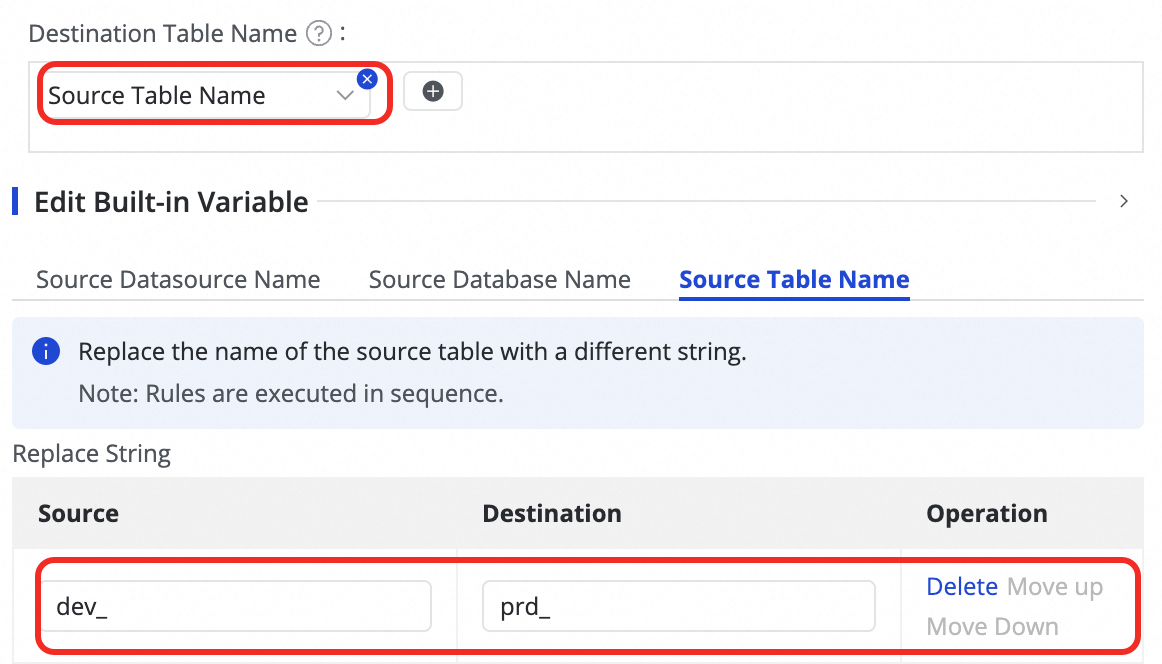

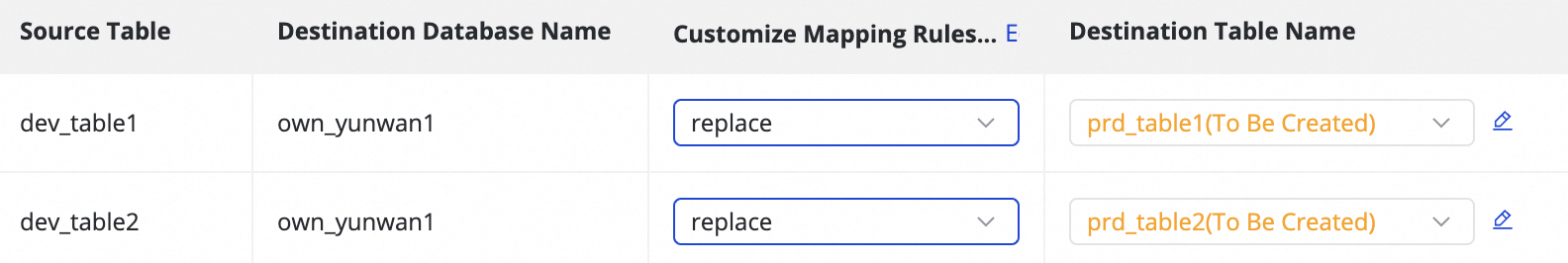

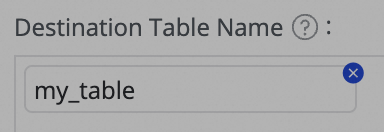

Customize Mapping Rules for Destination Table Names (optional) |

The system has a default table name generation rule:

This feature supports the following scenarios:

|

||||||||||||

|

Edit field type mapping (optional) |

The system provides default mappings between source and destination field types. You can click Edit Mapping of Field Data Types in the upper-right corner of the table to customize the mapping relationship. After configuration, click Apply and Refresh Mapping. When editing the field type mapping, ensure that the type conversion rules are correct. Otherwise, type conversion may fail, leading to dirty data and task interruptions. |

||||||||||||

|

Edit destination table structure (optional) |

Based on the custom table name mapping rules, the system creates new destination tables or reuses existing ones with the same name. DataWorks generates the destination schema based on the source schema. Manual intervention is usually not required. You can also modify the table schema in the following ways:

For existing tables, you can only add fields. For new tables, you can add fields, partition fields, and set table types or properties. For more details, refer to the editable areas in the UI. |

||||||||||||

|

Value assignment |

Native fields are automatically mapped based on fields with the same name in the source and destination tables. You must manually assign values for newly added fields and partition fields from the previous steps. Perform the following operations:

You can assign constants and variables by switching the type in Value Type. The following methods are supported:

Note

Creating an excessive number of partitions can negatively impact synchronization efficiency. If more than 1,000 new partitions are created in a single day, partition creation will fail and the task will be terminated. Therefore, when defining the assignment method for partition fields, you must estimate the potential number of partitions. Use caution when creating partitions at the second or millisecond level. |

||||||||||||

|

Source Split Column |

You can select a field from the source table in the Source Split Column drop-down list, or select Not Split. During execution, the synchronization task is divided into multiple subtasks based on this field to enable concurrent, batched data reading. We recommend using the table's primary key as the source split column. String, float, and date types are not supported. Currently, source split columns are supported only for MySQL sources. |

||||||||||||

|

Skip full synchronization for table |

If you have configured full synchronization in the task steps, you can choose to skip it for a specific table. This is useful when the full data has already been synchronized to the destination through other means. |

||||||||||||

|

Full condition |

This allows you to filter the source data during the full synchronization phase. You only need to write the |

||||||||||||

|

Configure DML Rule |

DML message processing allows you to filter and control the captured data changes ( |

||||||||||||

|

Other |

Table Type: MaxCompute supports Standard Table,

For a detailed introduction to Delta Tables, see Delta Table. |

Step 4: Advanced settings

Advanced parameters

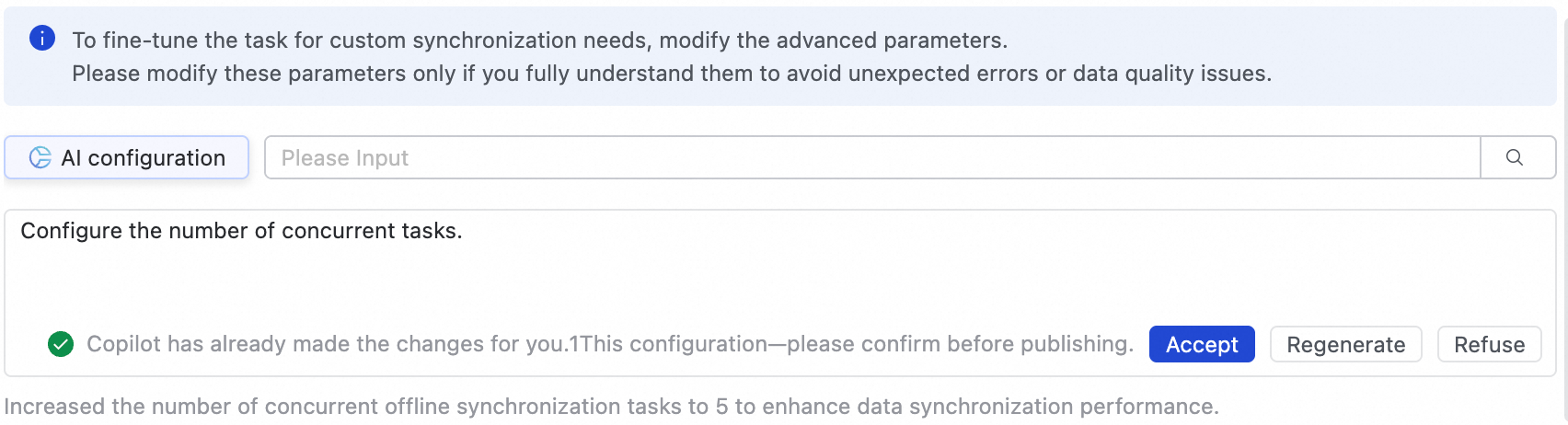

If you need to fine-tune the task configuration for custom synchronization requirements, you can go to the Advanced Parameters tab to modify advanced parameters.

-

Click Advanced Settings in the upper-right corner of the UI to go to the advanced parameter configuration page.

-

Modify the parameter values according to the tooltips. The meaning of each parameter is explained after its name.

-

You can also use AI-powered configuration. Enter modification instructions in natural language, such as adjusting the task's concurrency. The AI model will generate recommended parameter values, which you can choose to accept based on your actual needs.

Modify these parameters only if you fully understand their meaning to avoid unexpected issues such as task delays, excessive resource consumption that blocks other tasks, or data loss.

DDL configuration

Some real-time synchronization tasks can detect metadata changes in the source table structure and notify the destination. The destination can then either synchronize the update or take other actions, such as sending an alert, ignoring the change, or terminating the task.

You can click Configure DDL Capability in the upper-right corner of the UI to set a processing policy for each type of change. The supported policies vary depending on the channel.

-

Normal processing: The destination processes the DDL change from the source.

-

Ignore: The change message is ignored, and no modification is made at the destination.

-

Error: The whole database real-time synchronization task is terminated, and its status is set to Error.

-

Alert: An alert is sent to the user when this type of change occurs at the source. You must configure a DDL notification rule in the Configure Alert Rule settings.

When DDL synchronization adds a new column to the destination, the system does not backfill data for that column in existing rows.

Step 5: Deploy and run the task

-

After configuring the task, click Save.

-

Whole database synchronization tasks cannot be debugged directly. They must be deployed to the Operation Center to run. Therefore, whether you are creating a new task or editing an existing one, you must Deploy the task for the changes to take effect.

-

When you deploy the task, if you select Start immediately after deployment, the task is also started. Otherwise, after the deployment is complete, go to the page and manually start the task in the Actions column of the target task.

-

Click the Name/ID of the corresponding task in the Tasks to view the detailed execution process.

Step 6: Configure alarms

1. Add an alarm rule

In the list, find the corresponding real-time full-database synchronization task, and click in the Actions column to configure an alert policy for the task.

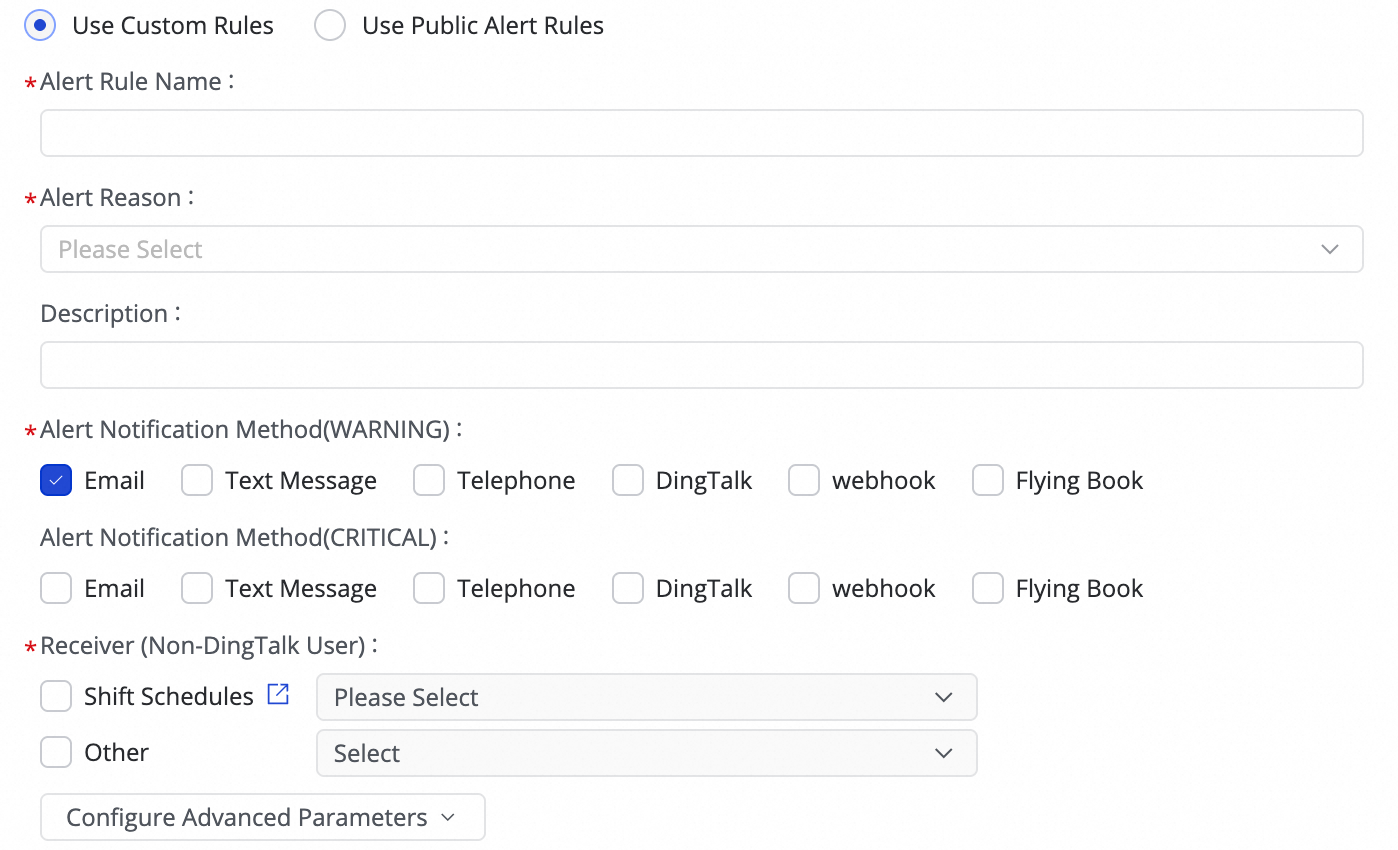

(1) Click Create Rule to configure an alarm rule.

By setting the Alert Reason, you can monitor task metrics such as Business delay, Failover, Task status, DDL Notification, and Task Resource Utilization. You can set CRITICAL or WARNING alarm levels based on specified thresholds.

-

By using Configure Advanced Parameters, you can control the interval for sending alarm messages to prevent alert fatigue and message backlogs.

-

If you select Business delay, Task status, or Task Resource Utilization as the alarm trigger, you can also enable recovery notifications to inform recipients when the task returns to a normal state.

(2) Manage alarm rules.

For created alarm rules, you can use the alarm switch to enable or disable them. You can also send alarms to different personnel based on the alarm level.

2. View alarms

Click for a task to go to the Alarm Events page, where you can view information about triggered alarms.

Manage the task

Edit the task

-

On the page, find the synchronization task that you created, click More in the Operation column, and then click Edit. You can modify the task information. The procedure is the same as that for task configuration.

-

For tasks that are not running, you can directly modify the configuration, save it, and then deploy the task to the Operation Center for the changes to take effect.

-

For tasks that are Running, if you edit and deploy the task without selecting Start immediately after deployment, the original operation button changes to Apply Updates. You must click this button for the changes to take effect in the Operation Center.

-

After you click Apply Updates, the system performs a "Stop, Deploy, Restart" sequence to apply the changes.

-

If the change is to add a new table or switch to an existing table:

You cannot select a checkpoint when applying the updates. After you click confirm, the system performs structural migration and full synchronization for the new table. Once complete, incremental synchronization begins for the new table alongside the original ones.

-

If you modify other information:

You can select a checkpoint when applying the updates. After you click confirm, the task resumes from the specified checkpoint. If you do not specify a checkpoint, it resumes from the point where it last stopped.

Unmodified tables are not affected. After the update and restart, they will resume from their last stopping point.

-

View the task

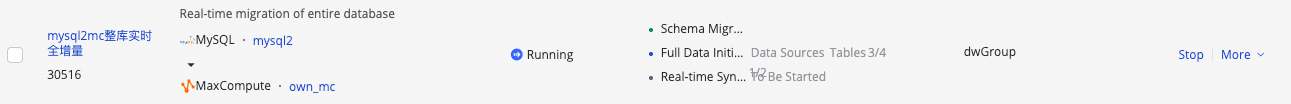

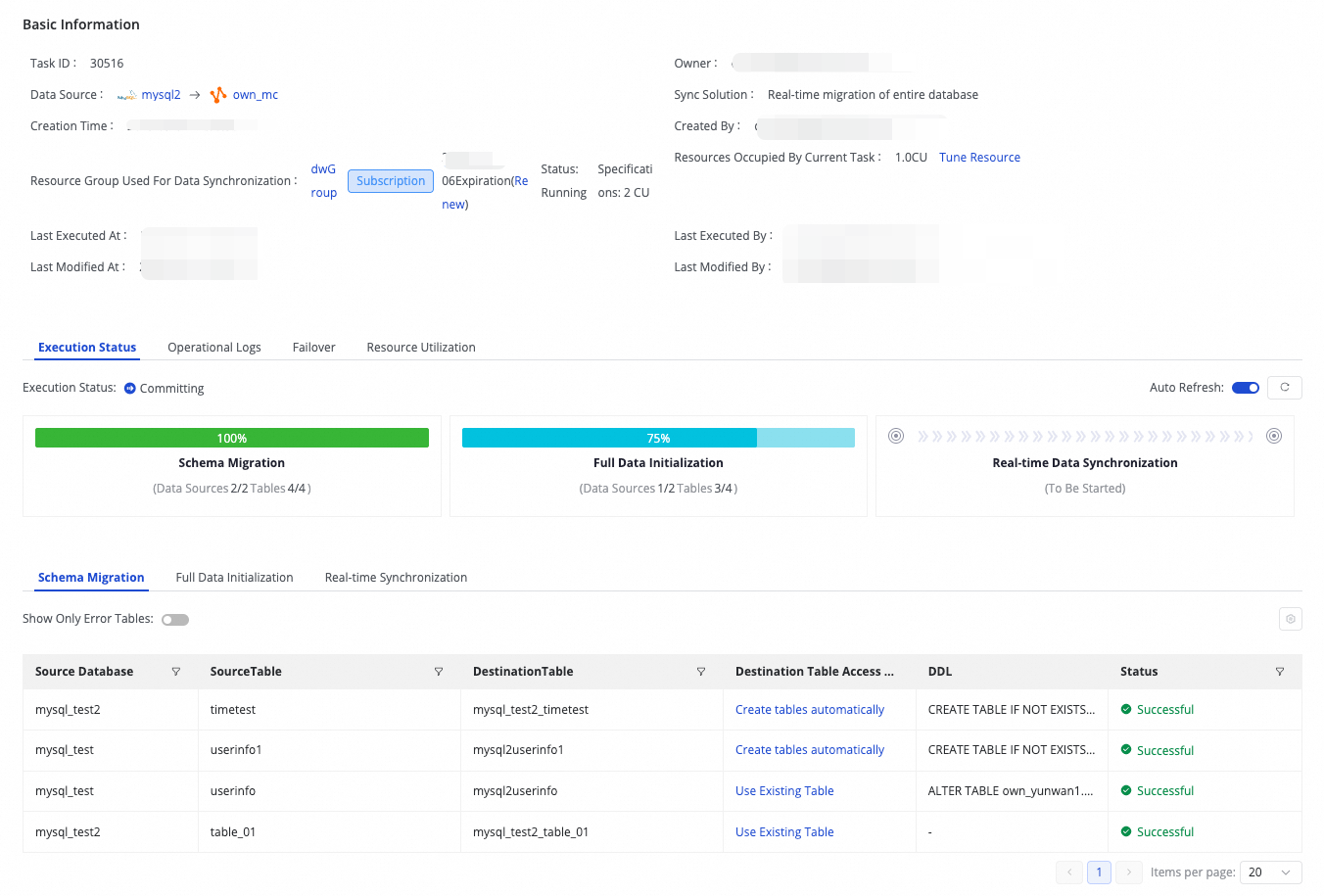

After you create a synchronization task, you can view the list of created tasks and their basic information on the synchronization task page.

-

In the Operation column, you can Start or Stop the synchronization task. In the More menu, you can perform other operations such as Edit and View.

-

For started tasks, you can see the basic running status in the Execution Overview. You can also click the corresponding overview area to view execution details.

Resume from breakpoint

Use cases

Manually resetting the checkpoint is useful in the following scenarios:

-

Task recovery and data continuation: When a task is interrupted, you may need to manually specify an interruption time as the new starting checkpoint to ensure that data recovery is accurate.

-

Data issue troubleshooting and backtracking: If data is lost or abnormal, roll back the checkpoint to a time before the issue occurred to replay and repair the data.

-

Major task configuration changes: After making significant adjustments to the task configuration, such as the destination table structure or field mappings, we recommend resetting the checkpoint to start synchronization from a specific point in time to ensure data accuracy with the new configuration.

Procedure

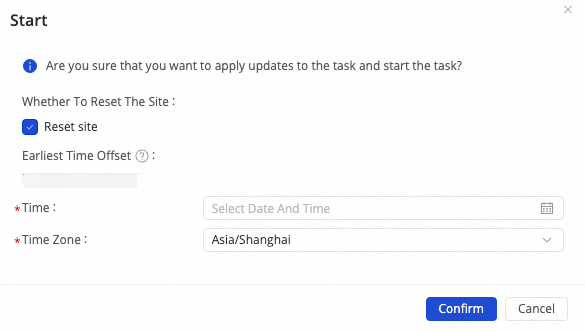

Click Start. In the dialog box that appears, you can choose whether to Whether to reset the site:

-

To resume from the last stop time, leave the reset option unchecked and run the task directly. The task continues from the last recorded checkpoint.

-

To start from a specific time, select the reset option and choose a time. The task starts from the specified time checkpoint. Ensure the selected time is within the source's binlog retention period.

If you encounter a "checkpoint error" or "checkpoint does not exist" message when running the synchronization task, try the following solutions:

-

Reset checkpoint: When starting the real-time synchronization task, reset the checkpoint and select the earliest available checkpoint in the source database.

-

Adjust log retention time: If the database checkpoint has expired, consider increasing the log retention period in the database, for example, to 7 days.

-

Data synchronization: If data has been lost, perform a full synchronization again or create an offline synchronization task to manually sync the missing data.

FAQ

For frequently asked questions about whole database real-time synchronization, see FAQ about real-time synchronization and FAQ about full and incremental synchronization tasks.

Appendix: Incremental stream format

Flattened source table fields

|

Field Name |

Description |

|

sequence_id |

The record ID of the incremental event. The value is unique and incremental. |

|

operation_type |

The operation type (I/D/U). |

|

execute_time |

The timestamp corresponding to the data. |

|

before_image |

Indicates whether it is the image before the change (Y/N). |

|

after_image |

Indicates whether it is the image after the change (Y/N). |

|

src_datasource |

The source data source. |

|

src_database |

The source database. |

|

src_table |

The source table. |

|

Field 1 |

Actual data field 1. |

|

Field 2 |

Actual data field 2. |

|

Field 3 |

Actual data field 3. |

Source fields merged into JSON

|

Field Name |

Description |

|

sequence_id |

The record ID of the incremental event. The value is unique and incremental. |

|

operation_type |

The operation type. |

|

execute_time |

The timestamp corresponding to the data. |

|

before_image |

Indicates whether it is the image before the change (Y/N). |

|

after_image |

Indicates whether it is the image after the change (Y/N). |

|

src_datasource |

The source data source. |

|

src_database |

The source database. |

|

src_table |

The source table. |

|

ddl_sql |

For DDL operations, this field contains the DDL statement. |

|

data_columns |

The actual data fields, merged into a JSON object. |

button in the Target Table column to add a field.

button in the Target Table column to add a field. tooltip in the UI.

tooltip in the UI.