EMR Trino nodes let you run interactive, cross-source SQL queries inside DataWorks Data Studio. Use them to perform large-scale multidimensional data aggregation or report analysis across multiple systems such as Hive and MySQL.

Prerequisites

Before you begin, ensure that you have:

-

An Alibaba Cloud E-MapReduce (EMR) cluster bound to DataWorks. See Data Studio: Associate an EMR computing resource.

-

(Optional, Resource Access Management (RAM) users only) Been added to the workspace with the Developer or Workspace Administrator role. The Workspace Administrator role has extensive permissions — grant it with caution. See Add members to a workspace.

Limitations

| Limitation | Details |

|---|---|

| Serverless resource group required | EMR Trino nodes run only on a serverless resource group. |

| EMR-HOOK required for metadata management | To manage metadata for DataLake or custom clusters, configure EMR-HOOK on the cluster first. Without it, DataWorks cannot display real-time metadata, generate audit logs, show data lineage, or perform EMR-related administration. See Configure EMR-HOOK for Hive. |

| LDAP authentication requires a keystore file | If Lightweight Directory Access Protocol (LDAP) authentication is enabled for Trino, upload the keystore file to DataWorks before running queries (see steps below). |

If LDAP authentication is enabled, upload the keystore file before running any queries:

-

Log on to the EMR Master node and download the keystore file from

/etc/taihao-apps/trino-conf. -

In the DataWorks console, switch to the destination region.

-

Find your workspace and click Management in the Actions column to open Management Center.

-

In the left navigation pane, choose Computing Resources, find your EMR cluster, and click Account Mapping.

-

Click Edit Account Mapping, then click Upload Keystore File.

Create and run an EMR Trino node

The node editing page has three main areas:

-

SQL editing area (center): write your Trino SQL statements here.

-

Schedule Configuration pane (right): configure scheduling parameters and advanced EMR node parameters.

-

Run Configuration pane (right): select the computing resource and resource group before running.

Step 1: Configure connectors

Trino uses connectors to access external data sources. Configure the relevant connector before writing queries:

| Data source | Reference |

|---|---|

| MySQL tables | MySQL connector |

| Hive tables | Hive connector |

| Other data sources | Configure connectors |

Step 2: Write SQL

In the SQL editing area, write your Trino SQL statements.

Cross-source queries require fully qualified table names. Trino's federated query capability lets you query across multiple data sources in a single statement. To do this, specify the full three-part path — <catalog>.<schema>.<table> — in every FROM and JOIN clause. The catalog identifies the data source (connector), the schema identifies the database, and the table identifies the target table. Omitting any part of the path causes the query to fail.

-- Query a Hive table: hive = catalog, default = schema, hive_table = table

SELECT * FROM hive.default.hive_table;

-- Query a MySQL table

SELECT * FROM mysql.rt_data.rt_user;

-- Join a Hive table and a MySQL table across data sources

SELECT DISTINCT a.id, a.name, b.rt_name

FROM hive.default.hive_table a

INNER JOIN mysql.rt_data.rt_user b ON a.id = b.id;

-- Use a scheduling parameter as a table name

SELECT * FROM hive.default.${table_name};Using scheduling parameters: Define variables in the ${variable_name} format. Assign values in the Schedule Parameter section of the Schedule Configuration pane — DataWorks substitutes the values at runtime. See Supported formats for scheduling parameters.

Step 3: Configure advanced parameters (optional)

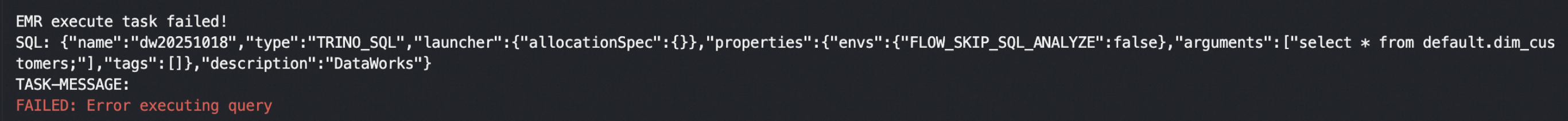

In the Schedule Configuration pane, go to EMR Node Parameters > DataWorks Parameters to configure the following:

| Parameter | Description | Default |

|---|---|---|

| FLOW_SKIP_SQL_ANALYZE | Controls how SQL statements execute. Set to true to execute multiple SQL statements at a time; set to false to execute one SQL statement at a time. |

false |

| DATAWORKS_SESSION_DISABLE | Controls Java Database Connectivity (JDBC) connection reuse when running tests in the development environment. Set to true to create a new JDBC connection for each SQL statement; set to false to reuse the same JDBC connection across statements within the same node. |

false |

For additional open-source Spark properties, go to EMR Node Parameters > Spark Parameters. See Spark configuration.

Step 4: Run the node

-

In the Run Configuration pane, set the Computing Resource and Resource Group parameters.

Note Adjust Schedule CUs based on your task's resource requirements. The default value is0.25. To access data sources over the public internet or through a Virtual Private Cloud (VPC), use a schedule resource group that has passed the connectivity test with that data source. See Network connectivity solutions. -

In the toolbar, select the data source and click Run.

Note Query results are limited to 10,000 rows and 10 MB of total data.

What's next

After validating the node, configure scheduling and publish it to production:

-

Configure scheduling: set the run schedule for the node. See Node scheduling configuration.

-

Publish: publish the node to make it available for scheduled runs. See Publish nodes and workflows.

-

Monitor: track the execution status of scheduled tasks in Operation Center. See Get started with Operation Center.

FAQ

The node run fails with a connection timeout.

The most likely cause is that the network connection between the resource group and the EMR cluster has not been established or needs to be refreshed. Go to the computing resource list page, find the resource, and click Re-initialize. In the dialog box that appears, confirm the action. Verify that the initialization completes successfully before retrying.