Use Data Integration batch synchronization tasks to load data from MySQL and Object Storage Service (OSS) into MaxCompute. This tutorial walks through creating destination tables, registering data sources, configuring two synchronization nodes, and verifying results — covering the data collection phase of a user profile analysis workflow.

What you'll learn

By the end of this tutorial, you'll know how to:

-

Create partitioned MaxCompute tables to receive synchronized data

-

Register an ApsaraDB RDS for MySQL data source and an HttpFile data source in DataWorks

-

Configure a batch synchronization node for structured relational data (MySQL to MaxCompute)

-

Configure a batch synchronization node for raw log files (OSS to MaxCompute)

-

Set up daily scheduling with

$bizdateparameterization -

Verify synchronization results using an ad hoc query

Prerequisites

Before you begin, make sure you have:

-

Completed the experiment introduction and have a general understanding of the tutorial goals

-

Completed environment preparation, including purchasing a serverless resource group and configuring an Internet NAT gateway

The test data and data sources used in this tutorial are pre-provisioned mock data for DataWorks experiments. The data can only be read through Data Integration and is not intended for production use.

Data synchronization overview

This tutorial synchronizes data from two sources into MaxCompute:

| Source type | Data | Source table or object | Destination table |

|---|---|---|---|

| MySQL | Basic user information | ods_user_info_d |

ods_user_info_d_odps |

| HttpFile (OSS) | Website access logs | user_log.txt |

ods_raw_log_d_odps |

Why batch synchronization? Batch synchronization is appropriate here because the source data accumulates throughout the day and is processed as a daily snapshot. If you need to process data within minutes of it arriving, use a real-time synchronization approach instead.

Go to DataStudio

Log on to the DataWorks console. In the top navigation bar, select the desired region. In the left-side navigation pane, choose Data Development and O&M > Data Development. Select your workspace from the drop-down list, then click Go to Data Development.

Step 1: Design the workflow

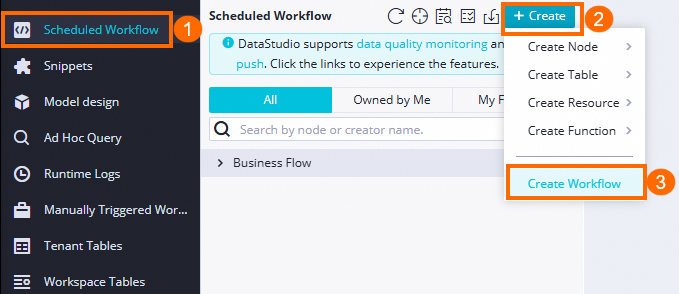

Create a workflow

Development in DataWorks is organized around workflows. Before creating nodes, create a workflow named User profile analysis_MaxCompute. For instructions, see Create a workflow.

Add nodes to the workflow

After creating the workflow, the workflow canvas opens automatically. Click Create Node in the upper part of the canvas, drag nodes onto the canvas, and draw dependency lines between them. For the full workflow design, see the workflow design reference.

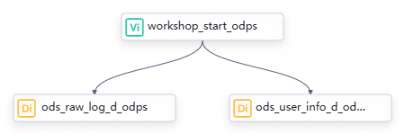

The workflow for this tutorial uses the following three nodes:

| Node classification | Node type | Node name | Purpose |

|---|---|---|---|

| General | Zero load node | workshop_start_odps |

Triggers the workflow at 00:30 daily and manages the overall execution order |

| Data Integration | Batch synchronization | ods_user_info_d_odps |

Syncs basic user information from MySQL to MaxCompute |

| Data Integration | Batch synchronization | ods_raw_log_d_odps |

Syncs website access logs from OSS to MaxCompute |

Name each synchronization node after the destination table it populates.

Because no data lineage exists between the zero load node and the synchronization nodes, configure their dependencies by drawing lines in the workflow canvas. For details, see Scheduling dependency configuration guide.

All nodes in a DataWorks workflow require a parent node. Both synchronization nodes in this tutorial depend on workshop_start_odps, so they start running after that node completes.

Configure scheduling for the zero load node

The workshop_start_odps zero load node triggers the entire workflow. Configure its scheduling properties as follows. Leave the scheduling properties of the two synchronization nodes at their defaults for now — you'll configure them in Step 2.

| Configuration item | Setting | Description |

|---|---|---|

| Scheduling time | 00:30 |

The workflow runs daily at 00:30. |

| Scheduling dependencies | Root node of the workspace | The zero load node has no parent nodes, so it depends on the workspace root node. |

For more information about scheduling options, see Configure scheduling time for nodes in a workflow in different scenarios and the scheduling overview.

Step 2: Configure data synchronization tasks

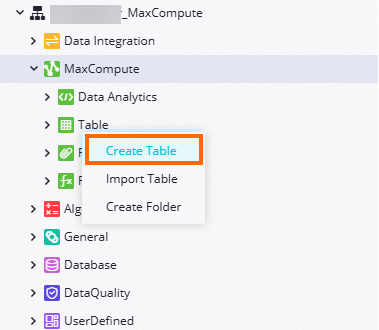

Create the destination MaxCompute tables

Create the MaxCompute tables that will receive the synchronized data before configuring the synchronization tasks.

-

Go to the table creation entry point.

-

Create the

ods_raw_log_d_odpstable. In the Create Table dialog box, enterods_raw_log_d_odpsin the Name field. Click DDL, paste the following statement, and click Generate Table Schema. In the Confirm dialog box, click Confirmation to overwrite the existing configuration.CREATE TABLE IF NOT EXISTS ods_raw_log_d_odps ( col STRING ) PARTITIONED BY ( dt STRING ) LIFECYCLE 7; -

Create the

ods_user_info_d_odpstable. In the Create Table dialog box, enterods_user_info_d_odpsin the Name field. Click DDL, paste the following statement, and click Generate Table Schema. In the Confirm dialog box, click Confirmation to overwrite the existing configuration.CREATE TABLE IF NOT EXISTS ods_user_info_d_odps ( uid STRING COMMENT 'The user ID', gender STRING COMMENT 'The gender', age_range STRING COMMENT 'The age range', zodiac STRING COMMENT 'The zodiac sign' ) PARTITIONED BY ( dt STRING ) LIFECYCLE 7; -

Commit and deploy both tables. For each table, click Commit to Development Environment and then Commit to Production Environment on the configuration tab. This creates the physical tables in the MaxCompute projects associated with your workspace in each environment.

After committing, you can view the tables in the MaxCompute project for the corresponding environment. Tables committed to the development environment appear in the development MaxCompute project; tables committed to the production environment appear in the production MaxCompute project.

Register data sources

Register the data sources before configuring synchronization tasks, so you can select them by name during task configuration.

The test data for both data sources is hosted on the internet. Make sure an Internet NAT gateway is configured for your DataWorks resource group as described in Step 2 of environment preparation. Without this, connectivity tests will fail with timeout errors.

To navigate to the Data Sources page:

-

Log on to the DataWorks console. In the top navigation bar, select the desired region. In the left-side navigation pane, choose More > Management Center. Select your workspace from the drop-down list, then click Go to Management Center.

-

In the left-side navigation pane of the SettingCenter page, click Data Sources.

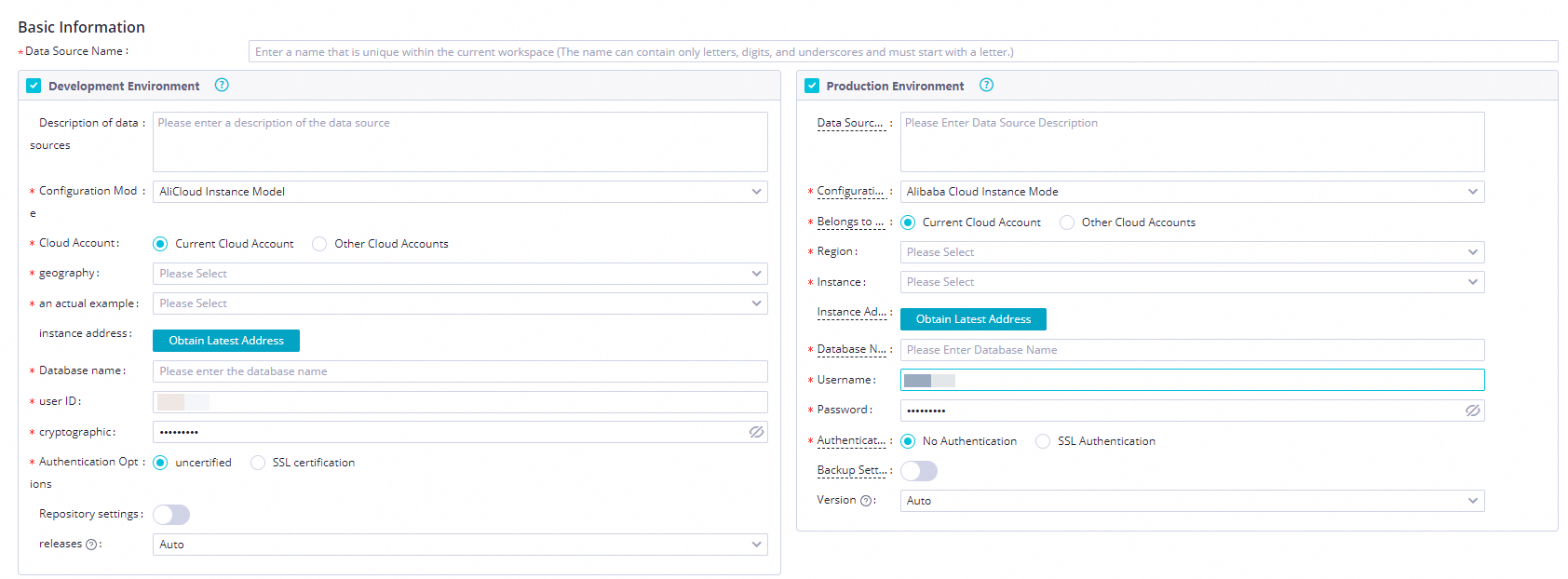

Register the MySQL data source

Add the ApsaraDB RDS for MySQL data source (user_behavior_analysis_mysql) to access the basic user information table.

-

On the Data Sources page, click Add Data Source.

-

In the Add Data Source dialog box, click MySQL.

-

On the Add MySQL Data Source page, configure the following parameters:

Set Environment to Development and Production. A data source must exist in both environments; otherwise, tasks fail when they run against the production environment.

Parameter Value Data Source Name user_behavior_analysis_mysqlConfiguration Mode Connection String Mode Environment Development and Production Host IP Address rm-bp1z69dodhh85z9qa.mysql.rds.aliyuncs.comPort Number 3306Database Name workshopUsername workshopPassword workshop#2017Authentication Method No Authentication

-

In the Connection Configuration section, find your serverless resource group and click Test Network Connectivity in the Connection Status column. Test connectivity for both the development and production environments. When the test succeeds, the status changes to Connected.

Register the HttpFile data source

Add the HttpFile data source (user_behavior_analysis_httpfile) to access the website access log file stored in OSS.

-

On the Data Sources page, click Add Data Source.

-

In the Add Data Source dialog box, click HttpFile.

-

On the Add HttpFile Data Source page, configure the following parameters:

Set Environment to both Development Environment and Production Environment to avoid task failures when running in production.

Parameter Value Data Source Name user_behavior_analysis_httpfileEnvironment Development Environment and Production Environment URL Domain https://dataworks-workshop-2024.oss-cn-shanghai.aliyuncs.com -

In the Connection Configuration section, click Test Network Connectivity for your serverless resource group. Test both environments. When the test succeeds, the status changes to Connected.

Configure the MySQL to MaxCompute synchronization task

This task reads the ods_user_info_d table from MySQL and writes the data to the ods_user_info_d_odps MaxCompute table.

-

Double-click the

ods_user_info_d_odpsbatch synchronization node to open its configuration tab. -

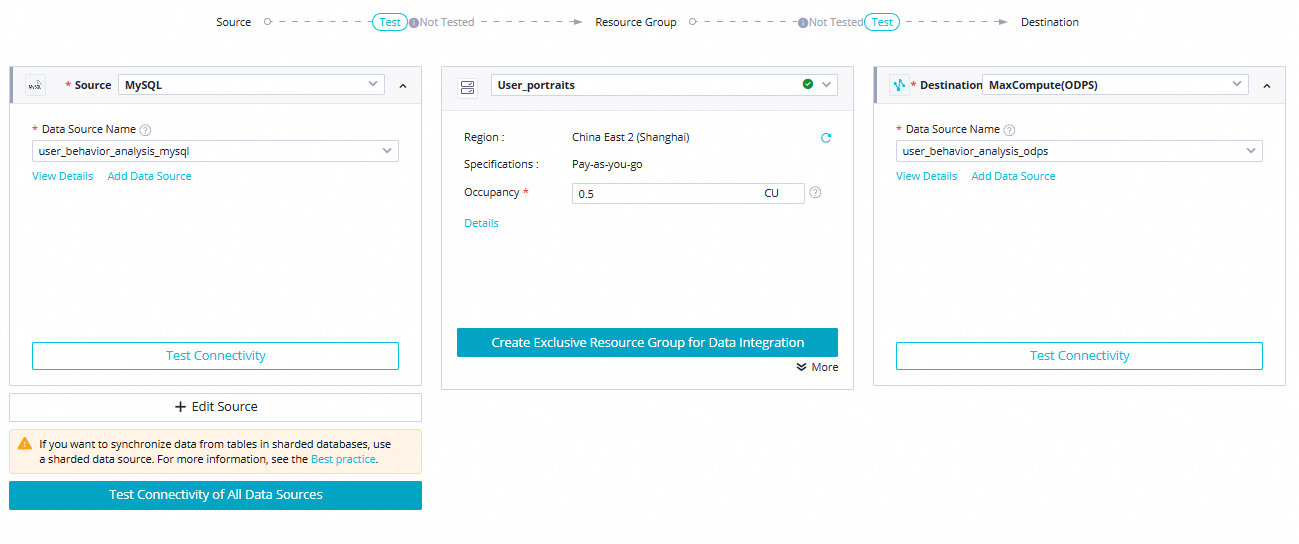

Configure the connection. Set the source, resource group, and destination, then click Next and complete the connectivity test.

Parameter Value Source Type: MySQL; Data Source Name:user_behavior_analysis_mysqlResource Group The serverless resource group you purchased during environment preparation Destination Type: MaxCompute; Data Source Name:user_behavior_analysis_mysql

-

Configure the source and destination settings. Use the following settings. Leave all other parameters at their defaults. For field mapping and Channel Control settings, the defaults work for this tutorial. For other configuration options, see Configure a task in the codeless UI.

Section Parameter Value Source Table ods_user_info_dSource Split key uid(primary key; used to parallelize data reads)Destination Tunnel Resource Group Common transmission resources (default) Destination Schema defaultDestination Table ods_user_info_d_odpsDestination Partition information ${bizdate}Destination Write Mode Clean up existing data before writing (Insert Overwrite) Destination Write by Converting Empty Strings into Null No -

Configure scheduling properties. Click Properties in the right-side navigation pane. Use the following settings and leave all other parameters at their defaults.

The node runs after

workshop_start_odpscompletes each day. DataWorks uses the node output to resolve scheduling dependencies for downstream SQL nodes through automatic data lineage parsing.Section Parameter Value Scheduling Parameter Parameter Name / Value bizdate/$bizdate(date of the previous day inyyyymmddformat)Schedule Scheduling Cycle DaySchedule Scheduled time 00:30Schedule Rerun Allow Regardless of Running Status Resource Group — The serverless resource group from environment preparation Dependencies Parent node workshop_start_odpsDependencies Node output Verify that an output named <MaxCompute project name in the production environment>.ods_user_info_d_odpsexists. Add it manually if it is missing.

Configure the HttpFile to MaxCompute synchronization task

This task reads the user_log.txt log file from OSS via HttpFile and writes the raw log data to the ods_raw_log_d_odps MaxCompute table.

-

Double-click the

ods_raw_log_d_odpsbatch synchronization node to open its configuration tab. -

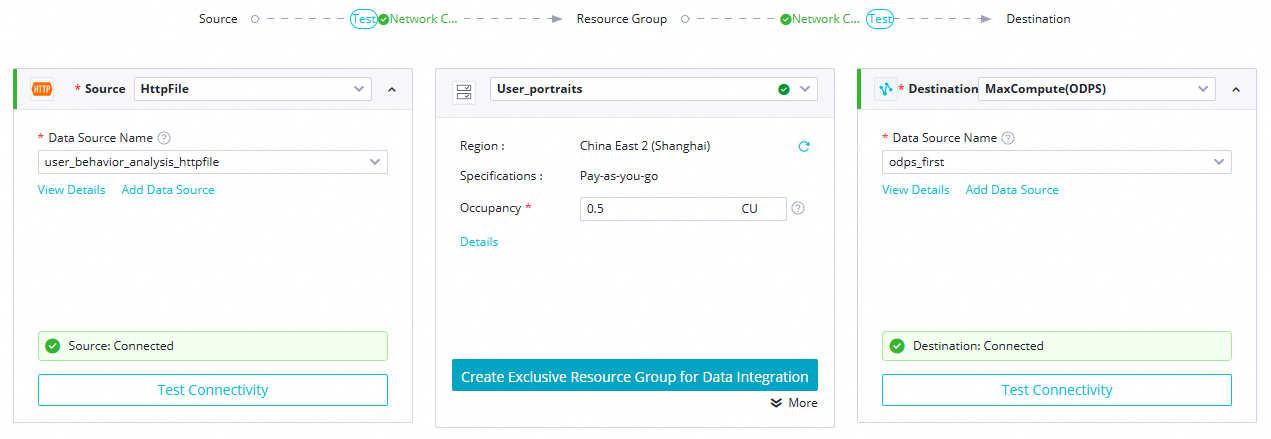

Configure the connection. Set the source, resource group, and destination, then click Next and complete the connectivity test.

Parameter Value Source Type: HttpFile; Data Source Name:user_behavior_analysis_HttpFileResource Group The serverless resource group you purchased during environment preparation Destination Type: MaxCompute; Data Source Name:user_behavior_analysis_mysql

-

Configure the source and destination settings. Use the following settings. Leave all other parameters at their defaults. After setting the source parameters, click Confirm Data Structure to verify that DataWorks can read the log file. For field mapping and Channel Control settings, the defaults work for this tutorial. For other configuration options, see Configure a task in the codeless UI.

Section Parameter Value Source File Path /user_log.txtSource File Type textSource Column Delimiter |Source (Advanced) Coding UTF-8Source (Advanced) Compression format UTF-8Source (Advanced) Skip Header No Destination Tunnel Resource Group Common transmission resources (default) Destination Schema defaultDestination Table ods_raw_log_d_odpsDestination Partition information ${bizdate}Destination Write Mode Clean up existing data before writing (Insert Overwrite) Destination Write by Converting Empty Strings into Null No -

Configure scheduling properties. Click Properties in the right-side navigation pane. Use the following settings and leave all other parameters at their defaults.

Section Parameter Value Scheduling Parameter Parameter Name / Value bizdate/$bizdate(date of the previous day inyyyymmddformat)Schedule Scheduling Cycle DaySchedule Scheduled time 00:30Schedule Rerun Allow Regardless of Running Status Resource Group — The serverless resource group from environment preparation Dependencies Parent node workshop_start_odpsDependencies Node output Verify that an output named <MaxCompute project name in the production environment>.ods_raw_log_d_odpsexists. Add it manually if it is missing.

Step 3: Run the workflow and verify results

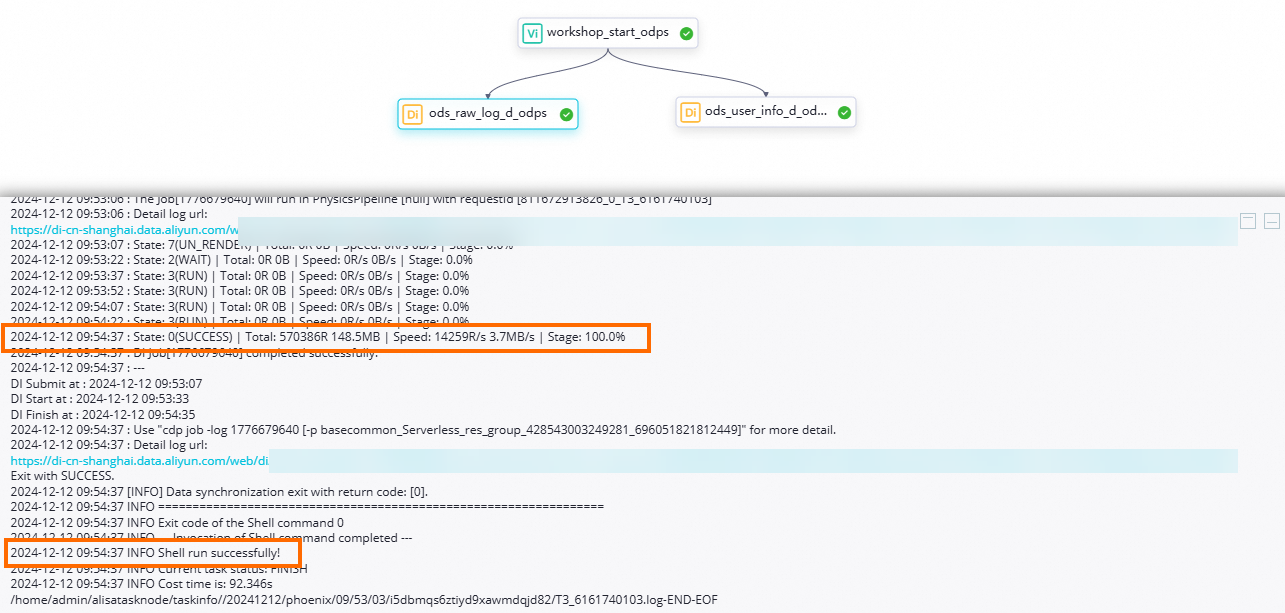

Run the workflow

-

In the DataStudio left-side navigation pane, under Business Flow, double-click the

User profile analysis_MaxComputeworkflow. -

On the workflow canvas, click the

icon in the top toolbar to trigger all nodes based on their scheduling dependencies.

icon in the top toolbar to trigger all nodes based on their scheduling dependencies.

Check node status

-

Node state indicator: A node displaying the

icon is running normally.

icon is running normally. -

Logs: Right-click the

ods_user_info_d_odpsorods_raw_log_d_odpsnode and select View Logs. A successful run shows a completion message in the log output.

Verify the synchronization results

When the workflow completes successfully:

-

All records from

ods_user_info_din ApsaraDB RDS for MySQL are written to the previous day's partition inworkshop2024_01_dev.ods_user_info_d_odps. -

All access log records from

user_log.txtin OSS are written to the previous day's partition inworkshop2024_01_dev.ods_raw_log_d_odps.

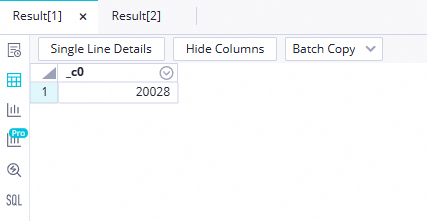

To confirm the row counts, run an ad hoc query:

-

In the DataStudio left-side navigation pane, click the

icon. In the Ad Hoc Query pane, right-click Ad Hoc Query and choose Create Node > ODPS SQL.

icon. In the Ad Hoc Query pane, right-click Ad Hoc Query and choose Create Node > ODPS SQL. -

Run the following queries, replacing

Data timestampwith the$bizdatevalue for your run (the date of the previous day inyyyymmddformat — for example, if the node ran on June 21, 2023, the data timestamp is20230620):select count(*) from ods_user_info_d_odps where dt='Data timestamp'; select count(*) from ods_raw_log_d_odps where dt='Data timestamp';

Nodes run in DataStudio execute in the development environment by default. Query results reflect data in the workshop2024_01_dev MaxCompute project.

What's next

Data collection is complete. The next tutorial covers processing the user information and access logs you just synchronized into MaxCompute. See Process data.