This guide explains how to improve development efficiency with practices like code reuse, dataset mounting, and parameter management. It also covers best practices and debugging techniques for connecting to compute engines, including MaxCompute Spark, EMR Serverless Spark, and AnalyticDB for Spark.

Read Basic Notebook development before proceeding.

Development vs. production environments

DataWorks Notebook is designed as a development and analysis tool that can be scheduled for execution. It operates in two distinct environments:

- Development environment: Code runs in a personal development environment instance, designed for rapidly validating and debugging code.

- Production environment: Triggered by periodic scheduling or data backfills, code runs in an isolated, ephemeral task instance, ensuring stable and reliable execution.

The two environments differ in how key features behave:

| Feature | Development environment | Production environment |
|---|---|---|
| Reference project resources (`.py` files) | Initial reference: Downloaded automatically and takes effect. After an update: Click Restart in the toolbar to reload the updated .py module. Set Dataworks › Notebook › Resource Reference: Download Strategy to autoOverwrite in DataStudio settings. | Takes effect automatically. |
| Read/Write datasets (OSS/NAS) | Mount the dataset in the personal development environment. | Mount the dataset in scheduling configurations. |
| Reference workspace parameters (`${...}`) | Text substitution occurs automatically before code execution. | Text substitution occurs automatically before task execution. |
| Spark session management | Default idle timeout: two hours. The session is automatically released if no new code runs within this period. | A short-lived, task-instance-level session is automatically created and destroyed with the task instance. |

Reuse code and data

Choose a code reuse method

DataWorks Notebook supports several ways to reuse code and share data across tasks. Use the following table to pick the right approach:

| Method | Use when | Notes |
|---|---|---|
| Project resources (`.py` files) | Sharing Python utility functions across Notebook tasks (recommended) | Published to MaxCompute Resource Management; available in both development and production. |
| Datasets (OSS/NAS) | Reading or writing large files during task execution | Mount separately for development and production environments. |
| Workspace parameters | Sharing global configuration values across tasks | Available in DataWorks Professional Edition and higher. Requires creation in Operation Center. |

Reference project resources

Encapsulate common functions or classes into .py files and reference them as MaxCompute resources using ##@resource_reference{"custom_name.py"}. This modularizes code, improves reusability, and simplifies maintenance.

Create and publish a Python resource

- In the left-side navigation pane of DataWorks DataStudio, click the Resource Management icon to go to Resource Management.

- Right-click the target directory or click the + icon in the upper-right corner. Select Create Resource > MaxCompute Python, and name the file my_utils.py.

- In the File Content section, click Edit Online, paste your utility function code, and click Save.

      # my_utils.py
      def greet(name):
          return f"Hello, {name} from resource file!"

- Click Save, then Publish in the toolbar. The resource becomes available to both development and production tasks.

Reference the resource in a Notebook

In the first line of a Python cell, use ##@resource_reference to reference the published resource:

##@resource_reference{"my_utils.py"}

# If the resource is in a subdirectory (e.g., my_folder/my_utils.py),

# reference it without the directory name: ##@resource_reference{"my_utils.py"}

from my_utils import greet

message = greet('DataWorks')

print(message)

Debug in the development environment

Run the Python cell. The output is:

Hello, DataWorks from resource file!

In the development environment, the system detects the ##@resource_reference declaration and automatically downloads the file to workspace/_dataworks/resource_references in your personal directory. If a ModuleNotFoundError occurs, click Restart in the editor toolbar to reload the resource.
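When a ModuleNotFoundError appears, it can help to confirm that the resource file was actually downloaded before restarting. The helper below is a minimal sketch, not part of DataWorks: `import_resource` and `DEFAULT_RESOURCE_DIR` are hypothetical names, and the directory is assumed to be the absolute path of the download folder mentioned above.

```python
import importlib
import sys
from pathlib import Path

# Assumption: absolute path of the folder where referenced resources are
# downloaded. Adjust it if your personal development environment differs.
DEFAULT_RESOURCE_DIR = "/mnt/workspace/_dataworks/resource_references"


def import_resource(module_name, resource_dir=DEFAULT_RESOURCE_DIR):
    """Import a downloaded resource module, with a clearer error if it is missing."""
    module_file = Path(resource_dir) / f"{module_name}.py"
    if not module_file.is_file():
        raise ModuleNotFoundError(
            f"{module_file} not found. Check that the resource is published, "
            "then click Restart in the toolbar to reload it."
        )
    # Make the directory importable if it is not already on sys.path.
    if str(resource_dir) not in sys.path:
        sys.path.insert(0, str(resource_dir))
    # Drop cached directory listings so a freshly downloaded file is visible.
    importlib.invalidate_caches()
    return importlib.import_module(module_name)
```

Calling `import_resource("my_utils").greet("DataWorks")` would then either return the greeting or fail with a message that names the missing file.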

Publish to the production environment

After saving and publishing the Notebook node, go to Operation Center > Recurring Tasks and click Test. After the task succeeds, the output Hello, DataWorks from resource file! appears in the logs.

If a "There is no file with id ..." error occurs, publish the Python resource to the production environment first.

For more information, see MaxCompute resources and functions.

Read and write datasets

Notebook tasks can read from and write to large files stored on OSS or NAS during execution.

Debug in the development environment

- Mount a dataset: On your personal development environment's details page, configure the dataset under Storage Configuration > Dataset.

- Access in code: The dataset is mounted to a path in the personal development environment. Read from or write to this path directly:

      # Assume the dataset is mounted to /mnt/data/dataset.
      import pandas as pd
      file_path = '/mnt/data/dataset/testfile.csv'
      df = pd.read_csv(file_path)
      # Write data to MaxCompute using PyODPS.
      o = %odps
      o.write_table('mc_test_table', df, overwrite=True)
      print(f"Data successfully written to MaxCompute table mc_test_table.")

Deploy to production

- Mount a dataset: On the Notebook node editing page, add the dataset under scheduling configurations > scheduling policy in the right-side navigation pane.

- Access in code: After committing and publishing the node, the dataset is mounted to a path in the production environment. Use the same path in your code:

      # Assume the dataset is mounted to /mnt/data/dataset.
      import pandas as pd
      file_path = '/mnt/data/dataset/testfile.csv'
      df = pd.read_csv(file_path)
      # Write data to MaxCompute using PyODPS.
      o = %odps
      o.write_table('mc_test_table', df, overwrite=True)
      print(f"Data successfully written to MaxCompute table mc_test_table.")

For more information, see Use datasets in a personal development environment.
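Because the dataset is mounted separately in each environment, a task can fail in production simply because the mount was never configured there. The following is a minimal sketch of an early check, using only the standard library; `read_dataset_rows` is a hypothetical helper, and the mount path you pass in is whatever you configured for the dataset.

```python
import csv
from pathlib import Path


def read_dataset_rows(mount_root, file_name):
    """Read a CSV file from a mounted dataset, failing early if the mount is missing."""
    root = Path(mount_root)
    if not root.is_dir():
        raise FileNotFoundError(
            f"Dataset mount path {root} does not exist. "
            "Check the dataset configuration for this environment."
        )
    file_path = root / file_name
    # csv.DictReader yields one dict per data row, keyed by the header line.
    with file_path.open(newline="", encoding="utf-8") as handle:
        return list(csv.DictReader(handle))
```

For example, `read_dataset_rows("/mnt/data/dataset", "testfile.csv")` would return the rows when the mount exists and raise a descriptive error otherwise.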

Reference workspace parameters

This feature is available only in DataWorks Professional Edition and higher.

Workspace parameters let you reuse global configurations and isolate environments across tasks and nodes. Reference a workspace parameter in a SQL or Python cell using the format ${workspace.param}, where param is the name you assigned when creating the parameter.

- Create a workspace parameter: Go to Operation Center > Scheduling Settings > Workspace Parameters and create the parameter.

- Reference the parameter in code:

  In a SQL cell:

      SELECT '${workspace.param}';

  In a Python cell:

      print('${workspace.param}')

  After the cell runs, the resolved value of the workspace parameter is printed.

For more information, see Use workspace parameters.
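Since workspace parameters are resolved by text substitution before the code runs, the placeholder has to sit inside quotes when the value is meant to be a string. The sketch below imitates that substitution locally to show the effect. It is an illustration only, not the DataWorks implementation: `substitute_workspace_params` is a hypothetical function, and the allowed characters in parameter names are an assumption.

```python
import re

# Matches ${workspace.<name>} placeholders (name characters are an assumption).
_PLACEHOLDER = re.compile(r"\$\{workspace\.([A-Za-z_][A-Za-z0-9_]*)\}")


def substitute_workspace_params(cell_source, params):
    """Replace each ${workspace.name} placeholder with its value, as plain text."""
    def _replace(match):
        name = match.group(1)
        if name not in params:
            raise KeyError(f"Workspace parameter not defined: {name}")
        return str(params[name])

    return _PLACEHOLDER.sub(_replace, cell_source)


# The quotes around the placeholder survive, so the result is valid Python.
print(substitute_workspace_params("print('${workspace.param}')", {"param": "prod_db"}))
# prints: print('prod_db')
```

Without the surrounding quotes, the substituted cell would read `print(prod_db)` and fail with a NameError unless a variable of that name exists.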

Interact with compute engines using magic commands

Magic commands are special commands prefixed with % or %% that simplify interactions between a Python cell and various compute resources.

A single Notebook node can connect to only one type of compute resource using a magic command.

Connect to MaxCompute

Bind a MaxCompute compute resource before establishing a connection.

%odps: Get a PyODPS entry object

Returns an authenticated PyODPS object bound to the current MaxCompute project. This avoids hard-coding AccessKeys in your code.

o = %odps

After running the command, a compute resource selector appears in the lower-right corner with a project automatically selected. Click the project name to switch projects.

Use the object to run PyODPS scripts. For example, to list all tables in the current project:

    with o.execute_sql('show tables').open_reader() as reader:
        print(reader.raw)

%maxframe: Establish a MaxFrame connection

Creates a MaxFrame session for distributed, pandas-like data processing on MaxCompute data.

# Connect to MaxCompute using MaxFrame.

mf_session = %maxframe

df = mf_session.read_odps_table('your_mc_table')

print(df.head())

# Destroy the session manually to release resources after debugging.

mf_session.destroy()

Connect to Spark resources

DataWorks Notebook supports connections to multiple Spark engines. These engines differ in connection method, execution context, and resource management.

Engine comparison

| Feature | MaxCompute Spark | EMR Serverless Spark | AnalyticDB for Spark |
|---|---|---|---|
| Connection command | %maxcompute_spark | %emr_serverless_spark | %adb_spark add |
| Prerequisites | Bind a MaxCompute resource | Bind an EMR compute resource and create a Livy Gateway | Bind an ADB Spark compute resource |
| Development environment | Automatically creates or reuses a Livy session | Connects to an existing Livy Gateway to create a session | Automatically creates or reuses a Spark Connect Server |
| Production environment | Livy mode: submits Spark jobs through the Livy service | spark-submit batch processing mode: pure batch, no session state retention | Spark Connect Server mode: interacts through the Spark connection service |
| Resource release in production | Session automatically released after the task instance ends | Resources automatically cleaned up after the task instance ends | Resources automatically released after the task instance ends |
| Use cases | General-purpose batch processing and ETL tasks tightly integrated with the MaxCompute ecosystem | Complex analysis tasks requiring flexible configuration and open-source ecosystem integration (for example, Hudi and Iceberg) | High-performance interactive queries on AnalyticDB for MySQL C-Store tables |

After connecting to a Spark engine, the execution context of the entire Notebook kernel switches to the remote PySpark environment. Write PySpark code directly in subsequent cells.

MaxCompute Spark

Bind a MaxCompute compute resource before establishing a connection.

Connect through Livy to the Spark engine built into your MaxCompute project.

- Establish a connection: Run the following command in a Python cell. The system automatically creates or reuses a Spark session.

      # Create a Spark session and start Livy.
      %maxcompute_spark

- Execute PySpark code: Use the %%spark cell magic in a new Python cell.

      # Python cells using MaxCompute Spark must start with %%spark.
      %%spark
      df = spark.sql("SELECT * FROM your_mc_table LIMIT 10")
      df.show()

- Release the connection: After debugging, stop or delete the session. In a production environment, the system automatically stops and deletes the Livy session when the task instance ends.

      # Stop the Spark session and Livy.
      %maxcompute_spark stop
      # Stop Livy and delete it.
      %maxcompute_spark delete

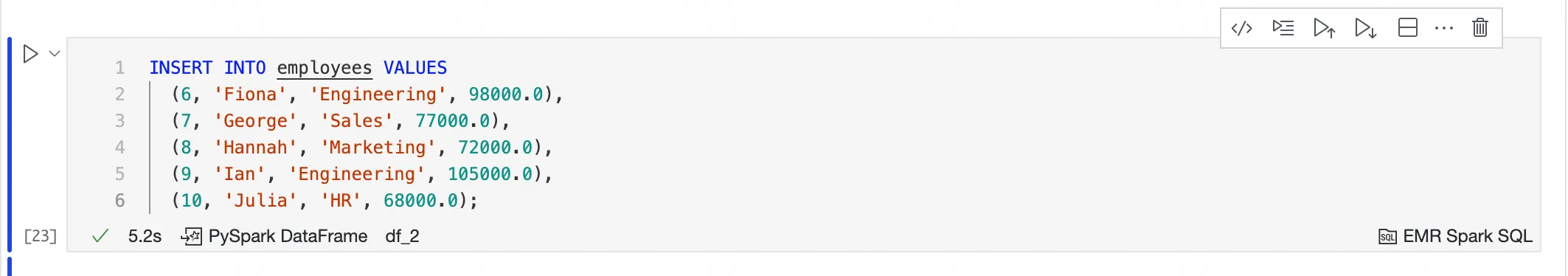

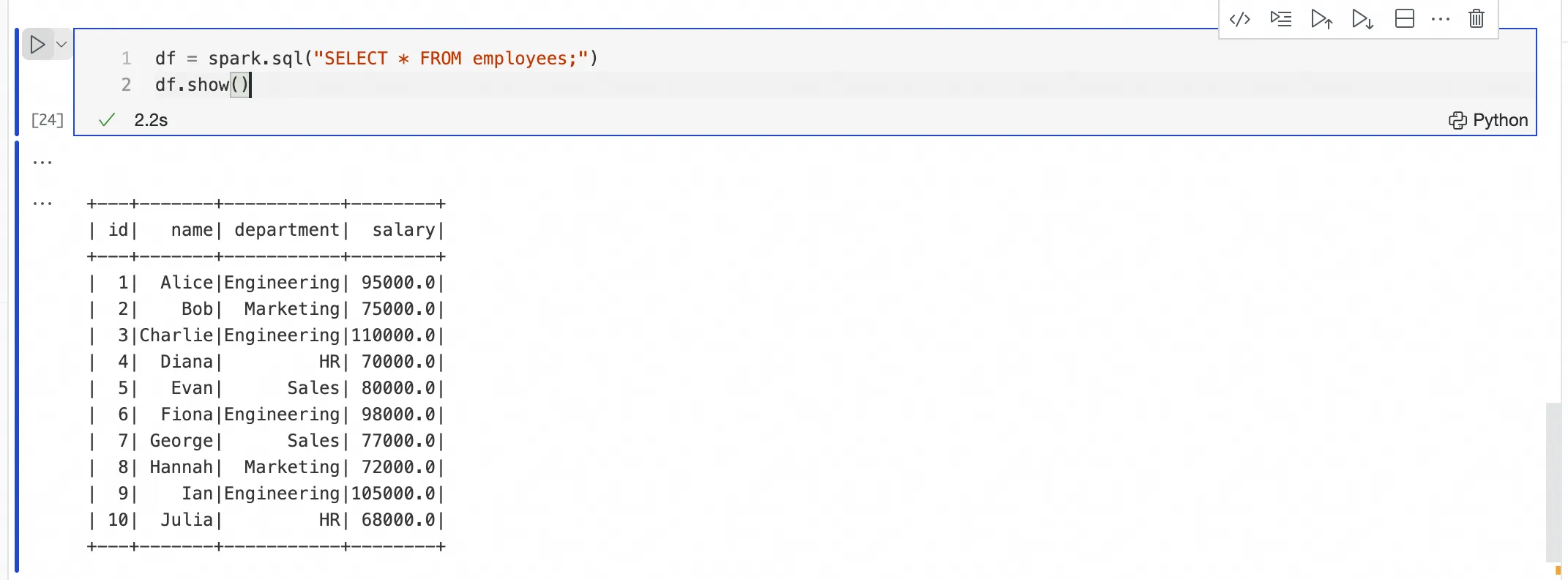

EMR Serverless Spark

Bind an EMR Serverless Spark compute resource to your workspace and create a Livy Gateway before establishing a connection.

Interact with EMR Serverless Spark by connecting to a Livy Gateway created in advance.

- Establish a connection: Select the EMR compute resource and Livy gateway in the lower-right corner of the cell, then run one of the following commands:

      # Basic connection
      %emr_serverless_spark

  To pass custom Spark parameters, use %%emr_serverless_spark (two percent signs):

      %%emr_serverless_spark
      {
        "spark_conf": {
          "spark.emr.serverless.environmentId": "<EMR_Serverless_Spark_Runtime_Environment_ID>",
          "spark.emr.serverless.network.service.name": "<EMR_Serverless_Spark_Network_Connection_ID>",
          "spark.driver.cores": "1",
          "spark.driver.memory": "8g",
          "spark.executor.cores": "1",
          "spark.executor.memory": "2g",
          "spark.driver.maxResultSize": "32g"
        }
      }

  Custom parameters apply only to the current session. If omitted, the system uses the global parameters configured in Admin Center. To share configurations across tasks and users, set them globally in Admin Center > Serverless Spark > SPARK parameters. The Prioritize Global Configurations option in Admin Center controls priority when the same parameter appears in both places:

  - Selected: global configuration overrides the session's custom parameters.

  - Not selected: the session's custom parameters override the global configuration.

- (Optional) Reconnect: If an administrator deletes the token on the Livy gateway page, recreate it with:

      # Reconnect and refresh the Livy token.
      %emr_serverless_spark refresh_token

- Execute PySpark or SQL code: After a successful connection, the kernel switches. Write PySpark code directly in a Python cell, or write SQL in an EMR Spark SQL cell.

  - Submit SQL to EMR Serverless Spark using an EMR Spark SQL cell. The cell reuses the connection from %emr_serverless_spark and submits the job automatically. No compute resource selection is needed in the cell.

  - Submit PySpark code in a Python cell. No %%spark prefix is required.

- Release the connection:

  Important: If multiple users share a Livy Gateway, stop or delete affects all users currently on that gateway. Use these commands with caution.

      # Stop the Spark session and Livy.
      %emr_serverless_spark stop
      # Stop Livy and delete it.
      %emr_serverless_spark delete
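The precedence rule for the Prioritize Global Configurations option, described in the connection step, amounts to choosing which side wins a dictionary merge. The following sketch models that rule locally so the outcome for a conflicting key is easy to predict. `effective_spark_conf` is a hypothetical helper and the parameter values are made up for illustration.

```python
import json


def effective_spark_conf(global_conf, session_conf, prioritize_global):
    """Merge global and session-level Spark parameters.

    When prioritize_global is True, global values win on conflicts;
    otherwise session values win. Keys present on only one side are kept.
    """
    if prioritize_global:
        return {**session_conf, **global_conf}
    return {**global_conf, **session_conf}


# Illustrative values only.
global_conf = {"spark.driver.memory": "4g", "spark.executor.cores": "2"}
session_conf = {"spark.driver.memory": "8g"}

print(json.dumps(effective_spark_conf(global_conf, session_conf, True), sort_keys=True))
# {"spark.driver.memory": "4g", "spark.executor.cores": "2"}
print(json.dumps(effective_spark_conf(global_conf, session_conf, False), sort_keys=True))
# {"spark.driver.memory": "8g", "spark.executor.cores": "2"}
```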

AnalyticDB for Spark

Bind an AnalyticDB for Spark compute resource to your workspace before establishing a connection.

Connect to an AnalyticDB for Spark engine by creating a Spark Connect Server.

- Establish a connection: Select an ADB Spark compute resource in the lower-right corner of the cell. Configure the vSwitch ID and Security Group ID to ensure network connectivity, then run:

      # Configure the vSwitch ID and Security Group ID for network connectivity.
      %adb_spark add --spark-conf spark.adb.version=3.5 --spark-conf spark.adb.eni.enabled=true --spark-conf spark.adb.eni.vswitchId=<vSwitch_ID_of_ADB> --spark-conf spark.adb.eni.securityGroupId=<Security_Group_ID_of_personal_development_environment>

  How to find the vSwitch and Security Group IDs:

  - vSwitch ID (`vswitchId`): In the Alibaba Cloud AnalyticDB MySQL console, view the vSwitch ID in Network Information on the instance details page.

  - Security Group ID (`securityGroupId`): In Network Settings on your personal development environment's details page, find the ID of the selected Security Group. It starts with sg-.

  Important: To ensure network connectivity, select the same Virtual Private Cloud (VPC) and vSwitch as your AnalyticDB for Spark instance when creating the personal development environment.

- Execute PySpark code: After the connection is established, run PySpark in a new Python cell.

  The AnalyticDB for Spark engine can only process C-Store tables that have the 'storagePolicy'='COLD' attribute.

      # AnalyticDB for Spark can only process C-Store tables.
      df = spark.sql("SELECT * FROM my_adb_cstore_table LIMIT 10")
      df.show()

- Release the connection: After debugging, clean up the connection session to save resources. In production, the system cleans up automatically.

      %adb_spark cleanup
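The connection command is long and easy to mistype, so it can be convenient to assemble it from the two IDs and paste the result into a cell. The sketch below only builds the command text shown in the connection step. `build_adb_spark_add` is a hypothetical helper, and the IDs in the usage line are placeholders, not real resources.

```python
def build_adb_spark_add(vswitch_id, security_group_id, adb_version="3.5"):
    """Return the %adb_spark add command line for the given network settings."""
    # The guide notes that security group IDs start with "sg-".
    if not security_group_id.startswith("sg-"):
        raise ValueError("Security group IDs start with 'sg-'.")
    conf = {
        "spark.adb.version": adb_version,
        "spark.adb.eni.enabled": "true",
        "spark.adb.eni.vswitchId": vswitch_id,
        "spark.adb.eni.securityGroupId": security_group_id,
    }
    # Dicts preserve insertion order, so options appear as listed above.
    options = " ".join(f"--spark-conf {key}={value}" for key, value in conf.items())
    return f"%adb_spark add {options}"


# Placeholder IDs for illustration only.
print(build_adb_spark_add("vsw-example", "sg-example"))
```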

Connect to Lindorm Ray

The RAY resource group of the Lindorm compute engine provides distributed computing services that support end-to-end AI workloads. Connect to Lindorm Ray resources in a Notebook for interactive development, then publish the Notebook as a production scheduling task.

Establish a connection

Run %lindorm_ray in a Python cell. A compute resource selector appears in the lower-right corner — select your Lindorm compute resource and the created RAY resource group.

# Connect to the specified Lindorm Ray resource group.

%lindorm_ray

- After connecting to a Lindorm Ray compute resource, SQL cells in the same Notebook can no longer be run. Lindorm Ray focuses on executing Python and Ray code.

- Running the same code cell multiple times automatically terminates the previous Ray job and starts a new one, preventing resource waste and task conflicts.

Execute Ray code

After a successful connection, write and execute Ray code in a new Python cell. Logs stream back to the cell's output area in real time.

The following example defines a remote task using the @ray.remote decorator. The task runs on the Ray cluster, and its logs and result are returned to the output area:

    import ray
    import time

    @ray.remote
    def hello_world():
        print("Hello from Lindorm Ray!")
        time.sleep(5)
        return "Task finished."

    # Submit the remote task.
    result_ref = hello_world.remote()
    print(ray.get(result_ref))

Specify custom startup parameters (optional)

To install third-party Python packages or upload local code files, use %%lindorm_ray to establish the connection with a custom runtime environment configuration.

Example 1: Install dependencies

Install the jieba package in the Ray environment using the pip parameter:

    %%lindorm_ray
    {
      "runtime_env": {
        "pip": ["jieba"]
      }
    }

After the environment is ready, import and use the package in subsequent Ray tasks:

    import ray

    @ray.remote
    def do_work(x):
        import jieba
        return "/".join(jieba.cut(x))

    print(ray.get(do_work.remote("Welcome to the DataWorks+LindormRay solution")))

Example 2: Upload and use a DataWorks resource

Use the working_dir parameter to upload a resource from DataWorks Resource Management to the Ray cluster:

- Files uploaded via working_dir come directly from your development environment. A 100 MB size limit applies. For larger files (over 100 MB), upload to OSS and read from OSS in your code, or package them into a custom image.

- In the development environment, after running the cell that contains ##@resource_reference, rerun the %%lindorm_ray cell to include the downloaded resource in working_dir. This step is not required in production.
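The 100 MB limit noted above can be checked locally before connecting, which avoids discovering the problem only when the upload fails. The sketch below sums file sizes under a directory. The function names are hypothetical, and treating 100 MB as 100 × 1024 × 1024 bytes is an assumption about how the limit is measured.

```python
import os

# Assumption: "100 MB" is interpreted as 100 * 1024 * 1024 bytes.
WORKING_DIR_LIMIT_BYTES = 100 * 1024 * 1024


def directory_size_bytes(path):
    """Sum the sizes of all regular files under path, including subdirectories."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            file_path = os.path.join(root, name)
            if os.path.isfile(file_path):
                total += os.path.getsize(file_path)
    return total


def fits_working_dir_limit(path, limit=WORKING_DIR_LIMIT_BYTES):
    """Return True if the directory contents are within the upload size limit."""
    return directory_size_bytes(path) <= limit
```

Running `fits_working_dir_limit("/mnt/workspace/_dataworks/resource_references")` before the connection cell gives an early signal that larger files should go to OSS or a custom image instead.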

    # Reference a resource from DataStudio Resource Management and declare its path.
    %%lindorm_ray
    {
      "runtime_env": {
        "working_dir": "/mnt/workspace/_dataworks/resource_references"
      }
    }

Assume ray_resource.py has been uploaded to DataStudio Resource Management:

ray_resource.py:

    def fun():
        print("This is a test function in ray_resource.py")

Reference and use it in a Ray task:

    import ray
    ##@resource_reference{"ray_resource.py"}

    @ray.remote
    def do_work(x):
        print('Ray says:', x)
        from ray_resource import fun
        fun()
        return x

    worker = do_work.remote("Welcome to the DataWorks+LindormRay solution")
    print(ray.get(worker))

Production scheduling and O&M

After development and debugging, commit and publish the Notebook node. It runs as a Lindorm Ray node in a DAG on a periodic schedule.

- Parameterization: Use standard DataWorks scheduling parameters such as ${bizdate}.

- Log viewing: To prevent excessive logs from affecting performance, the system loads only the first 1 MB of logs by default. If logs are truncated, the output includes a link to the Lindorm console for the complete task logs.

- Resource release: After a scheduled production task ends, the Lindorm Ray task enters its desired state and releases resources. During interactive development, restart the kernel or close the Notebook to release resources.
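A scheduling parameter such as ${bizdate} arrives in the code as plain text, so date arithmetic requires parsing it first. The following is a minimal sketch that assumes the substituted value is a yyyymmdd string. `parse_bizdate` is a hypothetical helper, and the literal date stands in for the value the scheduler would supply.

```python
from datetime import datetime, timedelta


def parse_bizdate(text):
    """Parse a yyyymmdd business date string into a date object."""
    return datetime.strptime(text, "%Y%m%d").date()


# Assumption: at run time the scheduler has already replaced ${bizdate}
# with a yyyymmdd string; this literal stands in for the substituted value.
bizdate = parse_bizdate("20240115")
print(bizdate.isoformat())                        # 2024-01-15
print((bizdate - timedelta(days=1)).isoformat())  # 2024-01-14
```

Parsing with a strict format also means a placeholder that was never substituted fails immediately with a ValueError instead of flowing into later steps.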

Magic command quick reference

| Magic command | Example | Description | Engine |
|---|---|---|---|
| %odps | o = %odps | Gets a PyODPS entry object. | MaxCompute |
| %maxframe | mf_session = %maxframe | Establishes a MaxFrame connection. | MaxCompute |
| %maxcompute_spark | %maxcompute_spark | Creates a Spark session. | MaxCompute Spark |
| %maxcompute_spark stop | %maxcompute_spark stop | Cleans up the Spark session and stops Livy. | MaxCompute Spark |
| %maxcompute_spark delete | %maxcompute_spark delete | Cleans up the Spark session, then stops and deletes Livy. | MaxCompute Spark |
| %%spark | %%spark | In a Python cell, connects to an already created Spark compute resource. | MaxCompute Spark |
| %emr_serverless_spark | %emr_serverless_spark | Creates a Spark session. | EMR Serverless Spark |
| %emr_serverless_spark info | %emr_serverless_spark info | Views detailed information about the Livy Gateway. | EMR Serverless Spark |
| %emr_serverless_spark stop | %emr_serverless_spark stop | Cleans up the Spark session and stops Livy. | EMR Serverless Spark |
| %emr_serverless_spark delete | %emr_serverless_spark delete | Cleans up the Spark session, then stops and deletes Livy. | EMR Serverless Spark |
| %emr_serverless_spark refresh_token | %emr_serverless_spark refresh_token | Refreshes the Livy token for the personal development environment. | EMR Serverless Spark |
| %adb_spark add | %adb_spark add --spark-conf ... | Creates and connects to a reusable ADB Spark session. | AnalyticDB for Spark |
| %adb_spark info | %adb_spark info | Views Spark session information. | AnalyticDB for Spark |
| %adb_spark cleanup | %adb_spark cleanup | Stops and cleans up the current Spark connection session. | AnalyticDB for Spark |
| %lindorm_ray | %lindorm_ray | Establishes a Lindorm Ray connection. | Lindorm Ray |
| %%lindorm_ray | %%lindorm_ray with JSON config | Establishes a Lindorm Ray connection and configures a custom runtime environment. | Lindorm Ray |
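Because a single Notebook node can connect to only one type of compute resource, it may be worth scanning the cells for connection magics before publishing. The sketch below is a rough local check, not a DataWorks feature. `engines_used` is a hypothetical helper, and treating each value in the Engine column as one type is an assumption about how the restriction is applied.

```python
import re

# Connection magics grouped by the Engine column of the quick reference.
# Assumption: each distinct Engine value counts as one resource type.
ENGINE_BY_MAGIC = {
    "odps": "MaxCompute",
    "maxframe": "MaxCompute",
    "maxcompute_spark": "MaxCompute Spark",
    "emr_serverless_spark": "EMR Serverless Spark",
    "adb_spark": "AnalyticDB for Spark",
    "lindorm_ray": "Lindorm Ray",
}

# One or two percent signs followed by the magic name.
_MAGIC = re.compile(r"%{1,2}([A-Za-z_]+)")


def engines_used(cell_sources):
    """Return the set of engines referenced by connection magics in the cells."""
    engines = set()
    for source in cell_sources:
        for name in _MAGIC.findall(source):
            if name in ENGINE_BY_MAGIC:
                engines.add(ENGINE_BY_MAGIC[name])
    return engines


cells = ["o = %odps", "mf_session = %maxframe"]
print(engines_used(cells))  # {'MaxCompute'}
```

A result with more than one engine suggests splitting the work across separate Notebook nodes.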

FAQ

Why do I get a `ModuleNotFoundError` or "There is no file with id ..." error when referencing a workspace resource?

- Go to Data Development > Resource Management and confirm the MaxCompute Python resource has been saved.

- In production, confirm the resource has been published to the production environment.

- Click Restart in the Notebook editor toolbar to reload the resource.

After updating a workspace resource, why is the old version still being used?

Set the resource conflict handling policy Dataworks › Notebook › Resource Reference: Download Strategy to autoOverwrite in DataStudio settings, then click Restart Kernel in the Notebook toolbar.