Concepts

What are scheduling dependencies?

Scheduling dependencies define the execution order between nodes. When a dependency is configured, a node starts only after its ancestor node completes successfully — so the downstream node reads the latest data rather than stale or missing results.

Why configure scheduling dependencies?

Without a dependency, DataWorks cannot guarantee that upstream data is ready when a downstream node runs. Configuring a dependency lets DataWorks track the ancestor node's completion status and block the descendant node until fresh data is available.

How do scheduling dependencies work?

A dependency is established by wiring the output name of one node to the input name of another:

- SQL nodes: DataWorks automatically parses SELECT, INSERT, and CREATE statements and sets the node's inputs and outputs accordingly.
- Data Integration synchronization nodes: Manually add the output table in Project name.Table name format so that descendant nodes can be matched to this node when their code is automatically parsed.

The output name must be unique across the workspace and follow the Project name.Table name format.
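The SQL-node rule can be illustrated with a toy Python sketch that pulls table names out of SQL text and qualifies them with the node's project. This is not DataWorks' parser: it assumes simple statements and uses regular expressions instead of a SQL grammar, and the names parse_io and qualify are hypothetical.

```python
import re

# Toy patterns only (hypothetical). Real SQL with comments, CTEs, quoted
# identifiers, or string literals needs a proper parser.
OUTPUT_RE = re.compile(
    r"\b(?:INSERT\s+(?:INTO|OVERWRITE)\s+(?:TABLE\s+)?"
    r"|CREATE\s+TABLE\s+(?:IF\s+NOT\s+EXISTS\s+)?)([\w.]+)",
    re.IGNORECASE,
)
INPUT_RE = re.compile(r"\b(?:FROM|JOIN)\s+([\w.]+)", re.IGNORECASE)


def qualify(name: str, project: str) -> str:
    """Return the table name in Project name.Table name form."""
    return name if "." in name else f"{project}.{name}"


def parse_io(sql: str, project: str) -> tuple[set[str], set[str]]:
    """Return (inputs, outputs) found in the SQL text."""
    outputs = {qualify(t, project) for t in OUTPUT_RE.findall(sql)}
    # In this sketch, a table the node both reads and writes counts only as an output.
    inputs = {qualify(t, project) for t in INPUT_RE.findall(sql)} - outputs
    return inputs, outputs
```

For example, parse_io("INSERT OVERWRITE TABLE dws_sales SELECT * FROM ods_orders", "my_proj") yields the input my_proj.ods_orders and the output my_proj.dws_sales.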

Which tables cannot be tracked by scheduling dependencies?

DataWorks can only monitor data produced by auto triggered nodes. If your SQL node reads from any of the following table types, delete the auto-parsed scheduling dependency for that table:

- Tables uploaded from on-premises machines
- Dimension tables
- Tables not generated by DataWorks-scheduled nodes
- Tables generated by manually triggered nodes

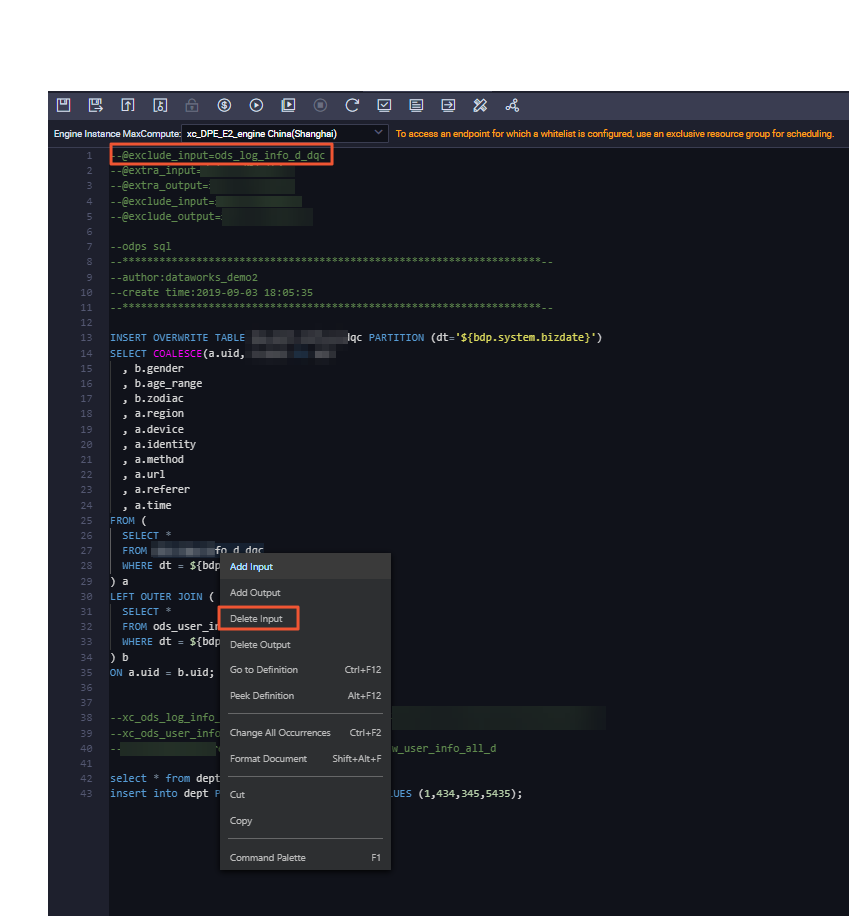

To delete an unwanted dependency: open the node's configuration tab in the Scheduled Workflow pane of DataStudio, right-click the table name in the code, and select Delete Input. Then click Parse Input and Output from Code in the Dependencies section of the Properties tab to re-parse valid dependencies.

Output names

What is an output name used for?

An output name is the identifier other nodes use to declare a dependency on this node. If node A has output name proj.table_a, any node that lists proj.table_a as a parent input depends on node A.

Can a node have multiple output names?

Yes. A node can expose multiple output names, and any other node can reference any one of them to establish a dependency.
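Matching inputs to output names can be modeled as a dictionary lookup from output name to producing node. The following is a hypothetical Python sketch (Node and resolve are illustrative names, not DataWorks APIs). It relies on output names being unique, lets one node expose several output names, and also reports inputs that no node produces, which corresponds to the commit errors covered under Configuration errors.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    outputs: set[str] = field(default_factory=set)  # output names this node exposes
    inputs: set[str] = field(default_factory=set)   # output names of its ancestors


def resolve(nodes: list[Node]) -> tuple[dict[str, set[str]], dict[str, set[str]]]:
    """Return (parents, unresolved).

    parents maps each node name to the nodes producing its inputs;
    unresolved maps a node name to inputs that no node exposes as an output.
    """
    # Assumes output names are unique, so each name has exactly one producer.
    producer = {out: n.name for n in nodes for out in n.outputs}
    parents: dict[str, set[str]] = {}
    unresolved: dict[str, set[str]] = {}
    for n in nodes:
        parents[n.name] = {producer[i] for i in n.inputs if i in producer}
        missing = {i for i in n.inputs if i not in producer}
        if missing:
            unresolved[n.name] = missing
    return parents, unresolved


# Node A exposes two output names; B and C each reference a different one.
a = Node("A", outputs={"proj.table_a", "proj.table_a_extra"})
b = Node("B", inputs={"proj.table_a"})
c = Node("C", inputs={"proj.table_a_extra", "proj.table_x"})
parents, unresolved = resolve([a, b, c])
```

Here both B and C resolve to node A as their parent, while C's proj.table_x input stays unresolved because no node exposes that output name.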

Can two nodes share the same output name?

No. Output names must be unique. If two auto triggered nodes in the same workspace write to the same table, DataWorks reports:

The ${nodename1} node and the ${nodename2} node in the ${projectname} workspace use the same output name ${node_outputname}. Multiple nodes cannot have the same output name.

Identify which node produces the final version of the table, keep its output name, and rename the others.
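Before committing, you can list conflicting output names yourself. This hypothetical sketch groups nodes by output name and builds a message in the same shape as the error quoted above; the function name and its inputs are illustrative, and DataWorks performs its own check when you commit.

```python
from collections import defaultdict


def duplicate_output_errors(project: str, outputs_by_node: dict[str, set[str]]) -> list[str]:
    """Return one message per output name claimed by more than one node."""
    owners: dict[str, list[str]] = defaultdict(list)
    for node, outputs in outputs_by_node.items():
        for out in outputs:
            owners[out].append(node)
    messages = []
    for out, nodes in sorted(owners.items()):
        if len(nodes) > 1:
            first, second = sorted(nodes)[:2]  # report the first two claimants
            messages.append(
                f"The {first} node and the {second} node in the {project} workspace "
                f"use the same output name {out}. "
                "Multiple nodes cannot have the same output name."
            )
    return messages


errors = duplicate_output_errors(
    "proj",
    {"load_sales": {"proj.sales"}, "fix_sales": {"proj.sales"}, "load_users": {"proj.users"}},
)
```

The result points at proj.sales; you would then decide whether load_sales or fix_sales produces the final version of the table and rename the other node's output.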

Configuration errors

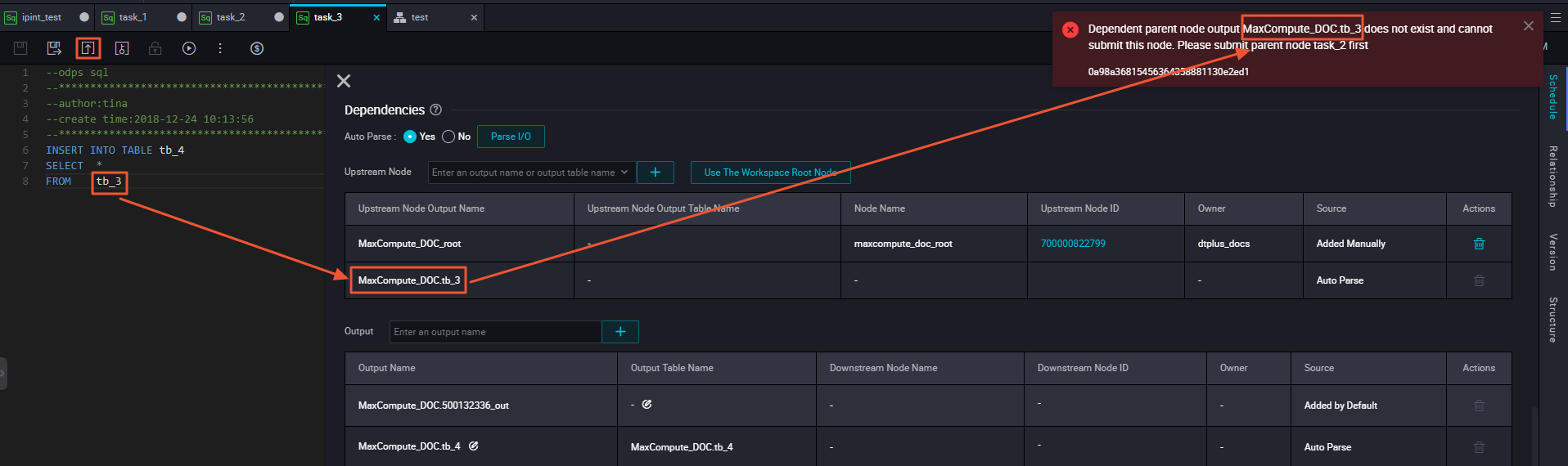

Commit error: ancestor node output not found

The system cannot locate the node that produces the listed output. Two common causes:

- The ancestor node has not been committed yet. Commit it and retry.
- The ancestor node is committed, but its configured output name differs from the one detected by automatic parsing. Update the ancestor node's output name to match, in Project name.Table name format.

If the table is not produced by an auto triggered node at all, delete the dependency: right-click the table name in the code and select Delete Input, then re-parse dependencies.

For detailed troubleshooting steps, see When I commit Node A, the system reports an error that the output name of the dependent ancestor node of Node A does not exist. What do I do?

Commit error: input/output inconsistent with data lineage

Auto-parsed parent references a non-existent output

Automatic parsing found a table name in your code and added it as a parent input, but cannot resolve which node produces that table. Check:

- Is the producing node committed? If not, commit it.
- Does the producing node's configured output name match what parsing detected? If not, correct the output name.
- Is this table produced by an auto triggered node? If not, right-click the table name and select Delete Input, then re-parse.

Why is the descendant node name or ID blank in my node's output?

The descendant node field is empty when no downstream node has been configured yet. Once you add a downstream node that references this node's output name as its input, the field populates automatically.

Why does a deleted output name still appear in ancestor searches?

DataWorks searches committed output names in the scheduling system. If you deleted an output name from a node but did not recommit the node, the old name remains visible. Recommit the node to remove it from the index.

Undeploy error: node has descendant nodes

A node can be undeployed only when no other nodes depend on it in either the development or the production environment. Check Operation Center in both environments for descendant nodes that may not appear on the Properties tab.
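The undeploy rule amounts to checking descendants in each environment separately. In this hypothetical sketch, children_by_env stands in for the descendant lists you would read from Operation Center in the development and production environments; an empty result means nothing blocks the undeploy.

```python
def undeploy_blockers(
    node: str, children_by_env: dict[str, dict[str, set[str]]]
) -> dict[str, list[str]]:
    """Return, per environment, the descendant nodes that still depend on `node`."""
    blockers: dict[str, list[str]] = {}
    for env, children in children_by_env.items():
        descendants = children.get(node, set())
        if descendants:
            blockers[env] = sorted(descendants)
    return blockers


# Hypothetical data: development shows no descendants,
# but an old descendant still exists in production.
children_by_env = {
    "development": {"A": set()},
    "production": {"A": {"B_old"}},
}
print(undeploy_blockers("A", children_by_env))
```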

What rules determine when a node should depend on its ancestor nodes?

In the DataWorks scheduling system, configure a dependency between two nodes when the downstream node requires data generated by the upstream node. You can determine whether a dependency is needed based on the data lineages of the tables involved. For more information, see Scheduling dependency configuration guide.

Multi-node dependencies

How do I make a node depend on multiple upstream nodes?

Add the output name of each upstream node to Parent Nodes for the downstream node. All listed parents must complete successfully before the downstream node runs.

For example: node C needs data from node A (hourly) and node B (daily). If you add only node A's output name to Parent Nodes for node C, node C may run before node B finishes, causing node C to fail. Add both output names.
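The all-parents rule and the node A / node B / node C example can be expressed as a small readiness check. The Status values and can_start are hypothetical simplifications, not DataWorks' instance states.

```python
from enum import Enum


class Status(Enum):
    NOT_RUN = "not run"
    RUNNING = "running"
    SUCCEEDED = "succeeded"
    FAILED = "failed"


def can_start(parent_nodes: set[str], status: dict[str, Status]) -> bool:
    """A node may start only when every listed parent has succeeded."""
    return all(status.get(p) is Status.SUCCEEDED for p in parent_nodes)


# Node A (hourly) has finished; node B (daily) is still running.
status = {"A": Status.SUCCEEDED, "B": Status.RUNNING}
```

With this status, can_start({"A"}, status) is True, so a node C that lists only node A would start before node B finishes; can_start({"A", "B"}, status) is False, which keeps node C waiting.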

Only configure a dependency if the downstream node truly requires fresh data from the upstream node. If stale data is acceptable, a dependency is not necessary.

How do I run node A, node B, and node C in sequence every hour?

- Wire the output of node A as the input of node B, and the output of node B as the input of node C.
- Set the scheduling cycle to hourly for all three nodes.
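The resulting order within each hourly cycle can be sketched with Python's graphlib.TopologicalSorter, treating each node's parents as its predecessors. This is only an ordering model with a hypothetical helper name: instances from different hours are independent unless you also configure a cross-cycle dependency, so node A at 01:00 may start before node C at 00:00 finishes.

```python
from graphlib import TopologicalSorter

# Each node maps to the parent nodes whose output names it uses as inputs.
chain = {"A": set(), "B": {"A"}, "C": {"B"}}


def hourly_run_order(parents: dict[str, set[str]], hours: range) -> list[tuple[str, str]]:
    """Return (scheduled time, node) pairs in dependency order for each hourly cycle."""
    order = list(TopologicalSorter(parents).static_order())
    return [(f"{h:02d}:00", node) for h in hours for node in order]


print(hourly_run_order(chain, range(2)))
```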

How do I configure dependencies across workflows or workspaces?

The mechanism is the same: add the output name of the upstream node to Parent Nodes for the downstream node. Nodes can belong to different workflows or workspaces within the same region.

Cross-cycle dependencies

Cross-cycle dependencies appear as dashed lines in Operation Center. Regular same-cycle dependencies appear as solid lines.

When do I need a cross-cycle (self) dependency?

Configure a node's current-cycle instance to depend on its own previous-cycle instance when:

- The node needs data produced by the same node in the prior cycle.
- An hourly node depends on a daily node: after the daily node completes, all of that day's hourly instances whose scheduled time has arrived become runnable simultaneously, causing a parallel surge. Setting Cross-Cycle Dependency to Instances of Current Node on the hourly node serializes its instances.
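The parallel surge, and how a self-dependency removes it, can be shown with a simplified model of which hourly instances are allowed to start. runnable_hours is a hypothetical helper; it assumes the hourly node runs every hour from 00:00 and that the previous day's 23:00 instance has already succeeded, and it ignores resource-group concurrency limits.

```python
def runnable_hours(
    now_hour: int, daily_done: bool, finished: set[int], self_dependent: bool
) -> list[int]:
    """Return the scheduled hours of hourly instances that may start at now_hour.

    Hypothetical model: the hourly node runs every hour from 00:00, depends on a
    daily node, and the previous day's 23:00 instance has already succeeded.
    """
    if not daily_done:
        return []  # every hourly instance waits for the daily parent
    ready = []
    for h in range(now_hour + 1):  # only instances whose scheduled time has arrived
        if h in finished:
            continue
        if self_dependent and h > 0 and (h - 1) not in finished:
            continue  # waits for its own previous-cycle instance
        ready.append(h)
    return ready
```

If the daily parent finishes at 10:00, runnable_hours(10, True, set(), False) makes 11 instances (00:00 to 10:00) runnable at once, while runnable_hours(10, True, set(), True) returns only [0]; the 01:00 instance becomes runnable once the 00:00 instance finishes.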

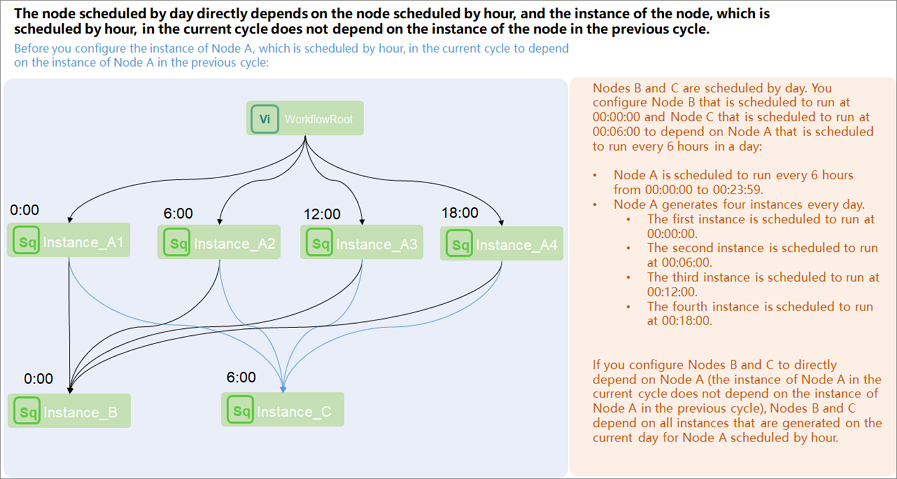

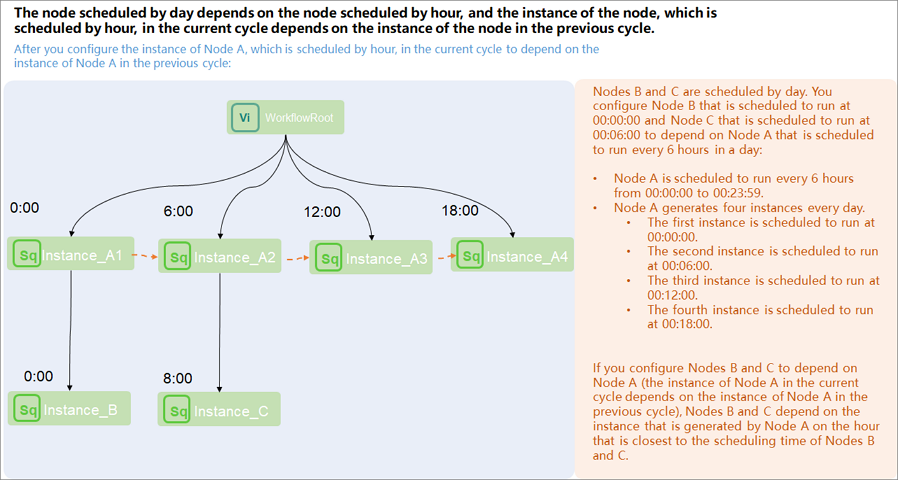

Day-node depends on hourly-node: which scenario fits?

| Goal | Configuration |
|---|---|
| Day-node waits for all current-day hourly instances | Add the hourly node's output name to Parent Nodes for the day-node. No cross-cycle setting needed. |
| Day-node waits for a specific hourly instance on the current day (e.g., 12:00) | Set the hourly node's Cross-Cycle Dependency to Instances of Current Node. Add the hourly node's output name to Parent Nodes for the day-node. Set the day-node's scheduling time to 12:00. |
| Day-node waits for all previous-day hourly instances | On the day-node's Properties tab, set Cross-Cycle Dependency to Other Nodes and enter the hourly node's ID. Remove the hourly node from Parent Nodes for the day-node. |

[Figure: Scenario 1, direct dependency on all current-day hourly instances]

[Figure: Scenario 2, self-dependency on the hourly node (specific instance)]

If you configure a cross-cycle dependency on the hourly node for the day-node and keep the hourly node in Parent Nodes, the day-node will depend on instances from both the previous day and the current day.
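The table rows and the note above can be summarized as a function that lists which hourly-node instances one day-node instance waits for. It is a hypothetical model, not a DataWorks API: it assumes the hourly node runs every hour from 00:00, and for the self-dependency case it returns the hourly instance scheduled at the day-node's scheduling time, matching the 12:00 and 00:00 examples in this section. That instance in turn waits for the earlier hourly instances through the hourly node's own self-dependency.

```python
# (day offset, scheduled hour): day offset 0 = current day, -1 = previous day
Instance = tuple[int, int]


def day_node_upstream(
    hourly_in_parent_nodes: bool,
    hourly_self_dependent: bool,
    cross_cycle_on_hourly: bool,
    day_node_hour: int = 0,
) -> list[Instance]:
    """Return the hourly-node instances that one day-node instance waits for."""
    deps: list[Instance] = []
    if cross_cycle_on_hourly:
        # Cross-Cycle Dependency = Other Nodes (hourly node ID): previous day's instances.
        deps += [(-1, h) for h in range(24)]
    if hourly_in_parent_nodes:
        if hourly_self_dependent:
            # Simplification matching the 12:00 and 00:00 examples in this FAQ.
            deps.append((0, day_node_hour))
        else:
            deps += [(0, h) for h in range(24)]
    return deps
```

Keeping the hourly node in Parent Nodes while also setting the cross-cycle dependency returns 48 instances, two days' worth, which is the mixed case described in the note. day_node_upstream(True, True, False, 0) returns only the 00:00 instance, the configuration used later for running a daily node after the first hourly instance.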

When does a day-node start if its parent is an hourly node?

The day-node waits for all hourly instances produced on the current day. For a node that runs every hour starting at 00:00, all 24 instances must complete before the day-node starts.

To verify in Operation Center: open the directed acyclic graph (DAG) for the day-node, right-click the node, and select Show Ancestor Nodes. All 24 current-day hourly instances appear as solid-line dependencies.

If node A (hourly) still runs into the next day, does node B (daily) run?

Yes. Node B's instance depends on all of node A's current-day instances. Once the last instance of node A completes — even if that happens past midnight — node B runs. Scheduling parameters resolve correctly for that execution.

How do I make a daily node run after only the first hourly instance each day?

Set Cross-Cycle Dependency to Instances of Current Node on node A. Set node B's scheduling time to 00:00. Node B then depends only on the 00:00 instance of node A — the first one each day.

Other questions

How do I configure an ancestor for the start node of a workflow?

Create a zero load node and make it the workflow's start node. Then set the workspace root node as the ancestor of the zero load node. See Create and use a zero load node.

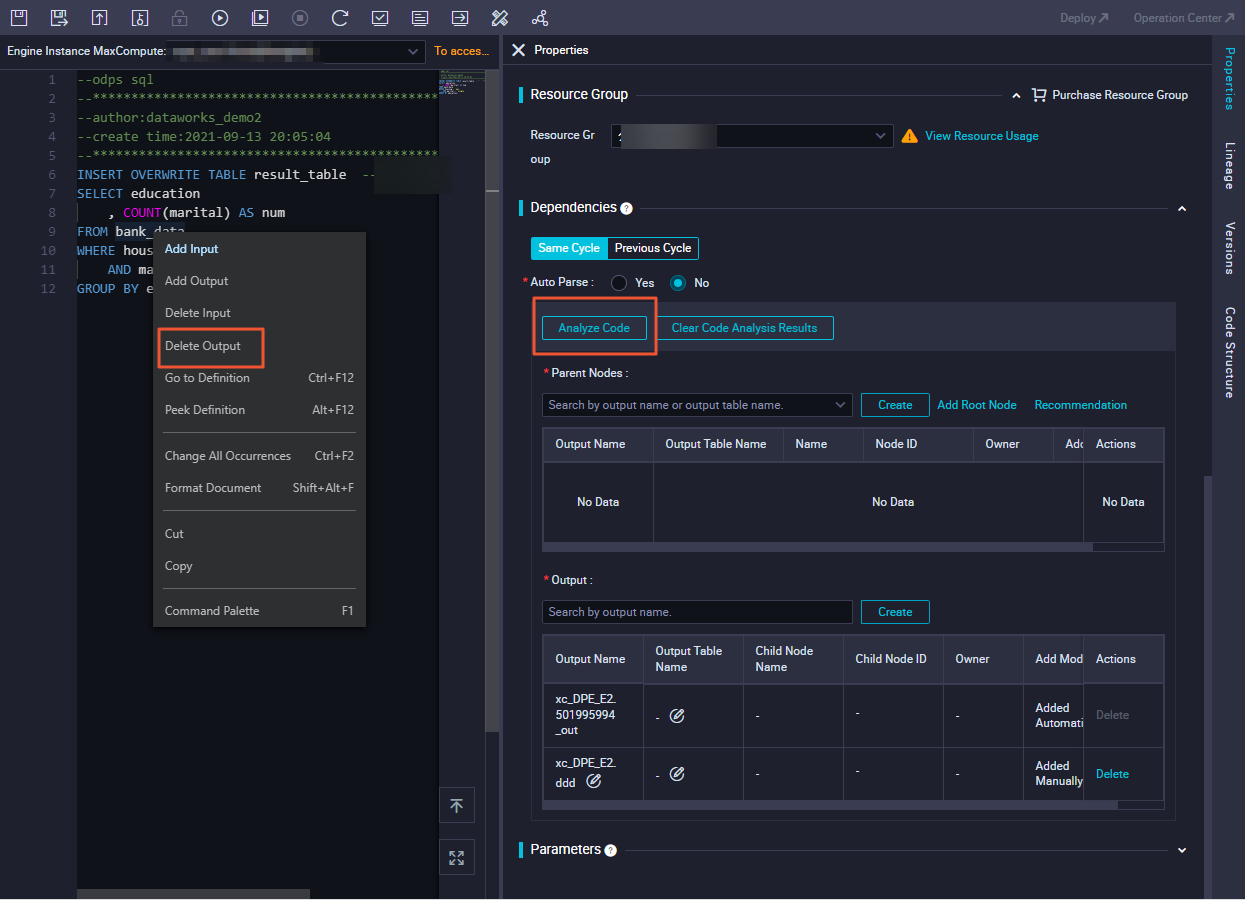

How do I stop DataWorks from parsing temporary tables as dependencies?

Open the node's configuration tab, right-click the temporary table name in the SQL code, and select Delete Input or Delete Output. Then click Parse Input and Output from Code in the Dependencies section of the Properties tab.

How do I delete a dependency on a table my node doesn't actually use?

On the node's configuration tab, right-click the table name in the code and select Delete Input. Then re-parse dependencies from the Dependencies section of the Properties tab.

Node doesn't rerun after failure — "Task Run Timed Out, Killed by System!!!" appears

This message means that the Timeout definition parameter in the Schedule section of the Properties tab is set for this node and the node ran longer than the configured limit. When a node times out, it is stopped immediately and is not automatically rerun, even if Rerun is set to Allow Regardless of Running Status or Allow upon Failure Only.

Manually rerun the node from Operation Center.