This topic describes how to use Alibaba Cloud Data Transmission Service (DTS) to migrate data from an Amazon Aurora PostgreSQL database to an ApsaraDB RDS for PostgreSQL database or a PolarDB for PostgreSQL database. The procedure of migrating data from an Amazon Aurora PostgreSQL database to an ApsaraDB RDS for PostgreSQL database is used as an example.

Prerequisites

The Public accessibility option of the Amazon Aurora PostgreSQL instance is set to Yes. This ensures that DTS can access the Amazon Aurora PostgreSQL instance over the Internet.

If you want to migrate incremental data from an Amazon Aurora PostgreSQL database, make sure the database version is later than 10.0, the value of rds.logical_replication is 1, and the value of synchronous_commit is on. For more information, see Setting up logical replication for your Aurora PostgreSQL DB cluster and Amazon Aurora PostgreSQL parameters.

An ApsaraDB RDS for PostgreSQL instance is created. The storage space of the instance is larger than that occupied by the database to be migrated from Amazon Aurora PostgreSQL. For more information, see Create an ApsaraDB RDS for PostgreSQL instance.
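
The incremental migration prerequisites above can be checked before you create a task. The following Python sketch assumes that you have already read the three values from the source database, for example by running SHOW statements. The function name and the dictionary keys are illustrative and are not part of DTS.

```python
def check_incremental_prereqs(settings):
    """Return a list of problems; an empty list means the prerequisites hold."""
    problems = []

    # Compare (major, minor) so that "10.0" fails and "10.4" or "13.7" pass.
    parts = settings["server_version"].split()[0].split(".")
    version = (int(parts[0]), int(parts[1]) if len(parts) > 1 else 0)
    if version <= (10, 0):
        problems.append("database version must be later than 10.0")

    # Assumption: the parameter is stored as 1 but may be reported as "on".
    if str(settings["rds.logical_replication"]).lower() not in ("1", "on"):
        problems.append("rds.logical_replication must be 1")

    if str(settings["synchronous_commit"]).lower() != "on":
        problems.append("synchronous_commit must be on")

    return problems


if __name__ == "__main__":
    current = {
        "server_version": "13.7",
        "rds.logical_replication": "on",
        "synchronous_commit": "on",
    }
    print(check_incremental_prereqs(current))
```

An empty result means that the three settings match the values listed in the prerequisites. The check does not cover public accessibility or storage space.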

Precautions

DTS uses the read and write resources of the source and destination databases during full data migration, which may increase the load on the database servers. If the databases perform poorly, have low specifications, or hold a large volume of data, database services may become unavailable. For example, DTS occupies a large amount of read and write resources in the following cases: a large number of slow SQL queries are performed on the source database, the tables have no primary keys, or a deadlock occurs in the destination database. Before you migrate data, evaluate the impact of data migration on the performance of the source and destination databases. We recommend that you migrate data during off-peak hours, for example, when the CPU utilization of the source and destination databases is less than 30%.

Amazon Aurora PostgreSQL 10.0 and earlier versions do not support incremental data migration.

Each data migration task can migrate data from only a single database. To migrate data from multiple databases, you must create a data migration task for each database.

DTS does not migrate functions that are written in the C programming language.

The tables to be migrated in the source database must have PRIMARY KEY or UNIQUE constraints and all fields must be unique. Otherwise, the destination database may contain duplicate data records.

If a data migration task fails, DTS automatically resumes the task. Before you switch your workloads to the destination instance, stop or release the data migration task. Otherwise, the data in the source instance overwrites the data in the destination instance after the task is resumed.
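
To find tables that violate the PRIMARY KEY or UNIQUE precaution above, you can query the PostgreSQL information_schema views on the source database. The following Python sketch holds one possible query and a pure-Python equivalent for offline checks. The names FIND_TABLES_WITHOUT_KEYS and tables_without_keys are illustrative. The query reports only the constraints that are visible to the account that runs it.

```python
# Lists user tables that have neither a PRIMARY KEY nor a UNIQUE constraint.
FIND_TABLES_WITHOUT_KEYS = """
SELECT t.table_schema, t.table_name
FROM information_schema.tables AS t
LEFT JOIN information_schema.table_constraints AS c
  ON c.table_schema = t.table_schema
 AND c.table_name = t.table_name
 AND c.constraint_type IN ('PRIMARY KEY', 'UNIQUE')
WHERE t.table_type = 'BASE TABLE'
  AND t.table_schema NOT IN ('pg_catalog', 'information_schema')
  AND c.constraint_name IS NULL
ORDER BY t.table_schema, t.table_name;
"""


def tables_without_keys(tables, constraints):
    """Pure-Python equivalent of the query above.

    tables: iterable of (schema, table) tuples.
    constraints: iterable of (schema, table, constraint_type) tuples.
    """
    keyed = {
        (schema, table)
        for schema, table, ctype in constraints
        if ctype in ("PRIMARY KEY", "UNIQUE")
    }
    return sorted(set(tables) - keyed)


if __name__ == "__main__":
    tables = [("public", "orders"), ("public", "logs")]
    constraints = [("public", "orders", "PRIMARY KEY")]
    print(tables_without_keys(tables, constraints))
```

Tables that appear in the result are the ones that may produce duplicate records in the destination database.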

Billing rules

Migration type | Task configuration fee | Internet traffic fee |

Schema migration and full data migration | Free of charge. | Charged only when data is migrated from Alibaba Cloud over the Internet. For more information, see Billing overview. |

Incremental data migration | Charged. For more information, see Billing overview. |

Migration types

Schema migration

DTS migrates the schemas of objects to the ApsaraDB RDS for PostgreSQL instance. DTS supports schema migration for the following types of objects: table, trigger, view, sequence, function, user-defined type, rule, domain, operator, and aggregate.

Note: DTS does not migrate functions that are written in the C programming language.

Full data migration

DTS migrates the existing data of objects from the Amazon Aurora PostgreSQL instance to the ApsaraDB RDS for PostgreSQL instance.

Incremental data migration

After full data migration is complete, DTS migrates incremental data from the Amazon Aurora PostgreSQL instance to the ApsaraDB RDS for PostgreSQL instance. Incremental data migration allows you to ensure service continuity when you migrate data from the Amazon Aurora PostgreSQL instance to the ApsaraDB RDS for PostgreSQL instance.

Permissions required for database accounts

Instance | Schema migration | Full data migration | Incremental data migration |

Amazon Aurora PostgreSQL | The USAGE permission on pg_catalog | The SELECT permission on the objects to be migrated | rds_superuser permissions |

ApsaraDB RDS for PostgreSQL | The CREATE and USAGE permissions on the objects to be migrated | Permissions of the schema owner | Permissions of the schema owner |

For more information about how to create a database account and grant permissions to the account, see the following topics.

Amazon Aurora PostgreSQL database: Understanding PostgreSQL roles and permissions

ApsaraDB RDS for PostgreSQL instance: Create an account
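
The source account permissions in the table above map to standard PostgreSQL GRANT statements. The following Python sketch only builds the statements as text so that you can review them before you run them. The function names, the account name, and the schema list are illustrative assumptions. Granting SELECT on all tables in a schema is broader than the minimum if you migrate only some tables.

```python
def quote_ident(name):
    """Quote a PostgreSQL identifier, doubling embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def source_grants(account, schemas, incremental=False):
    """Build GRANT statements for the Amazon Aurora PostgreSQL account."""
    user = quote_ident(account)
    # Schema migration: USAGE on pg_catalog.
    statements = [f"GRANT USAGE ON SCHEMA pg_catalog TO {user};"]
    # Full data migration: SELECT on the objects to be migrated.
    for schema in schemas:
        statements.append(
            f"GRANT SELECT ON ALL TABLES IN SCHEMA {quote_ident(schema)} TO {user};"
        )
    # Incremental data migration: membership in the rds_superuser role.
    if incremental:
        statements.append(f"GRANT rds_superuser TO {user};")
    return statements


if __name__ == "__main__":
    for statement in source_grants("dts_user", ["public"], incremental=True):
        print(statement)
```

In PostgreSQL, an account usually also needs the USAGE permission on a schema before it can read the tables in that schema. Add that grant if it is missing for the account.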

Data migration process

To prevent data migration failures caused by dependencies between objects, DTS migrates the schemas and data of the source Amazon Aurora PostgreSQL instance in the following order:

Migrate the schemas of tables, views, sequences, functions, user-defined types, rules, domains, operators, and aggregates.

Perform full data migration.

Migrate the schemas of triggers and foreign keys.

Perform incremental data migration.

Note: Before incremental data migration, do not perform DDL operations on the objects in the Amazon Aurora PostgreSQL instance. Otherwise, the objects may fail to be migrated.

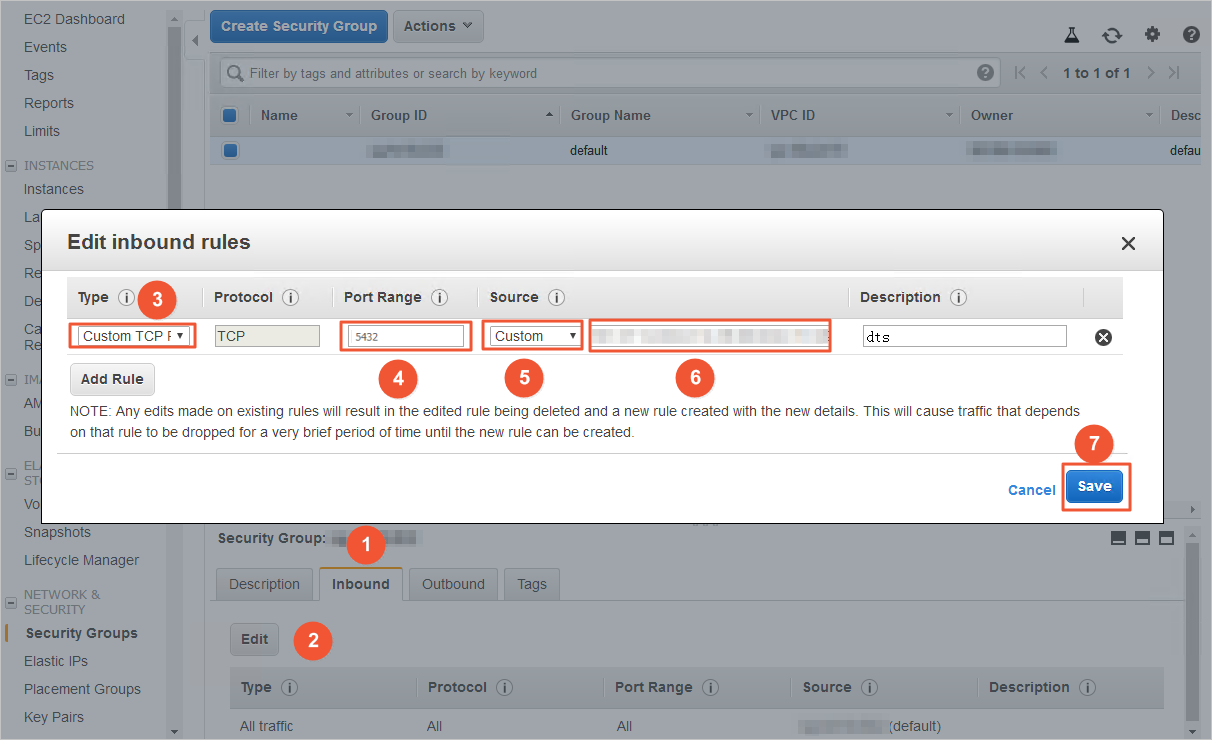

Preparation 1: Edit the inbound rule of the Amazon Aurora PostgreSQL instance

Log on to the Amazon Aurora console.

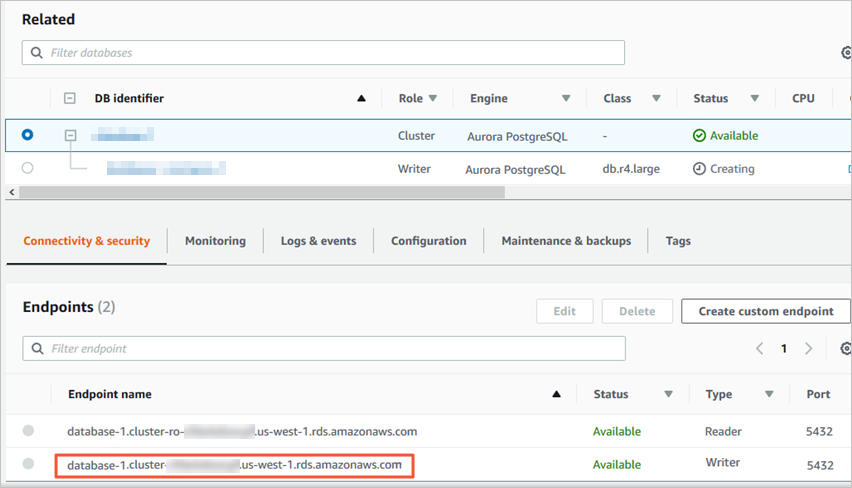

Go to the basic information page of the Amazon Aurora PostgreSQL instance.

Click the DB identifier of the node whose Role is Writer instance.

On the Connectivity & security tab, click the name of the VPC security group that corresponds to the node.

On the Security Groups page, click the Inbound tab in the Security Group section. On the Inbound tab, click Edit. In the Edit inbound rules dialog box, add the CIDR blocks of DTS servers that reside in the corresponding region to the inbound rule. For more information, see Add the CIDR blocks of DTS servers.

Note: You need to add only the CIDR blocks of DTS servers that reside in the same region as the destination database. For example, the source database resides in the Singapore region and the destination database resides in the China (Hangzhou) region. You need to add only the CIDR blocks of DTS servers that reside in the China (Hangzhou) region.

You can add all of the required CIDR blocks to the inbound rule at a time.

If you have other questions, see the official documentation of Amazon or contact technical support.
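
After you edit the inbound rule, you can confirm that every required DTS CIDR block is covered. The following Python sketch uses the standard ipaddress module. The function name is illustrative, and the CIDR blocks are placeholder documentation ranges, not the real CIDR blocks of DTS servers.

```python
import ipaddress


def uncovered_blocks(dts_cidrs, inbound_cidrs):
    """Return the DTS CIDR blocks that no inbound rule fully covers."""
    rules = [ipaddress.ip_network(cidr) for cidr in inbound_cidrs]
    missing = []
    for cidr in dts_cidrs:
        block = ipaddress.ip_network(cidr)
        # subnet_of() requires both networks to use the same IP version.
        covered = any(
            block.version == rule.version and block.subnet_of(rule)
            for rule in rules
        )
        if not covered:
            missing.append(cidr)
    return missing


if __name__ == "__main__":
    # Placeholder ranges; replace them with the blocks for your region.
    dts_blocks = ["203.0.113.0/28", "198.51.100.16/28"]
    inbound_rules = ["203.0.113.0/24"]
    print(uncovered_blocks(dts_blocks, inbound_rules))
```

Any block that the function returns still needs to be added to the inbound rule.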

Preparation 2: Create a database and a schema in the destination ApsaraDB RDS for PostgreSQL instance

Create a database and a schema on the destination ApsaraDB RDS for PostgreSQL instance based on the database and schema information of the objects to be migrated. The schema name of the source and destination databases must be the same. For more information, see Create a database and Manage accounts by using schemas.
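
The preparation above can also be expressed as SQL statements. The following Python sketch builds the statements as text. The function names, the database name, and the schema names are illustrative assumptions. Run the CREATE SCHEMA statements while you are connected to the new database.

```python
def quote_ident(name):
    """Quote a PostgreSQL identifier, doubling embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def destination_ddl(database, source_schemas):
    """Return the CREATE DATABASE statement and one CREATE SCHEMA per schema.

    The schema names are reused unchanged because the source and
    destination schema names must be the same.
    """
    create_database = f"CREATE DATABASE {quote_ident(database)};"
    create_schemas = [
        f"CREATE SCHEMA IF NOT EXISTS {quote_ident(schema)};"
        for schema in source_schemas
    ]
    return create_database, create_schemas


if __name__ == "__main__":
    database_sql, schema_sql = destination_ddl("appdb", ["sales", "audit"])
    print(database_sql)
    for statement in schema_sql:
        print(statement)
```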

Procedure (in the new DTS console)

Use one of the following methods to go to the Data Migration page and select the region in which the data migration instance resides.

DTS console

Log on to the DTS console.

In the left-side navigation pane, click Data Migration.

In the upper-left corner of the page, select the region in which the data migration instance resides.

DMS console

Note: The actual operation may vary based on the mode and layout of the DMS console. For more information, see Simple mode and Customize the layout and style of the DMS console.

Log on to the DMS console.

In the top navigation bar, move the pointer over .

From the drop-down list to the right of Data Migration Tasks, select the region in which the data migration instance resides.

Click Create Task to go to the task configuration page.

Configure the source and destination databases. The following table describes the parameters.

Warning: After you configure the source and destination databases, we recommend that you read the Limits that are displayed in the upper part of the page. Otherwise, the task may fail or data inconsistency may occur.

Section

Parameter

Description

N/A

Task Name

The name of the DTS task. DTS automatically generates a task name. We recommend that you specify an informative name that makes it easy to identify the task. You do not need to specify a unique task name.

Source Database

Select a DMS database instance

The database that you want to use. You can choose whether to use an existing database based on your business requirements.

If you select an existing database, DTS automatically populates the parameters for the database.

If you do not select an existing database, you must configure the following database information.

Note: Click Add DMS Database Instance to register a database instance in the DMS console. For more information, see Register an Alibaba Cloud database instance and Register a database hosted on a third-party cloud service or a self-managed database.

In the DTS console, register a database with DTS on the Database Connection page or the new configuration page. For more information, see Manage database connections.

Database Type

The type of the source database. Select PostgreSQL.

Access Method

The access method of the source database. Select Public IP Address.

Instance Region

The region in which the Amazon Aurora PostgreSQL instance resides.

Note: If the region in which the Amazon Aurora PostgreSQL instance resides is not displayed in the drop-down list, select a region that is geographically closest to the Amazon Aurora PostgreSQL instance.

Domain Name or IP

The endpoint that is used to access the Amazon Aurora PostgreSQL instance.

Note: You can obtain the endpoint on the basic information page of the Amazon Aurora PostgreSQL instance.

Port Number

The service port number of the Amazon Aurora PostgreSQL instance. Default value: 5432.

Database Name

Enter the name of the source database in the Amazon Aurora PostgreSQL instance.

Database Account

The database account of the Amazon Aurora PostgreSQL instance. For information about the permissions that are required for the account, see the Permissions required for database accounts section of this topic.

Database Password

The password that is used to access the database instance.

Encryption

Specifies whether to encrypt the connection to the source database. You can configure this parameter based on your business requirements. In this example, Non-encrypted is selected.

If you want to establish an SSL-encrypted connection to the source database, perform the following steps: Select SSL-encrypted, upload CA Certificate, Client Certificate, and Private Key of Client Certificate as needed, and then specify Private Key Password of Client Certificate.

Note: If you set Encryption to SSL-encrypted for a self-managed PostgreSQL database, you must upload CA Certificate.

If you want to use the client certificate, you must upload Client Certificate and Private Key of Client Certificate and specify Private Key Password of Client Certificate.

For information about how to configure SSL encryption for an ApsaraDB RDS for PostgreSQL instance, see SSL encryption.

Destination Database

Select a DMS database instance

The database that you want to use. You can choose whether to use an existing database based on your business requirements.

If you select an existing database, DTS automatically populates the parameters for the database.

If you do not select an existing database, you must configure the following database information.

Note: Click Add DMS Database Instance to register a database instance in the DMS console. For more information, see Register an Alibaba Cloud database instance and Register a database hosted on a third-party cloud service or a self-managed database.

In the DTS console, register a database with DTS on the Database Connection page or the new configuration page. For more information, see Manage database connections.

Database Type

The type of the destination database. Select PostgreSQL.

Access Method

The access method of the destination database. Select Alibaba Cloud Instance.

Instance Region

The region in which the destination ApsaraDB RDS for PostgreSQL instance resides.

Instance ID

The ID of the destination ApsaraDB RDS for PostgreSQL instance.

Database Name

The name of the destination database in the ApsaraDB RDS for PostgreSQL instance. The name can be different from the name of the source database in the Amazon Aurora PostgreSQL instance.

Note: Before you configure the data migration task, make sure that the destination database is created in the ApsaraDB RDS for PostgreSQL instance. For more information, see Create a database.

Database Account

The database account of the destination ApsaraDB RDS for PostgreSQL instance. For information about the permissions that are required for the account, see the Permissions required for database accounts section of this topic.

Database Password

The password that is used to access the database instance.
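
Before you enter the source database parameters from the table above, you may want to confirm them with a PostgreSQL client. The following Python sketch assembles the Domain Name or IP, Port Number, Database Name, Database Account, and Database Password values into a libpq-style connection URI. The function name and the sample values are hypothetical.

```python
from urllib.parse import quote


def source_uri(host, database, account, password, port=5432, ssl=False):
    """Build a libpq-style connection URI for the source database."""
    # Percent-encode the credentials so that characters such as "@" or "/"
    # do not break the URI.
    userinfo = f"{quote(account, safe='')}:{quote(password, safe='')}"
    uri = f"postgresql://{userinfo}@{host}:{port}/{quote(database, safe='')}"
    if ssl:
        # Matches the SSL-encrypted option; certificates are not handled here.
        uri += "?sslmode=require"
    return uri


if __name__ == "__main__":
    # Hypothetical endpoint and credentials.
    print(source_uri("aurora.example.com", "appdb", "dts_user", "p@ss/word"))
```

If a client can connect with this URI from outside the VPC, the endpoint, port, and account values are likely correct. DTS servers must still be allowed by the inbound rule.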

Configure the objects to be migrated.

On the Configure Objects page, configure the objects that you want to migrate.

Parameter

Description

Migration Types

To perform full data migration only, select both Schema Migration and Full Data Migration.

To migrate data without service downtime, select Schema Migration, Full Data Migration, and Incremental Data Migration.

Note: To ensure data consistency, do not write data to the source instance during data migration if Incremental Data Migration is not selected.

Processing Mode of Conflicting Tables

Precheck and Report Errors: checks whether the destination database contains tables that use the same names as tables in the source database. If the source and destination databases do not contain tables that have identical table names, the precheck is passed. Otherwise, an error is returned during the precheck and the data migration task cannot be started.

Note: If the source and destination databases contain tables with identical names and the tables in the destination database cannot be deleted or renamed, you can use the object name mapping feature to rename the tables that are migrated to the destination database. For more information, see Map object names.

Ignore Errors and Proceed: skips the precheck for identical table names in the source and destination databases.

Warning: If you select Ignore Errors and Proceed, data inconsistency may occur and your business may be exposed to the following potential risks:

If the source and destination databases have the same schema, and a data record has the same primary key as an existing data record in the destination database, the following scenarios may occur:

During full data migration, DTS does not migrate the data record to the destination database. The existing data record in the destination database is retained.

During incremental data migration, DTS migrates the data record to the destination database. The existing data record in the destination database is overwritten.

If the source and destination databases have different schemas, only specific columns are migrated or the data migration task fails. Proceed with caution.

Capitalization of Object Names in Destination Instance

The capitalization of database names, table names, and column names in the destination instance. By default, DTS default policy is selected. You can select other options to make sure that the capitalization of object names is consistent with that of the source or destination database. For more information, see Specify the capitalization of object names in the destination instance.

Source Objects

Select one or more objects from the Source Objects section. Click the icon to add the objects to the Selected Objects section.

Note: You can select columns, tables, or schemas as the objects to be migrated. If you select tables or columns as the objects to be migrated, DTS does not migrate other objects, such as views, triggers, or stored procedures, to the destination database.

Selected Objects

To rename an object that you want to migrate to the destination instance, right-click the object in the Selected Objects section. For more information, see Map the name of a single object.

To rename multiple objects at a time, click Batch Edit in the upper-right corner of the Selected Objects section. For more information, see Map multiple object names at a time.

Note: If you use the object name mapping feature to rename an object, other objects that are dependent on the object may fail to be migrated.
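
The two processing modes for conflicting tables described in the table above can be illustrated with a small model. The following Python sketch is a simplified illustration of the documented behavior, not the DTS implementation. Tables are modeled as dictionaries keyed by primary key, and all names are illustrative.

```python
def conflicting_tables(source_tables, destination_tables):
    """Table names that exist on both sides and would fail the precheck."""
    return sorted(set(source_tables) & set(destination_tables))


def full_migrate(destination_rows, source_rows):
    """Full data migration: a row with an existing primary key is skipped."""
    merged = dict(destination_rows)
    for key, row in source_rows.items():
        merged.setdefault(key, row)  # keep the existing destination row
    return merged


def incremental_migrate(destination_rows, changed_rows):
    """Incremental data migration: an existing row is overwritten."""
    merged = dict(destination_rows)
    merged.update(changed_rows)
    return merged


if __name__ == "__main__":
    print(conflicting_tables(["orders", "users"], ["users", "audit"]))
    existing = {1: "destination value"}
    print(full_migrate(existing, {1: "source value", 2: "new row"}))
    print(incremental_migrate(existing, {1: "source value"}))
```

The model shows why Ignore Errors and Proceed can leave the two databases inconsistent: the same primary key is handled differently in the two phases.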

Click Next: Advanced Settings to configure advanced settings.

Parameter

Description

Dedicated Cluster for Task Scheduling

By default, DTS schedules the data migration task to the shared cluster if you do not specify a dedicated cluster. If you want to improve the stability of data migration tasks, purchase a dedicated cluster. For more information, see What is a DTS dedicated cluster.

Retry Time for Failed Connections

The retry time range for failed connections. If the source or destination database fails to be connected after the data migration task is started, DTS immediately retries a connection within the retry time range. Valid values: 10 to 1,440. Unit: minutes. Default value: 720. We recommend that you set the parameter to a value greater than 30. If DTS is reconnected to the source and destination databases within the specified retry time range, DTS resumes the data migration task. Otherwise, the data migration task fails.

Note: If you specify different retry time ranges for multiple data migration tasks that share the same source or destination database, the value that is specified later takes precedence.

When DTS retries a connection, you are charged for the DTS instance. We recommend that you specify the retry time range based on your business requirements. You can also release the DTS instance at the earliest opportunity after the source database and destination instance are released.

Retry Time for Other Issues

The retry time range for other issues. For example, if DDL or DML operations fail to be performed after the data migration task is started, DTS immediately retries the operations within the retry time range. Valid values: 1 to 1,440. Unit: minutes. Default value: 10. We recommend that you set the parameter to a value greater than 10. If the failed operations are successfully performed within the specified retry time range, DTS resumes the data migration task. Otherwise, the data migration task fails.

Important: The value of the Retry Time for Other Issues parameter must be smaller than the value of the Retry Time for Failed Connections parameter.

Enable Throttling for Full Data Migration

Specifies whether to enable throttling for full data migration. During full data migration, DTS uses the read and write resources of the source and destination databases. This may increase the loads of the database servers. You can enable throttling for full data migration based on your business requirements. To configure throttling, you must configure the Queries per second (QPS) to the source database, RPS of Full Data Migration, and Data migration speed for full migration (MB/s) parameters. This reduces the loads of the destination database server.

Note: You can configure this parameter only if you select Full Data Migration for the Migration Types parameter.

Enable Throttling for Incremental Data Migration

Specifies whether to enable throttling for incremental data migration. To configure throttling, you must configure the RPS of Incremental Data Migration and Data migration speed for incremental migration (MB/s) parameters. This reduces the loads of the destination database server.

Note: You can configure this parameter only if you select Incremental Data Migration for the Migration Types parameter.

Environment Tag

The environment tag that is used to identify the DTS instance. You can select an environment tag based on your business requirements. In this example, no environment tag is selected.

Configure ETL

Specifies whether to enable the extract, transform, and load (ETL) feature. For more information, see What is ETL? Valid values:

Yes: configures the ETL feature. You can enter data processing statements in the code editor. For more information, see Configure ETL in a data migration or data synchronization task.

No: does not configure the ETL feature.

Monitoring and Alerting

Specifies whether to configure alerting for the data migration task. If the task fails or the migration latency exceeds the specified threshold, the alert contacts receive notifications. Valid values:

No: does not configure alerting.

Yes: configures alerting. In this case, you must also configure the alert threshold and alert notification settings. For more information, see the Configure monitoring and alerting when you create a DTS task section of the Configure monitoring and alerting topic.
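
The constraints on the two retry parameters in the table above can be checked together. The following Python sketch validates a pair of values against the documented ranges and the rule that Retry Time for Other Issues must be smaller than Retry Time for Failed Connections. The function name is illustrative, and the defaults are the documented default values.

```python
def validate_retry_settings(connection_minutes=720, other_minutes=10):
    """Return a list of rule violations; an empty list means the values are valid."""
    errors = []
    if not 10 <= connection_minutes <= 1440:
        errors.append(
            "Retry Time for Failed Connections must be 10 to 1,440 minutes"
        )
    if not 1 <= other_minutes <= 1440:
        errors.append("Retry Time for Other Issues must be 1 to 1,440 minutes")
    if other_minutes >= connection_minutes:
        errors.append(
            "Retry Time for Other Issues must be smaller than "
            "Retry Time for Failed Connections"
        )
    return errors


if __name__ == "__main__":
    print(validate_retry_settings())          # documented defaults
    print(validate_retry_settings(30, 30))    # violates the ordering rule
```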

Click Next Step: Data Verification to configure the data verification task.

For more information about how to use the data verification feature, see Configure a data verification task.

Save the task settings and run a precheck.

To view the parameters to be specified when you call the relevant API operation to configure the DTS task, move the pointer over Next: Save Task Settings and Precheck and click Preview OpenAPI parameters.

If you do not need to view or have viewed the parameters, click Next: Save Task Settings and Precheck in the lower part of the page.

Note: Before you can start the data migration task, DTS performs a precheck. You can start the data migration task only after the task passes the precheck.

If the task fails to pass the precheck, click View Details next to each failed item. After you analyze the causes based on the check results, troubleshoot the issues. Then, run a precheck again.

If an alert is triggered for an item during the precheck:

If an alert item cannot be ignored, click View Details next to the failed item and troubleshoot the issues. Then, run a precheck again.

If the alert item can be ignored, click Confirm Alert Details. In the View Details dialog box, click Ignore. In the message that appears, click OK. Then, click Precheck Again to run a precheck again. If you ignore the alert item, data inconsistency may occur, and your business may be exposed to potential risks.

Purchase an instance.

Wait until Success Rate becomes 100%. Then, click Next: Purchase Instance.

On the Purchase Instance page, configure the Instance Class parameter for the data migration instance. The following table describes the parameters.

Section

Parameter

Description

New Instance Class

Resource Group

The resource group to which the data migration instance belongs. Default value: default resource group. For more information, see What is Resource Management?

Instance Class

DTS provides instance classes that vary in the migration speed. You can select an instance class based on your business scenario. For more information, see Instance classes of data migration instances.

Read and agree to Data Transmission Service (Pay-as-you-go) Service Terms by selecting the check box.

Click Buy and Start. In the message that appears, click OK.

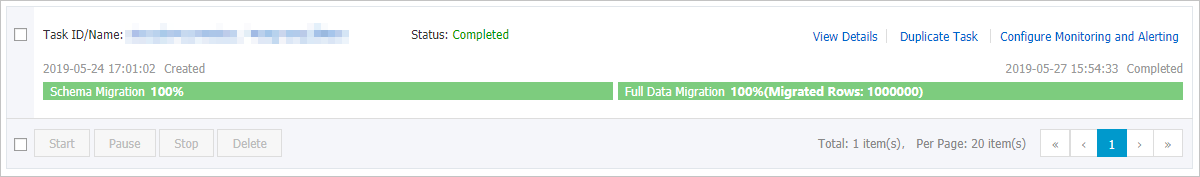

You can view the progress of the task on the Data Migration page.

Procedure (in the old DTS console)

Log on to the DTS console.

Note: If you are redirected to the Data Management (DMS) console, you can click the icon to go to the previous version of the DTS console.

In the left-side navigation pane, click Data Migration.

At the top of the Migration Tasks page, select the region where the destination instance resides.

In the upper-right corner of the page, click Create Migration Task.

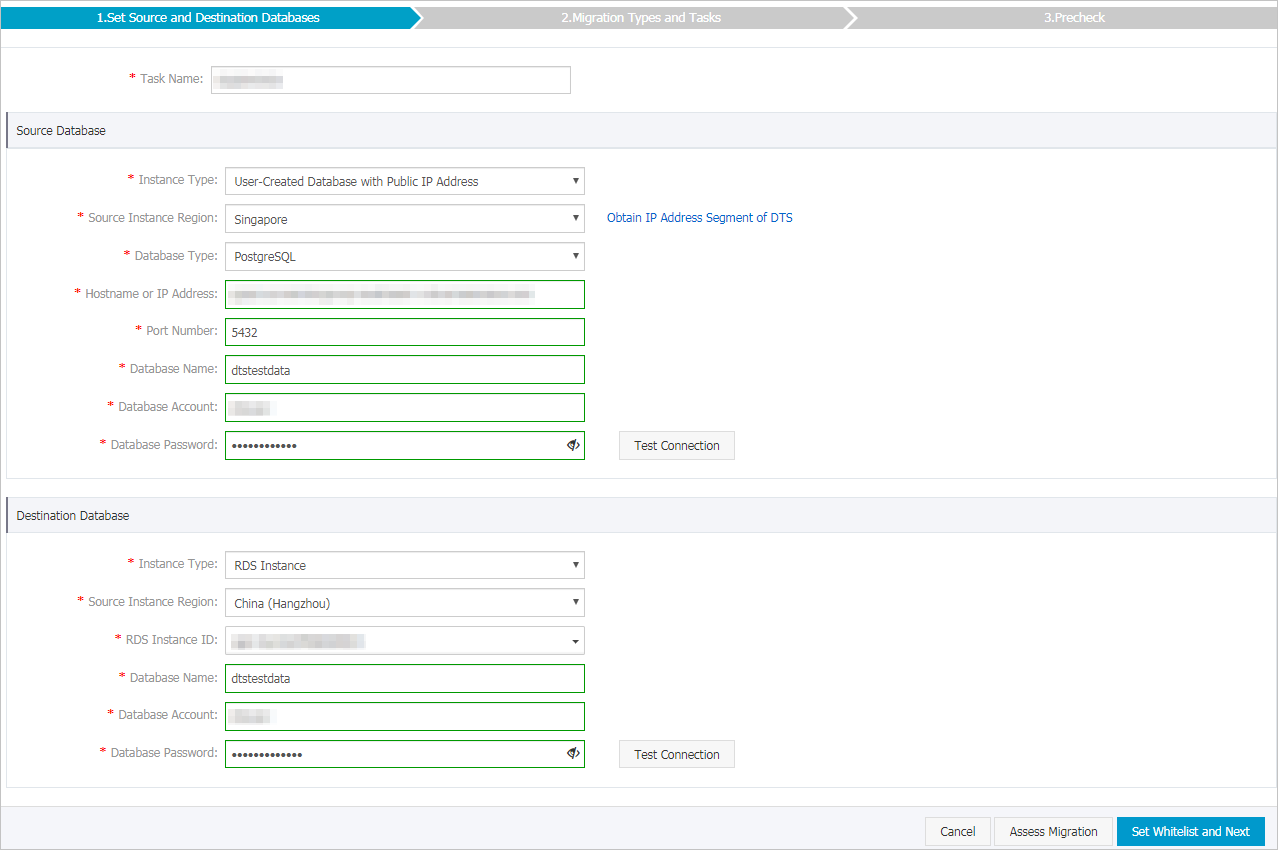

Configure the source and destination databases.

Section

Parameter

Description

N/A

Task Name

The name of the task. DTS automatically generates a task name. We recommend that you specify a descriptive name to identify the task. You do not need to specify a unique task name.

Source Database

Instance Type

The instance type of the source database. Select User-Created Database with Public IP Address.

Instance Region

The region in which the source instance resides. If you select User-Created Database with Public IP Address as the instance type, you do not need to configure the Instance Region parameter.

Database Type

The type of the source database. Select PostgreSQL.

Hostname or IP Address

The endpoint that is used to access the Amazon Aurora PostgreSQL instance.

Note: You can obtain the endpoint on the basic information page of the Amazon Aurora PostgreSQL instance.

Port Number

Enter the service port number of the Amazon Aurora PostgreSQL instance. The default port number is 5432.

Database Name

Enter the name of the source database in the Amazon Aurora PostgreSQL instance.

Database Account

The database account of the Amazon Aurora PostgreSQL instance. For information about the permissions that are required for the account, see the Permissions required for database accounts section of this topic.

Database Password

The password of the database account.

Note: After you configure the source database parameters, click Test Connectivity next to Database Password to verify whether the configured parameters are valid. If the configured parameters are valid, the Passed message is displayed. If the Failed message is displayed, click Check next to Failed to modify the source database parameters based on the check results.

Destination Database

Instance Type

The instance type of the destination database. Select RDS Instance.

Instance Region

The region in which the ApsaraDB RDS for PostgreSQL instance resides.

RDS Instance ID

The ID of the ApsaraDB RDS for PostgreSQL instance.

Database Name

The name of the destination database in the ApsaraDB RDS for PostgreSQL instance. The name can be different from the name of the source database in the Amazon Aurora PostgreSQL instance.

Note: You must create a database and a schema in the destination ApsaraDB RDS for PostgreSQL instance before you configure the data migration task. For more information, see the Preparation 2: Create a database and a schema in the destination ApsaraDB RDS for PostgreSQL instance section of this topic.

Database Account

The database account of the ApsaraDB RDS for PostgreSQL instance. For information about the permissions that are required for the account, see the Permissions required for database accounts section of this topic.

Database Password

The password of the database account.

Note: After you configure the destination database parameters, click Test Connectivity next to Database Password to verify whether the configured parameters are valid. If the configured parameters are valid, the Passed message is displayed. If the Failed message is displayed, click Check next to Failed to modify the destination database parameters based on the check results.

In the lower-right corner of the page, click Set Whitelist and Next.

If the source or destination database instance is an Alibaba Cloud database instance, such as an ApsaraDB RDS for MySQL or ApsaraDB for MongoDB instance, or is a self-managed database hosted on Elastic Compute Service (ECS), DTS automatically adds the CIDR blocks of DTS servers to the whitelist of the database instance or ECS security group rules. If the source or destination database is a self-managed database on data centers or is from other cloud service providers, you must manually add the CIDR blocks of DTS servers to allow DTS to access the database. For more information about the CIDR blocks of DTS servers, see the "CIDR blocks of DTS servers" section of the Add the CIDR blocks of DTS servers to the security settings of on-premises databases topic.

Warning: If the CIDR blocks of DTS servers are automatically or manually added to the whitelist of the database or instance, or to the ECS security group rules, security risks may arise. Therefore, before you use DTS to migrate data, you must understand and acknowledge the potential risks and take preventive measures, including but not limited to the following measures: enhance the security of your username and password, limit the ports that are exposed, authenticate API calls, regularly check the whitelist or ECS security group rules and forbid unauthorized CIDR blocks, or connect the database to DTS by using Express Connect, VPN Gateway, or Smart Access Gateway.

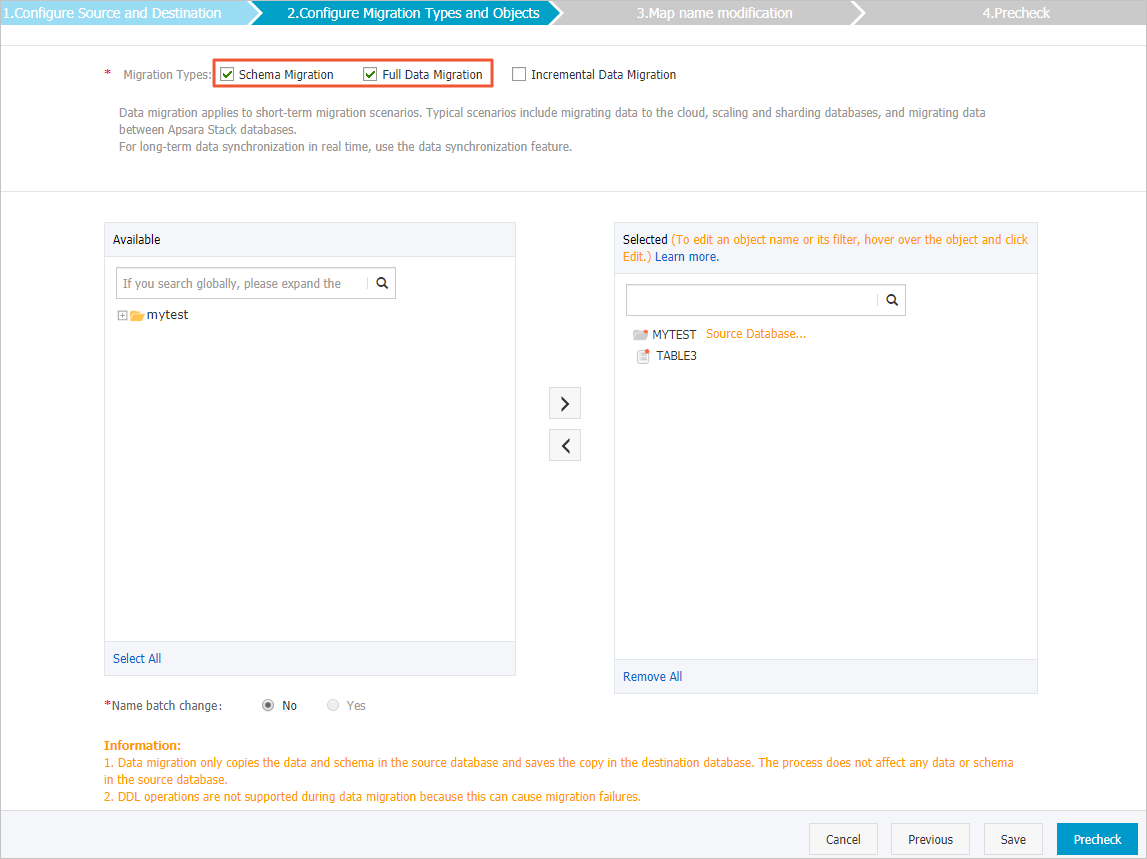

Select the objects to be migrated and the migration types.

Setting

Description

Select the migration types

To perform only full data migration, select both Schema Migration and Full Data Migration.

To migrate data without service downtime, select Schema Migration, Full Data Migration, and Incremental Data Migration.

Note: To ensure data consistency, do not write data to the Amazon Aurora PostgreSQL database during data migration if Incremental Data Migration is not selected.

Select the objects to be migrated

Select one or more objects from the Available section and click the icon to move the objects to the Selected section.

Note: You can select columns, tables, or databases as the objects to migrate.

By default, after an object is migrated, the object name in the destination database remains the same as the object name in the source database. You can use the object name mapping feature to rename the objects that are migrated to the destination database. For more information, see Object name mapping.

If you use the object name mapping feature to rename an object, other objects that are dependent on the object may fail to be migrated.

Specify whether to rename objects

You can use the object name mapping feature to rename the objects that are migrated to the destination instance. For more information, see Object name mapping.

Specify the retry time range for a failed connection to the source or destination database

By default, if DTS fails to connect to the source or destination database, DTS retries within the next 12 hours. You can specify a retry time range based on your needs. If DTS reconnects to the source and destination databases within the specified period of time, DTS resumes the data migration task. Otherwise, the migration task fails.

Note: When DTS retries a connection, you are charged for the DTS instance. We recommend that you specify the retry time range based on your business requirements. You can also release the DTS instance at the earliest opportunity after the source and destination instances are released.

In the lower-right corner of the page, click Precheck.

Note: Before you can start the data migration task, DTS performs a precheck. You can start the data migration task only after the task passes the precheck.

If the task fails to pass the precheck, you can click the icon next to each failed item to view details.

You can troubleshoot the issues based on the causes and run a precheck again.

If you do not need to troubleshoot the issues, you can ignore failed items and run a precheck again.

After the task passes the precheck, click Next.

In the Confirm Settings dialog box, specify the Channel Specification parameter and select Data Transmission Service (Pay-As-You-Go) Service Terms.

Click Buy and Start to start the data migration task.

Note: We recommend that you do not manually stop the task during full data migration. Otherwise, the data migrated to the destination database will be incomplete. You can wait until the data migration task automatically stops.

Switch your workloads to the ApsaraDB RDS for PostgreSQL instance.