The SQL Explorer and Audit module in Database Autonomy Service (DAS) lets you search, filter, and export SQL logs for connected database instances. Use it to trace database activity, investigate performance issues, and produce audit records for compliance or incident response.

If you have enabled DAS Enterprise Edition V3, the search feature is renamed to the audit feature. The Search tab becomes the Audit tab.

Prerequisites

Before you begin, make sure that:

The database instance is connected to DAS and is in the Normal Access state.

SQL Explorer and Audit is enabled for the instance. See the Enable SQL Explorer and Audit section in the "Overview" topic.

If you are a Resource Access Management (RAM) user, the AliyunHDMReadOnlyWithSQLLogArchiveAccess policy is attached to your RAM account. See the Use system policies to grant permissions to a RAM user section in the "How do I use DAS as a RAM user?" topic.

To grant a RAM user access using a custom policy instead, see the "Use custom policies to grant a RAM user the permissions to use the search and export features in the SQL Explorer and Audit module" section in the How do I use DAS as a RAM user? topic.

Supported databases and regions

Usage notes

SQL statement length limit: SQL logs capture up to 8,192 bytes per statement. If a statement exceeds this limit, the excess is not recorded. For ApsaraDB RDS for MySQL instances and PolarDB for MySQL clusters, configure the maximum length with the following parameters: Take note of the following:

If you set the parameter to a value of 8,192 bytes or less, that value is used as the upper limit. Because a prefix is added during data collection, the effective limit is slightly less than the specified value.

If you set the parameter to more than 8,192 bytes, the limit defaults to 8,192 bytes. Because a prefix is added during data collection, the effective limit is slightly less than 8,192 bytes.

MySQL version Parameter MySQL 5.6 or 5.7 loose_rds_audit_max_sql_sizeMySQL 8.0 loose_rds_audit_log_event_buffer_sizeStatus column: A Completed status means the SQL statement ran without error. This includes statements in transactions that were later rolled back — if the statement itself executed successfully, the status shows Completed.

IP address accuracy: Connections to ApsaraDB RDS for MySQL instances and PolarDB for MySQL clusters may be reused through connection pooling. The IP addresses and ports shown in the Logs section may differ from the actual client addresses. See Configure the connection pooling feature in the ApsaraDB RDS for MySQL documentation and Connection pools in the PolarDB for MySQL documentation.

Transaction ID queries: To query by transaction ID using advanced query, the database instance must meet these version requirements:

ApsaraDB RDS for MySQL: major version 8.0, minor version 20210311 or later, and the

loose_rds_audit_log_versionparameter set toMYSQL_V3. See Parameters supported by ApsaraDB RDS instances that run MySQL 8.0.PolarDB for MySQL: version 8.0.1.1.15 or later.

Query and export SQL logs

Log on to the DAS console.

In the left-side navigation pane, choose Intelligent O&M Center > Instance Monitoring.

Find the database instance and click its ID to open the instance details page.

In the left-side navigation pane, choose SQL Explorer and Audit > Search.

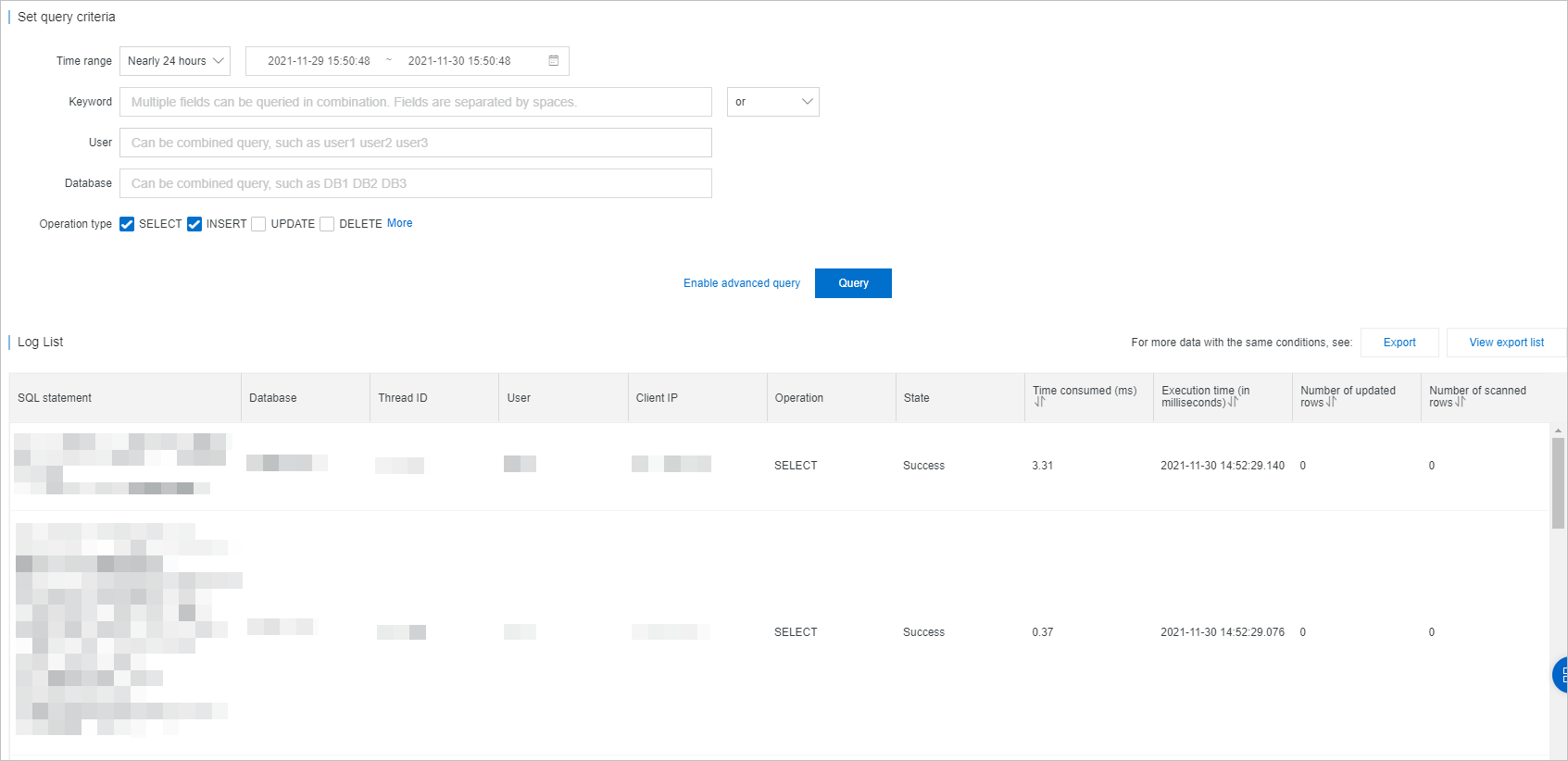

On the Search tab (or Audit tab for DAS Enterprise Edition V3), configure the query parameters: To apply additional filters such as transaction ID, click Enable Advanced Query.

NoteQuerying by transaction ID requires the version conditions described in Usage notes.

Parameter Description Time Range The period to query. Online queries support a maximum of 24 hours, and the start time must fall within the SQL Explorer data storage duration. For queries spanning more than 24 hours, export the logs instead. In hot-and-cold-storage deployments, both query and export are limited to 24 hours at a time. Keyword Keywords to filter SQL statements. Separate multiple keywords with spaces. Each keyword must be at least 4 characters. Fuzzy queries are not supported. User Database usernames to filter by. Separate multiple usernames with spaces. Example: user1 user2 user3.Database Database names to filter by. Separate multiple names with spaces. Example: DB1 DB2 DB3.Operation Type The types of SQL operations to include. You can select one or more operation types. Click Query. The matching SQL statements appear in the Logs section. Key columns in the Logs section:

Column Description Status Execution result of the SQL statement. Completed means the statement ran without error, including statements in transactions that were later rolled back. Scanned Rows Number of rows scanned on the InnoDB storage engine. Shows 0 if the result was served from the fast query cache.Database Database name from the session context. May differ from the database name in the SQL statement for cross-database queries or dynamic SQL. Client IP IP address of the connected client. In connection-pooled environments, this may not reflect the actual client address.

Export logs

To download a log file:

Click Export. The Export SQL Records dialog box opens.

Select the fields to include under Exported Fields.

Set the Export Time Range for the logs you want to download.

Each export can include up to 10 million records within a 7-day window.

Only data for which the audit log feature is enabled can be exported.

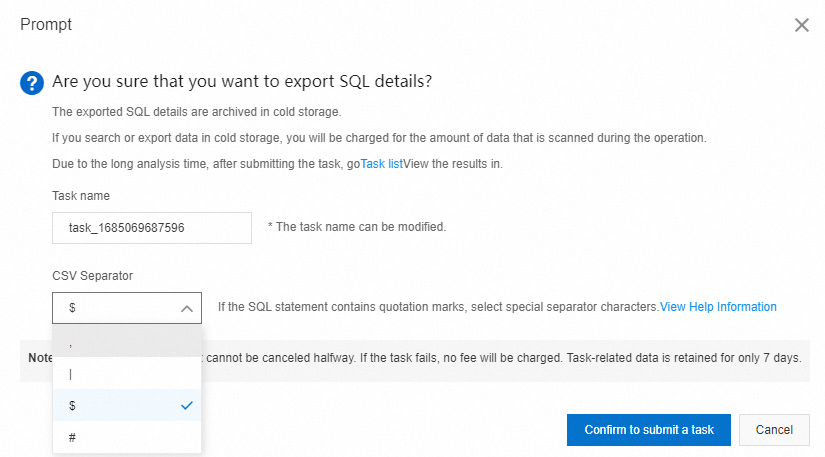

In hot-and-cold-storage deployments, querying or exporting cold-storage data creates an asynchronous task. Click Task list to track progress and view task history.

For hot-and-cold-storage deployments, the CSV Separator module lets you select a custom column delimiter before exporting. This prevents column-merging issues when opening the CSV file in spreadsheet tools. See Configure and open a CSV file.

Configure and open a CSV file

When you open an exported CSV file in a tool like Excel, all data may appear in a single column if the SQL logs contain the default comma delimiter. To avoid this, select a custom column delimiter when exporting — one that does not appear in your SQL logs, such as $.

This section uses Excel 2019 on Windows as an example.

After creating an export task, a dialog box prompts you to select a column delimiter. Choose a special character not present in your SQL logs, such as

$.

Open the exported CSV file in Excel.

Select the data you want to split into columns. In the top navigation bar, click Data. In the Data Tools section, click Text to Columns.

In the Convert Text to Columns Wizard, select Delimited in the Original data type section. Click Next.

In the Delimiters section, select Other and enter

$. Click Next. The Data preview section shows how the columns will appear.In the Column data format section, select General. Click Finish.

FAQ

API reference

The following API operations let you query SQL logs for ApsaraDB RDS for MySQL, ApsaraDB RDS for PostgreSQL, or ApsaraDB RDS for SQL Server instances.

| Operation | Description |

|---|---|

| DescribeSQLLogRecords | Queries audit logs generated by the SQL Explorer (SQL Audit) feature for an ApsaraDB RDS instance. |

| DescribeSQLLogFiles | Queries log files generated by the SQL Explorer (SQL Audit) feature for an ApsaraDB RDS instance. |