Container Compute Service (ACS) integrates with Simple Log Service (SLS) to collect container logs, including stdout/stderr and log files inside containers. This topic describes how to set up log collection for an ACS cluster using pod environment variables.

Prerequisites

Before you begin, ensure that you have:

- An ACS cluster (see Create a stateless application using a Deployment for cluster setup details)
- Access to the ACS console

Step 1: Enable the SLS component

The easiest way to enable log collection is to select Enable Log Service when you create an ACS cluster. If you skipped that option, install the alibaba-log-controller add-on manually:

- Log on to the ACS console. In the left-side navigation pane, click Clusters.
- On the Clusters page, find the target cluster and click its ID. In the left-side navigation pane, choose Operations > Add-ons.
- On the Logs and Monitoring tab, find alibaba-log-controller and click Install. In the Install alibaba-log-controller dialog box, click OK.

Upgrading an existing alibaba-log-controller installation resets all component parameters. Any customized configuration and environment variables are overwritten and must be reconfigured after the upgrade.

Step 2: Configure log collection

Configure log collection when you create an application. Two methods are available: the console wizard for quick setup, or a YAML file for repeatable, version-controlled configuration.

Use the console wizard

- Log on to the ACS console. In the left-side navigation pane, click Clusters.
- On the Clusters page, find the cluster and click its ID. In the left-side navigation pane, choose Workloads > Deployments.
- On the Deployments page, select a namespace from the Namespace drop-down list, then click Create from Image in the upper-left corner.

  This example uses a stateless application. The steps are the same for other workload types.
- On the Basic Information page, set Name, Replicas, and Type, then click Next to go to the Container page.

  Only log-related parameters are described here. For all other application parameters, see Create a stateless application using a Deployment.

-

In the Log section, configure log collection:

-

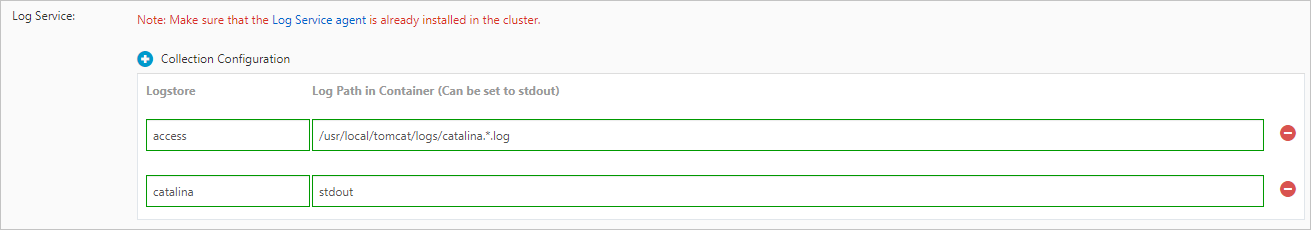

Click Collection Configuration to add a configuration entry. Each entry requires two fields: Each collection configuration corresponds to one Logstore. Logs are collected line by line by default.

-

Logstore: The name of the Logstore where collected logs are stored. If the Logstore does not exist, ACS creates it automatically in the SLS project associated with the cluster. The default log retention period is 180 days.

-

Log Path in Container (Can be set to stdout): The path to collect logs from. Set to

stdoutto collect both stdout and stderr. Set to a file path (for example,/usr/local/tomcat/logs/catalina.*.log) to collect log files.

-

-

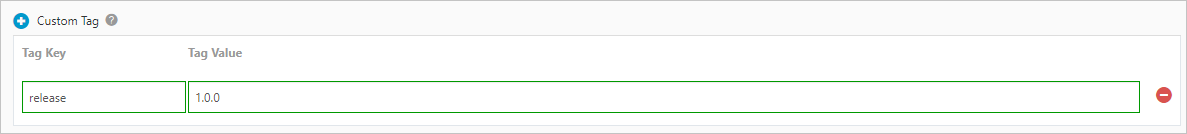

Click Custom Tag to add key-value tags appended to every collected log entry. Use tags to mark logs with metadata such as a version number.

-

-

Complete the remaining wizard steps. For details, see Create a stateless application using a Deployment.

Use a YAML file

Configure log collection by adding environment variables prefixed with aliyun_logs_ to the container spec.

- Log on to the ACS console. In the left-side navigation pane, click Clusters.
- On the Clusters page, find the cluster and click its name. In the left-side navigation pane, choose Workloads > Deployments.
- On the Deployments page, select a namespace from the Namespace drop-down list, then click Create from YAML in the upper-right corner.

  This example uses a stateless application. The steps are the same for other workload types.

- Configure the YAML template. The following example pod demonstrates collection configuration and custom tags:

  ```yaml
  apiVersion: v1
  kind: Pod
  metadata:
    name: my-demo
  spec:
    containers:
    - name: my-demo-app
      image: 'registry.cn-hangzhou.aliyuncs.com/log-service/docker-log-test:latest'
      env:
      # Log collection: send stdout to the "log-stdout" Logstore
      - name: aliyun_logs_log-stdout
        value: stdout
      # Log collection: collect /var/log/*.log files to the "log-varlog" Logstore
      - name: aliyun_logs_log-varlog
        value: /var/log/*.log
      # Custom tag: append tag1=v1 to all logs in the "mytag1" configuration
      - name: aliyun_logs_mytag1_tags
        value: tag1=v1
      command: ["sh", "-c"]
      args: ["echo 'Starting my demo app'; sleep 3600"]
  ```

  All log-related environment variables use the aliyun_logs_ prefix. The example above creates two collection configurations:
  - aliyun_logs_log-stdout: creates a Logstore named log-stdout and collects stdout/stderr from the container.
  - aliyun_logs_log-varlog: creates a Logstore named log-varlog and collects /var/log/*.log files.

  Custom tags use the aliyun_logs_{key}_tags format. The {key} value can be any name that does not contain an underscore (_).

  Important: For any collection path other than stdout, add a corresponding volumeMounts entry to the container spec. The example above collects /var/log/*.log, so a volumeMounts entry for /var/log is required.

  For advanced log collection requirements, see Step 3: Advanced parameters.
- Click Create to deploy the configuration to the cluster.
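
The YAML example in this step uses a bare pod and leaves out the volume mount that the Important note calls for. The following is a minimal sketch of the same collection configuration written as a Deployment with that mount in place. The Deployment name my-demo, the app: my-demo label, the volume name app-logs, and the choice of an emptyDir volume are illustrative assumptions, not values required by ACS or SLS.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-demo                # hypothetical name
spec:
  replicas: 1
  selector:
    matchLabels:
      app: my-demo             # hypothetical label
  template:
    metadata:
      labels:
        app: my-demo
    spec:
      containers:
      - name: my-demo-app
        image: 'registry.cn-hangzhou.aliyuncs.com/log-service/docker-log-test:latest'
        env:
        # Collect stdout and stderr into the "log-stdout" Logstore
        - name: aliyun_logs_log-stdout
          value: stdout
        # Collect /var/log/*.log into the "log-varlog" Logstore
        - name: aliyun_logs_log-varlog
          value: /var/log/*.log
        volumeMounts:
        # Mount that covers the directory of the file collection path above
        - name: app-logs       # hypothetical volume name
          mountPath: /var/log
        command: ["sh", "-c"]
        args: ["echo 'Starting my demo app'; sleep 3600"]
      volumes:
      # Assumption: an emptyDir volume is used here purely for illustration
      - name: app-logs
        emptyDir: {}
```

Pasting a manifest like this into the Create from YAML editor should follow the same flow as the pod example. Only the env and volumeMounts entries relate to log collection; the rest is ordinary Deployment boilerplate.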

Step 3: Advanced parameters

Use environment variables to fine-tune log collection behavior. All parameters except aliyun_logs_{key} are optional.

| Environment variable | Description | Default | Example |
|---|---|---|---|
| aliyun_logs_{key} | Required. Sets the collection path. Use stdout for container output, or a file path for log files. {key} can contain only lowercase letters, digits, and hyphens (-). Unless aliyun_logs_{key}_logstore is set, {key} is also used as the Logstore name. {key} must be unique in the cluster. | None | - name: aliyun_logs_access-log<br> value: /var/log/nginx/access.log |
| aliyun_logs_{key}_tags | Adds tags to collected logs. Format: {tag-key}={tag-value}. | None | - name: aliyun_logs_catalina_tags<br> value: app=catalina |
| aliyun_logs_{key}_project | Specifies the SLS project. The project must be in the same region as the SLS component. | Project selected during installation | - name: aliyun_logs_catalina_project<br> value: my-k8s-project |
| aliyun_logs_{key}_logstore | Specifies the Logstore name. | {key} | - name: aliyun_logs_catalina_logstore<br> value: my-logstore |
| aliyun_logs_{key}_shard | Number of shards for a new Logstore. Range: 1–10. No effect if the Logstore already exists. | 2 | - name: aliyun_logs_catalina_shard<br> value: "4" |
| aliyun_logs_{key}_ttl | Log retention period in days. Range: 1–3650. Set to 3650 for permanent retention. No effect if the Logstore already exists. | 90 | - name: aliyun_logs_catalina_ttl<br> value: "3650" |
| aliyun_logs_{key}_machinegroup | Machine group in which the application is deployed. | Machine group in which alibaba-log-controller is deployed | - name: aliyun_logs_catalina_machinegroup<br> value: my-machine-group |
| aliyun_logs_{key}_logstoremode | Logstore type: standard or query. No effect if the Logstore already exists. | standard | - name: aliyun_logs_catalina_logstoremode<br> value: query |

Logstore modes:

| Mode | Best for | SQL analysis | Index cost |
|---|---|---|---|
| standard | Real-time monitoring, interactive analysis, comprehensive observability | Yes | Standard |
| query | Large data volumes, long retention periods, query-only workloads (weeks or months of logs) | No | ~50% of standard |

The default collection mode is simple mode, which collects logs without parsing. To parse log data, configure parsing rules in the SLS console after setup.
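
As a sketch of how the optional parameters combine, the following container env fragment defines one collection configuration with the key access-log and overrides its Logstore name, shard count, retention period, and Logstore mode. The Logstore name nginx-access, the tag env=test, and the specific numbers are placeholders chosen for illustration. The numeric values are quoted because Kubernetes expects env values to be strings.

```yaml
env:
# Required: collection path for the configuration keyed "access-log"
- name: aliyun_logs_access-log
  value: /var/log/nginx/access.log
# Optional: tag appended to every log entry of this configuration (placeholder tag)
- name: aliyun_logs_access-log_tags
  value: env=test
# Optional: Logstore name; defaults to the key ("access-log") when omitted
- name: aliyun_logs_access-log_logstore
  value: nginx-access
# Optional: shard count for a newly created Logstore (range 1-10)
- name: aliyun_logs_access-log_shard
  value: "4"
# Optional: retention period in days for a newly created Logstore
- name: aliyun_logs_access-log_ttl
  value: "30"
# Optional: query mode gives up SQL analysis in exchange for a lower index cost
- name: aliyun_logs_access-log_logstoremode
  value: query
```

Because the path here is a file path rather than stdout, the container would also need a volumeMounts entry for /var/log/nginx. The shard, ttl, and logstoremode settings apply only when the Logstore is created and have no effect on an existing Logstore.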

Special use case 1: Collect from multiple applications into the same Logstore

Point multiple applications to the same Logstore using aliyun_logs_{key}_logstore. Each application needs a unique {key}, but all can write to a shared Logstore.

The following example routes stdout from two applications to a single stdout-logstore:

Application 1:

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: my-demo-1
spec:
  containers:
  - name: my-demo-app
    image: 'registry.cn-hangzhou.aliyuncs.com/log-service/docker-log-test:latest'
    env:
    - name: aliyun_logs_app1-stdout    # unique key for app1
      value: stdout
    - name: aliyun_logs_app1-stdout_logstore
      value: stdout-logstore           # shared destination Logstore
    command: ["sh", "-c"]
    args: ["echo 'Starting my demo app'; sleep 3600"]
```

Application 2:

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: my-demo-2
spec:
  containers:
  - name: my-demo-app
    image: 'registry.cn-hangzhou.aliyuncs.com/log-service/docker-log-test:latest'
    env:
    - name: aliyun_logs_app2-stdout    # unique key for app2
      value: stdout
    - name: aliyun_logs_app2-stdout_logstore
      value: stdout-logstore           # same shared destination Logstore
    command: ["sh", "-c"]
    args: ["echo 'Starting my demo app'; sleep 3600"]
```

Special use case 2: Route logs from different applications to different projects

To send logs from different applications to separate SLS projects:

- In each target project, create a machine group. Set the machine group type to Custom ID and set the ID to k8s-group-{cluster-id}, where {cluster-id} is your ACS cluster ID. You can also use a custom machine group name.
- For each application, configure the project, Logstore, and machine group using environment variables. Applications in the same cluster can share a machine group.

  Application 1 (logs to app1-project):

  ```yaml
  apiVersion: v1
  kind: Pod
  metadata:
    name: my-demo-1
  spec:
    containers:
    - name: my-demo-app
      image: 'registry.cn-hangzhou.aliyuncs.com/log-service/docker-log-test:latest'
      env:
      - name: aliyun_logs_app1-stdout
        value: stdout
      - name: aliyun_logs_app1-stdout_project
        value: app1-project
      - name: aliyun_logs_app1-stdout_logstore
        value: app1-logstore
      - name: aliyun_logs_app1-stdout_machinegroup
        value: app1-machine-group
      command: ["sh", "-c"]
      args: ["echo 'Starting my demo app'; sleep 3600"]
  ```

  Application 2 (logs to app2-project):

  ```yaml
  apiVersion: v1
  kind: Pod
  metadata:
    name: my-demo-2
  spec:
    containers:
    - name: my-demo-app
      image: 'registry.cn-hangzhou.aliyuncs.com/log-service/docker-log-test:latest'
      env:
      - name: aliyun_logs_app2-stdout
        value: stdout
      - name: aliyun_logs_app2-stdout_project
        value: app2-project
      - name: aliyun_logs_app2-stdout_logstore
        value: app2-logstore
      - name: aliyun_logs_app2-stdout_machinegroup
        value: app1-machine-group    # apps in the same cluster can share a machine group
      command: ["sh", "-c"]
      args: ["echo 'Starting my demo app'; sleep 3600"]
  ```

Step 4: View logs in the SLS console

After the application is running, logs are automatically collected and stored in SLS.

- Log on to the SLS console.
- In the Projects section, select the project for your ACS cluster. The default project name is k8s-log-{ACS cluster ID}.
- Go to the Logstores tab. Find the Logstore configured for log collection, hover over its name, and click the icon. Then click Search & Analyze.

If log entries appear in the query results with custom tags visible in the log fields, the configuration is working correctly.

What's next

- For all ACS log information, see the SLS console.
- If logs are not appearing, see What do I do if errors occur when I use Logtail to collect logs?