In Terway network mode, each Pod gets its IP address from a vSwitch through an elastic network interface (ENI). When a vSwitch runs out of IP addresses, new Pods can't start. This topic describes how to add a vSwitch to expand the IP address pool for your ACK cluster.

You might need this if:

A vSwitch is exhausted and Pods are stuck in ContainerCreating.

You're scaling out nodes and need more IP capacity in a specific zone.

You want to spread IP usage across multiple vSwitches for better balance.

Prerequisites

Before you begin, ensure that you have:

An ACK cluster running in Terway network mode

Access to the VPC console and the Container Service Management Console

Sufficient permissions to edit Terway add-on configuration or edit ConfigMaps in the kube-system namespace

Limitations

The vSwitch you add must be in the same zone as your nodes. If no vSwitch in the list covers a node's zone, Terway falls back to the vSwitch associated with the node's primary ENI.

You cannot change the vSwitch configuration for an existing ENI. After you update the Pod vSwitch configuration, add new nodes or perform a rolling restart to apply the change.

Detect insufficient vSwitch IP resources

When a vSwitch runs out of IP addresses, Pods that need a new ENI fail to start and stay in ContainerCreating.

To confirm the cause, check the logs of the Terway Pod that runs on the node where the affected Pod is scheduled:

kubectl logs --tail=100 -f terway-eniip-***** -n kube-system -c terway

If the output contains an error like the following, the vSwitch has no available IP addresses:

time="20**-03-17T07:03:40Z" level=warning msg="Assign private ip address failed: Aliyun API Error: RequestId: 2095E971-E473-4BA0-853F-0C41CF52651D Status Code: 403 Code: InvalidVSwitchId.IpNotEnough Message: The specified VSwitch \"vsw-***\" has not enough IpAddress., retrying"

Also check the Available IP Addresses count for the vSwitch in the VPC console. Go to vSwitch in the left navigation pane. If the count is 0, the vSwitch has no IP addresses left.
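As a rough aid for the log check above, the following sketch filters Terway log text for the InvalidVSwitchId.IpNotEnough error code and lists the vSwitch IDs it names. The function name exhausted_vswitches is hypothetical, and the pattern assumes the log line format shown in the sample; other Terway versions may format the message differently.

```shell
# Print each distinct vSwitch ID that the log reports as out of IP addresses.
# Reads Terway log text on stdin. Only lines carrying the error code from the
# sample log are considered.
exhausted_vswitches() {
  grep -F 'InvalidVSwitchId.IpNotEnough' \
    | grep -o 'vsw-[A-Za-z0-9*]*' \
    | sort -u
}

# Hypothetical usage (the Pod name is a placeholder, as in the command above):
#   kubectl logs --tail=500 terway-eniip-***** -n kube-system -c terway | exhausted_vswitches
```

An empty result only means the error did not appear in the log lines that were read, so the Available IP Addresses count in the VPC console remains the authoritative check.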

When changes take effect

Terway applies the new vSwitch configuration only when it creates a new ENI. Changes do not apply to existing ENIs.

Existing nodes: The new configuration does not take effect on a node while either of the following conditions holds:

An ENI on the node is still in use (has running Pods or is a trunk ENI).

The node has reached its ENI limit (determined by the instance type).

New nodes: Nodes added to the cluster after the configuration update use the new vSwitch immediately.

Modify the vSwitch for Pods

Console method

Terway v1.4.4 and later support the console method. For earlier versions, use the kubectl method.

Create a new vSwitch in the VPC console. The vSwitch must be in the same zone as the nodes that need more IP addresses. For instructions, see Create and manage vSwitches.

Note: Pod density tends to grow over time. Create vSwitches for Pods with a prefix length of /19 or shorter (a /19 block contains 8,192 IP addresses) so that each CIDR block provides at least 8,192 IP addresses.

Log on to the Container Service Management Console. In the left navigation pane, click Clusters.

On the Clusters page, click the name of your cluster. In the left navigation pane, click Add-ons.

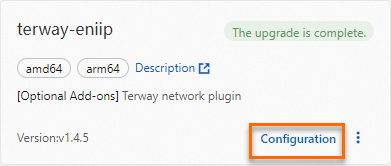

On the Add-ons page, click the Network tab. Find the Terway add-on and click Upgrade. If the Upgrade button is not shown, Terway is already at the latest version. After the upgrade completes, click Configuration.

Note: Changes made to deployed add-ons through other methods are overwritten when the add-on is redeployed.

On the Parameters page for terway-eniip, select the new vSwitch in the PodVswitchId section. Leave all other parameters at their default values.

Click OK.
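The sizing guidance in the note for the first step can be checked with simple arithmetic: an IPv4 block with prefix length p contains 2^(32 - p) addresses. The sketch below is only an illustration, and the helper name cidr_size is hypothetical. It counts every address in the block, so the number of addresses actually assignable to Pods is somewhat lower once system-reserved addresses are subtracted.

```shell
# Number of addresses in an IPv4 CIDR block with the given prefix length.
cidr_size() {
  prefix=$1
  echo $(( 1 << (32 - prefix) ))
}

cidr_size 19   # prints 8192
cidr_size 24   # prints 256
```

A /24 vSwitch therefore holds 32 times fewer addresses than a /19 one, which is why small vSwitches are exhausted quickly as Pod density grows.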

kubectl method

Use this method if Terway is earlier than v1.4.4, or if you prefer to manage configuration through kubectl.

Create a new vSwitch in the VPC console. The vSwitch must be in the same zone as the nodes that need more IP addresses. For instructions, see Create and manage vSwitches.

Note: Pod density tends to grow over time. Create vSwitches for Pods with a prefix length of /19 or shorter (a /19 block contains 8,192 IP addresses) so that each CIDR block provides at least 8,192 IP addresses.

Edit the Terway ConfigMap to add the new vSwitch:

kubectl edit cm eni-config -n kube-system

Add the new vSwitch ID to the vswitches field. The following example adds vsw-BBB alongside the existing vSwitch vsw-AAA:

eni_conf: |
  {
    "version": "1",
    "max_pool_size": 25,
    "min_pool_size": 10,
    "vswitches": {"cn-shanghai-f":["vsw-AAA", "vsw-BBB"]},
    "service_cidr": "172.21.0.0/20",
    "security_group": "sg-CCC"
  }

Restart all Terway pods so they pick up the new configuration. The system automatically recreates them.

For the ENI multi-IP scenario, run the following command to delete all Terway pods:

kubectl delete -n kube-system pod -l app=terway-eniip

For the ENI single-IP scenario, run the following command to delete all Terway pods:

kubectl delete -n kube-system pod -l app=terway-eni

Verify that all Terway pods are running:

kubectl get pod -n kube-system | grep terway

Create a test Pod and confirm it receives an IP address from the new vSwitch.

Note: After you update the vSwitch configuration, the new settings apply only to newly created ENIs. Existing ENIs keep the original vSwitch. To apply the new settings to all nodes, perform a rolling restart.
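Before restarting the Terway pods, it can help to confirm that the edit to eni-config was saved. The sketch below checks whether a given vSwitch ID appears in the eni_conf text. The function name config_has_vswitch is hypothetical, and the usage line assumes that eni_conf is stored under the ConfigMap's data field, which is how ConfigMap keys are normally laid out.

```shell
# Succeeds if the eni_conf text on stdin contains the given vSwitch ID
# as a quoted JSON string, for example "vsw-BBB".
config_has_vswitch() {
  grep -qF "\"$1\""
}

# Hypothetical usage against a live cluster:
#   kubectl get cm eni-config -n kube-system -o jsonpath='{.data.eni_conf}' \
#     | config_has_vswitch vsw-BBB && echo "vsw-BBB is configured"
```

This is a plain text match, so it confirms only that the ID is present somewhere in the configuration, not that it is listed under the intended zone.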

vSwitch selection strategies

When multiple vSwitches are configured for the same zone, Terway uses a selection strategy to decide which vSwitch to use for each new ENI. Configure the strategy using the vswitch_selection_policy parameter. For configuration details, see Customize Terway configuration parameters.

Default: ordered

Terway sorts vSwitches by remaining IP count in descending order and always picks the one with the most available IPs. This works well in most cases.

Edge case: During rapid scaling—when many nodes or replicas are added at the same time—multiple ENI creation requests run the selection logic simultaneously. Because the IP counts haven't changed yet, all requests may pick the same vSwitch, causing uneven IP distribution.

Alternative: random

Terway picks a vSwitch at random for each new ENI. This distributes IP usage more evenly across vSwitches and prevents any single vSwitch from becoming a hotspot during burst scaling.
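The difference between the two strategies can be illustrated with a small model. This is not Terway's implementation, only a sketch of the behavior described above: each request reads a snapshot of "vSwitch ID, free IP count" pairs and picks one. The function names, the snapshot format, and the seed argument are all hypothetical.

```shell
# Input on stdin: one "vswitch-id free-ip-count" pair per line (a snapshot).

# ordered: pick the vSwitch with the most free IPs in the snapshot.
pick_ordered() {
  sort -k2,2nr | head -n 1 | cut -d' ' -f1
}

# random: pick any vSwitch from the snapshot, ignoring the counts.
# The seed is passed in explicitly because awk's default clock-based seed
# has one-second resolution and would repeat within a burst.
pick_random() {
  awk -v seed="$1" 'BEGIN { srand(seed) }
       { ids[NR] = $1 }
       END { if (NR) print ids[int(rand() * NR) + 1] }'
}

snapshot='vsw-AAA 120
vsw-BBB 8000'

# Three concurrent requests that all read the same, not-yet-updated snapshot
# make the same ordered choice: this loop prints vsw-BBB three times.
for i in 1 2 3; do
  printf '%s\n' "$snapshot" | pick_ordered
done
```

Calling pick_random with a different seed for each request would instead spread the choices across both vSwitches over many requests, at the cost of sometimes picking the vSwitch with fewer free addresses.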

Isolate vSwitches by node pool

For strict control over IP allocation, configure node-level network settings for each node pool and bind a unique Pod vSwitch to each node pool per zone. This creates a one-to-one mapping between vSwitches and node pools, eliminating IP allocation contention.

FAQ

Pods lost Internet access after I added a vSwitch

The new vSwitch doesn't have a SNAT rule, so Pods using IP addresses from that vSwitch can't reach the Internet. Configure a public SNAT rule for the new vSwitch using NAT Gateway. For instructions, see Enable Internet access for your cluster.

A Pod's IP address is outside the configured vSwitch CIDR block

Pod IP addresses come from ENIs, and you can only specify a vSwitch when an ENI is created. Once an ENI exists, all Pods on it get IPs from that ENI's vSwitch—regardless of what you configure later.

This typically happens when:

A node was previously used in another cluster and still has leftover ENIs from that cluster (the node was removed without draining its Pods first).

You updated the vSwitch configuration, but existing ENIs on the node still use the old vSwitch.

Add new nodes or perform a rolling restart of existing nodes so that fresh ENIs are created with the updated configuration.
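To check whether a particular Pod IP falls inside a vSwitch CIDR block, the comparison can be done with integer arithmetic. The following is a minimal sketch for IPv4 only. The function names are hypothetical, and the addresses in the usage comment are made-up examples, not values from a real cluster.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split the address into its four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeeds if the IP ($1) lies inside the CIDR block ($2, e.g. 10.0.0.0/19).
ip_in_cidr() {
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  prefix=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
}

# Hypothetical usage: the Pod IP can be read with
#   kubectl get pod <pod-name> -o jsonpath='{.status.podIP}'
# and the CIDR block taken from the vSwitch details in the VPC console.
#   ip_in_cidr 10.0.31.7 10.0.0.0/19 && echo "inside"
```

If the check fails for a Pod on an existing node, the ENI behind that Pod most likely predates the configuration change or was left over from earlier use of the node, as described above.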

IP usage is unbalanced across vSwitches

This is caused by the ordered strategy during rapid scaling. When many ENIs are created at the same time, all requests see the same vSwitch as having the most IPs and pick it, exhausting that vSwitch while others remain underused.

Depending on your situation:

For existing nodes with near-exhausted vSwitches: Perform a rolling restart of some nodes to release their ENIs. Releasing ENIs returns IPs to the vSwitch, helping rebalance usage.

For new nodes or future deployments: Change vswitch_selection_policy from ordered to random. This prevents hotspots by randomly distributing ENIs across vSwitches. For details, see Customize Terway configuration parameters.

For strict isolation: Use node-level network configuration to assign exactly one Pod vSwitch per zone per node pool.