Schema Registry provides a centralized service for managing and validating the structure of messages sent through Kafka topics. By registering schemas, producers and consumers enforce a shared data contract, which keeps malformed messages out of the pipeline and catches compatibility issues before they reach production.

This guide covers the end-to-end workflow on Linux: clone the Confluent sample project, create a topic, enable schema validation, register an Avro schema, and produce and consume messages with schema-aware serializers.

Before you begin

Make sure that you have the following:

An ApsaraMQ for Confluent instance. See Purchase and deploy instances.

RBAC permissions to access the Kafka and Schema Registry clusters. See RBAC authorization.

Java 8 or 11. For supported versions, see Java.

Maven 3.8 or later. See Maven downloads.

Step 1: Prepare the sample project

Clone the Confluent examples repository and switch to the 7.9.0-post branch:

git clone https://github.com/confluentinc/examples.git

cd examples/clients/avro

git checkout 7.9.0-post

Configure client properties

Create $HOME/.confluent/java.config with the following content:

# Kafka connection

bootstrap.servers={{ BROKER_ENDPOINT }}

security.protocol=SASL_SSL

sasl.jaas.config=org.apache.kafka.common.security.plain.PlainLoginModule required username='{{ CLUSTER_API_KEY }}' password='{{ CLUSTER_API_SECRET }}';

sasl.mechanism=PLAIN

# Required for correctness in Apache Kafka clients prior to 2.6

client.dns.lookup=use_all_dns_ips

# Recommended for higher availability in clients prior to 3.0

session.timeout.ms=45000

# Prevent data loss

acks=all

# Schema Registry connection

schema.registry.url=https://{{ SR_ENDPOINT }}

basic.auth.credentials.source=USER_INFO

basic.auth.user.info={{ SR_API_KEY }}:{{ SR_API_SECRET }}

Replace the placeholders with your actual values:

| Placeholder | Description | Where to find it | Example |
|---|---|---|---|
| {{ BROKER_ENDPOINT }} | Kafka service endpoint | Access Links and Ports page in the ApsaraMQ for Confluent console. To use the public endpoint, enable Internet access first. For security settings, see Configure network access and security settings. | pub-kafka-xxxxxxxxxxx.csp.aliyuncs.com:9092 |
| {{ CLUSTER_API_KEY }} | LDAP username for the Kafka cluster | Users page in the ApsaraMQ for Confluent console. For testing, use the root account. For production, create a dedicated user and grant Kafka cluster permissions. | root |
| {{ CLUSTER_API_SECRET }} | LDAP password for the Kafka cluster | Same as above | ****** |
| {{ SR_ENDPOINT }} | Schema Registry service endpoint | Access Links and Ports page in the ApsaraMQ for Confluent console. To use the public endpoint, enable Internet access first. For security settings, see Configure network access and security settings. | pub-schemaregistry-xxxxxxxxxxx.csp.aliyuncs.com:443 |
| {{ SR_API_KEY }} | LDAP username for Schema Registry | Users page in the ApsaraMQ for Confluent console. For testing, use the root account. For production, create a dedicated user and grant Schema Registry permissions. | root |
| {{ SR_API_SECRET }} | LDAP password for Schema Registry | Same as above | ****** |
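Before running the samples, it can help to confirm that every placeholder has actually been replaced. The following is a minimal sketch, assuming the file lives at $HOME/.confluent/java.config as described above. The class name ClientConfigCheck and its methods are hypothetical and are not part of the Confluent examples repository; the list of required keys is taken from the listing above.

```java
import java.io.IOException;
import java.io.Reader;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

/** Hypothetical helper: checks java.config for missing keys and leftover placeholders. */
public final class ClientConfigCheck {

    // Keys copied from the java.config listing in this guide.
    public static final String[] REQUIRED_KEYS = {
        "bootstrap.servers", "security.protocol", "sasl.mechanism",
        "sasl.jaas.config", "schema.registry.url",
        "basic.auth.credentials.source", "basic.auth.user.info"
    };

    /** Returns one message per missing key or per value that still contains "{{". */
    public static List<String> findProblems(Properties props) {
        List<String> problems = new ArrayList<>();
        for (String key : REQUIRED_KEYS) {
            String value = props.getProperty(key);
            if (value == null || value.trim().isEmpty()) {
                problems.add("missing: " + key);
            } else if (value.contains("{{")) {
                problems.add("placeholder not replaced: " + key);
            }
        }
        return problems;
    }

    public static void main(String[] args) throws IOException {
        // Default path is the one used in this guide; pass another path as the first argument.
        String path = args.length > 0
            ? args[0]
            : System.getProperty("user.home") + "/.confluent/java.config";
        Properties props = new Properties();
        try (Reader reader = Files.newBufferedReader(Paths.get(path))) {
            props.load(reader);
        }
        List<String> problems = findProblems(props);
        if (problems.isEmpty()) {
            System.out.println("Configuration looks complete.");
        } else {
            problems.forEach(System.out::println);
        }
    }
}
```

The check only looks at whether values are present and free of template markers. It does not contact the cluster, so a wrong endpoint or password still surfaces only when the producer or consumer runs.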

Step 2: Create a topic

The sample code uses a topic named transactions. Create this topic in Control Center, or replace transactions in the code with your own topic name.

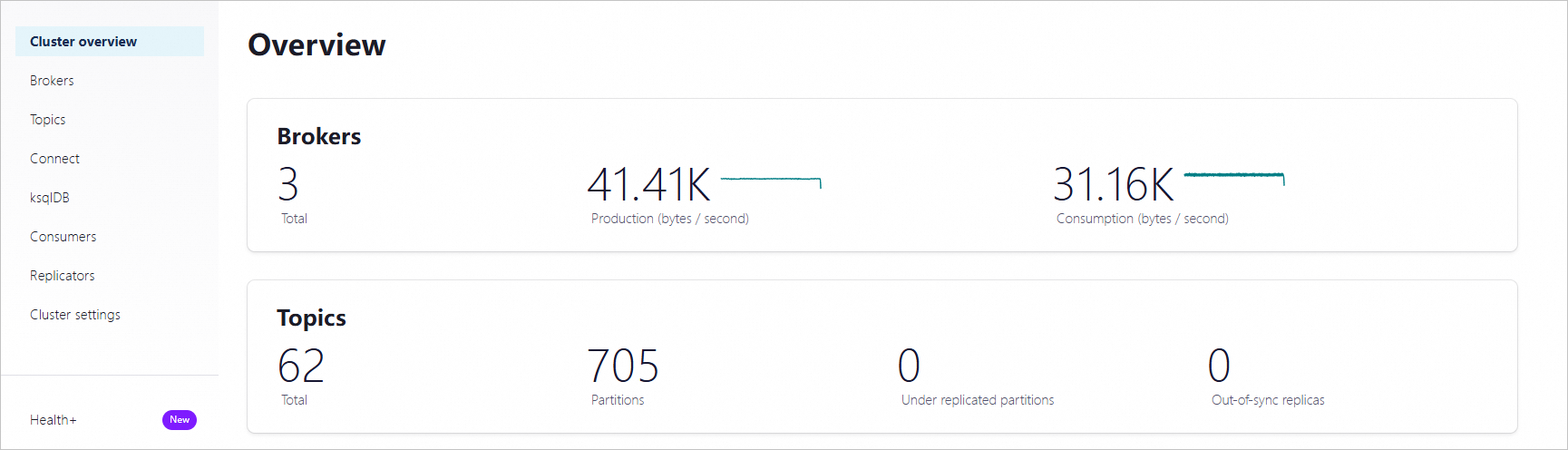

Log on to Control Center. On the Home page, click the controlcenter.clusterk card to open the Cluster overview page.

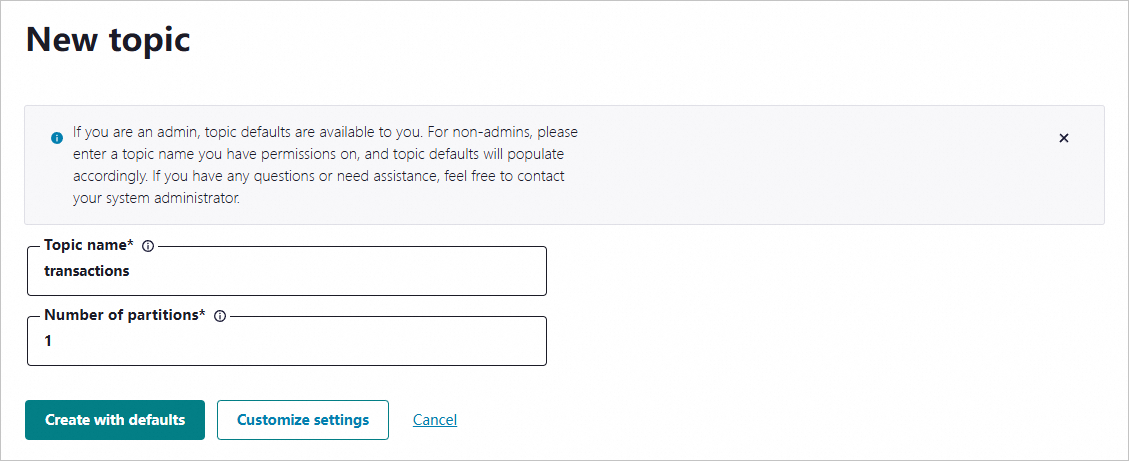

In the left-side navigation pane, click Topics. In the upper-right corner, click + Add topic.

On the New topic page, enter the topic name and partition count, then click Create with defaults.

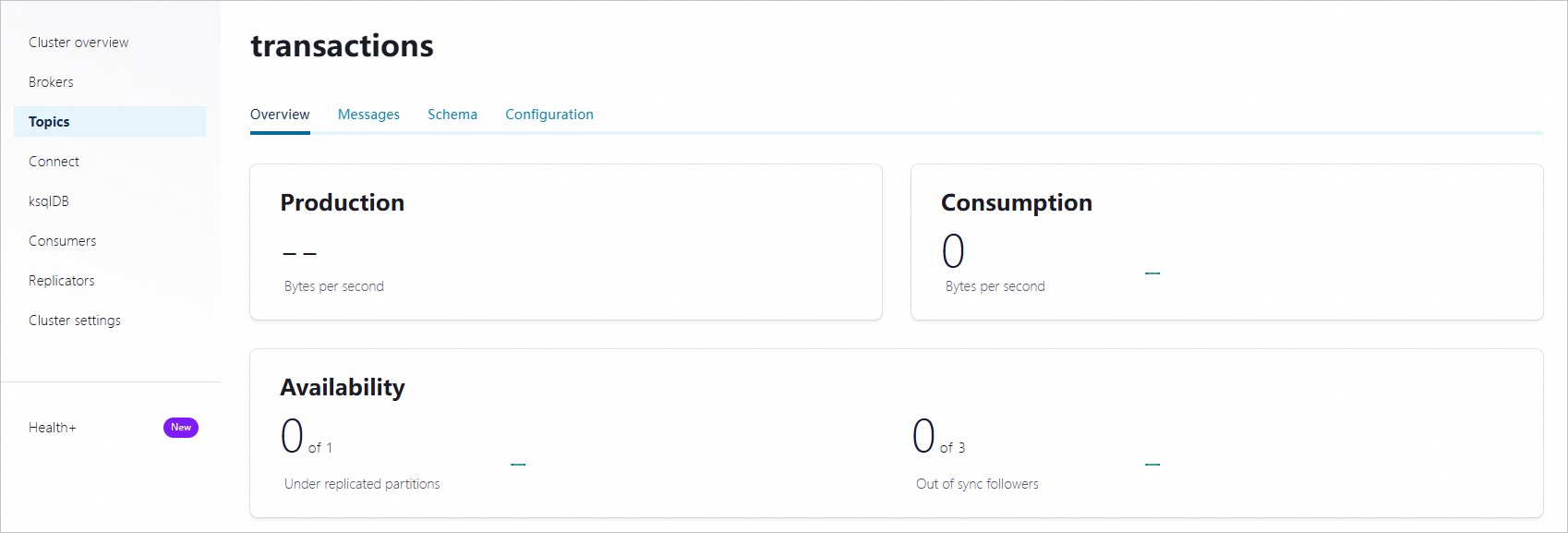

After the topic is created, open the topic details page to verify the configuration.

Step 3: Enable schema validation

When schema validation is enabled, the broker itself rejects any message that does not conform to the schema registered for the topic, so producers and consumers alike work only with valid data.

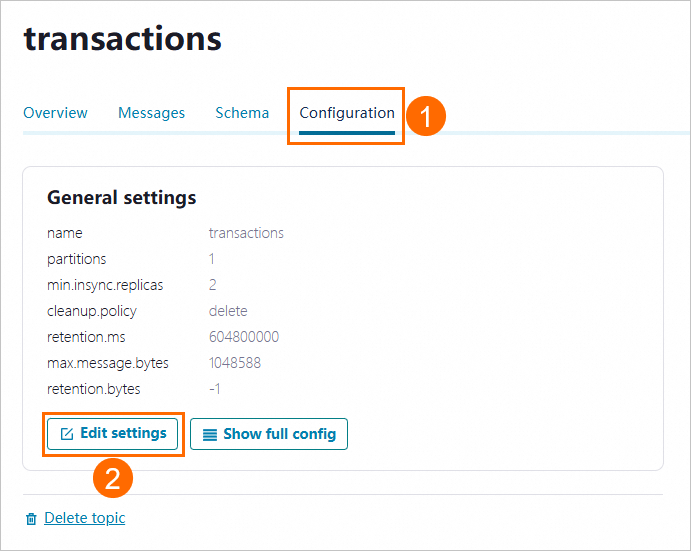

On the topic details page, click the Configuration tab, then click Edit settings.

Click Switch to expert mode.

Set confluent_value_schema_validation to true, then click Save changes.
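As background on what the broker can inspect: Confluent's schema-aware serializers prefix every serialized value with a small header made of one magic byte (0) followed by a 4-byte big-endian schema ID, and broker-side validation relies on that ID referring to a schema registered for the topic's subject. The sketch below illustrates the header layout only. The class name WireFormatHeader is hypothetical, and the sample project never handles these bytes directly because KafkaAvroSerializer writes them.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

/** Hypothetical illustration of the Confluent wire-format header. */
public final class WireFormatHeader {

    private static final byte MAGIC_BYTE = 0x0;
    private static final int HEADER_LENGTH = 5; // 1 magic byte + 4-byte schema ID

    /** Builds magic byte + big-endian schema ID + payload. */
    public static byte[] frame(int schemaId, byte[] avroPayload) {
        return ByteBuffer.allocate(HEADER_LENGTH + avroPayload.length)
            .put(MAGIC_BYTE)
            .putInt(schemaId) // ByteBuffer writes big-endian by default
            .put(avroPayload)
            .array();
    }

    /** Returns the embedded schema ID, or -1 if the value has no valid header. */
    public static int schemaIdOf(byte[] value) {
        if (value == null || value.length < HEADER_LENGTH || value[0] != MAGIC_BYTE) {
            return -1;
        }
        return ByteBuffer.wrap(value, 1, 4).getInt();
    }

    public static void main(String[] args) {
        byte[] framed = frame(42, new byte[] {1, 2, 3});
        System.out.println("schema ID in framed value: " + schemaIdOf(framed));

        // A value written by a plain string serializer carries no header.
        byte[] plain = "not-avro".getBytes(StandardCharsets.UTF_8);
        System.out.println("schema ID in plain value: " + schemaIdOf(plain));
    }
}
```

This is why a message produced with a plain string or byte-array serializer is expected to be rejected once validation is on: it carries no schema ID for the broker to look up.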

Step 4: Register an Avro schema

The sample project includes an Avro schema file (Payment.avsc) that defines a payment record with two fields: id (string) and amount (double).

View the schema file:

cat src/main/resources/avro/io/confluent/examples/clients/basicavro/Payment.avsc

Output:

{

"namespace": "io.confluent.examples.clients.basicavro",

"type": "record",

"name": "Payment",

"fields": [

{"name": "id", "type": "string"},

{"name": "amount", "type": "double"}

]

}

Register this schema in Control Center:

On the topic details page, click the Schema tab, then click Set a schema.

Select Avro as the format, paste the schema content into the code editor, and click Create.
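The same registration can also be done through the Schema Registry REST API, which accepts a POST to /subjects/{subject}/versions with the schema wrapped in a JSON envelope and the content type application/vnd.schemaregistry.v1+json. The sketch below only prepares the pieces of such a request and sends nothing. It assumes the default subject naming strategy, under which the value schema for topic transactions is registered as transactions-value, and HTTP basic authentication with the Schema Registry username and password. The class name SchemaRegistrationRequest and the credentials shown are hypothetical.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

/** Hypothetical helper that prepares a Schema Registry registration request. */
public final class SchemaRegistrationRequest {

    /** Subject name for message values under the default TopicNameStrategy. */
    public static String valueSubject(String topic) {
        return topic + "-value";
    }

    /**
     * Wraps the schema text in the {"schema": "..."} envelope. The schema is
     * embedded as a JSON string, so quotes, backslashes, and the common
     * whitespace escapes found in .avsc files are escaped here.
     */
    public static String body(String schemaJson) {
        StringBuilder sb = new StringBuilder("{\"schema\":\"");
        for (char c : schemaJson.toCharArray()) {
            switch (c) {
                case '"':  sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n");  break;
                case '\r': sb.append("\\r");  break;
                case '\t': sb.append("\\t");  break;
                default:   sb.append(c);
            }
        }
        return sb.append("\"}").toString();
    }

    /** Value for the Authorization header when using HTTP basic authentication. */
    public static String basicAuth(String user, String secret) {
        byte[] pair = (user + ":" + secret).getBytes(StandardCharsets.UTF_8);
        return "Basic " + Base64.getEncoder().encodeToString(pair);
    }

    public static void main(String[] args) {
        String schema = "{\"namespace\": \"io.confluent.examples.clients.basicavro\","
            + " \"type\": \"record\", \"name\": \"Payment\", \"fields\": ["
            + "{\"name\": \"id\", \"type\": \"string\"},"
            + "{\"name\": \"amount\", \"type\": \"double\"}]}";

        System.out.println("POST /subjects/" + valueSubject("transactions") + "/versions");
        System.out.println("Content-Type: application/vnd.schemaregistry.v1+json");
        // Placeholder credentials for illustration only.
        System.out.println("Authorization: " + basicAuth("example-user", "example-secret"));
        System.out.println(body(schema));
    }
}
```

Any HTTP client can send the resulting request to the Schema Registry endpoint from the Access Links and Ports page. Registering through Control Center, as described above, achieves the same result without code.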

Step 5: Produce and consume messages

With schema validation enabled and a schema registered, produce and consume Avro-serialized messages using the Confluent Java client.

Build the project

From the examples/clients/avro directory, compile the project:

mvn clean compile package

Produce messages

The producer uses KafkaAvroSerializer to serialize message values against the registered Avro schema. Keys are serialized as plain strings.
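In configuration terms, that choice of serializers comes down to two producer properties. The sketch below shows them as plain string settings layered on top of the connection properties from java.config. It is an illustration rather than a copy of ProducerExample, which may set the same values through client constants; the class name ProducerSerializerSettings and the example endpoint are hypothetical.

```java
import java.util.Properties;

/** Hypothetical illustration of the serializer settings a schema-aware producer needs. */
public final class ProducerSerializerSettings {

    /** Returns a copy of the base properties with key and value serializers added. */
    public static Properties withSerializers(Properties base) {
        Properties props = new Properties();
        props.putAll(base);
        // Keys are written as plain strings.
        props.setProperty("key.serializer",
            "org.apache.kafka.common.serialization.StringSerializer");
        // Values are Avro-encoded; the serializer looks up the schema in Schema Registry.
        props.setProperty("value.serializer",
            "io.confluent.kafka.serializers.KafkaAvroSerializer");
        return props;
    }

    public static void main(String[] args) {
        Properties base = new Properties();
        base.setProperty("bootstrap.servers", "broker.example.invalid:9092"); // placeholder
        Properties producerProps = withSerializers(base);
        producerProps.stringPropertyNames().stream().sorted()
            .forEach(name -> System.out.println(name + "=" + producerProps.getProperty(name)));
    }
}
```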

Run the producer:

mvn exec:java -Dexec.mainClass=io.confluent.examples.clients.basicavro.ProducerExample \

-Dexec.args="$HOME/.confluent/java.config"

Expected output:

...

Successfully produced 10 messages to a topic called transactions

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

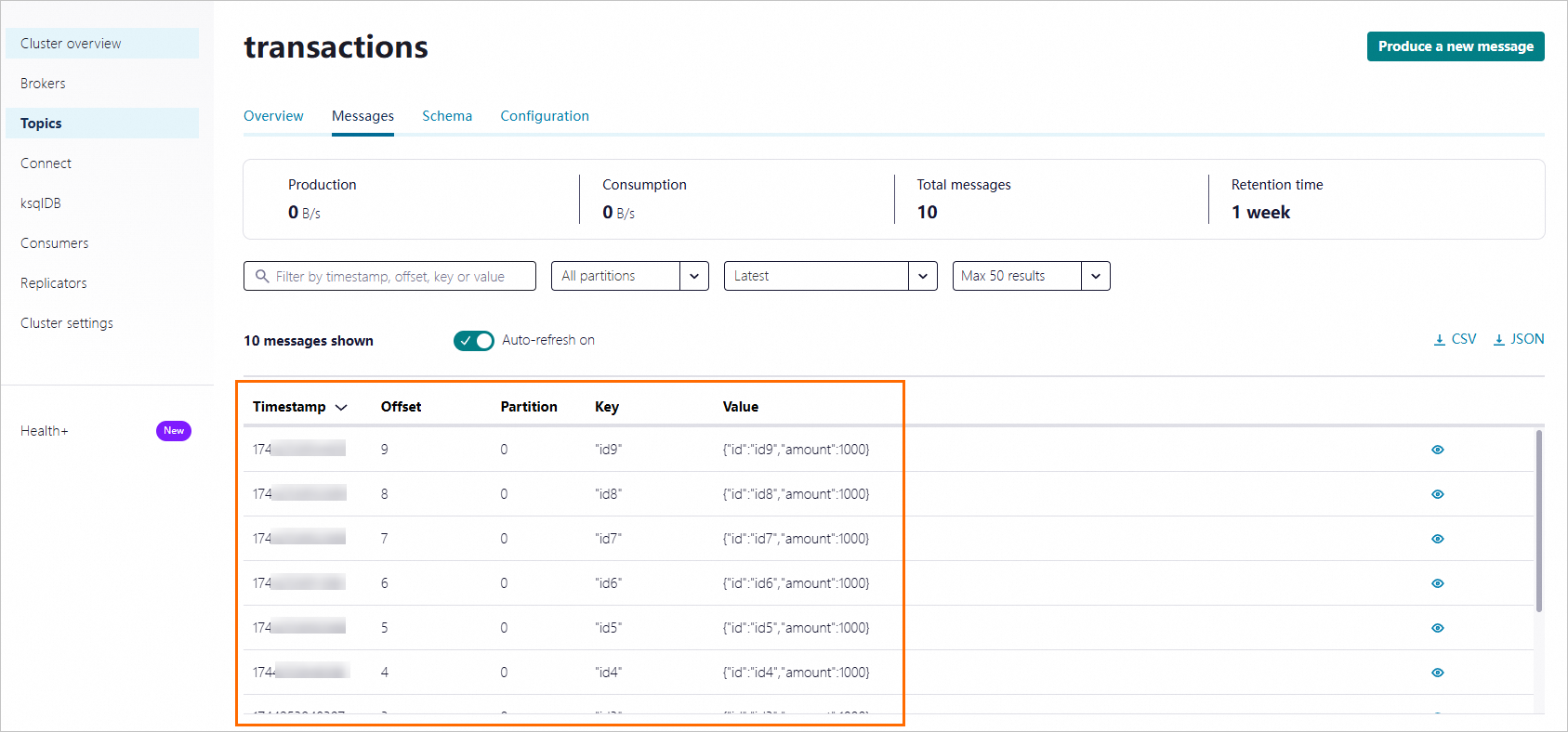

...Verify the messages in Control Center by opening the topic and checking the Messages tab.

Consume messages

The consumer uses KafkaAvroDeserializer to deserialize message values back into Payment objects. Setting SPECIFIC_AVRO_READER_CONFIG to true enables deserialization into the generated Payment class rather than a generic Avro record.
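Expressed as raw property names, SPECIFIC_AVRO_READER_CONFIG corresponds to the key specific.avro.reader. The sketch below lists the consumer-side settings as plain strings. It is an illustration rather than a copy of ConsumerExample: the class name ConsumerDeserializerSettings, the group ID payments-demo, and the auto.offset.reset value are assumptions chosen for the example.

```java
import java.util.Properties;

/** Hypothetical illustration of the settings a schema-aware consumer needs. */
public final class ConsumerDeserializerSettings {

    /** Returns a copy of the base properties with deserializer settings added. */
    public static Properties withDeserializers(Properties base, String groupId) {
        Properties props = new Properties();
        props.putAll(base);
        props.setProperty("group.id", groupId);
        // Assumption for this sketch: start from the oldest message for a new group.
        props.setProperty("auto.offset.reset", "earliest");
        props.setProperty("key.deserializer",
            "org.apache.kafka.common.serialization.StringDeserializer");
        props.setProperty("value.deserializer",
            "io.confluent.kafka.serializers.KafkaAvroDeserializer");
        // true: return the generated Payment class; false: return a generic Avro record.
        props.setProperty("specific.avro.reader", "true");
        return props;
    }

    public static void main(String[] args) {
        Properties consumerProps = withDeserializers(new Properties(), "payments-demo");
        consumerProps.stringPropertyNames().stream().sorted()
            .forEach(name -> System.out.println(name + "=" + consumerProps.getProperty(name)));
    }
}
```

With specific.avro.reader left at false, the same messages would still be readable, but application code would access fields by name on a generic record instead of calling typed getters on Payment.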

Run the consumer:

mvn exec:java -Dexec.mainClass=io.confluent.examples.clients.basicavro.ConsumerExample \

-Dexec.args="$HOME/.confluent/java.config"

Expected output:

...

key = id0, value = {"id": "id0", "amount": 1000.0}

key = id1, value = {"id": "id1", "amount": 1000.0}

key = id2, value = {"id": "id2", "amount": 1000.0}

key = id3, value = {"id": "id3", "amount": 1000.0}

key = id4, value = {"id": "id4", "amount": 1000.0}

key = id5, value = {"id": "id5", "amount": 1000.0}

key = id6, value = {"id": "id6", "amount": 1000.0}

key = id7, value = {"id": "id7", "amount": 1000.0}

key = id8, value = {"id": "id8", "amount": 1000.0}

key = id9, value = {"id": "id9", "amount": 1000.0}

...

What to do next

Evolve your schema -- Add or modify fields while maintaining backward compatibility. See Schema Registry for Confluent Platform.

Manage users and permissions -- Create dedicated LDAP users for production workloads and assign fine-grained RBAC roles. See Manage users and grant permissions to them.