This topic describes how to synchronize data from a self-managed SQL Server database to an AnalyticDB for PostgreSQL instance by using Data Transmission Service (DTS).

Prerequisites

- The version of the self-managed SQL Server database is supported by DTS. For more information, see Overview of data synchronization scenarios.

- The destination AnalyticDB for PostgreSQL instance is created. For more information, see Create an instance.

- The available storage space of the destination AnalyticDB for PostgreSQL instance is larger than the total size of the data in the self-managed SQL Server database.

- If the source ApsaraDB RDS for SQL Server instance meets one of the following conditions, we recommend that you synchronize data by using the backup feature of ApsaraDB RDS for SQL Server. For more information, see Migrate data from a self-managed database to an ApsaraDB RDS for SQL Server instance.

- The source instance contains more than 10 databases.

- A single database of the source instance backs up its logs at an interval of less than 1 hour.

- A single database of the source instance executes more than 100 DDL statements each hour.

- Logs are written at a rate of 20 MB/s for a single database of the source instance.

- The change data capture (CDC) feature needs to be enabled for more than 1,000 tables in the source ApsaraDB RDS for SQL Server instance.

- The logs of a database in the source ApsaraDB RDS for SQL Server instance involve heap tables, tables without primary keys, compressed tables, or tables with computed columns. You can execute the following SQL statements to check whether the source database contains these tables.

- Execute the following SQL statement to check for heap tables:

SELECT s.name AS schema_name, t.name AS table_name FROM sys.schemas s INNER JOIN sys.tables t ON s.schema_id = t.schema_id AND t.type = 'U' AND s.name NOT IN ('cdc', 'sys') AND t.name NOT IN ('systranschemas') AND t.object_id IN (SELECT object_id FROM sys.indexes WHERE index_id = 0);

- Execute the following SQL statement to check for tables without primary keys:

SELECT s.name AS schema_name, t.name AS table_name FROM sys.schemas s INNER JOIN sys.tables t ON s.schema_id = t.schema_id AND t.type = 'U' AND s.name NOT IN ('cdc', 'sys') AND t.name NOT IN ('systranschemas') AND t.object_id NOT IN (SELECT parent_object_id FROM sys.objects WHERE type = 'PK');

- Execute the following SQL statement to check for tables whose primary key columns differ from the clustered index columns:

SELECT s.name AS schema_name, t.name AS table_name FROM sys.schemas s INNER JOIN sys.tables t ON s.schema_id= t.schema_id WHERE t.type= 'U' AND s.name NOT IN('cdc', 'sys') AND t.name NOT IN('systranschemas') AND t.object_id IN (SELECT pk_columns.object_id AS object_id FROM (select sic.object_id object_id, sic.column_id FROM sys.index_columns sic, sys.indexes sis WHERE sic.object_id= sis.object_id AND sic.index_id= sis.index_id AND sis.is_primary_key= 'true') pk_columns LEFT JOIN (SELECT sic.object_id object_id, sic.column_id FROM sys.index_columns sic, sys.indexes sis WHERE sic.object_id= sis.object_id AND sic.index_id= sis.index_id AND sis.index_id= 1) cluster_colums ON pk_columns.object_id= cluster_colums.object_id WHERE pk_columns.column_id != cluster_colums.column_id);

- Execute the following SQL statement to check for compressed tables:

SELECT s.name AS schema_name, t.name AS table_name FROM sys.objects t, sys.schemas s, sys.partitions p WHERE s.schema_id = t.schema_id AND t.type = 'U' AND s.name NOT IN ('cdc', 'sys') AND t.name NOT IN ('systranschemas') AND t.object_id = p.object_id AND p.data_compression != 0;

- Execute the following SQL statement to check for tables with computed columns:

SELECT s.name AS schema_name, t.name AS table_name FROM sys.schemas s INNER JOIN sys.tables t ON s.schema_id = t.schema_id AND t.type = 'U' AND s.name NOT IN ('cdc', 'sys') AND t.name NOT IN ('systranschemas') AND t.object_id IN (SELECT object_id FROM sys.columns WHERE is_computed = 1);
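The prerequisites on destination storage space and on the number of source databases can be estimated with the SQL Server catalog views. The following is a minimal sketch, not part of the DTS procedure. It assumes that the account can read sys.master_files, and it reports the allocated data file size, which is an upper bound on the actual data size.

```sql
-- Count user databases. database_id values 1 to 4 are the system
-- databases master, tempdb, model, and msdb.
SELECT COUNT(*) AS user_database_count
FROM sys.databases
WHERE database_id > 4;

-- Approximate allocated data file size per user database, in MB.
-- sys.master_files.size is expressed in 8-KB pages.
SELECT DB_NAME(mf.database_id) AS database_name,
       SUM(CAST(mf.size AS bigint)) * 8 / 1024 AS data_file_size_mb
FROM sys.master_files mf
WHERE mf.type_desc = 'ROWS'
  AND mf.database_id > 4
GROUP BY mf.database_id
ORDER BY data_file_size_mb DESC;
```

Compare the sum of data_file_size_mb for the databases that you plan to synchronize with the available storage space of the destination AnalyticDB for PostgreSQL instance.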

Usage notes

- During schema synchronization, DTS synchronizes foreign keys from the source database to the destination database.

- During full synchronization and incremental synchronization, DTS temporarily disables checking of foreign key constraints and foreign key cascade operations at the session level. If you perform the cascade update and delete operations on the source database during data synchronization, data inconsistency may occur.
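To see which source tables are exposed to the cascade risk described above, you can list the foreign keys that are defined with cascade actions. The following query is an illustrative sketch that uses the sys.foreign_keys catalog view. Run it in the source database that you plan to synchronize.

```sql
-- List foreign keys whose ON DELETE or ON UPDATE action is CASCADE.
-- In sys.foreign_keys, a referential action value of 1 means CASCADE.
SELECT OBJECT_SCHEMA_NAME(fk.parent_object_id) AS schema_name,
       OBJECT_NAME(fk.parent_object_id)        AS table_name,
       fk.name                                 AS foreign_key_name,
       fk.delete_referential_action_desc,
       fk.update_referential_action_desc
FROM sys.foreign_keys fk
WHERE fk.delete_referential_action = 1
   OR fk.update_referential_action = 1;
```

If the query returns rows, avoid cascade updates and deletes on those tables while the synchronization task is running.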

| Category | Description |
|---|---|
| Limits on the source database | |
| Other limits | |

Billing

| Synchronization type | Task configuration fee |
|---|---|
| Schema synchronization and full data synchronization | Free of charge. |
| Incremental data synchronization | Charged. For more information, see Billing overview. |

Supported synchronization topologies

- One-way one-to-one synchronization

- One-way one-to-many synchronization

- One-way many-to-one synchronization

SQL operations that can be synchronized

| Operation type | SQL statement |
|---|---|
| DML | INSERT, UPDATE, and DELETE |
| DDL | |

Permissions required for database accounts

| Database | Required permission | References |
|---|---|---|
| Self-managed SQL Server database | sysadmin | CREATE USER and GRANT (Transact-SQL) |
| AnalyticDB for PostgreSQL instance | Note: You can use the initial account of the AnalyticDB for PostgreSQL instance. | Create a database account and Manage users and permissions |
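As an illustration of the sysadmin requirement for the source database, the following sketch creates a dedicated login and adds it to the sysadmin fixed server role. The login name dts_sync and the password placeholder are hypothetical, and the ALTER SERVER ROLE syntax assumes SQL Server 2012 or later.

```sql
USE master;
GO
-- Hypothetical login name and placeholder password. Replace both.
CREATE LOGIN dts_sync WITH PASSWORD = N'<strong_password>';
GO
-- Add the login to the sysadmin fixed server role.
ALTER SERVER ROLE sysadmin ADD MEMBER dts_sync;
GO
-- Returns 1 when the login is a member of the role.
SELECT IS_SRVROLEMEMBER('sysadmin', 'dts_sync') AS is_sysadmin;
GO
```

Using a dedicated login makes it easier to revoke access after the synchronization task is released.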

Preparations

Before you configure a data synchronization task, configure log settings and create clustered indexes on the self-managed SQL Server database.

- Execute the following statement on the self-managed SQL Server database to change the recovery model to full. You can also change the recovery model by using SQL Server Management Studio (SSMS). For more information, see View or Change the Recovery Model of a Database (SQL Server).

  use master;
  GO
  ALTER DATABASE <database_name> SET RECOVERY FULL WITH ROLLBACK IMMEDIATE;
  GO

  Parameters:

  - <database_name>: the name of the source database.

  Example:

  use master;
  GO
  ALTER DATABASE mytestdata SET RECOVERY FULL WITH ROLLBACK IMMEDIATE;
  GO

- Execute the following statement to create a logical backup for the source database. Skip this step if you have already created a logical backup.

  BACKUP DATABASE <database_name> TO DISK='<physical_backup_device_name>';
  GO

  Parameters:

  - <database_name>: the name of the source database.

  - <physical_backup_device_name>: the storage path and file name of the backup file.

  Example:

  BACKUP DATABASE mytestdata TO DISK='D:\backup\dbdata.bak';
  GO

- Execute the following statement to create a log backup for the source database.

  BACKUP LOG <database_name> TO DISK='<physical_backup_device_name>' WITH INIT;
  GO

  Parameters:

  - <database_name>: the name of the source database.

  - <physical_backup_device_name>: the storage path and file name of the backup file.

  Example:

  BACKUP LOG mytestdata TO DISK='D:\backup\dblog.bak' WITH INIT;
  GO
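After you complete the preparations, you may want to confirm that they took effect. The following sketch reuses the example database name mytestdata and reads the sys.databases catalog view and the backup history in msdb. It is a verification aid and is not required by DTS.

```sql
-- Confirm that the recovery model of the source database is FULL.
SELECT name, recovery_model_desc
FROM sys.databases
WHERE name = N'mytestdata';

-- List the most recent backups recorded in msdb.
-- type 'D' is a full database backup and type 'L' is a log backup.
SELECT TOP (10) database_name, type, backup_start_date, backup_finish_date
FROM msdb.dbo.backupset
WHERE database_name = N'mytestdata'
  AND type IN ('D', 'L')
ORDER BY backup_finish_date DESC;
```

The second query should return at least one row of each type if both backup statements succeeded.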

Procedure

- Go to the Data Synchronization page of the new DTS console. Note You can also log on to the Data Management (DMS) console. In the top navigation bar, click DTS. In the left-side navigation pane, choose .

- In the upper-left corner of the page, select the region in which the data synchronization instance resides.

- Click Create Task. On the page that appears, configure the source and destination databases.

| Section | Parameter | Description |
|---|---|---|
| N/A | Task Name | The name of the task. DTS automatically generates a task name. We recommend that you specify a descriptive name for easy identification. You do not need to specify a unique task name. |
| Source Database | Database Type | The type of the source database. Select SQL Server. |
| Source Database | Access Method | The access method of the source database. Select Self-managed Database on ECS. |
| Source Database | Instance Region | The region in which the self-managed SQL Server database resides. |
| Source Database | ECS Instance ID | The ID of the Elastic Compute Service (ECS) instance that hosts the self-managed SQL Server database. |
| Source Database | Database Account | The account of the self-managed SQL Server database. For information about the permissions that are required for the account, see Permissions required for database accounts. |
| Source Database | Database Password | The password of the database account. |
| Source Database | Encryption | Specifies whether to encrypt the connection to the database. Select Non-encrypted or SSL-encrypted based on your business requirements. |
| Destination Database | Database Type | The type of the destination database. Select AnalyticDB for PostgreSQL. |
| Destination Database | Access Method | The access method of the destination database. Select Alibaba Cloud Instance. |
| Destination Database | Instance Region | The region in which the destination AnalyticDB for PostgreSQL instance resides. |
| Destination Database | Instance ID | The ID of the destination AnalyticDB for PostgreSQL instance. |
| Destination Database | Database Name | The name of the destination database in the destination AnalyticDB for PostgreSQL instance. |
| Destination Database | Database Account | The database account of the destination AnalyticDB for PostgreSQL instance. For information about the permissions that are required for the account, see Permissions required for database accounts. |
| Destination Database | Database Password | The password of the database account. |

- In the lower part of the page, click Test Connectivity and Proceed.

  - If the source or destination database is an Alibaba Cloud database instance, such as an ApsaraDB RDS for MySQL or ApsaraDB for MongoDB instance, DTS automatically adds the CIDR blocks of DTS servers to the whitelist of the instance. For more information, see Add the CIDR blocks of DTS servers to the security settings of on-premises databases.

  - If the source or destination database is a self-managed database hosted on an Elastic Compute Service (ECS) instance, DTS automatically adds the CIDR blocks of DTS servers to the security group rules of the ECS instance. You must also manually add the CIDR blocks of DTS servers to the whitelist of the self-managed database on the ECS instance to allow DTS to access the database.

  - If the source or destination database is a self-managed database that is deployed in a data center or provided by a third-party cloud service provider, you must manually add the CIDR blocks of DTS servers to the whitelist of the database to allow DTS to access the database.

  Warning

  - If the CIDR blocks of DTS servers are automatically or manually added to the whitelist or ECS security group rules, security risks may arise. Therefore, before you use DTS to synchronize data, you must understand and acknowledge the potential risks and take preventive measures, including but not limited to the following measures: enhancing the security of your username and password, limiting the ports that are exposed, authenticating API calls, regularly checking the whitelist or ECS security group rules and forbidding unauthorized CIDR blocks, or connecting the database to DTS by using Express Connect, VPN Gateway, or Smart Access Gateway.

  - After the DTS task is complete or released, we recommend that you manually remove the CIDR blocks of DTS servers from the whitelist or ECS security group rules. You must remove the IP address whitelist group whose name contains dts from the whitelist of the Alibaba Cloud database instance or the security group rules of the ECS instance. You must also remove the CIDR blocks of DTS servers from the whitelist of the self-managed database on the ECS instance. For more information about the CIDR blocks that you must remove from the whitelist of the self-managed databases that are deployed in data centers or databases that are hosted on third-party cloud services, see Add the CIDR blocks of DTS servers to the security settings of on-premises databases.

- Select objects for the task and configure advanced settings.

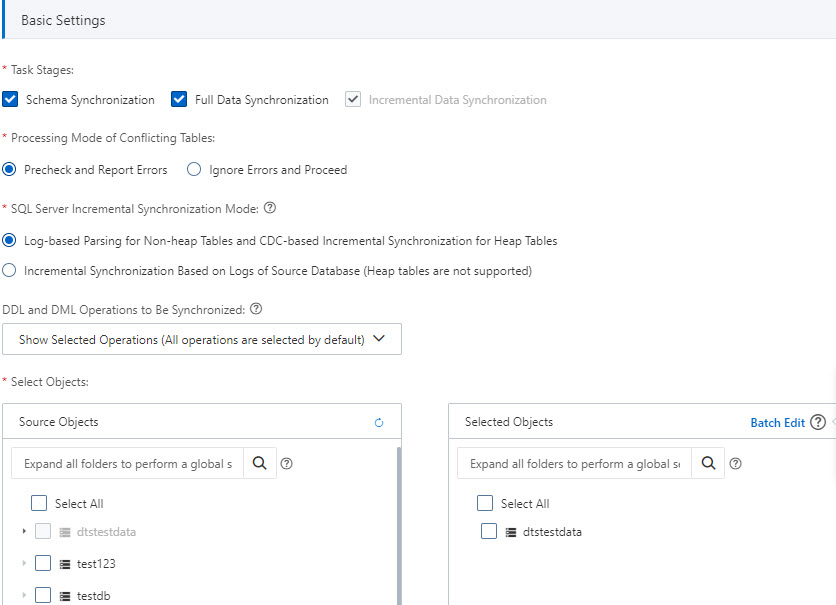

- Basic Settings

  - Task Stages: By default, Incremental Data Synchronization is selected. You must also select Schema Synchronization and Full Data Synchronization. After the precheck is complete, DTS synchronizes the historical data of the selected objects from the source database to the destination instance. The historical data is the basis for subsequent incremental synchronization.

  - Processing Mode of Conflicting Tables:

    - Precheck and Report Errors: checks whether the destination database contains tables that have the same names as tables in the source database. If the source and destination databases do not contain tables that have identical table names, the precheck is passed. Otherwise, an error is returned during the precheck and the data synchronization task cannot be started.

      Note: You can use the object name mapping feature to rename the tables that are synchronized to the destination database. You can use this feature if the source and destination databases contain identical table names and the tables in the destination database cannot be deleted or renamed. For more information, see Map object names.

    - Ignore Errors and Proceed: skips the precheck for identical table names in the source and destination databases.

      Warning: If you select Ignore Errors and Proceed, data inconsistency may occur, and your business may be exposed to potential risks.

      - If the source and destination databases have the same schemas, and a data record has the same primary key value as an existing data record in the destination database:

        - During full data synchronization, DTS does not synchronize the data record to the destination database. The existing data record in the destination database is retained.

        - During incremental data synchronization, DTS synchronizes the data record to the destination database. The existing data record in the destination database is overwritten.

      - If the source and destination databases have different schemas, initial data synchronization may fail. In this case, only specific columns are synchronized, or the data synchronization task fails.

  - DDL and DML Operations to Be Synchronized: The DDL and DML operations that you want to synchronize. For more information, see SQL operations that can be synchronized.

    Note: To select the SQL operations performed on a specific database or table, right-click an object in the Selected Objects section. In the dialog box that appears, select the SQL operations that you want to synchronize.

  - SQL Server Incremental Synchronization Mode:

    - Log-based Parsing for Non-heap Tables and CDC-based Incremental Synchronization for Heap Tables:

      - Advantages:

        - This mode supports heap tables, tables without primary keys, compressed tables, and tables with computed columns.

        - This mode is more stable and supports a more complete set of DDL statements.

      - Disadvantage: DTS creates the trigger named dts_cdc_sync_ddl, the heartbeat table named dts_sync_progress, and the DDL storage table named dts_cdc_ddl_history in the source database, and enables change data capture (CDC) for the source database and specific tables.

    - Incremental Synchronization Based on Logs of Source Database:

      - Advantage: No intrusion to the source database.

      - Disadvantage: This mode does not support heap tables, tables without primary keys, compressed tables, or tables with computed columns.

  - Select Objects: Select one or more objects from the Source Objects section and click the icon to add the objects to the Selected Objects section.

    Note: In this scenario, data synchronization is performed between heterogeneous databases. Therefore, only tables can be synchronized. Other objects such as views, triggers, or stored procedures are not synchronized to the destination database.

  - Rename Databases and Tables:

    - To rename an object that you want to synchronize to the destination instance, right-click the object in the Selected Objects section. For more information, see Map the name of a single object.

    - To rename multiple objects at a time, click Batch Edit in the upper-right corner of the Selected Objects section. For more information, see Map multiple object names at a time.

  - Filter data: You can specify WHERE conditions to filter data. For more information, see Use SQL conditions to filter data.

  - Select the SQL operations to be synchronized: In the Selected Objects section, right-click an object. In the dialog box that appears, select the DML and DDL operations that you want to synchronize. For more information, see SQL operations that can be synchronized.

- Advanced Settings

  - Set Alerts: Specifies whether to configure alerting for the data synchronization task. If the task fails or the synchronization latency exceeds the specified threshold, alert contacts receive notifications. Valid values:

    - No: does not configure alerting.

    - Yes: configures alerting. If you select Yes, you must also specify the alert threshold and alert contacts. For more information, see Configure monitoring and alerting when you create a DTS task.

  - Retry Time for Failed Connection: The retry time range for failed connections. If the source or destination database fails to be connected after the data synchronization task is started, DTS immediately retries a connection within the time range. Valid values: 10 to 1440. Unit: minutes. Default value: 720. We recommend that you set the parameter to a value greater than 30. If DTS reconnects to the source and destination databases within the specified time range, DTS resumes the data synchronization task. Otherwise, the data synchronization task fails.

    Note:

    - If you set different retry time ranges for multiple DTS tasks that have the same source or destination database, the shortest retry time range that is set takes precedence.

    - If DTS retries a connection, you are charged for the DTS instance. We recommend that you specify the retry time range based on your business requirements, or release the DTS instance at the earliest opportunity after the source and destination instances are released.

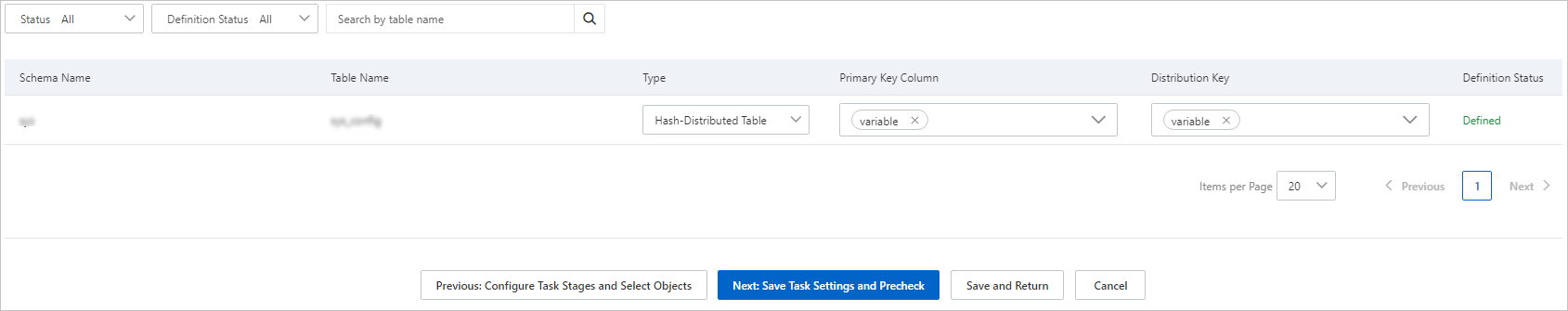
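If you choose the mode that combines log-based parsing with CDC, you can inspect what was enabled or created in the source database after the task starts. The following sketch reuses the example database name mytestdata and the object names given in the description of that mode. It assumes that dts_cdc_sync_ddl is a database-level trigger, which this topic does not state.

```sql
-- Check whether CDC is enabled for the source database.
SELECT name, is_cdc_enabled
FROM sys.databases
WHERE name = N'mytestdata';
GO

USE mytestdata;
GO
-- List the tables that are tracked by CDC.
SELECT s.name AS schema_name, t.name AS table_name
FROM sys.tables t
INNER JOIN sys.schemas s ON s.schema_id = t.schema_id
WHERE t.is_tracked_by_cdc = 1;

-- Look for the heartbeat table and the DDL storage table.
SELECT name, type_desc
FROM sys.objects
WHERE name IN ('dts_sync_progress', 'dts_cdc_ddl_history');

-- Look for the trigger. Assumption: it is database-scoped,
-- so it appears in sys.triggers rather than sys.server_triggers.
SELECT name, is_disabled
FROM sys.triggers
WHERE name = 'dts_cdc_sync_ddl';
GO
```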

- In the lower part of the page, click Next: Configure Database and Table Fields. On the page that appears, set the primary key columns and distribution key columns of the tables that you want to synchronize to the destination AnalyticDB for PostgreSQL instance.

- In the lower part of the page, click Next: Save Task Settings and Precheck.

Note:

- Before you can start the data synchronization task, DTS performs a precheck. You can start the data synchronization task only after the task passes the precheck.

- If the task fails to pass the precheck, click View Details next to each failed item. After you analyze the causes based on the check results, troubleshoot the issues. Then, run a precheck again.

- If an alert is generated for an item during the precheck, perform the following operations based on the scenario:

- In scenarios in which you cannot ignore the alert item, click View Details next to the failed item. After you analyze the causes based on the check results, troubleshoot the issues. Then, run a precheck again.

- In scenarios in which you can ignore the alert item, click Confirm Alert Details next to the failed item. In the View Details dialog box, click Ignore. In the message that appears, click OK. Then, click Precheck Again to run a precheck again. If you ignore the alert item, data inconsistency may occur and your business may be exposed to potential risks.

- Wait until the success rate becomes 100%. Then, click Next: Purchase Instance.

- On the Purchase Instance page, configure the Billing Method and Instance Class parameters for the data synchronization instance. The following table describes the parameters.

| Section | Parameter | Description |
|---|---|---|
| New Instance Class | Billing Method | Subscription: You pay for the instance when you create it. This billing method is more cost-effective than pay-as-you-go for long-term use. Pay-as-you-go: The instance is charged on an hourly basis. This billing method is suitable for short-term use. If you no longer require a pay-as-you-go instance, you can release it to reduce costs. |
| New Instance Class | Instance Class | DTS provides instance classes that differ in synchronization speed. Select an instance class based on your business scenario. For more information, see Specifications of data synchronization instances. |
| New Instance Class | Subscription Duration | If you select the subscription billing method, set the subscription duration and the number of instances that you want to create. The subscription duration can be one to nine months or one to three years. Note: This parameter is displayed only if you select the subscription billing method. |

- Read and select the check box for Data Transmission Service (Pay-as-you-go) Service Terms.

- Click Buy and Start to start the data synchronization task. You can view the progress of the task in the task list.
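The primary key columns and distribution key columns that you set in the Configure Database and Table Fields step correspond to a table definition like the following on the destination. This is an illustrative sketch only: DTS creates the destination tables during schema synchronization, and the table and column names here are hypothetical. It assumes the Greenplum-style DISTRIBUTED BY clause that AnalyticDB for PostgreSQL supports.

```sql
-- Hypothetical destination table. order_id serves as both the
-- primary key and the distribution key.
CREATE TABLE public.orders (
    order_id   bigint NOT NULL,
    customer   varchar(64),
    created_at timestamp,
    PRIMARY KEY (order_id)
)
DISTRIBUTED BY (order_id);
```

In Greenplum-based systems, a primary key generally has to include the distribution key columns, so choosing a distribution key that is part of the primary key avoids conflicts between the two settings.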