Deploy a production-ready DeepSeek-R1-Distill-Qwen-7B inference service on Alibaba Cloud Container Service for Kubernetes (ACK) using KServe and Arena. This guide covers GPU sizing, model preparation, service deployment, verification, and observability setup.

Background

DeepSeek-R1

KServe

Arena

Prerequisites

Before you begin, ensure that you have:

A Kubernetes cluster with GPU nodes — see Add a GPU node pool to a cluster

kubectl connected to the cluster — see Connect to a cluster using kubectl

The ack-kserve component installed — see Install the ack-kserve component

The Arena client configured — see Configure the Arena client

GPU sizing

Model parameters are the main consumer of GPU memory during inference. Use the following formula to estimate the required GPU memory:

GPU memory = Number of parameters × Bytes per parameter

For a 7B model at FP16 precision (2 bytes per parameter): 7 × 10⁹ × 2 bytes = 1.4 × 10¹⁰ bytes ≈ 13.04 GiB (1 GiB = 2³⁰ bytes).
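You can repeat this estimate for other model sizes or precisions with a small helper like the following. It is a sketch: `weight_gib` is a hypothetical name, and the result covers only the weights. It does not include the KV cache or activations.

```shell
#!/usr/bin/env bash
# Hypothetical helper: estimate GPU memory needed for model weights only.
# Usage: weight_gib <number-of-parameters> <bytes-per-parameter>
# Prints GiB (1 GiB = 1024^3 bytes), rounded to two decimals.
weight_gib() {
  awk -v params="$1" -v bytes="$2" 'BEGIN { printf "%.2f\n", params * bytes / (1024 ^ 3) }'
}
```

For example, `weight_gib 7000000000 2` prints 13.04 for FP16 or BF16 weights, and `weight_gib 7000000000 1` prints 6.52 for 8-bit weights.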

Beyond the model weights, inference needs additional GPU memory for the KV cache and intermediate activations. Use a GPU instance with at least 24 GiB of GPU memory, such as ecs.gn7i-c8g1.2xlarge or ecs.gn7i-c16g1.4xlarge.

For instance specifications and pricing, see GPU-accelerated compute optimized instance family and Elastic GPU Service billing.

Deploy the inference service

Step 1: Prepare model files

Download the DeepSeek-R1-Distill-Qwen-7B model from ModelScope.

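Make sure git-lfs is installed before cloning. Install it with `yum install git-lfs` or `apt-get install git-lfs`. For more options, see Install Git Large File Storage.

```shell
git lfs install
# Clone only the small LFS pointer files first, then download the weights with git lfs pull
GIT_LFS_SKIP_SMUDGE=1 git clone https://www.modelscope.cn/deepseek-ai/DeepSeek-R1-Distill-Qwen-7B.git
cd DeepSeek-R1-Distill-Qwen-7B/
git lfs pull
# Return to the parent directory so that the upload command can find ./DeepSeek-R1-Distill-Qwen-7B
cd ..
```

Upload the model to an OSS bucket.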
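Before you upload, confirm that `git lfs pull` replaced every pointer stub with the real weight file. If you upload the stubs, vLLM cannot read the weights. The following is a sketch. It assumes a bash-compatible shell and model files at the top level of the cloned directory. `find_lfs_pointers` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report files that are still Git LFS pointer stubs
# (small text files) instead of the real downloaded content.
# Usage: find_lfs_pointers <model-dir>
# Exit status: 0 if no pointer stubs remain, 1 if any were found.
find_lfs_pointers() {
  local dir="$1" found=0 f
  for f in "$dir"/*; do
    [ -f "$f" ] || continue
    # Pointer files start with the LFS spec line; read only the first bytes,
    # because real weight files can be several GB.
    if head -c 200 "$f" | grep -q "^version https://git-lfs.github.com/spec/v1"; then
      echo "LFS pointer, not downloaded: $f"
      found=1
    fi
  done
  [ "$found" -eq 0 ]
}
```

For example, run `find_lfs_pointers ./DeepSeek-R1-Distill-Qwen-7B` from the directory that contains the clone. If it lists any files, run `git lfs pull` again inside the clone.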

For ossutil installation and usage, see Install ossutil.

```shell
ossutil mkdir oss://<your-bucket-name>/models/DeepSeek-R1-Distill-Qwen-7B
ossutil cp -r ./DeepSeek-R1-Distill-Qwen-7B oss://<your-bucket-name>/models/DeepSeek-R1-Distill-Qwen-7B
```

Create a persistent volume (PV) and a persistent volume claim (PVC), both named llm-model, to mount the model into the cluster. For step-by-step instructions, see Use ossfs 1.0 statically provisioned volumes.

Console

Configure the PV with the following settings:

| Configuration item | Value |
|---|---|
| PV type | OSS |
| Name | llm-model |
| Access certificate | AccessKey ID and AccessKey secret for OSS |
| Bucket ID | The OSS bucket created in the previous step |
| OSS path | /models/DeepSeek-R1-Distill-Qwen-7B |

Configure the PVC with the following settings:

| Configuration item | Value |
|---|---|
| PVC type | OSS |
| Name | llm-model |
| Allocation mode | Select an existing PV |
| Existing volumes | Select the PV created above |

Kubectl

Apply the following YAML to create a Secret, PV, and PVC:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: oss-secret
stringData:
  akId: <your-oss-ak>        # AccessKey ID for OSS
  akSecret: <your-oss-sk>    # AccessKey secret for OSS
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: llm-model
  labels:
    alicloud-pvname: llm-model
spec:
  capacity:
    storage: 30Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: llm-model
    nodePublishSecretRef:
      name: oss-secret
      namespace: default
    volumeAttributes:
      bucket: <your-bucket-name>     # The bucket name
      url: <your-bucket-endpoint>    # Endpoint, e.g., oss-cn-hangzhou-internal.aliyuncs.com
      otherOpts: "-o umask=022 -o max_stat_cache_size=0 -o allow_other"
      path: <your-model-path>        # e.g., /models/DeepSeek-R1-Distill-Qwen-7B/
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: llm-model
spec:
  accessModes:
    - ReadOnlyMany
  resources:
    requests:
      storage: 30Gi
  selector:
    matchLabels:
      alicloud-pvname: llm-model
```
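After you apply the manifest, the PVC should reach the Bound phase before you deploy the service. For example, save the manifest under any file name, such as llm-model.yaml, and run `kubectl apply -f llm-model.yaml`. The following is a minimal polling sketch. The function name and the default retry values are assumptions, and the PVC is assumed to be in the current namespace.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll a PVC until its phase is "Bound".
# Usage: wait_for_pvc_bound <pvc-name> [attempts] [interval-seconds]
wait_for_pvc_bound() {
  local pvc="$1" attempts="${2:-30}" interval="${3:-2}" i=1 phase=""
  while [ "$i" -le "$attempts" ]; do
    # jsonpath prints only the phase string, e.g. "Pending" or "Bound".
    phase=$(kubectl get pvc "$pvc" -o jsonpath='{.status.phase}' 2>/dev/null)
    if [ "$phase" = "Bound" ]; then
      echo "PVC $pvc is Bound"
      return 0
    fi
    i=$((i + 1))
    sleep "$interval"
  done
  echo "PVC $pvc is not Bound after $attempts attempts (last phase: ${phase:-unknown})" >&2
  return 1
}
```

Usage: `wait_for_pvc_bound llm-model`. If the PVC stays Pending, check that its selector label matches the PV's alicloud-pvname label.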

Step 2: Deploy the inference service

Run the following command to start the inference service.

```shell
arena serve kserve \
  --name=deepseek \
  --image=kube-ai-registry.cn-shanghai.cr.aliyuncs.com/kube-ai/vllm:v0.6.6 \
  --gpus=1 \
  --cpu=4 \
  --memory=12Gi \
  --data=llm-model:/models/DeepSeek-R1-Distill-Qwen-7B \
  "vllm serve /models/DeepSeek-R1-Distill-Qwen-7B --port 8080 --trust-remote-code --served-model-name deepseek-r1 --max-model-len 32768 --gpu-memory-utilization 0.95 --enforce-eager"
```

Arena parameters

| Parameter | Required | Description |
|---|---|---|
| --name | Yes | Name of the inference service. Must be globally unique. |
| --image | Yes | Container image for the inference service. |
| --gpus | No | Number of GPUs to allocate. Default: 0. |
| --cpu | No | Number of CPUs to allocate. |
| --memory | No | Amount of memory to allocate. |
| --data | No | Mounts a PVC into the container, in the form `<pvc-name>:<mount-path>`. Here, it mounts the llm-model PVC to /models/DeepSeek-R1-Distill-Qwen-7B. |

vLLM serve parameters

| Parameter | Description |
|---|---|
| --port 8080 | Port for the vLLM server to listen on. |
| --trust-remote-code | Allows loading custom model code from the model repository. Required for DeepSeek models. |
| --served-model-name deepseek-r1 | Model name exposed in the API. Clients pass this value in the model field of API requests. |
| --max-model-len 32768 | Maximum context length in tokens (prompt plus generated output). Set to 32,768 to balance context window size against GPU memory. |
| --gpu-memory-utilization 0.95 | Fraction of GPU memory that vLLM may use for model weights, activations, and the KV cache. Set to 0.95 to maximize memory use while leaving some headroom. |
| --enforce-eager | Disables CUDA graph capture and runs in eager execution mode. This reduces GPU memory overhead, which helps when GPU memory is tight, at some cost to inference speed. |
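The --max-model-len and --gpu-memory-utilization settings interact through the KV cache. vLLM refuses to start unless its KV cache can hold at least one sequence of max-model-len tokens in the memory left after it loads the weights. The following back-of-the-envelope sketch estimates this. The architecture values used with it are assumptions based on the Qwen2.5-7B architecture: 28 layers, 4 KV heads, and head dimension 128. Check them against the model's config.json fields num_hidden_layers and num_key_value_heads, and compute the head dimension as hidden_size / num_attention_heads. The sketch ignores activation memory, which vLLM also reserves, so treat the budget as an upper bound. All function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for a rough KV cache budget (GiB = 1024^3 bytes).

# KV cache bytes per token:
# 2 (one key + one value) x layers x KV heads x head dimension x bytes per element.
kv_bytes_per_token() {
  awk -v l="$1" -v h="$2" -v d="$3" -v b="$4" 'BEGIN { printf "%d\n", 2 * l * h * d * b }'
}

# GiB of KV cache needed to hold <tokens> tokens, given bytes per token.
kv_gib_for_tokens() {
  awk -v per="$1" -v n="$2" 'BEGIN { printf "%.2f\n", per * n / (1024 ^ 3) }'
}

# Upper bound on GiB left for the KV cache:
# total GPU memory x utilization fraction - weight memory (activations ignored).
kv_budget_gib() {
  awk -v total="$1" -v util="$2" -v weights="$3" 'BEGIN { printf "%.2f\n", total * util - weights }'
}
```

With the assumed values and FP16, `kv_bytes_per_token 28 4 128 2` gives 57344 bytes per token. One full 32,768-token sequence therefore needs about 1.75 GiB (`kv_gib_for_tokens 57344 32768`). On a 24 GiB GPU, `kv_budget_gib 24 0.95 13.04` leaves at most about 9.76 GiB. The configured max-model-len fits with headroom, although the real budget is smaller once activation memory is subtracted.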

Expected output:

```
inferenceservice.serving.kserve.io/deepseek created
INFO[0003] The Job deepseek has been submitted successfully
INFO[0003] You can run `arena serve get deepseek --type kserve -n default` to check the job status
```

Step 3: Verify the deployment
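Loading about 14 GB of weights from OSS can take several minutes. You can optionally block until KServe marks the InferenceService as Ready before you check its status. The following sketch wraps the standard `kubectl wait` command. The function name and the default timeout are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: wait until the InferenceService reports condition Ready.
# Usage: wait_for_isvc_ready <name> [timeout] [namespace]
# The timeout uses kubectl duration syntax, e.g. 900s or 15m.
wait_for_isvc_ready() {
  local name="$1" timeout="${2:-15m}" ns="${3:-default}"
  kubectl wait --for=condition=Ready "inferenceservice/$name" -n "$ns" --timeout="$timeout"
}
```

Usage: `wait_for_isvc_ready deepseek`. While you wait, `kubectl logs -f -l serving.kserve.io/inferenceservice=deepseek -c kserve-container` should show vLLM's startup output. This command assumes KServe's default pod label and container name.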

Check the service status.

```shell
arena serve get deepseek
```

Expected output:

```
Name:       deepseek
Namespace:  default
Type:       KServe
Version:    1
Desired:    1
Available:  1
Age:        3m
Address:    http://deepseek-default.example.com
Port:       :80
GPU:        1

Instances:
  NAME                                 STATUS   AGE  READY  RESTARTS  GPU  NODE
  ----                                 ------   ---  -----  --------  ---  ----
  deepseek-predictor-7cd4d568fd-fznfg  Running  3m   1/1    0         1    cn-beijing.172.16.1.77
```

The `Available: 1` field and the `Running` instance status confirm that the service is ready.

Send a test request through the NGINX Ingress gateway.

```shell
# Get the NGINX Ingress IP address
NGINX_INGRESS_IP=$(kubectl -n kube-system get svc nginx-ingress-lb -ojsonpath='{.status.loadBalancer.ingress[0].ip}')
# Get the inference service hostname
SERVICE_HOSTNAME=$(kubectl get inferenceservice deepseek -o jsonpath='{.status.url}' | cut -d "/" -f 3)
# Send a chat completion request
curl -H "Host: $SERVICE_HOSTNAME" \
  -H "Content-Type: application/json" \
  http://$NGINX_INGRESS_IP:80/v1/chat/completions \
  -d '{"model": "deepseek-r1", "messages": [{"role": "user", "content": "Say this is a test!"}], "max_tokens": 512, "temperature": 0.7, "top_p": 0.9, "seed": 10}'
```

Expected output:

```json
{"id":"chatcmpl-0fe3044126252c994d470e84807d4a0a","object":"chat.completion","created":1738828016,"model":"deepseek-r1","choices":[{"index":0,"message":{"role":"assistant","content":"<think>\n\n</think>\n\nIt seems like you're testing or sharing some information. How can I assist you further? If you have any questions or need help with something, feel free to ask!","tool_calls":[]},"logprobs":null,"finish_reason":"stop","stop_reason":null}],"usage":{"prompt_tokens":9,"total_tokens":48,"completion_tokens":39,"prompt_tokens_details":null},"prompt_logprobs":null}
```
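As the response above shows, DeepSeek-R1 models place their reasoning inside `<think>...</think>` tags ahead of the final answer. If a client needs only the answer, you can strip the reasoning after you extract the message content. The following is a sketch. It assumes that jq is installed for the JSON extraction step. `strip_think` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical filter: read a model reply on stdin and print only the text after
# the closing </think> tag, with leading blank lines removed.
# Input without a </think> tag is printed unchanged (minus leading whitespace).
strip_think() {
  awk '
    { buf = buf $0 "\n" }                        # collect the whole reply
    END {
      i = index(buf, "</think>")
      if (i > 0) buf = substr(buf, i + length("</think>"))
      sub(/^[ \t\n]+/, "", buf)                  # drop whitespace left after the tag
      printf "%s", buf
    }'
}
```

Usage: append `| jq -r '.choices[0].message.content' | strip_think` to the curl command. For the sample response, this prints only the sentence that starts with "It seems like you're testing".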

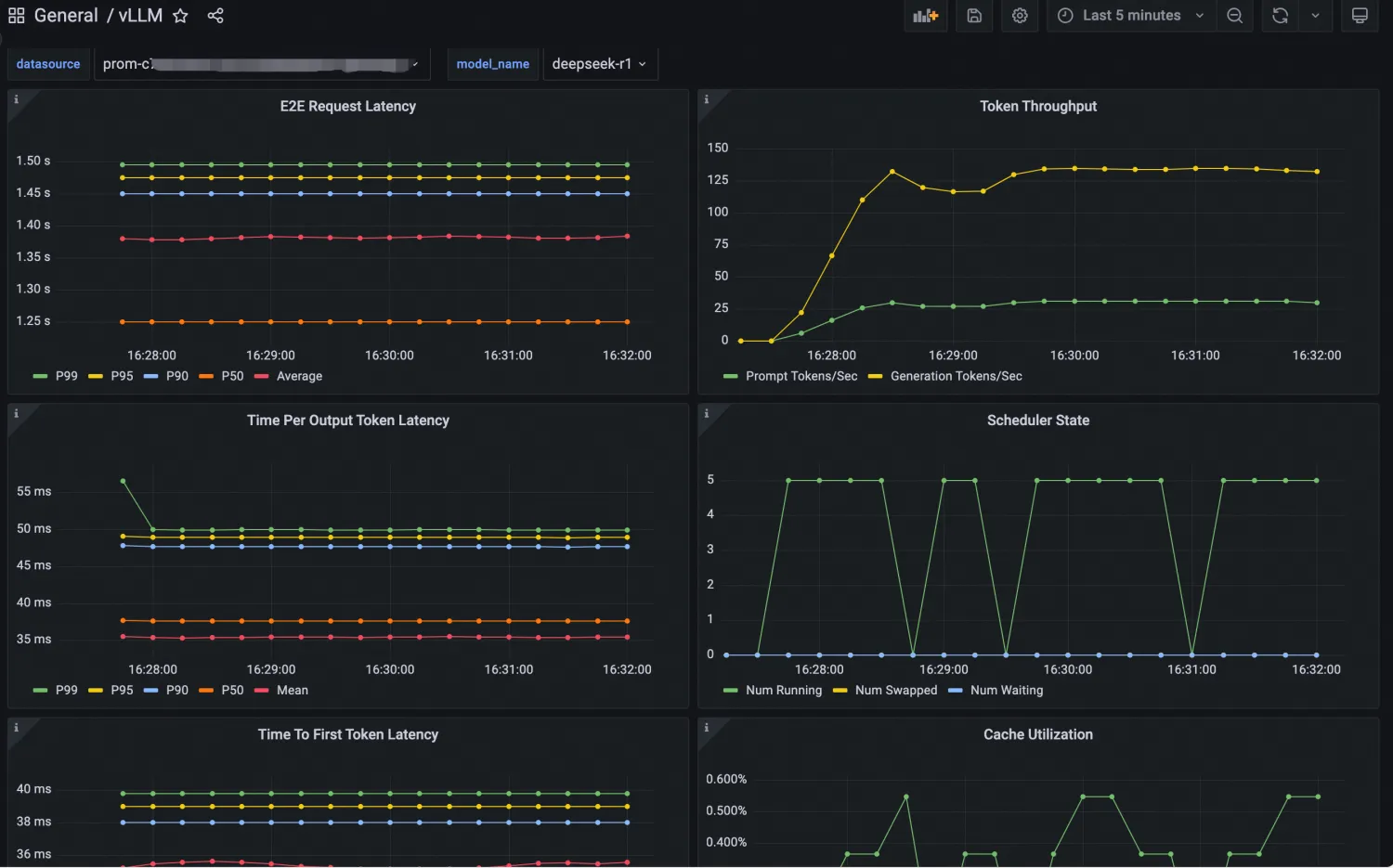

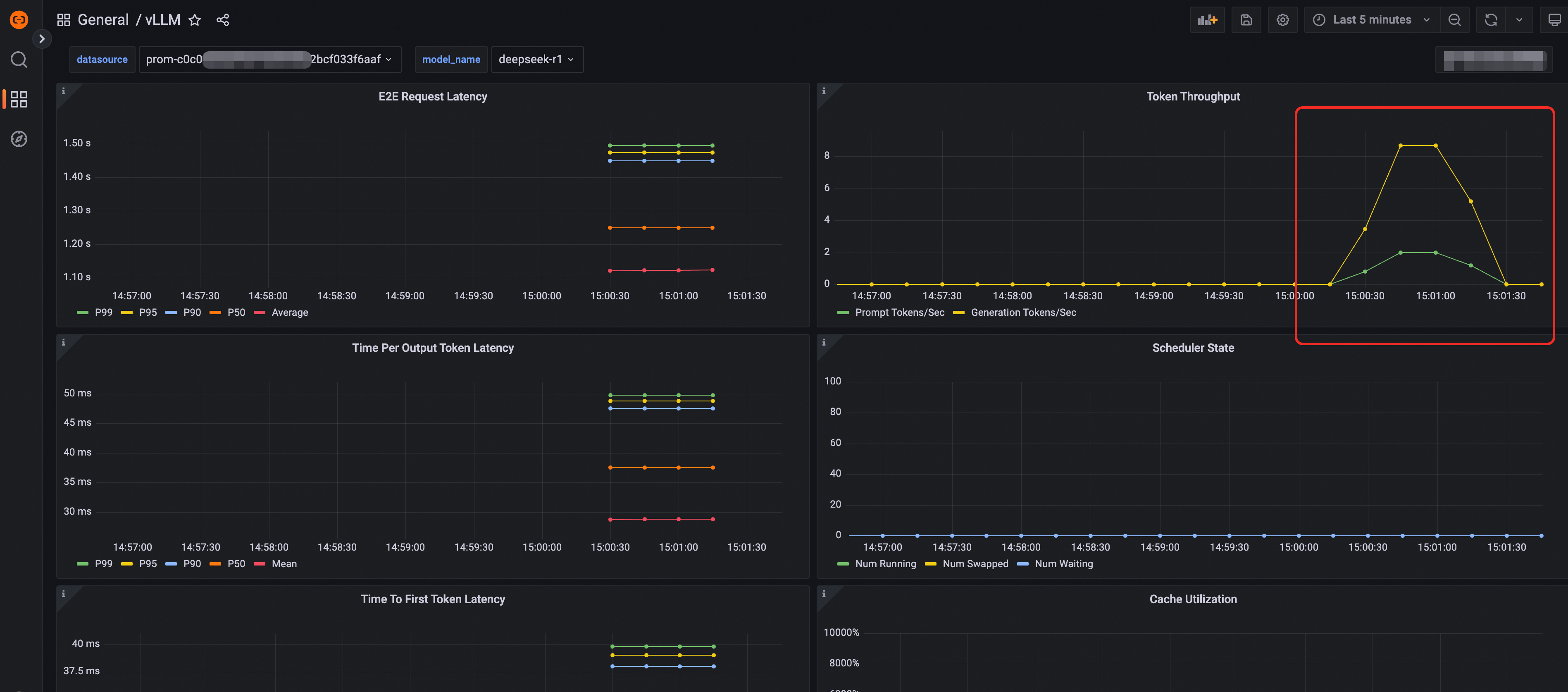

Observability

LLM inference services generate a rich set of metrics. vLLM exposes inference metrics such as token throughput and latency; KServe adds service health and performance metrics. Both are integrated into Arena and can be enabled with a single flag.

To enable Prometheus metrics, add --enable-prometheus=true to the arena serve kserve command:

```shell
arena serve kserve \
  --name=deepseek \
  --image=kube-ai-registry.cn-shanghai.cr.aliyuncs.com/kube-ai/vllm:v0.6.6 \
  --gpus=1 \
  --cpu=4 \
  --memory=12Gi \
  --enable-prometheus=true \
  --data=llm-model:/models/DeepSeek-R1-Distill-Qwen-7B \
  "vllm serve /models/DeepSeek-R1-Distill-Qwen-7B --port 8080 --trust-remote-code --served-model-name deepseek-r1 --max-model-len 32768 --gpu-memory-utilization 0.95 --enforce-eager"
```

For the full list of vLLM metrics, see the vLLM Metrics documentation.

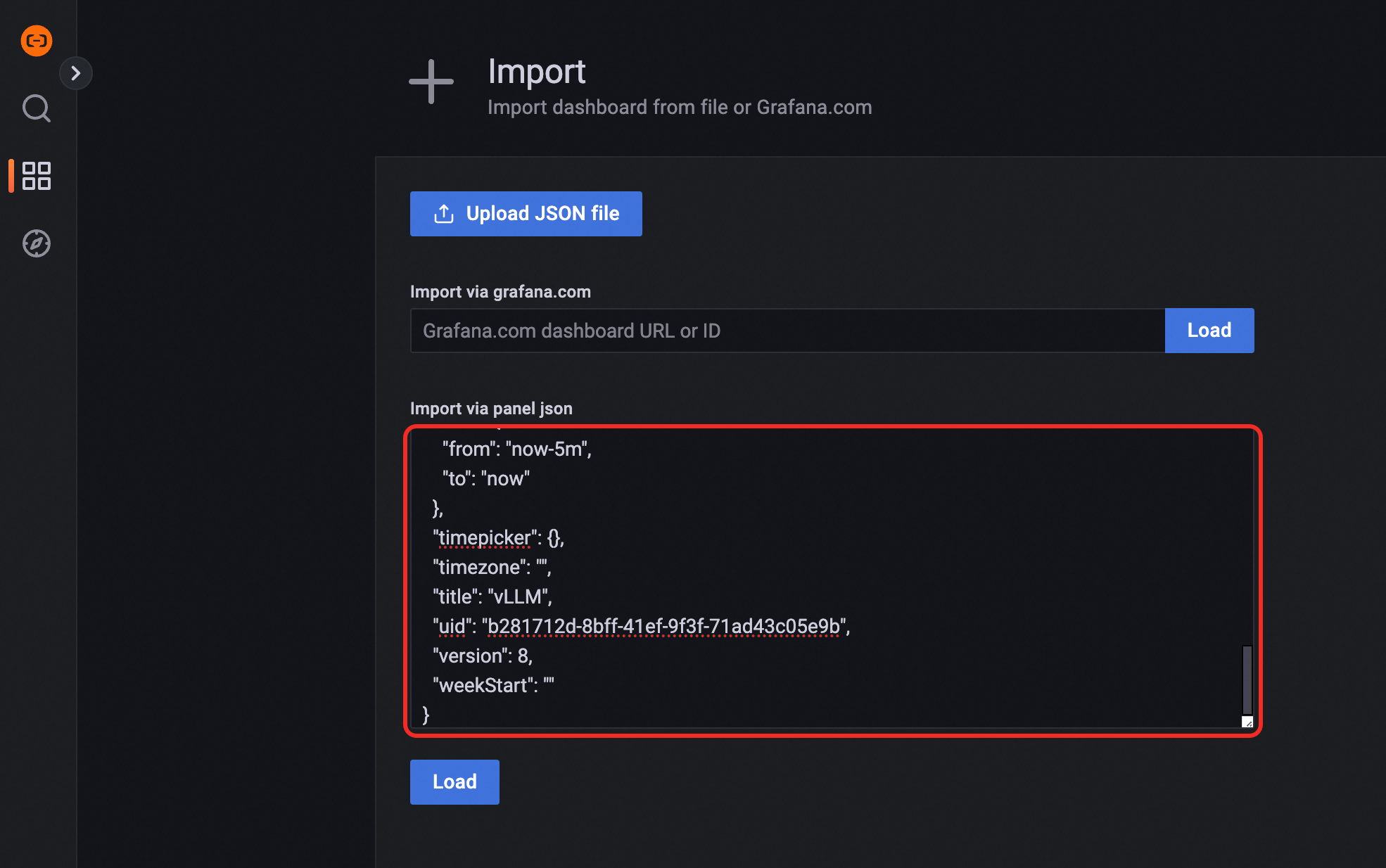

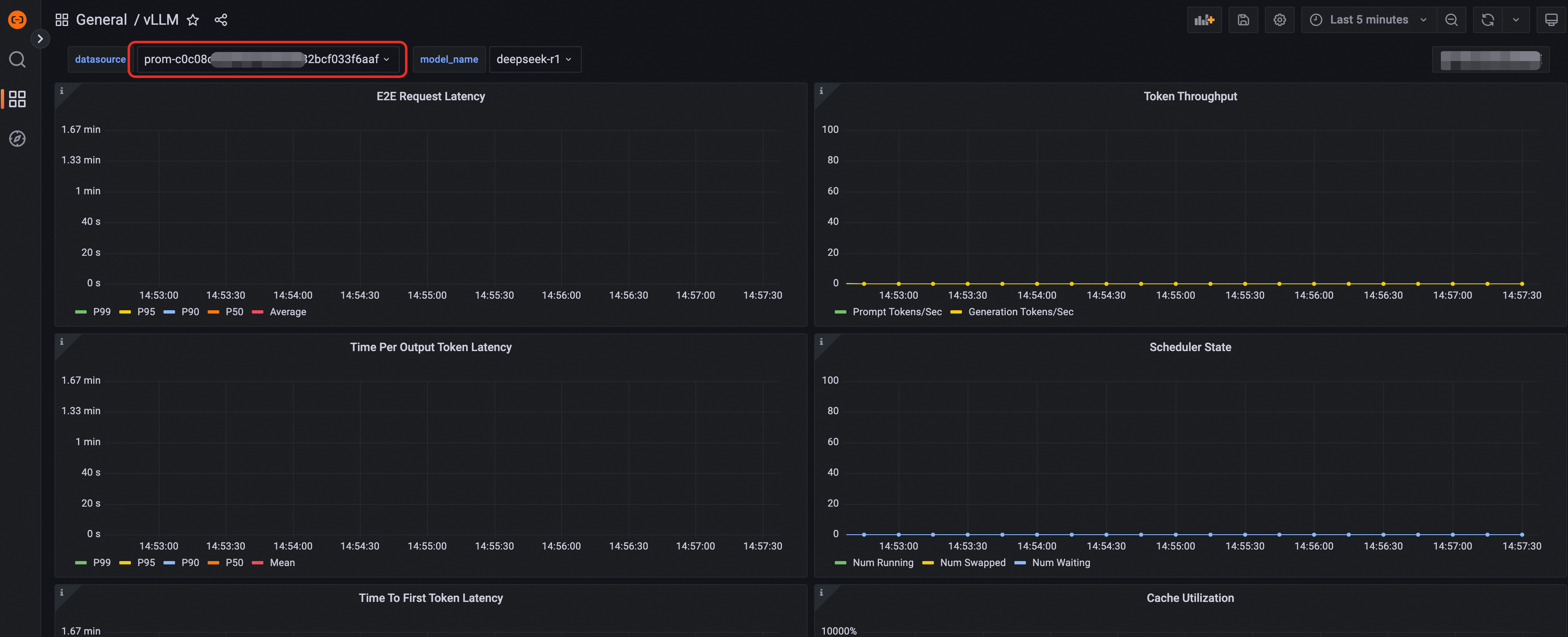
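To spot-check the metrics without Prometheus, read vLLM's /metrics endpoint on its serving port (8080 in the command above) through a port-forward, then filter a few key series. The following is a sketch. The metric names are assumed from vLLM 0.6.x and may differ in other versions. `vllm_metrics_summary` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical filter: read Prometheus text format on stdin and keep a few vLLM series.
# Metric names are assumed from vLLM 0.6.x; compare with your version's /metrics output.
vllm_metrics_summary() {
  awk '
    /^#/ { next }   # skip "# HELP" and "# TYPE" comment lines
    /^vllm:(num_requests_running|num_requests_waiting|gpu_cache_usage_perc|prompt_tokens_total|generation_tokens_total)[{ ]/ { print }
  '
}
```

For example, run these commands in order:

1. `POD=$(kubectl get pods -l serving.kserve.io/inferenceservice=deepseek -o jsonpath='{.items[0].metadata.name}')`
2. `kubectl port-forward "pod/$POD" 8080:8080 &`
3. `curl -s http://localhost:8080/metrics | vllm_metrics_summary`

The pod selector assumes KServe's default inferenceservice label.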

Import a Grafana dashboard

Production enhancements

Elastic scaling

If your inference workload has variable traffic, use KServe's Horizontal Pod Autoscaler (HPA) integration with the ack-alibaba-cloud-metrics-adapter component from ACK to automatically scale pods based on CPU, memory, GPU utilization, and custom metrics. This keeps the service stable during traffic spikes without over-provisioning.

For more information, see Configure auto scaling for a service.

Model acceleration

When the cluster pulls large model files from OSS or NAS, high latency and cold start delays can affect service availability. Use Fluid to cache model data close to the inference pods, significantly reducing load time.

For more information, see Use Fluid for model acceleration.

Phased release

When updating a model or configuration in production, use phased release to roll out changes to a subset of traffic first and validate stability before a full rollout. ACK supports both traffic percentage-based and request header-based phased release strategies.

For more information, see Implement phased release for inference services.

GPU sharing

The DeepSeek-R1-Distill-Qwen-7B model weights alone require approximately 14 GB of GPU memory. If you use a GPU with more memory than one service needs, consider GPU sharing to run multiple inference services on a single GPU and improve overall GPU utilization.

For more information, see Deploy GPU sharing inference services.

What's next

Use ACS computing power in an ACK managed cluster Pro edition

Build a DeepSeek distillation model inference service using ACS GPU computing power

Build a DeepSeek full-version model inference service using ACS GPU computing power

Build a distributed DeepSeek full-version inference service using ACS GPU computing power