Container Service for Kubernetes (ACK) supports unified scheduling and operations management for various models of compute-optimized GPU resources. This topic describes how to create a GPU-accelerated node pool and verify that GPU nodes are ready.

Prerequisites

Before you begin, ensure that you have:

Limitations

- If no GPU-accelerated instance type is available in the Create Node Pool form, change the specified vSwitches and try again.

- On nodes running Ubuntu 22.04 or Red Hat Enterprise Linux (RHEL) 9.3 64-bit, the ack-nvidia-device-plugin component sets the NVIDIA_VISIBLE_DEVICES=all environment variable for pods by default. After the node runs systemctl daemon-reload or systemctl daemon-reexec, the node may lose access to GPU devices, causing the NVIDIA Device Plugin to stop working. For details, see What do I do if the "Failed to initialize NVML: Unknown Error" error occurs when I run a GPU container?.

Create a GPU-accelerated node pool

- Log on to the ACK console. In the left navigation pane, click Clusters.

- On the Clusters page, find the cluster to manage and click its name. In the left navigation pane, choose Nodes > Node Pools.

- Click Create Node Pool, set Instance Type to Elastic GPU Service, and set Expected Nodes to the required number of nodes. For information about GPU-accelerated instance types supported by ACK, see GPU-accelerated ECS instance types supported by ACK. For other node pool parameters, see Create and manage a node pool.

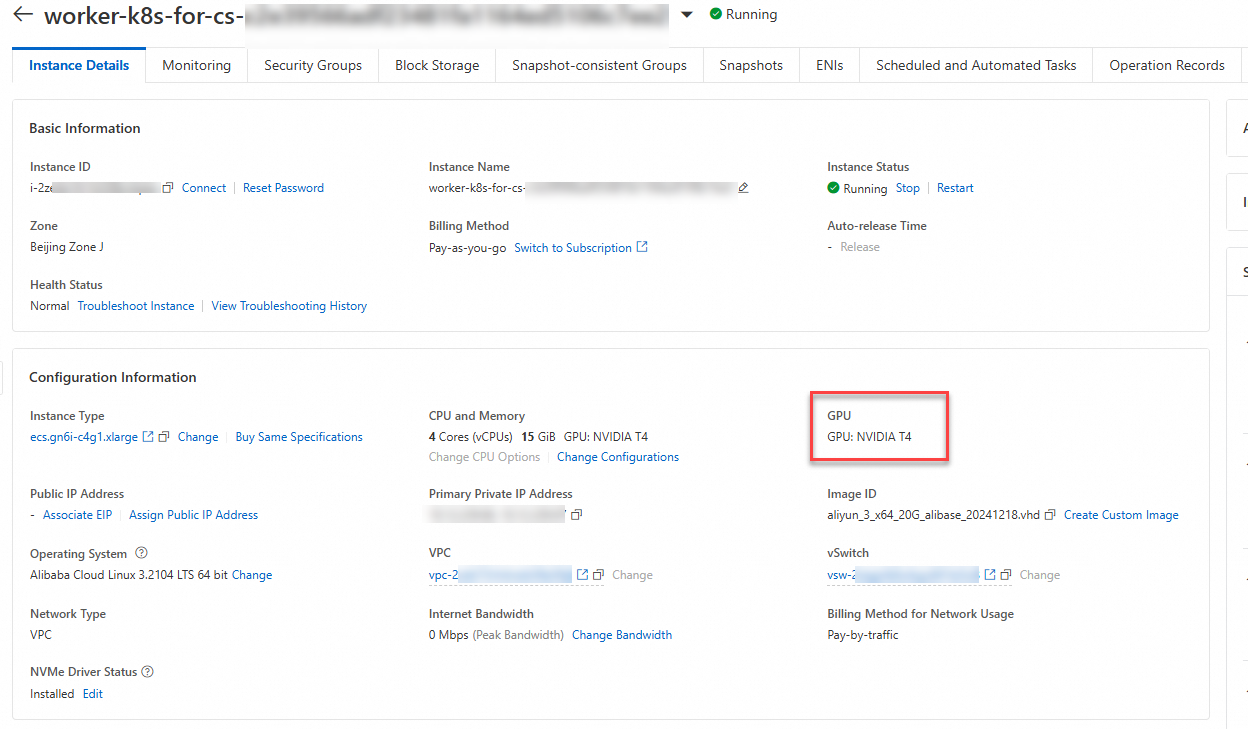

Verify GPU nodes

After the node pool is created, confirm that GPU devices are attached to the nodes and are schedulable.

Verify via the ACK console

- Log on to the ACK console. In the left navigation pane, click Clusters.

- On the Clusters page, click the cluster name. In the left navigation pane, choose Nodes > Nodes.

- Find the target node and click Details in the Actions column to view the GPUs attached to it.
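
As a command-line complement to the console check, the following Python sketch reads the output of `kubectl get nodes -o json` and lists nodes that are Ready and report allocatable GPUs. It is not taken from the ACK documentation. It assumes that `kubectl` is configured for the cluster and that GPUs are advertised under the `nvidia.com/gpu` extended resource, which is the default name used by the NVIDIA device plugin; whether a given ACK cluster uses that name should be confirmed. The helper names `gpu_nodes` and `fetch_nodes` are hypothetical.

```python
import json
import subprocess

# Assumption: GPUs are exposed under the NVIDIA device plugin's default
# extended resource name. Adjust if the cluster advertises a different one.
GPU_RESOURCE = "nvidia.com/gpu"


def gpu_nodes(node_list: dict) -> dict:
    """Return {node name: allocatable GPU count} for Ready nodes with GPUs."""
    result = {}
    for node in node_list.get("items", []):
        status = node.get("status", {})
        # Allocatable quantities are strings in the Kubernetes API, e.g. "2".
        count = int(status.get("allocatable", {}).get(GPU_RESOURCE, "0"))
        ready = any(
            cond.get("type") == "Ready" and cond.get("status") == "True"
            for cond in status.get("conditions", [])
        )
        if count > 0 and ready:
            result[node["metadata"]["name"]] = count
    return result


def fetch_nodes() -> dict:
    """Fetch the node list from the cluster that kubectl currently targets."""
    completed = subprocess.run(
        ["kubectl", "get", "nodes", "-o", "json"],
        check=True,
        capture_output=True,
        text=True,
    )
    return json.loads(completed.stdout)
```

Calling `gpu_nodes(fetch_nodes())` against a live cluster returns a mapping of node names to GPU counts. An empty result suggests that no GPU node is Ready yet or that the device plugin has not registered its resources.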