SysOM provides OS kernel-level monitoring dashboards for pods and nodes in ACK clusters. Use it to diagnose container memory issues — including the hidden memory consumption patterns that standard metrics miss — and trace high working set usage down to the specific memory type responsible.

Prerequisites

Before you begin, ensure that you have:

- An ACK Managed Cluster or an ACK Serverless Cluster created after October 2021, running Kubernetes 1.18.8 or later. To upgrade, see Manually upgrade a cluster.

- Prometheus Service for Alibaba Cloud enabled.

- The ack-sysom-monitor feature enabled. See Enable the ack-sysom-monitor feature.

Billing

Enabling ack-sysom-monitor causes the component to automatically send monitoring metrics to Prometheus Service for Alibaba Cloud. These metrics are billed as Custom Metrics and incur additional fees.

Review the billing overview for Prometheus Service for Alibaba Cloud before enabling this feature. Actual costs depend on your cluster size and the number of applications running. Use the Resource Consumption feature to monitor and manage usage.

Key concepts

Container memory components

The ACK team, in collaboration with the Alibaba Cloud GuestOS team, uses container monitoring capabilities at the OS kernel level to precisely control memory usage and prevent OOM issues.

Container memory has three categories:

| Category | Subcategory | Description |
|---|---|---|
| Application memory | Anonymous memory: heap, stack, and data segments of a process, allocated via the brk and mmap system calls | Memory used by the running application |
| Application memory | File cache: data cached for file read/write operations. Frequently accessed cache is called ActiveFileCache and is not easily reclaimed. | Memory used by the running application |
| Application memory | Buffers: metadata for block devices or file systems | Memory used by the running application |
| Application memory | HugeTLB: memory allocated via HugePages technology | Memory used by the running application |
| Kernel memory | Slab: memory pool for kernel object caches | Memory used by the OS kernel |
| Kernel memory | Vmalloc: mechanism for allocating large blocks of virtual memory space | Memory used by the OS kernel |
| Kernel memory | allocpage: mechanism for allocating local memory | Memory used by the OS kernel |
| Kernel memory | Others: kernel stack, page table, and reserved memory | Memory used by the OS kernel |
| Free memory | - | Unused, available memory |

Working set vs. RSS

Kubernetes tracks two key memory metrics:

- Working set: the memory a container actively uses in a given time frame, calculated as working set = inactive_anon + active_anon + active_file. This is the value the OOM killer and Kubernetes monitor to decide whether to evict or terminate a container.

- RSS (resident set size): the physical memory mapped into the container's address space, excluding file cache. RSS shows actual application memory consumption but does not include cached memory that Kubernetes counts against the container's limit.

Because Kubernetes uses working set for eviction decisions, a large file cache can silently inflate working set usage and trigger OOM kills even when the application itself is not consuming excessive memory. SysOM breaks down working set into its components so you can identify exactly which memory type is causing the problem.
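To make the gap between the two metrics concrete, the following minimal sketch applies the working set formula to a hypothetical pod. The counter values and the 2 GiB limit are invented for illustration, and RSS is approximated here as anonymous memory only, which is an assumption based on the definition above rather than how SysOM computes it.

```python
MIB = 1024 ** 2
GIB = 1024 ** 3

def working_set(stats):
    """working set = inactive_anon + active_anon + active_file"""
    return stats["inactive_anon"] + stats["active_anon"] + stats["active_file"]

def rss(stats):
    """Approximate RSS as anonymous memory only (assumption: file cache excluded)."""
    return stats["inactive_anon"] + stats["active_anon"]

# Hypothetical pod: small application footprint, large frequently accessed file cache.
stats = {
    "inactive_anon": 100 * MIB,
    "active_anon": 300 * MIB,
    "inactive_file": 200 * MIB,
    "active_file": 1500 * MIB,
}
limit = 2 * GIB  # hypothetical memory limit

ws = working_set(stats)
print(f"RSS:         {rss(stats) / MIB:.0f} MiB")
print(f"Working set: {ws / MIB:.0f} MiB ({ws / limit:.0%} of limit)")
```

In this example the application itself holds 400 MiB, yet the working set is 1900 MiB because active file cache is counted in full.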

How it works

SysOM surfaces OS kernel-level metrics in Prometheus Monitoring dashboards within the ACK console. The dashboards organize pod memory into the components used in the working set formula, letting you trace high memory usage from the pod level down to specific memory types.

Use these formulas when diagnosing memory issues:

- Total pod memory = RSS + Cache ≈ inactive_anon + active_anon + inactive_file + active_file

- working set = inactive_anon + active_anon + active_file
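The formulas above can also be applied outside the dashboard. The sketch below assumes the four counters are available as `key value` lines, which is the layout of a cgroup memory.stat file. The helper names and sample values are hypothetical, and the location of the real file depends on the cgroup version and container runtime, so no path is shown.

```python
def parse_memory_stat(text):
    """Parse 'key value' lines into a dict of integer byte counts."""
    stats = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[1].isdigit():
            stats[parts[0]] = int(parts[1])
    return stats

def summarize(stats):
    """Apply the total pod memory and working set formulas."""
    anon = stats.get("inactive_anon", 0) + stats.get("active_anon", 0)
    active_file = stats.get("active_file", 0)
    inactive_file = stats.get("inactive_file", 0)
    return {
        "total": anon + inactive_file + active_file,
        "working_set": anon + active_file,
    }

# Hypothetical sample in memory.stat layout (values in bytes).
sample = """\
inactive_anon 104857600
active_anon 314572800
inactive_file 209715200
active_file 1572864000
"""

summary = summarize(parse_memory_stat(sample))
print(summary)
```

The two results differ only by inactive_file, the part of the file cache that the working set formula leaves out.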

Locate and fix container memory issues

Step 1: Open the SysOM dashboard

- Log on to the ACK console. In the left navigation pane, click Clusters.

- On the Clusters page, click the name of your cluster. In the left navigation pane, click Operations > Prometheus Monitoring.

- On the Prometheus Monitoring page, click the SysOM tab, then click SysOM - Pods.

Step 2: Identify the memory black hole

-

In the Pod Memory Monitor section, apply the total pod memory formula to break down memory into cache and RSS. Review the proportional composition of cache (

active_file,inactive_file,shmem) and RSS (active_anon,inactive_anon). In the following example,inactive_anonaccounts for the largest proportion of memory usage.

-

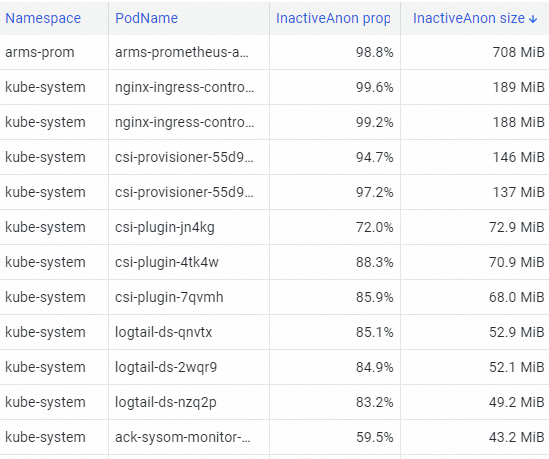

In the Pod Resource Analysis section, use the top tool to identify the pod with the highest InactiveAnon memory consumption in the cluster. In the following example, the arms-prom pod has the highest memory consumption.

-

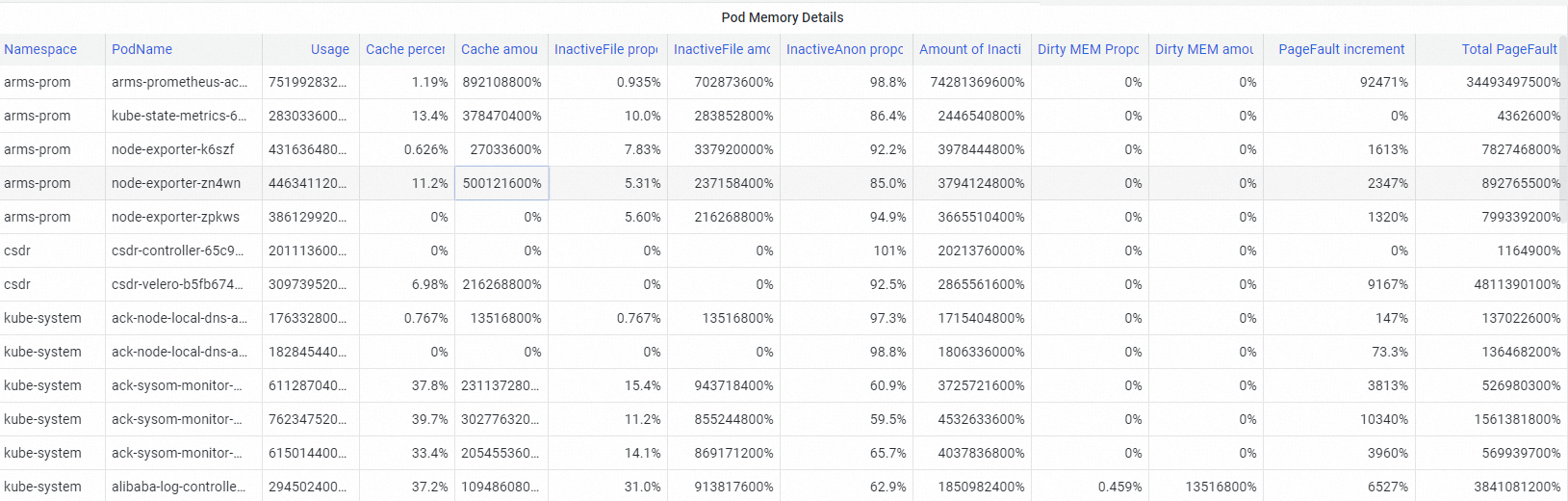

In the Pod Memory Details section, view the detailed memory composition of the identified pod. The dashboard shows components including Pod Cache, InactiveFile (inactive file cache), InactiveAnon (inactive anonymous memory), and dirty memory (memory modified but not yet written to disk). Use these breakdowns to pinpoint which memory type is the black hole.
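The reasoning in these steps (find the dominant component, then find the pod that holds most of it) can be sketched as follows. This is only an illustration of the logic, not how the dashboard is implemented; the function names, pod names, and values are hypothetical.

```python
def composition(stats):
    """Return (component, share) pairs sorted by share of the total, largest first."""
    total = sum(stats.values())
    if total == 0:
        return []
    shares = ((name, value / total) for name, value in stats.items())
    return sorted(shares, key=lambda pair: pair[1], reverse=True)

def top_pods(per_pod, component, n=3):
    """Return the names of the n pods with the highest value for one component."""
    ranked = sorted(
        per_pod.items(),
        key=lambda item: item[1].get(component, 0),
        reverse=True,
    )
    return [name for name, _ in ranked[:n]]

# Hypothetical cluster-wide breakdown (MiB).
cluster = {"inactive_anon": 900, "active_anon": 300, "inactive_file": 200, "active_file": 600}
dominant, share = composition(cluster)[0]
print(f"Largest component: {dominant} ({share:.0%})")

# Hypothetical per-pod values for that component (MiB).
per_pod = {
    "pod-a": {"inactive_anon": 120},
    "pod-b": {"inactive_anon": 740},
    "pod-c": {"inactive_anon": 40},
}
print("Top pods:", top_pods(per_pod, dominant, n=2))
```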

Step 3: Investigate file cache usage

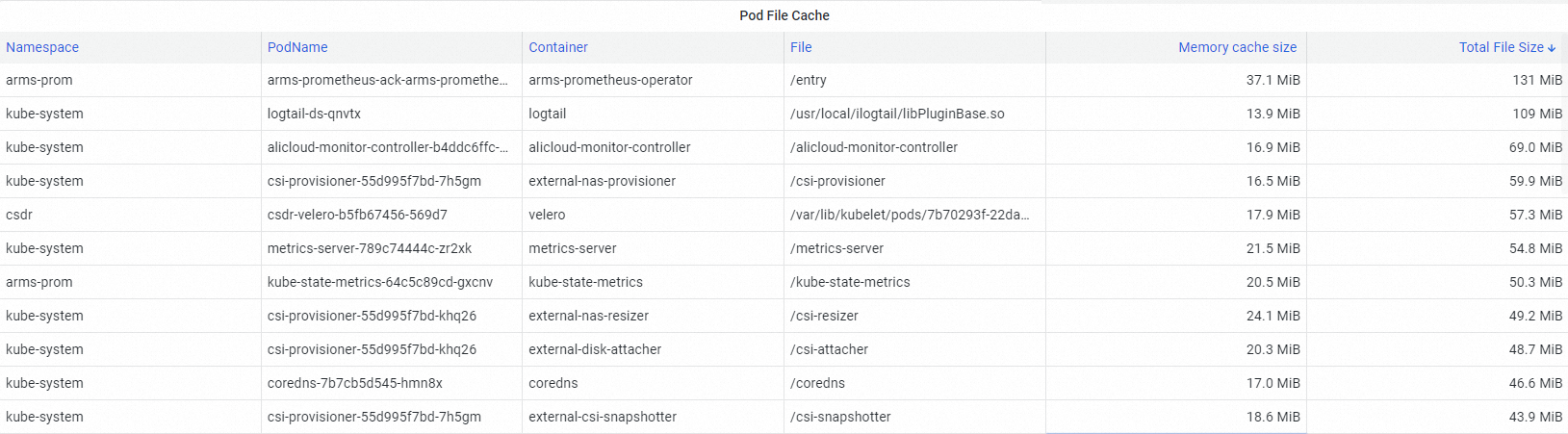

In the Pod File Cache section, identify what is driving high cache memory usage.

A large file cache in a pod increases working set usage. When this cached memory is not reclaimed, it becomes a memory black hole — one that counts toward the pod's memory limit and can trigger eviction or OOM kills without any increase in application memory usage.

Step 4: Fix the memory black hole

After identifying the memory black hole, use the fine-grained scheduling feature of ACK to resolve it. For configuration steps, see Enable container memory QoS.

What's next

- For the full list of metrics available in SysOM, see SysOM kernel-level container monitoring.

- For the kernel capabilities that ACK memory QoS relies on, see Overview of kernel features and interfaces.