GPU inference workloads with cyclical traffic or slow pod initialization create a scaling problem: standard HPA reacts only after utilization thresholds are crossed, which can be too late to avoid resource exhaustion or latency spikes. The Advanced Horizontal Pod Autoscaler (AHPA) solves this by combining real-time GPU metrics from Prometheus, historical load trends, and prediction algorithms to scale pods proactively—before demand arrives.

Use this guide if your workload has one or more of these characteristics:

Cyclical or time-of-day traffic patterns (for example, high GPU utilization during business hours, idle overnight)

Pods that take significant time to initialize, causing latency spikes during reactive scale-out

Cost sensitivity that requires accurate scale-in when GPUs are idle

Prerequisites

Before you begin, ensure that you have:

An ACK managed cluster with GPU-accelerated nodes. See Create an ACK cluster with GPU-accelerated nodes

AHPA installed and data sources configured. See AHPA overview

Managed Service for Prometheus enabled with at least 7 days of application statistics collected, including GPU resource usage details

AHPA's prediction quality depends directly on historical data. Do not skip or abbreviate the 7-day data collection period—insufficient history produces inaccurate predictions and may cause AHPA to under-provision or over-provision replicas.

How it works

Managed Service for Prometheus collects real-time GPU utilization and memory usage from your workloads. Prometheus Adapter converts these metrics into the format that Kubernetes recognizes and exposes them to AHPA. AHPA combines current metrics with historical load trends and prediction algorithms to forecast future GPU demand, scaling pods before utilization spikes rather than after.

Unlike standard HPA—which scales only after a threshold is crossed—AHPA completes scale-out before resources run out and scales in automatically when GPUs are idle.
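To make the contrast concrete, the threshold rule that standard HPA applies can be written as desired = ceil(currentReplicas × currentUtilization / targetUtilization), clamped to the replica bounds. The sketch below implements only that reactive rule. The function name is made up for illustration, the calculation ignores HPA's tolerance band and stabilization windows, and it does not reproduce AHPA's prediction algorithm, which this guide does not specify.

```shell
#!/bin/sh
# Reactive (standard HPA style) replica calculation, for comparison only.
# Usage: reactive_desired_replicas CURRENT_REPLICAS CURRENT_UTIL TARGET_UTIL MIN MAX
# All arguments are non-negative integers; TARGET_UTIL must be greater than 0.
reactive_desired_replicas() {
  replicas=$1 current=$2 target=$3 min=$4 max=$5
  # Integer ceiling of replicas * current / target.
  desired=$(( (replicas * current + target - 1) / target ))
  # Clamp the result to the configured replica bounds.
  if [ "$desired" -lt "$min" ]; then desired=$min; fi
  if [ "$desired" -gt "$max" ]; then desired=$max; fi
  echo "$desired"
}

reactive_desired_replicas 2 50 20 1 100   # prints 5
```

Because the inputs are current utilization values, this rule can only react after the threshold is crossed, which is the gap that prediction-based scaling is meant to close.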

Step 1: Deploy the metrics adapter

Get the internal HTTP API endpoint of your cluster's Prometheus instance.

Log on to the ARMS console.

In the left-side navigation pane, choose Managed Service for Prometheus > Instances.

At the top of the Instances page, select the region where your ACK cluster is located.

Click the name of the Managed Service for Prometheus instance. In the left-side navigation pane of the instance details page, click Settings and record the endpoint shown in the HTTP API URL section.
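Before you paste the endpoint into the adapter, it can help to confirm that it answers Prometheus HTTP API queries. The sketch below assumes that the recorded URL serves the standard /api/v1/query path and that you run it from a machine that can reach the internal endpoint (for example, a node in the same VPC). PROM_ENDPOINT and both function names are placeholders for illustration, not names from the console.

```shell
#!/bin/sh
# Build the instant-query URL for a Prometheus-compatible endpoint.
# A single trailing slash on the endpoint is removed first.
prom_query_url() {
  echo "${1%/}/api/v1/query"
}

# Run an instant query; --data-urlencode lets curl escape the PromQL expression.
# Usage: prom_query ENDPOINT PROMQL
prom_query() {
  curl -sS -G --data-urlencode "query=$2" "$(prom_query_url "$1")"
}

# Example (uncomment and substitute the endpoint you recorded):
# PROM_ENDPOINT="http://<recorded-endpoint>"
# prom_query "$PROM_ENDPOINT" 'up'
```

A JSON reply whose status field is "success" suggests that the endpoint is reachable and speaks the Prometheus query API.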

Deploy ack-alibaba-cloud-metrics-adapter.

Log on to the ACK console. In the left-side navigation pane, choose Marketplace > Marketplace.

On the Marketplace page, click the App Catalog tab, find ack-alibaba-cloud-metrics-adapter, and click it.

In the upper-right corner of the ack-alibaba-cloud-metrics-adapter page, click Deploy.

On the Basic Information page, specify Cluster and Namespace, then click Next.

On the Parameters page, set Chart Version and enter the internal HTTP API endpoint you recorded in the previous step as the value of the prometheus.url parameter. Click OK.

Step 2: Configure AHPA for GPU-based predictive scaling

This example deploys a BERT intent detection inference service, then configures AHPA to scale pods when GPU utilization exceeds 20%.

Deploy the inference service

Deploy the inference service:

```shell
cat <<EOF | kubectl create -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: bert-intent-detection
spec:
  replicas: 1
  selector:
    matchLabels:
      app: bert-intent-detection
  template:
    metadata:
      labels:
        app: bert-intent-detection
    spec:
      containers:
      - name: bert-container
        image: registry.cn-hangzhou.aliyuncs.com/ai-samples/bert-intent-detection:1.0.1
        ports:
        - containerPort: 80
        resources:
          limits:
            cpu: "1"
            memory: 2G
            nvidia.com/gpu: "1"
          requests:
            cpu: 200m
            memory: 500M
            nvidia.com/gpu: "1"
---
apiVersion: v1
kind: Service
metadata:
  name: bert-intent-detection-svc
  labels:
    app: bert-intent-detection
spec:
  selector:
    app: bert-intent-detection
  ports:
  - protocol: TCP
    name: http
    port: 80
    targetPort: 80
  type: LoadBalancer
EOF
```

Verify the pod is running:

```shell
kubectl get pods -o wide
```

Expected output:

```
NAME                                    READY   STATUS    RESTARTS   AGE     IP           NODE                        NOMINATED NODE   READINESS GATES
bert-intent-detection-7b486f6bf-f****   1/1     Running   0          3m24s   10.15.1.17   cn-beijing.192.168.94.107   <none>           <none>
```

Test the inference service. Get the external IP address first:

```shell
kubectl get svc bert-intent-detection-svc
```

Replace 47.95.XX.XX with the IP address returned, then send a test request:

```shell
curl -v "http://47.95.XX.XX/predict?query=Music"
```

Expected output:

```
*   Trying 47.95.XX.XX...
* TCP_NODELAY set
* Connected to 47.95.XX.XX (47.95.XX.XX) port 80 (#0)
> GET /predict?query=Music HTTP/1.1
> Host: 47.95.XX.XX
> User-Agent: curl/7.64.1
> Accept: */*
>
* HTTP 1.0, assume close after body
< HTTP/1.0 200 OK
< Content-Type: text/html; charset=utf-8
< Content-Length: 9
< Server: Werkzeug/1.0.1 Python/3.6.9
< Date: Wed, 16 Feb 2022 03:52:11 GMT
<
* Closing connection 0
PlayMusic
```

An HTTP 200 response with a query result confirms that the inference service is running.
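The lookup and the test request above can also be chained so that you do not copy the IP address by hand. This is a minimal sketch: the two function names are invented for illustration, and it assumes the Service reports an IP address (not a hostname) under status.loadBalancer.ingress, which may not hold for every load balancer type.

```shell
#!/bin/sh
# Build the test URL for the sample service's /predict endpoint.
# Usage: predict_url IP QUERY
predict_url() {
  echo "http://$1/predict?query=$2"
}

# Print the LoadBalancer IP of the sample Service (empty until one is assigned).
service_ip() {
  kubectl get svc bert-intent-detection-svc \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Example (requires access to the cluster):
# curl -s "$(predict_url "$(service_ip)" Music)"
```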

Configure data sources for AHPA

Create a file named application-intelligence.yaml with the following content. Set prometheusUrl to the internal endpoint you recorded in Step 1.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: application-intelligence
  namespace: kube-system
data:
  prometheusUrl: "http://cn-shanghai-intranet.arms.aliyuncs.com:9090/api/v1/prometheus/da9d7dece901db4c9fc7f5b*******/1581204543170*****/c54417d182c6d430fb062ec364e****/cn-shanghai"
```

Apply the ConfigMap:

```shell
kubectl apply -f application-intelligence.yaml
```

Deploy AHPA

Create a file named fib-gpu.yaml with the following content. This example uses observer mode, which lets AHPA collect prediction data without acting on it. Use observer mode to validate predictions before enabling automatic scaling. For the full parameter reference, see Parameters.

```yaml
apiVersion: autoscaling.alibabacloud.com/v1beta1
kind: AdvancedHorizontalPodAutoscaler
metadata:
  name: fib-gpu
  namespace: default
spec:
  metrics:
  - resource:
      name: gpu
      target:
        averageUtilization: 20   # Scale when average GPU utilization across pods exceeds 20%
        type: Utilization
    type: Resource
  minReplicas: 0
  maxReplicas: 100
  prediction:
    quantile: 95          # Use the 95th percentile of historical values (conservative estimate)
    scaleUpForward: 180   # Start scaling 180 seconds before predicted demand peaks
  scaleStrategy: observer # observer: collect predictions without scaling; change to auto to enable scaling
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: bert-intent-detection
  instanceBounds:         # Time-based replica bounds that override minReplicas/maxReplicas during each window
  - startTime: "2021-12-16 00:00:00"
    endTime: "2022-12-16 00:00:00"
    bounds:
    - cron: "* 0-8 ? * MON-FRI"     # Off-peak hours: allow scale-in to 4 replicas
      maxReplicas: 50
      minReplicas: 4
    - cron: "* 9-15 ? * MON-FRI"    # Business hours: keep at least 10 replicas
      maxReplicas: 50
      minReplicas: 10
    - cron: "* 16-23 ? * MON-FRI"   # Evening peak: keep at least 12 replicas
      maxReplicas: 50
      minReplicas: 12
```

Key parameters:

| Parameter | Description |
| --- | --- |
| averageUtilization | GPU utilization threshold (%) that triggers scaling across all pods targeted by AHPA |
| quantile | Percentile of historical data used to generate predictions. Higher values are more conservative and reduce the risk of under-provisioning |
| scaleUpForward | How many seconds before predicted demand AHPA starts scaling out |
| scaleStrategy | observer: predict and report only. auto: predict and act |
| instanceBounds | Cron-based replica floor and ceiling overrides for specific time windows |

Deploy AHPA:

```shell
kubectl apply -f fib-gpu.yaml
```

Verify AHPA status:

```shell
kubectl get ahpa
```

Expected output:

```
NAME      STRATEGY   REFERENCE               METRIC   TARGET(%)   CURRENT(%)   DESIREDPODS   REPLICAS   MINPODS   MAXPODS   AGE
fib-gpu   observer   bert-intent-detection   gpu      20          0            0             1          10        50        6d19h
```

CURRENT(%) is 0 and TARGET(%) is 20, indicating GPU utilization is currently 0% and scaling triggers when it exceeds 20%.
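The instanceBounds windows in fib-gpu.yaml can be read as a lookup from the current hour and weekday to a replica floor. The sketch below hard-codes the three sample windows to show which minReplicas value would apply at a given time. The function name is hypothetical, the startTime/endTime range is ignored, and the weekend fallback to spec.minReplicas (0) is an assumption drawn from the description of instanceBounds as an override, not confirmed behavior.

```shell
#!/bin/sh
# Return the minReplicas floor that the sample instanceBounds would select.
# Usage: min_replicas_at HOUR DAY   (HOUR is 0-23, DAY is MON..SUN)
min_replicas_at() {
  hour=$1 day=$2
  case "$day" in
    MON|TUE|WED|THU|FRI) ;;   # the sample windows only match weekdays
    *) echo 0; return ;;      # assumed fallback: spec.minReplicas
  esac
  if [ "$hour" -le 8 ]; then
    echo 4                    # cron "* 0-8 ? * MON-FRI"
  elif [ "$hour" -le 15 ]; then
    echo 10                   # cron "* 9-15 ? * MON-FRI"
  else
    echo 12                   # cron "* 16-23 ? * MON-FRI"
  fi
}

min_replicas_at 10 TUE   # prints 10
```

Under this reading, the MINPODS value of 10 in the status output would correspond to a weekday between 09:00 and 15:59.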

Step 3: Test autoscaling and evaluate predictions

Generate load

Deploy fib-loader to send continuous requests to the inference service:

```shell
# The heredoc delimiter is quoted so that the local shell does not expand
# ${NAMESPACE}; the container's sh expands it at runtime from the env entry below.
kubectl apply -f - <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: fib-loader
  namespace: default
spec:
  progressDeadlineSeconds: 600
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: fib-loader
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: fib-loader
    spec:
      containers:
      - args:
        - -c
        - |
          /ko-app/fib-loader --service-url="http://bert-intent-detection-svc.${NAMESPACE}/predict?query=Music" --save-path=/tmp/fib-loader-chart.html
        command:
        - sh
        env:
        - name: NAMESPACE
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: metadata.namespace
        image: registry.cn-huhehaote.aliyuncs.com/kubeway/knative-sample-fib-loader:20201126-110434
        imagePullPolicy: IfNotPresent
        name: loader
        ports:
        - containerPort: 8090
          name: chart
          protocol: TCP
        resources:
          limits:
            cpu: "8"
            memory: 16000Mi
          requests:
            cpu: "2"
            memory: 4000Mi
EOF
```

Verify scaling behavior

Check AHPA status under load:

```shell
kubectl get ahpa
```

Expected output:

```
NAME      STRATEGY   REFERENCE               METRIC   TARGET(%)   CURRENT(%)   DESIREDPODS   REPLICAS   MINPODS   MAXPODS   AGE
fib-gpu   observer   bert-intent-detection   gpu      20          189          10            4          10        50        6d19h
```

CURRENT(%) is 189, which exceeds TARGET(%) of 20. AHPA recommends scaling to 10 pods (DESIREDPODS). Because scaleStrategy is observer, no actual scaling occurs yet.
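If you check this status repeatedly during a load test, a small filter can condense each row into one line. The sketch below is only a convenience wrapper around the output format shown in this guide: the function name is made up, and the column positions are taken from the header above, so it would need adjusting if a different AHPA version prints other columns.

```shell
#!/bin/sh
# Summarize `kubectl get ahpa` output read from stdin.
# Column positions follow the header shown in this guide:
# NAME STRATEGY REFERENCE METRIC TARGET(%) CURRENT(%) DESIREDPODS REPLICAS ...
summarize_ahpa() {
  awk 'NR > 1 {
    # Compare CURRENT(%) against TARGET(%) numerically.
    state = ($6 + 0 > $5 + 0) ? "above target" : "within target"
    printf "%s [%s]: %s%% vs %s%% (%s), desired=%s running=%s\n",
           $1, $2, $6, $5, state, $7, $8
  }'
}

# Example (requires access to the cluster):
# kubectl get ahpa | summarize_ahpa
```

For the row above, this would print: fib-gpu [observer]: 189% vs 20% (above target), desired=10 running=4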

Review prediction results

Retrieve the prediction chart to compare predicted versus actual GPU utilization:

```shell
kubectl get --raw '/apis/metrics.alibabacloud.com/v1beta1/namespaces/default/predictionsobserver/fib-gpu' | jq -r '.content' | base64 -d > observer.html
```

Open observer.html to see the prediction results based on the last 7 days of GPU data.

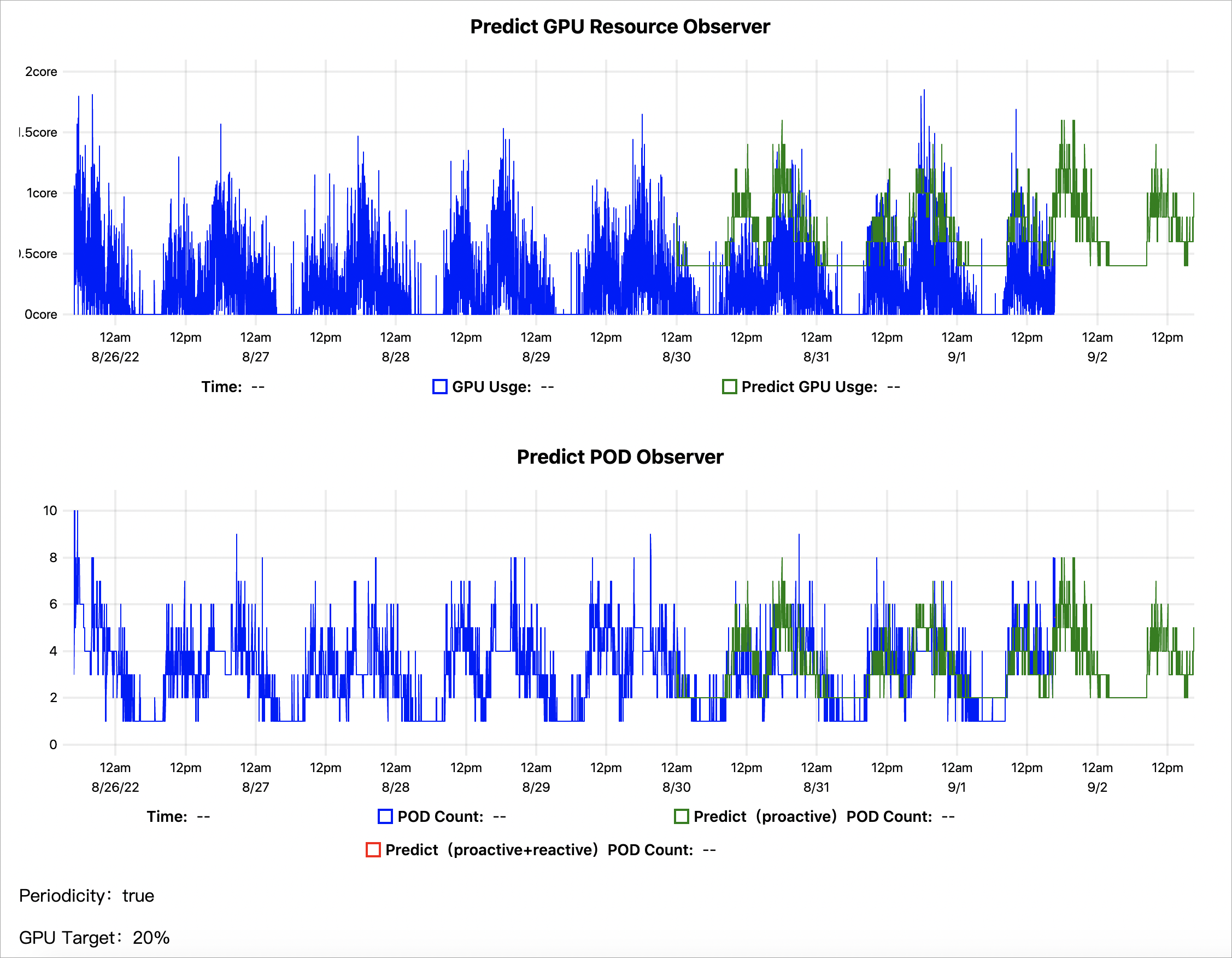

The chart contains two views:

Predict GPU Resource Observer: The blue line shows actual GPU utilization; the green line shows AHPA's prediction. A well-calibrated prediction runs slightly above actual utilization to ensure enough capacity is reserved.

Predict POD Observer: The blue line shows actual replica counts from scaling events; the green line shows AHPA's predicted replica counts. When predicted pod counts are lower than actual counts, switching to auto mode lets AHPA pre-scale more efficiently and reduce over-provisioning.

Switch to automatic scaling

Review the prediction chart before switching to auto mode. The prediction is ready when both conditions are true:

The predicted GPU utilization (green line) consistently tracks above—but close to—actual utilization (blue line), without large gaps that would indicate over-provisioning

The predicted pod count (green line) approximates the actual scaling events (blue line) over several business cycles
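If you prefer a number to an eyeball check of the chart, the first condition can be approximated by counting how often the prediction sits at or above actual utilization and how large the gap is on average. The sketch below assumes you have exported the two series yourself as lines of "actual predicted" pairs; that input format and the function name are hypothetical and are not produced by AHPA. It deliberately reports figures only and leaves the judgment of what counts as close enough to you.

```shell
#!/bin/sh
# Read "actual predicted" pairs (one pair per line) from stdin and report
# how often the prediction covered actual usage and the average headroom.
prediction_coverage() {
  awk 'NF == 2 {
    n++
    if ($2 >= $1) covered++     # prediction at or above actual
    headroom += $2 - $1         # positive when the prediction is above actual
  }
  END {
    if (n == 0) { print "no samples"; exit }
    printf "coverage=%.0f%% mean_headroom=%.2f\n", 100 * covered / n, headroom / n
  }'
}

# Example with four illustrative samples (not measured data):
printf '10 12\n20 25\n30 28\n40 44\n' | prediction_coverage
```

High coverage with a small mean headroom corresponds to a green line that tracks just above the blue line; a large mean headroom points to over-provisioning.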

When the predictions are stable, update scaleStrategy in fib-gpu.yaml:

```yaml
scaleStrategy: auto
```

Apply the change:

```shell
kubectl apply -f fib-gpu.yaml
```

With auto mode active, AHPA scales pods based on predictions rather than waiting for GPU utilization thresholds to be crossed.
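As an alternative to editing the file, the same field can be changed on the live object with a JSON merge patch. This is a sketch rather than a documented AHPA procedure: the helper name is invented, it accepts only the two strategy values this guide describes, and it assumes the ahpa resource name used with kubectl get also works with kubectl patch.

```shell
#!/bin/sh
# Build a JSON merge patch that sets spec.scaleStrategy.
# Only the two values described in this guide are accepted.
scale_strategy_patch() {
  case "$1" in
    observer|auto) printf '{"spec":{"scaleStrategy":"%s"}}\n' "$1" ;;
    *) echo "unsupported strategy: $1" >&2; return 1 ;;
  esac
}

# Example (requires access to the cluster):
# kubectl patch ahpa fib-gpu -n default --type merge -p "$(scale_strategy_patch auto)"
```

A live patch leaves fib-gpu.yaml out of date, so update the file as well if you keep it under version control. Passing observer to the same helper switches back to prediction-only mode.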

What's next

Serverless GPU inference with Knative: AHPA works in serverless Kubernetes (ASK) clusters. For workloads with periodic traffic patterns, AHPA predicts resource changes and prefetches capacity to reduce cold start impact. See Use AHPA to enable predictive scaling in Knative.

Custom metrics-based scaling: To scale based on HTTP QPS, message queue length, or other application metrics, use the External Metrics mechanism with alibaba-cloud-metrics-adapter. See Use AHPA to configure custom metrics for application scaling.