CPU and memory metrics don't always reflect real application load. When your scaling signal is business-level — such as HTTP requests per second (RPS) or message queue depth — custom metrics give you a more accurate trigger. This guide shows how to configure AdvancedHorizontalPodAutoscaler (AHPA) with the ack-alibaba-cloud-metrics-adapter component to autoscale a deployment based on a Prometheus-scraped metric.

AHPA uses the Kubernetes External Metrics API, which lets it query any metric available in your Prometheus instance — not just pod-level metrics. Compared to standard HPA custom metrics (Pods/Object types), External Metrics provide broader flexibility. Use External Metrics when your scaling signal comes from a monitoring service such as Managed Service for Prometheus.

Prerequisites

Before you begin, ensure that you have:

- An ACK cluster with Managed Service for Prometheus enabled

Step 1: Deploy the sample app and configure metric scraping

This step deploys a sample app that exposes requests_per_second as a Prometheus metric, along with a load generator that sends traffic to it, then configures Managed Service for Prometheus to scrape the metric.

Deploy the app and load generator

- Log on to the ACK console. In the left navigation pane, click Clusters.

- Click the name of your cluster. In the left navigation pane, click Workloads > Deployments.

- On the Deployments page, click Create from YAML. Paste the following YAML, then click Create. This deploys:

  - sample-app: a server that exposes the requests_per_second metric at /metrics on port 8080

  - fib-loader-qps: a load generator that sends traffic to sample-app

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: sample-app
      labels:
        app: sample-app
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: sample-app
      template:
        metadata:
          labels:
            app: sample-app
        spec:
          containers:
          - image: registry.cn-hangzhou.aliyuncs.com/acs/knative-sample-fib-server:v1
            name: metrics-provider
            ports:
            - name: http
              containerPort: 8080
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: sample-app
      namespace: default
      labels:
        app: sample-app
    spec:
      ports:
      - port: 8080
        name: http
        protocol: TCP
        targetPort: 8080
      selector:
        app: sample-app
      type: ClusterIP
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: fib-loader-qps
      namespace: default
    spec:
      progressDeadlineSeconds: 600
      replicas: 1
      revisionHistoryLimit: 10
      selector:
        matchLabels:
          app: fib-loader-qps
      strategy:
        rollingUpdate:
          maxSurge: 25%
          maxUnavailable: 25%
        type: RollingUpdate
      template:
        metadata:
          creationTimestamp: null
          labels:
            app: fib-loader-qps
        spec:
          containers:
          - args:
            - -c
            - |
              /ko-app/fib-loader --service-url="http://sample-app.${NAMESPACE}:8080/" --save-path=/tmp/fib-loader-chart.html
            command:
            - sh
            env:
            - name: NAMESPACE
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: metadata.namespace
            image: registry.cn-huhehaote.aliyuncs.com/kubeway/knative-sample-fib-loader:20201126-110434
            imagePullPolicy: IfNotPresent
            name: loader
            ports:
            - containerPort: 8090
              name: chart
              protocol: TCP
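Once the resources are created, the rollout can also be confirmed from a terminal. The following is a minimal sketch, assuming kubectl is configured for the cluster and both Deployments live in the default namespace. The function name wait_for_sample_rollouts and the KUBECTL override variable are illustrative and not part of ACK.

```shell
#!/usr/bin/env bash
# Illustrative helper (not part of ACK): wait for both sample Deployments to roll out.
# KUBECTL is a hypothetical override hook; it defaults to the plain kubectl binary.
KUBECTL="${KUBECTL:-kubectl}"

wait_for_sample_rollouts() {
  local namespace="${1:-default}"
  local name
  for name in sample-app fib-loader-qps; do
    # "rollout status" blocks until the Deployment is ready or the timeout expires.
    "$KUBECTL" -n "$namespace" rollout status "deployment/$name" --timeout=120s || return 1
  done
}
```

Calling `wait_for_sample_rollouts default` returns a non-zero status if either Deployment does not become ready within the timeout.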

Enable the ServiceMonitor in ARMS

-

Log on to the ARMS console. In the left navigation pane, click Integration Management.

-

On the Integrated Environments tab, click the Container Service tab. Find your ACK instance and click Metric Scraping in the Actions column.

-

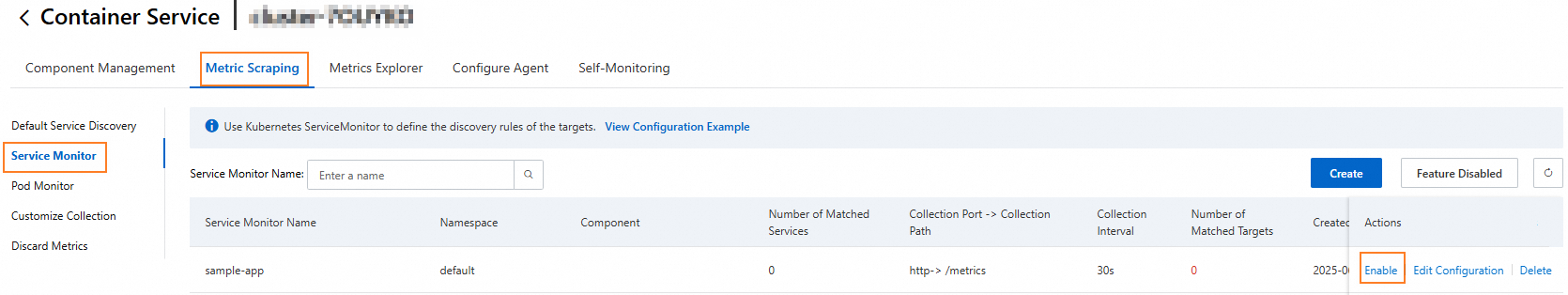

On the Metric Collection page, click the Service Monitor tab. Find

sample-app, click Enable in the Actions column, and confirm.
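The manifest in this step does not itself define a ServiceMonitor. If sample-app does not appear on the Service Monitor tab, a resource along the following lines may be needed. This is a sketch under assumptions: it uses the Prometheus Operator monitoring.coreos.com/v1 schema, which is assumed here to be what Managed Service for Prometheus discovers, and the 30s interval and the function name are illustrative choices.

```shell
#!/usr/bin/env bash
# Print a hypothetical ServiceMonitor for sample-app (Prometheus Operator schema).
# Assumptions: monitoring.coreos.com/v1 is available in the cluster; the 30s interval is arbitrary.
sample_app_servicemonitor() {
  cat <<'EOF'
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: sample-app
  namespace: default
spec:
  selector:
    matchLabels:
      app: sample-app    # matches the label on the sample-app Service
  endpoints:
  - port: http           # the named Service port (8080)
    path: /metrics
    interval: 30s
EOF
}
```

The output can be piped to the cluster with `sample_app_servicemonitor | kubectl apply -f -`.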

Step 2: Deploy the metrics adapter

The metrics adapter bridges Managed Service for Prometheus to the Kubernetes External Metrics API so AHPA can query your custom metric.

Get the Prometheus internal endpoint

-

Log on to the ARMS console. In the left navigation pane, choose Managed Service for Prometheus > Instances.

-

On the Prometheus Instances page, select the region of your instance and click the instance name. Instance names follow the format

arms_metrics_{RegionId}_XXX. -

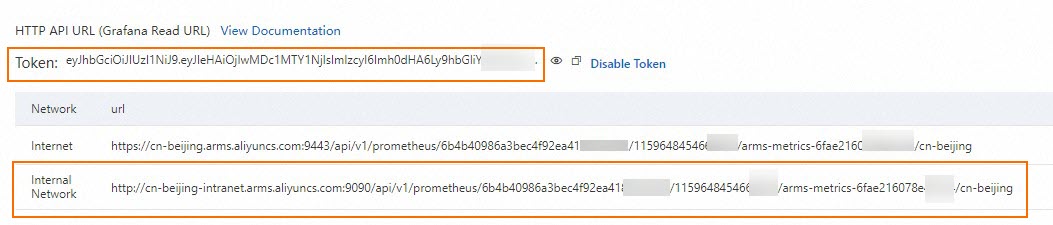

In the left navigation bar, click Settings. Under HTTP API Address (Grafana Read Address), copy the Internal network URL.

-

If token-based authentication is enabled for your instance, also copy the access token.

-

Install ack-alibaba-cloud-metrics-adapter

- In the ACK console, click Marketplace > Marketplace in the left navigation pane.

- Click the App Catalog tab, search for ack-alibaba-cloud-metrics-adapter, and click Deploy in the upper-right corner.

- On the Basic Information page, select your Cluster and Namespace, then click Next.

- In the Parameter Configuration wizard, select a Chart Version. In the Parameters area, set the following values, then click OK.

  | Parameter | Required | Description |
  |---|---|---|
  | prometheus.url | Yes | Internal network URL of the Managed Service for Prometheus instance |
  | prometheus.prometheusHeader | No | Authorization header for token-based authentication. Leave blank if authentication is not enabled. |

      prometheus:
        enabled: true
        # Required: the internal network URL of Managed Service for Prometheus
        url: http://cn-beijing-intranet.arms.aliyuncs.com:9090/api/v1/prometheus/<instance-id>/<uid>/<cluster-id>/<region>
        # Required only if token-based authentication is enabled
        prometheusHeader:
        - Authorization: <your-access-token>

Configure custom metrics

- In the ACK console, click Applications > Helm in the left navigation pane. Find alibaba-cloud-metrics-adapter and click Update in the Actions column.

- Copy the following YAML and paste it over the corresponding parameters in the template, then click Update. This is a partial snippet: overwrite only the matching parameters and do not replace the entire Helm values file. Replace requests_per_second with the actual name of your Prometheus metric.

  | Parameter | Required | Description |
  |---|---|---|
  | seriesQuery | Yes | The metric name in Prometheus. Must match exactly. |
  | name.as | Yes | The name exposed via the External Metrics API. AHPA references this name. |
  | metricsQuery | Yes | The PromQL aggregation applied when AHPA queries the metric. |
  | enabled | Yes | Set to true to activate the Prometheus adapter. |

      ......
      prometheus:
        adapter:
          rules:
            custom:
            - metricsQuery: sum(<<.Series>>{<<.LabelMatchers>>})
              name:
                as: requests_per_second # Name exposed to the External Metrics API
              resources:
                overrides:
                  namespace:
                    resource: namespace
              seriesQuery: requests_per_second # Must match the metric name in Prometheus
            default: false
          enabled: true # Set to true to enable the Prometheus adapter
      ......

- Verify the metric is available via the External Metrics API:

      kubectl get --raw "/apis/external.metrics.k8s.io/v1beta1/namespaces/default/requests_per_second"

  Expected output:

      {"kind":"ExternalMetricValueList","apiVersion":"external.metrics.k8s.io/v1beta1","metadata":{},"items":[{"metricName":"requests_per_second","metricLabels":{},"timestamp":"2023-08-15T07:59:09Z","value":"10"}]}

  If the metric appears in the response, the adapter is correctly configured.
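For scripted checks, the metric value can be pulled out of the response without extra tooling. The following is a minimal sketch, assuming the compact single-line JSON shown in the expected output. The function names are illustrative, and a JSON-aware tool would be more robust where one is available.

```shell
#!/usr/bin/env bash
# Read an ExternalMetricValueList JSON document on stdin and print the first "value" field.
# Assumption: compact JSON with no whitespace between the key, colon, and value.
first_metric_value() {
  grep -o '"value":"[^"]*"' | head -n 1 | cut -d'"' -f4
}

# Convert a Kubernetes quantity to a whole number.
# Only the milli suffix (m) is handled; milli values are divided by 1000, rounding down.
quantity_to_int() {
  case "$1" in
    *m) echo $(( ${1%m} / 1000 )) ;;
    *)  echo "$1" ;;
  esac
}
```

For example, piping the output of the kubectl get --raw command into `first_metric_value` prints the raw quantity, which `quantity_to_int` then normalizes.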

Step 3: Create an AHPA resource

With the External Metrics API returning data, create an AHPA resource to autoscale sample-app based on requests_per_second.

- Apply the following YAML. Adjust the metric name, the averageValue threshold, and minReplicas/maxReplicas to match your workload.

  This example sets minReplicas: 0, which allows AHPA to scale the deployment to zero. When the deployment is at zero replicas, AHPA handles the 0-to-1 transition, which requires the metric value to exceed the averageValue threshold. Once at least one replica is running, the standard scaling logic (1 to N replicas) applies based on the averageValue threshold.

  | Parameter | Description |
  |---|---|
  | metrics[].external.metric.name | Must match the name.as value in the adapter configuration. |
  | target.averageValue | Trigger threshold per pod. Scale out when the metric exceeds this value. |
  | prediction.quantile | Percentile of the historical metric forecast used for proactive scaling. |
  | prediction.scaleUpForward | Seconds to scale ahead of a predicted traffic spike. |
  | scaleStrategy | Set to observer to let AHPA observe and report without enforcing scale decisions. |
  | instanceBounds | Time-based min/max replica overrides using cron expressions. |

      apiVersion: autoscaling.alibabacloud.com/v1beta1
      kind: AdvancedHorizontalPodAutoscaler
      metadata:
        name: customer-deployment
        namespace: default
      spec:
        metrics:
        - external:
            metric:
              name: requests_per_second # Must match name.as in the adapter config
              selector:
                matchLabels:
                  namespace: default
                  service: sample-app
            target:
              type: AverageValue
              averageValue: 10 # Scale out when average RPS per pod exceeds 10
          type: External
        minReplicas: 0
        maxReplicas: 50
        prediction:
          quantile: 95 # Use the 95th-percentile forecast for proactive scaling
          scaleUpForward: 180 # Pre-scale 180 seconds before predicted demand spike
        scaleStrategy: observer
        scaleTargetRef:
          apiVersion: apps/v1
          kind: Deployment
          name: sample-app
        instanceBounds:
        - startTime: "2023-08-01 00:00:00"
          endTime: "2033-08-01 00:00:00"
          bounds:
          - cron: "* 0-8 ? * MON-FRI"
            maxReplicas: 50
            minReplicas: 4
          - cron: "* 9-15 ? * MON-FRI"
            maxReplicas: 50
            minReplicas: 5
          - cron: "* 16-23 ? * MON-FRI"
            maxReplicas: 50
            minReplicas: 1

- Verify that AHPA is scaling correctly:

      kubectl get ahpa

  Expected output:

      NAME                  STRATEGY   REFERENCE               METRIC                TARGETS     DESIREDPODS   REPLICAS   MINPODS   MAXPODS   AGE
      customer-deployment   observer   Deployment/sample-app   requests_per_second   60000m/10   6             1          1         50        7h53m

  Kubernetes expresses metric values in milli-units (m) for precision. In this example, 60000m equals 60 requests per second. With a threshold of 10, AHPA calculates DESIREDPODS as 6 (60 / 10). REPLICAS remains at 1 because the observer strategy reports the desired count without applying it.
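The DESIREDPODS arithmetic can be reproduced by hand. The sketch below assumes the calculation follows the same ceiling rule as the standard HPA for AverageValue targets, that is, the total metric divided by the per-pod target, rounded up. This is consistent with the 60 / 10 = 6 example but is not confirmed here for AHPA's predictive path. The function name is illustrative.

```shell
#!/usr/bin/env bash
# desired_replicas TOTAL TARGET
#   TOTAL  - metric total, either in milli-units as kubectl shows it (60000m) or as a whole number (60)
#   TARGET - per-pod averageValue as a whole number (10)
# Assumption: ceiling division, as in the standard HPA formula for AverageValue targets.
desired_replicas() {
  local total_milli
  case "$1" in
    *m) total_milli="${1%m}" ;;
    *)  total_milli=$(( $1 * 1000 )) ;;
  esac
  local target_milli=$(( $2 * 1000 ))
  # Adding (target - 1) before dividing rounds the integer division up.
  echo $(( (total_milli + target_milli - 1) / target_milli ))
}
```

With the values from the output above, `desired_replicas 60000m 10` yields 6, and any load slightly above 60 requests per second rounds up to 7.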

Troubleshoot AHPA scaling issues

If AHPA is not scaling as expected, inspect the status conditions:

    kubectl describe ahpa customer-deployment

Check the Conditions section of the output. Key fields:

| Field | What to check |
|---|---|
| AbleToScale | Whether AHPA can issue scale decisions. False indicates a controller or permission issue. |
| ScalingActive | Whether AHPA successfully retrieved the metric. False usually means the External Metrics API is not returning data. |
| ScalingLimited | Whether the current replica count is constrained by minReplicas, maxReplicas, or instanceBounds. |
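To see only these conditions rather than the full describe output, a jsonpath query can help. This is a sketch under an assumption: that the AHPA object stores its conditions at .status.conditions with type and status fields, as the Conditions section suggests. The function name and the KUBECTL override variable are illustrative.

```shell
#!/usr/bin/env bash
# Print each AHPA status condition as TYPE=STATUS, one per line.
# Assumption: conditions live at .status.conditions[] with "type" and "status" fields.
# KUBECTL is a hypothetical override hook; it defaults to the plain kubectl binary.
KUBECTL="${KUBECTL:-kubectl}"

ahpa_conditions() {
  local name="$1"
  local namespace="${2:-default}"
  "$KUBECTL" -n "$namespace" get ahpa "$name" \
    -o jsonpath='{range .status.conditions[*]}{.type}={.status}{"\n"}{end}'
}
```

Running `ahpa_conditions customer-deployment` would then print lines such as ScalingActive=False if that assumption about the status layout holds.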

If ScalingActive is False, re-run the verification command from Step 2 to confirm the metric is available:

    kubectl get --raw "/apis/external.metrics.k8s.io/v1beta1/namespaces/default/requests_per_second"

What's next

- To move from observer mode to active autoscaling, replace the observer value of scaleStrategy with an active scaling strategy.

- To apply this pattern to a different metric, update seriesQuery and name.as in the adapter configuration, then update metrics[].external.metric.name in the AHPA resource to match.
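Changing scaleStrategy does not require re-applying the whole manifest. The following is a minimal sketch that uses a JSON merge patch, assuming kubectl access to the cluster. The function name and the KUBECTL override variable are illustrative, and the strategy value is supplied by the caller: check the AHPA reference for the values your version accepts (auto is used in the usage note only as an assumed example).

```shell
#!/usr/bin/env bash
# Switch an AHPA resource to a different scaleStrategy with a JSON merge patch.
# The strategy value is not validated here; consult the AHPA reference for valid values.
# KUBECTL is a hypothetical override hook; it defaults to the plain kubectl binary.
KUBECTL="${KUBECTL:-kubectl}"

set_ahpa_strategy() {
  local name="$1"
  local strategy="$2"
  local namespace="${3:-default}"
  if [ -z "$name" ] || [ -z "$strategy" ]; then
    echo "usage: set_ahpa_strategy NAME STRATEGY [NAMESPACE]" >&2
    return 2
  fi
  "$KUBECTL" -n "$namespace" patch ahpa "$name" --type merge \
    -p "{\"spec\":{\"scaleStrategy\":\"$strategy\"}}"
}
```

For example, `set_ahpa_strategy customer-deployment auto` would patch the resource from this guide, after which kubectl get ahpa should show the new value in the STRATEGY column.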