Dify on Alibaba Cloud

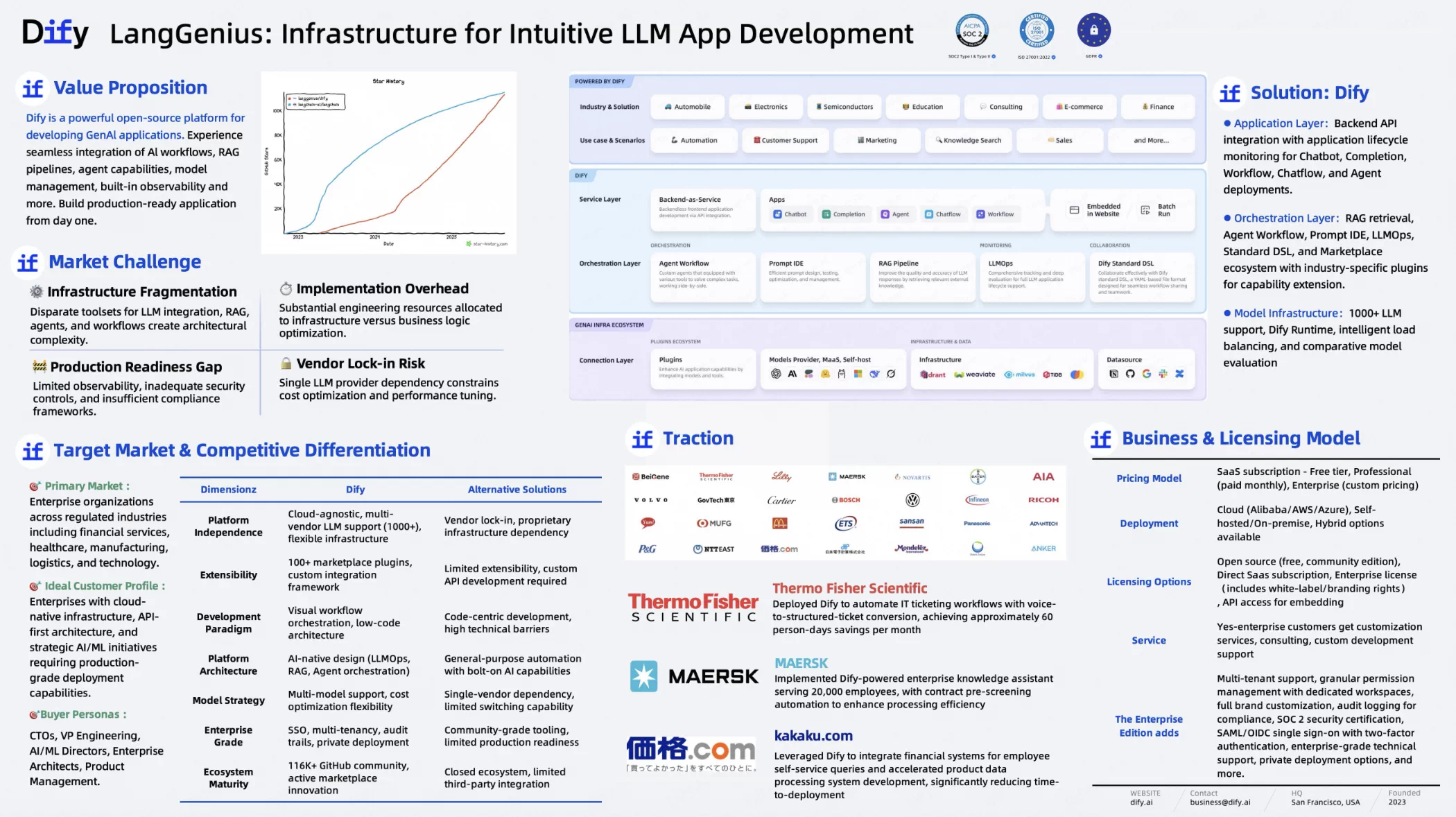

Dify.AI: Streamlining Enterprise LLM Application Development

Dify.AI offers an intuitive development environment that enables both technical and non-technical teams to build sophisticated AI applications without extensive coding expertise.

The platform abstracts the complexities of LLM integration, allowing organizations to focus on creating business value rather than managing technical infrastructure. From intelligent chatbots and content generation tools to knowledge management systems and custom workflows, Dify.AI supports a wide range of AI-powered applications.

Dify.AI is an enterprise-grade platform that streamlines the development and deployment of Large Language Model (LLM) applications. It enables businesses to rapidly turn AI concepts into production-ready solutions by providing user-friendly tools, abstracting technical complexity, and supporting diverse use cases like chatbots, content generation, and knowledge management.

Multi-Model Flexibility: Supports integration of LLMs from multiple providers (vendor-agnostic), allowing optimized selection based on performance, cost, and functionality.

Enterprise-Ready Infrastructure: Built with robust security, scalability, and compliance to meet strict corporate requirements.

Agile Development: Rapid prototyping and iterative testing reduce time-to-market, enabling faster user feedback and refinement.

Partner Ecosystem

Leverage diverse partnerships to scale solutions, integrate expertise, and drive innovation across industries.

Reseller Partners

Partners who resell Dify Enterprise while offering paid technical services and training at their own pricing, and who co-sell other products.

Service Partners

Partners who use consulting or other professional services to help clients learn and use Dify Enterprise. These partners provide implementation support, strategic consulting, and system integration services to help organizations adopt Dify.

High Business Continuity Requirement

Financial services are sensitive to service instability and data inaccuracies. Both regulators and customers require transactions to be processed accurately and quickly with near-zero downtime. FinTech companies therefore need solid business continuity capabilities, such as running on a reliable, highly available platform that can recover from disasters.

Solution

RAG Pipeline

A solution that extracts data from various sources, transforms it, and indexes it into vector databases for optimal use with large language models.

Enables AI applications to access and utilize company-specific or domain-specific knowledge, making LLMs more accurate and relevant by grounding responses in your own data rather than relying solely on pre-trained information.
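The extract, transform, index, and retrieve steps described above can be illustrated with a minimal, self-contained sketch. This is not Dify's implementation or API: the `InMemoryIndex` class, the word-count `embed` function, and the sample file names are all hypothetical stand-ins. A real pipeline would presumably use an embedding model and a vector database in their place.

```python
import math
import re
from collections import Counter


def chunk(text, max_words=40):
    """Transform step: split a document into fixed-size word windows."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy embedding (assumption): a bag-of-words term-count vector.
    A real pipeline would call an embedding model here."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class InMemoryIndex:
    """Hypothetical stand-in for a vector database."""

    def __init__(self):
        self.entries = []  # list of (vector, chunk_text, source)

    def add_document(self, source, text):
        # Index step: store one vector per chunk, tagged with its source.
        for piece in chunk(text):
            self.entries.append((embed(piece), piece, source))

    def search(self, query, k=2):
        # Retrieve step: rank stored chunks by similarity to the query.
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[0]), reverse=True)
        return [(source, piece) for _, piece, source in ranked[:k]]


def build_prompt(question, hits):
    """Ground the LLM prompt in the retrieved chunks."""
    context = "\n".join(f"[{source}] {piece}" for source, piece in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Sample documents are invented for illustration.
index = InMemoryIndex()
index.add_document("hr_policy.txt", "Employees accrue 15 days of paid leave per year.")
index.add_document("it_policy.txt", "Laptops are refreshed every three years.")

question = "How many days of paid leave do employees get?"
hits = index.search(question, k=1)
prompt = build_prompt(question, hits)
```

The resulting `prompt` carries the retrieved company-specific text alongside the question, which is what lets the model answer from your own data rather than from its pre-trained knowledge alone.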

Agentic Workflows

Visual drag-and-drop workflow builder that allows users to create sophisticated AI applications and workflows capable of handling diverse tasks and evolving business needs in minutes.

Enables rapid development without coding, with one case showing an estimated annual reduction of 18,000 hours and another saving 300 man-hours each month, dramatically accelerating AI implementation and ROI.

MCP Integration

Native Model Context Protocol (MCP) integration that bridges systems and platforms by accessing external APIs, databases, and services through standardized protocols, and the ability to publish Dify-built workflows as universal MCP servers.

Simplifies integration and reduces maintenance, enabling seamless AI-enterprise system connections and scaling solutions for unlimited MCP clients.
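To make the "workflow published as an MCP server" idea concrete, here is a minimal sketch of the message exchange involved. It assumes MCP's JSON-RPC 2.0 framing with the `tools/list` and `tools/call` methods; the `summarize_ticket` tool, its handler, and the in-process `handle` function are hypothetical and stand in for a published workflow. The transport layer and the initialization handshake are omitted.

```python
from itertools import count

_ids = count(1)


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request as used by MCP clients."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def summarize_ticket(arguments):
    """Hypothetical workflow: a placeholder that truncates the input text."""
    return arguments["text"][:40]


# Hypothetical tool registry; a published workflow would appear as one entry.
TOOLS = {
    "summarize_ticket": {
        "handler": summarize_ticket,
        "description": "Summarize a support ticket (illustrative only).",
        "inputSchema": {
            "type": "object",
            "properties": {"text": {"type": "string"}},
            "required": ["text"],
        },
    }
}


def handle(request):
    """Toy in-process server: dispatch a request to the tool registry."""
    base = {"jsonrpc": "2.0", "id": request["id"]}
    method = request["method"]
    if method == "tools/list":
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return {**base, "result": {"tools": tools}}
    if method == "tools/call":
        params = request.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return {**base, "error": {"code": -32602, "message": "Unknown tool"}}
        text = tool["handler"](params.get("arguments", {}))
        return {**base, "result": {"content": [{"type": "text", "text": text}], "isError": False}}
    return {**base, "error": {"code": -32601, "message": "Method not found"}}


# A client first discovers the available tools, then calls one by name.
listing = handle(make_request("tools/list"))
reply = handle(make_request(
    "tools/call",
    {"name": "summarize_ticket", "arguments": {"text": "Printer on floor 3 is offline."}},
))
```

Because every client speaks the same request and response shapes, any number of MCP clients can discover and call the same published workflow without a custom integration for each one.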