By Huizhi, Yuzhi, and Yujia

In a cloud-native context, stateful applications need a storage solution to persist their data. Compared with distributed storage, local storage is easier to use and maintain and delivers better I/O performance. However, using local storage as the low-cost option for delivering Kubernetes clusters still has several problems:

The native local storage capabilities of Kubernetes are limited. HostPath cannot keep a pod on its node, so application data is lost when a pod drifts to another node. Semi-automatic static Local PVs do ensure node retention, but they cannot be fully automated: manual steps (such as creating directory paths and labeling nodes) are still required. Advanced storage capabilities (such as snapshots) are also unavailable.

Open-Local was created to solve these problems. Let's look at what Open-Local can do.

Open-source address: https://github.com/alibaba/open-local

Open-Local is a local storage management system open-sourced by Alibaba. With Open-Local, using local storage on Kubernetes is as simple as using centralized storage.

Currently, Open-Local supports the following storage features: local storage pool management, dynamic provisioning of persistent volumes, extended storage scheduling algorithms, persistent volume expansion, snapshots, monitoring, I/O throttling, native block devices, and temporary volumes.

[Figure: Open-Local architecture. On the master node, the Kube-Scheduler watches the API-Server and is extended by the open-local scheduler-extender. The External Provisioner, External Resizer, and External Snapshotter sit in front of the open-local CSI ControllerServer (gRPC), and the External Provisioner creates the PV that is bound to a pod's PVC. The open-local controller watches NodeLocalStorageInitConfig and creates the NodeLocalStorage resource of each worker node. On each worker node, the Kubelet calls the open-local CSI NodeServer over gRPC, which creates volumes in a VolumeGroup built on shared disks (sdb, sdc). The open-local agent updates the NodeLocalStorage resource and initializes exclusive disks (sdd) as block devices.]

Open-Local consists of four components:

1) Scheduler-extender: An extension component of the Kube-Scheduler. It is implemented in Extender mode and adds a local storage scheduling algorithm.

2) CSI Plug-In: Implements local disk management according to the Container Storage Interface (CSI) standard. It can create, delete, and expand persistent volumes, create and delete snapshots, and expose persistent volume metrics.

3) Agent: Runs on every node in the cluster. It initializes storage devices according to the configuration list and reports information about the local storage devices so that the scheduler-extender can make scheduling decisions.

4) Controller: Obtains the cluster-wide storage initialization configuration and delivers a detailed resource configuration list to the agent running on each node.
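To make the interplay between the agent and the scheduler-extender more concrete, the following Python sketch shows the kind of capacity-aware filtering a scheduler extender can perform on the storage information reported by each node. It is only an illustration under stated assumptions: the `NodeStorage` type, the `filter_nodes` function, and the sample numbers are hypothetical, and this article does not describe the actual scheduling algorithm of Open-Local.

```python
from dataclasses import dataclass, field

GIB = 1024 ** 3


@dataclass
class NodeStorage:
    """Hypothetical view of what an agent reports for one node."""
    name: str
    # Free bytes per VolumeGroup, e.g. {"open-local-pool-0": 100 * GIB}
    vg_free: dict = field(default_factory=dict)


def filter_nodes(nodes, requested_bytes):
    """Keep only nodes where at least one VG can hold the requested volume."""
    return [
        node.name
        for node in nodes
        if any(free >= requested_bytes for free in node.vg_free.values())
    ]


nodes = [
    NodeStorage("node-a", {"open-local-pool-0": 100 * GIB}),
    NodeStorage("node-b", {"open-local-pool-0": 2 * GIB}),
    NodeStorage("node-c", {}),  # no VG reported on this node
]

candidates = filter_nodes(nodes, 5 * GIB)
print(candidates)  # ['node-a']
```

A real extender would typically also score the remaining nodes. Which scoring strategy Open-Local uses is not covered here.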

Open-Local also defines two CRDs:

1) NodeLocalStorage: Open-Local reports the storage device information of each node through NodeLocalStorage resources. Each resource is created by the controller, and its status is updated by the agent on the corresponding node. This CRD is a global (cluster-scoped) resource.

2) NodeLocalStorageInitConfig: The Open-Local controller creates each NodeLocalStorage resource from the NodeLocalStorageInitConfig resource, which contains a global default node configuration and node-specific configurations. If the labels of a node match the selector expression of a node-specific configuration, that configuration is used. Otherwise, the default configuration is used.
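The selection rule for NodeLocalStorageInitConfig can be sketched in a few lines of Python. This is a simplified illustration, not the controller's code: the dictionary layout, the `selector` and `config` keys, and the `storage-tier` label are hypothetical, and only exact label matching is modeled, whereas real label selector expressions can be richer.

```python
def matches(selector, node_labels):
    """True if every key/value pair in the selector is present on the node."""
    return all(node_labels.get(key) == value for key, value in selector.items())


def select_config(node_labels, global_config, node_configs):
    """Return the first node-specific config whose selector matches,
    falling back to the global default configuration."""
    for entry in node_configs:
        if matches(entry["selector"], node_labels):
            return entry["config"]
    return global_config


# Hypothetical sample data for illustration only.
global_config = {"vgs": ["open-local-pool-[0-9]+"]}
node_configs = [
    {"selector": {"storage-tier": "fast"}, "config": {"vgs": ["fast-pool"]}},
]

fast_node = select_config({"storage-tier": "fast"}, global_config, node_configs)
plain_node = select_config({"zone": "a"}, global_config, node_configs)
print(fast_node)   # {'vgs': ['fast-pool']}
print(plain_node)  # {'vgs': ['open-local-pool-[0-9]+']}
```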

Prerequisite: LVM tools are installed in the environment.

Open-Local is installed by default during ack-distro deployment. Edit the NodeLocalStorageInitConfig resource to configure storage initialization.

# kubectl edit nlsc open-local

Open-Local requires a VolumeGroup (VG) in the environment. If a VG with free space already exists in your environment, you can add it to the Open-Local whitelist. If no VG exists, you need to provide the name of a block device from which Open-Local creates a VG.

apiVersion: csi.aliyun.com/v1alpha1
kind: NodeLocalStorageInitConfig
metadata:
  name: open-local
spec:
  globalConfig: # The global default node configuration. It is populated into the Spec of each NodeLocalStorage when the resource is initialized and created.
    listConfig:
      vgs:
        include: # VolumeGroup whitelist. Regular expressions are supported.
        - open-local-pool-[0-9]+
        - your-vg-name # If a VG already exists in the environment, add it to the whitelist so that Open-Local manages it.
    resourceToBeInited:
      vgs:
      - devices:
        - /dev/vdc # If there is no VG in the environment, the user needs to provide a block device.
        name: open-local-pool-0 # Initialize the block device /dev/vdc as a VG named open-local-pool-0.

After the NodeLocalStorageInitConfig resource is edited, the controller and the agents update the NodeLocalStorage resources of all nodes.
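The effect of the VG whitelist can be illustrated with a short Python sketch. It assumes that a pattern must match the whole VG name. Whether Open-Local anchors its patterns this way is an assumption, and its regular expression dialect may differ from Python's. The `managed_vgs` function and the `system-vg` name are hypothetical.

```python
import re


def managed_vgs(vg_names, include_patterns):
    """Return the VG names matched in full by at least one whitelist pattern."""
    compiled = [re.compile(pattern) for pattern in include_patterns]
    return [
        name
        for name in vg_names
        if any(regex.fullmatch(name) for regex in compiled)
    ]


include = ["open-local-pool-[0-9]+", "your-vg-name"]
existing = ["open-local-pool-0", "open-local-pool-12", "system-vg", "your-vg-name"]

selected = managed_vgs(existing, include)
print(selected)  # ['open-local-pool-0', 'open-local-pool-12', 'your-vg-name']
```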

Open-Local deploys several storage class templates in the cluster by default. The following uses open-local-lvm, open-local-lvm-xfs, and open-local-lvm-io-throttling as examples.

# kubectl get sc

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

open-local-lvm local.csi.aliyun.com Delete WaitForFirstConsumer true 8d

open-local-lvm-xfs local.csi.aliyun.com Delete WaitForFirstConsumer true 6h56m

open-local-lvm-io-throttling local.csi.aliyun.com Delete WaitForFirstConsumer true

Create a StatefulSet that uses the open-local-lvm storage class template. The file system of the resulting PV is ext4. If you specify the open-local-lvm-xfs storage class template instead, the PV file system is XFS.

# kubectl apply -f https://raw.githubusercontent.com/alibaba/open-local/main/example/lvm/sts-nginx.yaml

Check the status of the Pod, PVC, and PV to see whether the PV was created successfully:

# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-lvm-0 1/1 Running 0 3m5s

# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

html-nginx-lvm-0 Bound local-52f1bab4-d39b-4cde-abad-6c5963b47761 5Gi RWO open-local-lvm 104s

# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS AGE

local-52f1bab4-d39b-4cde-abad-6c5963b47761 5Gi RWO Delete Bound default/html-nginx-lvm-0 open-local-lvm 2m4s

# kubectl describe pvc html-nginx-lvm-0

Edit the spec.resources.requests.storage field of the PVC to expand its declared storage size from 5Gi to 20Gi:

# kubectl patch pvc html-nginx-lvm-0 -p '{"spec":{"resources":{"requests":{"storage":"20Gi"}}}}'

Check the status of the PVC and PV:

# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

html-nginx-lvm-0 Bound local-52f1bab4-d39b-4cde-abad-6c5963b47761 20Gi RWO open-local-lvm 7h4m

# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

local-52f1bab4-d39b-4cde-abad-6c5963b47761 20Gi RWO Delete Bound default/html-nginx-lvm-0 open-local-lvm 7h4m

Open-Local has the following snapshot classes:

# kubectl get volumesnapshotclass

NAME DRIVER DELETIONPOLICY AGE

open-local-lvm local.csi.aliyun.com Delete 20m

Create a VolumeSnapshot resource:

# kubectl apply -f https://raw.githubusercontent.com/alibaba/open-local/main/example/lvm/snapshot.yaml

volumesnapshot.snapshot.storage.k8s.io/new-snapshot-test created

# kubectl get volumesnapshot

NAME READYTOUSE SOURCEPVC SOURCESNAPSHOTCONTENT RESTORESIZE SNAPSHOTCLASS SNAPSHOTCONTENT CREATIONTIME AGE

new-snapshot-test true html-nginx-lvm-0 1863 open-local-lvm snapcontent-815def28-8979-408e-86de-1e408033de65 19s 19s

# kubectl get volumesnapshotcontent

NAME READYTOUSE RESTORESIZE DELETIONPOLICY DRIVER VOLUMESNAPSHOTCLASS VOLUMESNAPSHOT AGE

snapcontent-815def28-8979-408e-86de-1e408033de65 true 1863 Delete local.csi.aliyun.com open-local-lvm new-snapshot-test 48s

Create a new pod whose PV is restored from the snapshot. The data on this PV matches the state at the time the snapshot was taken:

# kubectl apply -f https://raw.githubusercontent.com/alibaba/open-local/main/example/lvm/sts-nginx-snap.yaml

service/nginx-lvm-snap created

statefulset.apps/nginx-lvm-snap created

# kubectl get po -l app=nginx-lvm-snap

NAME READY STATUS RESTARTS AGE

nginx-lvm-snap-0 1/1 Running 0 46s

# kubectl get pvc -l app=nginx-lvm-snap

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

html-nginx-lvm-snap-0 Bound local-1c69455d-c50b-422d-a5c0-2eb5c7d0d21b 4Gi RWO open-local-lvm 2m11s

Open-Local supports mounting a created PV into a container as a block device (in this example, the block device appears at /dev/sdd in the container):

# kubectl apply -f https://raw.githubusercontent.com/alibaba/open-local/main/example/lvm/sts-block.yaml

Check the status of the Pod, PVC, and PV:

# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-lvm-block-0 1/1 Running 0 25s

# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

html-nginx-lvm-block-0 Bound local-b048c19a-fe0b-455d-9f25-b23fdef03d8c 5Gi RWO open-local-lvm 36s

# kubectl describe pvc html-nginx-lvm-block-0

Name: html-nginx-lvm-block-0

Namespace: default

StorageClass: open-local-lvm

...

Access Modes: RWO

VolumeMode: Block # Mounted into the container as a block device

Mounted By: nginx-lvm-block-0

...

Open-Local supports I/O throttling for PVs. The following storage class template supports I/O throttling:

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: open-local-lvm-io-throttling
provisioner: local.csi.aliyun.com
parameters:
  csi.storage.k8s.io/fstype: ext4
  volumeType: "LVM"
  bps: "1048576" # Limit read/write throughput to 1024KiB/s
  iops: "1024" # Limit IOPS to 1024
reclaimPolicy: Delete
volumeBindingMode: WaitForFirstConsumer
allowVolumeExpansion: true

Create a StatefulSet that uses the open-local-lvm-io-throttling storage class template:

# kubectl apply -f https://raw.githubusercontent.com/alibaba/open-local/main/example/lvm/sts-io-throttling.yaml

After the pod is running, enter the pod container:

# kubectl exec -it test-io-throttling-0 -- sh

The PV is mounted at /dev/sdd as a native block device. Run the fio command against it:

# fio -name=test -filename=/dev/sdd -ioengine=psync -direct=1 -iodepth=1 -thread -bs=16k -rw=readwrite -numjobs=32 -size=1G -runtime=60 -time_based -group_reporting

The following is the result. The read and write throughput are each limited to around 1024KiB/s:

......

Run status group 0 (all jobs):

READ: bw=1024KiB/s (1049kB/s), 1024KiB/s-1024KiB/s (1049kB/s-1049kB/s), io=60.4MiB (63.3MB), run=60406-60406msec

WRITE: bw=993KiB/s (1017kB/s), 993KiB/s-993KiB/s (1017kB/s-1017kB/s), io=58.6MiB (61.4MB), run=60406-60406msec

Disk stats (read/write):

dm-1: ios=3869/3749, merge=0/0, ticks=4848/17833, in_queue=22681, util=6.68%, aggrios=3112/3221, aggrmerge=774/631, aggrticks=3921/13598, aggrin_queue=17396, aggrutil=6.75%

vdb: ios=3112/3221, merge=774/631, ticks=3921/13598, in_queue=17396, util=6.75%

Open-Local allows you to create temporary volumes for pods. A temporary volume has the same lifecycle as its pod, so it is deleted when the pod is deleted. You can think of it as the Open-Local version of emptyDir.

# kubectl apply -f ./example/lvm/ephemeral.yaml

The following is the result:

# kubectl describe po file-server

Name: file-server

Namespace: default

......

Containers:

file-server:

......

Mounts:

/srv from webroot (rw)

/var/run/secrets/kubernetes.io/serviceaccount from default-token-dns4c (ro)

Volumes:

webroot: # This is the CSI temporary volume.

Type: CSI (a Container Storage Interface (CSI) volume source)

Driver: local.csi.aliyun.com

FSType:

ReadOnly: false

VolumeAttributes: size=2Gi

vgName=open-local-pool-0

default-token-dns4c:

Type: Secret (a volume populated by a Secret)

SecretName: default-token-dns4c

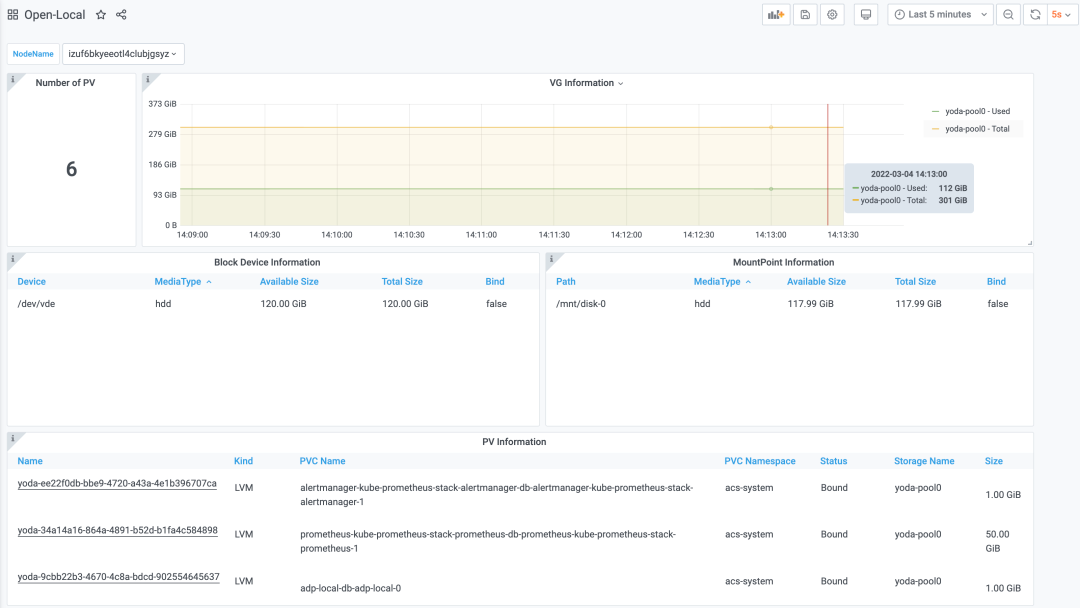

Optional: falseOpen-Local is equipped with a monitoring dashboard. You can use Grafana to view the local storage information of the cluster, including information about storage devices and PVs.

In summary, Open-Local reduces O&M labor costs and improves the stability of the cluster at runtime. In terms of features, it makes the most of local storage: users get the high performance of local disks together with advanced storage features that open up more application scenarios. It thereby lets developers experience the benefits of cloud native and marks a crucial step in migrating applications to the cloud, especially in deploying stateful applications in a cloud-native way.
