By Zhang Cheng (Yuanyi), Head of Alibaba Cloud Logtail

Over the past year, a growing number of users have asked us how to build a log system for Kubernetes and how to solve the problems that come up along the way. This article draws on our years of experience in building log systems and is intended as a shortcut to building a log system for Kubernetes successfully. It is one in a series focusing on our practices and experiences. The content is subject to updates as the technology involved evolves.

In 2016, Kubernetes was still in a three-way race with Docker Swarm and Apache Mesos. Kubernetes eventually gained dominance by virtue of a series of advantages, such as high scalability, declarative APIs, and Cloud friendliness.

As the only core project of the Cloud Native Computing Foundation (CNCF), Kubernetes is the base for Cloud-native implementations. Alibaba has begun its Cloud-native transformation based on Kubernetes, and all of Alibaba's businesses will be migrated to the public Cloud within one to two years.

As defined by CNCF, Cloud-native technologies build and run elastically scalable application systems with high fault tolerance, easy management, observability, and loose coupling through containers, service meshes, microservices, immutable infrastructure, and declarative APIs in public, private, and hybrid Clouds. Observability is essential to an application system. Notably, one of the Cloud-native design concepts is design for diagnosability, which involves cluster-level logs, metrics, and traces.

The general process of locating an online issue is as follows:

Detect the issue based on metrics, locate the problematic module by using the trace system, and pinpoint the cause of the issue based on that module's logs. Logs contain information such as errors, key variables, and code execution paths, which are the core elements for troubleshooting. Therefore, logs are necessary for solving online problems.
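The three steps above can be sketched in a few lines of Python. This is only an illustration on toy in-memory data: the `Span` and `LogLine` types, the function names, the 5% error-rate threshold, and the sample values are all assumptions made for this example, not part of any real monitoring or tracing product.

```python
from dataclasses import dataclass


@dataclass
class Span:
    trace_id: str
    module: str
    duration_ms: float
    error: bool


@dataclass
class LogLine:
    trace_id: str
    module: str
    level: str
    message: str


def detect_issue(error_rates, threshold=0.05):
    """Step 1: metrics show THAT something is wrong (which time windows)."""
    return [window for window, rate in error_rates.items() if rate > threshold]


def locate_module(spans):
    """Step 2: traces show WHERE: the module with the most failed spans."""
    failures = {}
    for span in spans:
        if span.error:
            failures[span.module] = failures.get(span.module, 0) + 1
    return max(failures, key=failures.get) if failures else None


def find_cause(logs, module):
    """Step 3: logs show WHY: the error lines written by the suspect module."""
    return [line.message for line in logs
            if line.module == module and line.level == "ERROR"]


# Hypothetical sample data for one incident.
error_rates = {"12:00": 0.01, "12:01": 0.22}
spans = [
    Span("t1", "gateway", 12.0, False),
    Span("t2", "orders", 950.0, True),
    Span("t3", "orders", 1010.0, True),
    Span("t3", "payments", 40.0, True),
]
logs = [
    LogLine("t2", "orders", "ERROR", "db pool exhausted: pool_size=10, waiting=57"),
    LogLine("t2", "orders", "INFO", "retrying request"),
    LogLine("t3", "payments", "ERROR", "upstream call to orders failed"),
]

bad_windows = detect_issue(error_rates)
suspect = locate_module(spans)
causes = find_cause(logs, suspect)
```

Only the last step yields the key variables (here, the pool size and queue length) that explain the failure, which is why metrics and traces alone are usually not enough.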

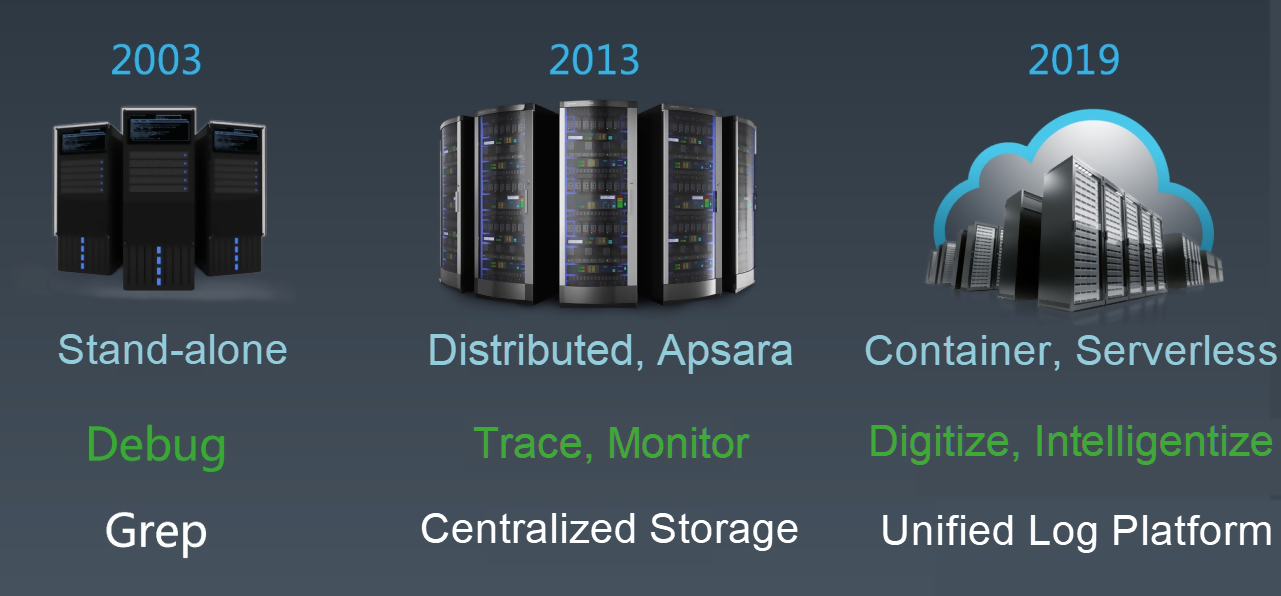

Over the past decade, Alibaba's log system has evolved along with changes in computing paradigms. This evolution can be divided into three stages:

In the stand-alone era, almost all applications were deployed on stand-alone systems. As load increased, users could only turn to IBM minicomputers with higher specifications. As a part of an application system, logs were mainly used for program debugging. Normally, logs were analyzed by using common Linux text commands, such as grep.

As the stand-alone system became a bottleneck for Alibaba Cloud business development, the Apsara 5K project was officially launched in 2013 for substantial scale-out. At this stage, businesses were transformed into distributed systems, shifting from local service calls to distributed service calls. To better manage, debug, and analyze distributed applications, we developed a trace (distributed tracing) system and various monitoring systems. What these systems have in common is that they store all their logs, including metrics, in a centralized manner.

In recent years, to support faster development and upgrading, we carried out a container-based transformation and began to embrace the Kubernetes ecosystem, migrate all businesses to the Cloud, and put serverless into practice. At this stage, logs exploded in both scale and variety, and the demand for digital and intelligent analysis of logs increased as well. The result was a unified log platform.

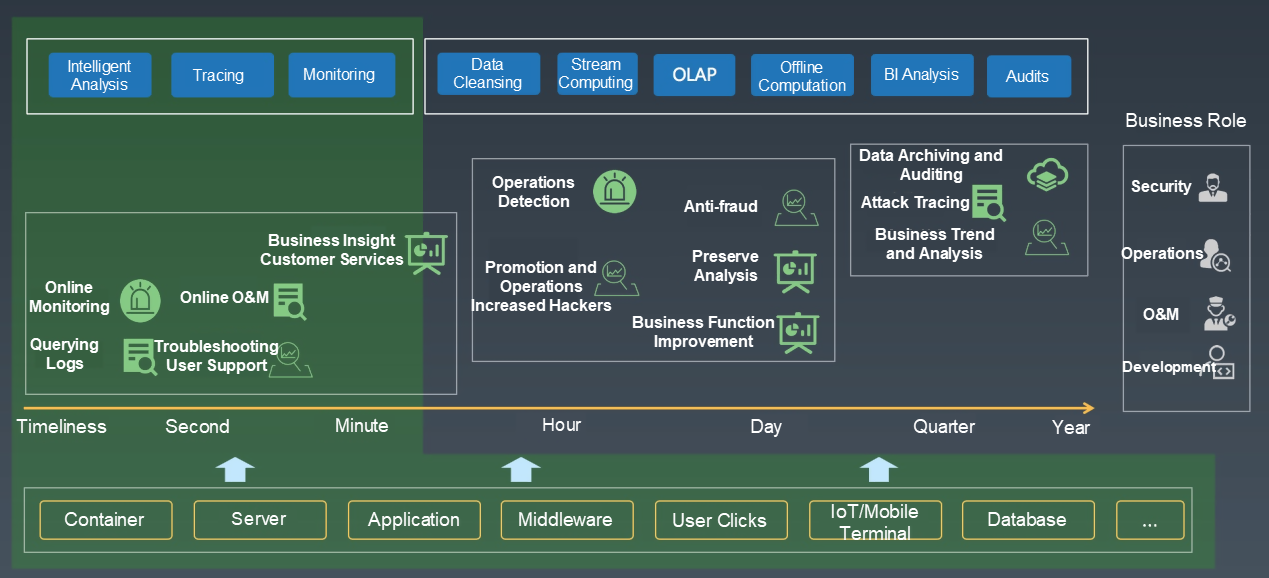

In CNCF's view, observability is used to diagnose issues. At the enterprise level, observability involves not only DevOps but also business, operations, business intelligence (BI), auditing, and security. The ultimate objective of observability is to achieve the digitization and intelligence of all aspects of the enterprise.

At Alibaba, almost all business roles use various types of log data. To support different application scenarios, we have developed many tools and functions, such as real-time log analysis, tracing analysis, monitoring, data manipulation, stream computing, offline computation, BI systems, and audit systems. The log system is connected to various stream computing and offline systems. It focuses on real-time data collection, cleansing, intelligent analysis, and monitoring.

There are many mature solutions for simple log systems, which we do not describe in detail. Instead, this article only focuses on the construction of a log system in Kubernetes. The log system solution for Kubernetes is quite different from those for physical and virtual machines.

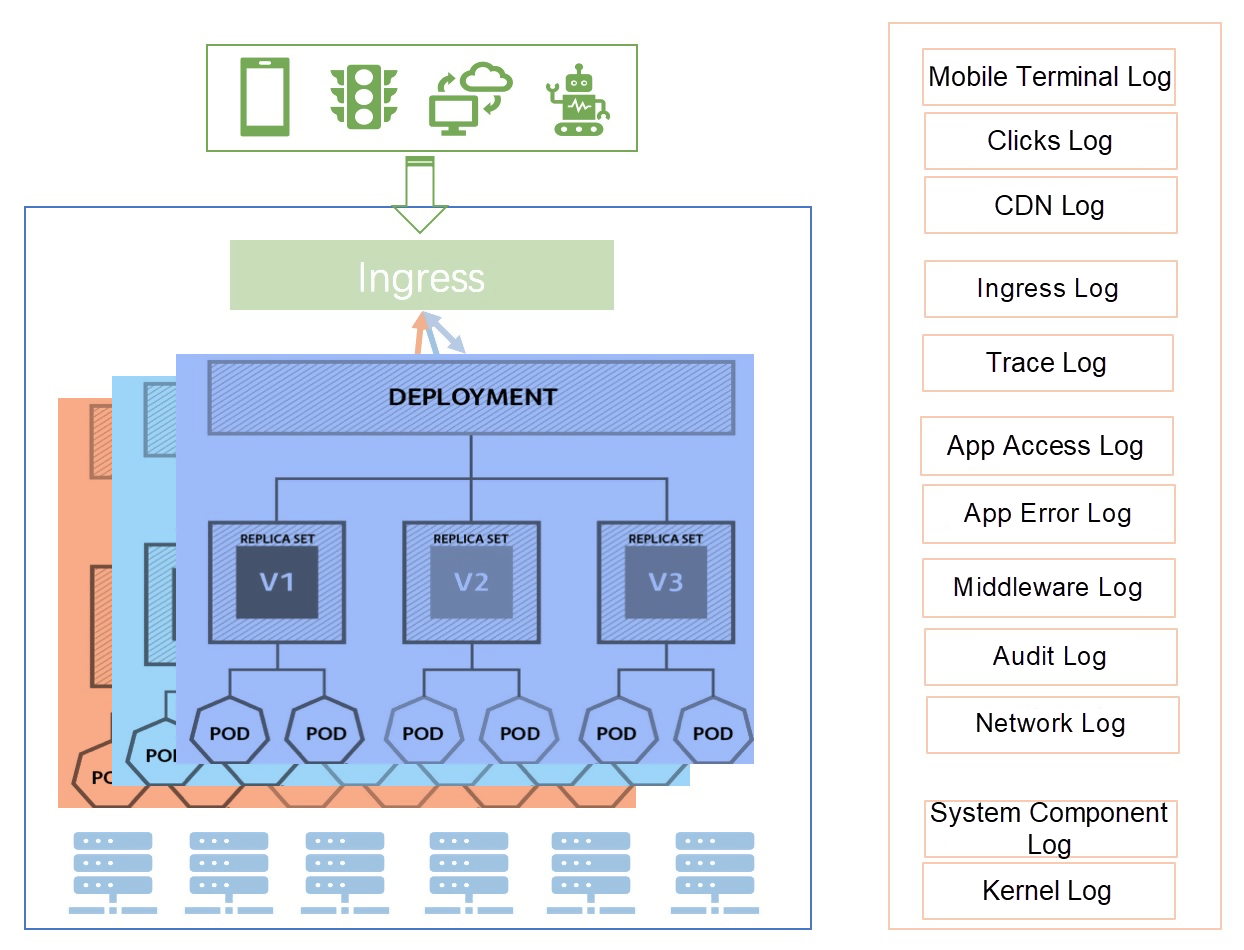

The forms of logs are more complex. They cover not only logs from physical and virtual machines, but also standard container output, files in containers, container events, and Kubernetes events.

The environment is also more dynamic. In Kubernetes, nodes may go down, go offline, come online, or be scaled out and in, and pods may be destroyed at any time. As a result, logs are transient. For example, the logs of a pod are gone after the pod is destroyed. Therefore, log data must be collected and recorded on the server in real time. In addition, log collection must be able to adapt to this highly dynamic scenario.
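The following Python sketch illustrates why real-time collection matters. It simulates a pod with a temporary directory, ships each complete log line to a central store as soon as it is polled, and then deletes the directory to mimic pod destruction. The `Collector` class, the file name `app.log`, and the in-memory `central_store` list are assumptions made for this illustration, not the behavior of any particular log agent.

```python
import os
import shutil
import tempfile


class Collector:
    """Hypothetical agent that ships new log lines to a central store."""

    def __init__(self, path, store):
        self.path = path
        self.store = store
        self.offset = 0  # number of bytes already shipped

    def poll(self):
        """Ship any complete lines written since the last poll."""
        if not os.path.exists(self.path):
            return 0  # the pod (and its log file) is gone
        with open(self.path, "rb") as f:
            f.seek(self.offset)
            data = f.read()
        # Ship only complete lines; a partial trailing line waits for the next poll.
        end = data.rfind(b"\n") + 1
        lines = data[:end].decode("utf-8").splitlines()
        self.store.extend(lines)
        self.offset += end
        return len(lines)


# Simulate a pod whose writable layer is a temporary directory.
pod_dir = tempfile.mkdtemp(prefix="pod-")
log_path = os.path.join(pod_dir, "app.log")
central_store = []
collector = Collector(log_path, central_store)

with open(log_path, "a", encoding="utf-8") as f:
    f.write("request started\n")
    f.write("ERROR upstream timeout\n")
collector.poll()  # lines reach the central store while the pod is alive

shutil.rmtree(pod_dir)  # the pod is destroyed, taking its local files with it
local_copy_exists = os.path.exists(log_path)
```

After the directory is removed, the local log file no longer exists, but the two lines remain in `central_store`. Anything written after the last poll would have been lost, which is why the collection interval has to be short.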

There are more types of logs. In a typical Kubernetes architecture, a request from a client needs to go through multiple components, such as CDN, Ingress, Service Mesh, and a pod. This involves various types of infrastructure and additional types of logs, such as Kubernetes system component logs, audit logs, Service Mesh logs, and Ingress logs.

The business architecture changes. More companies are implementing microservice architectures in Kubernetes. In microservice systems, there are increasing dependencies between services and underlying products, making service development and troubleshooting more complex. As a result, it's difficult to associate logs of different dimensions.

It is difficult to integrate log solutions. Generally, we build a CI/CD system in Kubernetes. This CI/CD system needs to automatically integrate and deploy businesses whenever possible. In addition, log collection, storage, and cleansing must be integrated into this system in the same declarative deployment mode used by Kubernetes. However, existing log systems are independent systems in most cases, and integrating them into the CI/CD system is costly.
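To make "the same declarative deployment mode" concrete, the sketch below shows the general idea in Python: the CI/CD pipeline declares which log collection configurations should exist, and a reconcile step computes the actions needed to reach that state. The configuration names and fields (`source`, `selector`, `path`) are hypothetical and do not correspond to any real product's schema.

```python
def reconcile(desired, actual):
    """Return the actions needed to make `actual` match `desired`.

    Both arguments map a collection-config name to its spec (a plain dict).
    """
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions


def apply_actions(actions, desired, actual):
    """Apply the actions and return the resulting state without mutating inputs."""
    new_state = dict(actual)
    for verb, name in actions:
        if verb == "delete":
            del new_state[name]
        else:
            new_state[name] = desired[name]
    return new_state


# Hypothetical desired state, as it might be declared alongside the application.
desired = {
    "nginx-access": {"source": "stdout", "selector": "app=nginx"},
    "order-service": {"source": "file", "path": "/var/log/app/*.log"},
}
# Hypothetical state currently running in the cluster.
actual = {
    "nginx-access": {"source": "stdout", "selector": "app=web"},
    "legacy-batch": {"source": "file", "path": "/data/batch.log"},
}

actions = reconcile(desired, actual)
converged = apply_actions(actions, desired, actual)
```

Because the pipeline only states the desired end result, running the reconcile step again after convergence produces no actions, so it can run on every deployment.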

Log volume grows. Generally, we use an on-premises open-source log system in the early stages. This approach is feasible during testing and verification, or in the early stage of a company. However, as businesses grow and log volume increases to a certain scale, the on-premises open-source system encounters various issues, such as tenant isolation, query latency, data reliability, and system availability. Although the log system is not the most critical part of the IT infrastructure, issues that occur at critical moments can have devastating results.

For example, if an emergency occurs during major promotions, concurrent queries by troubleshooting engineers can flood the log system, leading to slow fault recovery and affecting the major promotions.

If you are involved in the construction of a Kubernetes log system, you need to have a deep understanding of the difficulties analyzed in this article. To learn how to construct a Kubernetes log system, stay tuned for our upcoming articles or visit our Kubernetes product page to start building your own.
