By Zhang Yifei (Wupeng)

Over the last two years, developers have become interested in the concept of serverless. Numerous practices, services, and products have been developed.

The number of talks on serverless topics is growing rapidly. For example, at "KubeCon + CloudNativeCon", an influential community conference in the cloud-native field, the number of serverless topics rose from 20 in 2018 to 35 in 2019.

Cloud infrastructure for serverless computing is also becoming richer as a large number of serverless computing products emerge. These range from the earliest, AWS Lambda, to Azure Functions, Google Cloud Functions, and Google Cloud Run, as well as Alibaba Cloud Serverless Kubernetes, Serverless App Engine, and Function Compute within China.

New concepts and new products do not appear out of thin air. Their initial purpose was to resolve problems at that time. Serverless products are no exception. As practitioners get a clearer and deeper understanding of the problem domain, they will iterate the problem-solving methods and provide solutions closer to the nature of the problem.

Understanding a solution from the perspective of its problem domain is essential. It avoids two extremes: assuming that the solution can solve every problem, or dismissing it as too advanced to understand.

Therefore, this article takes the daily development process as its starting point. It analyzes the problems in each phase, combines them with the available solutions to extract a serverless development model, and maps that model to the serverless product forms offered by the industry. The result is a reference for developers who want to adopt serverless architectures and services.

Iterative Model

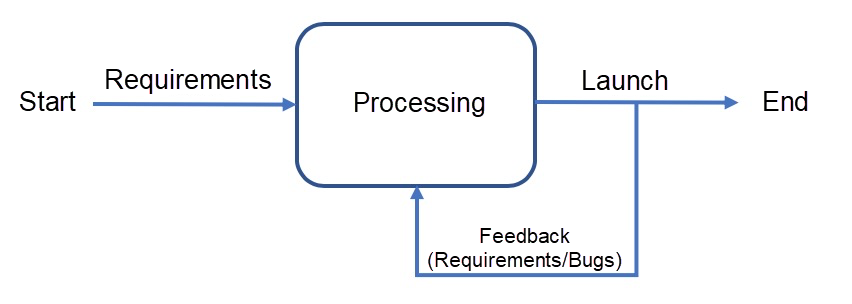

From the overall perspective of the project:

The goal of this model is to meet customer needs. Passive iteration is used to meet customer needs and gradually dig deeper into the nature of customer needs to better understand them. Active iteration is used to work with the customer to adopt better solutions or tackle the problem at its source.

Each demand feedback will deepen the understanding of customer needs, so we can provide services that better meet the needs. Each bug feedback will deepen the understanding of the solution, so we can provide more stable services.

After the model is launched, the core issue during daily work focuses on how to accelerate iterations.

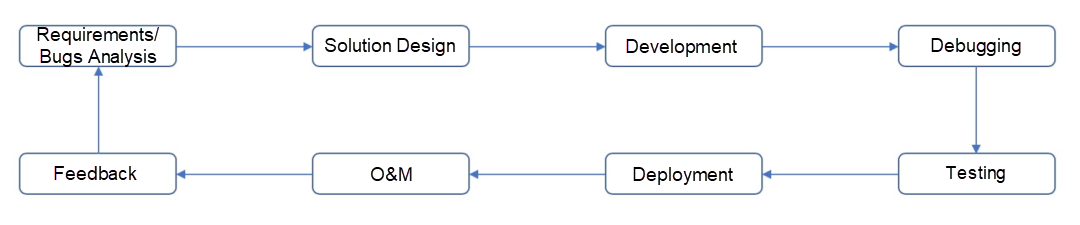

To accelerate iterations, it is necessary to understand the constraints and targets. The following figure shows a development model from the development perspective:

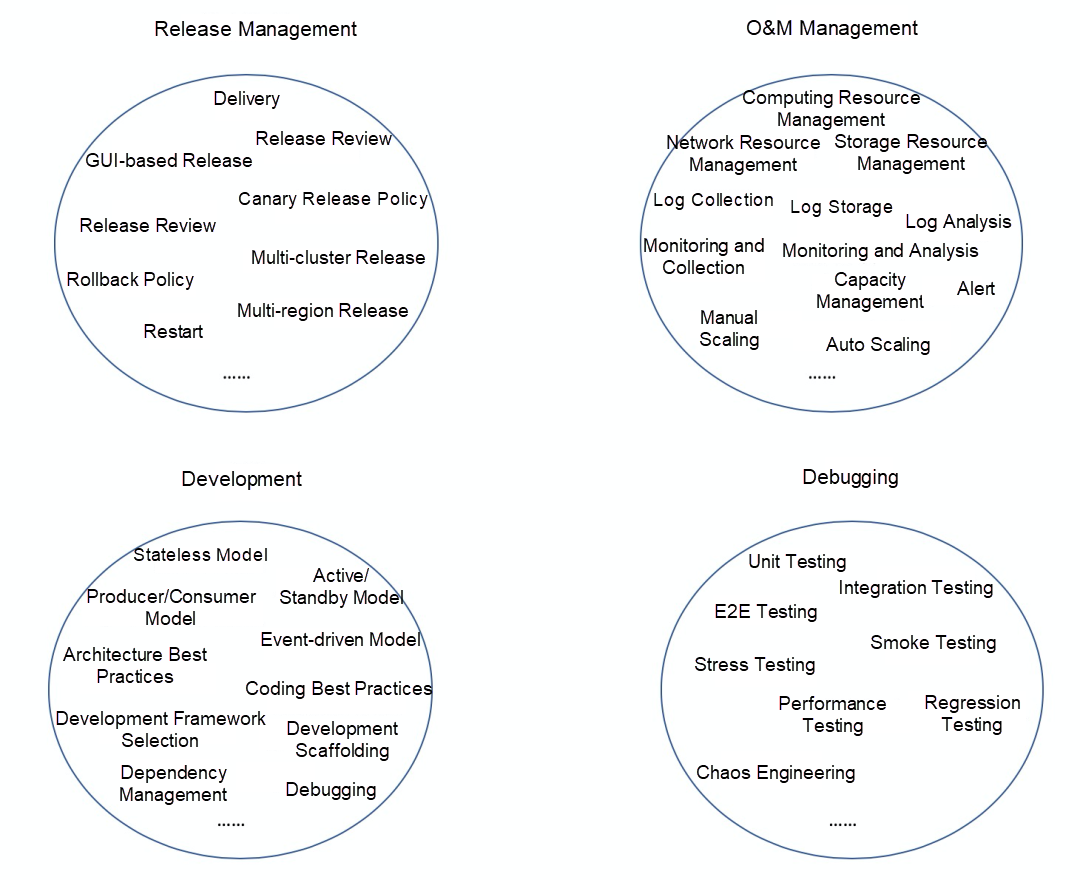

Although there are different programming languages and development architectures, there are common problems at each phase:

In addition to solving the preceding problems, it is also necessary to provide standardized solutions to reduce developers' learning and usage costs as well as to shorten the time required from an idea to release.

If we analyze the time spent during different phases of the preceding process, we can find the following items in the project lifecycle:

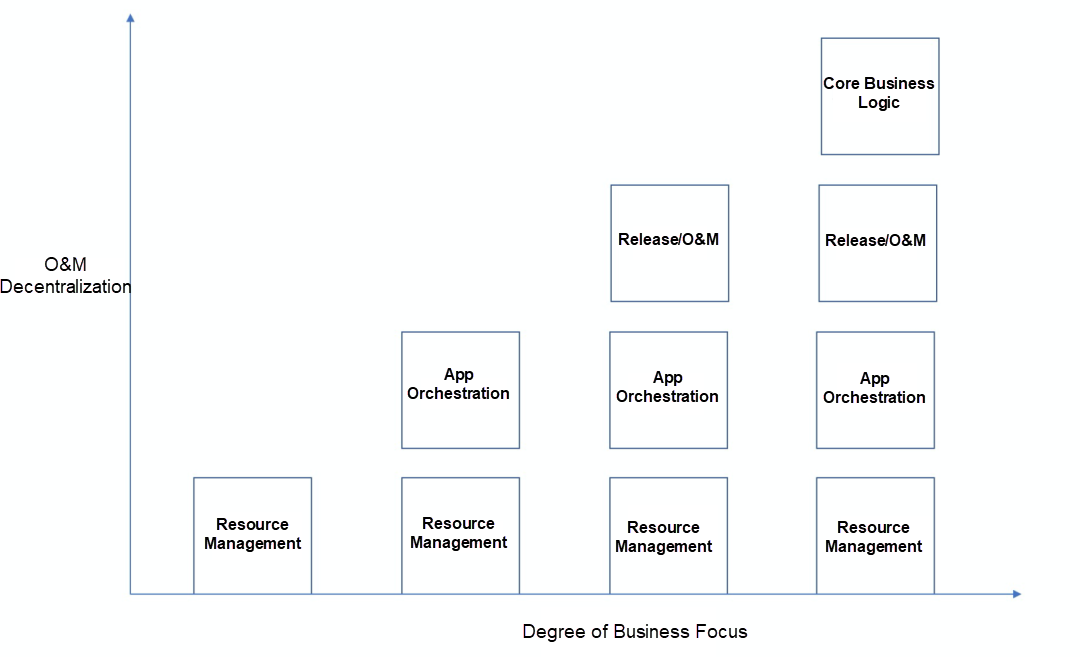

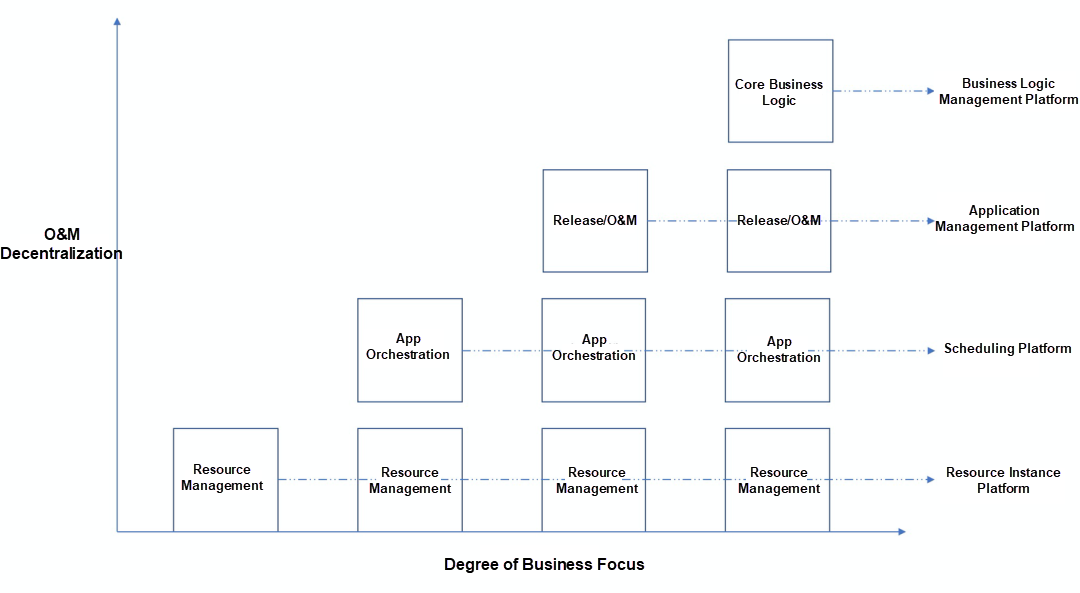

To accelerate iterations, it is necessary to solve the parts that take up time and energy in turn, as shown in the following figure:

Figure 1

From left to right, reduce the costs of "deployment and O&M" by handing different levels of O&M over to the platform. Reduce the costs at the general logic level as well. With costs reduced from these two perspectives, developers can focus more on the business during iterations.

This process is the same process that takes place from cloud hosting to cloud-native, so you can fully enjoy the technical benefits brought by cloud-native.

Software design and deployment architectures are tightly coupled to the environment of their time. When new concepts, services, and products appear, the technologies used to iterate on existing applications need to be adjusted accordingly, and the development and deployment methods need to be modified. Both developing new applications and applying new concepts to existing applications carry learning and adoption costs.

Therefore, the preceding process cannot be completed overnight. We need to select matching services and products according to the current priority of the business pain points, carry out technical research in advance according to future plans, and select suitable services and products for each phase.

Wikipedia provides a comprehensive definition [1] of serverless:

"Serverless computing is a cloud computing execution model in which the cloud provider runs the server, and dynamically manages the allocation of machine resources. Pricing is based on the actual amount of resources consumed by an application, rather than on pre-purchased units of capacity. It can be a form of utility computing."

This computing model brings the following benefits:

Serverless computing can simplify the process of deploying code into production. Scaling, capacity planning, and maintenance operations may be hidden from the developer or operator. Serverless code can be used in conjunction with code deployed in traditional styles, such as microservices. Alternatively, applications can be written to be purely serverless and use no provisioned servers at all.

A concept is essentially an abstraction of a problem domain and a summary of its characteristics. Understanding a concept through its characteristics keeps us from fixating on the wording of its definition and losing sight of its value.

From the user's perspective, we can abstract the following features of serverless:

In a company of a certain scale where the development and O&M roles are strictly separated, this form of computing already exists and is not completely new. However, the current technology trend is to use the scale of the cloud and the benefits of its technology to reduce the technical costs of the business and to feed those benefits back into the business. Therefore, industry discussions of serverless focus on the serverless capabilities embodied in cloud services and products.

Serverless Architectures [2] by Martin Fowler describes the serverless development model from the perspective of architecture. It is summarized to include three elements:

Serverless development adopts the event-driven model [3], and the architecture is designed around the production and response of events, such as HTTP/HTTPS requests, time, and messages. In this model, the event production and processing are the core. Events drive the entire service process and focus on the entire processing process; the deeper the understanding of the service, the better the matching of event types and services, and the more effective the interaction between technology and services.
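As a rough illustration of the event-driven model described above, the following Python sketch routes events of different kinds (HTTP requests, timers, messages) to handlers. The `Event` and `EventRouter` classes and the handler names are hypothetical and do not correspond to any specific cloud product; the sketch only shows that processing is triggered by events rather than by a service that polls or waits.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class Event:
    """A generic event. `kind` and `payload` are illustrative field names."""
    kind: str  # for example "http", "timer", or "message"
    payload: Dict[str, Any] = field(default_factory=dict)


Handler = Callable[[Event], Any]


class EventRouter:
    """Maps event kinds to handlers; nothing runs until an event arrives."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = {}

    def on(self, kind: str) -> Callable[[Handler], Handler]:
        """Decorator that registers a handler for one kind of event."""
        def register(handler: Handler) -> Handler:
            self._handlers.setdefault(kind, []).append(handler)
            return handler
        return register

    def dispatch(self, event: Event) -> List[Any]:
        """Calls every handler registered for the event's kind."""
        return [h(event) for h in self._handlers.get(event.kind, [])]


router = EventRouter()


@router.on("http")
def handle_click(event: Event) -> str:
    return f"clicked {event.payload['ad_id']}"


@router.on("timer")
def nightly_report(event: Event) -> str:
    return f"report at {event.payload['time']}"


if __name__ == "__main__":
    print(router.dispatch(Event("http", {"ad_id": "42"})))
    print(router.dispatch(Event("timer", {"time": "02:00"})))
```

The better the event kinds match what actually happens in the business, the less glue code each handler needs.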

The event-driven model changes the concept of terminate-and-stay resident (TSR) service from a required choice to an optional choice, so you can better cope with changes in the number of requests, such as auto scaling. In addition, the non-TSR service can reduce the resource and maintenance costs required and accelerate project iterations.

We can more intuitively understand this model through the following two figures selected from Serverless Architectures [2]:

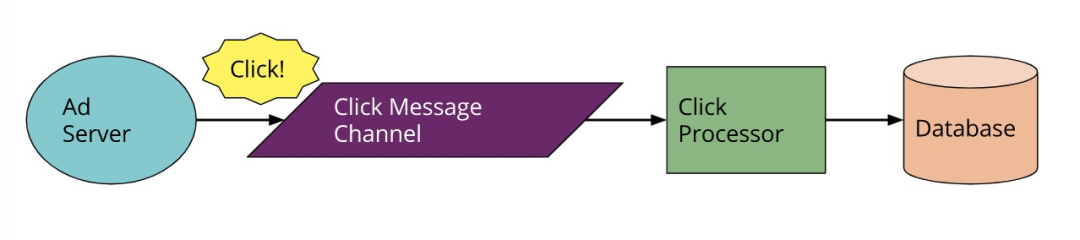

Figure 2

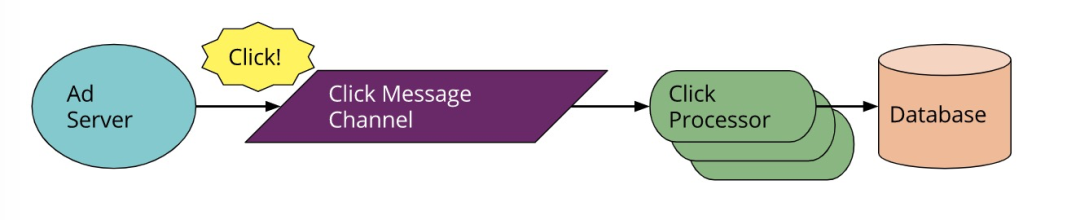

Figure 3

Figure 2 shows a common development model. The Click Processor service is a TSR service that responds to all the click requests from users. In a production environment, services are typically deployed on multiple instances, and TSR is a key feature. Routine O&M focuses on ensuring the stability of TSR services.

Figure 3 shows an event-driven development model. This model focuses on how events are generated and responded to. Whether the responding service is a TSR service becomes optional.

Serverless is conceptually different from Platform as a Service (PaaS) and Container as a Service (CaaS). The key difference is whether auto scaling is a core feature of the concept.

Serverless scenarios need to be combined with the event-driven development model. Then, the transparency of auto scaling needs to be deepened, allowing developers to shift their focus from developing static processing capabilities to developing dynamic processing capabilities. Therefore, this solution can better cope with the uncertain volume of business requests after its launch.
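To make the shift from static to dynamic processing capacity concrete, here is a minimal Python sketch of a scaling decision. The function name, the per-instance capacity figure, and the instance limits are assumptions for illustration; real platforms use richer signals such as concurrency, CPU usage, or queue length.

```python
import math


def desired_instances(requests_per_second: float,
                      capacity_per_instance: float,
                      min_instances: int = 0,
                      max_instances: int = 100) -> int:
    """Returns how many instances are needed for the current load.

    With min_instances=0 the service can scale to zero when idle,
    which is what makes a resident service optional.
    """
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    needed = math.ceil(requests_per_second / capacity_per_instance)
    # Clamp the result to the configured bounds.
    return max(min_instances, min(max_instances, needed))


if __name__ == "__main__":
    for load in (0, 120, 10_000):
        print(load, "req/s ->", desired_instances(load, capacity_per_instance=50))
```

When this decision is made by the platform, developers no longer size the deployment for a guessed peak; they only describe how much one instance can handle.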

For development, images or language packaging (such as the .war or .jar package in Java) can be used for delivery. The platform is responsible for runtime related work. To go a step further, the Function as a Service (FaaS) concept can be used to provide only business logic functions based on the platform or standardized FaaS solutions, with the platform being responsible for running current operations, such as request handler, request calling, and auto scaling.
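The division of labor in FaaS can be sketched as follows. The developer writes only `handler`, while `platform_invoke` stands in for what the platform does around it (decoding the request, calling the function, encoding the response). Both names and the JSON request format are hypothetical and are not the interface of any particular FaaS product.

```python
import json
from typing import Any, Callable, Dict


def handler(event: Dict[str, Any]) -> Dict[str, Any]:
    """Business logic only: this is the part the developer delivers."""
    name = event.get("name", "world")
    return {"message": f"hello, {name}"}


def platform_invoke(fn: Callable[[Dict[str, Any]], Dict[str, Any]],
                    raw_request: bytes) -> bytes:
    """Stand-in for the platform: request handling and function calling."""
    try:
        event = json.loads(raw_request)
        body = {"status": 200, "result": fn(event)}
    except Exception as exc:  # a real platform would also log and meter this
        body = {"status": 500, "error": str(exc)}
    return json.dumps(body).encode("utf-8")


if __name__ == "__main__":
    print(platform_invoke(handler, b'{"name": "serverless"}').decode("utf-8"))
```

In this sketch, auto scaling would mean the platform running more or fewer copies of `platform_invoke` in parallel, without any change to `handler`.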

Regardless of the delivery method, the concept of Backend as a Service (BaaS) [4] can be used in the cloud to implement partial logic (such as permission management and middleware management) through cloud platforms or the third-party open APIs. This enables developers to focus more on the business.

The serverless service model concerns how cloud vendors support serverless computing. Services and product forms differ mainly in how they interpret the serverless features and in the degree to which they deliver them.

From the O&M-free perspective, the most basic benefit is saving server O&M costs. Developers can apply for resources on a pay-as-you-go basis. For conventional O&M tasks, such as capacity management, auto scaling, traffic management, logging, and monitoring and alerting, each service or product handles them in the way that suits its own positioning and the characteristics of its target customers.

In terms of billing methods, on the one hand, cloud vendors determine the billing dimensions, such as resources and requests, based on their positioning; on the other hand, cloud vendors determine the billing granularity based on the current technical capabilities.
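The effect of billing dimensions and billing granularity can be shown with a small calculation. In the Python sketch below, one invocation is billed on two dimensions (resources and requests), and the duration is rounded up to the billing granularity. All prices and parameter names are made-up values for illustration and do not reflect any vendor's price list.

```python
import math


def billed_duration_ms(duration_ms: int, granularity_ms: int) -> int:
    """Rounds the actual duration up to the next multiple of the granularity."""
    return math.ceil(duration_ms / granularity_ms) * granularity_ms


def invocation_cost(duration_ms: int,
                    memory_gb: float,
                    granularity_ms: int,
                    price_per_gb_second: float,
                    price_per_request: float) -> float:
    """Cost of one invocation: a resource dimension plus a request dimension."""
    seconds = billed_duration_ms(duration_ms, granularity_ms) / 1000
    return seconds * memory_gb * price_per_gb_second + price_per_request


if __name__ == "__main__":
    # Hypothetical prices, chosen only to make the comparison visible.
    common = dict(duration_ms=120, memory_gb=0.5,
                  price_per_gb_second=0.00002, price_per_request=0.0000002)
    print("100 ms granularity:", invocation_cost(granularity_ms=100, **common))
    print("  1 ms granularity:", invocation_cost(granularity_ms=1, **common))
```

A 120 ms invocation is billed as 200 ms at a 100 ms granularity but as 120 ms at a 1 ms granularity, so finer granularity brings the bill closer to actual consumption.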

According to the preceding analysis, different serverless service models from cloud vendors are not static. These models iterate with the product positioning, characteristics of target customers, technical capabilities, and other features, and grow with customers.

The serverless service model needs to meet actual requirements. Returning to Figure 1, the serverless service models of cloud vendors can be divided into the following categories:

A summary is shown in the chart below:

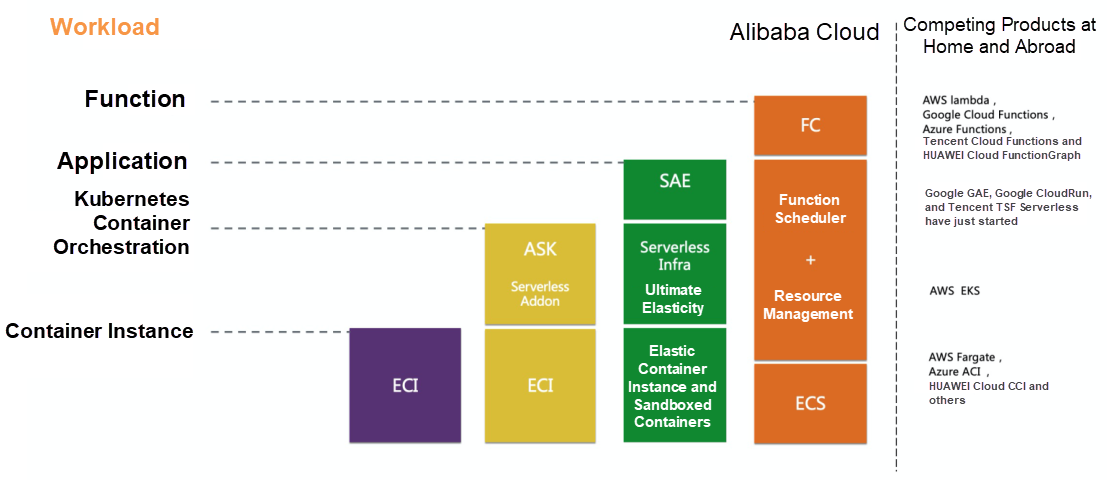

Alibaba Cloud provides a relatively comprehensive set of serverless services and products. This article uses Alibaba Cloud as an example; the same mapping can be applied to the offerings of other cloud vendors.

From the materials [5] published by Alibaba Cloud, we can learn about the following types of serverless products:

The preceding cloud product categories can be mapped to the models in Figure 1. Then, identify the phases and pain points of your current business technologies, and determine the requirements for cloud solutions. After that, select the appropriate cloud service and product for the current phase based on the product form of the cloud vendor.

The focus of the mapping relationship is to know whether cloud product positioning can meet the business needs for a long time:

At the same time, it is necessary to understand whether cloud products can develop with the business. In particular, which limitations on the technical needs of the business come from the positioning of the cloud product, and which come from its current technical implementation?

If the restrictions come from the positioning of a cloud product, consider using a different cloud product that matches the business requirements. If the limitations come from current technologies, there is an opportunity to grow together with the cloud product: give feedback to the product team promptly so that the product can better meet your business needs.

At the business level, the richness of cloud vendors' own service types needs attention as well. The richer their own services are, the larger the scale will be, which will cause a scale effect. This brings more technical benefits and cost advantages to the business.

Fortunately, cloud products usually have abundant documentation and user groups, where users can talk directly to the product director (PD) and the development team. The PD and the development team also look forward to talking directly with users, listening to their feedback and needs, and building better solutions together with them.

Let's have an overall impression of Alibaba Cloud serverless products and the corresponding DingTalk groups.

Alibaba Cloud Elastic Container Instance (ECI) [6] is a serverless and containerized elastic computing service. It allows you to run containers simply by providing packaged images without the need to manage the underlying servers. You only need to pay for the resources consumed by running containers.

Alibaba Cloud Serverless Kubernetes (ASK) is a form of the Alibaba Cloud Container Service product [7]. It manages the master components of Kubernetes and provides pods through Alibaba Cloud ECI. You can use the Kubernetes scheduling capabilities without the need to maintain master nodes and agent nodes. For more information, see the ASK documentation [8].

Alibaba Cloud Serverless App Engine (SAE) [9] is an application-oriented serverless PaaS platform. It frees PaaS users from maintaining the underlying Infrastructure as a Service (IaaS), provides resources on demand, and uses the pay-as-you-go billing method. On this platform, you can migrate microservice-oriented applications to the cloud without maintaining the infrastructure, which addresses both cost and efficiency problems. It supports popular development frameworks, such as Spring Cloud, Dubbo, and High-Speed Service Framework (HSF), and integrates serverless architectures with microservices models. In addition to microservice-oriented applications, you can also deploy applications in any language by using Docker images.

Alibaba Cloud has two function compute products: Function Compute and Serverless Workflow. Function Compute [10] is an event-driven and fully managed serverless computing service. You can use it to write and upload code without having to manage infrastructure, such as servers. Function Compute provides computing resources that allow you to run code more flexibly and reliably. Serverless Workflow [11] is a fully managed serverless cloud service that coordinates the execution of multiple distributed tasks. Serverless Workflow is designed to simplify tedious work, such as task coordination, state management, and fault handling, so that you can focus on business logic development. You can orchestrate distributed tasks in sequence, branch, or parallel mode. The service reliably coordinates task execution according to the preset steps, tracks the state change of each task, and runs the user-defined retry logic when necessary.
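The orchestration ideas mentioned above (sequence, branch, parallel, and retry) can be sketched in plain Python. This is only a conceptual illustration: the `sequence`, `parallel`, `choice`, and `with_retry` helpers and the order-processing tasks are hypothetical, and they are not the definition language or API of Serverless Workflow.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict

State = Dict[str, Any]
Task = Callable[[State], State]


def with_retry(task: Task, max_attempts: int = 3) -> Task:
    """Wraps a task so that failures are retried up to max_attempts times."""
    def run(state: State) -> State:
        last_error = None
        for _ in range(max_attempts):
            try:
                return task(state)
            except Exception as exc:
                last_error = exc
        raise RuntimeError(f"failed after {max_attempts} attempts") from last_error
    return run


def sequence(*tasks: Task) -> Task:
    """Runs tasks one after another, passing the state along."""
    def run(state: State) -> State:
        for task in tasks:
            state = task(state)
        return state
    return run


def parallel(*tasks: Task) -> Task:
    """Runs tasks concurrently on copies of the state and merges the results."""
    def run(state: State) -> State:
        with ThreadPoolExecutor() as pool:
            results = list(pool.map(lambda t: t(dict(state)), tasks))
        merged = dict(state)
        for result in results:
            merged.update(result)
        return merged
    return run


def choice(predicate: Callable[[State], bool], if_true: Task, if_false: Task) -> Task:
    """Branches on the current state."""
    return lambda state: if_true(state) if predicate(state) else if_false(state)


attempts = {"count": 0}


def flaky_charge(state: State) -> State:
    """Simulated payment step that fails on its first call."""
    attempts["count"] += 1
    if attempts["count"] < 2:
        raise ConnectionError("temporary failure")
    return {**state, "charged": True}


def reserve_stock(state: State) -> State:
    return {**state, "reserved": True}


def ship(state: State) -> State:
    return {**state, "shipped": True}


def refund(state: State) -> State:
    return {**state, "refunded": True}


workflow = sequence(
    parallel(reserve_stock, with_retry(flaky_charge)),
    choice(lambda s: bool(s.get("charged") and s.get("reserved")), ship, refund),
)

if __name__ == "__main__":
    print(workflow({"order": 1}))
```

A managed workflow service takes over exactly the parts that this sketch implements by hand: tracking the state between steps, running branches in parallel, and retrying failed steps.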

Serverless is essentially a problem domain. It abstracts the problems in the development process that are not core to the business but still affect business iteration, and it provides solutions accordingly. This concept did not appear suddenly; it has long been applied, to a greater or lesser extent, in our daily work. However, with the wave of cloud computing, serverless services and products on the cloud have become more systematic and competitive. Backed by the scale of the cloud and its rich product lines, serverless products can continue to provide services that better meet business needs in this problem domain.

The serverless concept is flourishing not only in the centralized cloud but also, gradually, at the edge. This lets services run in more places, so businesses can better serve their own customers with stable, lower-latency services.

This article starts from the daily process of a development project to help you understand the concept of serverless from the perspective of daily practice and to select suitable serverless services and products for your current stage. It also shares, from the perspective of someone working on cloud products, the idea of cloud products and users building solutions together, so that value can be better created and delivered through a division of labor.

Zhang Yifei (Wupeng), Alibaba Cloud Technical Expert, is currently responsible for the research and development of SAE infrastructure. He has an in-depth understanding of and practical experience with serverless, secure containers, Kubernetes, and other products. Zhang focuses on implementing serverless infrastructure technologies.

[1] Wikipedia: Serverless Computing

[2] Martin Fowler: Serverless Architectures

[3] Wikipedia: Event-Driven Architecture

[4] Wikipedia: Mobile Backend as a Service

[5] From DevOps to NoOps, a Discussion of the Serverless Technology Implementation Approach (Article in Chinese)

[6] Alibaba Cloud ECI Homepage

[7] Alibaba Cloud Container Service for Kubernetes

[8] Alibaba Cloud Serverless Kubernetes

[9] Alibaba Cloud Serverless App Engine

[10] Alibaba Cloud Function Compute

[11] Alibaba Cloud Serverless Workflow

[12] Cloud Programming Simplified: A Berkeley View on Serverless Computing
