By: Jeremy Pedersen

We've got a nice, short blog post this week, focusing on something a little ridiculous... can we run a raytracer inside Function Compute?

I was inspired by Kevin Beason's smallpt raytracer, which has been a part of my life since college, when I used it to learn NVIDIA's CUDA C++ toolkit.

It's a clever little program: it packs a full raytracer into just 99 lines of C++, and doesn't have any external dependencies beyond the standard C libraries.

This got me thinking...would it be possible to get smallpt to work inside a Function Compute function, maybe with an HTTP trigger?

Almost immediately, I ran into trouble.

I wanted to use Python to handle the incoming HTTP requests and do all the URL parameter parsing, then use Python's subprocess.run() to actually execute the smallpt raytracer program.

That plan raised two questions:

- Does the built-in Python 3 runtime include the tools and libraries that smallpt requires?
- Will a copy of smallpt built outside of Function Compute (say, in Ubuntu Linux) run OK inside the Function Compute environment?

After a little testing, I determined that the answer to both of these questions was no.

This meant I had to go a little deeper and learn about Function Compute runtimes.

Luckily, Function Compute gives you a lot of flexibility in how you run Function Compute functions.

Unluckily, it can be hard to determine which method you should use. Let's walk through each of the possibilities and discuss the pros and cons, then I'll show you which method I ended up using.

For common everyday tasks, Function Compute includes built-in runtimes for popular programming languages like Python, Node.js, and Java.

These are (essentially) Docker container images pre-built by Alibaba Cloud.

These runtimes are easy to use, and work well as long as most (or all) of what you need is provided by the built-in environment.

Take the built-in Python 3 runtime as an example. This runtime includes common Python libraries like requests, as well as the Alibaba Cloud Python SDK, which means you can use the standard Python 3 runtime to parse URL parameters, talk to RESTful APIs over the Internet, and communicate with Alibaba Cloud services like ECS or OSS.
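As a rough illustration of that kind of URL parameter parsing, here is a minimal sketch of an HTTP-triggered function for the built-in Python 3 runtime. It assumes the WSGI-style handler(environ, start_response) signature that Function Compute uses for Python HTTP triggers. It only echoes the parsed query parameters back as JSON, and the parameter names are whatever the caller sends.

```python
import json
from urllib.parse import parse_qs


def handler(environ, start_response):
    """WSGI-style HTTP handler: echo the URL query parameters back as JSON."""
    # The raw query string (e.g. "spp=8&name=test") arrives in the WSGI environ.
    query = parse_qs(environ.get("QUERY_STRING", ""))
    # parse_qs returns a list of values per key; keep only the first of each.
    params = {key: values[0] for key, values in query.items()}
    body = json.dumps(params).encode("utf-8")
    start_response("200 OK", [("Content-Type", "application/json"),
                              ("Content-Length", str(len(body)))])
    return [body]
```

Because it is plain WSGI, you can exercise it locally by passing a dict such as {"QUERY_STRING": "spp=8"} and a small start_response function that records the status and headers.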

Standard runtimes are great for handling events in your Alibaba Cloud account and responding to simple HTTP requests. They are also really nice because code you include in a standard runtime can be edited in Function Compute's online editor, which also lets you invoke your function and review logs. It makes testing and debugging very easy.

Unfortunately, you also get limited functionality. In my case, I needed a runtime that would include Python 3 and allow me to build and run a C++ program. The standard runtimes can't do that.

Let's say the standard built-in runtime environment has almost everything you need: you're just missing one or two key Python or Java libraries. Or maybe you need just one small utility that you could easily get with apt-get on Debian or Ubuntu.

In this case, you can install additional dependencies alongside your Function Compute code, and they'll be packaged into the built-in runtime environment with it.

This is a lot easier than building a full custom runtime (which we'll talk about next), so this is the way to go if you're just including one or two additional libraries or utilities.

There are several different ways to do this. Take a look at the "Use Custom Modules" section in the Function Compute documentation to see how this works for Python.

If you aren't a Python person, don't worry: the Function Compute documentation also includes sections on how to include third-party dependencies in functions written in other languages, like Node.js or Java.

Probably the simplest, most repeatable way to add third-party dependencies is by writing a Funfile and including it alongside your Function Compute code. A Python Funfile might look like this:

```
RUNTIME python
RUN fun-install pip install PyMySQL
```

While a Node.js Funfile would look like this:

```
RUNTIME nodejs8
RUN npm install mysql
```

Look familiar? It should: it's very similar to a Dockerfile. In fact, calling fun build or s build will create a local Docker container and execute the instructions from the Funfile inside that Docker container to fetch the third-party libraries you've asked for.

A quick note: If you have some experience already with fun, the Alibaba Cloud command line tool for Function Compute, please be aware that it is being replaced by Serverless Devs, a more general-purpose, multi-cloud tool for managing serverless applications.

Don't worry, both tools can read a Funfile just fine, and there are instructions on migrating your projects from fun to Serverless Devs on the Alibaba Cloud site.

Of course, this approach only works for simple third-party dependencies.

What I want to do is build a C++ application, include it in my Function Compute runtime, and have it called from within a Python program. That's outside the scope of what the Funfile is designed for. So let's move on to the custom runtime.

Custom Runtimes trade simplicity for flexibility. In a normal Function Compute function, the container that runs your function code actually contains an HTTP server that listens for incoming requests on port 9000. This is how both HTTP-triggered and event-triggered Functions work: input is passed to the function via an HTTP request on port 9000.

In a normal function, this is hidden from you. When your function executes, this invisible webserver component actually receives an HTTP request, parses the request, then calls your handler function (your Function Compute code), passing in the data from the request as variables to your handler function.

With the custom runtime, you provide the webserver component along with your actual code. This gives you a lot more control over how your code executes and also allows you to write functions in languages not supported by the standard runtimes, including C++ and Lua.

To get custom runtimes working, you need to:

- Provide an HTTP server that listens on port 9000 and can respond to (at least) a GET request on /
- Include a bootstrap executable file that Function Compute will call to start up your HTTP server when your Function is invoked

This approach is great if you want more control and flexibility, but in my case it still wasn't enough, because I needed my HTTP server component written in one language (Python) and my raytracer written in another (C++).
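The following sketch shows what "providing the webserver yourself" involves. It uses only Python's standard http.server module to listen on port 9000 and answer a GET request on /. This is an illustration under assumptions, not Function Compute's reference implementation. A real custom runtime would also need the bootstrap file to launch it, and would have to handle whatever other paths and methods your triggers send. The response text and the helper names make_server and start_in_background are hypothetical.

```python
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class RuntimeHandler(BaseHTTPRequestHandler):
    """Answers the HTTP requests the platform forwards to the container."""

    def do_GET(self):
        # Ignore any query string when matching the path.
        if self.path.split("?", 1)[0] == "/":
            body = b"Hello from a custom runtime!\n"
            self.send_response(200)
        else:
            body = b"Not found\n"
            self.send_response(404)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port=9000):
    """Bind on all interfaces so requests from outside the process can reach it."""
    return HTTPServer(("0.0.0.0", port), RuntimeHandler)


def start_in_background(port=9000):
    """Run the server on a daemon thread (handy for local testing)."""
    server = make_server(port)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

In the container, the bootstrap would simply start this script so that make_server().serve_forever() runs in the foreground. For local testing, start_in_background(0) picks a free port that you can then request with any HTTP client.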

This brings us to the nuclear option: custom containers.

Custom containers are the final, most powerful option in customized Function Compute runtimes.

Much like the custom runtime, the custom container needs to have an HTTP server listening on port 9000, and needs to start that server up when your Function is invoked.

The difference is that you have total control over the container that runs the code. Custom containers are created from a standard Dockerfile, so there's no need to provide a separate bootstrap file. Instead, a CMD or ENTRYPOINT directive at the end of your Dockerfile is enough to get your code running.

In my case, this is exactly what was needed: I could install Python 3, install g++, build my C++ program, load up a Python 3 program running Flask to handle requests, and call my C++ program from within that Python 3 program. No problem!
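The Python-calls-C++ half of that setup can be sketched with the standard library alone. This is not the code from the project repository. It assumes the compiled raytracer is an executable named ./smallpt that, like Kevin Beason's original, takes the samples-per-pixel count as its only argument and writes its result to image.ppm in the working directory. The helper names (parse_spp, build_command, render) and the default and maximum spp values are hypothetical. In a Flask app, a route would pass the request's query string to parse_spp and return the bytes from render.

```python
import subprocess
from pathlib import Path
from urllib.parse import parse_qs

DEFAULT_SPP = 4    # hypothetical default when ?spp= is missing or invalid
MIN_SPP = 4        # original smallpt integer-divides spp by 4; smaller values render black
MAX_SPP = 1024     # hypothetical cap so one request can't outlast the timeout


def parse_spp(query_string, default=DEFAULT_SPP):
    """Read ?spp= from a raw URL query string and clamp it to a safe range."""
    values = parse_qs(query_string).get("spp")
    if not values:
        return default
    try:
        spp = int(values[0])
    except ValueError:
        return default
    return max(MIN_SPP, min(spp, MAX_SPP))


def build_command(spp, binary="./smallpt"):
    """Argument list for subprocess.run; no shell is involved, so no injection."""
    return [binary, str(spp)]


def render(spp, workdir=".", timeout=None):
    """Run the raytracer in workdir and return the image.ppm bytes it writes."""
    # check=True raises CalledProcessError if the raytracer exits with an error;
    # timeout (seconds) raises TimeoutExpired instead of hanging forever.
    subprocess.run(build_command(spp), cwd=workdir, check=True, timeout=timeout)
    return (Path(workdir) / "image.ppm").read_bytes()
```

Passing an argument list instead of a shell string means a value like "8; rm -rf /" can never reach a shell. Clamping spp also keeps a single request from asking for an impossibly long render.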

Honest opinion: if you find (like me) that you need a custom runtime environment, I recommend skipping right over the custom runtime and going straight for a custom container. You get more flexibility that way, and it's really not much more complicated to set up.

Ok, we're past the boring explanatory bits...let's see what it takes to get our raytracer running in Function Compute!

To get everything working for this one, you are going to have to do some setup in advance.

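You will need:

- A working Docker container registry in ACR (Alibaba Cloud Container Registry)
- Serverless Devs (the s command line tool) and Docker installed on your local machine

That's about it!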

Important note for Apple M1 / arm64 users: If your local machine does NOT use a standard Intel or AMD processor (x86_64), you'll need to use docker buildx build instead of docker build whenever you create container images. Otherwise, your containers will be built for arm64 rather than x86_64, and they won't run properly on the Function Compute platform. Specifically, wherever you see docker build mentioned in this blog or in configuration files, replace it with docker buildx build --platform linux/amd64.
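If you script your image builds, the rule above is easy to encode. The helper below is hypothetical and not part of the project. It returns the argument list for a plain docker build on x86_64 hosts, and for docker buildx build --platform linux/amd64 everywhere else. The image tag is a placeholder that you would replace with your own ACR repository.

```python
import platform


def docker_build_command(tag, context=".", machine=None):
    """Return a docker build argv whose image always targets linux/amd64."""
    # platform.machine() reports e.g. "x86_64" (Linux/macOS Intel),
    # "AMD64" (Windows), "arm64" (Apple M1) or "aarch64" (Linux on ARM).
    machine = (machine or platform.machine()).lower()
    if machine in ("x86_64", "amd64"):
        # Native x86_64 host: a plain docker build already produces amd64 images.
        return ["docker", "build", "-t", tag, context]
    # Any other host: cross-build for the x86_64 Function Compute platform.
    return ["docker", "buildx", "build", "--platform", "linux/amd64",
            "-t", tag, context]
```

You would pass the result straight to subprocess.run(..., check=True), so the same build script works unchanged on an Intel laptop and on an M1 Mac.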

The code (and README file) for this project is available on GitHub. You'll need to download a .zip file of the project or clone it using git clone, like this:

```
git clone https://github.com/Alicloud-Academy/function-compute.git
```

Follow along with the README file in the raytracer directory to get everything set up. Remember, you need to have Docker and s (Serverless Devs) installed locally first, and you need a working Docker container registry set up in ACR (Alibaba Cloud Container Registry) as well.

After running docker build and s deploy (again, see the README file for full details), you should end up with a Function Compute URL which you can paste into your browser. Try it out! In my case, my Function Compute URL looked like this:

https://5483593200991628.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/raytrace-service/raytrace-function/

I tried several different values for the ?spp= parameter and got nicer and nicer renders each time (at the cost of some VERY long waits):

First render: spp=4

https://5483593200991628.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/raytrace-service/raytrace-function/?spp=4

Second render: spp=8

https://5483593200991628.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/raytrace-service/raytrace-function/?spp=8

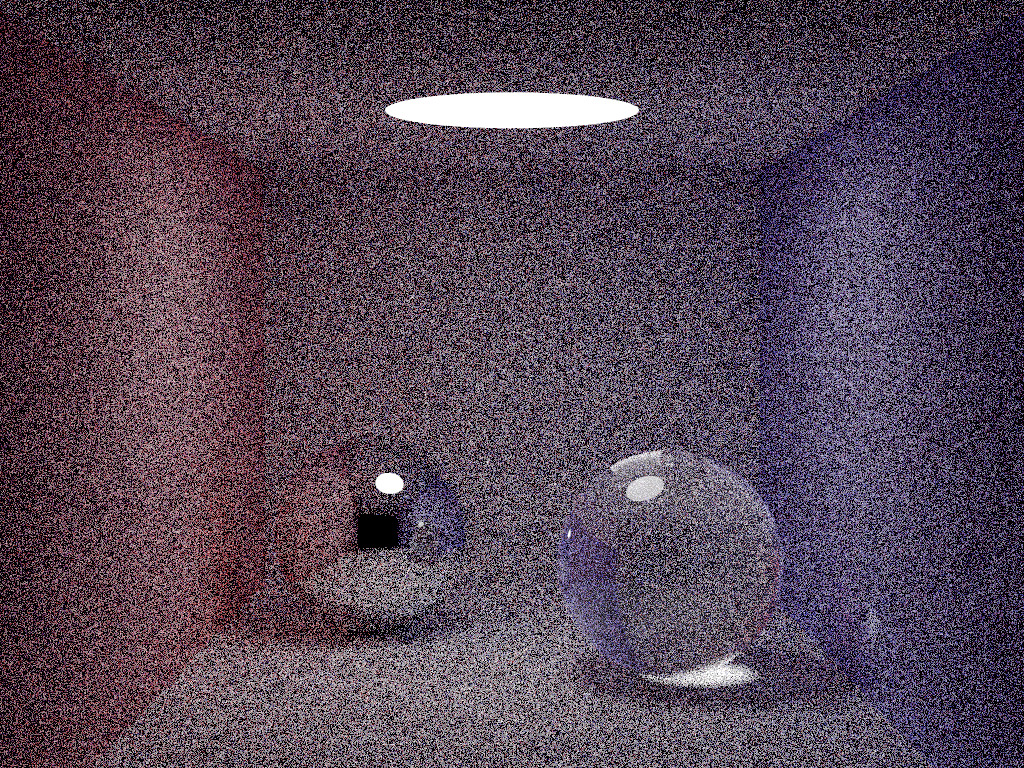

Third render: spp=16

https://5483593200991628.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/raytrace-service/raytrace-function/?spp=16
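To repeat this experiment without pasting URLs by hand, a small client can add the ?spp= parameter for you. The sketch below assumes a placeholder endpoint: BASE_URL is hypothetical, so substitute the URL that s deploy gives you. It saves each response body to a file as-is, whatever format the function returns. The generous socket timeout reflects the very long waits at higher spp values.

```python
from urllib.parse import urlencode
from urllib.request import urlopen

# Hypothetical endpoint: replace with the URL printed by s deploy.
BASE_URL = "https://example.com/2016-08-15/proxy/raytrace-service/raytrace-function/"


def render_url(spp, base_url=BASE_URL):
    """Append ?spp=<n> to the function's HTTP trigger URL."""
    return f"{base_url}?{urlencode({'spp': spp})}"


def fetch_render(spp, out_path, base_url=BASE_URL, timeout=15 * 60):
    """Download one render and save the raw response body; returns its size."""
    # timeout is a socket timeout in seconds; high-spp renders take minutes.
    with urlopen(render_url(spp, base_url), timeout=timeout) as resp:
        data = resp.read()
    with open(out_path, "wb") as f:
        f.write(data)
    return len(data)
```

For example, looping over spp values of 4, 8, and 16 and calling fetch_render(spp, f"render-{spp}") reproduces the three renders above, one file per request.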

Note that there is an upper limit on how long your function can run: it's about 15 minutes, so you need to choose spp values small enough that the rendering process completes before your function times out. ^_^
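smallpt's work grows roughly linearly with spp, because every extra sample means tracing another path for every pixel. That means one quick render is enough to estimate a safe upper bound. The back-of-the-envelope sketch below rests on three assumptions: linear scaling, a 15-minute limit, and a hypothetical 80% safety margin. It also rounds down to a multiple of 4, since the original smallpt splits spp across each pixel's 2x2 subpixels. The numbers in the usage note are illustrative, not measurements from this post.

```python
def max_safe_spp(measured_seconds, measured_spp, limit_seconds=15 * 60, margin=0.8):
    """Largest spp (a multiple of 4) expected to finish inside the time limit.

    Assumes render time scales linearly with spp, which holds roughly for
    smallpt because each additional sample traces another path per pixel.
    """
    seconds_per_spp = measured_seconds / measured_spp
    budget = limit_seconds * margin        # headroom for cold start and returning the image
    spp = int(budget // seconds_per_spp)
    return spp - spp % 4                   # smallpt works in groups of 4 samples
```

For instance, if an spp=4 render took 60 seconds, max_safe_spp(60, 4) returns 48. Doubling spp roughly doubles the wait, which is why the jumps from 4 to 8 to 16 get slow so quickly.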

That's it! Take a close look at the code and see if you can figure out how to build your own custom container runtimes. See what you can do!

Have a question? Reach out to me at jierui.pjr@alibabacloud.com and I'll do my best to answer it in a future Friday Q&A blog.

You can also follow the Alibaba Cloud Academy LinkedIn Page. We'll re-post these blogs there each Friday.
